Your content team has been publishing consistently. Your domain authority is solid. Your backlink profile looks healthy. But when a potential buyer asks ChatGPT, “What’s the best tool in [your category]?”, your brand isn’t in the answer. Traditional SEO can’t tell you why, because it was never built to measure what happens inside a synthesized response.

That gap is widening fast. And agentic AI is now the layer where brand visibility is actually won or lost.

1. AI Platforms Are Being Monitored Automatically, Across Every Prompt Variation

Manual spot-checks on ChatGPT or Perplexity used to be the norm. A marketer would run a few queries, see whether the brand appeared, and call it done. That approach misses most of what’s actually happening.

The core problem is that AI responses are non-deterministic. Run the same prompt twice and you’ll often get different brand mentions, different positions, different framing. A single check gives you one data point. What you need is a probability.

That’s where agentic AI comes in. Platforms like Topify run high volumes of prompts, often 100 or more per query variation, to calculate a statistically reliable visibility score. The output isn’t “your brand appeared” or “it didn’t.” It’s an appearance rate with a confidence interval around it. You might learn, for example, that your brand shows up in 34% of relevant prompts on ChatGPT, versus 61% on Perplexity. Those are numbers you can actually act on.
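To make the idea of a rate with a confidence interval concrete, here is a minimal sketch that turns repeated prompt runs into an estimate. It assumes each run is an independent yes/no observation and uses the Wilson score interval, which is one common choice; the source does not say which method any particular platform uses, and the function name is hypothetical.

```python
import math

def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 is roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return center - half, center + half

# Illustrative numbers only: the brand appeared in 34 of 100 runs of one prompt.
low, high = wilson_interval(hits=34, runs=100)
print(f"appearance rate 34%, interval roughly {low:.0%} to {high:.0%}")
```

With only 100 runs the interval is still wide, which is one reason a single spot-check says so little.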

The other variable is scale. Agentic systems track across ChatGPT, Gemini, Perplexity, and other platforms simultaneously, running continuously rather than on demand. That kind of coverage makes it possible to catch shifts as they happen, not two weeks later when a competitor has already pulled ahead.

2. Competitor Positions in AI Recommendations Are Being Tracked in Real Time

In traditional search, rank tracking is straightforward. Your keyword, their keyword, ten blue links, a number from 1 to 10. Generative engine rankings don’t work that way.

AI responses are narrative. Your brand might be mentioned first, third, or not at all, depending on how the question was framed, which AI platform answered, and what day it is. Research from Princeton University weights earlier mentions more heavily through a metric called Position-Adjusted Word Count (PAWC), which discounts the words attributed to a brand by how late they appear in the synthesized answer. Under that kind of weighting, a brand mentioned in the first sentence with ten words can count as more visible than one appearing fourth with twenty.

That means position isn’t just a vanity metric. It predicts discovery.
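A simplified sketch of a position-adjusted word count shows how the arithmetic plays out. The exponential decay and the per-sentence input format are assumptions made for illustration, not a reproduction of the published metric, and the brand names are placeholders.

```python
import math
from collections import defaultdict

def position_adjusted_share(sentences: list[tuple[str, int]]) -> dict[str, float]:
    """sentences: (brand, word_count) pairs in answer order.

    Each sentence's words are discounted by exp(-position / total_sentences),
    then every brand's weighted total is normalized so the shares sum to 1.
    """
    total = len(sentences)
    weighted: dict[str, float] = defaultdict(float)
    for pos, (brand, words) in enumerate(sentences):
        weighted[brand] += words * math.exp(-pos / total)
    norm = sum(weighted.values())
    return {brand: w / norm for brand, w in weighted.items()}

# Hypothetical four-sentence answer: BrandA is first with 10 words,
# BrandD is fourth with 20 words.
answer = [("BrandA", 10), ("BrandB", 15), ("BrandC", 12), ("BrandD", 20)]
shares = position_adjusted_share(answer)
for brand, share in shares.items():
    print(f"{brand}: {share:.1%}")
```

In this example BrandA edges out BrandD despite having half the words. With a longer answer or a gentler decay the ordering can flip, so the exact weighting matters.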

Agentic AI handles this by running continuous competitor probes. Not “did Brand X appear?” but “where did Brand X appear relative to us, across which prompt types, on which platforms, and is that changing?” The output is a dynamic competitive map, not a static leaderboard.

What makes this useful in practice: teams can see when a competitor gains first-mention advantage in a specific category and correlate that shift with what changed in their content or PR coverage. The insight is actionable, not just observational.

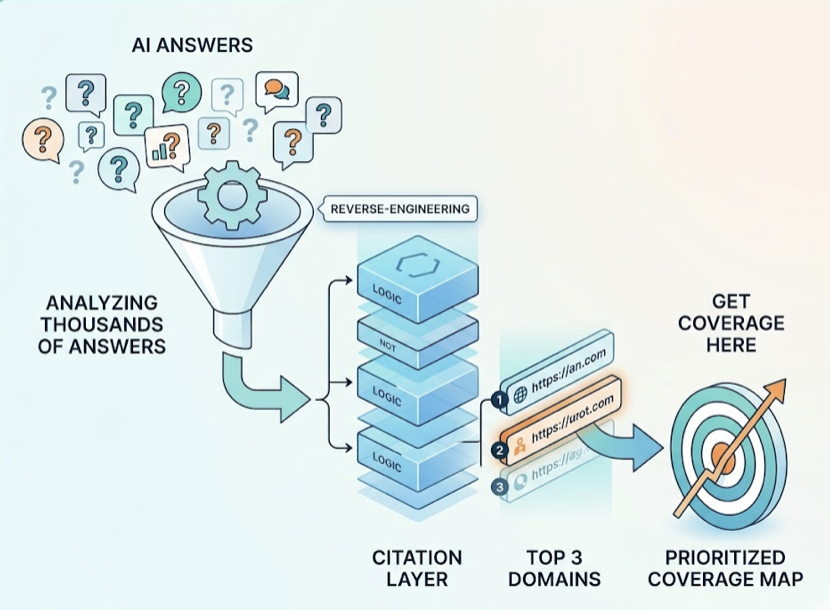

3. The Sources AI Trusts Most Are Being Reverse-Engineered

Most brand teams don’t know which third-party domains AI engines are actually pulling from when they generate answers in their category. That’s a problem, because the source layer is where AI recommendations are built.

The research here is clear. Third-party coverage gets cited between 72% and 92% of the time in AI responses, while brand-owned content accounts for only 18% to 27%. Wikipedia, Reddit, industry blogs, and review platforms like G2 carry far more weight than your own website. That’s the “Earned Media Gap,” and it means your PR and community strategy is effectively your GEO strategy.

Agentic AI platforms can now reverse-engineer the citation layer at scale. By analyzing which domains and specific URLs are being retrieved across thousands of AI answers in your category, you get a prioritized map of where your brand needs coverage. Not “write more content,” but “get coverage on these three domains, because those are what ChatGPT is pulling from when users ask about your product type.”
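The aggregation step behind that kind of map can be sketched in a few lines. This assumes you already have a list of URLs cited in collected AI answers; the sample URLs and the function name are hypothetical, and collecting the citations in the first place is outside the sketch.

```python
from collections import Counter
from urllib.parse import urlparse

def rank_cited_domains(cited_urls: list[str], top_n: int = 3) -> list[tuple[str, int]]:
    """Count citations per domain and return the most frequently cited ones."""
    domains: Counter[str] = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com as one domain
        if host:
            domains[host] += 1
    return domains.most_common(top_n)

# Placeholder citations, as if gathered from many answers in one category.
sample = [
    "https://www.reddit.com/r/example/comments/1",
    "https://en.wikipedia.org/wiki/Example",
    "https://www.g2.com/categories/example",
    "https://reddit.com/r/example/comments/2",
    "https://www.g2.com/products/example/reviews",
    "https://www.reddit.com/r/example/comments/3",
]
top = rank_cited_domains(sample)
print(top)
```

Counting at the URL level instead of the domain level works the same way and gives the more specific target list.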

Topify’s Source Analysis feature does exactly this, identifying the specific third-party URLs that AI platforms cite most frequently. For content teams, that data reshapes the editorial calendar. For PR teams, it replaces guesswork with a ranked target list.

It also works the other way. If AI platforms are citing outdated pages about your brand, or pulling from a forum thread that misrepresents your pricing, you’ll see it here first.

4. Sentiment Shifts Are Being Caught Before They Spread

AI responses aren’t neutral. They carry framing. Your brand might be cited as the leading option, the budget alternative, the one that “works well but has a learning curve,” or the one “not recommended for enterprise use.” Each of those framings affects buyer behavior differently.

The mechanism behind this is often described as AI bias. LLMs tend to reinforce the patterns they have already learned: if the training data associated your brand with a particular limitation, that characterization can persist in AI responses even after you’ve fixed the underlying issue. It doesn’t correct itself automatically.

This is why real-time sentiment monitoring now matters more than a quarterly brand audit. Agentic AI systems score the qualitative framing of brand mentions on a scale, tracking whether your brand is being positioned as a primary recommendation, a secondary alternative, or a cautionary mention. Topify’s sentiment engine runs this continuously, outputting a score from -100 to +100 across platforms.
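One way to picture a -100 to +100 framing score is as a weighted average over labeled mentions. The sketch below is purely illustrative: the three labels come from the paragraph above, but the weights and the averaging are assumptions, and the source does not describe how Topify actually computes its score.

```python
# Assumed weights per framing label; chosen for illustration only.
FRAMING_WEIGHTS = {
    "primary": 1.0,      # brand is the main recommendation
    "secondary": 0.25,   # brand is offered as an alternative
    "cautionary": -1.0,  # brand is mentioned with a warning
}

def framing_score(labels: list[str]) -> float:
    """Average the framing weights and scale to the range -100..+100."""
    if not labels:
        return 0.0
    total = sum(FRAMING_WEIGHTS[label] for label in labels)
    return 100 * total / len(labels)

# Hypothetical labels for four mentions of one brand on one platform.
mentions = ["primary", "primary", "secondary", "cautionary"]
score = framing_score(mentions)
print(f"framing score: {score:+.1f}")
```

Tracking this number per platform over time is what makes a drift toward cautionary framing visible early.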

The strategic value is in early detection. If Gemini starts describing your product with a “good but expensive” qualifier, you have a window to act before that framing becomes entrenched. The intervention: identify which source documents are feeding that characterization, displace them with current, authoritative content, and monitor whether the sentiment score shifts over the following weeks.

That’s the difference between narrative control and narrative cleanup.

5. High-Value Prompts That Drive Competitor Discovery Are Being Surfaced

Most brands optimize for keywords. Short queries, 4 words on average, designed for a traditional search box. AI search doesn’t work that way.

The average AI prompt is 23 words long, conversational, and often includes specific constraints: budget ranges, team sizes, technical integrations, use cases. “Best project management tool for a 10-person remote agency that uses Slack and needs a Kanban board under $30 a month.” That single prompt surfaces completely different recommendations than “best project management tool.”

Most brands have no visibility into which prompts their competitors are being discovered through. That’s the gap agentic AI fills. By continuously mining what researchers call a “Prompt Matrix,” platforms like Topify identify the high-intent questions users are asking AI engines in your category, map them to brand performance data, and surface the specific prompt patterns where competitors have an advantage.

The practical output: a prioritized list of prompt types where your brand should be appearing but isn’t. Each one becomes a content brief. Not based on keyword volume, but on actual AI discovery behavior.

One concrete starting point for teams building this capability manually: filter Google Search Console queries with a regex that matches only queries of ten or more words. Those long-form questions approximate the prompts users are taking to AI engines, and they reveal the intent structure that generative optimization should be targeting.
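As a sketch of that filter, the snippet below applies a ten-or-more-word pattern to a list of exported queries. It assumes the queries are already exported as plain strings, and the constant and function names are hypothetical. A similar pattern such as `^(\S+\s+){9,}\S+$` should also work in Search Console's custom regex filter, which uses RE2 syntax, though that is worth verifying in your own property.

```python
import re

# One word, followed by at least nine more whitespace-separated words.
LONG_QUERY = re.compile(r"^\s*\S+(?:\s+\S+){9,}\s*$")

def long_queries(queries: list[str]) -> list[str]:
    """Keep only queries that contain ten or more words."""
    return [q for q in queries if LONG_QUERY.match(q)]

exported = [
    "best project management tool",
    "best project management tool for a 10-person remote agency that uses Slack",
    "one two three four five six seven eight nine",
]
matches = long_queries(exported)
print(matches)
```

Lowering the `9` to a smaller number widens the net if ten-word queries turn out to be rare in your data.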

What These Five Use Cases Share

Each of these applications requires the same underlying capability: continuous, automated, cross-platform execution at a volume that no human team can replicate manually.

AI citations turn over at a rate of 40 to 60% per month. A competitor that was absent from ChatGPT recommendations last month might dominate them this month. A source that AI trusted six weeks ago might have dropped off entirely. The volatility makes periodic audits nearly useless.
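To track that volatility in your own category, turnover can be computed from two snapshots of cited sources. The definition used here, the share of last period's sources that no longer appear, is an assumption, since the figure above is quoted without a formula. The domain names are placeholders.

```python
def citation_turnover(previous: set[str], current: set[str]) -> float:
    """Share of the previous period's cited sources missing from the current one."""
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)

# Placeholder snapshots of cited domains, one month apart.
last_month = {"a.example", "b.example", "c.example", "d.example", "e.example"}
this_month = {"a.example", "b.example", "c.example", "x.example", "y.example"}
turnover = citation_turnover(last_month, this_month)
print(f"turnover: {turnover:.0%}")
```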

That’s the core argument for agentic AI in brand visibility: not that it produces better insights than a smart analyst, but that it produces them continuously, across every platform, at a cadence that matches how fast the underlying system is changing.

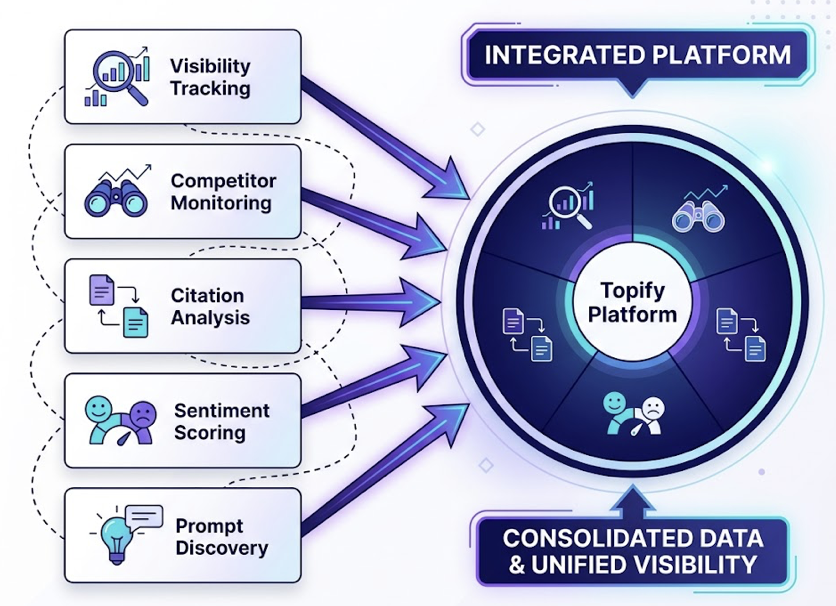

Topify integrates all five of these workflows into a single platform, covering visibility tracking, competitor monitoring, citation analysis, sentiment scoring, and prompt discovery. For teams moving from manual GEO checks to a structured, measurable program, that consolidation matters. The alternative is stitching together five separate tools, each with its own data model and update frequency, and trying to find the signal across all of them.

The brands that will be recommended by AI in 2026 are the ones that started treating AI visibility as a measurement problem, not a content problem, early enough to build the feedback loop. The data infrastructure is the strategy. Get started with Topify to see where your brand stands today.

Conclusion

Agentic AI didn’t just make brand visibility tracking faster. It made it possible at the scale the problem actually requires. The five use cases covered here, from automated platform monitoring to high-value prompt discovery, each address a different layer of how generative engines decide what to recommend.

The underlying principle is the same in each case: you can’t optimize what you can’t measure continuously. Traditional SEO tools measure a static ranking system. Agentic AI tools measure a probabilistic, constantly shifting one. Getting that right, consistently, at scale, is the foundation of brand authority in the synthesis economy.

FAQ

Q: What is agentic AI in the context of brand visibility?

A: Agentic AI refers to autonomous AI systems that can plan, execute, and iterate on tasks without constant human input. In brand visibility, this means running thousands of prompts across multiple AI platforms, analyzing citation sources, tracking sentiment shifts, and surfacing high-intent prompts continuously, rather than in one-off manual audits.

Q: How is agentic AI different from traditional SEO tools?

A: Traditional SEO tools measure a relatively stable system: keyword rankings, backlink counts, page authority. Generative engine responses are non-deterministic, meaning the same query produces different outputs across sessions. Agentic AI tools are built for this volatility, using probabilistic scoring across large sample sizes rather than static rank positions.

Q: Can smaller brands benefit from agentic AI visibility tools?

A: Yes, often more than larger ones. Smaller brands typically have narrower category footprints and fewer resources for manual monitoring. Agentic AI tools surface the specific prompt types and citation sources where they can gain ground efficiently, rather than requiring broad content coverage across the entire category.

Q: How often should brands run AI visibility audits?

A: Given that AI citation patterns turn over at 40 to 60% per month, periodic audits are generally insufficient. Continuous monitoring is the baseline expectation for brands treating AI search as a real acquisition channel. Weekly reporting on key prompt clusters is a practical starting point for teams new to GEO.