You rank first on Google. You’ve spent years earning it.

But when a prospective buyer opens ChatGPT and asks “what’s the best CRM for small teams,” your brand isn’t mentioned once. A competitor you’ve never worried about gets cited three times.

That’s not a ranking problem. That’s an AI search visibility problem, and fixing it starts with understanding what’s actually happening.

The Gap Between Google Rankings and AI Answers

Traditional SEO optimizes for a directory. You fight for a spot on a list of links, and users click through to decide. AI search works differently. ChatGPT, Perplexity, and Gemini don’t return a list of destinations. They synthesize an answer, pick a handful of sources, and deliver a verdict.

In that environment, the objective shifts from “rank high” to “become an ingredient” in the final output.

The divergence is already measurable. Research indicates that roughly 80% of the sources cited in Google’s AI Overviews don’t rank in the top 100 organic results for the same keyword. Traditional link-based authority and AI citation logic are running on different rails.

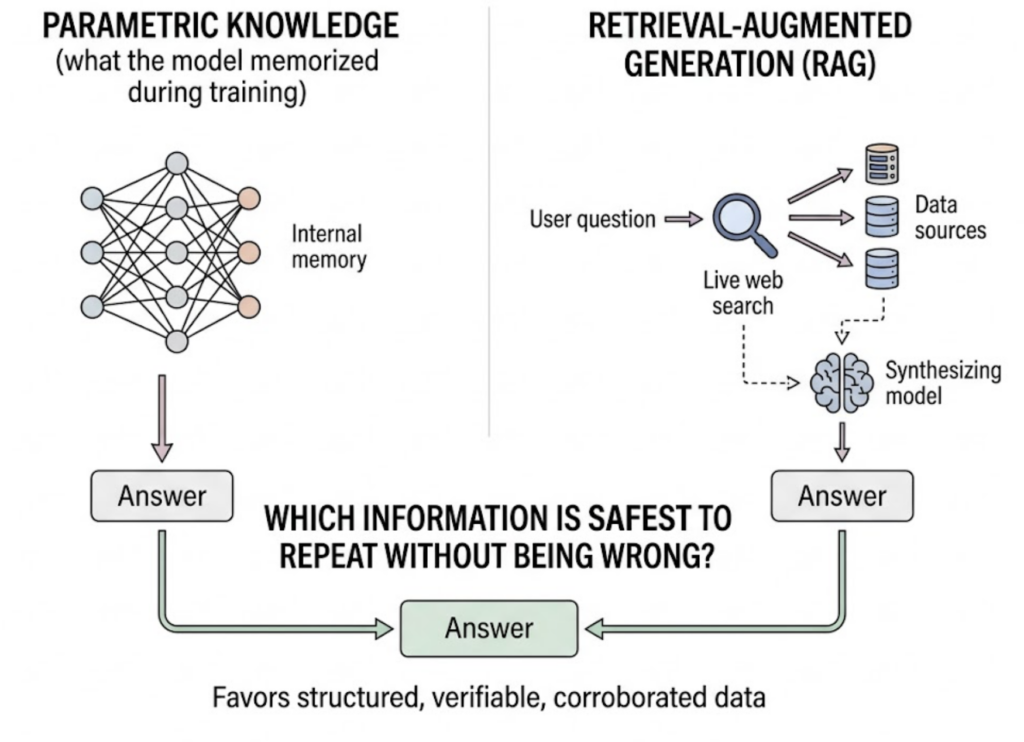

Here’s how AI engines actually select which brands to mention. Some answers pull from parametric knowledge (what the model memorized during training), while others use Retrieval-Augmented Generation, or RAG, where the model runs a live search and synthesizes fresh results. Across both pathways, the model isn’t asking “which page is best?” It’s asking “which information is safest to repeat without being wrong?” That favors structured, verifiable, corroborated data over polished brand copy.

AI search visibility (ASV) is the composite measure of how often your brand appears in AI answers, where it appears, and how it’s framed. Unlike a static SERP rank, it’s a living signal that shifts as models update, content ages, and competitors move.

5 Numbers That Tell You Where You Actually Stand

You can’t manage what you can’t measure. These five metrics define the core of any AI visibility audit.

1. Visibility Rate. The percentage of relevant prompts where your brand is explicitly mentioned. For established brands, 25%+ is a healthy baseline. Emerging brands should target 5-10% as an initial benchmark.

2. Sentiment Score. Presence means nothing if the framing is wrong. AI models describe brands as “leading solutions,” “budget alternatives,” or “cautionary examples,” and the difference matters. Scores are typically normalized on a 0-100 scale. Most successful brands land between 65 and 85. Below 60 is a warning sign worth investigating immediately.

3. Response Position Index (RPI). Where in the answer does your brand appear? AI responses typically cite only 2-7 domains. A first-position mention carries far more implicit endorsement than a buried reference at the bottom. Position matters as much in AI answers as it does on a search results page.

4. Share of Voice (SoV). Your brand mentions as a percentage of total mentions in a category. If your space generates 100 brand recommendations across 50 prompts and you collect 20, your SoV is 20%. In competitive SaaS and finance verticals, category leaders typically hold 35-45% SoV.

5. Source Coverage. This one surprises most teams. Brands are cited 6.5 times more often through third-party sources than through their own websites. Source Coverage tracks how many independent domain types (media, reviews, forums, encyclopedic sources) are feeding the AI’s picture of your brand. Appearing across 4+ platform types is the baseline for model consensus and stable visibility.

These five numbers form the core of a real AI visibility report. A Topify dashboard tracks all of them across ChatGPT, Gemini, Perplexity, DeepSeek, and other major platforms simultaneously.
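To make the arithmetic concrete, here is a minimal Python sketch that computes four of the five metrics, plus a sentiment rescaling, from a hand-logged list of AI answers. The `Answer` record, the brand names, and the sample data are hypothetical. The article doesn’t give a formula for the Response Position Index, so the sketch uses the average position of your first mention as a simple stand-in, and it assumes raw sentiment arrives as a polarity score between -1 and 1.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer to one tracked prompt (hypothetical record format)."""
    prompt: str
    platform: str
    brands: list[str]  # brands in the order the answer mentions them
    domain_types: set[str] = field(default_factory=set)  # e.g. "reviews", "forums"


def visibility_rate(answers: list[Answer], brand: str) -> float:
    """Percentage of answers that mention the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if brand in a.brands)
    return 100.0 * hits / len(answers)


def share_of_voice(answers: list[Answer], brand: str) -> float:
    """Brand mentions as a percentage of all brand mentions in the set."""
    total = sum(len(a.brands) for a in answers)
    ours = sum(a.brands.count(brand) for a in answers)
    return 100.0 * ours / total if total else 0.0


def mean_position(answers: list[Answer], brand: str) -> float | None:
    """Average 1-based position of the brand's first mention (assumed RPI stand-in)."""
    positions = [a.brands.index(brand) + 1 for a in answers if brand in a.brands]
    return sum(positions) / len(positions) if positions else None


def source_coverage(answers: list[Answer], brand: str) -> int:
    """Number of distinct domain types cited in answers that mention the brand."""
    types: set[str] = set()
    for a in answers:
        if brand in a.brands:
            types |= a.domain_types
    return len(types)


def normalize_sentiment(raw: float) -> float:
    """Map a raw polarity score in [-1, 1] (assumed input range) onto 0-100."""
    return (raw + 1.0) * 50.0


# Hypothetical sample: four answers, brand names invented for illustration.
SAMPLE = [
    Answer("best crm for small teams", "chatgpt", ["RivalCRM", "AcmeCRM", "OtherCRM"], {"reviews", "forums"}),
    Answer("crm that integrates with slack", "perplexity", ["RivalCRM", "OtherCRM"], {"media"}),
    Answer("cheap crm for startups", "gemini", ["AcmeCRM", "RivalCRM"], {"reviews", "encyclopedic"}),
    Answer("crm for a 5-person team", "chatgpt", ["OtherCRM"], {"forums"}),
]

if __name__ == "__main__":
    print(visibility_rate(SAMPLE, "AcmeCRM"))  # 50.0
    print(share_of_voice(SAMPLE, "AcmeCRM"))   # 25.0
    print(mean_position(SAMPLE, "AcmeCRM"))    # 1.5
    print(source_coverage(SAMPLE, "AcmeCRM"))  # 3
    print(normalize_sentiment(0.5))            # 75.0
```

In this made-up sample, AcmeCRM appears in 2 of 4 answers (50% visibility) and collects 2 of 8 brand mentions (25% SoV). Plugging in the article’s own example, 20 of 100 recommendations, the same share-of-voice formula gives 20%.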

3 Mistakes That Keep Brands Off AI’s Radar

Most brands aren’t invisible because they did something wrong. They’re invisible because they applied the right SEO instincts to the wrong environment.

Mistake 1: Owned-channel myopia. Pouring effort into your own website while ignoring your external footprint. Research finds that 85% of brand mentions in AI answers originate from external domains like Reddit, G2, YouTube, and industry publications. A perfectly optimized website that nobody else corroborates is easy for an AI to ignore.

Mistake 2: Single-platform dependency. Tracking only ChatGPT and calling it done. Each AI engine runs a different retrieval pipeline. ChatGPT Search mode relies heavily on Bing’s index. Perplexity uses a proprietary index of roughly 200 billion URLs with a recency bias. Only 11% of cited domains overlap between the two for the same query. Optimizing for one platform provides almost no coverage on the others.
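The overlap figure is easy to check against your own data. The sketch below reduces each platform’s cited URLs to domains and measures overlap as shared domains divided by all distinct domains (a Jaccard ratio). The article doesn’t say how its 11% figure was calculated, so treat this definition, and the placeholder URLs, as assumptions.

```python
from urllib.parse import urlparse


def to_domain(url: str) -> str:
    """Reduce a cited URL to its host name, ignoring a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def domain_overlap(urls_a: list[str], urls_b: list[str]) -> float:
    """Jaccard overlap of cited domains: shared / all distinct domains (0.0-1.0)."""
    a = {to_domain(u) for u in urls_a}
    b = {to_domain(u) for u in urls_b}
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical citations for one query on two platforms (placeholder domains).
chatgpt_cites = [
    "https://www.reviews.example.com/crm/acme",
    "https://blog.example.org/best-crm",
    "https://forum.example.net/t/crm-advice",
]
perplexity_cites = [
    "https://forum.example.net/t/slack-crm",
    "https://news.example.com/crm-roundup",
    "https://directory.example.io/crm",
]

print(f"{domain_overlap(chatgpt_cites, perplexity_cites):.0%}")  # 20%
```

Running the same comparison across every tracked query shows whether a low overlap is the norm for your category or limited to a few prompts.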

Mistake 3: Treating visibility as a static asset. Pages updated in the last 60 days are nearly twice as likely to appear in AI-generated answers as older content. AI systems aren’t indexing a frozen snapshot of the web. They’re continuously recalibrating. Brands that set and forget their content are losing ground in real time to competitors who keep publishing.

That last point is worth sitting with. You can do everything right, build real visibility, and watch it erode over 90 days without ever knowing why. That’s what Topify’s Visibility Tracking monitors continuously, not just in monthly reports.
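A simple way to act on the 60-day finding is to flag pages that have gone longer than that without an update. This Python sketch assumes you can export a map of page paths to last-updated dates from your CMS; the paths and dates shown are made up, and the window is a tunable parameter rather than a hard rule.

```python
from datetime import date, timedelta

# The 60-day window mirrors the freshness figure above; adjust as needed.
FRESHNESS_WINDOW = timedelta(days=60)


def stale_pages(last_updated: dict[str, date], today: date) -> list[str]:
    """Return pages last updated before the freshness cutoff, oldest first."""
    cutoff = today - FRESHNESS_WINDOW
    stale = sorted((updated, url) for url, updated in last_updated.items() if updated < cutoff)
    return [url for _, url in stale]


# Hypothetical content inventory: URL path -> last substantive update.
inventory = {
    "/pricing": date(2025, 6, 15),
    "/features": date(2025, 3, 1),
    "/blog/crm-guide": date(2024, 11, 20),
    "/about": date(2025, 5, 1),
}

print(stale_pages(inventory, today=date(2025, 6, 30)))
# ['/blog/crm-guide', '/features']
```

Sorting oldest first turns the output into a refresh queue: the pages at the top have been outside the window the longest.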

A Strategy That Moves the Number, Not Just the Report

Tactics without a framework produce scattered results. The most durable approach to AI visibility operates on three layers.

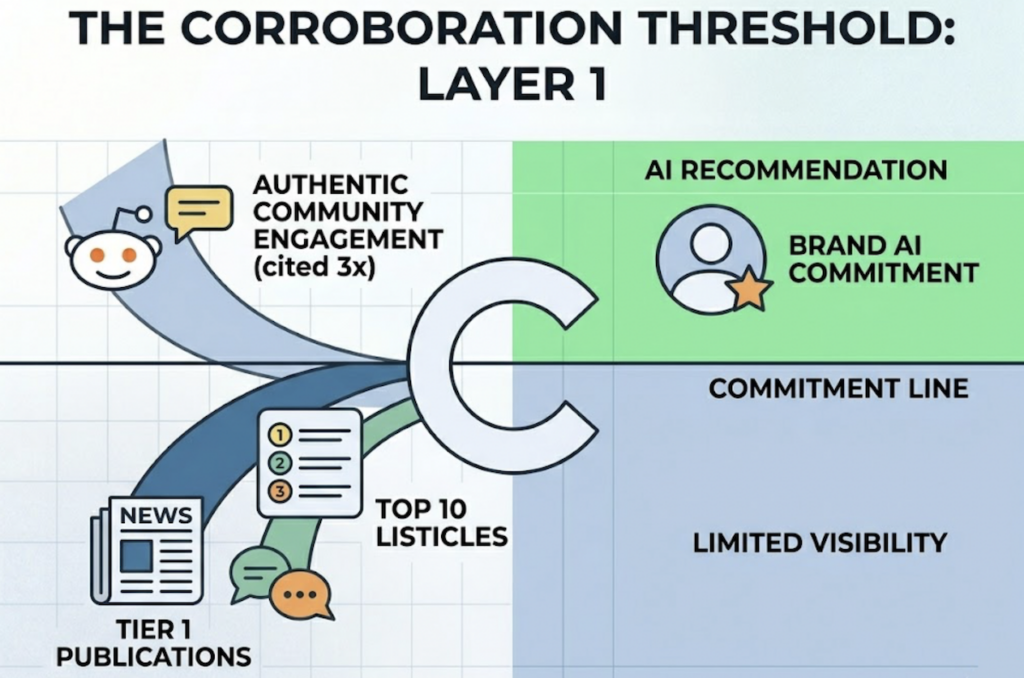

Layer 1: Source Coverage. The goal here is to cross the “corroboration threshold,” the point where enough independent, credible sources mention your brand that an AI commits to recommending it. This means editorial placements in Tier 1 publications, authentic participation in Reddit and community forums (brands with active community footprints are cited 3 times more often than those without), and getting onto the “Best of” and “Top 10” listicles that AI models habitually synthesize into their answers.

Layer 2: Prompt Intent Alignment. AI users don’t type keywords. They ask questions. “What’s the best CRM for a 5-person team that integrates with Slack?” is a single query that fans out into multiple sub-questions the model tries to answer. Content needs to explicitly address those sub-questions to be retrievable. This means shifting from keyword-targeting to intent-cluster coverage.

Layer 3: Sentiment Consistency. A brand that’s described differently across its website, LinkedIn, Wikipedia, G2, and Trustpilot creates ambiguity. AI models resolve ambiguity by going with the safer, more consistently described option. Aligning your positioning, your product specs, and your brand narrative across all platforms reduces the chance the model frames you in a way you didn’t intend.
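Layer 2’s fan-out idea can be checked mechanically, if crudely. The sketch below assumes you have written down the sub-questions a prompt is likely to fan out into, each with a few key terms, and marks a sub-question as covered when all of its terms appear on the page. The sub-questions are hand-written guesses, not a model’s actual decomposition, and exact word matching will miss synonyms, so treat the output as a starting point for human review.

```python
import re


def covered_subquestions(subquestions: dict[str, set[str]], page_text: str) -> dict[str, bool]:
    """Mark a sub-question covered when all of its key terms appear in the page."""
    words = set(re.findall(r"[a-z0-9]+", page_text.lower()))
    return {question: terms <= words for question, terms in subquestions.items()}


# Hypothetical fan-out of "best CRM for a 5-person team that integrates with Slack?"
# These sub-questions and key terms are hand-written guesses, not a model's output.
subquestions = {
    "Is the pricing workable for a small team?": {"pricing", "team"},
    "Does it integrate with Slack?": {"slack", "integration"},
    "How long does setup take?": {"setup"},
}

page = (
    "Pricing starts at one seat, so any team can start small. "
    "Our Slack integration posts deal updates to the channels you choose."
)

for question, covered in covered_subquestions(subquestions, page).items():
    print("covered" if covered else "GAP    ", question)
```

Here the setup question comes back as a gap, which is the kind of missing sub-answer that keeps a page from being retrieved for the full prompt.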

Topify’s One-Click Agent Execution connects all three layers. You define the goals; the system handles monitoring and execution across the full cycle.

How to Improve AI Visibility Without Rebuilding Everything

The good news: you probably don’t need to start over. Most brands have the raw material. The gap is in how it’s formatted and where it lives.

A landmark 2024 study by researchers at Princeton and Georgia Tech identified concrete formatting techniques that shift citation rates. Adding verifiable statistics increases AI visibility by 41%. Content that cites external sources is 39.6% more likely to be retrieved by a generative engine. Leading each section with a direct answer, rather than a contextual warm-up, improves retrieval rates by 32.8%. AI models extract from the first one or two sentences of a paragraph. If you start with context, the model moves on.

Structured formats help too. Tables, numbered steps, and listicles are 17 times easier for AI models to parse than dense narrative prose.
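Because models tend to lift the first one or two sentences of a paragraph, it helps to preview exactly what that span contains. This minimal sketch uses a naive regular-expression sentence splitter (an assumption; it will mis-split abbreviations like “e.g.”) and compares a context-first paragraph with an answer-first rewrite for a hypothetical product called Acme.

```python
import re

# Naive sentence boundary: ".", "!" or "?" followed by whitespace. Abbreviations
# such as "e.g." will be split incorrectly; a real audit would use a proper tokenizer.
SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def lead_sentences(paragraph: str, n: int = 2) -> str:
    """Return the first n sentences of a paragraph, the span models tend to extract."""
    sentences = [s for s in SENTENCE_BOUNDARY.split(paragraph.strip()) if s]
    return " ".join(sentences[:n])


context_first = (
    "CRM buying has changed a lot over the past decade. Teams now expect more from their tools. "
    "Acme is a CRM built for teams of 2-10 people and integrates with Slack."
)
answer_first = (
    "Acme is a CRM built for teams of 2-10 people and integrates with Slack. "
    "Plans start with a single seat. CRM buying has changed a lot over the past decade."
)

print(lead_sentences(context_first))  # no product facts in the extracted span
print(lead_sentences(answer_first))   # the direct answer survives extraction
```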

On the competitive side, there’s a tactic worth prioritizing: reverse-engineering your competitors’ citations. Find the queries where competitors are being recommended. Identify which sources the AI is pulling from to justify those mentions. If a competitor is cited because of a G2 review thread or a mention in a specific industry blog, getting your brand into that same source becomes a concrete, actionable target rather than a vague “improve your content” suggestion.

Topify’s Source Analysis surfaces exactly this data: which domains AI platforms are citing for you and your competitors, so you can prioritize outreach with actual evidence rather than guesswork.
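The reverse-engineering step can be sketched as a simple count: take the answers where a competitor is recommended and you aren’t, and tally which domains those answers cite. The record format, brand names, and placeholder domains below are hypothetical; in practice the input would come from whatever log or export your tracking tool provides.

```python
from collections import Counter


def competitor_only_sources(answers: list[dict], our_brand: str, competitor: str) -> list[tuple[str, int]]:
    """Count cited domains in answers that mention the competitor but not us, most frequent first."""
    counts: Counter[str] = Counter()
    for answer in answers:
        if competitor in answer["brands"] and our_brand not in answer["brands"]:
            counts.update(set(answer["cited_domains"]))  # count each domain once per answer
    return counts.most_common()


# Hypothetical answer log (brands and domains are placeholders).
answers = [
    {"prompt": "best crm for small teams", "brands": ["RivalCRM"],
     "cited_domains": ["reviews.example.com", "blog.example.org"]},
    {"prompt": "crm with slack integration", "brands": ["RivalCRM", "AcmeCRM"],
     "cited_domains": ["reviews.example.com"]},
    {"prompt": "simple crm for startups", "brands": ["RivalCRM"],
     "cited_domains": ["reviews.example.com", "forum.example.net"]},
    {"prompt": "crm for agencies", "brands": ["OtherCRM"],
     "cited_domains": ["news.example.com"]},
]

for domain, n in competitor_only_sources(answers, "AcmeCRM", "RivalCRM"):
    print(n, domain)
```

The domain at the top of the list is the most concrete outreach target: it keeps justifying the competitor in answers where you are absent.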

Tools That Track This, and What They Cost

The monitoring market for AI search visibility has developed quickly. At a high level, the options fall into three tiers.

Starter/self-serve tools ($30-$150/mo) are designed for SMBs and startups that need core visibility scores, competitor benchmarking, and citation analysis without enterprise overhead.

SEO toolkit extensions ($100-$300/mo) integrate AI visibility modules into existing platforms like Semrush and Ahrefs, letting teams track AI presence alongside traditional rankings in one workflow.

Enterprise platforms (€400-€2,000+/mo) offer SOC 2 compliance, statistical rigor at scale (some running up to 50,000 prompts), and board-ready reporting for regulated industries.

Topify sits in the starter-to-mid tier with a pricing model designed for teams that want real coverage without inflated enterprise bundles:

| Plan | Monthly Price | Key Limits |
|---|---|---|
| Basic | $99/mo | 100 prompts, 9,000 AI answer analyses, 4 projects |
| Pro | $199/mo | 250 prompts, 22,500 AI answer analyses, 8 projects |
| Enterprise | From $499/mo | Custom prompts, dedicated account manager |

All plans cover ChatGPT, Perplexity, Gemini, and AI Overviews tracking. The Pro tier raises the prompt allowance from 100 to 250, which matters once you’re running weekly competitor benchmarking alongside your own brand monitoring.

For teams evaluating tools, the practical differentiator isn’t the dashboard. It’s whether the platform can tell you why AI recommends a competitor, not just that it does. Topify’s competitor monitoring and source analysis are built specifically for that second question.

AI Search Visibility Checklist: 8 Things to Audit This Week

Run this audit before touching any strategy. You need a baseline.

- Run brand queries across three platforms. Ask ChatGPT, Gemini, and Perplexity “What is [your brand]?” and “Is [your brand] a good choice for [your use case]?” Document what each says and how it frames you.

- Identify your Visibility Rate. Test 20 non-branded prompts your customers realistically ask. Note how often your brand appears vs. competitors.

- Check your Sentiment. In responses where you do appear, what language does the model use? “Leading solution” or “one option among many”?

- Map your source footprint. Which external domains is the AI pulling from when it mentions your brand? Are G2, Reddit, and industry media represented, or is it all your own site?

- Compare platforms. Do your ChatGPT and Perplexity results match? If not, the gap reveals which platform’s retrieval logic you haven’t addressed.

- Find your prompt gaps. Which high-volume prompts in your category are competitors dominating that you don’t appear in at all? These become your content and PR priority list.

- Audit your third-party content assets. When did a Tier 1 publication last mention you? Are you active in the Reddit threads your customers actually read?

- Set a baseline, schedule a recheck. AI visibility shifts faster than organic rankings. A 30-day recheck cadence is the minimum. Weekly is better if you’re in a competitive category.
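Once you have a baseline, a recheck is most useful when you compare it prompt by prompt. This sketch assumes each audit is stored as a map from prompt to whether your brand appeared; the prompts and results are invented. It reports the headline Visibility Rate for both runs plus the specific prompts you lost and gained.

```python
def appearance_rate(appeared: dict[str, bool]) -> float:
    """Percentage of tracked prompts where the brand appeared."""
    return 100.0 * sum(appeared.values()) / len(appeared) if appeared else 0.0


def compare_audits(baseline: dict[str, bool], recheck: dict[str, bool]) -> dict:
    """Summarize movement between two audits over the prompts both runs tracked."""
    shared = baseline.keys() & recheck.keys()
    return {
        "baseline_rate": appearance_rate({p: baseline[p] for p in shared}),
        "recheck_rate": appearance_rate({p: recheck[p] for p in shared}),
        "lost": sorted(p for p in shared if baseline[p] and not recheck[p]),
        "gained": sorted(p for p in shared if recheck[p] and not baseline[p]),
    }


# Hypothetical audits: prompt -> did the brand appear in the answer?
baseline = {
    "best crm for small teams": True,
    "crm with slack integration": True,
    "cheap crm for startups": False,
    "crm for agencies": False,
}
recheck = {
    "best crm for small teams": True,
    "crm with slack integration": False,
    "cheap crm for startups": True,
    "crm for agencies": False,
}

print(compare_audits(baseline, recheck))
```

In this made-up example the headline rate stays at 50% while two individual prompts change hands, which is exactly the kind of movement a single summary number hides.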

Conclusion

AI search visibility isn’t an extension of SEO. It runs on different logic, rewards different signals, and requires different tools to measure.

The brands that figure this out early will hold a compounding advantage. With AI-powered search traffic projected to overtake organic referrals by 2028, the question isn’t whether to care about AI visibility. It’s whether you’re measuring it now or catching up later.

Start with the audit above. Get your baseline numbers. Then you’ll know exactly what to fix, and in what order.
