Search “best AI visibility tool” and you’ll get a dozen platforms, each promising to show you exactly where your brand stands in AI search. Most of them will. But here’s the gap: knowing your brand was mentioned is not the same as knowing your brand was cited. One tells you the AI recognized your name. The other tells you whether the AI trusted your content enough to use it as evidence.

That distinction is where most platforms stop short, and where the real optimization opportunity lives.

LLM Citation Tracking Is Not the Same as Mention Tracking

When an AI recommends your brand, two separate algorithmic decisions happened. The first is the “recommendation check”: should this brand be named? The second is the “evidence check”: should this source be linked as proof?

These decisions are made independently, and they diverge more than most marketers expect. Research shows that only 28% of LLM responses include brands that were both mentioned and cited. A brand is three times more likely to earn a citation alone than to earn both at the same time.
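
To see the two checks separately in your own data, each AI answer can be sorted by whether it names the brand and whether it links the brand's domain. The sketch below is a minimal illustration. It assumes you already have the answer text and the cited URLs for each response. `classify_response`, `cites_domain`, and the bucket labels are hypothetical names, and whole-word matching on the brand name is a simplification of real entity detection.

```python
import re
from urllib.parse import urlparse


def cites_domain(cited_urls, domain):
    """True if any cited URL points at the domain or one of its subdomains."""
    domain = domain.lower()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def classify_response(answer_text, cited_urls, brand, domain):
    """Bucket one AI answer by the recommendation check and the evidence check."""
    # Recommendation check: is the brand named? (case-insensitive, whole word)
    pattern = r"\b" + re.escape(brand) + r"\b"
    mentioned = re.search(pattern, answer_text, re.IGNORECASE) is not None
    # Evidence check: is the brand's own domain among the linked sources?
    cited = cites_domain(cited_urls, domain)
    if mentioned and cited:
        return "both"
    if mentioned:
        return "mention_only"
    if cited:
        return "citation_only"
    return "neither"


print(classify_response("Rival is the best option.",
                        ["https://www.acme.com/benchmark-report"],
                        brand="Acme", domain="acme.com"))  # prints citation_only
```

The `citation_only` bucket is the case where your page supplies the evidence while another brand gets named.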

The practical consequence is significant. A competitor can win citations on your best content, using your research to substantiate their recommendation. You’ll show up in the data as a source. They’ll show up in the AI’s answer as the solution.

That’s the gap LLM citation tracking is built to close.

What AI Visibility Products Actually Track: A Breakdown

Before comparing platforms, it helps to understand what “AI visibility” can actually mean at a technical level. There are five distinct dimensions, and most tools only cover two of them.

| Dimension | What It Measures | Coverage by Most Tools |
|---|---|---|
| Brand Mention Frequency | Is your brand named in the response? | ✅ Standard |
| Citation Source Analysis | Which URLs/domains does the AI cite? | ⚠️ Limited |
| Multi-Model Coverage | Does tracking span ChatGPT, Gemini, Perplexity, etc.? | ⚠️ Varies |
| Sentiment & Narrative Framing | How does the AI describe your brand? | ✅ Common |
| Competitive Citation Gap | What % of total citations go to you vs. competitors? | ❌ Rare |

Platforms that stop at mention frequency and sentiment (dimensions 1 and 4) give you brand health data. Platforms that reach citation source analysis and the competitive citation gap (dimensions 2 and 5) give you a content strategy.
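
Once citations are collected, the competitive citation gap is simple arithmetic. You count each domain's citations across a set of answers and divide by the total. The sketch below is minimal and assumes a pooled list of cited URLs from your tracked prompts. The function names are hypothetical. Stripping a leading `www.` is a simplifying normalization, not a standard.

```python
from collections import Counter
from urllib.parse import urlparse


def normalize_host(url):
    """Lowercase hostname with a leading 'www.' removed (a simplifying assumption)."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def citation_shares(cited_urls):
    """Fraction of all citations that go to each domain."""
    counts = Counter(h for h in map(normalize_host, cited_urls) if h)
    total = sum(counts.values())
    return {host: n / total for host, n in counts.items()} if total else {}


def citation_gap(shares, ours, competitor):
    """Positive when the competitor takes a larger share of citations than we do."""
    return shares.get(competitor, 0.0) - shares.get(ours, 0.0)


# Hypothetical citations pooled from a handful of tracked answers.
urls = [
    "https://rival.io/guide",
    "https://www.rival.io/pricing",
    "https://acme.com/report",
    "https://example.net/review",
]
shares = citation_shares(urls)
print(shares)
print(citation_gap(shares, ours="acme.com", competitor="rival.io"))  # 0.25
```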

The Citation Source Dimension: Why It’s Technically Hard

LLMs don’t retrieve sources the way a search engine does. They use a multi-stage process: the query gets decomposed into sub-queries, vector embeddings find semantically similar content chunks, and a re-ranking layer asks whether a given fragment actually provides evidence for the claim. Content below a confidence threshold of roughly 0.75 gets discarded entirely.
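
The filtering step can be illustrated by letting cosine similarity stand in for the re-ranker's confidence score. This is a toy sketch, not how any specific engine works. It folds decomposition and re-ranking into a single score and uses hand-written two-dimensional vectors instead of real embeddings. The 0.75 cutoff comes from the figure above.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_evidence(query_vec, chunks, threshold=0.75):
    """Score each (text, vector) chunk, drop those below the threshold,
    and return the survivors best-first as (score, text) pairs."""
    scored = [(cosine(query_vec, vec), text) for text, vec in chunks]
    kept = [(score, text) for score, text in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[0], reverse=True)


# Toy 2-D "embeddings"; real systems use vectors with hundreds of dimensions.
chunks = [
    ("Pricing table with per-seat costs", [0.9, 0.1]),
    ("Company history and founding story", [0.1, 0.9]),
    ("General product overview", [0.6, 0.6]),
]
for score, text in select_evidence([1.0, 0.0], chunks):
    print(f"{score:.2f}  {text}")
```

The overview chunk scores about 0.71 and is discarded even though it is on topic. That is how a hard threshold can drop content that is relevant but not quite specific enough.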

On top of that, citation patterns vary dramatically across platforms: there’s only an 11% citation overlap between ChatGPT and Perplexity. Tracking one model and extrapolating to the others isn’t a strategy. It’s a guess.
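
Overlap between two engines can be measured in several ways. The sketch below uses the Jaccard index of the cited domains: shared domains divided by all distinct domains that either engine cited. This definition is an assumption for illustration, not necessarily the one behind the 11% figure, and the domain lists are hypothetical.

```python
def citation_overlap(domains_a, domains_b):
    """Jaccard index: shared cited domains / all distinct cited domains."""
    a, b = set(domains_a), set(domains_b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


chatgpt = ["wikipedia.org", "acme.com", "g2.com", "reddit.com"]
perplexity = ["reddit.com", "rival.io", "forbes.com", "techradar.com"]
print(f"{citation_overlap(chatgpt, perplexity):.0%}")  # prints 14%
```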

How Profound Actions Handles AI Visibility: Strengths and Gaps

Profound, which is backed by Sequoia, has positioned itself as the enterprise-grade solution for AI visibility, and its technical architecture justifies some of that positioning.

Its standout capability is the Conversation Explorer, which draws on licensed data from consumer panels to estimate real search volume for specific prompts across LLMs. This addresses one of the industry’s core blind spots: brands previously had no way to quantify how many people were actually asking about their category in a chat interface.

Equally notable is Agent Analytics. Via CDN integrations (Cloudflare or Akamai), Profound can identify when an AI crawler like GPTBot or ClaudeBot visits a website, then correlate that activity with subsequent citation appearances. This creates a direct feedback loop between content consumption and AI output.
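
Teams without a CDN integration can approximate part of this loop from their own access logs. The sketch below is minimal and is not Profound's implementation. It assumes log records are already parsed into `(path, user_agent)` pairs and that citation counts per path come from a tracking tool. It matches the `GPTBot` and `ClaudeBot` tokens named above. The sample user-agent strings are simplified for illustration. User-agent strings can be spoofed, so treat the result as a rough signal rather than verified crawler identification.

```python
from collections import Counter

# User-agent tokens for the two crawlers named above; extend as needed.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot")


def ai_crawler_hits(log_records):
    """Count requests per path whose user agent contains a known AI crawler token."""
    hits = Counter()
    for path, user_agent in log_records:
        if any(token in user_agent for token in AI_CRAWLER_TOKENS):
            hits[path] += 1
    return hits


def crawl_vs_citations(log_records, citations_per_path):
    """Pair AI crawl hits with citation counts for every path seen in either source."""
    hits = ai_crawler_hits(log_records)
    paths = set(hits) | set(citations_per_path)
    # Counter returns 0 for paths it has never seen.
    return {p: (hits[p], citations_per_path.get(p, 0)) for p in sorted(paths)}


# Simplified, illustrative log records.
logs = [
    ("/pricing", "Mozilla/5.0 AppleWebKit/537.36 (compatible; GPTBot/1.1)"),
    ("/pricing", "Mozilla/5.0 (Windows NT 10.0) Chrome/124.0"),
    ("/blog/benchmarks", "Mozilla/5.0 (compatible; ClaudeBot/1.0)"),
]
print(crawl_vs_citations(logs, {"/pricing": 3, "/about": 1}))
```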

On data accuracy, Profound’s “Direct Browser Capture” approach captures the actual consumer-facing UI rather than relying on API responses, which often omit real-time formatting and links. They report a 95-97% accuracy rate in reproducing ChatGPT’s shopping behavior.

That said, several gaps matter depending on your team’s size and setup.

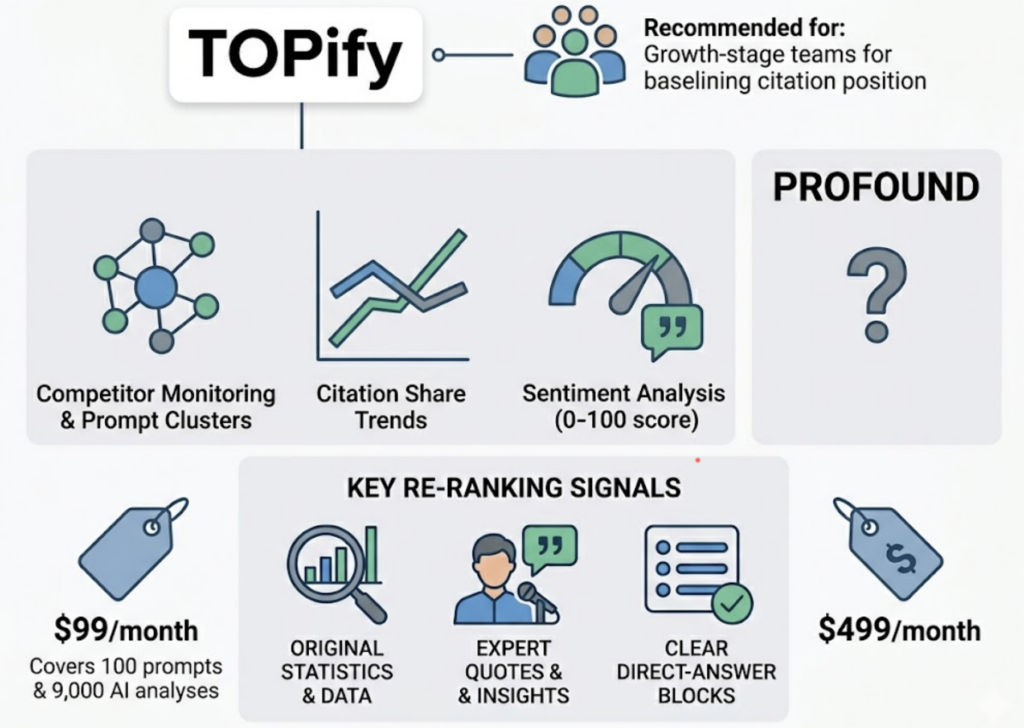

Profound’s data accuracy is strong at the response level but limited at the website analytics level: brands without complex CDN configurations get less granular visibility into their own crawl data. Its model coverage is genuinely broad at 10+ engines, but full coverage is locked behind custom-priced enterprise tiers. The Lite plan at $499/month covers only four platforms.

On the execution side, Profound’s competitor analysis, including what Conversation Explorer surfaces, is informative but not always actionable. Users consistently note that dashboards surface gaps without guiding the content or technical response, and the “Opportunities” section in growth plans is often limited to a handful of items at a time.

How Other AI Visibility Products Compare: GAIO.tech, Hotwire, and More

The broader competitive landscape splits into methodology-led platforms and narrative-focused tools, each addressing a different part of the same problem.

GAIO.tech takes a framework approach, built around a 5-pillar model: GEO (technical content readability), SEO (traditional authority foundation), AEO (answer engine optimization), GO (geographic nuance), and E-E-A-T (trust signal development). Their core metric is a weighted AI Share of Voice formula that divides brand mentions by total industry mentions. This auditable structure appeals to CMOs who need to present AI strategy to a board. The tradeoff is that it’s more of a strategic diagnostic than an operational tool.
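
As a formula, weighted Share of Voice is the sum of the brand's mention weights divided by the sum of all mention weights in the category. GAIO.tech's actual weighting is not described here. The sketch therefore assumes each mention comes with a hypothetical numeric weight, for example a higher value for a first-position recommendation. With every weight set to 1, it reduces to brand mentions divided by total industry mentions.

```python
def weighted_share_of_voice(mentions, brand):
    """mentions: (brand_name, weight) pairs collected across a category's prompts.
    Returns the brand's weighted mentions divided by all weighted mentions."""
    total = sum(weight for _, weight in mentions)
    if total == 0:
        return 0.0
    ours = sum(weight for name, weight in mentions if name == brand)
    return ours / total


# Hypothetical weights: 2.0 for a first-position mention, 1.0 otherwise.
mentions = [("Acme", 2.0), ("Rival", 1.0), ("Acme", 1.0), ("Other", 2.0), ("Rival", 2.0)]
print(f"{weighted_share_of_voice(mentions, 'Acme'):.1%}")  # prints 37.5%
```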

Hotwire Spark approaches AI visibility from the communications side. Rather than tracking citation URLs, it focuses on which trade media, high-impact blogs, and analyst voices are shaping what LLMs understand about a category. Their Hotwire Radiate tool adds a content layer: upload a press release or case study, and it generates an “AI-citability score” along with an optimized version. AI systems often extract only 1-3 sentences from any given source, so the focus on “quotability” at the sentence level is well-grounded technically.

Neither platform focuses heavily on reverse-engineering competitor citation sources, which is where the practical content strategy work tends to live.

| Platform | Core Focus | Citation Source Analysis | Model Coverage | Best For |
|---|---|---|---|---|
| Profound | Enterprise intelligence | Partial (CDN-dependent) | 10+ engines | Large teams, governance use cases |
| GAIO.tech | Strategy / Share of Voice | Indirect | Core platforms | CMOs, board-level reporting |
| Hotwire Spark | PR / Narrative influence | Content-level (Radiate) | Core platforms | Comms and PR teams |
| Topify | Performance + citation gaps | URL-level reverse engineering | ChatGPT, Gemini, Perplexity, DeepSeek | Growth teams, content strategists |

How Topify Tracks LLM Citations Across AI Platforms

Topify was built around the citation layer specifically. Rather than starting with brand mention tracking and adding citations as a secondary feature, the platform’s Source Analysis function identifies the specific domains and URLs that AI engines cite for high-intent queries.

The practical output is a Citation Share metric: the percentage of prompts where a given domain is linked. Research suggests Citation Share is a more accurate predictor of referral traffic than brand mention rate, which makes it a more direct input to content investment decisions.
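
As defined here, Citation Share is a per-prompt rate: the number of prompts whose answer links the domain at least once, divided by the number of prompts tracked. The sketch below illustrates the metric and is not Topify's code. It assumes results arrive as a mapping from each prompt to its cited URLs and, by assumption, counts subdomains toward the parent domain.

```python
from urllib.parse import urlparse


def links_domain(url, domain):
    """True if the URL's host is the domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    return host == domain or host.endswith("." + domain)


def citation_share(results, domain):
    """Fraction of tracked prompts whose answer links the domain at least once.
    results maps each prompt to the list of URLs cited in its answer."""
    if not results:
        return 0.0
    linked = sum(
        1 for urls in results.values() if any(links_domain(u, domain) for u in urls)
    )
    return linked / len(results)


# Hypothetical tracking results.
results = {
    "best crm for startups": ["https://www.acme.com/crm-guide", "https://g2.com/crm"],
    "crm pricing comparison": ["https://rival.io/pricing"],
    "how to migrate crm data": [],
    "acme vs rival": ["https://acme.com/compare", "https://acme.com/pricing"],
}
print(f"{citation_share(results, 'acme.com'):.0%}")  # prints 50%
```

The last prompt counts once even though it links two Acme pages. This is the difference between a per-prompt share and the per-citation share of total citations.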

What makes this operationally useful is the reverse-engineering workflow. If a competitor is being cited more frequently, Topify traces the specific URL being cited. Analysis might reveal that the AI prefers that page because it opens with a 40-60 word BLUF (bottom line up front) answer or contains a well-structured data table. Those are structural decisions the content team can reproduce.
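
The BLUF pattern is easy to check mechanically. The sketch below is a hypothetical helper, not part of any tool. It counts the words in a page's first paragraph, assuming paragraphs are separated by blank lines, and tests whether the count falls in the 40-60 word range mentioned above. Word count alone cannot confirm that the paragraph actually answers the query.

```python
def first_paragraph_word_count(page_text):
    """Word count of the first non-empty paragraph (paragraphs split on blank lines)."""
    paragraphs = [p.strip() for p in page_text.split("\n\n") if p.strip()]
    return len(paragraphs[0].split()) if paragraphs else 0


def has_bluf_answer(page_text, min_words=40, max_words=60):
    """True if the opening paragraph falls within the target answer-block length."""
    return min_words <= first_paragraph_word_count(page_text) <= max_words


page = " ".join(["word"] * 48) + "\n\nSupporting detail follows here."
print(has_bluf_answer(page))  # prints True
```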

Cross-model consensus adds another layer. If ChatGPT, Gemini, and Perplexity all cite the same external source, that source has high cross-model authority, making it the highest-priority target for displacement or outreach. Topify surfaces this pattern across the “Core 4” platforms that drive the majority of commercial AI search volume.
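
Cross-model consensus is a set intersection over each engine's cited domains. The sketch below is minimal and assumes you have a list of cited domains for each engine. The engine keys and domains are illustrative. Ranking domains by how many engines cite them is one reasonable way to order outreach targets, not a documented Topify method.

```python
from collections import Counter


def consensus_ranking(citations_by_model):
    """Rank cited domains by how many engines cite them (each engine counted once)."""
    counts = Counter()
    for domains in citations_by_model.values():
        counts.update(set(domains))
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


def cross_model_consensus(citations_by_model):
    """Domains cited by every engine in the input."""
    n_models = len(citations_by_model)
    return [domain for domain, n in consensus_ranking(citations_by_model) if n == n_models]


citations = {
    "chatgpt": ["g2.com", "rival.io", "wikipedia.org"],
    "gemini": ["g2.com", "forbes.com", "rival.io"],
    "perplexity": ["g2.com", "reddit.com"],
}
print(cross_model_consensus(citations))  # ['g2.com']
print(consensus_ranking(citations)[:2])  # [('g2.com', 3), ('rival.io', 2)]
```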

For teams tracking competitive position alongside citations, Topify’s Competitor Monitoring automatically detects rivals appearing in the same prompt clusters and shows how citation share shifts over time. Paired with Sentiment Analysis (0-100 scoring), you can tell whether a citation gain came with a favorable framing or not.

On pricing, Topify starts at $99/month, covering 100 prompts and 9,000 AI answer analyses, compared to Profound’s $499/month entry tier. For growth-stage teams that need to baseline their citation position before committing to an enterprise contract, the cost structure makes early adoption a reasonable decision.

3 SEO Strategies That Work When You Can See the Citation Layer

Understanding citation tracking data is most useful when it drives a specific content action. Three strategies tend to generate the clearest return.

Strategy 1: Source displacement through content quality. Using citation data, identify the top domains that appear instead of your brand for high-intent prompts. Citation selection tends to reward information density, meaning more unique entities and verifiable data points per word. If a competitor’s page is winning citations because it has a tight, fact-dense answer block, that’s a reproducible content structure. Dense listicles earn AI citations roughly 25% of the time versus 11% for thinner opinion pieces. That’s not a style preference; it’s a structural signal.
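
The source does not specify a density formula, so the sketch below uses a crude, hypothetical proxy. Distinct capitalized tokens stand in for entities, tokens containing a digit stand in for verifiable data points, and their sum is divided by the total word count. Treat it as a quick way to compare two pages, not as a model of how any engine scores content.

```python
import string


def density_proxy(text):
    """Hypothetical information-density proxy:
    (distinct capitalized tokens + tokens containing a digit) / total tokens.
    Capitalized words stand in for named entities (sentence-initial words are
    miscounted), and digit-bearing tokens stand in for verifiable data points."""
    tokens = text.split()
    if not tokens:
        return 0.0
    cleaned = [t.strip(string.punctuation) for t in tokens]
    entities = {t for t in cleaned if t and t[0].isupper()}
    data_points = sum(1 for t in cleaned if any(ch.isdigit() for ch in t))
    return (len(entities) + data_points) / len(tokens)


dense = "Acme cut onboarding time 38% across 1,200 Salesforce accounts in 2023."
thin = "We think our approach is simply better for most teams out there."
print(round(density_proxy(dense), 2), round(density_proxy(thin), 2))
```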

Strategy 2: Connecting citation tracking to revenue. AI citation click-through rates are typically below 1%, which makes it easy to deprioritize citation work. The counterargument is conversion quality. Users who do click from an AI citation convert at 4.4x the rate of traditional organic search visitors, because the AI has already completed the research phase for them. Integrating Topify’s citation data with GA4 lets teams track whether citation gains correlate with branded search volume spikes, the most common downstream signal of AI-driven awareness.
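
You can check that correlation with two weekly series: citation share exported from your tracker and branded search volume from your analytics stack. The sketch below is minimal and assumes both exports already exist as lists, so no GA4 or Topify API is called. It computes a Pearson correlation with an optional lag, because branded searches may trail citation gains by a week or more. The sample numbers are hypothetical. A correlation alone does not show that citations caused the searches.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("series must not be constant")
    return cov / math.sqrt(var_x * var_y)


def lagged_correlation(citation_share, branded_searches, lag_weeks=0):
    """Correlate citation share in week t with branded searches in week t + lag_weeks."""
    if lag_weeks < 0:
        raise ValueError("lag_weeks must be non-negative")
    n = min(len(citation_share), len(branded_searches) - lag_weeks)
    return pearson(citation_share[:n], branded_searches[lag_weeks:lag_weeks + n])


# Hypothetical weekly exports: citation share (%) and branded search volume.
share = [12, 14, 13, 18, 21, 22]
searches = [400, 410, 450, 440, 520, 600]
for lag in (0, 1):
    print(lag, round(lagged_correlation(share, searches, lag), 2))
```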

Strategy 3: Freshness cycling to maintain retrieval strength. AI visibility is volatile: only 30% of brands maintain consistent presence across consecutive queries, and 65% of AI bot crawl activity targets content published within the past year. A freshness cycling approach, updating key pages every 30-90 days with new statistics, updated schema dates, and additional FAQs, sustains “retrieval strength” without requiring a full content overhaul. Tools like Topify’s AI Volume Analytics surface which prompts are generating the most crawl activity, so freshness effort can be concentrated where it matters.
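
A freshness cycle can run from a simple queue. First, flag pages whose last update is older than the chosen window (90 days here, the upper end of the 30-90 day range). Then sort them by a priority score such as observed AI crawl activity. The function name, the priority mapping, and the sample pages are all hypothetical.

```python
from datetime import date, timedelta


def refresh_queue(last_updated, today, crawl_activity=None, max_age_days=90):
    """Pages not updated within max_age_days, highest crawl activity first.
    last_updated maps URL -> date of last substantive update;
    crawl_activity (optional) maps URL -> a priority score such as AI crawl hits."""
    crawl_activity = crawl_activity or {}
    cutoff = today - timedelta(days=max_age_days)
    stale = [url for url, updated in last_updated.items() if updated < cutoff]
    # Highest priority first; ties broken alphabetically for a stable order.
    return sorted(stale, key=lambda url: (-crawl_activity.get(url, 0), url))


pages = {
    "/guides/ai-visibility": date(2024, 5, 20),
    "/blog/citation-benchmarks": date(2024, 1, 8),
    "/compare/acme-vs-rival": date(2023, 11, 30),
}
print(refresh_queue(pages, today=date(2024, 6, 1),
                    crawl_activity={"/compare/acme-vs-rival": 42,
                                    "/blog/citation-benchmarks": 7}))
# ['/compare/acme-vs-rival', '/blog/citation-benchmarks']
```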

Conclusion

The difference between AI visibility tools isn’t primarily about dashboards or pricing tiers. It’s about whether the platform reaches the citation layer (the specific URLs the AI trusts as evidence) or stops at brand mentions.

For teams that need board-level reporting and deep enterprise integration, Profound covers more ground, at a higher cost and setup overhead. For comms and PR functions, Hotwire’s narrative focus makes more sense. For growth teams that need to turn citation data into content decisions quickly, Topify’s URL-level reverse engineering at a $99/month entry point is a practical starting place.

The immediate action: audit what your current tool actually measures. If it doesn’t show you which URLs the AI is citing, you’re optimizing for awareness without touching the trust layer. Get started with Topify to baseline your citation share before your competitors do.

FAQ

Q: What is LLM citation tracking and why does it matter for SEO? A: LLM citation tracking monitors which external URLs and domains AI systems use to support their answers. It matters because traditional organic traffic is projected to decline significantly as AI Overviews expand, and citations are currently the primary mechanism for earning referral traffic and trust signals in generative search environments.

Q: How do Profound AI visibility products handle data accuracy for citation analysis? A: Profound uses direct browser capture rather than API polling, which means it reproduces the actual consumer-facing interface including real-time source links that APIs sometimes omit. However, their website-level citation analytics depend on CDN integrations like Cloudflare or Akamai, which limits accuracy for smaller brands without that infrastructure in place.

Q: What’s the difference between AI visibility tracking and LLM citation tracking? A: AI visibility tracking covers the full range of brand presence: mentions, sentiment, position, and share of voice. LLM citation tracking specifically targets the “evidence layer,” identifying which websites the AI uses as factual grounding. A brand can have strong mentions and zero citations, which creates a trust gap and limits referral traffic regardless of how often the AI recommends the brand by name.

Q: Which AI visibility products offer the best model coverage for generative engine optimization? A: Profound leads on raw coverage with 10+ engines, including models such as Rufus and DeepSeek, though full access requires enterprise pricing. Topify focuses on the core commercial platforms (ChatGPT, Gemini, Perplexity, DeepSeek) that generate the majority of high-intent queries, which is sufficient for most growth-stage teams evaluating citation strategy.