The first thing most brands do when they hear about AI citation monitoring is look themselves up on ChatGPT. They type in their brand name, see it appear, and assume everything’s fine.

That’s the wrong test. The right question isn’t “does AI know I exist?” It’s “when a potential customer asks ChatGPT to recommend a solution in my category, does my brand show up?” Those are very different prompts. And for most brands, the second one returns a list of competitors.

Here’s how to build a measurement and monitoring system that answers the right question, across the platforms that actually matter.

Your Google Rankings Don’t Tell You What AI Is Saying

Most teams assume strong SEO performance carries over into AI search. The data says otherwise.

An analysis of 1.9 million AI citations found that only 12% of AI-cited content also appears in Google’s top 10 results. More striking: roughly 80% of AI citations come from pages that don’t rank on Google’s first page at all.

That’s not a minor gap. That’s a completely separate system operating by different rules.

The decoupling goes deeper when you factor in zero-click behavior. When Google AI Overviews appear at the top of a results page, the click-through rate for the #1 organic result drops by 58%. In high-traffic informational queries, that number reaches 64%.

So brands are simultaneously losing traffic to AI summaries and being excluded from those summaries. Measurement and monitoring only begins to matter once you accept that these two systems require separate tracking.

Citations vs. Mentions: What You’re Actually Tracking

Before setting up any monitoring workflow, it’s worth being precise about what you’re measuring. The two metrics serve different purposes and diagnose different problems.

Citations are when an AI engine explicitly attributes information to your website, usually through a footnote, source link, or sidebar card. They’re the only mechanism that drives actual referral traffic from AI. When AI systems decide whether to cite a source, they assess “hallucination risk”: content with structured technical details, original research data, or expert testimony gets treated as a “source of truth” and cited more often.

Mentions are when an AI says your brand name in the generated text without necessarily linking to you. A mention signals that your brand has strong entity association in the model’s knowledge base. If AI answers “What’s the best CRM for enterprise?” with your brand name unprompted, that means you’ve built real category authority in the training data or retrieval pool, even without a citation link.

Both matter. Citations drive traffic. Mentions build category positioning. A brand that gets mentioned but not cited is building awareness without capturing revenue. A brand that gets cited but rarely mentioned may be winning tactical queries while losing category-level authority.

Step 1: Build a Prompt Matrix Before You Run a Single Query

Most monitoring setups fail because they start with the wrong inputs. Running queries using your brand name as the prompt tells you almost nothing useful.

What you actually need is a Prompt Matrix: a structured library of the questions your target customers are asking AI before they’ve even thought of your brand.

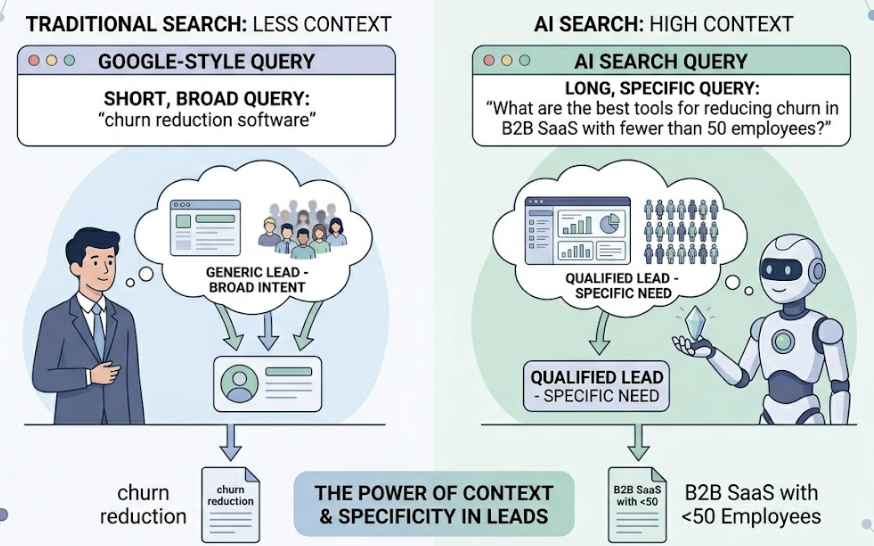

AI search queries average 23 words in length, compared to Google’s 4. That length carries context. Someone asking “What are the best tools for reducing churn in B2B SaaS with fewer than 50 employees?” is a very different lead from someone who types “churn reduction software.” Your prompt library needs to reflect that specificity.

Build prompts across three stages of the customer journey:

- Problem Unaware: “Why is our customer retention rate dropping even after product improvements?”

- Problem Aware: “How do enterprise SaaS companies usually reduce involuntary churn?”

- Solution Aware: “What’s the difference between [Your Brand] and [Competitor] for enterprise churn prevention?”

Industry benchmarks suggest a minimum of 20-30 core prompts per category for baseline monitoring. For larger brands managing multiple product lines or markets, that number typically runs between 100 and 300, with dynamic adjustments as AI recommendation patterns shift.

Don’t skip contextual modifiers. Adding conditions like “for a team of 20,” “under $500/month,” or “compatible with Salesforce” changes AI recommendation outputs significantly. A brand can dominate unmodified queries and disappear completely once a budget or tech stack constraint is added.
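If you are building the matrix by hand, crossing every base prompt with every modifier is a quick way to avoid gaps. The sketch below is one way to do that, assuming a flat dictionary of stage-tagged prompt templates. The brand names `Acme` and `Globex` and the function `build_prompt_matrix` are hypothetical placeholders, not part of any tool.

```python
from itertools import product

# Hypothetical base prompts keyed by journey stage (taken from the examples above).
BASE_PROMPTS = {
    "problem_unaware": "Why is our customer retention rate dropping even after product improvements?",
    "problem_aware": "How do enterprise SaaS companies usually reduce involuntary churn?",
    "solution_aware": "What's the difference between {brand} and {competitor} for enterprise churn prevention?",
}

# Contextual modifiers; None keeps the unmodified baseline prompt in the matrix.
MODIFIERS = [None, "for a team of 20", "under $500/month", "compatible with Salesforce"]


def build_prompt_matrix(base_prompts, modifiers, brand, competitor):
    """Expand every base prompt by every modifier into (stage, modifier, prompt) rows."""
    rows = []
    for (stage, template), modifier in product(base_prompts.items(), modifiers):
        prompt = template.format(brand=brand, competitor=competitor)
        if modifier:
            # Insert the constraint just before the trailing question mark.
            prompt = prompt.rstrip("?") + f" {modifier}?"
        rows.append({"stage": stage, "modifier": modifier, "prompt": prompt})
    return rows


matrix = build_prompt_matrix(BASE_PROMPTS, MODIFIERS, "Acme", "Globex")
print(len(matrix))  # 3 base prompts x 4 modifier options = 12
```

Keeping `None` in the modifier list matters: it lets you compare each constrained prompt against its unconstrained baseline and spot the brands that drop out once a budget or tech-stack condition is added.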

Topify’s AI Volume Analytics continuously surfaces high-value prompts as AI recommendation behavior evolves, which removes the manual guesswork of deciding which queries are worth tracking in the first place.

Step 2: Run Queries Across Multiple AI Platforms

Monitoring only ChatGPT is a common shortcut that produces misleading data.

Each major AI platform uses different retrieval logic and citation preferences. Tracking brand performance on one while ignoring the others means you’re measuring a fraction of where your customers are actually finding recommendations.

Here’s how the major platforms differ:

| Platform | Citation Logic | Market Position |
|---|---|---|
| ChatGPT | Training data + Bing real-time search layer. Favors structured, long-form content | 78.16% AI search share |
| Google Gemini / AI Overviews | Deeply integrated with Google’s index. Prioritizes E-E-A-T signals | 8.65% share; highest impact on traditional search traffic |
| Perplexity | Pure RAG architecture. Strong recency bias, heavy Reddit and news weighting | 7.07% share; high-value professional users |
| Microsoft Copilot | Bing-indexed. Sensitive to LinkedIn and professional social signals | 3.19% share; strong enterprise penetration |

The math behind manual monitoring is its own argument for automation. If you’re tracking 200 prompts across 4 platforms, that’s 800 queries per pass. At the weekly cadence recommended in the FAQ, it’s more than 3,200 queries a month, each requiring manual reading, citation extraction, and sentiment assessment. There’s no practical way to maintain historical trend data at that volume without tooling.
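The volume arithmetic is easy to script, which helps when you are deciding how many prompts, platforms, and runs you can realistically afford. This is a minimal sketch; the `samples_per_prompt` parameter is an assumption of ours, reflecting the common practice of re-running a prompt several times because AI answers can vary between runs.

```python
def monthly_query_volume(prompts: int, platforms: int, runs_per_month: int,
                         samples_per_prompt: int = 1) -> int:
    """Queries needed per month: every prompt, on every platform, on every run."""
    return prompts * platforms * runs_per_month * samples_per_prompt


single_pass = monthly_query_volume(200, 4, runs_per_month=1)
weekly = monthly_query_volume(200, 4, runs_per_month=4)
weekly_sampled = monthly_query_volume(200, 4, runs_per_month=4, samples_per_prompt=3)
print(single_pass, weekly, weekly_sampled)  # 800 3200 9600
```

Each extra run or repeated sample multiplies the workload linearly, which is why cadence decisions tend to push teams toward automation.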

Topify’s Visibility Tracking handles cross-platform coverage automatically and flags competitor changes in real time. In practice, that efficiency gap tends to be the difference between brands that have current data and brands that are working from impressions.

Step 3: Separate Citations from Mentions in the Data

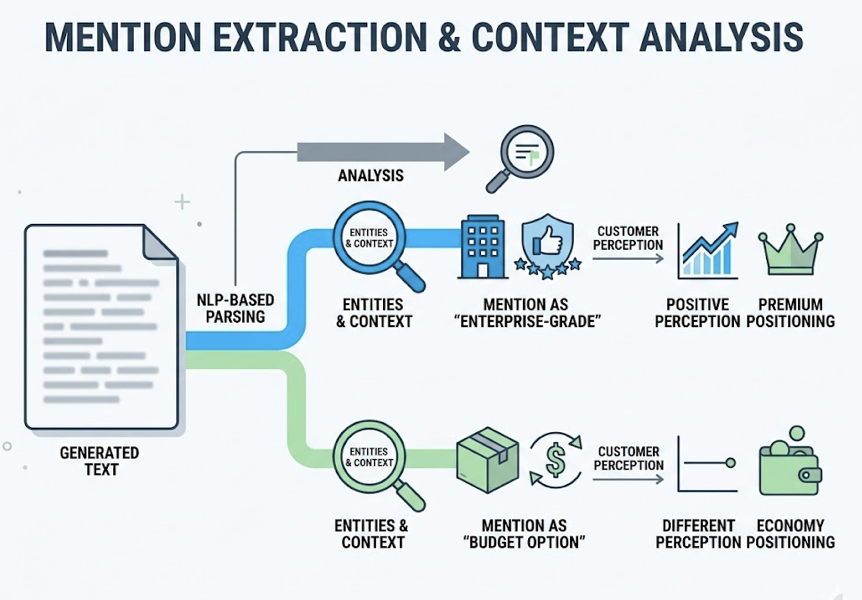

Raw query results need to be processed before they’re useful. The goal in this step is to split what you’ve collected into two distinct data types: traffic-driving citations and brand-positioning mentions.

For citation identification: Parse the HTML or footnote links that AI platforms attach to their answers. Each source link points to a specific domain and URL. That data tells you not just whether you’re being cited, but which type of content AI treats as credible enough to reference.
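A minimal sketch of the citation side, assuming you have already captured each answer as HTML in which source links are ordinary `<a href>` tags. How answers are captured, and in what format, varies by platform, so treat the input shape as an assumption. The function `extract_citations` and the domain `acme.com` are hypothetical; only Python’s standard library is used.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags in an answer's HTML."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def extract_citations(answer_html: str, own_domain: str):
    """Return (unique cited domains in order, whether own_domain is among them)."""
    parser = LinkCollector()
    parser.feed(answer_html)
    parser.close()
    domains = []
    for href in parser.hrefs:
        host = urlparse(href).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host and host not in domains:
            domains.append(host)
    own = own_domain.lower()
    # Count subdomains (e.g. blog.acme.com) as citations of the brand's site.
    cited = any(d == own or d.endswith("." + own) for d in domains)
    return domains, cited


html = ('<p>Try Acme <a href="https://www.acme.com/pricing">[1]</a> '
        'or see <a href="https://www.g2.com/x">[2]</a>.</p>')
domains, cited = extract_citations(html, "acme.com")
print(domains, cited)  # ['acme.com', 'g2.com'] True
```

Keeping the full domain list, not just the yes/no flag, is what lets you see which third-party sources AI treats as credible in your category.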

Topify’s Source Analysis feature reverse-engineers the third-party domains that drive competitor citations, which turns a general awareness of “we’re not being cited enough” into a specific list of target publications for your PR and content strategy.

For mention extraction: Use NLP-based parsing to pull brand entity references from the generated text. Focus especially on the context around each mention. Being mentioned as “an enterprise-grade solution” vs. “a budget option” produces very different downstream effects on customer perception, even if both appear in the same query results.
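Production setups usually rely on a named-entity recognition model for this step. As a simpler stand-in, the sketch below matches the brand name with a regular expression and tags the sentence it appears in with positioning descriptors. The `DESCRIPTORS` lists are hypothetical and should be replaced with the terms that matter in your category. Working at sentence level keeps one brand’s descriptors from bleeding into a neighboring brand’s mention.

```python
import re

# Hypothetical positioning descriptors; replace with terms that matter in your category.
DESCRIPTORS = {
    "enterprise": ["enterprise-grade", "enterprise", "large teams"],
    "budget": ["budget", "affordable", "cheap", "low-cost"],
}


def extract_mentions(answer_text: str, brand: str):
    """Return each sentence that names the brand, tagged with any descriptors it contains."""
    sentences = re.split(r"(?<=[.!?])\s+", answer_text)
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    mentions = []
    for index, sentence in enumerate(sentences):
        if pattern.search(sentence):
            lowered = sentence.lower()
            labels = sorted(
                label for label, terms in DESCRIPTORS.items()
                if any(term in lowered for term in terms)
            )
            mentions.append({"sentence_index": index, "sentence": sentence, "labels": labels})
    return mentions


text = "Acme is an enterprise-grade option. Globex is the budget pick."
print(extract_mentions(text, "Acme")[0]["labels"])    # ['enterprise']
print(extract_mentions(text, "Globex")[0]["labels"])  # ['budget']
```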

Once the data is separated, layer it across three dimensions:

- By prompt type: Are you getting cited in informational queries but disappearing in transactional ones?

- By platform: Which AI engine is citing you most, and why?

- By competitive position: Of all brand mentions in your category, what share is yours?
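The first two dimensions come down to a grouped rate over the same result records. A minimal sketch, assuming each processed query result is stored as a dictionary with `prompt_type`, `platform`, and boolean fields such as `cited`. The field names and the sample records are illustrative, not real data.

```python
from collections import defaultdict


def rate_by(records, key, field):
    """Share of records where `field` is true, grouped by the value of `key`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        totals[record[key]] += 1
        hits[record[key]] += bool(record[field])
    return {group: hits[group] / totals[group] for group in totals}


# Illustrative records only.
records = [
    {"prompt_type": "informational", "platform": "ChatGPT", "cited": True},
    {"prompt_type": "informational", "platform": "Perplexity", "cited": True},
    {"prompt_type": "transactional", "platform": "ChatGPT", "cited": False},
    {"prompt_type": "transactional", "platform": "Perplexity", "cited": True},
]
print(rate_by(records, "prompt_type", "cited"))  # {'informational': 1.0, 'transactional': 0.5}
print(rate_by(records, "platform", "cited"))     # {'ChatGPT': 0.5, 'Perplexity': 1.0}
```

Swapping `field` for a mention flag gives the same breakdown for mentions, so one helper covers both data types.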

That last metric, Share of Voice in AI answers, is often more actionable than raw visibility numbers. A brand can have 40% visibility and still be losing to a competitor who appears first in 80% of the queries that matter.
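To see how visibility and first position can diverge, here is a minimal sketch that computes Share of Voice, visibility, and first-position rate from the ordered list of brands named in each answer. The input format and the sample answers are assumptions for illustration; the brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(answers):
    """Each brand's share of all brand mentions across answers."""
    counts = Counter(brand for brands in answers for brand in brands)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()}


def visibility(answers, brand):
    """Share of answers that mention the brand at all."""
    return sum(brand in brands for brands in answers) / len(answers)


def first_position_rate(answers, brand):
    """Share of answers in which the brand is named first."""
    return sum(bool(brands) and brands[0] == brand for brands in answers) / len(answers)


# Each inner list holds the brands in the order an answer names them (illustrative).
answers = [["Globex", "Acme"], ["Globex"], ["Globex", "Acme", "Initech"], ["Acme"], ["Globex"]]
print(visibility(answers, "Acme"))               # 0.6
print(first_position_rate(answers, "Globex"))    # 0.8
print(share_of_voice(answers)["Acme"])           # 0.375
```

In this toy data, Acme is visible in 60% of answers, yet Globex is named first in 80% of them.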

The 4 Metrics That Tell You If Your Measurement & Monitoring Is Working

Once the data pipeline is established, these are the numbers worth tracking consistently:

Citation Rate is the percentage of relevant prompts where AI provides a link to your website. This is the direct measurement of AI-driven referral traffic potential. Low citation rate with high mention frequency means AI knows you exist but doesn’t trust your content enough to send users there.

Mention Frequency tracks how often your brand name appears in AI answers across your prompt library. This reflects category-level authority and is a leading indicator of future citation performance.

Position in Answer measures where your brand appears within a multi-brand recommendation. Research suggests that first-position mentions earn 1.5 to 2 times the clicks and trust of third-position mentions. Being in the answer isn’t enough: position within the answer matters.
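The first three metrics can be computed from the same per-prompt records. A minimal sketch, assuming a hypothetical `PromptResult` record that stores the cited domains and the brands in the order the answer names them. Here, mention frequency is operationalized as the share of prompts whose answer names the brand; other definitions, such as raw mention counts, are equally valid.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Optional


@dataclass
class PromptResult:
    prompt: str
    platform: str
    cited_domains: List[str] = field(default_factory=list)
    brands_in_order: List[str] = field(default_factory=list)  # order of appearance in the answer


def citation_rate(results, own_domain):
    """Share of prompts whose answer links to your domain."""
    return sum(own_domain in r.cited_domains for r in results) / len(results)


def mention_frequency(results, brand):
    """Share of prompts whose answer names your brand."""
    return sum(brand in r.brands_in_order for r in results) / len(results)


def average_position(results, brand) -> Optional[float]:
    """Mean 1-based position of the brand in answers that name it, or None if never named."""
    positions = [r.brands_in_order.index(brand) + 1 for r in results if brand in r.brands_in_order]
    return mean(positions) if positions else None


# Illustrative results only.
results = [
    PromptResult("churn reduction tools for B2B SaaS?", "ChatGPT", ["acme.com"], ["Acme", "Globex"]),
    PromptResult("churn software under $500/month?", "ChatGPT", ["g2.com"], ["Globex", "Initech", "Acme"]),
    PromptResult("churn tools compatible with Salesforce?", "Perplexity", [], ["Globex"]),
    PromptResult("Acme vs Globex for churn prevention?", "Perplexity", ["acme.com", "g2.com"], ["Globex", "Acme"]),
]
print(citation_rate(results, "acme.com"))   # 0.5
print(mention_frequency(results, "Acme"))   # 0.75
print(average_position(results, "Acme"))    # 2
```

In this sample, the mention frequency is higher than the citation rate, which is the "AI knows you exist but doesn’t send users to you" pattern described above.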

Sentiment Score quantifies how AI describes your brand. Topify’s NLP-based scoring translates qualitative brand descriptions into a 0-100 score. A brand that consistently appears in AI answers as “a solid mid-market option” when its actual positioning is enterprise-grade has a data problem, not just a perception problem. High visibility with low sentiment is a net negative.
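Topify’s scoring method isn’t described here, so the following is not a reconstruction of it. It is a deliberately simple lexicon-based sketch that shows how qualitative descriptions can be mapped onto a 0-100 scale, with 50 as neutral. The word lists are hypothetical; a real system would more likely use a trained sentiment model.

```python
import re

# Hypothetical lexicon for illustration only.
POSITIVE = {"leading", "reliable", "powerful", "best", "trusted", "enterprise-grade"}
NEGATIVE = {"outdated", "limited", "expensive", "clunky", "buggy", "basic"}


def sentiment_score(descriptions):
    """Map positive/negative term counts across descriptions to 0-100 (50 = neutral)."""
    pos = neg = 0
    for text in descriptions:
        # Keep hyphenated terms such as "enterprise-grade" as single tokens.
        tokens = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
        pos += sum(token in POSITIVE for token in tokens)
        neg += sum(token in NEGATIVE for token in tokens)
    if pos + neg == 0:
        return 50.0
    return round(50 + 50 * (pos - neg) / (pos + neg), 1)


print(sentiment_score(["a reliable, enterprise-grade platform", "somewhat expensive for small teams"]))  # 66.7
print(sentiment_score(["a solid mid-market option"]))  # 50.0
```

The second example shows the limitation of pure sentiment: “a solid mid-market option” scores neutral, even though it may misstate your positioning. That is why the descriptor tagging from the mention-extraction step is worth tracking alongside the score.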

What Low Citation Rates Are Actually Telling You

When citation rates underperform across a prompt category, it usually points to one of two structural issues.

Content that isn’t machine-readable: AI retrieval systems, especially RAG-based architectures like Perplexity, favor content that puts conclusions first. A long-form article that buries its key finding in paragraph eight is technically correct but practically uncitable. The fix is restructuring key pages so the first 1-3 sentences under each heading deliver a complete, extractable answer. JSON-LD structured data that explicitly labels entity relationships also increases citation probability meaningfully.
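For the structured-data fix, a schema.org `Organization` block with `sameAs` links is a common way to tie your brand entity to independent profiles. The sketch below generates one with Python’s `json` module. The brand name, URLs, and description are hypothetical placeholders; which properties you include should follow the schema.org vocabulary for your entity type.

```python
import json


def organization_jsonld(name, url, same_as, description=None):
    """Build a schema.org Organization object serialized as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # independent profiles that refer to the same entity
    }
    if description:
        data["description"] = description
    return json.dumps(data, indent=2)


snippet = organization_jsonld(
    "Acme",
    "https://www.acme.com",
    ["https://www.linkedin.com/company/acme", "https://www.g2.com/products/acme"],
    "Churn prevention platform for enterprise SaaS teams.",
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```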

Missing third-party validation: AI systems treat cross-source validation as a signal of factual reliability. If the only place AI can find information about your brand is your own website, it tends to avoid citing you, not because your content is wrong, but because it can’t verify the claim from an independent source.

The platform distribution data makes this concrete. Brands’ own websites typically account for less than 10% of AI citations. The rest come from community platforms like Reddit and Quora, professional review sites like G2 and Capterra, and mainstream media. Reddit’s influence has grown especially fast since its data licensing agreements with major AI providers: a well-ranked Reddit thread in your product category can generate more AI citation weight than a dozen brand blog posts.
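To check where your own citations fall on this spectrum, you can bucket every cited domain into a source category. A minimal sketch, assuming domains have already been normalized (for example, `www.` stripped). The `SOURCE_CATEGORIES` mapping, the media list, and the sample domains are illustrative assumptions.

```python
from collections import Counter

# Hypothetical mapping; extend it with the domains that appear in your own citation data.
SOURCE_CATEGORIES = {
    "reddit.com": "community",
    "quora.com": "community",
    "g2.com": "review",
    "capterra.com": "review",
}


def source_mix(cited_domains, own_domain, media_domains=frozenset()):
    """Share of citations by source category: own_site, community, review, media, other."""
    def categorize(domain):
        if domain == own_domain:
            return "own_site"
        if domain in SOURCE_CATEGORIES:
            return SOURCE_CATEGORIES[domain]
        if domain in media_domains:
            return "media"
        return "other"

    counts = Counter(categorize(d) for d in cited_domains)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


# Illustrative citation log for one prompt category.
domains = ["reddit.com", "g2.com", "acme.com", "reddit.com", "capterra.com",
           "nytimes.com", "reddit.com", "quora.com", "g2.com", "techcrunch.com"]
print(source_mix(domains, "acme.com", media_domains={"nytimes.com", "techcrunch.com"}))
```

Running the same breakdown on competitor citations shows which community threads and review profiles are doing the validating for them.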

Princeton University GEO research found that content with statistical evidence, technical explanations, and expert citations gets cited 30-40% more often than content without those features. The implication for content strategy is straightforward: every claim on a high-priority page should be backed with a number, a study, or a named authority.

There’s also a recency dimension. AI models carry a strong near-term bias. Updating a cornerstone page and explicitly marking it “Updated 2026” improves retrieval probability in most platforms. Content that looks stale gets deprioritized regardless of its quality.

Conclusion

Measurement and monitoring in AI search is a fundamentally different exercise from SEO reporting. The metrics are different, the data sources are different, and the strategic implications are different.

The brands that are building durable AI visibility aren’t doing it by checking ChatGPT once a month. They’ve defined the prompts their customers use, built cross-platform tracking across ChatGPT, Gemini, and Perplexity, and structured their measurement system around citations, mentions, position, and sentiment, not just keyword rankings.

That infrastructure takes time to build manually. If you want to skip the setup and start with a working baseline, get started with Topify and run your first cross-platform visibility report.

FAQ

Q: What’s the difference between an AI citation and a brand mention?

A: A citation includes a direct link or footnote pointing to your website and drives referral traffic. A mention is when AI says your brand name in the answer text without necessarily linking to you. Citations have higher direct commercial value. Mentions reflect category-level authority. Both require separate tracking strategies.

Q: How often should I run AI citation monitoring?

A: At minimum, weekly. Research shows that 40-60% of AI Overview citation sources rotate on a monthly basis, meaning last month’s baseline is often already outdated. For brands in competitive categories, real-time or daily monitoring tends to surface competitor changes before they compound.
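One way to measure rotation in your own data is to compare the set of cited sources from one period with the next. The definition below (the share of last period’s sources that no longer appear) is an assumption of ours and may not match the methodology behind the 40-60% figure.

```python
def rotation_rate(previous, current):
    """Share of last period's cited sources that no longer appear this period."""
    previous, current = set(previous), set(current)
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


last_month = {"a.com", "b.com", "c.com", "d.com", "e.com"}
this_month = {"a.com", "b.com", "c.com", "x.com", "y.com"}
print(rotation_rate(last_month, this_month))  # 0.4
```

Tracking this number per prompt category shows where your baseline goes stale fastest, and therefore where a weekly or daily cadence pays off.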

Q: Which AI platforms should I prioritize?

A: Start with ChatGPT (highest market share), Google AI Overviews (largest impact on traditional search traffic), and Perplexity (concentrated professional user base with high purchase intent). Once that baseline is stable, expand to Copilot and emerging platforms based on where your audience concentrates.

Q: Can I track competitor citations using the same method?

A: Yes. Adding competitor brand names to your Prompt Matrix alongside category-level queries gives you a direct comparison of AI Share of Voice. Topify’s Source Analysis also identifies which third-party domains are driving competitor citations, which is often more actionable than the raw Share of Voice number alone.