You searched your brand name on ChatGPT. It mentioned you — buried in the third paragraph, after two competitors, with a description pulled from a press release that’s two years old.

That’s not a win. That’s a measurement problem.

Most marketing teams have no systematic way to track how often AI platforms recommend their brand, what they say when they do, or how that compares to competitors. They run one or two manual checks, screenshot the result, and move on. That’s not monitoring. That’s guessing.

This guide walks you through a practical framework for tracking brand visibility in ChatGPT and Perplexity — from building your first prompt set to the metrics that actually tell you whether your brand is winning in AI search.

Most Brands Don’t Know They’re Invisible to ChatGPT

The scale of AI search adoption in 2026 makes this a measurement gap you can’t afford.

ChatGPT now has 900 million weekly active users — more than double the 400 million reported just a year earlier. Perplexity processes approximately 780 million monthly queries and grew its user base 300% within a single year to reach 45 million monthly active users by late 2025. These platforms are no longer novelties. They’re where buyers are going to discover, compare, and shortlist brands.

Here’s the thing: being visible in AI search isn’t binary. It’s not just “mentioned” vs. “not mentioned.” It’s about how often, in what context, in what position, with what sentiment — and how that changes over time. A one-time manual check tells you nothing about any of that.

You need a repeatable measurement and monitoring system. This guide builds one from scratch.

ChatGPT vs. Perplexity: Why They Surface Brands Differently

Before you track, you need to understand what you’re tracking — because ChatGPT and Perplexity don’t work the same way, and they don’t recommend the same brands.

ChatGPT relies primarily on pre-trained knowledge, occasionally triggering real-time browsing through SearchGPT. Perplexity is built on a retrieval-first architecture — it searches the live web for every query and cites its sources inline. That structural difference produces strikingly different results.

Empirical studies involving over 200 high-intent product discovery prompts found only a 25% overlap between brands recommended by ChatGPT and Perplexity. Even among “consensus picks” — brands recommended consistently across multiple sessions — the overlap only rises to about 33%. What this means in practice: a brand’s AI visibility is not a single number. It’s platform-specific.
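
To check how much the two platforms agree on your own prompt set, you can compare the sets of brands each one recommends. The sketch below assumes "overlap" means shared brands divided by all distinct brands recommended by either platform (Jaccard similarity). The studies mentioned above may define it differently, and the brand names are placeholders.

```python
def brand_overlap(brands_a: set, brands_b: set) -> float:
    """Fraction of distinct brands that both platforms recommended (Jaccard)."""
    union = brands_a | brands_b
    if not union:
        return 0.0  # nothing recommended by either platform
    return len(brands_a & brands_b) / len(union)


# Placeholder brand names, not real study data.
chatgpt_brands = {"Acme", "Globex", "Initech"}
perplexity_brands = {"Acme", "Hooli"}

overlap = brand_overlap(chatgpt_brands, perplexity_brands)
print(f"Overlap: {overlap:.0%}")
```

Running this per prompt, then averaging, gives a platform-agreement figure you can track alongside the other metrics.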

| | ChatGPT | Perplexity |
|---|---|---|
| Search mechanism | Hybrid (pre-trained + selective browsing) | Retrieval-first (real-time web search) |
| Citation style | Available but less central | Persistent, numbered, inline |
| Brand preference | Favors established, high-traffic brands | Favors newer, content-active brands |
| Data recency | Training cutoff unless browsing is triggered | Real-time dynamic retrieval |

The practical implication: brands recommended exclusively on ChatGPT tend to be 3 to 10 times larger in web traffic than those surfaced on Perplexity. Perplexity actually favors smaller, more agile brands that are actively creating content and gaining recent traction — its recommended brands have, on average, 32% fewer monthly visitors than ChatGPT’s picks.

If you’re an early-stage brand, Perplexity is your immediate opportunity. ChatGPT is the longer-term authority benchmark.

Step 1 — Build the Prompt Set That Reveals Your AI Footprint

The foundation of any measurement framework is the right set of prompts. Most teams make the same mistake: they search their brand name and call it a day.

That’s not visibility monitoring. That’s vanity monitoring.

Real brand visibility tracking requires three categories of prompts — and you need all three.

Category 1: Brand-direct queries. These test what AI says about you specifically.

- “[Your brand] vs. [Competitor]”

- “Is [Your brand] good for [use case]?”

- “What are the pros and cons of [Your brand]?”

Category 2: Category-level queries. These test whether you appear when buyers are still exploring the market.

- “Best tools for [category]”

- “How to solve [problem your product addresses]”

- “Top [category] platforms in 2026”

Category 3: Scenario and intent queries. These test the highest-value moments in the buyer journey.

- “What do most [target companies] use for [workflow]?”

- “Which [category] platform is best for [specific use case]?”

- “What should I look for in a [category] tool?”

You need at least 20 to 30 prompts to form a meaningful baseline. Below that, you’re just sampling noise. Tools like Topify support up to 100 prompts on the Basic plan and 250 on Pro — giving you a dataset large enough to draw actual conclusions.
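
A prompt set like this is easier to keep consistent if it is generated from templates rather than typed by hand. The following is a minimal sketch that expands a subset of the templates above for one brand; the function name, the grouping keys, and the sample inputs are all illustrative assumptions, not part of any tool.

```python
from itertools import product

# A subset of the templates from the three categories above.
# The dictionary keys are illustrative labels.
TEMPLATES = {
    "brand_direct": [
        "{brand} vs. {competitor}",
        "Is {brand} good for {use_case}?",
        "What are the pros and cons of {brand}?",
    ],
    "category": [
        "Best tools for {category}",
        "Top {category} platforms in 2026",
    ],
    "scenario": [
        "Which {category} platform is best for {use_case}?",
        "What should I look for in a {category} tool?",
    ],
}

MIN_BASELINE = 20  # lower bound suggested in the text above


def build_prompt_set(brand, category, competitors, use_cases):
    """Expand every template over competitors and use cases, dropping duplicates."""
    prompts, seen = [], set()
    for group, templates in TEMPLATES.items():
        for template, competitor, use_case in product(templates, competitors, use_cases):
            # str.format ignores keyword arguments a template does not use.
            text = template.format(
                brand=brand, category=category,
                competitor=competitor, use_case=use_case,
            )
            if text not in seen:
                seen.add(text)
                prompts.append({"category": group, "prompt": text})
    return prompts


# Hypothetical inputs for illustration only.
prompt_set = build_prompt_set(
    brand="Acme",
    category="project management",
    competitors=["Globex", "Initech", "Hooli"],
    use_cases=["remote teams", "agencies", "startups"],
)
print(len(prompt_set), "prompts generated")
if len(prompt_set) < MIN_BASELINE:
    print("Below the suggested baseline; add templates, competitors, or use cases.")
```

Keeping the category label on each prompt lets you later break visibility down by brand-direct, category-level, and scenario queries.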

Step 2 — Run Your First AI Visibility Audit

With your prompt set ready, run your first audit. The goal is a snapshot: where does your brand currently stand?

Here’s a simple manual process to start:

- Run each prompt in both ChatGPT and Perplexity

- Record whether your brand appears in the response

- Note your position (first, second, third mention, or absent)

- Copy the exact language used to describe you

- Flag any competitor that appears in your place

Use a spreadsheet. Rows for prompts, columns for platform, visibility (yes/no), position, sentiment (positive/neutral/negative), and any notable quotes.
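
If you would rather keep the audit log as a CSV file than a hand-built spreadsheet, the same columns can be captured in a small record type. This is a sketch only: the `AuditRow` name, its field names, and the sample rows are assumptions made for illustration.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from typing import Optional


@dataclass
class AuditRow:
    prompt: str
    platform: str            # e.g. "ChatGPT" or "Perplexity"
    visible: bool            # did the brand appear in the response?
    position: Optional[int]  # 1 = first brand mentioned, None = absent
    sentiment: str           # "positive", "neutral", "negative", or "" if absent
    quote: str               # exact language used to describe the brand
    competitors: str         # competitors that appeared, separated by ";"


def write_audit(rows, handle):
    """Write audit rows as CSV with one column per AuditRow field."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(AuditRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


# Hypothetical sample rows.
rows = [
    AuditRow("Best tools for project management", "ChatGPT", True, 3,
             "neutral", "a solid option for small teams", "Globex;Initech"),
    AuditRow("Best tools for project management", "Perplexity", False, None,
             "", "", "Globex;Hooli"),
]

# An in-memory buffer keeps the example side-effect free; for a real audit,
# pass open("audit.csv", "w", newline="") instead.
buffer = io.StringIO()
write_audit(rows, buffer)
print(buffer.getvalue())
```

Recording position as a number (and leaving it empty when the brand is absent) makes the later metric calculations much simpler than free-text notes.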

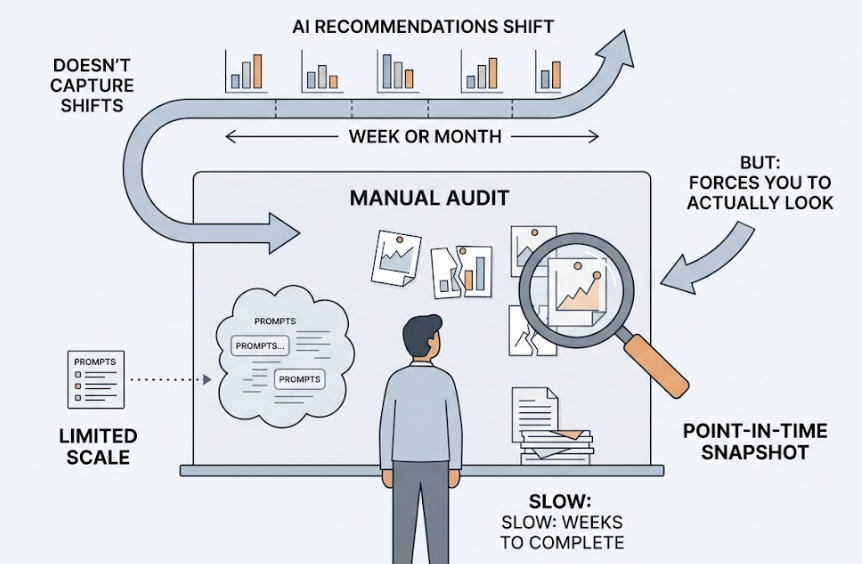

The limitations of this approach are real. A manual audit is a point-in-time snapshot — it doesn’t capture how AI recommendations shift over a week or month. It also can’t scale to the full set of prompts you need. And it’s slow: a comprehensive manual audit of a moderately sized website can take weeks. McKinsey research from 2024 found that firms using AI for monitoring see up to a 50% reduction in manual data processing time. But the manual process gets you started — and it forces you to actually look at what the AI says about your brand, which most teams have never done seriously.

Step 3 — The 4 Metrics That Actually Measure Brand Visibility

Once you have data, you need to know what to do with it. These are the four metrics that form the core of a professional measurement framework.

1. AI Visibility Score. The percentage of tracked prompts where your brand appears in the AI response. This is your foundational benchmark: the simplest measure of whether AI platforms know you exist. A Visibility Score of 20% means you appeared in 1 out of every 5 prompts you tested. The goal isn’t 100%; it’s tracking the trend over time and comparing it to competitors.

2. Sentiment Score. AI platforms don’t just mention brands; they describe them. Sentiment scoring uses natural language analysis to determine whether that description is positive, neutral, or negative. If ChatGPT consistently pairs your brand name with phrases like “steep learning curve” or “limited integrations,” that’s a signal worth acting on, even if your Visibility Score looks healthy.

3. Position. Not all mentions are equal. A brand named first in an AI response has significantly higher influence than one buried in a third-paragraph list. Position tracking measures where you appear relative to competitors within the same response. GEO techniques like Technical Justification and Statistics Addition can raise citation rates by over 40% once position weight is factored in.

4. Citation Source. This metric is especially important for Perplexity. When a platform cites a source to support a claim about your brand, that source becomes part of your AI reputation infrastructure. Are the citations pointing to your own site? A G2 review? A Reddit thread? A competitor’s comparison page? Knowing which sources AI platforms use to describe you tells you exactly where to invest in content and digital PR.
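
Three of these metrics can be computed directly from audit data. The sketch below assumes each result is a dictionary with `visible`, `position`, and `sources` keys (hypothetical field names), and it leaves sentiment out because that needs either a reviewer's label or a separate classifier.

```python
from collections import Counter


def visibility_score(results):
    """Percentage of tracked prompts in which the brand appeared."""
    if not results:
        return 0.0
    return 100 * sum(1 for r in results if r["visible"]) / len(results)


def average_position(results):
    """Mean mention position across responses where the brand appeared."""
    positions = [r["position"] for r in results if r["position"] is not None]
    return sum(positions) / len(positions) if positions else None


def citation_sources(results):
    """Count how often each cited domain backs a claim about the brand."""
    return Counter(domain for r in results for domain in r["sources"])


# Hypothetical audit results: the brand appears in 1 of 5 prompts.
results = [
    {"visible": True, "position": 2, "sources": ["g2.com", "reddit.com"]},
    {"visible": False, "position": None, "sources": []},
    {"visible": False, "position": None, "sources": []},
    {"visible": False, "position": None, "sources": []},
    {"visible": False, "position": None, "sources": []},
]

print(f"Visibility Score: {visibility_score(results):.1f}%")
print("Average position:", average_position(results))
print("Top sources:", citation_sources(results).most_common(3))
```

Running the same functions on each competitor's results gives the side-by-side comparison the Visibility Score is meant to support.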

Platforms like Topify track all four of these — plus three additional metrics (volume, intent, and CVR) — across ChatGPT, Perplexity, Gemini, and other major AI engines simultaneously, eliminating the need to manually reconcile data across platforms.

What Your Visibility Data Actually Tells You

The data patterns you’ll encounter typically fall into one of three buckets — and each points to a different optimization path.

Pattern 1: You don’t appear at all. This usually means one of two things: AI platforms don’t have enough high-authority, structured content to confidently cite your brand, or your brand presence exists primarily behind paywalls, JavaScript-heavy pages, or formats AI crawlers can’t parse. Nearly 91% of top-cited sites use HTTPS, and pages with LCP over 4 seconds are 72% less likely to be cited. Fix the technical foundation first.

Pattern 2: You appear, but your position is consistently behind competitors. Your brand is on AI’s radar, but it’s not the consensus pick. This is a Share of Voice problem. In three out of five major industries, the top-ranked entity in AI recommendations captures an average of 62% of total AI Share of Voice. You need to build more citation sources — structured content, third-party mentions, Reddit threads, review platforms — to shift the weight.

Pattern 3: You appear, but sentiment is mixed or outdated. AI platforms synthesize millions of sources. If outdated information about your pricing, features, or team is circulating in high-authority sources, that’s what the model reflects. The fix involves updating Wikipedia entries, LinkedIn profiles, and high-authority industry publications, and ensuring your own site has a clearly structured “about” or “company facts” section that AI crawlers can extract cleanly.
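
The three patterns can be turned into a rough triage rule. The sketch below is an assumption-heavy illustration: the function name, the 25% negative-sentiment cutoff, and the "no pattern flagged" fallback are not from any benchmark, and it cannot detect outdated descriptions, which still need a human read of the quotes.

```python
from typing import Optional


def diagnose(
    visibility_pct: float,
    avg_position: Optional[float],
    best_competitor_position: Optional[float],
    negative_share: float,
    negative_cutoff: float = 0.25,  # illustrative threshold, tune per category
) -> str:
    """Return the first matching pattern; a brand can fit more than one."""
    # Pattern 1: the brand never appears.
    if visibility_pct == 0 or avg_position is None:
        return "invisible"
    # Pattern 2: the brand appears, but later than the best competitor on average.
    if best_competitor_position is not None and avg_position > best_competitor_position:
        return "underranked"
    # Pattern 3: a large share of mentions carry negative sentiment.
    if negative_share >= negative_cutoff:
        return "misrepresented"
    return "no pattern flagged"


print(diagnose(0.0, None, 1.5, 0.0))
print(diagnose(40.0, 3.0, 1.5, 0.1))
print(diagnose(40.0, 1.2, 1.5, 0.5))
```

Because the checks run in order, fix the earlier pattern first and rerun the audit before acting on the later ones.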

The stakes aren’t abstract. ChatGPT referral traffic converts at 15.9% — versus 1.76% for Google organic. Perplexity referral traffic converts at 10.5%. Being cited as a “source of truth” in a high-accuracy AI response carries a 4.4x higher conversion probability compared to traditional organic search. The measurement matters because the outcomes matter.

When Manual Tracking Breaks Down (and How to Automate It)

Manual audits can get you started. They can’t scale with you.

Three structural problems eventually make manual monitoring unworkable. First, time lag: AI recommendation patterns shift as models update and new content enters the web, so a monthly manual check misses weeks of drift. Second, coverage: consistently tracking 30+ prompts across two platforms, for your brand and three competitors, is a significant operational burden. Third, consistency: human reviewers classify sentiment differently, so the data degrades over time.
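
The time-lag problem is easiest to see by comparing two audit snapshots. As a minimal sketch, assume each snapshot maps a (prompt, platform) pair to whether your brand appeared; the function name and the sample data are hypothetical.

```python
def visibility_drift(previous, current):
    """List the (prompt, platform) pairs where visibility changed between runs."""
    shared = previous.keys() & current.keys()  # only compare prompts run both times
    gained = sorted(k for k in shared if current[k] and not previous[k])
    lost = sorted(k for k in shared if previous[k] and not current[k])
    return {"gained": gained, "lost": lost}


# Hypothetical snapshots from two audit runs.
last_month = {
    ("Best tools for project management", "ChatGPT"): True,
    ("Best tools for project management", "Perplexity"): False,
    ("Acme vs. Globex", "ChatGPT"): True,
}
this_month = {
    ("Best tools for project management", "ChatGPT"): False,
    ("Best tools for project management", "Perplexity"): True,
    ("Acme vs. Globex", "ChatGPT"): True,
}

drift = visibility_drift(last_month, this_month)
print("Gained:", drift["gained"])
print("Lost:", drift["lost"])
```

The shorter the gap between snapshots, the sooner a lost mention shows up, which is the main argument for running the audit more often than a manual process allows.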

This is where automated monitoring earns its place.

Topify tracks brand visibility across ChatGPT, Perplexity, Gemini, DeepSeek, and other major AI platforms — automatically running your full prompt set, analyzing 9,000 AI answers per month on the Basic plan, and returning structured data across all four core metrics (plus three additional ones). You get a live comparison against competitors without manually querying a single prompt.

For teams managing multiple clients, Topify’s agency-oriented workflow supports up to 10 seats and 8 projects on the Pro plan, making it practical to run measurement frameworks at scale rather than as a one-off exercise.

The market for AI visibility tracking tools was valued at $848 million in 2025 and is projected to reach $33.7 billion by 2034. That growth reflects how quickly “GEO monitoring” is shifting from nice-to-have to operational requirement, especially as Gartner projects that traditional search volume will drop 25% by the end of 2026.

Topify’s Basic plan starts at $99/month and includes a 30-day trial. For most in-house marketing teams and agencies, that’s the practical entry point for systematic measurement.

Conclusion

Tracking brand visibility in ChatGPT and Perplexity isn’t complicated. But it does require a system.

Start by building a prompt set across three categories: brand-direct, category-level, and scenario queries. Run your first manual audit to get a baseline. Then focus on four metrics that actually tell you something: Visibility Score, Sentiment Score, Position, and Citation Source. Use the data to diagnose which of the three patterns you’re in — invisible, underranked, or misrepresented — and act accordingly.

You can’t optimize what you don’t measure.

Once manual tracking hits its limits, automated platforms like Topify make it possible to run this as a continuous, scalable process rather than a one-time project.

AI search is already influencing buyer decisions at a conversion rate that dwarfs traditional organic search. The brands that build measurement and monitoring frameworks now will have a data advantage that compounds as the channel grows.

FAQ

How often should I run a brand visibility audit in ChatGPT?

For manual audits, monthly is a reasonable starting cadence. The risk is that model updates and new content can shift recommendations in a matter of weeks. Automated platforms typically run continuous monitoring, giving you data that reflects current AI behavior rather than a point-in-time snapshot.

Does Perplexity show different brand visibility results than ChatGPT?

Yes, significantly. Studies show only a 25% overlap in brand recommendations between the two platforms. Perplexity favors brands with recent, content-active presence; ChatGPT tends toward established players with deep historical data. Both should be tracked separately, not treated as equivalent.

What’s a good AI Visibility Score benchmark?

There’s no universal benchmark, since it varies by category size and competitive density. What matters more is the trend over time and your position relative to competitors. In three out of five major industries, the top-ranked brand captures an average of 62% of total AI Share of Voice, which gives you a realistic ceiling to measure against.

Can I track competitor visibility at the same time as my own?

Yes, and you should. Monitoring your competitors’ Visibility Score, position, and citation sources helps you understand why they’re recommended over you in specific prompt contexts. This is where competitive intelligence in GEO tracking becomes most actionable.

How is AI brand visibility different from traditional SEO ranking?

Traditional SEO ranks individual pages by keyword. AI visibility measures how often your brand is synthesized into a generative response, factoring in mention frequency, position within the response, sentiment, and source attribution. A page can rank #1 on Google and never be cited by ChatGPT if it lacks the structural clarity and “extractability” that AI retrieval systems prioritize.