Search “AI share of voice tools” and you’ll find a dozen platforms claiming to track how often your brand appears in AI answers. Most of them measure the same thing: how frequently your brand name shows up across a set of tracked prompts. That’s useful, but it’s not the full picture.

The layer most teams are missing is citation. Not just whether your brand gets mentioned, but whether AI engines are actually citing your content as a source. Those are two very different signals, and confusing them leads to strategies that optimize for awareness while leaving referral traffic and authority on the table.

Your Share of Voice Numbers Are Missing a Signal Layer

Traditional search volumes are projected to decline by 25% by the end of 2026 as users shift to conversational AI interfaces. At the same time, organic click-through rates for informational queries have already dropped by an estimated 34.5% year-over-year. Brands that built their visibility strategy on rankings and impressions are watching those metrics decouple from actual influence.

In AI search, visibility is volatile by nature. Only 30% of brands typically remain visible from one AI answer to the next for the same prompt. A mere 20% maintain their presence across five consecutive runs.

That’s not a rankings problem. That’s a citation problem.
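
To put numbers on that volatility for your own prompts, you can rerun the same prompt several times and compare which brands appear in each answer. The sketch below is only an illustration, not the method behind the figures above: the function name `visibility_persistence` and the sample brands are hypothetical, and it assumes you have already extracted the set of visible brands from each run.

```python
def visibility_persistence(runs: list[set[str]]) -> dict[str, float]:
    """Summarize how stable brand visibility is across consecutive runs of one prompt.

    `runs` holds the set of brands visible in each run, in order (assumed input shape).
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs to measure persistence")

    # Share of brands in each run that are still visible in the next run.
    retention = [
        len(current & following) / len(current)
        for current, following in zip(runs, runs[1:])
        if current  # skip runs where no brand was visible
    ]
    run_to_run = sum(retention) / len(retention) if retention else 0.0

    # Share of first-run brands that stay visible in every run.
    always_visible = set.intersection(*runs)
    across_all = len(always_visible) / len(runs[0]) if runs[0] else 0.0

    return {"run_to_run": run_to_run, "across_all_runs": across_all}


# Hypothetical brands seen across five runs of the same prompt.
runs = [
    {"Acme", "Globex", "Initech", "Umbrella"},
    {"Acme", "Globex", "Hooli"},
    {"Acme", "Initech", "Hooli"},
    {"Acme", "Globex"},
    {"Acme", "Umbrella"},
]
persistence = visibility_persistence(runs)
print(persistence)
```

In this made-up sample, about half of the brands carry over from one run to the next, and only one of the four first-run brands survives all five runs.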

What LLM Citation Tracking Actually Measures

LLM citation tracking is the practice of monitoring whether, and how often, AI engines cite your domain or specific URLs as source material when generating their responses.

A brand mention and a brand citation are not the same thing. A mention is when an AI tool includes your brand name in its response text. A citation is when it explicitly references your content as the source, typically with a link or footnote. The distinction matters because citations are what drive referral traffic from AI responses back to your domain.

Research shows that brands cited in AI Overviews earn 35% more organic clicks than brands that are merely mentioned. More importantly, brands that earn both a mention and a linked citation are 40% more likely to reappear in subsequent answers for the same prompt. That’s the difference between a random one-off appearance and a durable visibility position.

This is what most share of voice models don’t capture yet.

The Share of Voice Model Has a New Layer: Citation Inclusion Rate

The traditional share of voice model measured ad spend or media mentions. The AI-era version tracks how often your brand appears across a set of prompts compared to competitors. That’s a real improvement.

But there’s a third layer: Citation Inclusion Rate (CIR), which measures how often your brand’s content is used as “ground truth” by AI engines when generating their responses.

Here’s how the three metrics stack up:

| Metric | What It Measures | Primary Signal |
|---|---|---|
| AI Brand Mention Rate | Brand name appears in AI response text | Awareness & mindshare |
| AI Share of Voice (SOV) | Your mentions vs. total category mentions | Competitive benchmarking |
| Citation Inclusion Rate (CIR) | Your content cited as source material | Strategic authority & traffic |

A high mention rate with a low CIR means AI engines know your brand exists but don’t trust your content enough to reference it. That’s an authority gap, not a visibility gap, and it requires a completely different fix.

The AI share of voice model is most useful when it tracks all three layers together. Share of visibility tells you how wide your presence is. Citation rate tells you how deep your authority goes.
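
As a rough sketch of how the three layers could be computed from the same set of tracked answers, consider the Python below. Everything in it is an assumption for illustration: the `TrackedResponse` record shape, the function names, the sample brands, and the choice to count a brand once per response. Mentions are matched with a naive substring check, and a citation counts when any cited URL sits on your domain or one of its subdomains.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class TrackedResponse:
    """One AI answer to a tracked prompt (hypothetical record shape)."""
    text: str
    cited_urls: list[str] = field(default_factory=list)


def mentions(name: str, response: TrackedResponse) -> bool:
    # Naive case-insensitive substring match; real brand matching needs more care.
    return name.lower() in response.text.lower()


def cites(domain: str, response: TrackedResponse) -> bool:
    # True if any cited URL is on the domain or one of its subdomains.
    for url in response.cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def visibility_metrics(responses, brand, domain, competitors):
    total = len(responses)
    if total == 0:
        raise ValueError("no tracked responses")

    brand_mentions = sum(mentions(brand, r) for r in responses)
    competitor_mentions = sum(mentions(c, r) for c in competitors for r in responses)
    category_mentions = brand_mentions + competitor_mentions
    cited = sum(cites(domain, r) for r in responses)

    return {
        # Share of responses whose text names the brand.
        "mention_rate": brand_mentions / total,
        # Brand mentions relative to all tracked brand mentions in the category.
        "share_of_voice": brand_mentions / category_mentions if category_mentions else 0.0,
        # Share of responses that cite the brand's own content as a source.
        "citation_inclusion_rate": cited / total,
    }


# Hypothetical sample: four answers to tracked prompts.
responses = [
    TrackedResponse("Acme and Globex are popular options.", ["https://www.acme.example/docs/setup"]),
    TrackedResponse("Globex leads the category.", ["https://reviews.example/globex"]),
    TrackedResponse("Many teams choose Acme."),
    TrackedResponse("Acme is pricier than Initech.", ["https://reviews.example/acme-vs-initech"]),
]
metrics = visibility_metrics(responses, "Acme", "acme.example", ["Globex", "Initech"])
print(metrics)
```

In this sample the brand is mentioned in three of four answers but cited in only one, which is the high-mention, low-CIR pattern described above.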

Platform-by-Platform Citation Behavior: Why One Strategy Won’t Cover All

One reason LLM citation tracking is technically difficult is that every major AI engine uses a fundamentally different retrieval architecture. There’s currently only an 11% domain overlap across platforms for the same set of queries. Content that earns citations on Perplexity may be completely invisible to ChatGPT’s retrieval pipeline.

The citation volume gap across platforms is significant:

| AI Engine | Avg Citations Per Response | Primary Citation Driver |
|---|---|---|
| Perplexity | 21.87 | Content freshness (under 30 days) |
| Google AI Mode | 17.93 | E-E-A-T and Knowledge Graph entities |
| ChatGPT | 7.92 | Brand popularity and proper noun density |
| Claude | 5.67 | Detailed, nuanced sourcing |

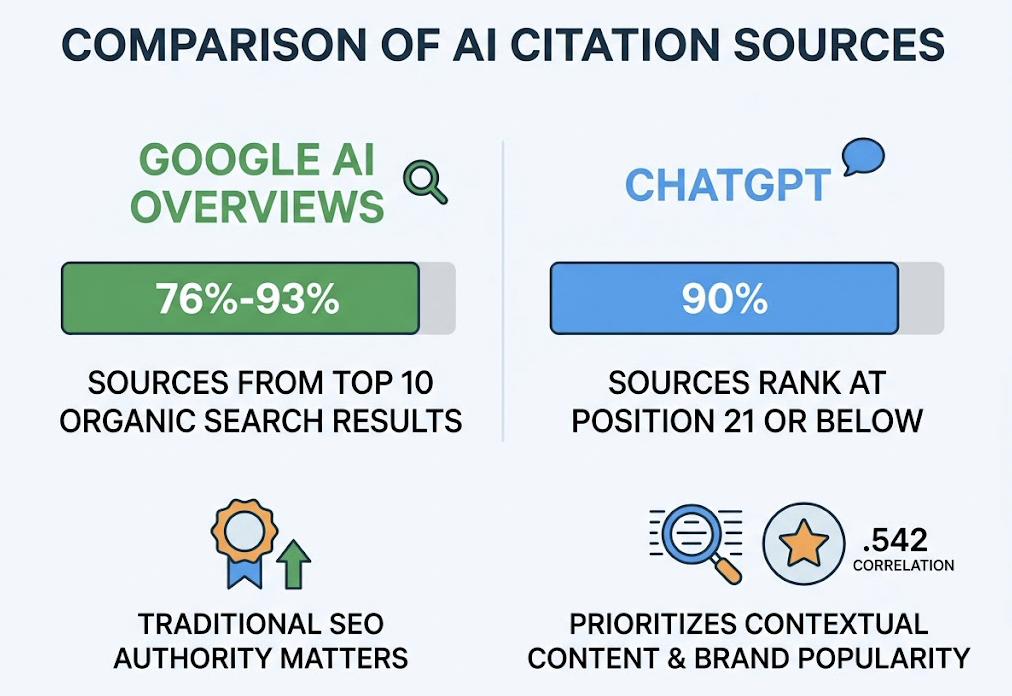

Google AI Overviews pull 76% to 93% of their citations from the top 10 organic search results, so traditional SEO authority still matters there. ChatGPT behaves differently: 90% of the pages it cites rank at position 21 or below on Google. The model prioritizes contextually extractable content over keyword-optimized landing pages, and brand popularity correlates more strongly with ChatGPT citations (a correlation of 0.542) than any traditional SEO metric.

That’s a structural divergence. If your LLM citation tracking solution only monitors one platform, you’re missing most of the picture.
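
If you want to check how much cited domains overlap across engines for your own prompt set, a small sketch like the one below can help. It assumes overlap is defined as the domains cited by every platform divided by the domains cited by any platform, which may differ from the definition behind the 11% figure. The function names and sample URLs are hypothetical.

```python
from urllib.parse import urlparse


def cited_domains(urls: list[str]) -> set[str]:
    """Reduce a list of cited URLs to a set of hostnames, ignoring a leading 'www.'."""
    domains = set()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def domain_overlap(citations_by_platform: dict[str, list[str]]) -> float:
    """Domains cited by every platform, as a share of domains cited by any platform."""
    domain_sets = [cited_domains(urls) for urls in citations_by_platform.values()]
    if not domain_sets:
        return 0.0
    all_domains = set().union(*domain_sets)
    if not all_domains:
        return 0.0
    shared = set.intersection(*domain_sets)
    return len(shared) / len(all_domains)


# Hypothetical citations collected for the same prompts on two engines.
citations = {
    "perplexity": [
        "https://www.acme.example/guide",
        "https://news.example/story",
        "https://docs.example/reference",
    ],
    "chatgpt": [
        "https://acme.example/pricing",
        "https://reviews.example/roundup",
    ],
}
overlap = domain_overlap(citations)
print(f"{overlap:.0%} of cited domains appear on every platform")
```

A low value here means each engine is drawing on a largely separate pool of sources, so citation gaps have to be assessed per platform.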

How Topify Solves LLM Citation Tracking Across Platforms

Most AI visibility tools stop at mention counting. Topify goes a layer deeper with Source Analysis: a feature that identifies which specific domains and URLs are being cited by AI platforms in your category, then shows you how your coverage compares to competitors.

In practice, this means a SaaS marketing team can log in and see that Perplexity is citing a competitor’s documentation pages 3x more frequently than their own, while ChatGPT is pulling from third-party review sites that haven’t featured their brand in 18 months. That’s not just a data point. That’s a prioritized action list.

Topify tracks LLM citation behavior across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms, with seven core metrics per brand: visibility, sentiment, position, volume, mentions, intent, and CVR. The multi-platform coverage matters because citation patterns diverge so sharply across engines. A single-platform dashboard gives you a partial view at best.

For teams that need to move from data to action, Topify’s One-Click Execution lets you state your goals in plain English and deploy an optimization strategy without building out manual workflows. The Basic plan starts at $99/month, with coverage for 100 prompts and 9,000 AI answer analyses across four projects.

Share of Voice Tracking Tools for AI Platforms: Where Citation Depth Matters

The AI share of voice tool market has expanded quickly, but not all platforms track at the same depth. Here’s how the current landscape breaks down:

| Tool | Focus Area | Citation Depth |
|---|---|---|
| Topify | Full-stack GEO platform | Source analysis + cross-platform citation tracking |
| Peec AI | Multi-engine monitoring | Granular gap scoring and intent tagging |
| Profound | Enterprise brand intelligence | Sentiment + competitive benchmarking |
| Otterly.ai | Citation monitoring | Budget-friendly multi-engine mention coverage |
| Rankability | GEO content analysis | AI keyword research and content briefs |

When evaluating any AI share of voice tool, three criteria separate citation-capable platforms from mention-counters:

1. Citation-level tracking vs. mention tracking. Can the tool distinguish between a brand reference and an actual sourced citation? This is the core capability gap in the market.

2. Cross-platform coverage. Given that domain overlap across AI engines is only 11%, single-platform tools produce systematically incomplete data for any share of visibility analysis.

3. Actionable gap analysis. Raw citation data is only useful if it maps to a content or outreach action. The most effective generative engine optimization (GEO) platforms surface not just where you’re missing, but which third-party domains you need to earn mentions on.

On that last point, the data is clear: brand mentions in third-party sources correlate 0.664 with AI visibility, while traditional backlinks correlate only 0.218. The implication for any AI share of voice strategy is that earned media and digital PR carry more citation weight than your own domain’s link profile.

Close the Citation Gap with the DEEP Framework

For teams ready to move from tracking to action, the DEEP framework provides a structured approach to citation gap analysis for AI search optimization.

Discover your AI revenue surface first. Identify the 20 to 50 high-intent prompts that most directly influence buyer decisions in your category: discovery queries, competitor comparisons, and specific use-case questions. These are the prompts where your citation presence matters most.

Evaluate the gap between mentions and citations for each prompt cluster. A brand that appears frequently but rarely gets cited has an authority gap, not a visibility gap. The fix is different for each.

Execute on two fronts simultaneously. On-site, restructure content into modular “Fact Blocks” with direct definitional sections and 19 or more data points per page. Research shows that pages with 19+ data points receive twice as many AI citations as those with fewer. On third-party sites, prioritize the domains that AI engines already cite in your category. Getting updated data or expert quotes placed on those high-cited publishers is often faster than building citation authority from scratch.

Plan for volatility. Half of cited domains in any category can change within a single month. Citation gap analysis isn’t a one-time audit. It requires a monthly or bi-weekly cadence to catch shifts in model behavior before competitors do.
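
One way to keep that cadence honest is to compare the cited-domain set from one tracking period to the next. The sketch below is a minimal illustration under stated assumptions: churn is defined here as the share of last period’s cited domains that no longer appear this period, and the function name `citation_churn` and the sample domains are hypothetical.

```python
def citation_churn(previous: set[str], current: set[str]) -> dict:
    """Compare cited domains between two tracking periods."""
    if not previous:
        raise ValueError("previous period has no cited domains to compare against")

    dropped = previous - current  # cited last period, missing now
    gained = current - previous   # newly cited this period

    return {
        "churn_rate": len(dropped) / len(previous),
        "dropped": sorted(dropped),
        "gained": sorted(gained),
    }


# Hypothetical cited domains for one prompt cluster in two consecutive months.
last_month = {"acme.example", "reviews.example", "news.example", "docs.example"}
this_month = {"acme.example", "reviews.example", "forum.example", "wiki.example"}

churn = citation_churn(last_month, this_month)
print(churn)
```

The `gained` list doubles as a prospecting list: those are the domains the engines started citing since your last audit.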

One more number worth anchoring to: pages that ranked at position 5 in organic search saw a 115% increase in AI visibility after GEO was applied. The AI citation landscape is not yet locked in by incumbents. Mid-market brands that move early on citation strategy have a structural window that won’t stay open indefinitely.

Conclusion

Your AI share of voice score tells you how often your brand shows up. Your citation rate tells you whether AI engines actually trust your content. Both metrics matter, and tracking only one leads to strategies that build awareness without authority.

The practical starting point is simpler than most teams expect: audit your citation coverage across the two or three AI platforms your audience uses most, identify the third-party domains driving competitor citations in your category, and run those gaps against your content calendar. The teams doing this systematically today are building visibility positions that will be difficult to displace once the AI citation landscape stabilizes. The window is open. It won’t stay that way.

FAQ

Q: What is LLM citation tracking and why does it matter for SEO?

A: LLM citation tracking monitors whether AI engines are citing your domain or specific pages as source material in their generated responses. It matters because citations drive referral traffic from AI answers back to your site, and brands cited in AI Overviews earn roughly 35% more organic clicks than those that are only mentioned by name.

Q: How is AI share of voice different from traditional share of voice?

A: Traditional share of voice typically measured advertising spend or media coverage relative to competitors. AI share of voice tracks how often your brand appears in AI-generated responses across a set of prompts, compared to your competitive set. The more advanced version also layers in Citation Inclusion Rate, which measures how often your content is cited as ground truth, not just how often your brand is mentioned.

Q: Which AI platforms should I prioritize for LLM citation tracking?

A: It depends on your audience, but most B2B and SaaS brands should track at minimum ChatGPT, Perplexity, and Google AI Mode. These three platforms have meaningfully different citation behaviors: Perplexity averages 21.87 citations per response and rewards fresh content, while ChatGPT cites an average of 7.92 sources and correlates more with brand popularity than organic rankings.

Q: How does Topify measure citation-level share of voice?

A: Topify’s Source Analysis feature identifies which specific domains and URLs are being cited by AI platforms within your category, and benchmarks your coverage against competitors. Combined with its Visibility Tracking across ChatGPT, Gemini, Perplexity, DeepSeek, and other major platforms, it lets teams see both their mention rate and citation rate in a single dashboard, then act on the gap with One-Click Execution.