Your brand ranks on the first page of Google. Your domain authority sits above 60. Your content team publishes weekly. And yet, when a potential buyer asks ChatGPT for a recommendation in your category, your name doesn’t come up. Not once. Across more than 10,000 matched queries on each of four major AI platforms, the average brand inclusion rate in AI-generated answers was just 0.3%. Traditional SEO metrics can’t explain that number, because they weren’t built to measure what AI chooses to say.

The gap between what brands think their visibility is and what AI models actually show is wider than most marketing teams realize. And it’s growing.

What 90 Days of AI Visibility Tracking Actually Revealed

Over 90 days, we monitored 1,000 cross-industry brands across ChatGPT, Gemini, Perplexity, and DeepSeek, executing more than 10,000 matched queries per platform. The goal was simple: measure how often a brand actually appears in the synthesized answer layer of the web.

The results weren’t subtle. A majority of brands were completely invisible on at least one major AI platform. A brand might surface in 48% of ChatGPT responses for a given category while registering near-zero visibility on Gemini or DeepSeek.

Cross-platform consistency told an even rougher story. Only 11% of domains were cited by both ChatGPT and Perplexity for the same query. That means AI visibility tracking isn’t about finding a single score. It’s about understanding a fragmented, probabilistic landscape where each LLM views trust and authority through a different lens.
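
To make the two headline numbers concrete, here is a minimal Python sketch of how an inclusion rate and a cross-platform overlap could be computed from collected answers. The function names, the plain substring match, and the choice of Jaccard overlap (shared domains divided by all domains cited by either platform) are illustrative assumptions, not the study's documented method.

```python
from __future__ import annotations

from typing import Iterable


def inclusion_rate(answers: Iterable[str], brand: str) -> float:
    """Share of answers that mention the brand by name (case-insensitive).

    Assumption: a simple substring match counts as a mention.
    """
    answers = list(answers)
    if not answers:
        return 0.0
    hits = sum(brand.lower() in answer.lower() for answer in answers)
    return hits / len(answers)


def citation_overlap(cited_a: set[str], cited_b: set[str]) -> float:
    """Jaccard overlap between the domains two platforms cited for one query.

    Assumption: overlap = shared domains / all domains cited by either.
    """
    union = cited_a | cited_b
    if not union:
        return 0.0
    return len(cited_a & cited_b) / len(union)
```

A production tracker would likely need entity matching that handles aliases and misspellings, which a substring check does not.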

| Visibility Metric | Average Baseline | Top 1% Performers |
|---|---|---|
| Brand Inclusion Rate | 0.3% | 12% to 45% |
| Cross-Platform Overlap | 11% | 62% |
| Zero-Click Impact | 60% | 83% to 93% |
| Conversion Rate (AI Traffic) | 14.2% | 20% to 30% |

Here’s what made the data especially uncomfortable: only 30% of brands stayed visible across consecutive AI sessions, and just 20% held their presence across five consecutive prompt runs. AI visibility isn’t a ranking you earn once. It’s a position you either maintain or lose.
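
One way to check whether a brand "held its presence" is to record, for each repeated run of the same prompt, whether the brand appeared, then look for an unbroken streak. The sketch below assumes that reading; the helper names and the default of five runs (taken from the figure above) are illustrative.

```python
from typing import List


def longest_visible_streak(runs: List[bool]) -> int:
    """Length of the longest unbroken run of answers that named the brand."""
    best = current = 0
    for visible in runs:
        current = current + 1 if visible else 0
        best = max(best, current)
    return best


def held_presence(runs: List[bool], required: int = 5) -> bool:
    """True if the brand appeared in at least `required` consecutive runs."""
    return longest_visible_streak(runs) >= required
```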

The Visibility Gap Most Brands Don’t Know Exists

The most dangerous assumption in modern marketing is that strong SEO automatically translates into AI visibility. It doesn’t.

Analysis of over 34,000 AI responses found that only 17% to 32% of sources cited in AI results also rank in the organic top 10 on Google. In local search, the gap is even more extreme: 35.9% of locations show up in Google’s traditional local 3-pack, but only 1.2% get recommended by ChatGPT for the same intent.

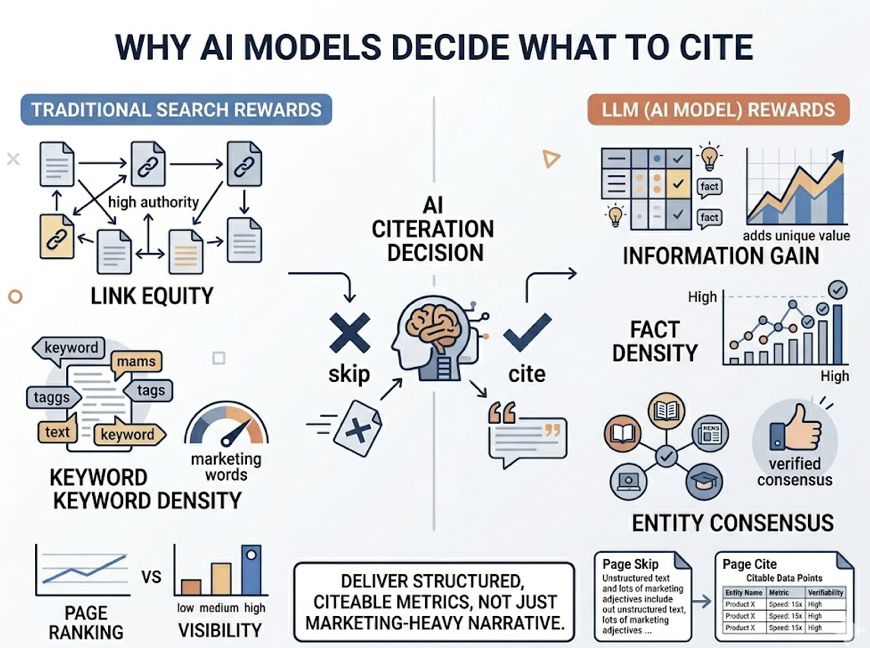

The reason comes down to how AI models decide what to cite. Traditional search rewards keyword density and link equity. LLMs reward something different: information gain, fact density, and entity consensus. If your site delivers marketing-heavy narrative but lacks structured, extractable data points, the model will skip it for a source that offers clear, citable metrics.

That’s the gap most brands still can’t see.

Brands with 9 or more structured, extractable facts achieve a 78% average AI coverage rate. Those with only 2 facts drop to 9%. The overlap between top-performing organic search domains and AI-cited sources has collapsed to under 20% in many competitive categories.

| Dimension | Traditional SEO | Generative Engine Optimization |
|---|---|---|
| Mechanism | Crawling, Indexing, Ranking | Retrieval, Filtering, Synthesis |
| Authority Focus | Backlinks (Domain Authority) | Entity Mentions (Brand Trust) |
| Content Priority | Keywords and Narrative | Facts and Structured Data |
| User Outcome | Click to a Destination | Answer Inside the Interface |
| Stability | Relatively Static | Highly Probabilistic |

Which LLMs Recommend Your Brand, and Which Ignore It

Each of the four major LLMs has developed a distinct sourcing personality. Understanding those differences is the first step toward building a cross-platform AI visibility tracking strategy.

Google Gemini leans heavily on brand-owned content. Research shows that 52.15% of Gemini’s citations come from brand-owned websites. It trusts what a brand says about itself, provided the information is delivered through high-quality structured data and schema markup. If your technical SEO foundation is weak, Gemini will ignore you.

ChatGPT runs on a different logic entirely. Roughly half of its citations (48.73%) come from third-party directories, listings, and consensus sites like Yelp, TripAdvisor, and industry aggregators. For ChatGPT, authority is a function of how many independent sources agree that your brand is the answer. Digital PR and broad web distribution matter here more than on-site optimization.

Perplexity AI favors niche expertise and real-time validation. For subjective or “best-of” queries, niche sources make up 24% of its citations, the highest of any model. It’s particularly sensitive to recency and specialization, often pulling from regional directories and verified customer reviews that its peers skip entirely.

DeepSeek operates as a reasoning-first engine. Its retrieval logic is weighted toward author expertise, publication authority, and technical performance. DeepSeek conducts live web scans and selects sources based on citation-worthy statistics and data density, not brand awareness.

| AI Platform | Sourcing Bias | Primary Citation Type | Key Visibility Lever |
|---|---|---|---|
| Gemini | Brand Ownership | Brand-Owned Domains | Structured Schema / Local Pages |
| ChatGPT | Public Consensus | Directories and Listings | Cross-Site Mentions / Digital PR |
| Perplexity | Niche Expertise | Expert Reviews / Reddit | Specialized Content / Reviews |
| DeepSeek | Real-Time Reasoning | Data-Dense Sources | Author Authority / Page Speed |

The takeaway is clear: a brand might be ChatGPT’s top recommendation thanks to strong directory presence, yet remain completely invisible on Gemini because its on-site schema is missing. One strategy won’t cover four platforms.
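
For readers unfamiliar with the on-site schema mentioned for Gemini, the sketch below builds a small schema.org `Organization` block as JSON-LD. The `organization_jsonld` helper and all values are placeholders for illustration. It shows what structured markup looks like, not a guarantee of how any model will use it.

```python
import json
from typing import Iterable


def organization_jsonld(name: str, url: str, same_as: Iterable[str]) -> str:
    """Return a JSON-LD <script> tag describing an organization.

    Uses the schema.org Organization type with its `name`, `url`,
    and `sameAs` properties. All argument values are placeholders.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # profiles on third-party sites
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = organization_jsonld(
    "Example Co",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-co"],
)
```

Keeping the same name, URL, and profile links on every property is one simple way to support the consistent brand facts the article argues for.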

AI Visibility Tracking Metrics That Actually Matter

Traditional metrics like impressions and clicks increasingly mask what’s really happening. A brand might see rising impressions in Google Search Console while its click-through rate collapses because an AI Overview is answering the query above the fold.

To manage this shift, marketers need a metric framework that reflects how LLMs actually behave. The Topify 7-Metric Hierarchy provides that lens, built specifically for the probabilistic nature of AI responses.

Visibility Score measures how often a brand is explicitly named across a universe of high-intent prompts, on a 0-to-100 index. Sentiment Score evaluates how the AI frames the brand. There’s a meaningful difference between being called a “reliable leader” and a “budget alternative.” Position Rank tracks where the brand appears in the recommendation list, because in AI search, the first-mentioned brand captures the majority of trust.

Volume measures monthly conversational demand for specific prompts. Mentions captures raw frequency per 1,000 queries, the AI equivalent of Share of Voice. Intent Alignment checks whether the AI is matching the brand to the right buyer personas. High visibility with low intent alignment is wasted exposure.

Then there’s CVR (Conversion Visibility Rate), which estimates the revenue impact of an AI mention by analyzing recommendation context and prompt intent. This is becoming the North Star metric for CMOs, and for good reason: AI-referred visitors convert at rates up to 5x higher than traditional organic traffic, with an average conversion rate of 14.2%.

| Topify Metric | Diagnostic Purpose | 2026 Performance Target |
|---|---|---|
| Visibility Score | Measures general discovery | > 45% for core categories |
| Sentiment Score | Detects narrative drift | > 70/100 (weighted positive) |
| Position Rank | Evaluates recommendation power | < 2.0 (top 2 placement) |
| CVR | Translates visibility to ROI | 14.2% conversion benchmark |
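
Two of the simpler metrics above, Mentions and Position Rank, can be approximated with basic arithmetic. The sketch below is an assumption about how such figures might be derived from logged answers. It is not Topify's actual formula, and the function names are hypothetical.

```python
from __future__ import annotations

from typing import List, Optional


def mentions_per_thousand(mention_count: int, query_count: int) -> float:
    """Raw mention frequency normalized to 1,000 queries."""
    if query_count <= 0:
        raise ValueError("query_count must be positive")
    return 1000 * mention_count / query_count


def average_position(positions: List[Optional[int]]) -> float | None:
    """Mean 1-based position across answers where the brand appeared.

    `None` entries stand for answers that did not mention the brand;
    they are skipped here (an assumption) rather than penalized.
    """
    seen = [p for p in positions if p is not None]
    return sum(seen) / len(seen) if seen else None
```

Under these assumptions, a brand mentioned 30 times across 10,000 queries scores 3 mentions per 1,000, and an average position below 2.0 would meet the target in the table.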

What Changed Over 90 Days, and What Stayed Flat

AI visibility isn’t static. Unlike the relatively stable rankings of the SEO era, LLM recommendations shift constantly due to model drift, where retrieval logic or training weights change over time.

The brands that gained visibility during the study shared a clear profile. They actively used Generative Engine Optimization (GEO) tactics: structuring specifications in HTML tables, using the inverted-pyramid content format, and maintaining consistent brand facts across every digital surface. These “Rising” brands saw citation lifts of up to 4.5x through GEO execution.

“Falling” brands stayed reactive. They kept producing keyword-stuffed blog posts that AI models found difficult to summarize. Worse, they suffered from narrative fragmentation, where different digital properties offered contradictory facts about the brand. When an AI loses confidence in an entity’s consistency, it filters that entity out.

One finding surprised even the research team: content freshness isn’t optional. Brands with content refreshed within the last year accounted for 65% of all AI citations. ChatGPT’s reference URLs averaged 393 days newer than those in organic Google results. On the flip side, brands that remain static lose approximately 1.8% of their AI coverage every month they don’t update.
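
The 1.8% monthly figure can be read two ways: as a loss relative to whatever coverage remains (compounding), or as a fixed slice of the starting coverage each month (linear). The source does not say which applies, so this sketch supports both. The function name and parameters are illustrative.

```python
def remaining_coverage(
    initial: float,
    months: int,
    monthly_loss: float = 0.018,
    compounding: bool = True,
) -> float:
    """Estimate AI coverage left after `months` without content updates.

    compounding=True  -> each month loses 1.8% of what remains.
    compounding=False -> each month loses 1.8% of the starting value.
    Which reading matches the study is an open assumption.
    """
    if compounding:
        return initial * (1 - monthly_loss) ** months
    return max(0.0, initial * (1 - monthly_loss * months))
```

Under the compounding reading, a year without updates leaves roughly 80% of the starting coverage. Under the linear reading, it leaves about 78%.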

| Feature | Rising Brands | Falling Brands |
|---|---|---|
| Content Format | Structured tables, data-dense blocks | Long-form narrative, low fact density |
| Primary Metric | AI Visibility Score and CVR | Google Keyword Rank |
| Update Cycle | Every 30 days | Set-and-forget |
| Optimization Logic | Synthesis and entity signals | Link equity and keywords |
| Visibility Trend | 4.5x citation lift | Systematic erosion of Share of Voice |

How to Start AI Visibility Tracking for Your Brand

The study points to one thing clearly: brands that tracked AI visibility weekly were 3x more likely to appear in AI-generated answers within 90 days. Here’s the framework that works.

Step 1: Define the prompt universe. Identify the conversational questions your buyers actually ask. This isn’t keyword research. It involves analyzing sales transcripts, community forums, and support tickets to find high-intent modifiers that trigger AI recommendations.

Step 2: Establish a baseline. Run your prompt library across ChatGPT, Gemini, Perplexity, and DeepSeek to establish an initial AI Visibility Score. Without this baseline, you can’t prove the ROI of any GEO effort that follows.

Step 3: Audit the visibility gap. Determine why your brand is missing. Is it a lack of structured facts? Negative sentiment from an old news cycle? Or a shortage of third-party validation?

Step 4: Deploy GEO content. Rewrite key pages to provide direct answers. Add HTML comparison tables. Integrate expert citations. These tactics have been shown to increase citation rates by up to 41%.

Step 5: Monitor continuously. A 30-day recheck is the minimum. Weekly is recommended for competitive sectors.
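
The baseline and monitoring steps above can be sketched as a small loop. This is a minimal illustration under stated assumptions: each platform is represented by a hypothetical `ask` function that takes a prompt and returns answer text, and the stub functions stand in for real API clients, which are not shown here.

```python
from typing import Callable, Dict, List

# Hypothetical interface: takes a prompt, returns the platform's answer text.
AskFn = Callable[[str], str]


def visibility_by_platform(
    prompts: List[str], platforms: Dict[str, AskFn], brand: str
) -> Dict[str, float]:
    """Share of prompts, per platform, whose answer names the brand."""
    scores: Dict[str, float] = {}
    for name, ask in platforms.items():
        hits = sum(brand.lower() in ask(p).lower() for p in prompts)
        scores[name] = hits / len(prompts) if prompts else 0.0
    return scores


def drift(baseline: Dict[str, float], current: Dict[str, float]) -> Dict[str, float]:
    """Per-platform change since the baseline (positive means more visible)."""
    return {name: current.get(name, 0.0) - score for name, score in baseline.items()}


# Stub "platforms" with canned answers, used only to show the data flow.
def fake_chatgpt(prompt: str) -> str:
    return "Popular options include Acme and Bolt."


def fake_gemini(prompt: str) -> str:
    return "Bolt is a common choice."


prompts = ["best crm for startups", "crm with invoicing"]
baseline = visibility_by_platform(
    prompts, {"chatgpt": fake_chatgpt, "gemini": fake_gemini}, "Acme"
)
```

Re-running the same prompt library on a schedule and passing the new scores to `drift` gives a simple per-platform trend line to review at each recheck.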

For teams looking to operationalize this workflow, Topify maps its features directly to each step. Its AI Visibility Checker provides cross-platform scores, High-Value Prompt Discovery surfaces the conversational intent traditional tools miss, and Source Analysis pinpoints the exact domains driving competitor recommendations while your brand stays invisible. One-Click Execution generates schema-rich content blocks and answer-first FAQs that can be deployed directly to a CMS.

Pricing starts at $99/month for the Basic plan (100 prompts, 9,000 answer analyses), with the Pro plan at $199/month adding full Source Analysis and 250 prompts. Enterprise plans start at $499/month with dedicated support and API access. You can get started here.

Conclusion

The 90-day data across 1,000 brands tells one story: AI visibility tracking is no longer a nice-to-have. It’s the metric that separates brands buyers can find from brands that have effectively disappeared. With cross-platform overlap at just 11%, conversion rates from AI traffic hitting 14.2%, and static content losing 1.8% of coverage every month, the cost of not tracking is already measurable.

The brands winning in AI search aren’t the ones with the highest domain authority. They’re the ones that know exactly where they stand across every LLM, every week. Start tracking. Start optimizing. The window to build a citation moat is open, but it won’t stay that way.

FAQ

Q: What is AI visibility tracking?

A: AI visibility tracking measures how frequently and authoritatively a brand appears in generative AI responses across platforms like ChatGPT, Gemini, Perplexity, and DeepSeek. It focuses on Share of Model Voice and citation frequency rather than traditional keyword rankings.

Q: How often should I track brand visibility in AI search?

A: Given the volatility of LLM outputs and the frequency of model drift, professional teams should aim to track visibility weekly, with a 30-day recheck as the bare minimum. Daily tracking is recommended for highly competitive sectors like B2B SaaS and fintech.

Q: Which AI platforms should I track my brand on?

A: A solid strategy requires tracking across the four major foundational models: ChatGPT (for consensus-based recommendations), Google Gemini (for owned-data authority), Perplexity (for specialized research), and DeepSeek (for technical and STEM reasoning).

Q: Can traditional SEO tools track AI visibility?

A: No. Traditional SEO tools monitor URL positions on a page of results. They can’t interpret natural language answers or quantify how a brand is being recommended in a synthesized conversational summary. Dedicated AI visibility tools like Topify are built to measure these probabilistic signals.