Your site passes every structured data test Google offers. Rich snippets show up exactly where they should. Then someone asks ChatGPT for a recommendation in your category, and your brand doesn’t appear in the answer. Not once.

That disconnect isn’t a bug. It’s a gap between how traditional search engines and generative AI engines process structured data. Google’s crawler treats Schema as a direct classification instruction: star ratings go here, prices go there. LLMs treat it as a probability signal, one input among many that shapes whether your content gets cited or skipped. Most SEO teams are still optimizing for the first system while the second one quietly decides who gets recommended.

Most Schema Markup Doesn’t Reach AI Models. Here’s Why.

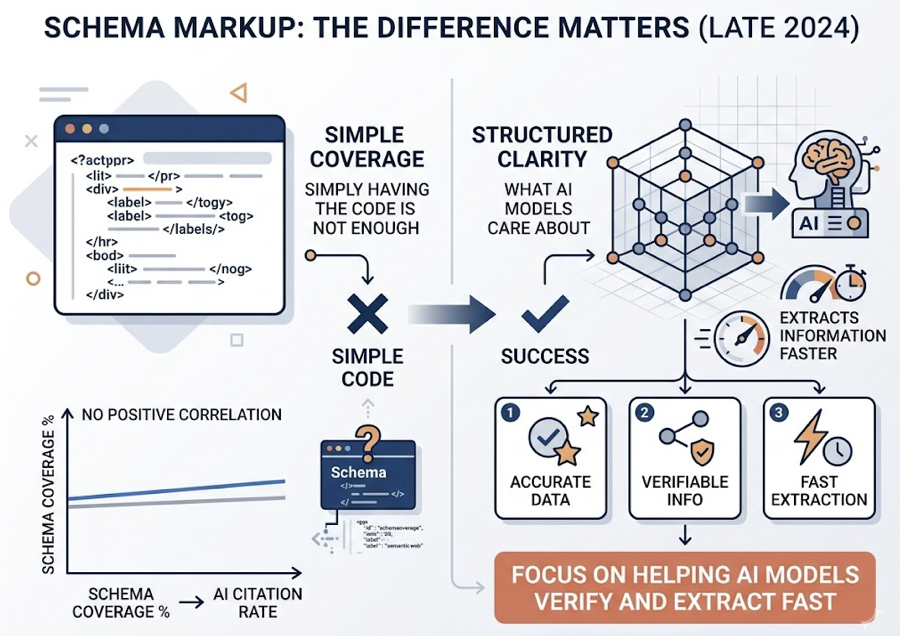

There’s a persistent myth in SEO circles: add JSON-LD to your pages, and AI models will automatically “read” it and boost your visibility. The reality is more nuanced.

Traditional crawlers like Googlebot parse Schema as a syntax tree, slotting data into specific SERP features. LLMs process content through tokenization, treating structured data as what researchers call a “probability calibration signal” rather than a direct ranking instruction. The model doesn’t see your JSON-LD the way Google does. It uses it to reduce ambiguity and increase confidence when extracting facts from your page.

That distinction matters. Studies from late 2024 found no direct positive correlation between Schema coverage rates and AI citation rates. Simply having the code on your page isn’t enough. What AI models care about is “structured clarity,” whether the markup actually helps them extract accurate, verifiable information faster than they could from unstructured text.

Here’s where it gets interesting. In systems like Google AI Overviews and Bing Copilot, Schema’s influence is more direct. These platforms lean heavily on existing search indexes, and structured data helps them identify answer boundaries. Microsoft noted in a late-2025 technical session that structured data functions as a “steering” mechanism, guiding the AI toward higher-confidence answers.

| Processing Dimension | Traditional Search (Google/Bing) | Generative AI (ChatGPT/Perplexity) |
|---|---|---|
| Primary Goal | Page indexing and feature classification | Entity recognition and answer synthesis |
| Schema Function | Triggers Rich Results (stars, prices) | Boosts RAG retrieval confidence scores |
| Reading Method | Strict Schema.org syntax tree | Contextual tokenization + symbolic reasoning |
| Citation Logic | PageRank + keyword relevance | Authority (E-E-A-T) + information density |
| Data Linking | Isolated page-level markup | Cross-domain entity graphs (@id, @graph) |

The bottom line: Schema is a signal, not a shortcut. It works when it’s paired with content AI models actually trust.

3 Schema Types That Actually Influence AI Recommendations

Out of hundreds of Schema types, only a handful consistently move AI visibility tracking metrics. They share one trait: they match the way AI systems process questions.

FAQ Schema: The Highest-Impact Format

FAQPage Schema is the single most effective type for AI citation. The logic is straightforward. Generative search is fundamentally a question-answering system, and FAQ Schema packages information into exactly the unit AI handles best: question-answer pairs.

Pages with properly implemented FAQPage Schema see citation rates in AI responses roughly 2.5 times higher than unmarked pages. On the same topic, GPT-4’s accuracy in understanding the content rises from 16% on unstructured text to 54% on structured FAQ content.

One detail most guides miss: answer length matters. The sweet spot sits between 134 and 167 words per answer. That range gives the model enough verifiable facts (specific numbers, locations, credentials) while staying short enough to embed cleanly into a synthesized response. Go much longer, and the model is more likely to paraphrase loosely or skip it entirely.
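To make that concrete, here is a minimal Python sketch that builds a FAQPage JSON-LD object and flags answers that fall outside the 134 to 167 word window. The function names and the sample questions are hypothetical, and the window is the guideline quoted above rather than a hard rule.

```python
import json

# Word-count window quoted in the article; treat it as a guideline, not a rule.
MIN_WORDS, MAX_WORDS = 134, 167


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def answers_outside_window(pairs, low=MIN_WORDS, high=MAX_WORDS):
    """Return (question, word_count) for answers outside the target window."""
    flagged = []
    for question, answer in pairs:
        count = len(answer.split())
        if not low <= count <= high:
            flagged.append((question, count))
    return flagged


def to_script_tag(jsonld):
    """Wrap the object in the script tag that carries JSON-LD on a page."""
    return (
        '<script type="application/ld+json">'
        + json.dumps(jsonld)
        + "</script>"
    )


# Hypothetical content, used only to exercise the functions.
faq_pairs = [
    ("How long does onboarding take?", " ".join(["word"] * 150)),
    ("Do you offer refunds?", "Yes, within 30 days."),
]

faq_jsonld = build_faq_jsonld(faq_pairs)
faq_flagged = answers_outside_window(faq_pairs)
print(faq_flagged)
```

Running the length check before publishing keeps the markup and the editorial guideline in one place, so a too-short answer gets caught before it ships.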

HowTo Schema: Capturing Step-by-Step Intent

One of the most common AI use cases is “how do I…” queries. When users ask for instructions, AI systems prioritize content with clear, sequential steps over narrative explanations.

HowTo Schema marks up each step, required tools, and expected outcomes in a format AI can extract without guessing. How-to guides show citation rates around 54% across AI platforms, with particularly strong performance in Perplexity and Google AI Overviews. The structured format also helps AI cross-reference your steps against other sources, which increases citation confidence.
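As a rough illustration, the sketch below assembles a HowTo object with numbered steps and required tools. The helper name and the sample guide are hypothetical. The property names (`tool`, `step`, `position`) come from the schema.org vocabulary.

```python
import json


def build_howto_jsonld(name, tools, steps):
    """Build a schema.org HowTo object with ordered steps and required tools.

    `steps` is a list of (step_name, step_text) tuples in reading order.
    """
    return {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "tool": [{"@type": "HowToTool", "name": tool} for tool in tools],
        "step": [
            {
                "@type": "HowToStep",
                "position": index,  # explicit 1-based order for each step
                "name": step_name,
                "text": step_text,
            }
            for index, (step_name, step_text) in enumerate(steps, start=1)
        ],
    }


# Hypothetical guide, used only to exercise the function.
howto_jsonld = build_howto_jsonld(
    name="How to add JSON-LD to a landing page",
    tools=["Text editor", "Structured data validator"],
    steps=[
        ("Draft the markup", "Write the JSON-LD object for the page."),
        ("Embed it", "Place the object in a script tag in the page head."),
        ("Validate", "Run the page through a structured data validator."),
    ],
)
print(json.dumps(howto_jsonld, indent=2))
```

Numbering each step explicitly means the sequence survives even if a parser reorders the array.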

Product and Review Schema: Entering the Consideration Set

In purchase-decision contexts, AI models act as comparison engines. They sort brands by price, specs, user ratings, and availability.

Product, Offer, and AggregateRating Schema are effectively your ticket into that comparison. Analysis of 768,000 AI citations found that product-focused content accounts for 46% to 70% of all AI citations in commercial queries, while traditional long-form blog posts account for just 3% to 6%. AI heavily favors pages with hard specs, pricing tables, and structured review data when generating shopping recommendations.
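Here is one way those three types can fit together, sketched in Python. The product, price, and rating values are made up for illustration, and the helper name is hypothetical. The nesting (`offers`, `aggregateRating`) follows the schema.org vocabulary.

```python
import json


def build_product_jsonld(name, description, price, currency,
                         rating_value, review_count, in_stock=True):
    """Build a schema.org Product with a nested Offer and AggregateRating."""
    availability = (
        "https://schema.org/InStock" if in_stock
        else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # fixed two-decimal price string
            "priceCurrency": currency,
            "availability": availability,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }


# Hypothetical product, used only to exercise the function.
product_jsonld = build_product_jsonld(
    name="Acme Analytics Pro",
    description="Team analytics plan with unlimited dashboards.",
    price=49,
    currency="USD",
    rating_value=4.6,
    review_count=212,
)
print(json.dumps(product_jsonld, indent=2))
```

The values in the markup should be the same ones shown in the visible pricing and review sections of the page.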

| Schema Type | AI Citation Lift (vs. No Markup) | Core Advantage | Best For |
|---|---|---|---|
| FAQPage | +89% (Google AIO) | Direct Q&A format match | Service pages, FAQ sections |
| HowTo | +76% | Structured step extraction | Guides, tutorials, SOPs |
| Product | +60%-70% (overall) | Parameterized comparison | E-commerce, SaaS feature pages |
| Article/Blog | +36% (median) | Author identity signals | Thought leadership, industry news |

What Schema Won’t Fix: The Content Gap That Tanks AI Visibility

Schema is a powerful signal. It’s not a rescue plan for weak content.

The most common failure pattern: brands invest heavily in technical markup while ignoring what AI models actually weigh most in citation decisions. In AI’s evaluation framework, Schema accounts for an estimated 10% to 20% of the total weight. Domain authority and content depth carry the rest, outweighing Schema by an estimated 3.5:1.

That’s the gap most brands still can’t see.

AI systems, especially Perplexity and ChatGPT, show a strong preference for “earned media”: authoritative, independent third-party sources. If your brand has Schema on its own website but lacks external citations from platforms like Reddit, G2, or industry publications, AI will often cite those external sources instead of your site. Research consistently shows that 82% to 85% of AI brand citations originate from third-party domains.

There’s also the problem of “semantic drift.” When AI models form opinions based on outdated training data, Schema alone can’t override that bias. One documented case involved a fintech brand whose AI profile was shaped by a minor incident from two years prior. Correcting it required building a structured “trust center” packed with verifiable credentials (ISO certifications, security standards) that the RAG retrieval layer could pick up in real time.

What does move the needle alongside Schema:

- Layered heading structures where H2/H3 titles mirror the actual questions users ask AI

- Answer-first architecture that puts the conclusion in the opening sentence of each section

- Data tables instead of paragraphs for comparison content, which shows 2.8x higher citation rates than prose-based comparisons

How to Measure If Your Schema Changes AI Visibility Tracking Results

Google Rich Results Test confirms your code is valid. It tells you nothing about whether ChatGPT, Perplexity, or Gemini are actually citing your pages.

That’s where dedicated AI visibility tracking fills the gap. Traditional SEO tools were built to measure clicks and rankings. AI visibility requires a different measurement layer entirely.

Topify approaches this through five core metrics designed specifically for Schema-to-visibility measurement:

| Metric | What It Measures | 2026 Success Benchmark |
|---|---|---|
| Visibility Score | Brand appearance frequency across target prompts | Core category > 60% |
| Sentiment Score | How positively AI describes your brand (0-100) | > 85 (weighted positive) |
| Position Rank | Placement in AI recommendation lists (top 3-5) | Average < 2.0 |
| Source Citation | Which URLs are driving AI’s opinions | Your domain in top 3 citation sources |
| CVR (Visibility Rate) | Estimated conversion value of AI mentions | Outperform traditional organic CPC |
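To show what the first and third rows could look like as arithmetic, here is a small Python sketch. These formulas are an assumption for illustration, not Topify’s actual scoring method, and the prompt results are invented.

```python
def visibility_score(results, brand):
    """Share of prompts (0.0 to 1.0) whose answer mentions the brand."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if brand in r["ranked_brands"])
    return hits / len(results)


def average_position(results, brand):
    """Mean 1-based rank across answers that mention the brand, else None."""
    ranks = [
        r["ranked_brands"].index(brand) + 1
        for r in results
        if brand in r["ranked_brands"]
    ]
    return sum(ranks) / len(ranks) if ranks else None


# Hypothetical tracking data: one entry per prompt, brands in answer order.
sample_results = [
    {"prompt": "best analytics tool for startups", "ranked_brands": ["Acme", "Bolt"]},
    {"prompt": "analytics tools with free tier", "ranked_brands": ["Bolt", "Acme", "Core"]},
    {"prompt": "enterprise analytics platforms", "ranked_brands": ["Core"]},
    {"prompt": "simple dashboard software", "ranked_brands": ["Acme"]},
]

acme_visibility = visibility_score(sample_results, "Acme")
acme_position = average_position(sample_results, "Acme")
print(f"visibility={acme_visibility:.0%}, average position={acme_position:.2f}")
```

In this invented sample, the brand appears in three of four prompts with an average rank of about 1.33, which would clear both the 60% and the sub-2.0 benchmarks in the table.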

The Source Analysis feature reveals a critical detail most teams miss: is AI citing your site because of your Schema improvements, or is it still pulling from a Reddit thread you’ve never seen? If 60% of citations come from external forums, that’s a clear signal to shift effort from technical markup toward community engagement and third-party coverage.
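If you have an export of the URLs an AI answer cited, the own-site versus external split is easy to compute yourself. The sketch below assumes a plain list of URLs and a hypothetical domain, `example.com`. It does not reflect how any particular tool calculates the figure.

```python
from collections import Counter
from urllib.parse import urlparse


def host_of(url):
    """Lowercased hostname with a leading 'www.' removed."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def is_own(host, own_domain):
    """True for the domain itself or any of its subdomains."""
    return host == own_domain or host.endswith("." + own_domain)


def external_share(cited_urls, own_domain):
    """Return (share of external citations, Counter of external hosts)."""
    hosts = [host_of(url) for url in cited_urls]
    external = [h for h in hosts if not is_own(h, own_domain)]
    share = len(external) / len(hosts) if hosts else 0.0
    return share, Counter(external)


# Hypothetical citation export, used only to exercise the functions.
cited = [
    "https://www.example.com/pricing",
    "https://www.reddit.com/r/analytics/comments/abc",
    "https://www.g2.com/products/acme/reviews",
    "https://example.com/faq",
    "https://www.reddit.com/r/startups/comments/def",
]

share, by_host = external_share(cited, "example.com")
print(f"{share:.0%} external", by_host.most_common())
```

Here three of the five citations are external, which is the 60% situation described above.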

Start with the free GEO Score Checker to get your baseline across AI bot access, structured data, content signals, and overall visibility. No signup required.

A 30-Day Schema-to-Visibility Playbook

Theory doesn’t move metrics. Here’s a tested four-week plan that connects Schema deployment to measurable AI visibility gains.

Week 1: Audit and Baseline

Pull 20-50 real conversational queries from sales calls, support tickets, and community threads. These are the prompts AI users are actually typing.

Run a technical check: is your robots.txt blocking AI crawlers? Is your site using server-side rendering? Heavy client-side JavaScript reduces AI citation visibility by roughly 60%. Use Topify’s GEO Score Checker to capture your starting Visibility Score across ChatGPT, Perplexity, and Google AI Overviews.
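The robots.txt part of that check can be scripted with Python’s standard library. The sketch below parses a robots.txt body and lists which AI crawlers it blocks. The user-agent list is an assumption and should be verified against each vendor’s current documentation. The sample file is invented.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for AI crawlers; verify against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url="https://example.com/",
                        agents=AI_CRAWLERS):
    """Return the agents that the given robots.txt text forbids from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_crawlers(sample_robots)
print(blocked)
```

To audit a live site, fetch its robots.txt first and pass the text in. Parsing a string keeps the check easy to run in a test suite.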

Week 2: Deploy Schema and Align Content

Target your top 10 ranking pages first. Deploy FAQPage Schema with Q&A pairs that exactly match visible page content. Any mismatch triggers AI consistency checks that disqualify your page.
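A simple pre-publish check can catch those mismatches. This sketch assumes you already have the page’s visible text as a plain string (extracting it from HTML is out of scope here) and verifies that every marked-up question and answer appears in it. The function names and sample content are hypothetical.

```python
import re


def normalize(text):
    """Collapse whitespace and lowercase so formatting differences don't matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def mismatched_faq_entries(faq_jsonld, visible_text):
    """Return questions whose question or answer text is missing from the page."""
    page = normalize(visible_text)
    missing = []
    for item in faq_jsonld.get("mainEntity", []):
        question = item["name"]
        answer = item["acceptedAnswer"]["text"]
        if normalize(question) not in page or normalize(answer) not in page:
            missing.append(question)
    return missing


# Hypothetical markup and page text, used only to exercise the check.
sample_faq = {
    "@type": "FAQPage",
    "mainEntity": [
        {"@type": "Question", "name": "Do you offer refunds?",
         "acceptedAnswer": {"@type": "Answer", "text": "Yes, within 30 days."}},
        {"@type": "Question", "name": "Is there a free plan?",
         "acceptedAnswer": {"@type": "Answer", "text": "Yes, for up to 3 users."}},
    ],
}
sample_page = """
Do you offer refunds?
Yes,   within 30 days.
Is there a free plan?
Yes, for up to 5 users.
"""

mismatches = mismatched_faq_entries(sample_faq, sample_page)
print(mismatches)
```

The second entry is flagged because the markup says 3 users while the page says 5. Drift like this is common when copy gets edited but the JSON-LD does not.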

“Machine-ize” your content: convert key comparison data into HTML tables. Place a direct answer to the core question within the first 150 words of each page. That’s the highest-priority extraction window for AI retrieval models.
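Both steps can be scripted. The sketch below renders comparison rows as an HTML table and checks whether a key answer phrase appears within the first 150 words of a page’s text. The helper names and sample data are hypothetical, and the 150-word window is the figure quoted above.

```python
from html import escape


def rows_to_html_table(headers, rows):
    """Render headers and rows as a minimal, escaped HTML table."""
    head = "".join(f"<th>{escape(str(h))}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


def answer_in_opening(page_text, answer_phrase, window=150):
    """True if the phrase appears within the first `window` words of the text."""
    opening = " ".join(page_text.split()[:window]).lower()
    return answer_phrase.lower() in opening


# Hypothetical comparison data and page text.
table_html = rows_to_html_table(
    ["Plan", "Price"],
    [["Starter", "$10"], ["Pro & Team", "$30"]],
)
page_text = (
    "Acme costs $10 per month. "
    + " ".join(["filler"] * 200)
    + " hidden conclusion"
)

print(table_html)
print(answer_in_opening(page_text, "costs $10 per month"))
print(answer_in_opening(page_text, "hidden conclusion"))
```

Escaping cell values matters here, because product names and prices often contain characters like `&` that would otherwise break the markup.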

Week 3: Build the Entity Graph

Use @id attributes to link “author,” “organization,” and “article” entities into a connected loop. This can boost AI content confidence by approximately 20%.
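One way to wire that up is a single `@graph` in which each entity has a stable `@id` and the others point to it. The sketch below builds such a graph and checks that every reference resolves. All URLs, names, and the helper function are hypothetical.

```python
import json

SITE = "https://example.com"  # hypothetical site root

entity_graph = {
    "@context": "https://schema.org",
    "@graph": [
        {
            "@type": "Organization",
            "@id": f"{SITE}/#organization",
            "name": "Acme Analytics",
            "url": SITE,
        },
        {
            "@type": "Person",
            "@id": f"{SITE}/#author-jane",
            "name": "Jane Doe",
            "worksFor": {"@id": f"{SITE}/#organization"},
        },
        {
            "@type": "Article",
            "@id": f"{SITE}/blog/schema-guide#article",
            "headline": "A Practical Guide to Schema",
            "author": {"@id": f"{SITE}/#author-jane"},
            "publisher": {"@id": f"{SITE}/#organization"},
        },
    ],
}


def unresolved_references(document):
    """Return @id values that are referenced but never defined in the graph."""
    nodes = document["@graph"]
    defined = {node["@id"] for node in nodes if "@id" in node}
    referenced = set()

    def walk(value, top_level=False):
        if isinstance(value, dict):
            # A nested dict holding only "@id" is a pointer to another node.
            if set(value) == {"@id"} and not top_level:
                referenced.add(value["@id"])
            for child in value.values():
                walk(child)
        elif isinstance(value, list):
            for child in value:
                walk(child)

    for node in nodes:
        walk(node, top_level=True)
    return referenced - defined


print(json.dumps(entity_graph, indent=2))
print(unresolved_references(entity_graph))
```

An empty result means the article, author, and organization all link to nodes that exist. A dangling `@id` is an easy mistake to make when templates are copied between pages.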

Simultaneously, update your brand information on third-party platforms (G2, Reddit, industry directories) so AI can cross-verify structured data across multiple sources.

Week 4: Monitor, Iterate, Declare Victory

Track these signals through Topify’s Visibility Tracking:

- Citation frequency: look for a 30%+ increase in Google AIO mentions

- Sentiment shift: AI descriptions moving from vague or negative toward precise and positive

- Conversion quality: AI-referred visitors typically convert at 4.4x to 23x higher rates than standard organic traffic, even if raw click volume stays flat
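For the citation-frequency target, the arithmetic is a relative lift between two measurement windows. A minimal sketch, with invented counts and hypothetical function names:

```python
def citation_rate(mentions, prompts):
    """Fraction of tracked prompts in which the brand was cited."""
    if prompts <= 0:
        raise ValueError("prompts must be positive")
    return mentions / prompts


def relative_lift(before_rate, after_rate):
    """Relative change from the baseline rate, e.g. 0.30 for a 30% increase."""
    if before_rate <= 0:
        raise ValueError("baseline rate must be positive to compute a lift")
    return (after_rate - before_rate) / before_rate


# Hypothetical counts: same 200 prompts measured in Week 1 and Week 4.
baseline_rate = citation_rate(40, 200)
followup_rate = citation_rate(56, 200)

lift = relative_lift(baseline_rate, followup_rate)
meets_target = lift >= 0.30  # the 30%+ goal from the list above
print(f"lift={lift:.0%}, meets target: {meets_target}")
```

Keeping the prompt set fixed between the two windows matters more than the formula. Otherwise a change in the rate may just reflect a change in the questions.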

Google AIO changes tend to appear within 2-4 weeks. For models with periodic training updates (like GPT), the impact takes longer to reach the base model but shows up faster in web-search mode.

Conclusion

Schema Markup in 2026 isn’t about earning star ratings on Google. It’s infrastructure for machine trust, accounting for 10% to 20% of what determines whether AI cites your brand or your competitor’s.

That 10-20% matters. It lowers the friction AI faces when extracting facts from your pages. Combined with content depth and continuous AI visibility tracking, it’s the difference between showing up in the answer and being left out entirely.

The starting point is specific: audit your FAQ Schema, restructure answers to hit the 134-167 word sweet spot, and deploy Topify to measure what changes. The brands that treat Schema as part of a tracking loop, not a one-time technical fix, are the ones AI keeps recommending.

FAQ

Does schema markup directly affect ChatGPT recommendations?

Not the way it affects Google. LLMs treat JSON-LD as an auxiliary signal during RAG retrieval, using it to disambiguate entities and improve fact-extraction accuracy. It increases the probability your content is “correctly understood,” which indirectly influences recommendations.

Which schema type is most important for AI search?

FAQPage Schema currently has the highest measured impact, with approximately 89% citation lift in Google AI Overviews. Its Q&A structure matches how generative engines process and synthesize information.

How long does it take for schema changes to impact AI visibility?

For engines using real-time search indexes (Google AIO, Perplexity), expect 2-4 weeks. For models with periodic training data updates (ChatGPT’s base model), it can take months, though results appear faster when the model uses its live web-search mode.

Can I track my brand’s AI visibility after adding schema?

Yes. Topify monitors brand mentions, ranking positions, and sentiment scores across ChatGPT, Gemini, Perplexity, and other major AI platforms. You can directly compare pre- and post-Schema metrics to measure the impact of your optimization work.