Your domain authority is 75. Your blog ranks on the first page for a dozen high-intent keywords. But when a potential buyer asks ChatGPT, “What’s the best platform for [your category]?”, the answer pulls from Reddit threads, Wikipedia entries, and a G2 review page you didn’t even know existed.

That disconnect is growing. The top 15 domains now capture roughly 68% of all AI citations across major platforms, and most brand-owned sites aren’t among them. Traditional SEO metrics weren’t built to tell you which sources LLMs actually trust, or where your brand fits in that hierarchy.

This report breaks down the citation patterns behind ChatGPT, Perplexity, Gemini, and Claude, along with what those patterns mean for AI visibility tracking in 2026.

A Small Group of Domains Controls Most AI Citations

LLMs don’t treat the internet as a flat index. They’ve built a clear hierarchy, and it’s more concentrated than Google’s PageRank ever was.

Analysis of over one billion citation data points shows that a handful of sources dominate AI-generated answers. Wikipedia alone accounts for nearly 48% of top-ten citations in ChatGPT. Reddit captures about 40% of LLM citations overall, with its share climbing to 46.5% on Perplexity. YouTube leads Google’s AI Overviews at 29.5% citation share, thanks to its rich metadata, auto-generated captions, and chapter markers.

Then there’s the professional and editorial layer. LinkedIn dominates B2B and executive-level queries. Reuters, the Associated Press, and Bloomberg anchor time-sensitive financial and news responses. Forbes and the New York Times round out the editorial authority tier.

| Rank | Domain | Core Strength | Strongest Platform |
|---|---|---|---|
| 1 | Wikipedia.org | Entity definitions, factual grounding | ChatGPT |
| 2 | Reddit.com | First-hand experience, comparisons | Perplexity / AIO |
| 3 | YouTube.com | Visual evidence, tutorial metadata | Gemini / AIO |
| 4 | LinkedIn.com | Professional authority, B2B context | Multi-platform |
| 5 | Forbes.com | Editorial authority, business rankings | ChatGPT / Perplexity |

The takeaway isn’t that these domains are “better.” It’s that LLMs have been trained to weight certain trust signals, and these sources happen to score highest on those signals consistently.
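To see how concentrated citations are in your own tracking data, one simple measure is the share captured by the top N domains. The sketch below assumes you have a list of cited URLs exported from a monitoring tool; the function names and sample URLs are hypothetical and are not drawn from the analysis above.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Extract the host from a URL and drop a leading 'www.'.

    Other subdomains (for example en.wikipedia.org) are kept as-is.
    """
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def top_n_share(cited_urls, n):
    """Return the fraction of all citations captured by the n most-cited domains."""
    counts = Counter(domain_of(url) for url in cited_urls)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return sum(count for _, count in counts.most_common(n)) / total


# Hypothetical sample data, for illustration only.
sample_citations = [
    "https://en.wikipedia.org/wiki/Customer_relationship_management",
    "https://www.reddit.com/r/sales/comments/abc123/",
    "https://www.reddit.com/r/smallbusiness/comments/def456/",
    "https://www.youtube.com/watch?v=example",
    "https://www.example-brand.com/blog/crm-guide",
]

share = top_n_share(sample_citations, n=2)
print(f"Top 2 domains capture {share:.0%} of citations")
```

Running the same calculation per platform, rather than across all platforms at once, would show whether concentration differs between ChatGPT, Perplexity, and the others.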

Each AI Platform Has a Different Citation Personality

Brands that treat AI search as a single channel are misreading the landscape. ChatGPT, Perplexity, Gemini, and Claude each pull from different source pools, with meaningfully different preferences.

ChatGPT behaves like a cautious encyclopedia editor. It leans on Wikipedia and top-tier news outlets, keeping citations tight at 2 to 4 sources per answer. Brand-owned pages rarely appear unless the query has explicit commercial intent.

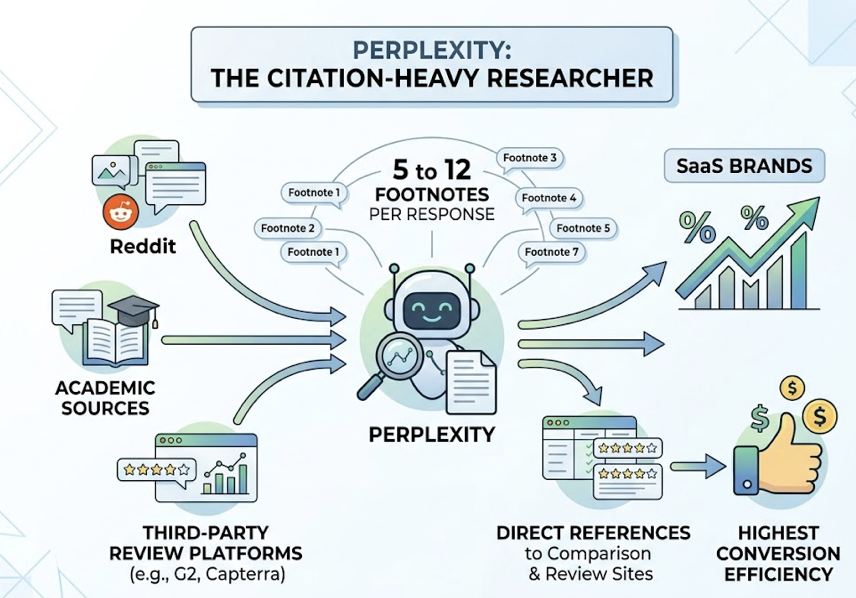

Perplexity is the citation-heavy researcher. It typically surfaces 5 to 12 footnotes per response, drawing heavily from Reddit, academic sources, and third-party review platforms like G2 and Capterra. For SaaS brands, Perplexity tends to deliver the highest conversion efficiency because it directly references comparison and review sites.

Gemini and Google AI Overviews favor Google’s own ecosystem. YouTube, Google Maps, and Google Shopping dominate the citation mix. The overlap between AIO citations and traditional top-ten SERP results once hit 76.1%, though that number is declining in 2026 as Google diversifies its sources.

Claude prefers long-form depth. The Atlantic, The New Yorker, and The Economist appear more frequently in Claude’s citations than on any other platform. It favors time-tested analysis over breaking news, making it the strongest platform for brands with deep editorial content.

| Dimension | ChatGPT | Perplexity | Gemini / AIO | Claude |
|---|---|---|---|---|
| Top source type | Wikipedia, elite news | Reddit, G2, academic | YouTube, local listings | Long-form magazines |
| Citations per answer | 2-4 | 5-12 | 3-5 | 2-3 |
| Algorithmic preference | Editorial authority | Real-time UGC + data | Google ecosystem, E-E-A-T | Narrative quality, logic |

One critical warning: citation patterns shift. In September 2025, a ChatGPT parameter update dropped Reddit’s citation share from 60% to 10% in six weeks. PR Newswire, Forbes, and Medium absorbed the gap. Brands that had over-invested in Reddit visibility lost ground overnight.

That’s the kind of volatility that makes ongoing AI visibility tracking non-negotiable.
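A shift like this can be caught by comparing each domain’s citation share between two snapshots. The following is a minimal sketch, assuming you already store per-domain shares as fractions for each period; the function name, the 10-point alert threshold, and every sample value except Reddit’s 60% and 10% are hypothetical.

```python
def share_changes(previous, current, min_change=0.10):
    """Flag domains whose citation share moved by at least min_change.

    previous, current: dicts mapping domain -> share as a fraction (0.0 to 1.0).
    Returns (domain, before, after, delta) tuples, largest absolute move first.
    """
    flagged = []
    for domain in set(previous) | set(current):
        before = previous.get(domain, 0.0)
        after = current.get(domain, 0.0)
        delta = after - before
        if abs(delta) >= min_change:
            flagged.append((domain, before, after, delta))
    return sorted(flagged, key=lambda item: abs(item[3]), reverse=True)


# Reddit's 60% -> 10% comes from the text above; the other values are made up.
before_update = {"reddit.com": 0.60, "forbes.com": 0.05, "wikipedia.org": 0.20}
after_update = {"reddit.com": 0.10, "forbes.com": 0.20, "wikipedia.org": 0.22}

alerts = share_changes(before_update, after_update)
for domain, before, after, delta in alerts:
    print(f"{domain}: {before:.0%} -> {after:.0%} ({delta:+.0%})")
```

The threshold is a judgment call: a lower value catches smaller moves but produces more noise from week-to-week sampling variation.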

Why AI Engines Favor Certain Content Formats

Domain authority gets you considered. But the format and structure of your content determine whether AI actually extracts and cites it.

The data here is specific. Pages that include concrete statistics, percentages, and data points are 40% more likely to be cited than purely qualitative content. That’s a significant gap for a single structural choice.

Position matters too. 44.2% of AI citations are extracted from the first 30% of an article. If your key claim or definition sits in paragraph eight, most LLMs won’t reach it. Front-loading your core statement is one of the highest-leverage GEO moves available.
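One way to check front-loading on an existing page is to measure where the key claim starts as a fraction of the full text. This is a rough sketch using plain character offsets; the function names and sample strings are hypothetical, and the 0.30 default simply mirrors the “first 30%” figure above.

```python
def relative_position(article_text, key_claim):
    """Return where key_claim starts, as a fraction of the article length.

    Returns None if the claim does not appear in the text.
    """
    index = article_text.find(key_claim)
    if index == -1:
        return None
    return index / len(article_text)


def is_front_loaded(article_text, key_claim, threshold=0.30):
    """True if the claim starts within the first `threshold` share of the text."""
    position = relative_position(article_text, key_claim)
    return position is not None and position <= threshold


# Hypothetical article bodies, for illustration only.
claim = "Acme is a CRM for small teams."
early = claim + " " + "Background detail. " * 20
late = "Background detail. " * 20 + claim

print(is_front_loaded(early, claim))
print(is_front_loaded(late, claim))
```

Exact string matching is the simplest possible test. A real audit would probably need fuzzy matching, since the claim is rarely worded identically in a brief and in the published page.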

A few more structural signals that correlate with higher citation rates:

Heading hierarchy: 68.7% of cited pages follow strict H1 to H2 to H3 logic. AI crawlers use these levels to map entity relationships.

Content freshness: 50% of cited content was published within the last 13 weeks. On Perplexity, freshness can override domain authority entirely.

Depth over brevity: Content exceeding 20,000 characters gets 4.3x more citations on average than thin pages. LLMs prefer comprehensive “single source of truth” documents they can chunk on their own.

The pattern is clear. AI engines don’t want summaries of summaries. They want structured, data-rich, deeply reported source material they can extract from confidently.
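Several of these signals can be checked mechanically before publishing. The sketch below assumes the page is available as Markdown with `#`-style headings; the function name and returned keys are hypothetical, and the 20,000-character default comes from the depth figure above.

```python
import re


def audit_markdown(markdown_text, min_chars=20_000):
    """Run three simple structural checks on a Markdown document.

    Limitation: lines starting with '#' inside fenced code blocks are
    also counted as headings by this simple pattern.
    """
    heading_levels = [
        len(match.group(1))
        for match in re.finditer(r"^(#{1,6})[ \t]+\S", markdown_text, flags=re.MULTILINE)
    ]
    # A skip is a jump of more than one level deeper, e.g. H1 straight to H3.
    skips_level = any(
        later - earlier > 1
        for earlier, later in zip(heading_levels, heading_levels[1:])
    )
    starts_with_h1 = bool(heading_levels) and heading_levels[0] == 1
    return {
        "strict_heading_hierarchy": starts_with_h1 and not skips_level,
        "meets_length_threshold": len(markdown_text) >= min_chars,
        "contains_percentages": bool(re.search(r"\d+(?:\.\d+)?%", markdown_text)),
    }


# Hypothetical documents, for illustration only.
well_structured = "# Title\n\nIntro with a 40% stat.\n\n## Section\n\n### Detail\n\n## Next\n"
skips_a_level = "# Title\n\n### Detail\n"

print(audit_markdown(well_structured))
print(audit_markdown(skips_a_level))
```

Freshness is left out here because it depends on publication metadata rather than on the text itself.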

The Citation Gap Where Most Brands Lose AI Visibility

Here’s the number that should reframe every brand’s content strategy: 82% to 85% of AI citations come from third-party sources, not from brand-owned websites.

That means the page you spent three months optimizing on your own domain might never appear in an AI-generated answer. The Reddit thread where a customer described their experience with your product? That’s 6.5 times more likely to get cited.

This gap creates two problems.

First, brands that focus exclusively on their own site are building content in a space LLMs tend to ignore. Second, there’s the “ghost citation” phenomenon: one study found that Gemini cited a brand’s website 182 times in 30 days but never mentioned the brand name in its generated text. The AI extracted knowledge from the site but didn’t consider the brand identity relevant to the user’s question.

Closing that gap requires a shift in strategy. Instead of funneling all content investment into owned properties, brands need to build signal across the platforms LLMs trust most: Reddit, LinkedIn, G2, YouTube, and authoritative editorial outlets. The goal isn’t just visibility on your own site. It’s presence across the sources AI actually cites.
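Both problems can be measured from the same raw data: a log of AI answers with their cited URLs. Here is a minimal sketch, assuming each logged answer is a dict with `text` and `cited_urls` keys; that record shape, the function names, and the Acme sample data are all hypothetical.

```python
from urllib.parse import urlparse


def is_owned(url, owned_domain):
    """True if the URL's host is the owned domain or one of its subdomains."""
    host = urlparse(url).netloc.lower()
    return host == owned_domain or host.endswith("." + owned_domain)


def summarize_answers(answers, owned_domain, brand_name):
    """Compute the third-party citation share and count ghost citations.

    A ghost citation here means an answer that cites the owned domain
    but never mentions the brand name in its text.
    """
    total = third_party = ghost = 0
    for answer in answers:
        cites_owned = False
        for url in answer["cited_urls"]:
            total += 1
            if is_owned(url, owned_domain):
                cites_owned = True
            else:
                third_party += 1
        if cites_owned and brand_name.lower() not in answer["text"].lower():
            ghost += 1
    return {
        "third_party_share": third_party / total if total else 0.0,
        "ghost_citation_answers": ghost,
    }


# Hypothetical answer log, for illustration only.
answer_log = [
    {
        "text": "Acme and Globex are popular options for small teams.",
        "cited_urls": ["https://www.reddit.com/r/crm/1", "https://www.g2.com/products/acme"],
    },
    {
        "text": "A CRM stores customer records and interaction history.",
        "cited_urls": ["https://acme.example/blog/what-is-crm", "https://en.wikipedia.org/wiki/CRM"],
    },
]

summary = summarize_answers(answer_log, owned_domain="acme.example", brand_name="Acme")
print(summary)
```

In this sample the second answer cites the brand’s blog without naming the brand, so it counts as one ghost citation.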

Topify’s Source Analysis feature is built for exactly this problem. It reverse-engineers the domains and URLs that AI platforms cite for your target prompts, showing you where competitors are getting referenced and where your brand has gaps. That kind of AI visibility tracking at the citation layer turns a vague “we’re not showing up” into a specific, actionable map.

How to Track AI Visibility at the Source Level

Traditional keyword rankings can’t measure what’s happening inside AI-generated answers. The industry has developed a new set of KPIs specifically for this:

AI Share of Voice measures how often your brand gets mentioned relative to competitors across a defined set of prompts. It’s the closest equivalent to market share in AI search.

Citation Probability Score evaluates how likely a specific page is to be cited, based on its structure, data density, and freshness. Think of it as a predictive quality metric for GEO.

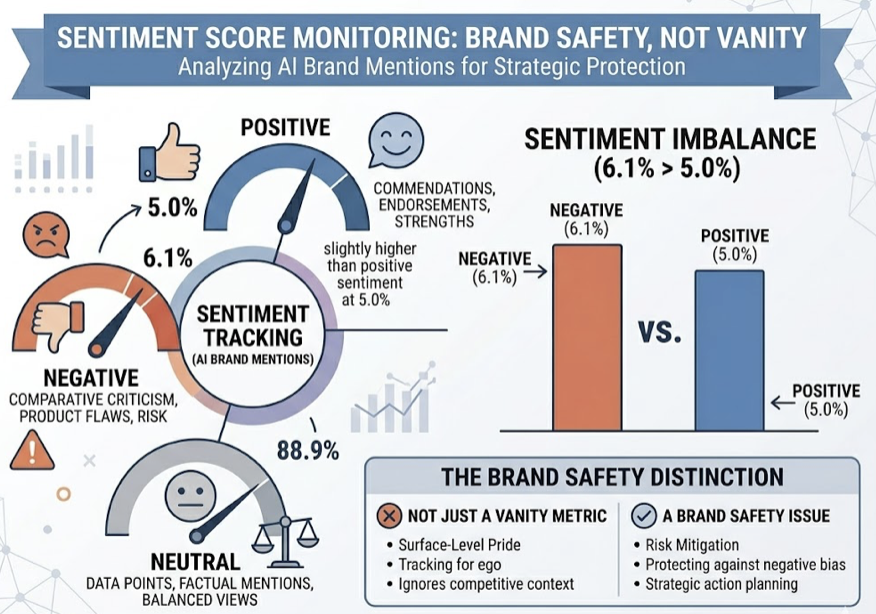

Sentiment Score tracks whether AI mentions your brand positively, neutrally, or negatively. Current data shows that in comparative contexts, AI answers carry negative sentiment 6.1% of the time, slightly more often than positive sentiment at 5.0%. That makes sentiment monitoring a brand safety issue, not just a vanity metric.
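Of these KPIs, AI Share of Voice is the easiest to approximate by hand. The sketch below uses one possible definition, which is an assumption rather than an industry standard: the number of answers that mention a brand, divided by all brand mentions across the prompt set. The function name and brand names are hypothetical.

```python
from collections import Counter


def share_of_voice(answer_texts, brands):
    """Return each brand's share of total brand mentions across answers.

    A brand is counted at most once per answer. Matching is a
    case-insensitive substring test, so short or ambiguous brand names
    would need stricter matching in practice.
    """
    mentions = Counter()
    for text in answer_texts:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


# Hypothetical answers collected for three prompts, for illustration only.
collected_answers = [
    "Acme and Globex both fit small teams.",
    "Globex is the usual pick for this use case.",
    "Most reviewers suggest Globex or Initech.",
]

voice = share_of_voice(collected_answers, ["Acme", "Globex", "Initech"])
for brand, value in voice.items():
    print(f"{brand}: {value:.0%}")
```

Because AI answers vary between runs, the result is more stable when each prompt is sampled several times per platform.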

For teams building an AI visibility tracking workflow, the practical framework looks like this: define your target prompts, monitor which sources get cited in each answer, identify content gaps between your coverage and your competitors’, then optimize content to fill those gaps.
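The gap-identification step of that framework can be sketched as a filter over logged observations. This assumes each observation records the prompt, the brands mentioned in the answer, and the domains cited; that record shape, the function name, and the sample data are hypothetical.

```python
def find_gaps(observations, brand, competitors):
    """Return prompts where at least one competitor is mentioned but the brand is not.

    Each result includes the domains cited in that answer, which are the
    candidate places to build presence.
    """
    gaps = []
    for observation in observations:
        mentioned = {name.lower() for name in observation["brands_mentioned"]}
        rivals_present = sorted(c for c in competitors if c.lower() in mentioned)
        if rivals_present and brand.lower() not in mentioned:
            gaps.append(
                {
                    "prompt": observation["prompt"],
                    "competitors": rivals_present,
                    "cited_domains": sorted(set(observation["cited_domains"])),
                }
            )
    return gaps


# Hypothetical observations, for illustration only.
observations = [
    {
        "prompt": "best CRM for small teams",
        "brands_mentioned": ["Globex", "Initech"],
        "cited_domains": ["reddit.com", "g2.com", "reddit.com"],
    },
    {
        "prompt": "what is a CRM",
        "brands_mentioned": ["Acme", "Globex"],
        "cited_domains": ["wikipedia.org"],
    },
    {
        "prompt": "CRM pricing comparison",
        "brands_mentioned": [],
        "cited_domains": ["forbes.com"],
    },
]

gaps = find_gaps(observations, brand="Acme", competitors=["Globex", "Initech"])
for gap in gaps:
    print(gap["prompt"], gap["competitors"], gap["cited_domains"])
```

Prompts where no brand is mentioned at all are deliberately excluded, since they show no competitive gap to close.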

Topify brings these steps into one platform. Its Visibility Tracking covers ChatGPT, Perplexity, Gemini, DeepSeek, and other major AI engines. Competitor Monitoring automatically detects rivals and benchmarks your position, sentiment, and citation sources against theirs. And the one-click GEO execution feature turns those insights into content actions without manual workflows.

That end-to-end loop, from tracking to diagnosis to execution, is what separates a dashboard from a system. You can get started with Topify to see where your brand currently stands across AI platforms.

3 Moves That Earn More AI Citations in 2026

The data from this report points to three high-impact actions brands can take now.

1. Front-load facts in every piece of content. If 44.2% of citations come from the first 30% of a page, burying your strongest claims below the fold is a structural disadvantage. Lead with your core insight, definition, or data point. Save the context for paragraphs two and three.

2. Build third-party signal where LLMs actually look. Your blog is important, but it’s not where AI citations concentrate. Invest in Reddit participation, G2 reviews with real use-case detail, LinkedIn thought leadership, and earned media in publications AI trusts. Brands with active third-party presence are 3x more likely to be selected as a recommended source by ChatGPT.

3. Monitor citation patterns continuously, not quarterly. The September 2025 algorithm shift proved that citation shares can swing dramatically in weeks. Monthly or quarterly audits miss these inflection points. Real-time AI visibility tracking tools like Topify’s AI search monitoring dashboard let you catch drops before they compound.

One more data point worth keeping in mind: despite the overall decline in click-through rates from AI answers (the top organic result loses 58% of its CTR when AIO is present), the traffic that does come through AI citations converts at dramatically higher rates. An Ahrefs case study found that AI-referred visitors, while representing just 0.5% of total traffic, generated 12.1% of signups, a 23x conversion premium over traditional organic traffic.

The volume is smaller. The value per visit is much higher.
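The same comparison can be run on your own analytics by dividing a channel’s share of signups by its share of traffic. The sketch below is a simplified index against the site-wide average, and the function name is hypothetical. The 23x figure cited above uses traditional organic traffic as its baseline, so it will not match this ratio exactly.

```python
def conversion_index(traffic_share, signup_share):
    """Ratio of a channel's signup share to its traffic share.

    A value of 1.0 means the channel converts at the site-wide average.
    """
    if traffic_share <= 0:
        raise ValueError("traffic_share must be greater than zero")
    return signup_share / traffic_share


# Shares from the case study cited above: 0.5% of traffic, 12.1% of signups.
ai_index = conversion_index(traffic_share=0.005, signup_share=0.121)
print(f"AI-referred visitors convert at {ai_index:.1f}x the site-wide average")
```

Computing the same index for organic search and dividing one by the other would give a channel-to-channel premium comparable to the case study’s framing.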

Conclusion

The sources LLMs trust in 2026 are concentrated, platform-specific, and shifting faster than most brands realize. Wikipedia, Reddit, YouTube, and a small group of editorial authorities dominate the citation layer, and each AI platform weights them differently.

For brands, the strategic implication is straightforward: stop optimizing only for your own site, start building signal where AI actually pulls citations, and track the results continuously. The brands that treat AI visibility tracking as an ongoing discipline, not a one-time audit, are the ones that’ll hold position as citation patterns evolve.

FAQ

Q: What is AI visibility tracking?

A: AI visibility tracking is the practice of monitoring how and where your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, and Gemini. It measures metrics like citation frequency, source attribution, sentiment, and competitive positioning within AI search results.

Q: Which AI search engine cites the most external sources?

A: Perplexity currently provides the most citations per answer, typically 5 to 12 footnotes per response. It draws heavily from Reddit, academic databases, and third-party review platforms, making it the most citation-transparent AI search engine available.

Q: Does high domain authority guarantee AI citations?

A: No. Domain authority helps with traditional SEO rankings, but LLMs use a different trust hierarchy. Content structure, data density, freshness, and third-party presence on platforms like Reddit and G2 often matter more than DA alone.

Q: How often do AI citation patterns change?

A: They can shift significantly within weeks. The September 2025 ChatGPT update moved Reddit’s citation share from 60% to 10% in six weeks. Continuous monitoring is the only way to catch these changes before they affect your brand’s visibility.