What we found after monitoring ChatGPT, Perplexity, Gemini, and Google AI Overviews for 90 days

Your domain authority is solid. Your content calendar is running. Your Google rankings haven’t moved in months, and that used to feel like stability. But when someone asks ChatGPT, “What’s the best [your category] tool?” the answer comes back with three competitors and no mention of you. Traditional analytics can’t explain it because they don’t track it. That’s the gap an AI citation tracker is designed to close.

We ran a 90-day study across 500 brands in multiple verticals, monitoring how ChatGPT, Perplexity, Gemini, and Google AI Overviews cited brand content in real conversations. Here’s what the data actually shows.

Why an AI Citation Tracker Became Non-Negotiable in 2026

Most marketing teams still treat AI search as a variation of SEO. It isn’t.

Traditional tools such as GA4, Ahrefs, and Search Console measure rankings and what happens around the click. An AI citation tracker measures something different: whether your brand appears in the AI-generated answer before the click ever happens. In generative search, the answer is the destination. If you’re not in it, there’s no second chance.

“Citation” and “mention” are also not the same thing. A mention means your brand name appears somewhere in an AI response. A citation means the model pulled your content as a source, linked to it, or ranked it as a reference point. Citations carry compounding value. Mentions don’t always.

That distinction is where most brands’ current understanding breaks down.

How We Set Up the Study

The study tracked 500 brands across healthcare, finance, B2B tech, retail, and travel over 90 days, using a structured prompt library built around real user queries.

Each prompt was categorized by intent: discovery (“what’s the best X for Y”), comparison (“X vs Y”), and verification (“does X do Z”). Prompts longer than seven words triggered AI-generated answers at a rate 46.4% higher than shorter queries, which shaped the prompt design from the start.
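The intent buckets above can be sketched as a small rule-based classifier. This is a minimal illustration only: the regular expressions and the function names `classify_intent` and `is_long_prompt` are assumptions made for this example, not the classification rules used in the study.

```python
import re

# Hypothetical patterns for illustration; the study does not publish its rules.
# Order matters: the first matching intent wins.
INTENT_PATTERNS = {
    "comparison": re.compile(r"\bvs\.?\b|\bversus\b|\bcompared (to|with)\b", re.IGNORECASE),
    "verification": re.compile(r"^(does|can|is|are|will)\b", re.IGNORECASE),
    "discovery": re.compile(r"\b(best|top|recommend)", re.IGNORECASE),
}


def classify_intent(prompt: str) -> str:
    """Return the first intent whose pattern matches, else 'unclassified'."""
    text = prompt.strip()
    for intent, pattern in INTENT_PATTERNS.items():
        if pattern.search(text):
            return intent
    return "unclassified"


def is_long_prompt(prompt: str, threshold: int = 7) -> bool:
    """True when the prompt has more than `threshold` whitespace-separated words."""
    return len(prompt.split()) > threshold
```

Keyword rules like these will misfile ambiguous prompts, so a real prompt library would likely need manual review on top.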

The tracking matrix covered four platforms:

| Platform | Avg. Citations per Response | Primary Source Preference |
|---|---|---|
| Perplexity | 21.87 | Real-time, niche-specific sources, forums |
| Google AIO | 13.3 | Authority-linked, .gov/.edu |
| Gemini | 8.34 | Brand-owned channels, official data |
| ChatGPT | 7.92 | Third-party directories, consensus sources |

Data points collected per response: cited domains, cited URLs, brand mention frequency, sentiment framing, and position within the answer.
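One way to hold those data points is a single record per AI response. The sketch below is an assumption about shape, not the study's actual schema: the class name `ResponseRecord` and its field names are hypothetical, and cited domains are derived from cited URLs rather than stored separately.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set
from urllib.parse import urlparse


@dataclass
class ResponseRecord:
    """One AI response for one prompt on one platform (hypothetical schema)."""
    platform: str
    prompt: str
    cited_urls: List[str] = field(default_factory=list)
    brand_mentions: int = 0            # times the brand name appears in the answer
    sentiment: str = "neutral"         # e.g. "positive", "neutral", "negative"
    position: Optional[int] = None     # 1-based rank in the answer; None if absent

    @property
    def cited_domains(self) -> Set[str]:
        """Distinct hostnames among the cited URLs, with a leading 'www.' removed."""
        domains = set()
        for url in self.cited_urls:
            host = (urlparse(url).hostname or "").lower()
            if host:
                domains.add(host.removeprefix("www."))
        return domains
```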

Finding 1: Being Mentioned Is Not the Same as Being Cited

62% of the 500 brands tracked were technically invisible in AI-generated answers — despite the vast majority maintaining active SEO programs. But the more important finding sits inside the remaining 38%.

Of the brands that did appear in AI responses, a large portion were mentioned without being cited. The AI named them, but didn’t link to them or use their content as a source. This matters for one concrete reason: cited brands get referral traffic. Mentioned brands mostly don’t.

Gemini showed this pattern most sharply. Certain domains were cited hundreds of times across queries, but the corresponding brand name appeared zero times in the response text. The brand was feeding the model data and getting no credit for it.

That’s not a branding problem. That’s a structural content problem.
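The mention/citation split, including the cited-but-unnamed case seen on Gemini, can be expressed as a four-way check. This is a rough sketch: `classify_presence` and its labels are names invented here, and it assumes you already have the answer text and the list of cited URLs for a response.

```python
import re
from urllib.parse import urlparse


def _host_matches(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    return host == domain or host.endswith("." + domain)


def classify_presence(answer_text, cited_urls, brand_name, brand_domain):
    """Label a response as cited_and_named, cited_unnamed, mentioned_only, or absent."""
    # Whole-word match; brand names ending in punctuation would need special handling.
    named = re.search(rf"\b{re.escape(brand_name)}\b", answer_text, re.IGNORECASE) is not None
    cited = any(_host_matches(url, brand_domain) for url in cited_urls)
    if cited and named:
        return "cited_and_named"
    if cited:
        return "cited_unnamed"
    if named:
        return "mentioned_only"
    return "absent"
```

Counting `cited_unnamed` responses per platform is one way to surface pages that feed the model data without earning brand credit.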

Finding 2: Google Rankings and AI Citations Barely Correlate

The overlap between traditional top-10 Google results and AI-cited sources runs between just 8% and 12%. In plain terms: nearly nine out of ten AI citations come from pages that wouldn’t rank on the first page of a standard Google search.

In finance specifically, the overlap sits at 11%. Healthcare shows the highest alignment at around 22%, well above the overall range, partly because AI Overviews applies stricter sourcing standards in health-related queries. But even there, the majority of cited content comes from outside the top 10.
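To reproduce an overlap figure on your own data, one plausible definition is the share of AI-cited URLs that also appear in the organic top 10 for the same query. The article does not spell out its exact formula, so treat the definition below, along with the helper names `normalize` and `overlap_rate`, as assumptions.

```python
from urllib.parse import urlparse


def normalize(url: str) -> str:
    """Reduce a URL to host plus path, ignoring scheme, 'www.', query, and trailing slash."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower().removeprefix("www.")
    return host + parsed.path.rstrip("/")


def overlap_rate(ai_cited_urls, top10_urls) -> float:
    """Share of distinct AI-cited URLs that also appear in the organic top 10."""
    cited = {normalize(u) for u in ai_cited_urls}
    if not cited:
        return 0.0
    top10 = {normalize(u) for u in top10_urls}
    return len(cited & top10) / len(cited)
```

Averaging this value across a prompt library gives a per-vertical or per-platform overlap figure comparable in spirit to the ones quoted above.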

What AI models prioritize isn’t page authority. It’s extractability. Pages that front-load their core answer within the first 50 words, use structured formats like tables and FAQ blocks, and cite specific figures get pulled into Retrieval-Augmented Generation (RAG) pipelines more reliably than long-form narrative content. Brands using direct-answer paragraph structures see citation rates roughly 40% higher than those using traditional editorial formats.

This is the core SEO assumption that doesn’t transfer: ranking high doesn’t mean getting cited.

Finding 3: Each AI Platform Has a Different Citation Logic

The four platforms don’t agree on who to cite — or what to cite from.

Only 11% of domains are cited by two or more platforms simultaneously. A brand that performs well on Gemini can be invisible on Perplexity, and vice versa. That’s not noise. It’s structural divergence.
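A cross-platform figure like this can be computed from per-platform sets of cited domains. The sketch below assumes the denominator is every distinct domain cited by at least one platform; the function name `multi_platform_share` is hypothetical.

```python
from collections import Counter
from typing import Dict, Set


def multi_platform_share(citations_by_platform: Dict[str, Set[str]]) -> float:
    """Fraction of distinct cited domains that appear on two or more platforms."""
    platform_counts = Counter()
    for domains in citations_by_platform.values():
        # Each platform contributes at most one count per domain.
        platform_counts.update(set(domains))
    if not platform_counts:
        return 0.0
    shared = sum(1 for n in platform_counts.values() if n >= 2)
    return shared / len(platform_counts)
```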

Gemini pulls 52.15% of its citations from brand-owned channels — official websites, Google Business profiles, verified landing pages. Schema markup and subdomain consistency have outsized weight here.

ChatGPT inverts this: 48.73% of its citations come from third-party sources such as Yelp, TripAdvisor, Wikipedia, and vertical directories. The model weighs external endorsement more heavily than brand-originated content.

Perplexity runs 21.87 citations per response on average and prioritizes recency. Reddit threads, niche blogs, and forum discussions rank higher here than they do on any other platform. Being absent from community conversation is a Perplexity-specific liability.

Google AI Overviews leans heavily on authority signals and shows the strongest correlation with traditional ranking, but still only overlaps with organic results about 13% of the time.

A single-channel optimization strategy doesn’t cover this spread. Each platform requires a different source footprint.

Finding 4: 80% of AI Citations Come From 20% of a Brand’s Pages

Citation concentration is extreme. Across the brands studied, the vast majority of AI citations trace back to a small cluster of pages — typically not the homepage or main product pages.

FAQ pages, structured comparison guides, and deep how-to content consistently outperform general landing pages in citation frequency. These formats give AI models discrete, extractable facts. A paragraph that answers one specific question cleanly is more likely to be pulled than a 1,500-word article that covers the topic broadly.

You don’t need more content. You need citable content.

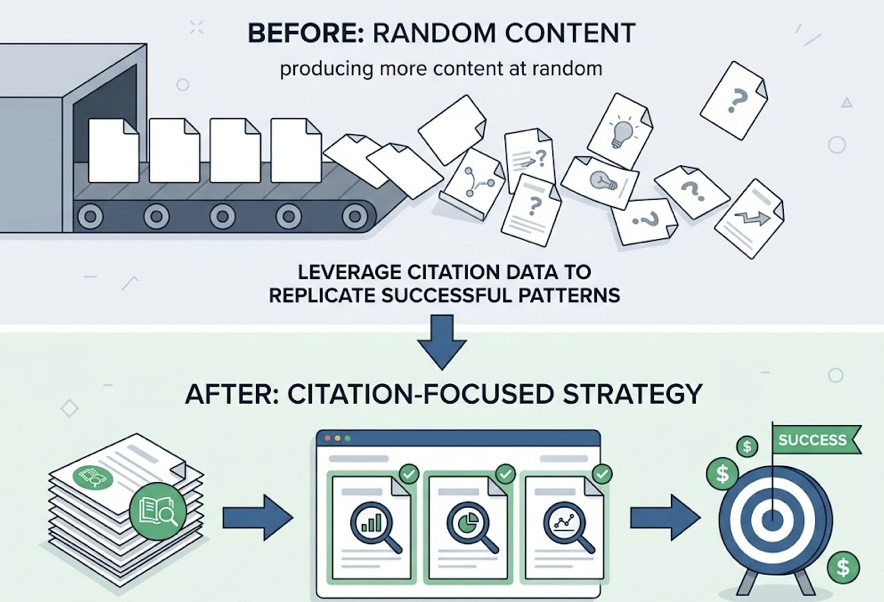

The practical implication: once you identify which pages are already generating citations, you can engineer around that pattern rather than producing more content at random. This is where citation-level tracking pays for itself — not just confirming that you’re cited, but showing exactly which pages are doing the work and which are invisible to AI systems.
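Checking how concentrated your own citations are takes only a few lines once you have citation counts per page. This is a minimal sketch; `top_share` and the sample paths in the usage note are made up for illustration.

```python
import math
from typing import Dict


def top_share(citations_per_page: Dict[str, int], top_fraction: float = 0.2) -> float:
    """Share of all citations that come from the most-cited `top_fraction` of pages."""
    total = sum(citations_per_page.values())
    if total == 0:
        return 0.0
    # Always include at least one page, rounding the page count up.
    k = max(1, math.ceil(len(citations_per_page) * top_fraction))
    top_counts = sorted(citations_per_page.values(), reverse=True)[:k]
    return sum(top_counts) / total
```

For example, with ten pages where `/faq` has 50 citations, `/compare` has 30, and the other eight share 20 between them, `top_share` returns 0.8: two pages carry 80% of the citations.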

Finding 5: Sentiment Scores Vary Wildly Across Platforms

Being cited frequently isn’t enough if the AI describes your brand in ways that undercut conversion.

Google AI Overviews is 44% more likely to include negative sentiment framing than ChatGPT. This typically involves factual references to litigation, product recalls, or public controversies — not editorial opinion, but factual context that can shape purchase decisions at the top of the funnel.

Platform sentiment profiles from the study:

| Platform | Positive Sentiment Rate | Typical Negative Source |
|---|---|---|
| Copilot | 90.9% | Minimal |
| Perplexity | 76.9% | Factual corrections |
| Google AIO | 35.6% | Legal disputes, news events |
| ChatGPT | Highly neutral | Product comparisons, compatibility |
| Claude | 0% emotional language | Pure factual framing |

ChatGPT’s negative framing tends to concentrate at the bottom of the funnel — product feature gaps, pricing comparisons — which makes it a higher-stakes platform for brands in competitive categories. A citation from ChatGPT with qualified language (“X has strong features but limited integration support”) can cost a conversion even when the citation itself appears.

What to Do With This Data: Using an AI Citation Tracker in Practice

The five findings above share a common problem: they’re invisible to standard analytics. GA4 doesn’t segment traffic by AI referral source with enough granularity. Ahrefs doesn’t track what Perplexity cited last week. Search Console doesn’t show you whether Gemini pulled from your product page or your support docs.

Topify is built specifically for this layer. Its Source Analysis function maps the exact domains and URLs that AI platforms are pulling from — for your brand and for competitors. In practice, this means you can reverse-engineer a competitor’s citation footprint: which third-party sites are driving their ChatGPT appearances, which pages Gemini is treating as authoritative, which Reddit threads Perplexity keeps pulling.

Topify’s Visibility Tracking monitors mention frequency, citation rate, sentiment score, and position across ChatGPT, Gemini, Perplexity, and Google AI Overviews from a single dashboard. If your citation rate drops on one platform while holding steady on others, you can isolate whether the problem is a source that stopped referencing you, a content change that reduced extractability, or a competitor gaining share on a specific prompt cluster.

The CVR (Conversion Visibility Rate) metric takes this further: traffic from AI-cited sources converts at roughly 4.4x the rate of standard organic traffic, because AI recommendations function as high-trust pre-screening. Knowing which citations are driving this traffic — and which pages generate them — makes the ROI case to stakeholders concrete.

Topify’s Basic plan starts at $99/month, covering ChatGPT, Perplexity, and Google AI Overviews tracking across 100 prompts.

Four Actions to Take Before Next Quarter

Build a prompt library, not a keyword list. Map the actual questions your buyers ask across discovery, comparison, and verification stages. These prompts are your tracking units, not individual keywords.

Run a citation gap analysis. Check which prompts surface competitors and not you. Then audit those competitors’ citation sources. Are they being cited from their own blog, a Reddit thread you’re not in, or a directory you haven’t claimed?

Audit your pages for extractability. Your robots.txt should allow GPTBot and PerplexityBot. Core content shouldn’t be buried behind JavaScript lazy loading. The first paragraph under each H2 should stand alone as a complete, specific answer.
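The robots.txt part of that audit can be checked with Python's standard-library parser. A small sketch, assuming you have the robots.txt contents as a string: the helper name `crawler_access` is hypothetical, and the agent list covers only the two crawlers named above.

```python
from typing import Dict, Iterable
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str,
                   agents: Iterable[str] = AI_CRAWLERS) -> Dict[str, bool]:
    """Report, per user agent, whether the given robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

A robots.txt that disallows `/` for `GPTBot` but allows everything for `*` would report `GPTBot` as blocked and `PerplexityBot` as allowed. This only tests the rules as written; it does not confirm that a crawler actually visits the page.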

Connect AI citation data to revenue. Track traffic from perplexity.ai and chatgpt.com separately in GA4. These visitors typically show a 23% lower bounce rate than standard organic traffic. That’s not because they’re better leads by accident — it’s because they’ve already been pre-qualified by the AI recommendation.
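If you export sessions or server logs, separating AI referrals can start with a simple referrer lookup. This sketch is not a GA4 API call: `AI_REFERRERS` and `ai_source` are illustrative names, and the mapping includes only the two domains mentioned above.

```python
from typing import Optional
from urllib.parse import urlparse

# Referrer domains named in the text; extend as needed for other platforms.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI platform label for a referrer URL, or None if it is not an AI referral."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, label in AI_REFERRERS.items():
        # Match the domain itself and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None
```

Tagging each session with this label lets you compare bounce and conversion rates for AI referrals against standard organic traffic.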

Conclusion

The data from 500 brands over 90 days points to one conclusion: AI citation is not a side effect of good SEO. It’s a separate system with its own logic, its own source preferences, and its own measurement requirements. Brands that treat it as an extension of their existing strategy will keep showing up in dashboards while disappearing from the answers their buyers actually see.

The gap between being visible in AI search and being invisible is structural, not random. And unlike most structural problems, this one is measurable — which means it’s fixable. Get started with Topify to see where your brand currently stands across the four major AI platforms.

FAQ

Q: What does an AI citation tracker actually measure, and how is it different from SEO tools?

A: An AI citation tracker monitors whether your brand content is being used as a source in AI-generated answers, including which pages are cited, how frequently, and with what sentiment framing. Traditional SEO tools measure ranking positions and click-through rates on search result pages. They don’t capture what AI models say in response to conversational queries, which increasingly happens before any click occurs.

Q: Which AI platform should I prioritize tracking first?

A: It depends on your category. ChatGPT has the broadest user base and matters most for purchase-stage decisions. Perplexity has the highest citation density and disproportionate influence in research-heavy categories. Google AI Overviews has the largest distribution footprint. For most brands, tracking all three simultaneously makes more sense than sequencing them, because citation patterns barely overlap: only 11% of domains are cited by two or more platforms at once.

Q: How quickly do AI citation patterns change?

A: Faster than most brands expect. Around 62% of AI citations shift within a 90-day window. In high-volatility categories like finance, week-over-week citation changes can exceed 50%. This is why one-off audits don’t replace continuous monitoring — the landscape shifts faster than quarterly reporting cycles can capture.

Q: Can I track what AI platforms are saying about my competitors?

A: Yes. Competitor citation tracking is one of the more actionable use cases. By mapping which sources AI models cite for competing brands, you can identify the third-party sites, forum threads, or publications that are driving their visibility — and build a presence in those channels. Topify’s Competitor Monitoring automates this process across platforms.