Your competitor was cited 14 times by ChatGPT last week in response to high-intent buyer queries. Your brand? Zero mentions. And you had no idea it was happening.

That scenario isn’t far-fetched. It’s the default state for most marketing teams right now. Your Google Analytics is blind to AI-mediated discovery because most of these interactions happen inside the AI interface: no referral link, no session data, no trace.

Setting up an AI citation tracker dashboard is how you fix that. Here’s exactly how to do it.

What AI Citation Tracking Actually Measures

Most brand monitoring tools track mentions: your brand name showing up in a news article, a tweet, or a review site. AI citation tracking is different.

It analyzes what LLM responses actually contain: specifically, which domains the AI cites to construct its answers and whether your brand is named as a recommendation in the response body.

There’s a meaningful gap between the two. Research shows only 28% of brands achieve both a citation (the AI linking to your domain as a source) and a mention (the AI recommending your brand by name) in the same response. That “Mention-Source Divide” matters: brands that earn both signals are 40% more likely to reappear in consecutive AI responses, creating a compounding visibility advantage.

Google Analytics can’t see any of this. You need a dedicated system.

The 5 Metrics Your Dashboard Needs Before Anything Else

Before you touch any tool, get clear on what you’re actually measuring. A dashboard built around the wrong metrics is worse than no dashboard at all.

Visibility Rate (Share of Answer). The percentage of AI responses to your target prompt set that include your brand. If your brand appears in 31 out of 100 ChatGPT responses for a specific query, your visibility rate is 31%. Because LLMs are non-deterministic, this number needs to be averaged across 60-100 prompt iterations — not pulled from a single test.
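To see why a single test is not enough, here is a minimal Python sketch that computes Visibility Rate from repeated runs of one prompt and attaches a binomial standard error. It assumes you have already collected the raw response texts. The brand name `AcmeCRM` and the sample data are hypothetical, and plain substring matching is a simplification (it misses misspellings and can match unrelated words that contain the brand string).

```python
import math

def visibility_rate(responses: list[str], brand: str) -> float:
    """Share of responses that mention the brand (case-insensitive substring match)."""
    if not responses:
        raise ValueError("need at least one response")
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)

def standard_error(rate: float, n: int) -> float:
    """Binomial standard error of a proportion estimated from n independent runs."""
    return math.sqrt(rate * (1 - rate) / n)

# Hypothetical data: 100 runs of the same prompt, 31 of which name the brand.
runs = ["... try AcmeCRM for small teams ..."] * 31 + ["... HubSpot or Pipedrive ..."] * 69
rate = visibility_rate(runs, "AcmeCRM")
print(f"visibility {rate:.0%} ± {standard_error(rate, len(runs)):.1%}")  # visibility 31% ± 4.6%
```

With 100 runs the estimate carries about 4.6 points of standard error; at the same rate with only 10 runs it would be about 14.6 points, which is why the averaging step matters.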

Citation Source Share. How often your domain appears in the citation or footnote section of an AI response, relative to competitors. AI interfaces like Perplexity typically limit citations to 3-10 links per answer, so each of those slots is intensely contested.
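Citation Source Share can be computed by counting cited domains across a batch of responses. The sketch below assumes each response’s citation list has already been extracted as URLs; the URLs themselves are invented examples. It strips a leading `www.` so that `www.g2.com` and `g2.com` count as one source.

```python
from collections import Counter
from urllib.parse import urlparse

def citation_share(citations_per_response: list[list[str]]) -> dict[str, float]:
    """Fraction of all citation slots captured by each domain, most-cited first."""
    counts: Counter[str] = Counter()
    for urls in citations_per_response:
        for url in urls:
            host = urlparse(url).netloc.lower()
            if host.startswith("www."):
                host = host[4:]  # treat www.example.com and example.com as one source
            counts[host] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    return {domain: n / total for domain, n in counts.most_common()}

responses = [
    ["https://www.acme.example/pricing", "https://reddit.com/r/crm/abc", "https://g2.com/categories/crm"],
    ["https://g2.com/categories/crm", "https://rival.example/blog/post"],
]
share = citation_share(responses)
print(share)
```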

Sentiment Score. A high visibility rate with negative sentiment is actively harmful. If the AI describes your brand as “an outdated solution” or positions you unfavorably against a competitor, that visibility is working against you. Track the quality of mentions, not just the count.
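A simple way to track mention quality alongside volume is a net sentiment score: positive mentions minus negative mentions, divided by all mentions. This sketch assumes each mention has already been labeled `positive`, `neutral`, or `negative` (by a classifier or a human reviewer, a step not shown here), and the counts are invented for illustration.

```python
from collections import Counter

LABELS = {"positive", "neutral", "negative"}

def net_sentiment(labels: list[str]) -> float:
    """(positive - negative) / total mentions, ranging from -1.0 to +1.0."""
    counts = Counter(labels)
    unexpected = set(counts) - LABELS
    if unexpected:
        raise ValueError(f"unexpected labels: {sorted(unexpected)}")
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return (counts["positive"] - counts["negative"]) / total

mentions = ["positive"] * 12 + ["neutral"] * 10 + ["negative"] * 9
print(f"{len(mentions)} mentions, net sentiment {net_sentiment(mentions):+.2f}")  # 31 mentions, net sentiment +0.10
```

Here 31 mentions produce a net score of only about +0.10: plenty of visibility, little of it favorable.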

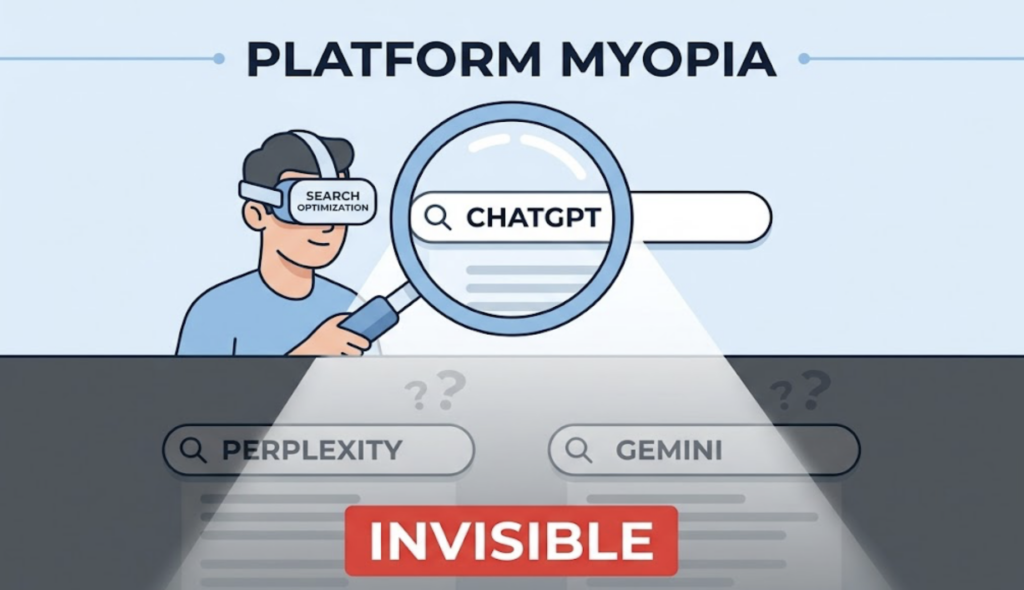

Platform Breakdown. ChatGPT and Perplexity share only 11% of the domains they cite. A single “AI score” hides these divergences. You need per-platform data.
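One way to quantify how differently two engines source their answers is the Jaccard overlap of the domain sets they cite: shared domains divided by all distinct domains cited by either. The source does not say how its 11% figure was computed, so treat this as one reasonable definition rather than the method behind that number; the domain sets are hypothetical.

```python
def domain_overlap(a: set[str], b: set[str]) -> float:
    """Jaccard overlap: shared domains / all distinct domains cited by either engine."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

# Hypothetical domains each engine cited for the same prompt set.
cited = {
    "chatgpt": {"wikipedia.org", "g2.com", "forbes.com", "acme.example"},
    "perplexity": {"reddit.com", "g2.com", "capterra.com", "rival.example"},
}
print(f"{domain_overlap(cited['chatgpt'], cited['perplexity']):.0%}")  # 14%
```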

Trend Line. Static snapshots are useless. AI citation patterns shift constantly as models update and web indexes are recrawled. You need weekly trend data to separate signal from noise.
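A trend line only needs observations bucketed into weeks. The sketch below groups dated visibility checks (a date plus whether the brand appeared) by ISO calendar week and reports each week’s rate plus the change between consecutive tracked weeks. The data layout is an assumption, not the format of any particular tool, and weeks with no data are simply absent rather than filled in.

```python
from collections import defaultdict
from datetime import date

def weekly_rates(observations: list[tuple[date, bool]]) -> dict[tuple[int, int], float]:
    """Visibility rate per ISO (year, week), in chronological order."""
    buckets: dict[tuple[int, int], list[bool]] = defaultdict(list)
    for day, visible in observations:
        iso_year, iso_week, _ = day.isocalendar()
        buckets[(iso_year, iso_week)].append(visible)
    return {week: sum(hits) / len(hits) for week, hits in sorted(buckets.items())}

def week_over_week(rates: dict[tuple[int, int], float]) -> list[float]:
    """Change in rate between consecutive tracked weeks."""
    values = list(rates.values())
    return [after - before for before, after in zip(values, values[1:])]

observations = [
    (date(2025, 6, 2), True),
    (date(2025, 6, 3), False),
    (date(2025, 6, 9), True),
    (date(2025, 6, 10), True),
]
rates = weekly_rates(observations)
print(rates, week_over_week(rates))
```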

Step 1: Define the Prompts That Drive Citations in Your Category

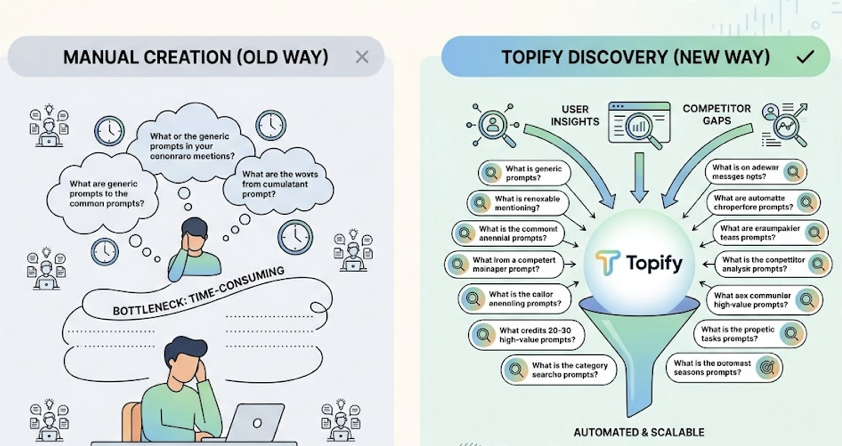

Your citation tracker is only as good as the prompts you’re monitoring. And this is where most teams underinvest.

Traditional keyword research doesn’t translate. The average ChatGPT prompt runs around 60 words. You’re not optimizing for “best CRM” — you’re optimizing for “what’s the best CRM for a 10-person SaaS team that needs Salesforce integration and doesn’t want to pay enterprise pricing.”

Start with two prompt categories that consistently drive citations. Evaluative prompts (“What’s the best [product] for [use case]?” / “Compare X vs Y”) push the AI to recommend a shortlist — these are your highest-value slots. Research prompts (“How does [process] work?”) often trigger citations of authoritative reports even when they don’t name brands.
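Long, specific prompts are easier to produce systematically from templates. This minimal sketch fills each template with every combination of slot values; the templates, slot names, and values are hypothetical examples in the spirit of the evaluative prompts above.

```python
from itertools import product

def expand_prompts(templates: list[str], slots: dict[str, list[str]]) -> list[str]:
    """Fill every template with every combination of slot values, de-duplicated in order."""
    names = list(slots)
    prompts: dict[str, None] = {}  # a dict keeps insertion order, acting as an ordered set
    for values in product(*(slots[name] for name in names)):
        mapping = dict(zip(names, values))
        for template in templates:
            prompts[template.format(**mapping)] = None
    return list(prompts)

templates = [
    "What's the best {product} for {use_case}?",
    "Compare the top {product} options for {use_case} without enterprise pricing.",
]
slots = {"product": ["CRM"], "use_case": ["a 10-person SaaS team", "a field sales team"]}
for prompt in expand_prompts(templates, slots):
    print(prompt)
```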

Aim for 20-30 prompts that cover discovery, comparison, and evaluation stages. Manual prompt creation is a significant bottleneck — Topify’s High-Value Prompt Discovery automates this by surfacing the exact questions users are already asking AI engines in your category, including visibility gaps where competitors appear but you don’t.
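Once prompts exist, a small sanity check can confirm the set stays within the 20-30 range and touches every funnel stage. Here each prompt is tagged with a stage label; the tags, thresholds, and sample data are assumptions for illustration.

```python
from collections import Counter

STAGES = ("discovery", "comparison", "evaluation")

def check_prompt_set(prompts: list[tuple[str, str]], low: int = 20, high: int = 30) -> list[str]:
    """Return human-readable problems with a (stage, prompt) list; empty means it passes."""
    problems = []
    if not low <= len(prompts) <= high:
        problems.append(f"{len(prompts)} prompts; target is {low}-{high}")
    per_stage = Counter(stage for stage, _ in prompts)
    for stage in STAGES:
        if per_stage[stage] == 0:
            problems.append(f"no prompts for stage '{stage}'")
    for stage in sorted(set(per_stage) - set(STAGES)):
        problems.append(f"unknown stage label '{stage}'")
    return problems

prompt_set = [("discovery", f"discovery prompt {i}") for i in range(12)] + [
    ("comparison", f"comparison prompt {i}") for i in range(10)
]
print(check_prompt_set(prompt_set))
```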

Step 2: Map Your Competitive Entity Landscape

AI systems don’t see brands as isolated entries. They understand them as entities within a knowledge graph, clustered by association and context.

This has a practical implication: your “AI-perceived competitors” are often not the same as your marketing plan’s competitor set.

During initial dashboard setup, it’s common to discover that the AI is grouping your brand alongside a G2 aggregator page, a Reddit thread, or a niche analyst report — not the direct competitors you were tracking. That aggregator might be capturing citation share you didn’t know you were competing for.

Topify’s Competitor Monitoring automates this detection, showing how AI engines cluster your brand and flagging new rivals as they emerge. Don’t configure your competitor set manually based on gut instinct. Let the AI tell you who it thinks your competitors are.
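If you log which entities each AI response names, a co-mention count shows who the AI actually groups you with. This sketch assumes entity extraction has already happened (the names below are hypothetical) and flags any frequent co-mention that is missing from your planned competitor list.

```python
from collections import Counter

def ai_perceived_competitors(
    responses: list[set[str]], brand: str, planned: set[str], top: int = 5
) -> tuple[list[tuple[str, int]], list[str]]:
    """Rank entities co-mentioned with `brand`; list frequent ones outside the planned set."""
    co_mentions: Counter[str] = Counter()
    for entities in responses:
        if brand in entities:
            co_mentions.update(entities - {brand})
    ranked = co_mentions.most_common(top)
    surprises = [name for name, _ in ranked if name not in planned]
    return ranked, surprises

responses = [
    {"Acme", "G2 CRM Grid", "Rival"},
    {"Acme", "G2 CRM Grid", "r/sales thread"},
    {"Rival", "BigCo"},  # brand absent, so this response is ignored
    {"Acme", "Rival"},
]
ranked, surprises = ai_perceived_competitors(responses, "Acme", planned={"Rival", "BigCo"})
print(ranked)
print(surprises)
```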

Also track co-citation signals: when your brand is mentioned in the same context as trusted industry leaders across independent sources, the statistical probability of the AI recommending you alongside those leaders increases. Co-citation is an authority signal you can actively engineer.
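Co-citation can be tracked as a simple ratio: of the independent sources that mention an industry leader, what fraction also mention you? The sketch assumes you have already collected, per source, the set of brands it mentions; `LeaderCo` and `Acme` are placeholder names.

```python
def co_citation_rate(sources: list[set[str]], brand: str, leader: str) -> float:
    """Among sources that mention `leader`, the fraction that also mention `brand`."""
    with_leader = [names for names in sources if leader in names]
    if not with_leader:
        return 0.0
    return sum(brand in names for names in with_leader) / len(with_leader)

sources = [
    {"LeaderCo", "Acme"},
    {"LeaderCo"},
    {"LeaderCo", "OtherCo"},
    {"LeaderCo"},
    {"Acme"},  # mentions you but not the leader, so it doesn't count here
]
print(co_citation_rate(sources, brand="Acme", leader="LeaderCo"))  # 0.25
```

Tracked over time, a rising ratio suggests your co-citation work is landing in the same sources the leader already appears in.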

Step 3: Set Up Source-Level Citation Tracking

This is the part most teams skip. It’s also where the most actionable intelligence lives.

AI models don’t just pull from brand-owned content. According to a 2026 citation distribution analysis, blogs and industry content account for 53.46% of all AI citations. News publishers contribute 14.09%. Reddit and community forums drive 8.71% — spiking significantly in evaluative queries where users are trying to gauge real-world trust.
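To compare your own category against that distribution, you can tag each cited domain with a source type and compute the mix. The domain-to-type mapping here is a small hypothetical example you would maintain yourself, and defaulting unknown domains to blog/industry content is a crude simplification.

```python
from collections import Counter

# Hypothetical mapping; extend it for the domains that show up in your category.
SOURCE_TYPES = {
    "reddit.com": "community",
    "quora.com": "community",
    "reuters.com": "news",
    "techcrunch.com": "news",
    "acme.example": "brand",
}

def source_mix(cited_domains: list[str], default: str = "blog/industry") -> dict[str, float]:
    """Share of citations by source type; unmapped domains fall back to `default`."""
    counts = Counter(SOURCE_TYPES.get(domain, default) for domain in cited_domains)
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.most_common()} if total else {}

print(source_mix(["reddit.com", "techcrunch.com", "someblog.example", "trade-journal.example"]))
```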

Official brand pages are often deprioritized unless the query is brand-specific.

That distribution has a direct strategic implication: writing more content on your own domain isn’t always the highest-leverage move.

Using Topify’s Source Analysis, you can identify exactly which domains the AI is citing to construct answers in your category. When you look at a competitor who consistently appears in Perplexity responses, the source might not be their blog — it might be a specific Reddit thread, an analyst report, or a niche review on a trade publication you hadn’t considered.

That’s your action item. Not a new blog post. A targeted engagement in the channel the AI already trusts.

Sort your high-citation domains into two buckets: sources you can influence (community forums, industry publications that accept contributed content, analyst relationships) and sources you can’t (Wikipedia, major news archives). Allocate effort accordingly.
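The two-bucket triage can be expressed as a filter over per-domain citation counts: keep domains cited often in your category where your brand is absent, then split them by whether you can realistically influence them. The `INFLUENCEABLE` set, the `min_citations` cutoff, and all domains are hypothetical.

```python
# Hypothetical: domains where your team can realistically contribute or engage.
INFLUENCEABLE = {"reddit.com", "g2.com", "industry-weekly.example"}

def opportunity_list(
    domain_counts: dict[str, int], brand_present: set[str], min_citations: int = 3
) -> tuple[list[str], list[str]]:
    """Split often-cited domains that don't yet feature your brand into
    (influenceable, not influenceable), most-cited first."""
    can, cannot = [], []
    for domain, count in sorted(domain_counts.items(), key=lambda kv: kv[1], reverse=True):
        if count < min_citations or domain in brand_present:
            continue
        (can if domain in INFLUENCEABLE else cannot).append(domain)
    return can, cannot

counts = {
    "reddit.com": 9,
    "wikipedia.org": 7,
    "acme.example": 5,
    "g2.com": 4,
    "industry-weekly.example": 3,
    "tinyblog.example": 1,
}
can, cannot = opportunity_list(counts, brand_present={"acme.example", "g2.com"})
print("influence:", can)
print("monitor only:", cannot)
```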

Step 4: Build Your Weekly Monitoring Routine

Here’s where most teams drop the ball: they build the dashboard and then check it once a month.

That’s not enough. Perplexity shows an 82% citation rate for content updated within the last 30 days, compared to 37% for content older than six months. AI citation patterns shift fast. A monthly review cycle means you’re responding to changes that happened weeks ago.
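Given those freshness effects, it helps to know which of your own pages fall into each age band. This sketch buckets pages by last-updated date, using 30 days and roughly six months (taken here as 182 days, an assumed cutoff) as thresholds. Where the dates come from (a CMS export, sitemap `lastmod` values) is left open, and the paths are hypothetical.

```python
from datetime import date

FRESH_DAYS = 30   # "updated within the last 30 days"
STALE_DAYS = 182  # roughly six months; an assumed cutoff

def freshness_bucket(last_updated: date, today: date) -> str:
    """Classify a page as fresh, aging, or stale by days since its last update."""
    age_days = (today - last_updated).days
    if age_days <= FRESH_DAYS:
        return "fresh"
    if age_days <= STALE_DAYS:
        return "aging"
    return "stale"

pages = {  # hypothetical paths and last-updated dates
    "/pricing": date(2025, 6, 1),
    "/blog/crm-guide": date(2025, 3, 1),
    "/blog/old-launch-post": date(2024, 10, 1),
}
today = date(2025, 6, 20)
for path, updated in pages.items():
    print(path, freshness_bucket(updated, today))
```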

The manual alternative is unsustainable. Monitoring AI citations by hand requires roughly 3 hours per week — and human data entry carries a 1-7% error rate. With an automated platform, that drops to 15 minutes with significantly higher data density.

Here’s the weekly structure that works:

Metric audit (5 min). Check Visibility Rate and Sentiment trend lines across ChatGPT, Perplexity, and Gemini. You’re looking for direction changes, not absolute numbers.

Competitor pulse (5 min). Did any unexpected rivals appear? Did a competitor’s Citation Share spike? A sudden shift usually points to a content or PR move you should investigate.

Source opportunity (5 min). Identify one high-citation domain where your brand is currently absent. Assign it as an action item for the week: a Reddit comment, a media pitch, a data contribution to an industry report.
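The metric-audit step boils down to comparing this week’s numbers with last week’s and surfacing only meaningful moves. A minimal sketch, assuming you already have one visibility rate per platform per week; the 5-point threshold is an arbitrary starting value, not a figure from this guide.

```python
def weekly_flags(last: dict[str, float], this: dict[str, float], threshold: float = 0.05) -> list[str]:
    """Flag platforms whose visibility rate moved by at least `threshold` week over week."""
    flags = []
    for platform in sorted(set(last) | set(this)):
        before, after = last.get(platform), this.get(platform)
        if before is None or after is None:
            flags.append(f"{platform}: missing data for one of the two weeks")
            continue
        delta = after - before
        if abs(delta) >= threshold:
            direction = "up" if delta > 0 else "down"
            flags.append(
                f"{platform}: visibility {direction} {abs(delta) * 100:.0f} pts "
                f"({before:.0%} -> {after:.0%})"
            )
    return flags

last_week = {"chatgpt": 0.31, "perplexity": 0.22, "gemini": 0.18}
this_week = {"chatgpt": 0.33, "perplexity": 0.12, "gemini": 0.18}
for line in weekly_flags(last_week, this_week):
    print(line)  # perplexity: visibility down 10 pts (22% -> 12%)
```

Only the Perplexity drop clears the threshold; the 2-point ChatGPT move is treated as noise.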

Topify generates these reports automatically. You show up, read the summary, make the call.

The Setup Mistakes That Tank Your Dashboard Before It Starts

Platform myopia. Most teams start with ChatGPT because of market share. But Perplexity skews toward niche expertise and community content, while Gemini prioritizes brand-owned pages and YouTube. Optimizing for one engine leaves you invisible on the others. Your dashboard needs cross-platform coverage from day one.

Tracking volume, ignoring sentiment. AI models are tuned (for example, through RLHF) in ways that tend to make them less likely to recommend brands associated with poor user-experience signals or controversy. A high citation count with negative sentiment is not a win. It’s a risk that compounds over time.

Only tracking your brand name. Category-level prompts (“what should I use for X”) often drive more purchasing decisions than brand-specific queries. If you’re not monitoring those, you’re missing the prompts where competitor share is being built.

Blocking AI crawlers. If GPTBot, ClaudeBot, or PerplexityBot are blocked in your robots.txt, your domain never enters the retrieval pipeline. The AI falls back on third-party sources — which may be less accurate or actively unfavorable. An AI robots checker should be part of your initial technical audit.
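Python’s standard-library `urllib.robotparser` is enough for a first-pass check of whether a robots.txt file blocks the crawlers named above. The sketch parses a robots.txt string directly so it runs offline (in practice you would call `set_url()` and `read()` against your live file). The sample file and URL are hypothetical, and each crawler’s own rule matching may differ from this parser’s in edge cases.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")

def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawler user agents that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]

sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample, "https://www.example.com/pricing"))  # ['GPTBot']
```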

Monthly cadence on a weekly problem. Citation drift is real. By the time your monthly report lands, the shift you needed to respond to happened three weeks ago.

One Technical Detail Most Guides Don’t Cover

Content structure affects citability in ways most teams underestimate.

Placing a 40-80 word direct answer at the top of a page, before any supporting context, can increase citation rates by up to 40%, based on research from the Princeton GEO study and industry testing. AI systems that use retrieval-augmented generation (RAG) look for machine-extractable answers, not prose that buries the key point in paragraph four.
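A quick audit for answer-first structure can count the words in a page’s opening paragraph. This sketch assumes plain text with paragraphs separated by blank lines (HTML would need text extraction first) and uses the 40-80 word window described above.

```python
def first_paragraph_word_count(page_text: str) -> int:
    """Words in the first non-empty, blank-line-separated paragraph."""
    paragraphs = [p for p in page_text.split("\n\n") if p.strip()]
    return len(paragraphs[0].split()) if paragraphs else 0

def has_answer_first(page_text: str, low: int = 40, high: int = 80) -> bool:
    """True if the opening paragraph falls inside the target word window."""
    return low <= first_paragraph_word_count(page_text) <= high

page = " ".join(["word"] * 55) + "\n\nSupporting context and detail follow here."
print(first_paragraph_word_count(page), has_answer_first(page))  # 55 True
```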

Structured data (Schema.org Organization and Product markup) gives the AI a “cheat sheet” to extract brand facts accurately. Information gain — unique data points not found in the AI’s base training data — is weighted heavily as a sourcing signal. If your content says the same thing as ten other pages, it’s not a citation candidate.
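Schema.org markup is usually embedded as JSON-LD inside a `<script type="application/ld+json">` tag. The sketch below builds minimal `Organization` and `Product` objects with Python’s `json` module. The properties used (`name`, `url`, `logo`, `sameAs`, `description`, `brand`) are standard Schema.org properties, but every value shown is a hypothetical placeholder, and real pages usually carry more fields.

```python
import json

def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> dict:
    """Minimal Schema.org Organization object."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # official profiles on other sites
    }

def product_jsonld(name: str, description: str, brand_name: str) -> dict:
    """Minimal Schema.org Product object with a nested Brand."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand_name},
    }

def to_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in a script tag for embedding in a page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

org = organization_jsonld(
    "AcmeCRM",
    "https://acme.example",
    "https://acme.example/logo.png",
    ["https://www.linkedin.com/company/acme-example"],
)
print(to_script_tag(org))
```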

This is worth auditing during setup, not after you’ve been tracking for six months.

Conclusion

The business case for this work is straightforward. AI search visitors convert at 23x the rate of traditional organic search visitors because they arrive pre-qualified. AI-driven retail referrals grew 4,700% year-over-year by mid-2025. The cost of invisibility is no longer theoretical.

Setting up an AI citation tracker dashboard isn’t a one-time project. It’s visibility infrastructure: a system that tells you where you stand in the AI’s reasoning, what your competitors are doing that you’re not, and where to put resources next week.

Start with 20-30 prompts. Map your actual competitor set. Set up source-level tracking. Build the 15-minute weekly habit. The teams that treat this as operational infrastructure — not a reporting experiment — are the ones building defensible positions in AI search right now.

FAQ

What’s the difference between AI citation tracking and brand mention monitoring?

Traditional monitoring indexes public URLs to track social and news mentions. AI citation tracking analyzes LLM-generated responses, measuring how often a brand is mentioned, cited as a source, and recommended within them. Standard analytics tools can’t capture that data.

How many prompts should I track when starting out?

Start with 20-30 high-value prompts covering discovery, comparison, and evaluation stages of the buyer journey. Prioritize evaluative and comparative prompts — these drive the AI to recommend shortlists and are the highest-value slots to compete for.

Can I track citations across ChatGPT, Perplexity, and Gemini in one dashboard?

Yes. Platforms like Topify provide unified multi-platform tracking so you can compare inter-engine performance and catch divergences that a single-platform view would miss.

How often does AI citation data change?

Frequently. Perplexity prioritizes content updated within 30 days, showing an 82% citation rate for fresh content vs. 37% for content older than six months. Weekly monitoring is the minimum cadence to distinguish sustained trend changes from temporary algorithmic noise.