Your domain authority is 70. Your keywords sit comfortably on page one. Your SEO dashboard looks healthy by every traditional metric. Then someone on your team types your core product category into ChatGPT and gets back a confident recommendation of four vendors. You’re not one of them.

That’s not a ranking failure. It’s a visibility gap that Google’s algorithm was never designed to detect. The signals that drive organic search rankings and the signals that drive AI citations are diverging fast, and the brands stuck measuring only one side are losing ground they can’t see.

What LLM Citations Are and Why Google’s Rules Don’t Apply

An LLM citation isn’t a backlink. It’s a dynamically generated reference that an AI model uses to attribute a fact, a recommendation, or a synthesized summary to a specific source. When ChatGPT or Perplexity answers a question, it doesn’t just list the top Google results. It evaluates content through a process called Retrieval-Augmented Generation(RAG), a multi-stage pipeline where queries get decomposed, documents get chunked, passages get scored, and only the most “extractable” content survives into the final response.

The divergence from Google’s logic starts here. Google rewards backlink quantity, domain authority, and keyword relevance. LLMs reward something different: brand search volume, factual density, and semantic extractability. Research shows that brand search volume has a 0.334 correlation with LLM citation frequency, surpassing the influence of backlinks entirely. That’s a fundamental shift. LLMs act as mirrors of societal mindshare, not as tallies of who earned the most links.

Here’s the thing: roughly 60% of ChatGPT queries get answered using only parametric memory, the information the model absorbed during training, with no external search triggered at all. For those queries, your page-one ranking is irrelevant. Your brand either exists in the model’s learned knowledge or it doesn’t.

| Feature | Traditional Search (Google) | Generative Engine (LLM) |

|---|---|---|

| Primary Visibility Driver | Backlink quantity and quality | Brand search volume and entity clarity |

| Content Evaluation | Keyword frequency and topical clusters | Factual density and semantic extractability |

| Retrieval Mechanism | Crawling and indexing via PageRank | RAG (Retrieval-Augmented Generation) |

| User Interface Goal | High-CTR navigational links | Synthesized answer or recommendation |

| Measurement Metric | Position (Rank 1-10) | Citation presence and sentiment score |

High Google Rank, Zero AI Visibility: How the Gap Forms

The term “LLM citation gap” describes a specific pattern: brands with strong organic rankings that are functionally invisible in AI-generated responses. It’s not hypothetical. In competitive verticals like online education and B2B SaaS, institutions with multi-million dollar marketing budgets and top-tier organic visibility capture less than 1.5% of AI citation share in their categories.

The root cause is structural. A page that repeats established consensus without adding unique, verifiable, or structured data might rank well on Google but gets discarded by a generative model during passage selection. LLMs don’t reward pages for having lots of links pointing at them. They reward pages that offer information gain: data, specifics, and structured answers that the model can confidently attribute.

That changes the stakes. In traditional search, being ranked fifth still gets you clicks. In generative search, if you’re not cited, your visibility is literally zero. There’s no “page two” to scroll to. The AI either mentions you in its synthesis or it doesn’t.

The behavioral shift makes this urgent. 73% of B2B buyers now report using AI tools as part of their purchase research. And the traffic that AI summaries capture tends to be the highest-value traffic: users in the consideration and evaluation phases, looking for direct recommendations rather than exploratory links. Early data suggests visitors arriving from an AI recommendation convert at roughly 5x the rate of traditional organic search visitors.

The Signals That Actually Drive LLM Citations

If backlinks and DA are losing their predictive power for AI visibility, what’s taking their place? Academic research into Generative Engine Optimization (GEO) has started to quantify the new signal hierarchy. Five factors stand out.

Brand search volume is the single strongest predictor. The 0.334 correlation with citation frequency means that brands people actively search for are the brands AI models prioritize, both in parametric memory and in RAG reranking. Brand-building activities that once seemed disconnected from search now directly impact AI visibility.

Source citations within your content have the largest documented impact on visibility, with research showing a 115.1% increase in citation likelihood when content references other credible sources. This signals to the retrieval system that your content is grounded in consensus, not isolated opinion.

Expert quotations increase citation probability by 37%. Statistical facts and verifiable data points boost it by 22%. And content freshness contributes roughly a 30% uplift in visibility for time-sensitive queries.

| Optimization Lever | Visibility Impact | Signal Type |

|---|---|---|

| Brand Search Volume | 0.334 Correlation | External / Parametric |

| Source Citations (within content) | +115.1% | Structural / Trust |

| Expert Quotations | +37% | E-E-A-T / Authority |

| Statistical Facts | +22% | Information Gain |

| Content Freshness | ~30% Increase | Temporal Relevance |

The pattern is clear. LLMs don’t reward keyword density. In fact, keyword stuffing actively harms GEO performance by up to 10% in generative engine responses. What they reward is factual density, structural clarity, and proof of expertise, the same qualities that make content genuinely useful to a human reader.

Content demonstrating strong E-E-A-T signals, like verifiable author credentials and firsthand experience, receives 5.2 times more citations than content without these markers. In B2B verticals, the presence of specific author credentials linked via Person Schema can account for a 2.1x increase in citation rates on platforms like Claude and ChatGPT.

Different AI Platforms, Different Citation Rules

One of the trickiest aspects of the LLM citation gap is that it’s not a single gap. It’s a different gap on every platform.

ChatGPT leans heavily on consensus data and authoritative foundations like Wikipedia. It matches Bing’s top search results for roughly 87% of retrieval-based queries. If your brand dominates traditional search, you have a partial advantage here, but only for the 40% of queries that trigger a web search at all.

Perplexity operates differently. It favors real-time, user-generated content and academic research. Approximately 46.7% of its citations come from Reddit threads. If your brand isn’t part of the conversation on Reddit, G2, or niche community forums, Perplexity may never surface you.

Google AI Overviews stay closely tied to the traditional organic index: roughly 76.1% of cited URLs rank in the top 10 organic results. That makes traditional SEO still relevant for AIO, but insufficient on its own, because the “summary selection” layer adds additional criteria.

A brand can dominate ChatGPT and be invisible on Perplexity. Research shows only a 25% overlap in brand recommendations between these two platforms. Single-platform tracking creates a false ceiling on your understanding of AI visibility.

How to Find Your Brand’s LLM Citation Blind Spots

Traditional rank tracking is binary: it tells you where your URL sits in a list. AI visibility tracking is multidimensional. It measures whether your brand is recommended, how it’s framed, and which sources the AI uses to validate that recommendation.

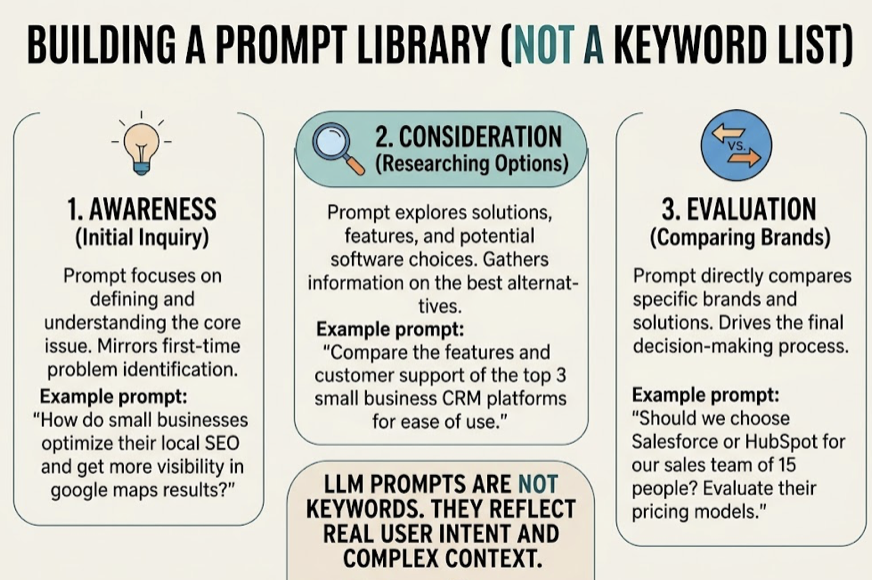

Build a prompt library, not a keyword list. LLM citation audits start with prompts that mirror how real buyers talk to AI. Unlike traditional keyword research, prompt research focuses on intent clusters: awareness prompts (“how to solve X”), consideration prompts (“best tools for Y”), and evaluation prompts (“Brand A vs Brand B”). The average AI prompt exceeds 20 words and contains multiple qualifiers that push the model from explanation to recommendation.

Map your citation sources. Once you’ve got your prompts, the next step is tracking which domains AI cites when it mentions you versus when it mentions a competitor. Topify’s Source Analysis feature lets teams reverse-engineer the specific URLs driving competitor visibility. If ChatGPT consistently cites a G2 review or a Reddit thread to recommend your competitor, that specific domain is a blind spot in your content strategy.

Measure Share of Model Voice. The primary KPI for the generative era is the percentage of AI-generated responses within your category that mention your brand. Unlike SERP share, Share of Model Voice accounts for both the frequency and the context of the mention. A brand recommended as a “reliable leader” carries a higher effective SOMV than one described as a “budget alternative” at the tail end of a list. Topify tracks this across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, combining visibility, sentiment, position, volume, mentions, intent, and CVR into a seven-metric framework.

For teams wanting a quick baseline before committing to a full audit, Topify’s free GEO Score Checker evaluates a site across four dimensions: AI bot access, structured data, content signals, and overall AI visibility. It’s the fastest way to find out whether AI crawlers can even read your site. And the AI Search Volume Checker shows how often specific prompts are searched across AI platforms, so you can prioritize the queries that actually carry demand.

From Invisible to Cited: Closing the LLM Citation Gap

Closing the gap requires a shift from keyword optimization to what practitioners call “entity sculpting,” ensuring that AI models recognize your brand as a definitive entity worth citing. Three pillars drive this.

Restructure content for extractability. AI models don’t read pages. They scrape chunks of text. To get cited, content needs to follow an “answer-first” architecture: state the direct answer in the first 60 words, then layer in context and supporting data. Modular paragraphs of 40-60 words improve the model’s ability to extract information during RAG processing.

Build third-party consensus. LLMs prioritize safety through consensus. They’re more likely to cite brands that appear consistently across multiple high-authority platforms. Brands cited across four or more platforms are 2.8 times more likely to appear in ChatGPT responses than those with a siloed web presence. Optimization needs to extend beyond your own website to include earned media on Reddit, industry review sites like G2, and reputable journalistic outlets.

Implement technical GEO infrastructure. Models and their RAG scrapers often struggle with JavaScript-heavy sites, leading to a 60% reduction in visibility for brands that don’t use server-side rendering. Advanced Schema.org markup, including FAQPage, HowTo, and Person schema, provides the “entity proof” that LLMs need to verify a brand’s credentials.

The execution loop matters as much as the strategy. Topify’s One-Click Execution feature lets teams review AI-generated content improvements, like schema-rich FAQs or data-dense summaries, and deploy them directly. In practice, this closes the gap between identifying a visibility issue and fixing it, which is the stage where most manual GEO efforts stall.

Conclusion

The LLM citation gap isn’t a temporary glitch in AI search. It’s a structural divergence between two different systems of digital authority. Google measures who earned the most links. AI models measure who provides the most useful, verifiable, and extractable information.

For SEO professionals and brand marketers, the goal has shifted from “ranking for clicks” to “being cited for authority.” That means elevating brand search volume, restructuring content for machine extractability, building third-party consensus across the platforms AI trusts, and using automated tools to monitor and maintain visibility across a fragmented landscape. The brands that close this gap now won’t just survive the shift to generative search. They’ll be the ones AI recommends first.

FAQ

Q: What is an LLM citation? A: An LLM citation is a reference that an AI model generates to attribute a specific fact or recommendation to an external source. It’s the primary way brands achieve visibility in AI-generated answers, and it works differently from a traditional backlink because it’s selected through semantic relevance and factual density, not link authority.

Q: Why doesn’t my high Google ranking help me get cited by AI? A: Google’s algorithm prioritizes link-based authority and keyword relevance. LLMs prioritize information gain, extractability, and cross-platform consensus. A high-ranking page may be skipped by an AI model if it lacks unique data, is poorly structured for RAG extraction, or doesn’t exist in the model’s parametric memory.

Q: How can I track whether AI platforms mention my brand?

A: Traditional SEO tools can’t measure this. You’ll need a dedicated AI visibility platform like Topify that monitors mentions, sentiment, citation sources, and share of voice across ChatGPT, Perplexity, Gemini, and other AI platforms. For a free starting point, the GEO Score Checker provides a quick baseline scan.

GEO Score Checker

Q: Does optimizing for LLMs hurt my Google rankings?

A: No. Most GEO strategies, like improving factual density, using clear headings, adding schema markup, and including expert quotations, align with Google’s own E-E-A-T and helpful content guidelines. In practice, brands that optimize for AI citations often see a “halo effect” that improves both traditional and AI visibility simultaneously.