Your domain authority is 70. Your keyword rankings are solid. But none of that tells you whether Perplexity is recommending your competitor instead of you. The gap between traditional SEO performance and AI search visibility has widened to the point where brands with first-page Google rankings can be completely invisible inside the conversational responses of ChatGPT, Gemini, and Claude. With over 21% of search intents now satisfied by AI-generated answers, the question isn’t whether your brand ranks. It’s whether AI cites it.

That’s where LLM citation tracking tools come in. But the market is crowded, the terminology is fuzzy, and most tools measure the wrong thing.

LLM Citations vs. Mentions: Most Tools Track the Wrong Signal

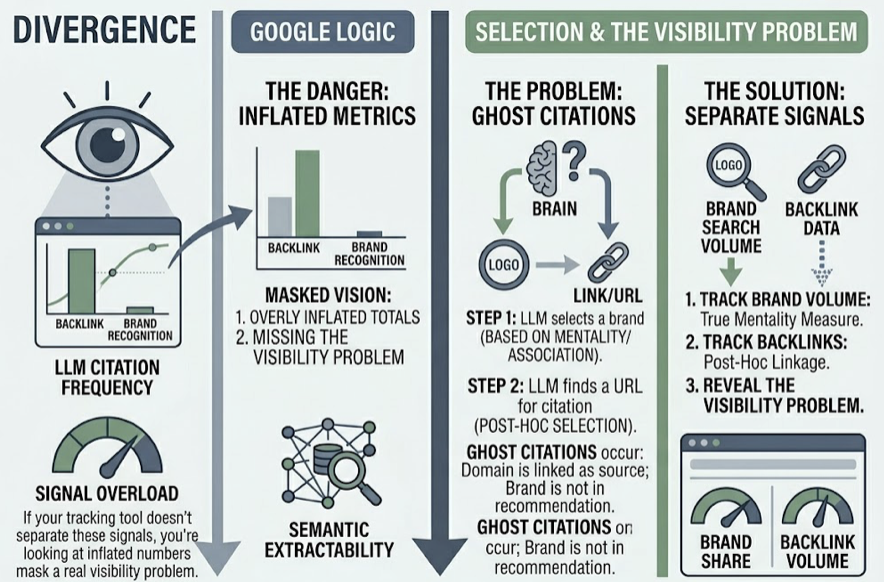

Here’s the distinction that trips up most marketing teams: a brand mention and a citation are two completely different signals. A mention means an LLM includes your brand name in its text. A citation is the formal attribution link, the footnote or source icon that tells the user where the information came from.

Why does this matter? LLMs often engage in what researchers call “post-hoc selection.” The model first picks which brand to recommend based on its training data, then goes looking for a URL to support the claim. This creates “ghost citations,” where your domain gets linked as a source for a factual claim while your brand doesn’t appear in the actual recommendation. If your tracking tool doesn’t separate these two signals, you’re looking at inflated numbers that mask a real visibility problem.
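To make the distinction concrete, here is a minimal sketch of how a tracker might separate the two signals for a single captured answer. The `AIAnswer` structure, the `classify_signal` function, and the sample brand and domain are all hypothetical, and the matching is deliberately naive (plain substring and hostname checks); it is an illustration, not how any tool in this article works.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class AIAnswer:
    """One captured AI response: the answer text plus the URLs it cited."""
    text: str
    cited_urls: list[str]


def classify_signal(answer: AIAnswer, brand: str, domain: str) -> str:
    """Label an answer by which signals it carries for a brand."""
    # Mention: the brand name appears in the answer text (naive substring match).
    mentioned = brand.lower() in answer.text.lower()

    # Citation: any cited URL points at the brand's domain or one of its subdomains.
    def on_domain(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        return host == domain or host.endswith("." + domain)

    cited = any(on_domain(url) for url in answer.cited_urls)

    if mentioned and cited:
        return "mention+citation"
    if cited:
        return "ghost citation"  # linked as a source, but never named
    if mentioned:
        return "mention only"
    return "absent"


# Hypothetical answer: a competitor is recommended, but our page is the footnote.
answer = AIAnswer(
    text="We recommend Acme for this use case.",
    cited_urls=["https://www.example.com/guide"],
)
print(classify_signal(answer, brand="Example Co", domain="example.com"))
```

Counting the four labels separately, rather than rolling them into one "visibility" number, is what keeps ghost citations from inflating the total.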

The fragmentation across platforms makes this worse. Only 11% of domains are cited by both ChatGPT and Perplexity for the same query. Each engine has its own index bias:

| Platform | Primary Source Preference | Top Source Share |
|---|---|---|
| ChatGPT | Wikipedia | 47.9% |
| Perplexity | Reddit | 46.7% |
| Google AI Mode | YouTube | 23.3% |
| Claude | Niche Blogs / Editorial | 43.8% |
| Gemini | Brand-owned Domains | 52.1% |

A strategy built around long-form blog content might earn citations in Claude but fail entirely in Perplexity unless paired with Reddit and community engagement. Tracking only one platform gives you a partial picture at best.
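You can check cross-engine overlap on your own prompt set rather than relying on an industry average. The sketch below assumes overlap is measured as the share of all cited domains that appear in both engines (a Jaccard index); the source does not say how the 11% figure was computed, so treat this definition, the function name, and the sample domains as assumptions.

```python
def citation_overlap(domains_a: set[str], domains_b: set[str]) -> float:
    """Share of all cited domains that both engines cited (Jaccard index)."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return len(domains_a & domains_b) / len(union)


# Hypothetical cited domains for the same prompt on two engines.
chatgpt = {"wikipedia.org", "example.com", "g2.com"}
perplexity = {"reddit.com", "example.com", "youtube.com"}

overlap = citation_overlap(chatgpt, perplexity)
print(f"{overlap:.0%} of cited domains appear in both engines")
```

A low score for your category suggests that wins on one engine will not carry over to the other, which is the practical argument for tracking each platform separately.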

What Separates a Real Citation Tracker from a Dashboard That Just Looks Busy

Not every tool that claims “AI visibility” is actually tracking citations at the source level. Here are the five dimensions that separate professional LLM citation trackers from surface-level dashboards:

Prompt-level depth. Standard SEO tools track keywords. GEO (generative engine optimization) requires prompt-level tracking that mirrors real conversational intent: multi-layered questions that single-term queries can’t replicate.

Source-level decomposition. A professional tracker doesn’t just report that your brand was mentioned. It identifies which specific URLs, whether yours or a third-party review, triggered the citation. This matters because 85% of brand mentions in AI search come from third-party pages, not the brand’s own domain.

Multi-platform coverage. With 91% of AI citations appearing in only one engine, single-platform tracking is a blind spot, not a strategy.

Refresh frequency. AirOps research shows that only 30% of brands maintain visibility from one AI answer to the next, and just 20% survive across five consecutive runs. Monthly snapshots are statistically meaningless in this environment. Daily or on-demand refreshes are the minimum.

Actionability. Data without a path to execution is just noise. The best tools connect citation gaps directly to content strategies you can act on.
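The refresh-frequency point is easier to reason about with a concrete measure of persistence. The sketch below assumes persistence means "the share of brands from the first run that appear in every one of the first N runs of the same prompt"; the research methodology is not described here, so the definition, the function name, and the sample data are illustrative assumptions.

```python
def persistence_rate(runs: list[set[str]], window: int) -> float:
    """Share of brands in the first run that appear in each of the first `window` runs."""
    if window < 1 or len(runs) < window or not runs[0]:
        return 0.0
    # Brands present in every run inside the window.
    survivors = set.intersection(*runs[:window])
    return len(survivors) / len(runs[0])


# Hypothetical brands named in five consecutive runs of one prompt.
runs = [
    {"A", "B", "C", "D", "E"},
    {"A", "B", "F"},
    {"A", "C", "G"},
    {"A", "B"},
    {"A", "H"},
]

print(persistence_rate(runs, window=2))  # survival from one answer to the next
print(persistence_rate(runs, window=5))  # survival across all five runs
```

In this made-up sample, two of five brands survive to the second run and one survives all five. A single monthly snapshot would capture only one of those runs and could not tell the stable brand from the ones that appeared once.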

Quick Comparison: Top LLM Citation Tracking Tools at a Glance

| Tool | Models Tracked | Tracking Depth | Refresh Cadence | Starting Price |
|---|---|---|---|---|
| Topify | ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen | URL-level citations + 7 metrics | Daily / On-demand | $99/mo |
| Ahrefs Brand Radar | ChatGPT, Perplexity, Gemini, AIO | Brand-level SOV | Monthly | $199/mo add-on |
| Semrush AI Visibility | ChatGPT, Gemini, AIO, Claude | Mention vs. citation separation | Weekly | $99/mo add-on |
| AIclicks | 6+ models incl. Grok | Prompt-level sentiment | Real-time | $79/mo |
| Keyword.com | 10+ models incl. Mistral | Full response snapshots | Credit-based on-demand | $24.50/mo |
| Nightwatch | AI Overviews, AI Mode, ChatGPT | Segmented local/engine tracking | API-based | $39/mo add-on |

The table gives you the high-level view. The next few sections dig into what each tool actually delivers.

Topify: Reverse-Engineering Why AI Cites What It Cites

Most citation trackers answer “where.” Topify answers “why.”

Its Source Analysis engine doesn’t just flag that a URL was cited. It decomposes the citation to show which specific content elements, whether a comparison table, a data point, or a paragraph structure, satisfied the LLM’s informational retrieval requirements. For teams trying to close citation gaps, this is the difference between knowing you’re invisible and knowing exactly what to fix.

Topify’s tracking is built around a 7-dimension metric system: Visibility Score (how often AI includes you), Sentiment Quotient (how positively AI frames you, scored 0-100), Relative Positioning (where you land in recommendation lists), AI Search Volume (estimated prompt frequency), Mention Density, Intent Alignment (primary recommendation vs. afterthought), and Attributed CVR (linking AI citations directly to revenue via GA4 or Shopify integration). Early adopters have reported a 12.9x improvement in lead efficiency from AI-referred traffic.

One technical advantage that’s often overlooked: Topify natively tracks the Chinese LLM ecosystem, including DeepSeek, Doubao, and Qwen. Chinese models mention brands at a rate of 88.9% for English-language queries, a 30-point gap compared to Western models. For global brands, ignoring this is a massive blind spot.

The platform also includes a One-Click Execution layer. Once you’ve identified citation gaps, you can translate insights into optimized content strategies without building manual workflows. Pricing starts at $99/mo, which covers 100 tracked prompts and 9,000 AI answer analyses.

The Rest of the Field: How Other LLM Citation Tools Stack Up

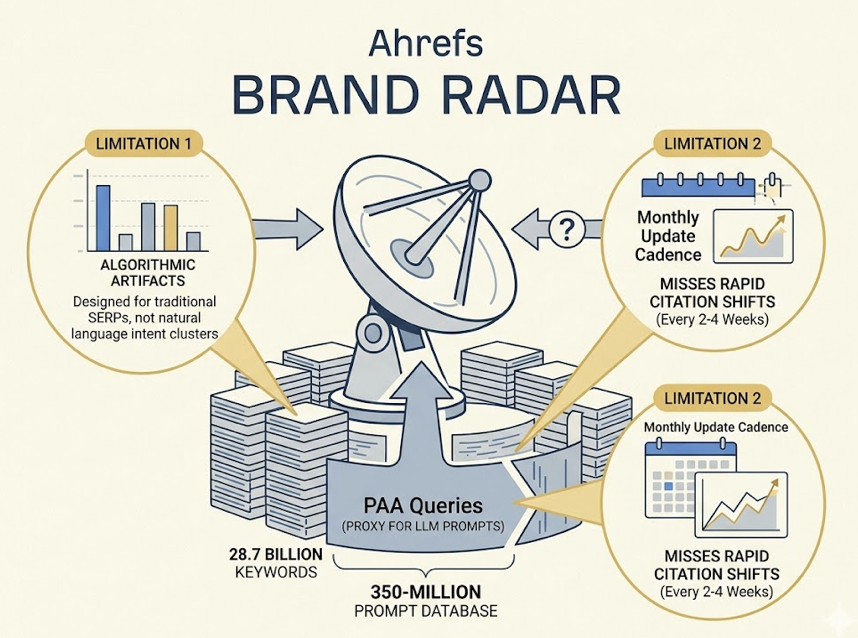

Ahrefs Brand Radar sits on top of 28.7 billion keywords and a 350-million-entry prompt database. The scale is impressive. The limitation is that it relies on “People Also Ask” queries as a proxy for LLM prompts, and PAA questions are algorithmic artifacts designed for traditional SERPs, not the natural language intent clusters that drive ChatGPT conversations. Update cadence tends to be monthly, which misses the rapid citation shifts that happen every 2-4 weeks.

Semrush AI Visibility works well for enterprise teams that want AI tracking inside a broader search suite. Its AI Search Site Audit is a standout, checking whether your robots.txt blocks GPTBot or other LLM crawlers. Citation depth runs lower than GEO-native tools, but competitive benchmarking against 3-5 direct rivals is solid.

AIclicks bridges monitoring and execution. It connects prompt tracking directly to a content generation engine and delivers prioritized action plans each month. Its “Mention-Source Divide” analysis, which flags brands with high mention frequency but low citation authority, is particularly useful for agencies managing multiple accounts. Pricing starts at $79/mo.

Keyword.com is built for teams that need verifiable proof. It logs timestamped, full-response snapshots across platforms, so agencies can show clients exactly when a citation appeared, what the sentiment was, and how it shifted. The Citation Tab provides clear visualizations of which competitor URLs are being referenced. At $24.50/mo, it’s the most budget-friendly entry point.

Nightwatch combines traditional rank tracking with LLM monitoring. If your team needs both classic SERP data and AI citation data in one platform, it’s a practical choice. Segmented tracking by local market and engine is a strong feature for multi-geo brands.
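The crawler check described under Semrush's AI Search Site Audit is something you can approximate yourself before paying for any tool. The sketch below uses Python's standard-library `urllib.robotparser` on a sample robots.txt string. It is not Semrush's implementation; `blocked_crawlers` is a hypothetical helper, and the user-agent names other than GPTBot are examples you should verify against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser


def blocked_crawlers(robots_txt: str, user_agents: list[str], path: str = "/") -> list[str]:
    """Return the user agents that this robots.txt disallows from fetching `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [ua for ua in user_agents if not parser.can_fetch(ua, path)]


# Sample robots.txt that blocks GPTBot but allows every other crawler.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(sample, ["GPTBot", "PerplexityBot", "ClaudeBot"]))
```

To audit a live site, fetch its robots.txt and pass the text to the same function. A crawler that shows up in the result cannot read the pages you are hoping it will cite.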

Choosing the Right Tool When “LLM Citation” Means Five Different Things

The right tool depends on what you’re actually trying to solve.

If you need attribution and full-funnel proof that AI citations drive revenue, Topify’s GA4/Shopify integration and 7-metric system give you the most granular view. AI-cited traffic converts at 12.4-15.9%, roughly 5x higher than traditional organic. Being able to tie that back to specific prompts and source URLs is where the ROI case gets built.

If your team is already deep in the Semrush or Ahrefs ecosystem, their AI add-ons may cover top-of-funnel monitoring. Just be aware of the consensus gap: with 91% of citations appearing in only one engine, you’ll need more specialized tracking if multi-platform dominance is the goal.

If you’re an agency that needs a fast monitor-to-action loop, AIclicks and Keyword.com are strong picks. AIclicks gives you built-in content workflows. Keyword.com gives you the timestamped proof that clients expect in quarterly reviews.

For global brands that need to track visibility in both Western and Chinese AI ecosystems, Topify is currently the only platform with native DeepSeek, Doubao, and Qwen coverage.

Conclusion

The gap between SEO rankings and AI search visibility isn’t closing. It’s widening. Traditional organic CTR has dropped 61% for queries where AI Overviews appear, and the brands recovering that lost value are the ones tracking citations at the source level, not just counting mentions.

LLM citation tracking in 2026 isn’t about having a dashboard. It’s about knowing which specific URLs AI cites, why it cites them, and what you can do to earn the next citation. Start with a baseline audit across ChatGPT, Perplexity, and Gemini. Identify the ghost citations where your domain serves as a footnote but your brand never gets recommended. Then close the gap.

FAQ

Q: What is LLM citation tracking?

A: LLM citation tracking monitors whether AI platforms like ChatGPT, Perplexity, and Gemini formally attribute information to your domain or URLs when generating answers. It’s different from traditional rank tracking, which measures position on a search results page. Citation tracking measures whether AI includes your content as a verified source.

Q: How is an LLM citation different from a brand mention?

A: A mention means the AI names your brand in its text. A citation means the AI links to your URL as a source. You can be cited without being mentioned (ghost citation), or mentioned without being cited. The two signals represent different levels of trust, and tracking only one gives you an incomplete picture.

Q: Which AI platforms should I track LLM citations on?

A: At minimum, ChatGPT, Perplexity, Google AI Overviews, and Gemini. Each platform has different source preferences: ChatGPT favors Wikipedia, Perplexity favors Reddit, and Google AI Mode leans heavily on YouTube. Only 11% of cited domains overlap between ChatGPT and Perplexity, so multi-platform tracking is essential.

Q: How often do LLM citations change?

A: Frequently. Research shows only 30% of brands maintain visibility from one AI answer to the next, and just 20% hold presence across five consecutive runs of the same query. Monthly tracking isn’t enough. Daily or on-demand monitoring is the minimum cadence for actionable data.