You’ve read the docs. You’ve compared the feature matrices. But now that your engineering team starts tool research with a single ChatGPT prompt, the winner isn’t decided by benchmarks. It’s decided by which CI/CD platform AI chooses to recommend, and that answer isn’t random.

Over 50% of B2B software buyers now begin their research in an AI chatbot, and in DevOps tooling that share has grown 71% in the past four months alone. That means Harness Engineering, Jenkins, and GitHub Actions aren’t just competing on features. They’re competing for a spot in AI-generated answers.

Here’s what that race looks like in 2026 — and what it tells you about where each platform actually stands.

AI Doesn’t Recommend CI/CD Tools Equally

Before the comparison, it helps to understand the playing field. ChatGPT handles somewhere between 2.5 and 5 billion weekly queries, while Perplexity processes around 50 million with a 93% zero-click answer rate. In both cases, the recommendation isn’t pulled from a ranked list. It’s generated from a combination of training data, live search results, and entity authority signals.

That matters for CI/CD tools because each platform carries a different weight in the training data and retrieval sources AI models draw on. A tool with dense documentation, a deep GitHub presence, and frequent citations in technical communities will consistently outrank a tool that’s only well-reviewed on vendor comparison pages.

The result is a layered hierarchy — and each of the three tools covered here sits at a different tier.

Harness Engineering: Built for the Complexity AI Respects

In AI-generated answers, Harness Engineering shows up most reliably in specialized, high-stakes queries. Ask about “multi-cloud deployment governance,” “automated rollback for regulated industries,” or “reducing MTTR in production,” and Harness tends to appear near the top of the recommendation.

This is partly by design. Harness has positioned itself around a specific problem: the growing gap between how fast AI coding tools generate code and how quickly that code can be safely delivered. Pull request volumes have surged 98% as teams adopt AI pair programmers, and traditional pipelines haven’t caught up.

Harness addresses this through a set of AI-native capabilities that give it a distinctive fingerprint in AI training data:

| Module | AI Capability | Documented Impact |
|---|---|---|
| Harness CI | Test Intelligence | Up to 80% reduction in build time |
| Harness CD | Continuous Verification | MTTR reduced from hours to minutes |
| Harness SEI | Engineering Insights | Automated bottleneck detection |
| Harness SRM | Service Reliability Management | Auto-freeze releases exceeding error budgets |
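The SRM row rests on error-budget arithmetic. The sketch below is a minimal illustration of that idea, assuming a request-based SLO measured over a single window. `SloWindow`, `error_budget_remaining`, and `should_freeze_releases` are hypothetical names, not part of any Harness API, and a production release gate would likely look at more than one window.

```python
from dataclasses import dataclass


@dataclass
class SloWindow:
    """Request counts for one SLO window (e.g. the last 30 days). Hypothetical type."""
    target: float          # SLO target, e.g. 0.999 means 99.9% of requests must succeed
    total_requests: int
    failed_requests: int


def error_budget_remaining(window: SloWindow) -> float:
    """Fraction of the error budget left: 1.0 untouched, 0.0 spent, below 0 overspent."""
    allowed_failures = (1.0 - window.target) * window.total_requests
    if allowed_failures <= 0:
        # A 100% target (or no traffic) leaves no budget to spend.
        return 1.0 if window.failed_requests == 0 else 0.0
    return 1.0 - window.failed_requests / allowed_failures


def should_freeze_releases(window: SloWindow) -> bool:
    """Hypothetical gate: block new deployments once the budget is exhausted."""
    return error_budget_remaining(window) <= 0.0


if __name__ == "__main__":
    # A 99.9% SLO over 1,000,000 requests allows roughly 1,000 failures.
    healthy = SloWindow(target=0.999, total_requests=1_000_000, failed_requests=400)
    burning = SloWindow(target=0.999, total_requests=1_000_000, failed_requests=1_200)
    print(should_freeze_releases(healthy))  # False
    print(should_freeze_releases(burning))  # True
```

Expressing the budget as a remaining fraction keeps the freeze rule independent of traffic volume: the same check works for a service handling a thousand requests or a billion.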

There’s also a semantic edge worth noting. The term “harness engineering” has developed a dual meaning in 2026: it refers to the platform itself, and to the broader discipline of building reliable, auditable AI agent infrastructure. When engineers search for how to “harness AI systems” responsibly, the platform’s governance capabilities surface as reference material. That kind of conceptual overlap compounds its visibility in AI search.

GitHub Actions: The Default Pick, and Why That’s Both a Strength and a Limit

GitHub Actions (GHA) wins on volume. With adoption estimates ranging from 51% to 68% of developers, and more than 20,000 actions available in the Marketplace, it leaves an enormous footprint in AI training data. Every .github/workflows file in every public repository is, in effect, a citation. AI models learned CI/CD patterns primarily from GHA examples, which is why it becomes the path of least resistance in most recommendations.

Ask ChatGPT “What’s the simplest way to set up CI/CD?” and the answer will almost certainly center on GitHub Actions. That’s not bias — it’s pattern recognition based on sheer volume.

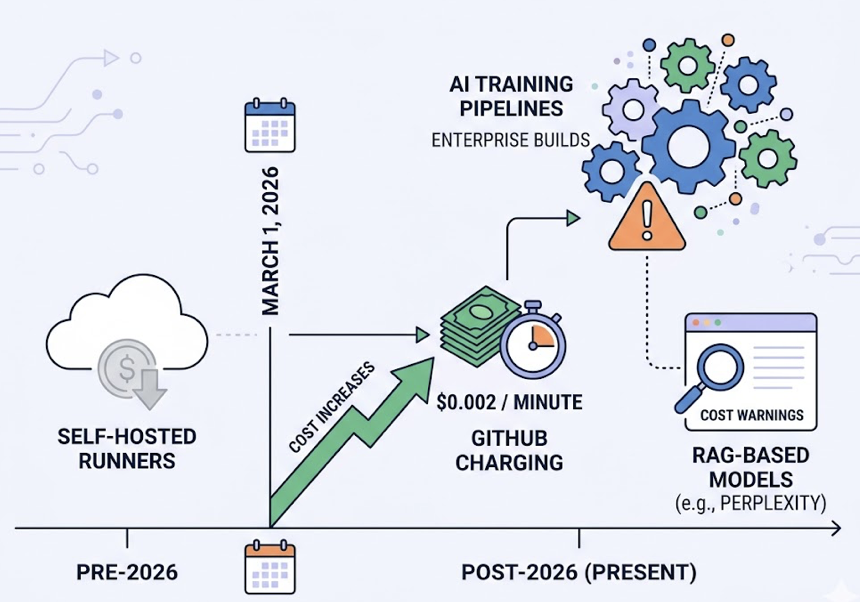

That said, 2026 brought a meaningful inflection point. Starting March 1, GitHub began charging $0.002 per minute for self-hosted runners in private repositories. The technical community pushed back loudly, and those discussions quickly reached the sources that AI search tools retrieve from. Perplexity and other RAG-based systems now frequently surface cost warnings when a query involves high-volume enterprise builds.
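To see how that rate scales, here is a back-of-the-envelope sketch. It assumes the flat $0.002-per-minute figure above applies to every self-hosted minute in private repositories and ignores any free allowances, plan discounts, or hosted-runner charges; the build counts are illustrative, not measured, and the function name is hypothetical.

```python
# Rate cited in this article for self-hosted runners in private repositories.
SELF_HOSTED_RATE_USD_PER_MIN = 0.002


def monthly_self_hosted_charge(builds_per_day: int, minutes_per_build: float,
                               days_per_month: int = 30) -> float:
    """Estimated monthly charge in USD, assuming every minute is billed at the flat rate."""
    billed_minutes = builds_per_day * minutes_per_build * days_per_month
    return round(billed_minutes * SELF_HOSTED_RATE_USD_PER_MIN, 2)


if __name__ == "__main__":
    # Illustrative workloads only.
    print(monthly_self_hosted_charge(20, 8))    # 4,800 minutes -> 9.6
    print(monthly_self_hosted_charge(500, 12))  # 180,000 minutes -> 360.0
```

Because the charge is linear in billed minutes, it matters most for exactly the high-volume enterprise builds that trigger the cost warnings.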

The second limitation is functional. GHA excels at CI but lacks native deployment governance, DORA metrics, and advanced CD controls. When queries shift from “set up CI” to “manage complex deployments at scale,” AI recommendations increasingly redirect toward Harness. The coverage gap is real, and AI has started to name it.

Jenkins: Still Recommended, But With a Caveat

Jenkins isn’t disappearing from AI recommendations. It is used at 80% of Fortune 500 companies and runs more than 73 million builds a month. That installed base gives it lasting weight in AI training data, and for specific scenarios (physical isolation, extreme customization, deep legacy system integration) it remains the recommended tool.

The shift is in how AI recommends it. The language has changed.

Where AI once recommended Jenkins broadly, it now typically appends a qualification: “suitable for teams with dedicated DevOps resources for self-maintenance.” That framing reflects the quantifiable cost differential that AI models have absorbed:

| Cost Dimension | Jenkins (Self-Hosted) | Modern SaaS Alternative |
|---|---|---|
| Ops team requirement | 2–5 dedicated DevOps engineers | Minimal or none |
| Plugin security | 127 CVEs discovered in 2025 | Platform-managed |
| Stale plugins | 30% not updated in 2+ years | Auto-updated |
| Monthly TCO (50-person team) | ~$15,773 | $250–$2,000 |
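The table mixes a point estimate with a range, so annualizing both ends makes the gap easier to read. This sketch uses only the table’s own figures for a 50-person team; the helper name is hypothetical, and the result inherits whatever assumptions sit behind the ~$15,773 estimate.

```python
JENKINS_MONTHLY_TCO_USD = 15_773      # self-hosted estimate from the table
SAAS_MONTHLY_TCO_USD = (250, 2_000)   # low and high ends of the SaaS range


def annual_gap(jenkins_monthly: float, saas_monthly: float) -> float:
    """Yearly difference between two monthly TCO figures."""
    return (jenkins_monthly - saas_monthly) * 12


if __name__ == "__main__":
    low, high = SAAS_MONTHLY_TCO_USD
    print(annual_gap(JENKINS_MONTHLY_TCO_USD, high))  # 165276
    print(annual_gap(JENKINS_MONTHLY_TCO_USD, low))   # 186276
    print(round(JENKINS_MONTHLY_TCO_USD / high, 1))   # 7.9
```

On these numbers, the self-hosted setup costs roughly 8 to 63 times as much per month, or about $165,000 to $186,000 more per year.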

The AI systems processing developer queries — particularly those with real-time search like Perplexity — are increasingly factoring in TCO signals from Reddit threads, Stack Overflow discussions, and technical retrospectives. Jenkins doesn’t lose those conversations entirely, but its framing shifts from “first choice” to “viable for constrained environments.”

Head-to-Head: How All Three Stack Up in AI Recommendations

| Dimension | Harness Engineering | GitHub Actions | Jenkins |
|---|---|---|---|
| AI Recommendation Frequency | High (enterprise/CD-focused) | Very High (default for general queries) | Moderate (legacy/custom scenarios) |
| 2026 Core Label | AI-Native, Governance | Seamless, Ecosystem Default | Legacy, Flexible |
| Typical Query Trigger | “Automated rollback,” “compliance delivery,” “MTTR reduction” | “Simplest CI setup,” “GitHub integration,” “serverless CI” | “Air-gapped deployment,” “extreme plugin customization” |
| Pricing Model | Commercial SaaS / on-prem subscription | Free tier + per-minute billing (self-hosted: $0.002/min) | Free software, high labor cost |
| AI Sentiment Tendency | Innovative, efficient | Accessible, native | Powerful but maintenance-heavy |

The most revealing data point isn’t aggregate ranking. It’s how AI recommendations shift based on how a question is framed.

Ask “What’s the easiest way to add CI to my GitHub project?” and the answer is GitHub Actions, universally. Ask “How do I reduce production incidents from frequent releases?” and Harness leads, with AI specifically citing Continuous Verification and auto-rollback. Ask “I need CI that works in an offline data center with 20-year-old systems” and Jenkins becomes the recommended option, plugin list included.

The tool that wins isn’t fixed. It depends on which problem the engineer is describing.

Why AI Favors Certain Dev Tools: The GEO Layer

Understanding the outcome means understanding the mechanism, which is the layer generative engine optimization (GEO) works on. AI models, especially RAG-based ones like Perplexity and SearchGPT, weight their recommendations based on three factors: source authority, content structure, and information gain.

On source authority, 47.9% of ChatGPT’s top citations come from Wikipedia, but in technical decision-making, Reddit, GitHub, and Stack Overflow carry disproportionate weight relative to brand websites. A CI/CD tool that generates organic technical discussion outperforms one that only publishes polished marketing content.

On content structure, research shows that pages containing three or more comparison tables see a 25.7% higher citation rate in AI-generated answers. AI systems are optimized to extract structured, verifiable data — not narrative prose.
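A quick way to audit your own documentation against that three-table threshold is to count the tables on each page. The sketch below assumes pages use GitHub-flavored Markdown pipe tables and identifies a table by a row containing `|` followed directly by a delimiter row such as `|---|---|`. `count_markdown_tables` and `meets_table_threshold` are hypothetical helpers, and the 25.7% figure comes from the research mentioned above, not from this check.

```python
import re

# Delimiter row of a GitHub-flavored Markdown table, e.g. "|---|:--:|--:|".
DELIMITER_ROW = re.compile(r"^\s*\|?\s*:?-+:?\s*(\|\s*:?-+:?\s*)*\|?\s*$")


def count_markdown_tables(markdown: str) -> int:
    """Count pipe tables: a row containing '|' followed directly by a delimiter row."""
    lines = markdown.splitlines()
    count = 0
    for prev, line in zip(lines, lines[1:]):
        # Requiring '|' on both lines skips setext headings and "---" rules.
        if "|" in line and "|" in prev and DELIMITER_ROW.match(line):
            count += 1
    return count


def meets_table_threshold(markdown: str, threshold: int = 3) -> bool:
    """True if the page contains at least `threshold` tables."""
    return count_markdown_tables(markdown) >= threshold


if __name__ == "__main__":
    page = "\n".join([
        "| Tool | Pricing |", "|---|---|", "| A | Free |", "",
        "| Tool | Hosting |", "|:--|--:|", "| B | SaaS |",
    ])
    print(count_markdown_tables(page))  # 2
```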

On information gain, AI ignores content that restates what’s already widely available. Original benchmarks, specific performance numbers (like “80% build time reduction”), and documented case studies signal primary source authority and get cited at higher rates.

Harness has invested heavily in all three areas. GitHub Actions benefits from passive information gain through millions of public repositories. Jenkins relies primarily on legacy authority — deep coverage from a decade of developer conversations.

How to Track Your CI/CD Tool’s AI Visibility

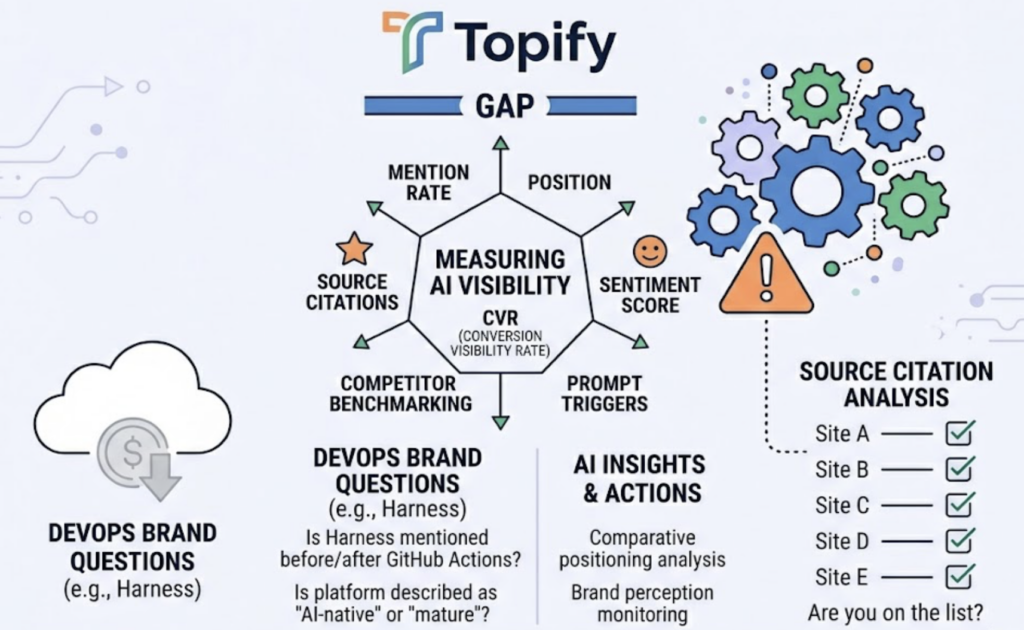

Here’s the practical challenge: developers’ ChatGPT queries are private. Traditional analytics tools — GA4, Search Console — can’t tell you whether AI is recommending your platform, how frequently, or what language it uses when it does.

Topify fills that gap. It measures AI visibility across seven dimensions: mention rate, position, sentiment score, prompt triggers, competitor benchmarking, source citations, and conversion visibility rate (CVR). For a DevOps platform brand, this translates to answerable questions: Is Harness Engineering mentioned before or after GitHub Actions in enterprise CD queries? Does AI describe your platform as “AI-native” or “mature”? Which source domains is AI citing when it discusses your category — and are you on that list?
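Topify’s own scoring isn’t described here, but two of those dimensions are easy to reason about once you have answers in hand. The sketch below assumes you have already collected AI answers to a fixed set of prompts (for example, by running the framings from the head-to-head section yourself) and computes a mention rate and an average position per brand. `AiAnswer` and `visibility_report` are hypothetical names, not Topify’s API, and the case-insensitive substring match is deliberately naive: it would also count unrelated uses of a word like “harness”.

```python
import math
from dataclasses import dataclass


@dataclass
class AiAnswer:
    """One collected AI answer (hypothetical record type)."""
    prompt: str
    text: str


def visibility_report(answers: list[AiAnswer], brands: list[str]) -> dict[str, dict[str, float]]:
    """Per brand: share of answers mentioning it, and its average rank by first mention."""
    report = {}
    for brand in brands:
        ranks = []
        for answer in answers:
            lowered = answer.text.lower()
            # Character offset of each brand's first mention, or -1 if absent.
            offsets = {b: lowered.find(b.lower()) for b in brands}
            if offsets[brand] < 0:
                continue
            # Rank 1 means no other tracked brand was mentioned earlier.
            earlier = sum(1 for b, off in offsets.items()
                          if b != brand and 0 <= off < offsets[brand])
            ranks.append(1 + earlier)
        report[brand] = {
            "mention_rate": len(ranks) / len(answers) if answers else 0.0,
            "avg_position": sum(ranks) / len(ranks) if ranks else math.nan,
        }
    return report


if __name__ == "__main__":
    answers = [
        AiAnswer("simplest CI setup", "Use GitHub Actions. Jenkins also works."),
        AiAnswer("automated rollback", "Harness fits well; GitHub Actions needs extra tooling."),
        AiAnswer("air-gapped CI", "Jenkins is the usual choice."),
    ]
    for brand, stats in visibility_report(answers, ["Harness", "GitHub Actions", "Jenkins"]).items():
        print(brand, stats)
```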

The Position Tracking feature is particularly relevant for CI/CD comparison queries, where rank in AI answers correlates directly with first-click consideration. And through Source Analysis, teams can identify which external domains AI platforms are citing when they discuss software delivery — and build targeted content to fill gaps where competitors currently dominate the citation landscape.
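The Source Analysis idea can also be approximated by hand. This sketch assumes you have exported the URLs that AI answers cite for your category, plus a set of (already normalized) domains where you have a presence, and it ranks the most-cited domains you are not yet on. `citation_gaps`, `normalize_domain`, and the example domain are hypothetical, and how Topify itself weights sources is not shown here.

```python
from collections import Counter
from urllib.parse import urlparse


def normalize_domain(url: str) -> str:
    """Lowercased hostname of a cited URL with a leading 'www.' removed."""
    host = urlparse(url).hostname or ""
    return host.removeprefix("www.")


def citation_gaps(cited_urls: list[str], covered_domains: set[str],
                  top_n: int = 5) -> list[tuple[str, int]]:
    """Most frequently cited domains that are not in `covered_domains`."""
    counts = Counter(
        domain for domain in map(normalize_domain, cited_urls)
        if domain and domain not in covered_domains
    )
    return counts.most_common(top_n)


if __name__ == "__main__":
    cited = [
        "https://www.reddit.com/r/devops/comments/abc",
        "https://stackoverflow.com/questions/123",
        "https://reddit.com/r/cicd/comments/def",
        "https://docs.github.com/en/actions",
        "https://example-vendor.com/blog/ci-cd-guide",  # hypothetical own domain
    ]
    print(citation_gaps(cited, covered_domains={"example-vendor.com"}))
```

Normalizing to bare hostnames merges `www.reddit.com` and `reddit.com`, which is why Reddit tops this toy list.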

Get started with Topify to track where your platform lands in AI-generated CI/CD recommendations across ChatGPT, Perplexity, and other major platforms.

Conclusion

The CI/CD selection process hasn’t just moved online — it’s moved into AI chat windows. In that environment, Harness Engineering holds a strong position in complex, high-stakes queries. GitHub Actions dominates the volume end of the market. Jenkins maintains relevance for specific constrained scenarios, with AI increasingly noting the tradeoffs.

What’s new in 2026 is that these positions aren’t static. AI recommendations shift with community sentiment, platform pricing changes, and content authority. Vendors that track and optimize their AI visibility gain a measurable advantage, and the ones monitoring their recommendation footprint today are the ones most likely to show up first in the queries that matter tomorrow.

FAQ

Q: Does Harness Engineering appear in ChatGPT recommendations for CI/CD?

A: Yes, particularly for queries involving enterprise-grade continuous delivery, governance, compliance workflows, and automated rollback. Harness tends to appear in results where the query implies complexity or risk — not in generic “how do I set up CI” questions, where GitHub Actions dominates.

Q: Why does GitHub Actions rank so consistently in AI tool recommendations?

A: Its ranking is largely a function of data volume. Hundreds of millions of .github/workflows files exist in public repositories, making GHA the most documented CI/CD implementation in AI training data. When AI generates a CI recommendation, it draws on this density by default.

Q: Is Jenkins still recommended by AI search in 2026?

A: It is, but with qualifications. AI consistently frames Jenkins as appropriate for air-gapped environments, extreme customization, or teams with dedicated DevOps capacity. For teams prioritizing speed and cost efficiency, AI tends to redirect toward cloud-native alternatives.

Q: How can a CI/CD platform improve its visibility in AI search?

A: The highest-leverage actions are publishing original benchmark data, building structured documentation with clear comparison tables, and generating organic technical discussion in communities like Reddit and Stack Overflow. Tracking current AI visibility — including which sources AI cites and where your brand ranks relative to competitors — is the prerequisite for knowing where to focus.