A prompt-by-prompt breakdown of how DevOps tools get discovered, recommended, and ranked across ChatGPT, Perplexity, and Gemini — and what it means for any brand competing in AI search.

Your engineering team might evaluate Harness every day. But when someone asks ChatGPT “what’s the best CI/CD platform for a fintech company,” does Harness show up? In what position? With what kind of description?

Those answers now shape buyer perception before a single sales call happens.

That’s the new reality of DevOps tool discovery. AI search engines have inserted themselves between the problem and the product. And for brands like Harness, understanding this layer isn’t a marketing exercise — it’s a revenue question.

The Discovery Layer Your Team Isn’t Tracking

The traditional DevOps tool selection journey was predictable: someone searches Google, reads a comparison article, checks G2, then gets on a demo call. That loop is breaking down.

According to the 2025 Stack Overflow Developer Survey, 75.9% of developers now rely on AI for professional tasks, with searching for answers being the top use case for 55.8% of respondents. Engineers aren’t starting with Google anymore. They’re starting with a prompt.

And when an AI Overview or a generative summary is present in search results, organic click-through rates can collapse by over 50% — dropping from a baseline of 1.41% to 0.64%. The AI answers the question. The link never gets clicked.

For DevOps vendors, this means a Visibility Score in AI platforms is fast becoming as important as a first-page ranking.

The Prompts That Actually Trigger Harness Recommendations

Not all prompts are equal. Whether an AI engine retrieves Harness, and how high it ranks, depends heavily on how the question is framed.

Category prompts — “What are the best CI/CD platforms for enterprises in 2025?” — pull from broad industry consensus. Harness typically appears, but often in the 3rd or 4th position behind GitHub Actions and GitLab. The AI cites its enterprise-grade automation and governance layer as the value driver.

Problem prompts are where Harness punches above its weight. When a developer types “how do I reduce deployment failures in Kubernetes” or “what tool handles automated rollbacks with canary releases,” Harness frequently surfaces as a top-two recommendation. AI engines tie it directly to Continuous Verification (CV) — the feature that monitors post-deployment anomalies and triggers rollbacks automatically.

The most cited customer metric: an 80% reduction in build times via Harness Test Intelligence, driven by call-graph analysis that skips irrelevant tests in monorepo environments. RisingWave Labs reported 50% faster builds; Qrvey saw an 8x reduction. These specific numbers appear repeatedly across AI-generated summaries because they’ve reached consensus across enough independent sources.
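To make the call-graph idea concrete, here is a minimal Python sketch of change-based test selection. It is not Harness's implementation: the function name `select_tests`, the shape of the graph, and the module names in the example are all hypothetical. It only illustrates the general technique of skipping tests that cannot reach the changed code.

```python
from collections import defaultdict, deque


def select_tests(call_graph: dict, tests: set, changed: set) -> set:
    """Return the tests that can reach any changed symbol.

    call_graph maps a caller name to the set of names it calls.
    All names here are illustrative, not a real tool's data model.
    """
    # Invert the edges so we can walk from changed code back to its callers.
    callers = defaultdict(set)
    for caller, callees in call_graph.items():
        for callee in callees:
            callers[callee].add(caller)

    # Breadth-first search outward from the changed symbols.
    seen = set(changed)
    queue = deque(changed)
    while queue:
        node = queue.popleft()
        for caller in callers[node]:
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)

    # Only tests that transitively depend on a change need to run.
    return seen & tests


# Hypothetical monorepo: a pricing change affects checkout but not login.
graph = {
    "test_checkout": {"cart.total"},
    "test_login": {"auth.verify"},
    "cart.total": {"pricing.tax"},
}
to_run = select_tests(graph, {"test_checkout", "test_login"}, {"pricing.tax"})
```

In this toy graph only `test_checkout` is selected, which is the kind of skipping that would account for shorter builds in a large monorepo.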

Comparison prompts (“Harness vs GitHub Actions,” “ArgoCD vs Harness”) reveal how AI constructs trade-off narratives. The consistent pattern: AI describes GitHub Actions as easier to start but “requiring a decent amount of scripting,” framing that as toil. Harness gets positioned as the more automated choice for complex deployment strategies — canary, blue-green, or multi-environment. That framing didn’t come from Harness’s marketing. It came from hundreds of blog posts, Reddit threads, and G2 reviews that the AI has absorbed and synthesized.

Where Harness Shows Up — and Where It Doesn’t

Harness has three areas of dominant AI visibility.

Continuous Delivery is its strongest signal. AI models consistently describe Harness as “the CD specialist” — a tool built specifically for complex, high-risk production environments. Continuous Verification and automated rollback are the features cited most often.

Cloud cost management is the second stronghold. When queries involve FinOps or controlling AWS/GCP spend tied to delivery decisions, Harness regularly earns a top-tier recommendation. The framing is almost always around connecting infrastructure cost directly to deployment outcomes — a positioning that smaller or more generic tools can’t match.

AI-powered DevOps is the third. In prompts specifically asking about ML-based pipelines or “AI-native” CI/CD, Harness often ranks first or second, ahead of GitHub and GitLab.

The gaps are equally telling.

For prompts with a simplicity or startup intent, GitHub Actions is the overwhelming default. AI models note Harness’s “steeper learning curve,” which functions as a soft disqualifier for teams that don’t need enterprise-grade governance.

Pure GitOps queries often favor ArgoCD directly, even though Harness GitOps is built on top of it. The AI understands ArgoCD as the “focused, powerful GitOps tool” and Harness as the management layer on top — a positioning gap that may cost Harness direct retrieval in this segment.

Security-specific queries (SAST/DAST, AI-enhanced security scanning) tend to surface Snyk or GitHub Advanced Security ahead of Harness’s Security Testing Orchestration module. Entity salience in this domain is lower than in CD, and that gap is measurable.

What AI Says When It Does Recommend Harness

The sentiment dimension is important — and nuanced.

Across ChatGPT, Perplexity, Gemini, and Claude, Harness earns a Sentiment Score of 85–92 out of 100 (per Topify’s metric). The tone is consistently “positive-professional”: authoritative, technical, and scale-oriented. AI engines don’t describe Harness with the casual warmth they reserve for community-first tools like GitHub. The language is closer to “governed,” “reliable,” and “enterprise-grade.”

That’s a strategic asset for procurement conversations. But it also comes with a recurring neutral caveat. AI models frequently note that Harness “can be more expensive” or “requires enterprise-level needs to justify the complexity.” The AI is performing trade-off analysis, not just brand promotion — and that objectivity shapes how buyers process the recommendation.

On position: the brand that gets named first in an AI response captures approximately 70% of cognitive attention from the user, based on Position-Adjusted Word Count analysis. Harness sits at #1 or #2 in specialized queries — automated deployment verification, cloud cost tracking, canary releases. In broad “best CI/CD” prompts, it’s typically #3 or #4. That gap matters for raw discovery volume, even if the specialized rankings drive higher-intent engagement.
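For readers who want to see how a position-weighted metric might work, the sketch below scores each brand by the word count of the sentences that mention it, discounted by how late the sentence appears. The exponential decay and the function name `position_adjusted_share` are assumptions made for illustration. This is not Topify's formula, and it does not reproduce the 70% figure.

```python
import math
import re


def position_adjusted_share(response: str, brands: list) -> dict:
    """Share of position-weighted words attributed to each brand."""
    # Naive sentence split on terminal punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", response.strip()) if s]
    scores = {brand: 0.0 for brand in brands}

    for idx, sentence in enumerate(sentences):
        # Assumed weighting: earlier sentences count more than later ones.
        weight = math.exp(-idx / len(sentences))
        word_count = len(sentence.split())
        lowered = sentence.lower()
        for brand in brands:
            if brand.lower() in lowered:
                scores[brand] += word_count * weight

    total = sum(scores.values())
    return {b: (s / total if total else 0.0) for b, s in scores.items()}


shares = position_adjusted_share(
    "GitHub Actions is the default choice for most teams. "
    "Harness suits complex deployments.",
    ["GitHub Actions", "Harness"],
)
```

A brand named first and described at length ends up with the larger share, which is the intuition behind tracking position as well as raw mentions.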

The Competitive Pressure Harness Faces in AI Search

The DevOps AI landscape has a clear hierarchy right now.

| Brand | AI Visibility Persona | Visibility Score (Topify Index) |
|---|---|---|
| GitHub Actions | The Default Engine | 94/100 |
| GitLab | The Integrated Suite | 88/100 |
| ArgoCD | The GitOps Standard | 82/100 |
| Harness | The Smart Orchestrator | 76/100 |
| CircleCI | The Speed Specialist | 71/100 |

GitHub’s structural advantage is hard to close: AI models were trained on billions of YAML pipeline configurations hosted on GitHub. When someone asks how to build a CI/CD pipeline, GitHub Actions gets cited by default — not because it’s always the right answer, but because it’s the most represented answer in the AI’s training data.

That’s a community advantage, not a product advantage. And it’s also the challenge Harness faces: high sentiment, high technical authority, but a lower raw mention rate than tools with deeper community-generated content.

What Actually Drives AI Visibility for DevOps Tools

Third-party sources account for roughly 75% of what AI engines “know” about Harness. The breakdown, per Topify’s Source Analysis:

- Reddit and Hacker News: ~30% of citations (real-world evidence, war stories, honest comparisons)

- G2, Gartner, and analyst content: ~25% (benchmark data, feature comparisons)

- Technical blogs and tutorials: ~20% (instructional footprint)

- Official documentation and site content: ~25% (fact verification)
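A breakdown like the one above can be approximated from any list of cited URLs. The following is a rough sketch, not Topify's Source Analysis: the domain-to-category mapping is a small hypothetical sample, and anything unmapped falls into a catch-all bucket.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative mapping only; a real audit would need a much larger table.
CATEGORY_BY_DOMAIN = {
    "reddit.com": "community",
    "news.ycombinator.com": "community",
    "g2.com": "analyst",
    "gartner.com": "analyst",
    "harness.io": "official",
}


def citation_shares(urls: list) -> dict:
    """Return the fraction of citations that fall into each source category."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower().removeprefix("www.")
        counts[CATEGORY_BY_DOMAIN.get(host, "blog_or_other")] += 1
    total = sum(counts.values())
    return {cat: n / total for cat, n in counts.items()} if total else {}


sample = citation_shares([
    "https://www.reddit.com/r/devops/example-thread",
    "https://news.ycombinator.com/item?id=1",
    "https://www.g2.com/products/example",
    "https://example.com/blog/ci-cd-comparison",
])
```

With this sample input, half of the citations come from community sources, and none come from the vendor's own site.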

The bottom line: you don’t own your AI visibility. Your community does.

This has direct implications for content strategy. AI engines look for what’s called “Extraction-Ready” content — structured blocks where the first 40–50 words of a section are a self-contained, quotable summary. Long-form posts that bury conclusions don’t get surfaced. Sections that lead with a clear technical claim followed by supporting data do.
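One way to audit a page against that rule is a simple heuristic check on each section's opening paragraph. The sketch below is an assumption-laden illustration: the 50-word budget comes from the range above, while the "dangling opener" test (does the paragraph start with a pronoun that depends on earlier text?) is a crude stand-in for "self-contained". The name `audit_opening` is hypothetical.

```python
import re

# Openers that usually point back at earlier text, so the paragraph
# cannot be quoted on its own. A deliberately small, heuristic list.
DANGLING_OPENERS = {"this", "that", "it", "these", "those", "they"}


def audit_opening(section_body: str, max_words: int = 50) -> dict:
    """Check whether a section's first paragraph looks quotable in isolation."""
    first_paragraph = section_body.strip().split("\n\n", 1)[0]
    words = first_paragraph.split()
    # Normalize the first word: lowercase, cut contractions, drop punctuation.
    first_word = (
        re.split(r"['’]", words[0].lower())[0].strip(".,:;\"“”") if words else ""
    )
    return {
        "word_count": len(words),
        "fits_budget": 0 < len(words) <= max_words,
        "self_contained_opener": bool(words) and first_word not in DANGLING_OPENERS,
    }


good = audit_opening("Harness automates canary rollbacks.\n\nMore detail follows.")
weak = audit_opening("That's why it matters.")
```

A check like this will not judge whether the summary is accurate or quotable. It only flags sections whose opening is too long or leans on context the AI may not extract.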

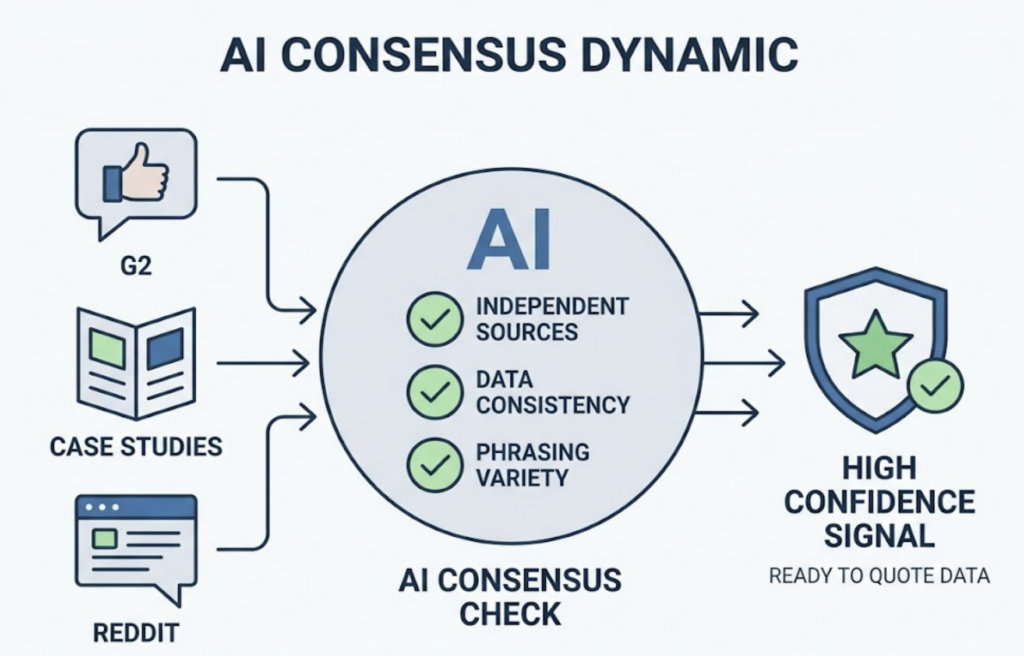

There’s also a consensus dynamic at play. If Harness claims a “67% reduction in MTTD,” the AI checks whether that figure appears independently across G2, case studies, and Reddit. Consistency across at least five independent sources creates a high-confidence signal that the AI will quote the number in its recommendations. Variety in phrasing matters too — identical copy across sources triggers AI coordination filters.
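The consensus idea can be expressed as a simple count of distinct sources that repeat a figure. The sketch below assumes you have already collected text snippets per source; the function name `has_consensus`, the source labels, and the exact-substring match are all simplifications for illustration, and the five-source threshold is taken from the paragraph above.

```python
def has_consensus(claim_value: str, mentions: dict, min_sources: int = 5) -> bool:
    """Return True if enough distinct sources repeat the claimed figure.

    mentions maps a source label to a list of text snippets from that source.
    """
    supporting = {
        source
        for source, snippets in mentions.items()
        if any(claim_value in snippet for snippet in snippets)
    }
    return len(supporting) >= min_sources


# Hypothetical snippets; phrasing varies but the figure is the same.
collected = {
    "g2_review": ["We saw a 67% reduction in MTTD after rollout."],
    "case_study": ["Detection time fell, a 67% reduction in MTTD overall."],
    "reddit_thread": ["Honestly the 67% reduction in MTTD matched our numbers."],
    "tech_blog": ["Pipeline setup notes, no metrics quoted."],
}
enough = has_consensus("67% reduction in MTTD", collected)
```

Here only three sources repeat the figure, so the check fails until two more independent sources report it.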

Tools like Topify make this auditable. Its Visibility Tracking and Source Analysis features map exactly which domains AI platforms are pulling from when they recommend a tool — and where the gaps are. For a platform like Harness, seeing that 30% of AI retrieval comes from Reddit means that community content strategy is a core part of AI visibility, not a side project.

“Harness Engineering” as a Brand Signal — and an Opportunity

There’s a dimension of Harness’s AI visibility that doesn’t exist for any of its competitors.

“Harness Engineering” has emerged as a technical term in AI systems design, coined by practitioners including Martin Fowler and Birgitta Böckeler. The concept defines the system of guides (feedforward controls) and sensors (feedback controls) that surround an AI agent to make it reliable and controllable. The formula: Agent = Model + Harness.

This creates something unusual: an organic conceptual bridge between the brand “Harness” and the ideas of control, reliability, and automated feedback loops — exactly the traits the platform’s product suite is built around.

AI models that encounter content on “Harness Engineering” as an architectural discipline may reinforce their association of the brand with those properties. It’s not a guaranteed effect. But it’s a rare case where a company name shares semantic space with a high-growth technical concept, and that’s worth building on.

DORA 2025 research reinforces this angle: AI doesn’t fix a team; it amplifies what’s already there. A high-quality internal platform is the prerequisite for unlocking AI value in software delivery. Harness’s positioning as the “harness” that prevents agentic chaos in SDLC pipelines is a content narrative with both technical credibility and future-facing relevance.

How to Apply This to Your Own DevOps Brand

If you’re in the DevOps space — whether you’re competing with Harness or trying to understand where your own tool stands — the framework is straightforward.

Start by building a prompt matrix. Not a keyword list. A set of 25–100 context-rich questions that simulate how your buyers actually talk to AI: persona-specific, problem-specific, and comparison-specific. Topify’s High-Value Prompt Discovery feature surfaces exactly what your target audience is asking AI platforms right now, across ChatGPT, Perplexity, Gemini, and AI Overviews.
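If you want to draft a first matrix by hand before using any tool, a few lines of Python are enough. This sketch is independent of Topify: the templates, personas, and problems are made-up examples, and `build_prompt_matrix` is a hypothetical helper.

```python
from itertools import product


def build_prompt_matrix(personas, problems, competitors, brand):
    """Combine personas, problems, and comparisons into a list of prompts."""
    prompts = []
    # Persona-specific and problem-specific prompts.
    for persona, problem in product(personas, problems):
        prompts.append(f"As a {persona}, what tool should I use to {problem}?")
    # Comparison-specific prompts.
    for competitor in competitors:
        prompts.append(f"{brand} vs {competitor}: which is better for enterprise CI/CD?")
    return prompts


matrix = build_prompt_matrix(
    personas=["platform engineer at a fintech", "startup CTO"],
    problems=["reduce deployment failures in Kubernetes", "automate canary rollbacks"],
    competitors=["GitHub Actions", "ArgoCD"],
    brand="Harness",
)
```

Two personas, two problems, and two competitors already yield six prompts, so reaching the 25 to 100 range takes only a handful of entries per list.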

Then run a baseline visibility audit. Where does your brand appear? Where are competitors named instead of you? What position do you hold, and what sentiment does the AI assign? Topify’s Dynamic Competitor Benchmarking turns this into a heatmap — a clear view of where you’re functionally invisible despite having a strong product.
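The position part of that audit can be prototyped with plain string matching. The sketch below ranks brands by where they are first mentioned in a saved AI response. It assumes you have the response text already, and `brand_positions` is a hypothetical helper rather than part of any product.

```python
from typing import Dict, List, Optional


def brand_positions(response: str, brands: List[str]) -> Dict[str, Optional[int]]:
    """Rank brands by order of first mention; None means not mentioned."""
    lowered = response.lower()
    first_seen = {brand: lowered.find(brand.lower()) for brand in brands}

    # Keep only brands that appear, ordered by their first character offset.
    ranked = sorted(
        (brand for brand in brands if first_seen[brand] >= 0),
        key=lambda brand: first_seen[brand],
    )

    positions: Dict[str, Optional[int]] = {brand: None for brand in brands}
    for rank, brand in enumerate(ranked, start=1):
        positions[brand] = rank
    return positions


result = brand_positions(
    "Top picks: GitHub Actions, GitLab, and Harness.",
    ["Harness", "GitHub Actions", "GitLab", "CircleCI"],
)
```

Running this across the full prompt matrix and averaging each brand's position gives a rough baseline. Substring matching will miss misspellings and abbreviations, so treat the output as a starting point.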

Finally, close the gaps with extraction-ready content. Restructure key pages so the first paragraph of every H2 section is a self-contained, quotable summary. Build community presence in the sources AI already trusts: Reddit threads, technical tutorials, G2 reviews. Ensure that your core metrics show up consistently across independent sources — that’s what creates the consensus signals AI engines rely on.

The Harness case illustrates both the opportunity and the challenge: strong sentiment and specialized authority don’t automatically translate to raw mention volume. Closing that gap requires a deliberate, data-driven approach to AI visibility — not a content refresh, but a structural strategy.

Conclusion

Harness enters 2026 as one of the most technically respected DevOps platforms in AI search. Its Sentiment Score is strong, its specialized visibility is dominant, and its brand name has an unusual conceptual alignment with a growing discipline in AI systems design.

The work ahead is volume. Expanding from specialist recommendation to broader category presence requires more community-generated content, more extraction-ready structured pages, and a tighter feedback loop between what AI platforms are actually citing and what the content team produces.

For any DevOps brand watching this space, the Harness example is useful precisely because the gaps are visible. AI visibility is measurable. The prompts, positions, sources, and sentiment can all be tracked. The brands that figure this out first won’t just be recommended by AI — they’ll be the ones AI reaches for by default.

FAQ

What is AI visibility for DevOps tools?

AI visibility measures how often, how accurately, and how favorably an AI search engine like ChatGPT or Perplexity represents a brand in its synthesized responses. It’s distinct from SEO because it focuses on a model’s structural understanding, not a website’s ranking on a list of links.

How often does Harness appear in AI recommendations?

Harness typically ranks #1 or #2 for specialized queries around automated deployment verification, canary releases, and cloud cost management. On broad CI/CD queries, it typically ranks #3 or #4, behind GitHub Actions and GitLab.

Which AI platforms matter most for DevOps tool discovery?

The primary platforms are ChatGPT (mass-market discovery), Perplexity (deep research and source-checking), Gemini (integrated Google ecosystem), and Google AI Overviews (traditional search displacement). Each weights sources differently, so visibility across all four matters.

Can smaller DevOps brands compete with Harness in AI search?

Yes. Smaller tools can win by targeting extremely specific long-tail prompts — what’s sometimes called zero-volume keywords — and building consensus across a tighter set of authoritative sources. Becoming the default recommendation for one specific technical problem is more achievable, and more valuable, than broad category visibility.