Your engineers are shipping more code than ever. AI tools have turbocharged the “inner loop”—writing, reviewing, debugging—by up to 4x. But the moment that code reaches the pipeline, everything slows down. Build queues back up. Tests run for hours. Someone has to manually verify the deployment didn’t break production.

The bottleneck isn’t talent. It’s the delivery infrastructure those engineers are stuck with.

That’s the problem Harness CI/CD was built to solve.

The Hidden Costs That Traditional CI/CD Tools Never Show You

Most engineering teams inherit their CI/CD setup rather than choose it. Jenkins gets installed, plugins get added, Groovy scripts pile up, and three years later, two to five full-time engineers are doing nothing but keeping the delivery infrastructure alive.

This is what practitioners call the “toil tax.” It’s not just about money. It’s about cognitive capacity being consumed by the wrong problems.

The 2024 DORA report puts a name to the outcome: developer burnout. Inconsistent priorities and high maintenance burdens don’t just slow teams down—they erode the conditions that make good engineering possible. When your senior DevOps engineer is the only one who understands why a pipeline fails at 2am, that’s not a scalable system.

The DORA metrics tell the same story quantitatively. In low-performing organizations, deployment frequency drops to monthly or quarterly cycles. Lead time for changes stretches into months. Change failure rates climb, and Mean Time to Recovery (MTTR) can stretch from minutes into weeks.
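To make the four metrics concrete, here is a minimal sketch of how they could be computed from a list of deployment records. The `Deployment` record, its field names, and the `dora_metrics` function are assumptions made for illustration; they are not part of any DORA or Harness tooling.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape, for illustration only.
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None


def dora_metrics(deployments, window_days):
    """Summarize the four DORA metrics over a reporting window."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_seconds = [
        (d.deployed_at - d.committed_at).total_seconds() for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    recovery_seconds = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_seconds) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has been restored yet.
        "mean_time_to_recovery_hours": (
            sum(recovery_seconds) / len(recovery_seconds) / 3600
            if recovery_seconds
            else None
        ),
    }
```

Tracking these numbers over successive windows shows whether a pipeline change actually moved delivery performance.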

That’s not a DevOps problem. That’s an infrastructure-as-product problem.

What Is Harness? An AI-Native Take on Software Delivery

Harness is a software delivery platform built around a single premise: the tools that exist after code is committed shouldn’t require more engineering effort than the code itself.

Founded in 2017, Harness has grown into one of the fastest-scaling infrastructure companies in the market. By late 2025, it reached a $5.5 billion valuation following a $240 million Series E led by Goldman Sachs Alternatives, with over $250 million in ARR and more than 1,000 enterprise customers across North America, EMEA, and APAC.

The platform isn’t just a CI/CD runner with better UI. Its foundational differentiator is what Harness calls the Software Delivery Knowledge Graph: a connected model of service dependencies, pipeline history, deployment patterns, and organizational context. Rather than treating each pipeline as an isolated script, Harness treats the entire delivery system as something that should learn, adapt, and self-optimize.

That shift in framing is what separates it from older tools.

How Harness CI/CD Works: The Modules That Matter

Harness CI and Test Intelligence

Traditional CI has an “all-or-nothing” testing problem. Every commit triggers every test, regardless of what actually changed. On large codebases, that means 40-minute build times for a three-line fix.

Harness CI solves this with Test Intelligence (TI). By analyzing call graphs and Git commit history, TI identifies which tests are relevant to a specific change and skips the rest. The gains are not marginal: RisingWave Labs reported a 50% reduction in build times after switching from GitHub Actions to Harness CI, and Qrvey cut its build times by a factor of eight.

That’s not a configuration tweak. That’s a structural change in how testing is approached.
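The general idea of change-based test selection can be sketched in a few lines. This is a simplified illustration, not Harness's actual Test Intelligence algorithm: it assumes a precomputed reverse dependency map (`imported_by`, a hypothetical name) at file granularity, whereas the article describes TI as working from call graphs and Git history.

```python
from collections import deque


def select_tests(changed_files, imported_by, tests):
    """Return the tests that transitively depend on any changed file.

    imported_by maps a file to the files that import it (reverse edges).
    """
    affected = set(changed_files)
    queue = deque(changed_files)
    while queue:
        current = queue.popleft()
        for dependent in imported_by.get(current, ()):
            if dependent not in affected:
                affected.add(dependent)
                queue.append(dependent)
    # Only test files that were reached need to run.
    return sorted(t for t in tests if t in affected)


# Hypothetical repository layout, for illustration only.
imported_by = {
    "billing/tax.py": ["billing/invoice.py", "tests/test_tax.py"],
    "billing/invoice.py": ["tests/test_invoice.py"],
    "auth/login.py": ["tests/test_login.py"],
}
tests = {"tests/test_tax.py", "tests/test_invoice.py", "tests/test_login.py"}

print(select_tests(["billing/tax.py"], imported_by, tests))
```

In this example a change to `billing/tax.py` selects the tax and invoice tests and skips the login test, which never touches the changed code.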

Harness CD and Continuous Verification

Deployment is where most CI/CD tools stop thinking. They push the artifact and declare success.

Harness CD keeps going. Its Continuous Verification (CV) module integrates directly with monitoring tools like Datadog, Prometheus, and AppDynamics. After every deployment, ML models analyze live logs and metrics in real time. If anomalies surface, the system triggers an automatic rollback—without waiting for an engineer to notice something is wrong.

This compresses MTTR from hours to minutes. For teams running multiple daily deployments, that’s not a nice-to-have. It’s the difference between a minor incident and a customer-facing outage.
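As a rough illustration of the verification step, the sketch below compares post-deployment error rates against a pre-deployment baseline and recommends a rollback when enough samples look anomalous. It uses a plain z-score as a stand-in for the ML models mentioned above; the function name, thresholds, and sample values are all assumptions, not Harness behavior.

```python
from statistics import mean, stdev


def should_roll_back(baseline, post_deploy, z_threshold=3.0, min_breaches=3):
    """Recommend rollback when enough post-deploy samples exceed the baseline.

    baseline and post_deploy are sequences of a metric where higher is worse,
    such as error rate per minute.
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline)
    sigma = stdev(baseline) or 1e-9  # avoid division by zero on a flat baseline
    breaches = sum(1 for x in post_deploy if (x - mu) / sigma > z_threshold)
    # Requiring several breaches avoids rolling back on a single noisy sample.
    return breaches >= min_breaches


baseline = [0.010, 0.012, 0.011, 0.009, 0.010]  # error rate before the deploy
print(should_roll_back(baseline, [0.011, 0.010, 0.012]))
print(should_roll_back(baseline, [0.05, 0.06, 0.055, 0.011]))
```

A real system would pull these samples from the monitoring tool and call the deployment platform's rollback mechanism; both are left out here because their interfaces are not described in this article.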

Pipeline Inheritance and Governance

Here’s where Harness’s engineering methodology diverges most sharply from legacy approaches.

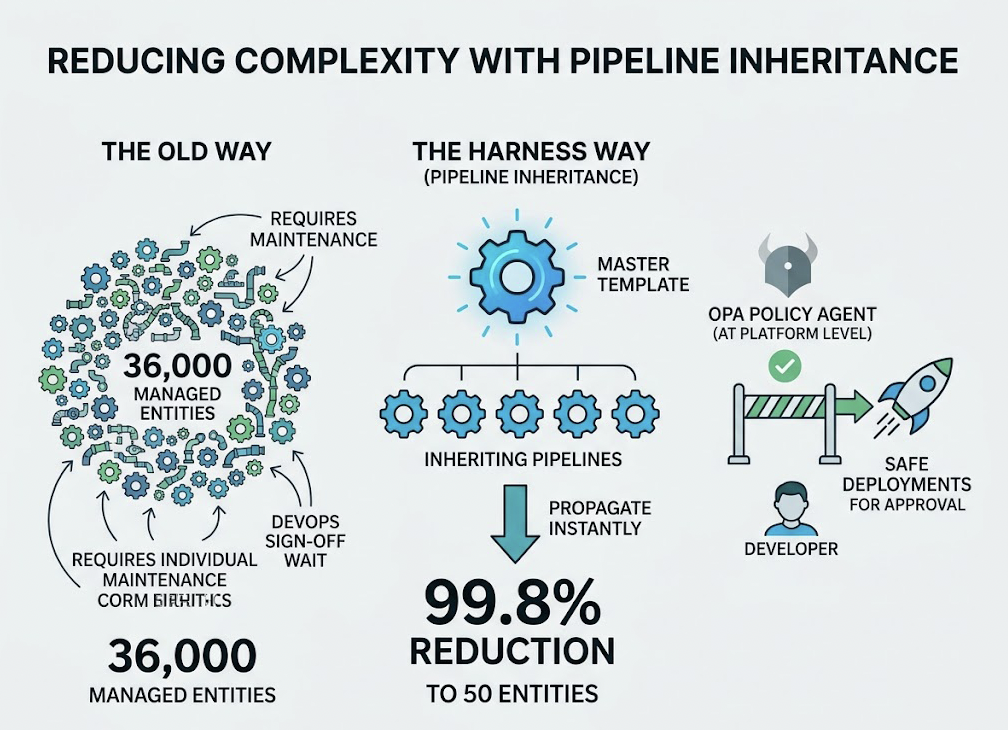

Most pipeline sprawl looks like this: 5 services become 50, each with their own Jenkinsfile, each slightly different, each requiring individual updates when a security policy changes. Patching a known vulnerability across the fleet takes weeks.

Harness addresses this with Pipeline Inheritance. Master templates are updated once and propagate instantly to every inheriting pipeline. Morningstar used this approach to reduce its managed pipeline entities from 36,000 to just 50, a reduction of more than 99.8%. Policy enforcement happens at the platform level via OPA (Open Policy Agent), which means developers can deploy safely without waiting for DevOps sign-off on every change.
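The inheritance idea can be sketched as a shared template that each service renders from, plus a central policy check. Everything here is hypothetical: the template fields, the `render_pipeline` and `policy_violations` helpers, and the service names are invented for illustration, and real OPA policies are written in Rego rather than Python.

```python
from copy import deepcopy

# Hypothetical master template; stage and setting names are illustrative only.
MASTER_TEMPLATE = {
    "stages": ["build", "security_scan", "deploy"],
    "settings": {"timeout_minutes": 30, "scanner_version": "1.0"},
}


def render_pipeline(template, service):
    """Build one service's pipeline from the shared template plus its overrides."""
    pipeline = deepcopy(template)  # never mutate the shared template
    pipeline["service"] = service["name"]
    pipeline["settings"].update(service.get("overrides", {}))
    return pipeline


def policy_violations(pipeline):
    """Stand-in for a platform-level policy check (OPA itself uses Rego)."""
    violations = []
    if "security_scan" not in pipeline["stages"]:
        violations.append("missing security_scan stage")
    return violations


services = [
    {"name": "checkout", "overrides": {"timeout_minutes": 45}},
    {"name": "search"},
]

# One edit to the template...
MASTER_TEMPLATE["settings"]["scanner_version"] = "2.0"

# ...reaches every pipeline the next time it is rendered.
pipelines = [render_pipeline(MASTER_TEMPLATE, s) for s in services]
for p in pipelines:
    print(p["service"], p["settings"], policy_violations(p))
```

The design point is that services store only their overrides, so a security fix is a single template change rather than one edit per pipeline file.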

Harness vs. Jenkins vs. GitHub Actions: What Actually Differs in Practice

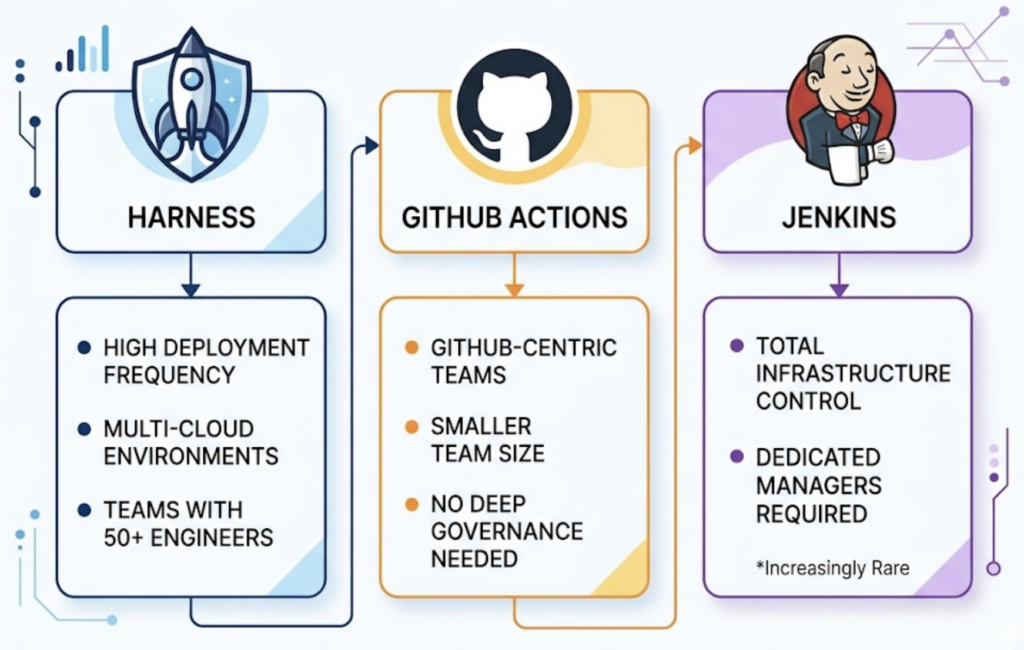

Choosing a CI/CD tool often comes down to team size, deployment complexity, and how much infrastructure ownership you’re willing to take on. Here’s how the three main options compare:

| Feature | Harness | Jenkins | GitHub Actions |
|---|---|---|---|
| Management | Managed SaaS or Private Cloud | Self-hosted; high operational cost | Cloud-hosted by default |
| Configuration | Visual/YAML + Master Templates | Groovy scripts; brittle at scale | YAML-based; repository-centric |
| AI Capabilities | Native Test Intelligence, Auto-Verification, AI-SRE | None natively; plugin-dependent | Limited native AI for pipeline logic |
| Scaling | Native cloud autoscaling | Manual node management | Auto runners with resource caps |
| Governance | Centralized Policy-as-Code (OPA) | Fragmented across plugins | Simple permissions; no enterprise policy |
| True Cost | Enterprise subscription | “Free” software + 2–5 FTE hidden cost | Pay-per-minute; cost spikes at scale |

The bottom line: Harness makes sense when deployment frequency is high, environments are multi-cloud, and team size is 50+ engineers. GitHub Actions is the right call for smaller, GitHub-centric teams that don’t need deep deployment governance. Jenkins works when teams require total infrastructure control and have dedicated personnel to manage it—an increasingly rare combination.

The real question isn’t which tool is “best.” It’s which tool matches your delivery model.

Why Engineering Velocity Isn’t Enough Anymore

Here’s the thing most teams don’t think about when evaluating CI/CD platforms: how their engineers find those tools in the first place.

The traditional discovery journey—Google “best CI/CD for Kubernetes,” scan three comparison articles, book a demo—is changing. According to 2025-2026 research, 50% of B2B buyers now begin vendor research inside an AI chatbot rather than a search engine. B2B teams are adopting AI-powered search at three times the rate of consumers.

When an engineer asks ChatGPT “what’s the most secure CI/CD platform for a regulated financial environment,” the brands that appear in the response have a structural acquisition advantage. AI search visitors convert at 4.4x the rate of traditional organic search visitors and spend up to 3x longer on page.

The flip side: organic click-through rates drop by approximately 70% when AI Overviews appear. If your brand isn’t in the AI’s answer, you’re not just losing a ranking—you’re losing the entire interaction.

This is the emerging discipline of Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO): ensuring that when AI engines synthesize recommendations, your brand is cited, accurately described, and contextually positioned.

How GEO and AEO Work for Engineering Brands

AEO focuses on technical content structure. For developer tools, this means writing engineering documentation and technical blog posts that directly answer queries like “how to implement Canary deployments in AWS EKS.” Clear heading hierarchies, Q&A blocks, and structured schema markup make it easier for AI systems to extract and cite a brand’s content as an authoritative answer.
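One common form of structured schema markup for Q&A blocks is schema.org's `FAQPage` type, expressed as JSON-LD. The sketch below generates that markup from question and answer pairs. The `faq_jsonld` helper and the sample pair are illustrative assumptions, and whether a given AI system actually consumes this markup is not something this example can confirm.

```python
import json


def faq_jsonld(pairs):
    """Render (question, answer) pairs as schema.org FAQPage JSON-LD."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2)


# Illustrative content only.
print(faq_jsonld([
    ("Does Harness support multi-cloud deployment environments?",
     "Yes. Harness CD supports AWS, Azure, Google Cloud, on-premise, and hybrid environments."),
]))
```

The resulting JSON is typically embedded in the page inside a `<script type="application/ld+json">` element.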

GEO is the broader ecosystem play. AI models don’t just crawl a brand’s website—they assemble narratives from third-party mentions, GitHub repositories, G2 reviews, Reddit threads, and industry publications. A brand’s “AI presence” is the aggregate of what the web says about it.

For a platform like Harness, that means ensuring its case studies (United Airlines cutting deployment time from 22 to 5 minutes; Citi reducing delivery from days to 7 minutes) are structured and distributed in formats that LLMs can parse and cite. It means earning mentions in the technical communities where engineers actually spend time.

Most brands are running GEO blind. They have no way to know whether ChatGPT recommends them for “multi-cloud cost optimization” or routes those queries to a competitor.

Tracking and Optimizing AI Visibility: Where Topify Fits

This is the gap that Topify addresses. Topify is an AI search optimization platform that helps SaaS and engineering brands track, measure, and improve how AI systems recommend them across ChatGPT, Gemini, Perplexity, and other major platforms.

For an engineering brand competing in the CI/CD space, Topify provides the operational layer that GEO strategy requires:

AI Brand Mention Rate: Track how often Harness (or any CI/CD tool) appears across relevant prompts, from “best CI/CD for microservices” to “how to automate security gates in deployment pipelines.”

Share of Voice vs. Competitors: See in real time whether GitHub Actions or CircleCI is winning the “AI recommendation war” for a specific use case category.

Source Citation Analysis: Identify which domains AI platforms are pulling information from. If Harness’s technical documentation isn’t appearing in AI citations, Topify surfaces that gap—so content teams know exactly where to focus.

Sentiment and Context Tracking: AI engines sometimes get brand descriptions wrong, describing an enterprise platform as a “developer hobby tool” or citing outdated pricing. Topify detects this narrative drift before it affects procurement conversations.
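The first two metrics in this list reduce to simple counting once AI responses have been collected. The sketch below is not Topify's implementation: the function names, the brand list, and the sample responses are invented for illustration, and plain word matching will miscount a brand name that doubles as an ordinary word.

```python
import re
from collections import Counter


def brand_mentions(responses, brands):
    """Count how many responses mention each brand at least once."""
    counts = Counter({brand: 0 for brand in brands})
    for text in responses:
        for brand in brands:
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts


def mention_rate(counts, brand, total_responses):
    """Fraction of responses that mention the brand."""
    return counts[brand] / total_responses


def share_of_voice(counts):
    """Each brand's share of all brand mentions."""
    total = sum(counts.values())
    return {brand: (n / total if total else 0.0) for brand, n in counts.items()}


# Invented sample responses, for illustration only.
responses = [
    "For Kubernetes, Harness and GitHub Actions are common picks.",
    "Many teams start with GitHub Actions.",
    "CircleCI and Harness both support canary releases.",
    "Jenkins remains popular for self-hosted setups.",
]
brands = ["Harness", "GitHub Actions", "CircleCI"]

counts = brand_mentions(responses, brands)
print(mention_rate(counts, "Harness", len(responses)))
print(share_of_voice(counts))
```

Running the same prompts on a schedule and comparing these numbers over time is what turns a one-off audit into a measurable channel.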

In practice, a marketing team can get started with Topify and begin tracking brand visibility across AI platforms within minutes. The platform’s Basic tier covers ChatGPT, Perplexity, and AI Overviews at 100 prompts per month—enough for an initial GEO audit of any engineering brand’s AI presence.

The discipline is new. The need isn’t.

Conclusion

Harness CI/CD represents a genuine architectural rethink of software delivery—from brittle, script-heavy pipelines managed by a dedicated maintenance crew, to an AI-native platform where testing is intelligent, verification is automated, and governance scales without friction. The engineering case studies are concrete: deployment cycles measured in minutes, not months, and pipeline sprawl reduced from five figures to double digits.

But delivery velocity and AI discoverability are now linked. The engineer who finds Harness through a ChatGPT recommendation tomorrow is already in a different decision-making frame than one who found it via a Google search six months ago. Brands that build both—operational excellence in delivery and strategic presence in AI search—will define the next generation of developer tooling. That’s not a future state. It’s already the competitive landscape.

FAQ

Q: What makes Harness fundamentally different from Jenkins for CI/CD?

A: Jenkins is a general-purpose automation server that places the operational burden (plugin management, custom scripting, manual scaling) on the organization’s DevOps team. Harness is an AI-native platform with out-of-the-box Test Intelligence, automated Continuous Verification, Pipeline Inheritance through master templates, and policy-as-code governance via OPA. The practical difference: Jenkins typically requires 2-5 full-time engineers just to maintain; Harness is designed to eliminate that toil category entirely.

Q: Does Harness support multi-cloud deployment environments?

A: Yes. Harness CD is cloud-agnostic, supporting deployments across AWS, Azure, Google Cloud, on-premise, and hybrid environments from a single orchestration engine. Its Cloud Cost Management (CCM) module provides unified visibility into spend across multiple providers and Kubernetes clusters—something native cloud tools typically only cover for their own platform.

Q: What is AEO and how does it apply to developer tools?

A: Answer Engine Optimization (AEO) is the practice of structuring content so AI engines like ChatGPT and Perplexity can extract and cite it directly when answering a user’s query. For developer tools, this means optimizing documentation with clear Q&A formatting, explicit schema markup, and short, parseable paragraphs. When an engineer asks an AI how to implement Blue-Green deployments on EKS, AEO determines whether your documentation is the cited source or a competitor’s.

Q: How can engineering brands improve their visibility in AI search results?

A: The core levers are: structuring content for LLM extraction (tables, lists, Q&A blocks), building topical authority through consistent publishing on core themes, earning third-party mentions in communities and reviews that AI models weight heavily (Reddit, G2, GitHub), and systematically tracking AI share of voice using tools like Topify. The brands that treat GEO as a structured, measurable channel—not a one-time content refresh—will compound their AI presence over time.