Your domain authority is above 70. You’re holding top-three positions for your highest-value keywords. Your organic traffic looks healthy in every dashboard you check. Then someone asks ChatGPT, “What’s the best solution for [your category]?” and your brand doesn’t appear anywhere in the answer.

That gap between Google performance and AI recommendation is widening every quarter. And the metrics you’ve relied on for a decade can’t explain it, because they were never designed to measure how reasoning engines choose which brands to mention.

Page One on Google, Invisible to AI: The Ranking Paradox

Here’s the uncomfortable truth: ranking well on Google and being cited by AI are two different achievements, driven by two different systems.

Research analyzing over 18,000 unique queries found that only 12% of URLs cited by AI search engines appear in Google’s top ten organic results. That means 88% of the URLs these engines cite come from pages that Google doesn’t rank among the ten most relevant results for those queries.

The variance across platforms makes this even messier. Perplexity shows the strongest alignment with traditional search at roughly 28% URL overlap. ChatGPT drops to around 8%. Google’s own Gemini sits at just 6%.
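To make the overlap figure concrete, here is a minimal sketch of how such a percentage could be computed for a single query. The URLs, the `normalize` helper, and the `overlap_rate` function are illustrative assumptions, not the methodology of the research cited above. It requires Python 3.9 or later.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def overlap_rate(ai_cited: list[str], google_top10: list[str]) -> float:
    """Share of AI-cited URLs that also appear in the Google top ten."""
    cited = {normalize(u) for u in ai_cited}
    ranked = {normalize(u) for u in google_top10}
    if not cited:
        return 0.0
    return len(cited & ranked) / len(cited)


# Hypothetical data for one query: four AI citations, two ranked pages.
ai_cited = [
    "https://example.com/guide/",
    "https://reviews.example.org/best-tools",
    "https://forum.example.net/thread/42",
    "https://www.vendor.example/pricing",
]
google_top10 = [
    "https://www.example.com/guide",
    "https://bigbrand.example/landing",
]

rate = overlap_rate(ai_cited, google_top10)
print(f"{rate:.0%} of cited URLs are also in the top ten")
```

Averaging this rate over a full prompt set, per platform, would give a figure comparable in spirit to the percentages quoted above.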

This isn’t a niche problem affecting a handful of queries. ChatGPT now handles approximately 2.5 billion daily prompts, and about 35.5% of those conversations are direct equivalents to Google-style informational or practical searches. Users spend an average of six minutes on Google, but over thirteen minutes on ChatGPT and twenty-three minutes on Perplexity. The AI assistant is becoming the primary environment for deep research and purchase decisions.

Your traditional SEO success? It’s increasingly isolated to one channel while the discovery landscape expands around it.

What Google Rewards vs. What AI Engines Actually Cite

Google is a retrieval system. It uses inverted indices, link graphs, and the PageRank legacy to surface pages based on authority signals like backlinks and keyword relevance. You earn a ranking by meeting a specific set of algorithmic criteria.

LLMs work differently. They’re reasoning engines that use Retrieval-Augmented Generation (RAG) to synthesize answers from training data and real-time web retrieval. When a generative engine responds to a prompt, it doesn’t look for the page with the highest Domain Authority. It optimizes for what researchers call “passage-level extractability” and “semantic richness.”

In practice, that means the AI evaluates whether a source can be safely and accurately used to construct a narrative without hallucinating. High DA pages often fail this test. They’re structured for human engagement: clever narrative introductions, visual design hierarchies, creative metaphors. All of that is noise to a machine trying to extract a factual answer.

| Dimension | Google Ranking Criteria | AI Citation Criteria |
|---|---|---|
| Authority signal | Backlinks, domain rating, site age | Entity clarity, E-E-A-T, consensus |
| Content goal | Match keywords and satisfy intent | Provide extractable, verifiable facts |
| Structure | Mobile-ready, fast load, metadata | Semantic HTML, Schema, chunking |
| Evaluation logic | Algorithmic ranking (strings) | Reasoning-based synthesis (things) |

AI engines prioritize sources that offer a “comprehension subsidy,” which is pre-processed, structured data that reduces the computational cost of inference. Specific textual modifications like adding quantitative statistics, relevant quotes, and authoritative citations can increase a brand’s AI search visibility by more than 40%.

3 Blind Spots That Kill Your AI Search Visibility

The failure of high-authority brands to show up in AI answers typically traces back to three gaps that reflect a legacy SEO mindset.

Blind Spot 1: Content Built for Keywords, Not Answers

Traditional SEO encourages writing for keyword density and topical coverage. That often produces articles with lengthy narrative introductions designed to signal relevance to a search algorithm, but functionally opaque to a RAG system.

AI engines favor answer-first content: the primary resolution to a query appears in the first one or two sentences of a paragraph, followed immediately by supporting data. In a RAG pipeline, the engine retrieves “chunks” of text. If the most relevant chunk contains narrative padding, the AI’s confidence in that source drops. A page with a clear heading hierarchy and concise definitions directly under those headers is far more likely to be extracted and cited.

Blind Spot 2: No Entity-Level Authority Signals

LLMs recognize and relate entities: distinct people, places, brands, and concepts. Your brand might rank for “cloud security” on Google without the AI actually understanding that your brand is an entity within that category.

Without a presence in the global Knowledge Graph, fed by Wikipedia, Wikidata, and industry databases, a brand remains a “string” of text rather than a “thing” in the AI’s world. Missing sameAs mappings in Schema.org markup creates what’s known as “entity drift,” where the AI can’t confidently verify the identity or credibility of a source. The result is systematic exclusion from generated answers.

Sites with proper author metadata and deep entity-level Schema deployment are cited up to 40% more frequently by AI platforms.
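As an illustration of the sameAs mappings described above, the sketch below builds a Schema.org Organization object as JSON-LD and wraps it in the script tag that would go in a page's head. The organization name and every URL are placeholders. Which profiles belong in sameAs depends on where your brand actually has verified entries.

```python
import json

# Hypothetical organization; every name and URL here is a placeholder.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Cloud Security",
    "url": "https://www.example.com",
    # sameAs links this entity to its profiles in external knowledge sources.
    "sameAs": [
        "https://www.wikidata.org/wiki/Q00000000",
        "https://en.wikipedia.org/wiki/Example_Cloud_Security",
        "https://www.linkedin.com/company/example-cloud-security",
    ],
}

json_ld = json.dumps(organization, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the markup from one source of truth, rather than hand-editing it per page, helps keep the entity description consistent across the site.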

Blind Spot 3: You’re Not Measuring What AI Says About You

The third gap is reliance on traditional metrics like organic traffic and keyword position. These are becoming lagging, sometimes misleading, indicators.

As Google AI Overviews intercept informational traffic, a brand may see steady impressions in Search Console while its clicks erode because the AI has already provided the answer, without ever mentioning the brand. On top of that, LLM training data can be months old, and RAG systems may be blocked by client-side rendering or restrictive robots.txt files that prevent bots like GPTBot or PerplexityBot from accessing content.
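A quick way to test the robots.txt part of that problem is Python's standard `urllib.robotparser`. The sketch below checks a sample robots.txt against the two crawler names mentioned above. The file content and the URL are hypothetical; in practice you would load your own site's robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; substitute your own site's file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(
    ROBOTS_TXT,
    "https://www.example.com/blog/post",
    ["GPTBot", "PerplexityBot"],
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only covers robots.txt rules. Content that is rendered client-side can still be unreadable to a crawler that is technically allowed to fetch the page.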

If you’re not tracking AI-specific mentions, sentiment, and citation share, you’re flying blind in the environment where your highest-intent buyers are now conducting research.

How to Bridge the Gap Between SEO and AI Search Visibility

Closing the gap requires a structured transition: diagnosis first, then content optimization, then ecosystem authority building.

Start with a baseline audit. Run 20 to 50 “money prompts,” long-form conversational queries that represent real buyer questions, across ChatGPT, Perplexity, and Gemini. Compare the results against your traditional keyword rankings. You’ll likely find what researchers call “Invisibility Gaps”: keywords where you rank on page one of Google but don’t appear in any AI-generated recommendation.
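Once the prompts have been run and the results recorded by hand, the comparison itself is simple to automate. The following is a minimal sketch, assuming you have logged a Google rank and a per-platform mention flag for each keyword. The `AuditRow` structure and the sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditRow:
    keyword: str
    google_rank: Optional[int]     # None when the page does not rank
    ai_mentions: dict[str, bool]   # platform name -> was the brand mentioned?


def invisibility_gaps(rows: list[AuditRow], max_rank: int = 10) -> list[str]:
    """Keywords ranking on page one of Google with no AI mention on any platform."""
    gaps = []
    for row in rows:
        on_page_one = row.google_rank is not None and row.google_rank <= max_rank
        mentioned_anywhere = any(row.ai_mentions.values())
        if on_page_one and not mentioned_anywhere:
            gaps.append(row.keyword)
    return gaps


NONE = {"ChatGPT": False, "Perplexity": False, "Gemini": False}

# Hypothetical audit results.
rows = [
    AuditRow("cloud security platform", 2, dict(NONE)),
    AuditRow("zero trust vendor", 5, {**NONE, "ChatGPT": True}),
    AuditRow("siem pricing", 14, dict(NONE)),
    AuditRow("cspm tools", 1, dict(NONE)),
]

gaps = invisibility_gaps(rows)
print(gaps)
```

Here "siem pricing" is excluded because it does not rank on page one, and "zero trust vendor" is excluded because one platform does mention the brand.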

Restructure content for machine readability. The shift is from narrative-first to answer-first. Place a 50-to-120-word summary directly under each H2 header. Replace qualitative claims with verifiable data. Use HTML tables and structured lists instead of prose walls. Research shows that statistical grounding alone lifts AI visibility by approximately 40%, while semantic formatting and citation integration each add another 30 to 40%.
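The summary-length guideline is easy to check automatically. The sketch below assumes your drafts are stored as Markdown with `## ` marking H2 headers, which may not match your CMS. It counts the words in the first paragraph under each H2 and flags sections that fall outside the 50 to 120 word range. The function names are illustrative.

```python
import re


def summary_lengths(markdown: str) -> dict[str, int]:
    """Map each H2 heading to the word count of the first paragraph under it."""
    results = {}
    # Splitting with a capture group yields [preamble, heading, body, heading, body, ...].
    sections = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    for heading, body in zip(sections[1::2], sections[2::2]):
        paragraphs = [p for p in body.strip().split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        results[heading.strip()] = len(first.split())
    return results


def out_of_range(lengths: dict[str, int], low: int = 50, high: int = 120) -> list[str]:
    """Headings whose opening summary is shorter than low or longer than high."""
    return [h for h, n in lengths.items() if not low <= n <= high]


# Hypothetical draft: one section with a 60-word summary, one with a 2-word summary.
draft = (
    "# Title\n\n"
    "## What is GEO?\n\n" + " ".join(["word"] * 60) + "\n\nMore detail.\n\n"
    "## Pricing\n\nIt depends.\n"
)

lengths = summary_lengths(draft)
flagged = out_of_range(lengths)
print(lengths, flagged)
```

A check like this only measures length. Whether the summary actually answers the question in its first sentence still needs a human read.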

Build ecosystem authority. AI engines treat a brand’s self-description as useful but biased. A review on G2, a thread on Reddit, or an article in a major trade publication carries more weight. Brands with high citation density from independent third-party sources are significantly more likely to be cited by reasoning engines.

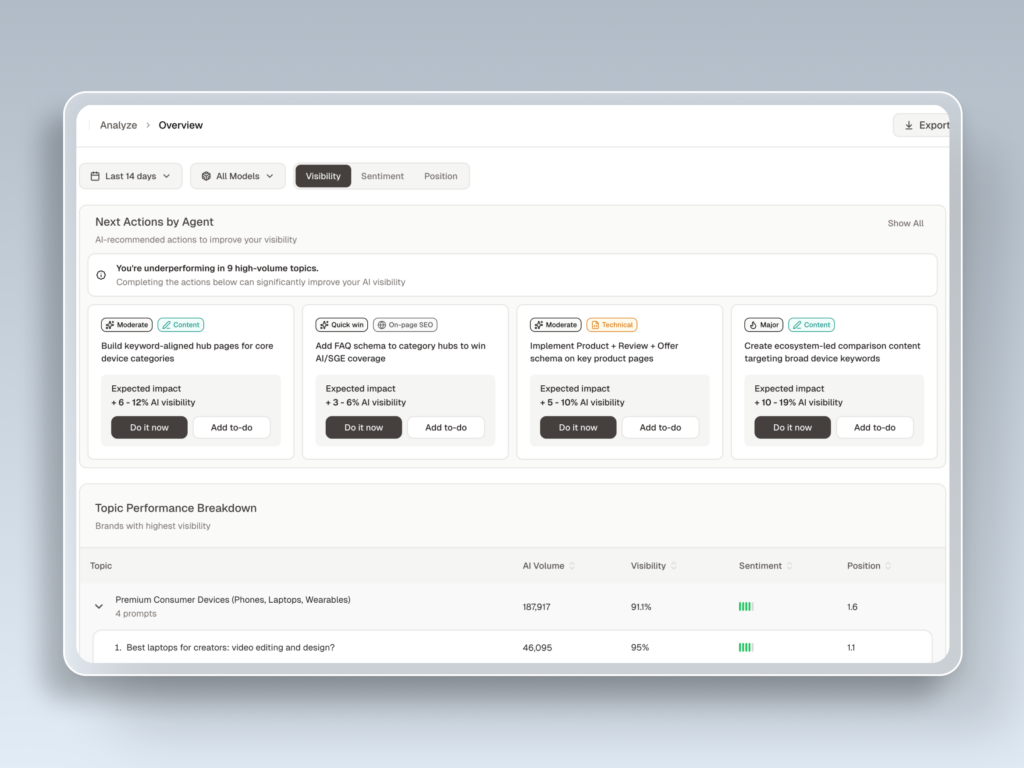

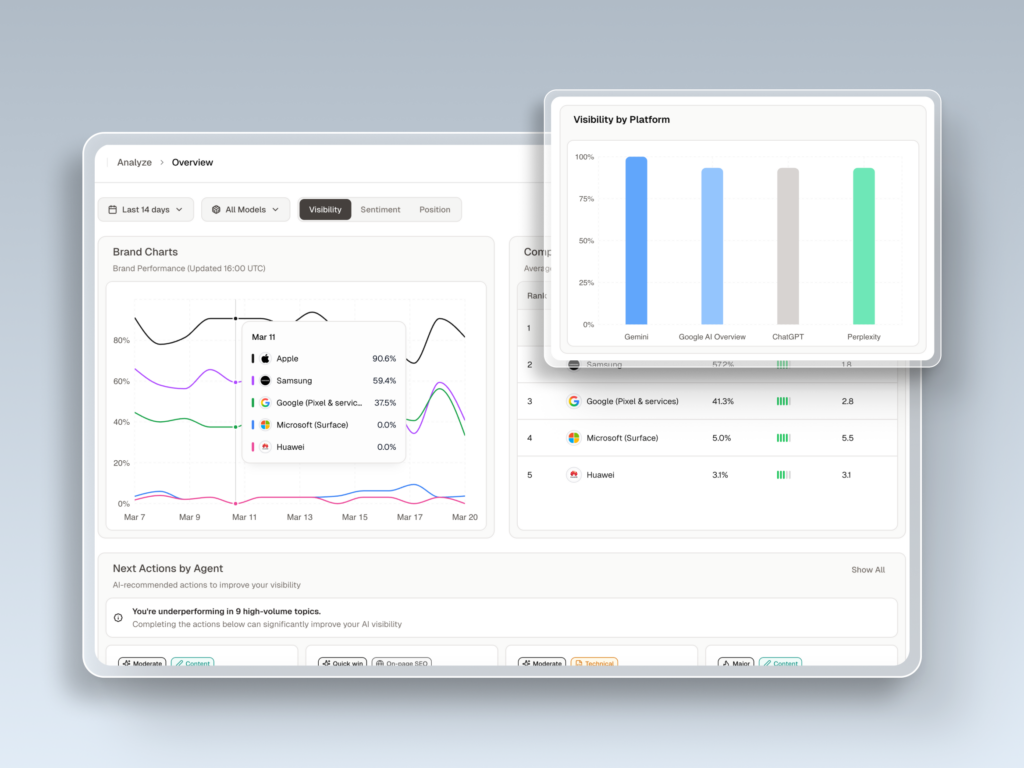

For teams managing this across multiple AI platforms, Topify provides real-time Visibility Tracking across ChatGPT, Gemini, Perplexity, and other major engines in a single dashboard. Its Source Analysis feature reverse-engineers exactly which third-party URLs the AI is citing, so you can identify the specific content gaps driving your invisibility and act on them directly.

What AI Search Visibility Metrics Actually Matter

Traditional CTR and rank tracking are losing their predictive power for informational content. A new scorecard is needed, one that measures not “where I rank” but “how I’m described.”

Topify tracks AI search visibility across seven dimensions that map directly to brand performance in the generative search era:

Visibility measures the percentage of relevant prompts where your brand is explicitly mentioned. Sentiment scores the framing of each mention, whether the AI describes you as innovative, trustworthy, or budget, on a 0 to 100 scale. Position tracks whether you’re the primary recommendation or a secondary alternative. First-position mentions drive 32% higher purchase intent compared to second or third positions.

Volume reveals the true monthly demand for topics within AI platforms, often surfacing high-volume prompts invisible to traditional tools like Ahrefs. Mentions distinguishes between your brand being named in the text versus your domain being cited as a source in footnotes. Intent Alignment measures whether the AI matches your brand to the correct buyer persona. High visibility for the wrong intent is a wasted investment.

The seventh metric, Conversion Visibility Rate (CVR), estimates how likely an AI mention is to translate into downstream revenue. AI-referred visitors convert at 14.2%, a rate roughly 4 to 8 times higher than traditional organic search. That makes each AI citation disproportionately valuable compared to a standard ranking position.
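To show how a few of these metrics relate to raw data, here is a rough sketch that derives a visibility rate, a first-position share, and a citation rate from a set of logged AI answers. The formulas, the `PromptResult` structure, and the sample data are assumptions made for illustration. They are not Topify's actual calculations.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    brands_in_order: list[str]   # brands named in the answer, in order of appearance
    cited_domains: list[str]     # domains listed as sources for the answer


def scorecard(results: list[PromptResult], brand: str, domain: str) -> dict[str, float]:
    """Compute simple mention and citation rates for one brand."""
    total = len(results)
    if total == 0:
        return {"visibility": 0.0, "first_position_share": 0.0, "citation_rate": 0.0}
    mentioned = [r for r in results if brand in r.brands_in_order]
    first = [r for r in mentioned if r.brands_in_order[0] == brand]
    cited = [r for r in results if domain in r.cited_domains]
    return {
        # Share of prompts where the brand is named at all.
        "visibility": len(mentioned) / total,
        # Among prompts with a mention, share where the brand is named first.
        "first_position_share": len(first) / len(mentioned) if mentioned else 0.0,
        # Share of prompts where the brand's domain is cited as a source.
        "citation_rate": len(cited) / total,
    }


# Hypothetical results for four prompts.
results = [
    PromptResult("best cloud security tool", ["Acme", "Globex"], ["acme.example"]),
    PromptResult("cloud security for startups", ["Globex", "Acme"], ["g2.example"]),
    PromptResult("cspm vendors compared", ["Globex"], ["reddit.example"]),
    PromptResult("zero trust platforms", ["Initech"], []),
]

scores = scorecard(results, brand="Acme", domain="acme.example")
print(scores)
```

Keeping the mention rate and the citation rate separate reflects the distinction drawn above between being named in the text and being cited as a source.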

Conclusion

The gap between SEO and AI search visibility isn’t a temporary glitch. It’s a structural shift in how buyers discover and evaluate brands.

Traditional SEO drives traffic through a list of links. AI search visibility determines whether your brand makes it into the synthesized answer that users now trust to filter the noise. For brands with strong domain authority but low AI visibility, the path forward starts with three moves: shift from keyword-first to answer-first content, formalize your entity presence in global knowledge graphs, and start tracking how AI platforms actually describe you. The goal is no longer to be the first link on the page. It’s to be the first name in the answer.

Get started with Topify to see where your brand stands across every major AI platform today.

FAQ

Q: What is AI search visibility?

A: AI search visibility refers to how often and how favorably your brand appears in responses generated by AI platforms like ChatGPT, Perplexity, and Gemini. Unlike traditional search rankings, it measures whether AI engines mention, recommend, or cite your brand when users ask relevant questions.

Q: Does SEO help with AI search visibility?

A: Strong SEO provides a foundation, but it doesn’t guarantee AI visibility. Research shows only 12% of URLs cited by AI engines appear in Google’s top ten results. AI engines prioritize extractable, structured content and entity-level authority signals, which differ from traditional ranking factors like backlinks and keyword density.

Q: How do I check if my brand appears in ChatGPT or Perplexity?

A: The manual approach is to run your target buyer’s questions directly in each AI platform and note whether your brand is mentioned. For systematic tracking, tools like Topify automate this across multiple AI engines, monitoring your mention rate, sentiment, position, and citation sources in real time.

Q: What’s the difference between SEO and GEO?

A: SEO optimizes for search engine rankings, focusing on keywords, backlinks, and page authority. GEO (Generative Engine Optimization) optimizes for AI-generated answers, focusing on content extractability, entity clarity, and third-party citation density. Both matter, but they require different strategies and different metrics.