Your team has spent six months earning backlinks and pushing a target page to position one on Google. Then a buyer asks ChatGPT, “What’s the best tool for our category?” and gets back five recommendations. Your brand isn’t on the list.

This isn’t a glitch. It’s the gap between Google rankings and AI search visibility, two retrieval systems that look similar from the outside and behave nothing alike. Google ranks URLs. AI search picks passages, checks corroboration across sources, and synthesizes a single answer. The dashboards built for the first system can’t see what’s happening in the second.

What AI Search Visibility Actually Means

AI search visibility tracks how often your brand gets surfaced, cited, and recommended inside the synthesized answers from large language model engines like ChatGPT, Gemini, Perplexity, and DeepSeek. It’s a composite signal, not a single number.

Three terms get conflated by most marketing teams. Rankings refer to a URL’s vertical position on a search results page, where success means a lower number. Mentions refer to the raw frequency with which a brand name appears in AI-generated text, regardless of whether a citation is provided. AI search visibility is the holistic combination of mention frequency, accuracy of portrayal, position within the answer hierarchy, and the credibility of the sources the AI uses to justify its recommendation.

The shift matters because the search-to-click economy is being rewired in real time. Around 60% of Google searches now end without a click, and that number climbs to 77% on mobile. Inside AI Overviews, organic CTR for top-ranking pages drops by as much as 61%.

For the 68% of B2B buyers who now begin research inside AI tools instead of search engines, the AI’s synthesized answer functions as the shortlist.

The two systems optimize for different outcomes:

| Metric Component | Traditional Ranking | AI Search Visibility |
|---|---|---|
| Primary Unit | URL (domain-level) | Passage (entity-level) |
| Output Type | Ordered list of links | Synthesized natural language |
| Success Goal | Traffic acquisition | Answer dominance |
| Authority Basis | Backlink profile | Corroborated expertise |
| User Behavior | Comparison and selection | Consumption and verification |

Scale-wise, ChatGPT hit 900 million weekly active users by February 2026 and processes about 2.5 billion daily prompts. Google still owns nearly 90% of total search market share, but AI-driven interactions now account for 30% of total search behavior. Many users run dual queries, asking AI to explore a topic and Google to verify the specifics.

Three Core Differences Between AI Search Visibility and Google Rankings

The structural gap comes down to how each system retrieves and presents information. Three differences explain most of what shows up in your dashboards.

Difference 1: The “First Page” No Longer Exists

In traditional search, the first page captured roughly 90% of attention. AI engines collapse the page into a single synthesized answer. Most models cite only 3 to 5 sources per response, even when they retrieved hundreds of candidates during processing. There’s no “position five” that still drives meaningful visibility.

That’s a binary visibility state. You’re either part of the answer, or you’re absent.

Difference 2: Retrieval Grounding Beats Link Authority

Google ranks on relevance plus domain authority, with backlinks doing a lot of the heavy lifting. AI engines run on Retrieval-Augmented Generation. The model breaks the prompt into multiple semantic search vectors, a process called query fan-out, then selects passages that ground its answer with verifiable, structured evidence.

Synthesizability beats link counts. This is the “Page 2 Anomaly”: in roughly 40% of cases, ChatGPT skips the top 10 Google results to cite a source from page two or three that has a tighter data table or a clearer definition. Across nearly one million keywords, only 38% of AI citations overlap with Google’s top 10 results.
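To see how this plays out for your own queries, you can compare the URLs an AI answer cites against the Google top 10 for the same query. The snippet below is a minimal sketch, not a standard tool: the function names and the sample URLs are hypothetical, and the normalization (lowercasing, dropping `www.` and trailing slashes) is a simplifying assumption.

```python
from urllib.parse import urlparse


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal."""
    parsed = urlparse(url.strip().lower())
    host = parsed.netloc.removeprefix("www.")
    return host + parsed.path.rstrip("/")


def top10_overlap(ai_citations: list[str], google_top10: list[str]) -> float:
    """Share of AI-cited URLs that also appear in the Google top 10."""
    cited = {normalize(u) for u in ai_citations}
    ranked = {normalize(u) for u in google_top10}
    if not cited:
        return 0.0
    return len(cited & ranked) / len(cited)


# Hypothetical sample data for a single query.
ai_citations = [
    "https://www.example.com/pricing-guide/",
    "https://reviews.example.org/crm-comparison",
    "https://forum.example.net/t/crm-for-remote-teams",
]
google_top10 = [
    "https://example.com/pricing-guide",
    "https://vendor.example.io/crm",
]

overlap = top10_overlap(ai_citations, google_top10)
print(f"{overlap:.0%} of AI citations also rank in the top 10")
```

Run across a full prompt set, a low overlap figure suggests the engine is pulling from sources your rank tracker never shows you.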

Difference 3: Visibility Lives in Language, Not URLs

Google visibility is tied to where a URL sits on a results page. AI visibility lives in the model’s language layer, both pre-trained knowledge and real-time retrieval context. Your brand can be recommended in an AI answer without anyone clicking the supporting citation.

That changes how authority gets built. AI engines evaluate Entity Confidence, the degree of certainty that a brand is the right one to recommend, by checking whether claims about it are corroborated across independent sources like Reddit, GitHub, industry forums, and third-party review platforms. A brand frequently discussed in technical threads on LinkedIn or Reddit can outrank a brand with a high-performing SEO blog but no third-party footprint.

| Feature | Google Rankings | AI Search Visibility |
|---|---|---|
| Navigation Unit | The URL link | The semantic entity |
| Selection Logic | Competitive popularity (links) | Factual corroboration (consensus) |
| Structure Preference | Keyword-rich prose | Machine-legible data, tables, lists |
| Stability | Relatively static (weeks) | Highly probabilistic (regenerative) |
| Visibility Channel | SERP impressions | Synthesized narrative mentions |

Why Traditional SEO Metrics Miss AI Search Visibility

Most marketing dashboards rely on lagging indicators that no longer track the path to revenue. Three blind spots stand out.

Keyword Rankings vs AI Mentions

You can rank #1 for a term and still get ignored by an AI engine for the same query. AI models don’t just match keywords. They evaluate the “information gain” of a page, which means original research, proprietary data, or unique case studies often beat generic well-optimized content.

Conversational queries average around 23 words. Many of them are dark queries: prompts with high research intent and near-zero traditional search volume. Tools that track short head terms can’t see them.

The Domain Authority Deception

DA and DR were proxies for trust. AI models evaluate authority at the passage and entity level, not the domain. Mid-tier sites with high topical density, meaning consistent and structured coverage of a specific niche, often beat legacy giants on citation rate.

The mechanism is corroboration, not link counts. AI prefers pages whose facts align with multiple independent sources. A “DA-first” content strategy often produces pages too broad and promotional to clear that bar.

Citation Without Click

In the old model, an impression with no click was a creative failure. In AI search, an impression is consumption. When the AI digests your content into the answer, the user gets what they need without ever visiting your site.

Documented cases show brands losing 20% of referral traffic while gaining 113% AI visibility, with branded search volume rising in parallel. The dashboard says traffic is down. The reality is that the AI is feeding the top of the funnel.

That’s the metric mismatch in one sentence: an old dashboard can’t measure a new game.

The 7 Metrics That Actually Track AI Search Visibility

AI search visibility isn’t a single number. It’s a matrix that tracks how a brand appears, gets described, and gets ranked across multiple AI engines.

The framework most analysts now reference covers seven dimensions:

- Visibility (cross-platform mention rate): percentage of priority queries where your brand gets mentioned. Category leaders in 2026 typically sit between 30% and 45%.

- Sentiment (RankScale): 0 to 100, where 50 is neutral. A score below 40 flags a reputation problem. Visibility paired with negative sentiment is a liability, not an asset.

- Position (response position index): relative order of brand mentions in multi-brand answers. LLMs often default to the first-named entity as the recommended option.

- Source coverage: distribution of domains the AI cites when discussing your brand. If only your own site shows up, your authority is shallow.

- AI volume: monthly demand for a topic specifically inside AI platforms. Reveals dark queries traditional keyword tools miss.

- Intent alignment: whether the AI matches your brand to the right buyer persona and use case. High visibility plus low intent alignment means wasted exposure.

- Conversion Visibility Rate: predictive measure of how likely AI visibility is to drive action. AI-referred visitors convert at rates around 14.2% versus 2.8% for traditional search.

Tracking visibility without sentiment, or position without source coverage, gives you a partial picture. The point of the matrix is to catch trade-offs early, before they show up in pipeline.
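Three of these dimensions (visibility, position, and source coverage) can be approximated from a simple log of AI answers. The sketch below is illustrative only: the `AnswerRecord` structure, the brand names, and the domains are hypothetical, and visibility is computed per logged answer rather than per query, which is a simplification of the definition above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class AnswerRecord:
    prompt: str
    engine: str
    brands_in_order: list[str]  # brands in the order the answer names them
    cited_domains: list[str]    # domains the answer cited


def visibility_rate(records: list[AnswerRecord], brand: str) -> float:
    """Share of logged answers that mention the brand."""
    if not records:
        return 0.0
    return sum(brand in r.brands_in_order for r in records) / len(records)


def average_position(records: list[AnswerRecord], brand: str):
    """Mean 1-based position of the brand in answers that mention it."""
    positions = [
        r.brands_in_order.index(brand) + 1
        for r in records
        if brand in r.brands_in_order
    ]
    return mean(positions) if positions else None


def owned_citation_share(records: list[AnswerRecord], brand: str, owned_domain: str):
    """Among answers mentioning the brand, share of citations from its own site."""
    domains = [
        d for r in records if brand in r.brands_in_order for d in r.cited_domains
    ]
    if not domains:
        return None
    return sum(d == owned_domain for d in domains) / len(domains)


# Hypothetical log: two prompts, answers from three engines.
records = [
    AnswerRecord("p1", "chatgpt", ["Acme", "Globex"], ["acme.example", "reddit.com"]),
    AnswerRecord("p1", "perplexity", ["Globex", "Initech", "Acme"], ["g2.com", "reddit.com"]),
    AnswerRecord("p2", "chatgpt", ["Globex"], ["globex.example"]),
    AnswerRecord("p2", "gemini", ["Initech", "Globex"], ["capterra.com"]),
]

print(visibility_rate(records, "Acme"))                       # 0.5
print(average_position(records, "Acme"))                      # 2
print(owned_citation_share(records, "Acme", "acme.example"))  # 0.25
```

Sentiment, intent alignment, and AI volume need richer inputs than a mention log, so they are left out of this sketch.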

What Actually Drives AI Search Visibility

Earning visibility is less about hacking a ranking algorithm and more about becoming a citation-worthy entity. AI models are optimized to find the most efficient passage that answers a question accurately and safely.

Citation Worthiness Through Structure

AI retrieval doesn’t ingest entire pages. It extracts passages, usually 150 to 300 words. To get pulled, that passage has to be extraction-ready.

Pages with clear H2 and H3 hierarchy, bulleted lists, and comparison tables show citation rates 25% to 40% higher than narrative-heavy pages. Entity density, the concentration of company names, product identifiers, and quantified statistics, is one of the most consistent predictors of selection. Adding quantified claims to a page has been shown to lift citation rates by 40% to 115%.
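One rough way to check extraction-readiness is to split a page at its H2 and H3 headings and count the words under each. The sketch below assumes the page is available as markdown and uses the 150 to 300 word range mentioned above as thresholds. Treating a heading section as a proxy for a retrieved passage is an assumption, since real retrievers chunk content in their own ways.

```python
import re

# Matches markdown H2 and H3 headings at the start of a line.
HEADING = re.compile(r"^(#{2,3})\s+(.*)$", re.MULTILINE)


def sections(markdown_text: str) -> list[tuple[str, str]]:
    """Split markdown into (heading, body) pairs at H2/H3 headings."""
    matches = list(HEADING.finditer(markdown_text))
    out = []
    for i, m in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(markdown_text)
        out.append((m.group(2).strip(), markdown_text[m.end():end].strip()))
    return out


def length_report(markdown_text: str, low: int = 150, high: int = 300):
    """Label each section short, ok, or long by its word count."""
    report = []
    for heading, body in sections(markdown_text):
        n = len(body.split())
        status = "short" if n < low else "long" if n > high else "ok"
        report.append((heading, n, status))
    return report


# Hypothetical page with filler text standing in for real copy.
page = (
    "## What is X\n" + "word " * 200
    + "\n### Pricing\n" + "word " * 40
    + "\n## History\n" + "word " * 350
)

report = length_report(page)
for heading, words, status in report:
    print(f"{heading}: {words} words ({status})")
```

Sections flagged as long are candidates for splitting under their own subheadings, and short ones may need a quantified claim or a table to stand alone.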

Source Coverage and the Consensus Signal

Roughly 83% of B2B citations in AI answers come from third-party sources, not brand-owned websites. AI models read consensus across independent sources as a primary trust signal.

Two specific footprints matter most. Wikipedia accounts for up to 48% of ChatGPT’s top citations. Reddit is the top source for Perplexity at 46.7%. Industry review platforms like G2 and Capterra round out the trusted nodes that make a brand groundable.

Topic Depth Beats Keyword Density

AI evaluates authority through semantic topical clusters. A site that publishes one optimized article on a brand-new topic rarely wins a citation. Reference-grade content (original research, primary documentation, expert case studies) shows information gain over the existing web consensus.

Concentrated coverage of a tight topic cluster builds the corroboration AI needs. Broad coverage across unrelated topics dilutes it.

| Driver | Traditional SEO Focus | AI Visibility Focus |
|---|---|---|
| Content unit | Keyword-optimized page | Synthesizable passage |
| Structure | Reader-friendly prose | Machine-legible lists and tables |
| Authority | Inbound link quantity | Multi-source corroboration |
| Alignment | Search term match | Conversational intent fulfillment |

Why Measuring AI Search Visibility Manually Falls Apart

Manual tracking has stopped being viable. Each AI engine returns probabilistic answers that change between regenerations. A standard audit needs about 100 prompts run across 4+ engines with multiple regenerations per prompt.

A human researcher would need weeks to complete a single round. The models update daily.
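The arithmetic behind that estimate is easy to reproduce. In the sketch below, the 100 prompts and 4 engines come from the audit described above. The 5 regenerations, 3 minutes per run, and 8-hour working day are assumptions chosen for illustration, so substitute your own figures.

```python
def audit_workload(prompts, engines, regenerations, minutes_per_run, hours_per_day=8.0):
    """Return (total runs, total hours, working days) for one manual audit round."""
    runs = prompts * engines * regenerations
    hours = runs * minutes_per_run / 60
    return runs, hours, hours / hours_per_day


# 100 prompts and 4 engines per the audit above; the rest are assumed values.
runs, hours, days = audit_workload(
    prompts=100, engines=4, regenerations=5, minutes_per_run=3
)
print(f"{runs} runs, {hours:.0f} hours, {days:.1f} working days")
```

Under these assumptions a single round comes to 2,000 runs and about 100 hours, or roughly two and a half working weeks for one person.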

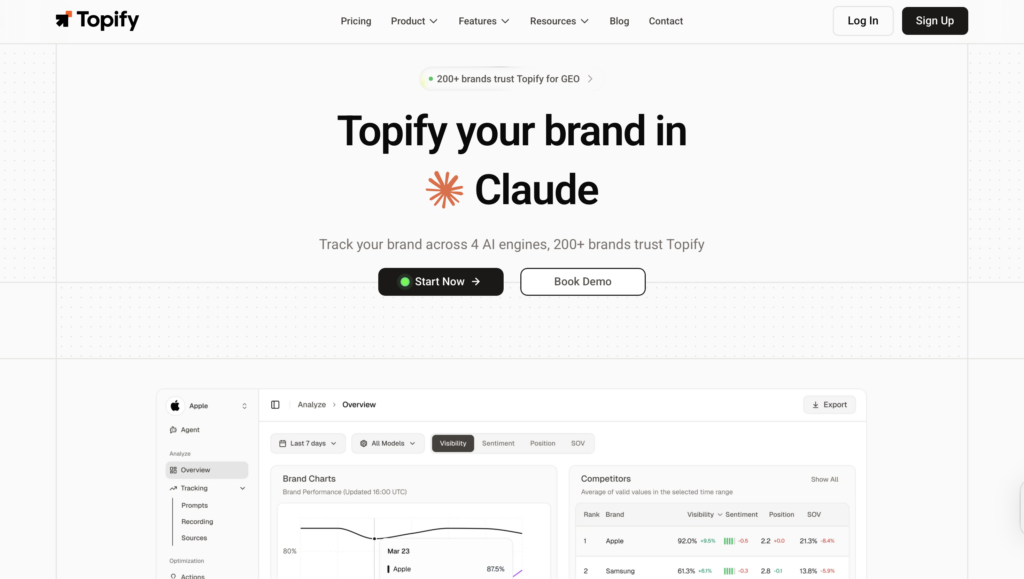

Specialized platforms like Topify exist to handle that scale. The platform is built around the seven-metric matrix above and tracks brand performance across up to nine AI models, including ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and Qwen. Its source analysis surfaces which third-party domains (specific subreddits, industry publications, or review sites) are fueling competitor recommendations. That gives PR and content teams a roadmap rather than guesswork.

The platform also identifies dark queries, prompts where users are actively researching a category but no traditional search volume exists. That’s the visibility competitors can’t see in their keyword tools. When the system detects a sentiment drop or a visibility gap, its agents propose specific fixes (schema implementation, content restructuring, citation building) and execute them in one click from the same dashboard.

In a fast-moving search environment, the gap between detecting a problem and fixing it is where most of the lost visibility lives.

A Starting Point for Your First AI Search Visibility Audit

A baseline audit answers one question: where does your brand stand in the synthetic web today? Four steps cover most of it.

Step 1: Build the money prompt set. Pick 20 to 50 conversational questions that high-intent buyers actually ask. These aren’t keywords like “CRM software.” They’re sentences like “Which CRM is best for a remote sales team of 50 that needs deep Slack integration?” Balance the set across awareness, solution-aware, comparison, and branded queries.

Step 2: Measure the baseline. Run the prompts across ChatGPT, Gemini, Perplexity, and DeepSeek with multiple regenerations to account for model variance. Capture visibility, sentiment, and position scores. Most brands discover their first visibility gap here, often for queries where they hold a #1 organic ranking.

Step 3: Diagnose the gap. Is it a sentiment problem, where the AI mentions you unfavorably? A source coverage problem, where the AI cites only competitor reviews on G2? A structural problem, where the AI retrieves your page but can’t extract a clean passage? The cause changes the fix.

Step 4: Optimize surgically. Skip the urge to overhaul the whole site. Restructure high-priority pages into an answer-first format. Add schema markup for the entities in your prompts. Run targeted PR to land mentions on the third-party sites the AI is currently citing for competitors.
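For the schema markup part of Step 4, one common starting point is a schema.org `Organization` object embedded as JSON-LD. The sketch below builds one in Python. The company name, URLs, and description are hypothetical placeholders, and which schema types fit your pages (for example `Product` or `FAQPage`) depends on the content.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> dict:
    """Build a schema.org Organization object for embedding as JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links the entity to its profiles on third-party sites.
        "sameAs": same_as,
    }


# Hypothetical brand details.
markup = organization_jsonld(
    name="Acme CRM",
    url="https://acme.example",
    description="CRM for remote sales teams with Slack integration.",
    same_as=[
        "https://www.linkedin.com/company/acme-example",
        "https://github.com/acme-example",
    ],
)

script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The `sameAs` property is where the third-party footprint from your PR work can be tied back to the same entity.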

You can’t optimize what you don’t measure.
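The measurement loop from Step 2 can be sketched in a few lines. This is a minimal outline under stated assumptions: each engine is represented by a hypothetical callable that takes a prompt and returns answer text, the stub below stands in for a real API client, and brand detection is a naive case-insensitive substring match.

```python
from collections import defaultdict
from itertools import cycle
from typing import Callable


def collect_baseline(
    prompts: list[str],
    engines: dict[str, Callable[[str], str]],
    brand: str,
    regenerations: int = 3,
) -> dict[str, float]:
    """Per-engine mention rate for `brand` across prompts and regenerations."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for name, ask in engines.items():
        for prompt in prompts:
            for _ in range(regenerations):
                answer = ask(prompt)
                totals[name] += 1
                if brand.lower() in answer.lower():
                    hits[name] += 1
    return {name: hits[name] / totals[name] for name in totals}


def make_stub(answers: list[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for an engine client: cycles through canned answers."""
    canned = cycle(answers)
    return lambda prompt: next(canned)


engines = {
    "engine_a": make_stub(
        ["Try Acme or Globex.", "Globex is a solid pick.", "Acme, then Initech."]
    ),
    "engine_b": make_stub(["Globex and Initech lead here."]),
}
prompts = [
    "Which CRM is best for a remote sales team of 50 that needs deep Slack integration?",
    "What CRM should a 10-person startup pick?",
]

baseline = collect_baseline(prompts, engines, "Acme", regenerations=3)
for engine, rate in baseline.items():
    print(f"{engine}: mentioned in {rate:.0%} of runs")
```

Repeating each prompt several times matters because a brand that appears in one regeneration can vanish in the next, so a single run per prompt overstates or understates the baseline.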

Conclusion

In 2026, AI search visibility and Google rankings run on parallel architectures that reward different inputs. Traditional SEO still drives transactional traffic on legacy search. It’s no longer enough on its own to manage how a brand gets recommended in an agentic world.

The strategic move is to keep foundational SEO running while building a dedicated AI visibility tracking and optimization layer. The brands that establish semantic authority before the rest of the market notices the dashboard mismatch will compound an advantage that’s hard to displace.

In a zero-click, synthesized world, visibility is the new currency of trust.

FAQ

Q: Is AI search visibility replacing SEO?

A: No. They’re complementary systems. SEO governs your visibility on traditional search engines, while AI search visibility (the metric that Generative Engine Optimization, or GEO, works to improve) governs how you get synthesized into AI answers. Covering the full 2026 buyer journey takes both.

Q: If I rank #1 on Google, will AI also recommend me?

A: Not reliably. Only about 38% of AI citations overlap with Google’s top 10 results. If your page isn’t synthesizable or lacks third-party corroboration, the AI will often skip it for a better-structured source from page two or three.

Q: How often should I check AI search visibility?

A: Priority queries should be tracked weekly, since AI models change their consensus frequently and run on real-time retrieval. A full audit covering all priority prompts and competitive positioning makes sense once a month.

Q: What’s the difference between AI search visibility and GEO?

A: AI search visibility is the metric, what gets measured. Generative Engine Optimization is the strategy and execution layer that improves those metrics. One is the dashboard, the other is the playbook.