Your domain authority is 72. Your keyword rankings are solid. Your content calendar runs like clockwork. But when a prospect asks ChatGPT, “What’s the best platform for [your category]?”, your brand doesn’t show up. Not in the first recommendation. Not in the third. Not at all.

That gap between Google performance and AI visibility is widening every quarter. Google still processes over 15 billion queries daily, and traditional search volume grew 26% between 2023 and 2025. But AI-native platforms like ChatGPT and Perplexity are growing at 42.8% year-over-year, and 68% of B2B buyers now start their research inside an AI tool before they ever open a search engine. SEO tells you where your links rank. AI visibility tracking tells you whether AI recommends your brand at all. Those are two different questions with two different answers.

SEO Still Works. But It Doesn’t Answer AI Prompts.

SEO and GEO aren’t competing for the same job. They’re solving different problems in different systems.

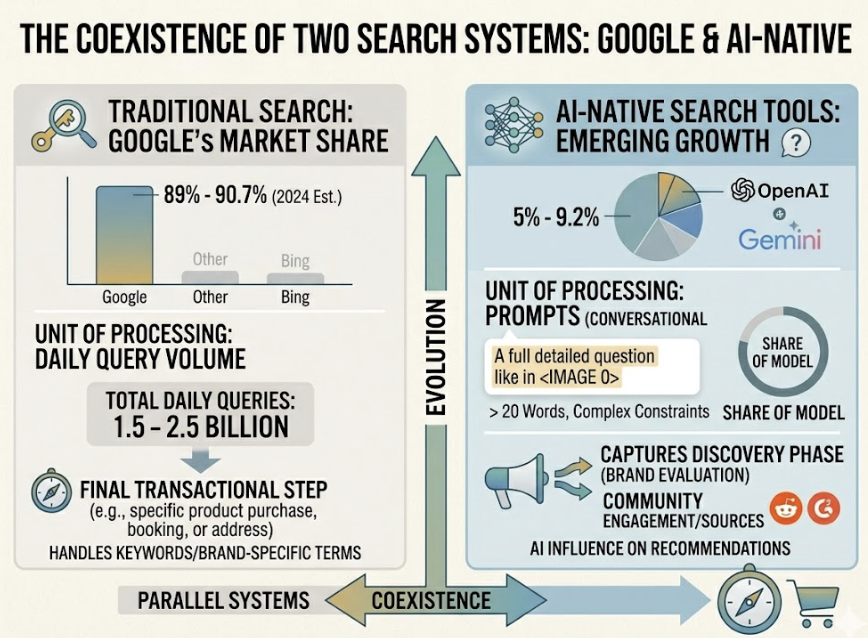

SEO optimizes for a directory. Google crawls your pages, indexes them, and ranks URLs in a list based on backlinks, keyword relevance, and technical signals. The user sees ten blue links, picks one, and clicks through. The unit of value is the click.

GEO (Generative Engine Optimization) optimizes for a synthesizer. When someone asks ChatGPT or Perplexity a question, the AI doesn’t serve a list. It pulls information from multiple sources, extracts relevant passages, and composes a single answer. Your brand either shapes that answer or it doesn’t exist in the conversation.

The two systems run in parallel. Google’s market share still sits between 89% and 90.7%, but AI-native search tools now account for an estimated 5% to 9.2% of global query volume, processing 1.5 to 2.5 billion queries daily. More importantly, AI platforms are capturing the discovery phase of the buyer’s journey, the moment someone decides which brands to evaluate, while traditional search increasingly handles the final transactional step.

That’s the split. Ignoring GEO doesn’t mean losing traffic today. It means losing the conversation before your prospects even know you exist.

How AI Decides What to Recommend vs. How Google Ranks Pages

Google’s ranking logic is relatively transparent: crawl the page, evaluate backlinks, check keyword match, weigh domain authority, factor in Core Web Vitals. You can reverse-engineer most of it with a standard SEO toolset.

AI engines work on a fundamentally different architecture called Retrieval-Augmented Generation, or RAG. When a user submits a prompt, the AI decomposes it into sub-queries, retrieves relevant text passages (not full pages) from its index or live web search, ranks those passages by semantic relevance, and then synthesizes a response. The unit of retrieval isn’t a URL. It’s a chunk, typically 256 to 512 tokens of self-contained, fact-dense text.
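To make the retrieve-and-rank step concrete, here is a minimal sketch of passage-level retrieval. It rests on loud assumptions: production engines use dense embeddings and their own tokenizers, while this toy version uses bag-of-words cosine similarity and counts words rather than tokens. The function names (`chunk_words`, `retrieve`) and the page dictionary are hypothetical, not any engine's actual API.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a stand-in for a real model tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk_words(text: str, size: int = 60) -> list[str]:
    """Split text into consecutive chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, pages: dict[str, str], top_k: int = 2,
             size: int = 60) -> list[tuple[float, str, str]]:
    """Score every chunk of every page against the query.

    Returns the top_k (score, url, chunk) rows. Note that the unit being
    ranked is the chunk, not the page it came from.
    """
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(chunk))), url, chunk)
        for url, text in pages.items()
        for chunk in chunk_words(text, size)
    ]
    scored.sort(key=lambda row: row[0], reverse=True)
    return scored[:top_k]
```

Calling `retrieve("CRM pricing", pages)` on a dictionary of URL-to-text pairs returns the best-matching passages regardless of which page they sit on, which is why a single dense paragraph can outrank an entire long-form article.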

Here’s where the sourcing logic diverges. Research analyzing millions of citations across major platforms reveals distinct editorial identities. About 92% of Google AI Overview citations come from domains already ranking in the top 10 on traditional search. ChatGPT flips that pattern: roughly 90% of its citations come from outside the Google top 20, drawing heavily from Reddit, G2, Wikipedia, and news publishers. Perplexity favors real-time retrieval with a strong recency bias, prioritizing content updated within the last 30 days.

Across nearly every model, 82% to 85% of AI citations come from non-brand sources: third-party reviews, community discussions, and industry publications. The AI doesn’t take your word for it. It trusts what others say about you.

For teams managing AI visibility tracking across platforms, Topify provides Source Analysis that maps exactly which domains each AI engine cites when recommending brands in your category, so you can see where the gaps are and which third-party sources you need to win.

What SEO Metrics Miss About AI Visibility Tracking

The metrics that built your SEO dashboard (domain authority, keyword position, organic sessions) weren’t designed to measure what happens inside an AI-generated answer. And the gap between those metrics and reality is growing.

Consider what’s happened to organic click-through rates. When Google’s AI Overviews appear, the organic CTR for Position 1 drops from 1.76% to 0.61%, a 65.3% decline. Zero-click searches in the US now account for 58.5% to 60% of all queries. Users are getting their answers without visiting your site.

But here’s the counterintuitive part. While click volume is compressing, the quality of traffic from AI referrals is dramatically higher. Visitors arriving via an AI recommendation convert at 4.4x to 5.1x the rate of traditional organic search visitors. The AI has already done the comparison and evaluation for the user. By the time they click through, they’re close to a decision.

That means the metrics that matter for AI visibility tracking are categorically different from SEO KPIs:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility Score | % of target prompts where your brand appears | Your “discovery” rate in AI conversations |
| Sentiment Score | How the AI frames your brand (positive, neutral, negative) | Being mentioned as “outdated” is worse than not being mentioned |
| Position Rank | Where you appear in the recommendation list | First-named brands capture disproportionate trust |
| Citation Share | How often the AI links to your domain vs. competitors | The new “backlink” equivalent |
| Share of Voice | Your mention % vs. all tracked competitors | Category dominance in the AI conversation |

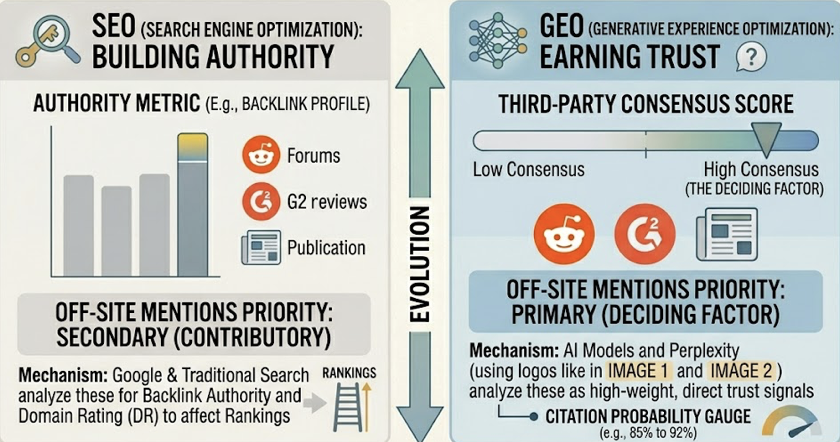

A brand can rank #1 on Google for “best enterprise CRM” and be completely absent from ChatGPT’s answer, because the model relies on a different consensus layer (Reddit threads, G2 reviews, and trade publications) where the brand hasn’t built presence.

The Metrics That Actually Matter for AI Visibility Tracking

Traditional SEO measurement is binary: you’re ranked or you’re not. AI visibility tracking is probabilistic and multi-dimensional. The AI might mention your brand in 40% of relevant prompts, describe you positively in 70% of those mentions, and cite your domain in only 15%. Each dimension requires a different optimization response.
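Because each dimension is a rate over sampled answers, the arithmetic is easy to sketch. The example below is an illustration only: the `PromptResult` record shape, the field names, and the exact metric definitions are assumptions made for this sketch, not a standard or any vendor's formula. In particular, the citation rate here is measured against all sampled answers, which is one of several reasonable choices.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptResult:
    """One sampled AI answer for one tracked prompt (hypothetical shape)."""
    prompt: str
    brands_in_order: list[str]  # brands in the order the answer names them
    cited_domains: list[str] = field(default_factory=list)
    sentiment: dict[str, str] = field(default_factory=dict)  # brand -> label


def visibility_metrics(results: list[PromptResult], brand: str,
                       domain: str) -> dict[str, Optional[float]]:
    """Aggregate per-answer records into the rates described above."""
    total = len(results)
    mentioned = [r for r in results if brand in r.brands_in_order]
    positive = [r for r in mentioned if r.sentiment.get(brand) == "positive"]
    cited = [r for r in results if domain in r.cited_domains]
    all_mentions = sum(len(r.brands_in_order) for r in results)
    # 1-based position of the brand in each answer that names it
    positions = [r.brands_in_order.index(brand) + 1 for r in mentioned]
    return {
        "visibility": len(mentioned) / total if total else None,
        "positive_share": len(positive) / len(mentioned) if mentioned else None,
        "citation_rate": len(cited) / total if total else None,
        "share_of_voice": len(mentioned) / all_mentions if all_mentions else None,
        "avg_position": sum(positions) / len(positions) if positions else None,
    }
```

Since AI answers vary from run to run, sampling each prompt several times before aggregating gives steadier rates than a single pass.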

Research from Princeton University, Georgia Tech, and the Allen Institute for AI, published at KDD 2024, formalized what drives AI citation behavior. Content that includes verifiable data and statistics sees up to a 40% boost in AI visibility. Expert quotations with named sources add 30%. Authoritative tone contributes 25%. And keyword stuffing, the backbone of early SEO, actually hurts AI visibility by roughly 10%.

The most striking finding: sites ranking outside Google’s top 10 saw the greatest relative gains, up to 115%, by implementing GEO-specific tactics. That means AI visibility tracking isn’t just a new metric layer. It’s a new competitive landscape where traditional domain authority barriers don’t apply the same way.

For teams building an AI visibility tracking practice, Topify’s platform monitors all seven dimensions (visibility, sentiment, position, volume, mentions, intent, and CVR, or Conversion Visibility Rate) across ChatGPT, Gemini, Perplexity, and AI Overviews from a single dashboard. In practice, this means you can spot a drop in ChatGPT mentions, trace it to a specific source that stopped citing your brand, and deploy a fix without switching between tools.

3 GEO Tactics That Don’t Work in SEO, and Vice Versa

The overlap between SEO and GEO execution is smaller than most teams assume. Here’s where the two diverge in practice.

GEO works, SEO doesn’t:

Optimizing for third-party consensus. AI models heavily weight what others say about your brand. Earning mentions in Reddit communities, G2 reviews, and trade publications directly increases your citation probability. In SEO, these off-site mentions contribute to backlink authority, but the mechanism and the priority are different. In GEO, third-party consensus is often the deciding factor.

Structuring content as extractable chunks. RAG systems retrieve short passages, not full pages, so aim for self-contained blocks of roughly 40 to 60 words. Each section of your content needs to function as a standalone answer with its own data point and attribution. Traditional long-form SEO content with gradual build-ups and vague introductions gets skipped entirely by retrieval systems.

Managing brand sentiment across AI platforms. It’s not enough to appear. If ChatGPT describes your product as “budget-friendly” when your positioning is premium, that visibility works against you. Sentiment tracking is a core GEO discipline with no direct SEO equivalent.
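The extractable-chunk tactic above lends itself to a quick self-audit. The sketch below applies three rough heuristics to each paragraph: length, presence of at least one number, and whether the paragraph opens with a pronoun that leans on earlier context. All three checks, the `audit_passages` name, and the 60-word ceiling (taken from the upper end of the 40 to 60 word range mentioned above) are assumptions for illustration, not a rule any AI engine publishes.

```python
import re

# Openers that usually mean the paragraph depends on the one before it.
DANGLING_OPENERS = ("this ", "that ", "it ", "these ", "those ", "they ")


def audit_passages(text: str, max_words: int = 60) -> list[dict]:
    """Flag paragraphs that are unlikely to work as standalone answers."""
    reports = []
    for raw in re.split(r"\n\s*\n", text.strip()):
        para = " ".join(raw.split())  # collapse internal whitespace
        if not para:
            continue
        issues = []
        if len(para.split()) > max_words:
            issues.append("too_long")
        if not re.search(r"\d", para):
            issues.append("no_data_point")
        if para.lower().startswith(DANGLING_OPENERS):
            issues.append("depends_on_context")
        reports.append({"passage": para, "issues": issues})
    return reports
```

A paragraph with an empty `issues` list is not guaranteed to be cited. The check only catches the obvious cases where a passage cannot stand on its own.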

SEO works, GEO doesn’t:

Backlink building for domain authority. While traditional DA still matters for Google AI Overviews (which favor top-ranking domains), ChatGPT and Perplexity don’t weigh backlink profiles the same way. A site with fewer backlinks but denser, more structured content often wins the AI citation.

SERP feature optimization. Featured snippets, People Also Ask boxes, and knowledge panels are Google-specific real estate. They don’t influence whether ChatGPT recommends your brand.

Keyword density targeting. GEO penalizes this. AI models prioritize semantic density, the richness of meaning per sentence, over keyword frequency. If your content team is still chasing keyword density, they’re optimizing against themselves in the AI layer.

How to Run SEO and GEO Without Doubling Your Workload

The good news: SEO and GEO share a technical foundation. A crawlable, well-structured site with clean schema markup serves both systems. The divergence happens at the content and distribution layer.

Think of it as three layers working together. Layer 1 is your SEO foundation: technical health, indexability, and domain authority. Without this, your content can’t enter the retrieval candidate set for most AI engines. Layer 2 is Answer Engine Optimization (AEO): structuring pages with direct answers, FAQ schema, and question-format headings so that both Google’s AI Overviews and AI-native platforms can extract clean passages. Layer 3 is GEO: the citation layer, where you optimize for inclusion in synthesized AI responses by building factual density, entity clarity, and multi-source corroboration.
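For the FAQ schema piece of Layer 2, the markup can be generated rather than hand-written. The sketch below builds a schema.org `FAQPage` object as JSON-LD from question and answer pairs. The `faq_jsonld` helper name is hypothetical; the `@type` values and property names follow the public schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Render (question, answer) pairs as a schema.org FAQPage JSON-LD string."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps curly quotes and accents readable in the output
    return json.dumps(doc, indent=2, ensure_ascii=False)
```

The returned string goes inside a `<script type="application/ld+json">` element on the page that displays the same questions and answers in visible text.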

| Strategic Priority | SEO Role | GEO Role | Where They Overlap |
|---|---|---|---|
| Content Strategy | Keywords and topics | Prompts and questions | Use H2s as query-match headers |
| Technical Stack | HTML and meta tags | JSON-LD and schema | Schema labels entities for AI extraction |
| Authority Building | Backlinks | Citations and mentions | PR drives mentions that fuel both |
| Measurement | GSC and rankings | Visibility and sentiment | Track conversion across the full funnel |

The practical starting point: identify 50 to 200 high-value prompts your customers are asking AI. Test them across ChatGPT, Perplexity, and Gemini to map where your brand appears and where it doesn’t. Topify’s AI visibility checker automates this across platforms, surfacing the prompts where competitors get recommended but your brand is absent.
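If you want to try the gap-mapping step by hand first, the logic looks roughly like the sketch below. It is not how Topify or any other tool works internally. The `ask` parameter is a placeholder for whatever client you use to query an AI platform, `find_gap_prompts` is a hypothetical name, and plain substring matching is a deliberately crude way to detect brand mentions.

```python
from typing import Callable


def find_gap_prompts(prompts: list[str], ask: Callable[[str], str],
                     brand: str, competitors: list[str]) -> list[dict]:
    """Return prompts whose answer names a competitor but not the brand.

    `ask` is any function that takes a prompt and returns the answer text.
    """
    gaps = []
    for prompt in prompts:
        answer = ask(prompt).lower()
        if brand.lower() in answer:
            continue  # brand is present, so this prompt is not a gap
        rivals = [c for c in competitors if c.lower() in answer]
        if rivals:
            gaps.append({"prompt": prompt, "competitors_named": rivals})
    return gaps


def fake_ask(prompt: str) -> str:
    """Canned answers standing in for a real AI platform call."""
    canned = {
        "best crm for startups": "Most teams shortlist Beta and Gamma.",
        "best crm for enterprise": "Acme and Beta are common picks.",
    }
    return canned.get(prompt, "It depends on your needs.")
```

Running the same prompt list through a separate `ask` function per platform gives a side-by-side view of where the gaps differ.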

From there, the workflow is straightforward. Fix content structure for extractability, build third-party authority where the AI looks for consensus, and monitor changes weekly. AI models update faster than Google’s index, and visibility can shift in days, not months. For a quick baseline, Topify’s free GEO Score Checker evaluates your site’s AI readiness across four dimensions, no signup required.

Conclusion

GEO doesn’t replace SEO. It adds a second front. Google still drives high-volume traffic, and traditional rankings still matter for the final transactional click. But the discovery phase, where prospects decide which brands to evaluate, is migrating to AI platforms that operate on entirely different rules.

AI visibility tracking is the bridge between those two worlds. It tells you what SEO dashboards can’t: whether AI recommends your brand, how it frames you, and which sources are shaping that narrative. The brands building this measurement layer now are the ones that will own the recommendation when their competitors are still wondering why clicks aren’t converting. Start by mapping your AI visibility baseline with Topify, and build from there.

FAQ

Q: What’s the difference between GEO and SEO?

A: SEO optimizes for ranking URLs in a list of links on search engine results pages. GEO optimizes for being cited, mentioned, and recommended inside AI-generated answers. The authority signals differ: SEO rewards backlinks and domain authority, while GEO rewards factual density, structured content, and third-party corroboration across platforms like Reddit and G2.

Q: Do I need AI visibility tracking if my SEO is already strong?

A: Yes. A high domain authority and strong keyword rankings don’t guarantee AI visibility. Research shows that 90% of ChatGPT’s citations come from outside Google’s top 20 results. Your brand can rank #1 on Google and still be invisible in ChatGPT’s recommendations. AI visibility tracking reveals that gap.

Q: Which AI platforms should I track my brand on?

A: At minimum, track ChatGPT, Google AI Overviews (Gemini), and Perplexity. Each has distinct citation biases: Google favors top-ranking domains, ChatGPT favors third-party consensus, and Perplexity favors recent, niche-expert content. Tracking only one platform gives you an incomplete picture.

Q: How often should I monitor AI visibility?

A: Weekly for high-priority prompts, monthly for citation trends and sentiment shifts. AI models update faster than traditional search indexes, and RAG-based platforms like Perplexity pull live data. Visibility can shift within days of a content change or competitor move.