Inside the Ranking Logic of ChatGPT, Perplexity, and Gemini

Your team spent six months building content, earning backlinks, and climbing Google rankings. Then a potential customer asked ChatGPT, “What’s the best tool for [your category]?” and got a list of five recommendations. Your brand wasn’t on it.

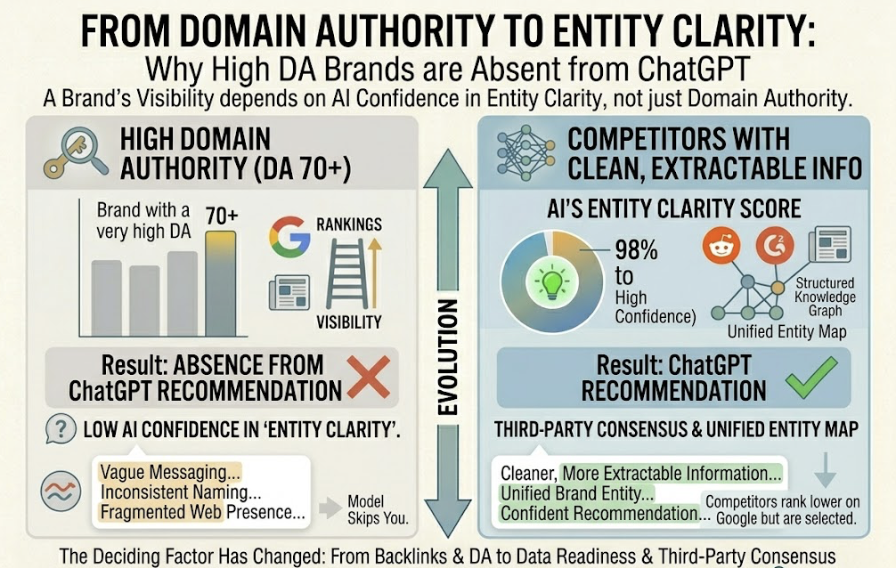

The disconnect isn’t a fluke. Research shows the correlation between a high Google ranking and being cited in a ChatGPT response is just 0.034. That’s close to no relationship at all. Your SEO dashboard says everything is fine. The AI engines say you don’t exist.

The brands that do get cited aren’t always the ones with the strongest domain authority. They’re the ones whose data is structured, validated across third-party sources, and formatted in ways that AI retrieval systems can actually extract. Understanding that logic is the first step toward fixing it.

Your Google Rankings Don’t Decide What AI Recommends

Here’s the assumption most marketing teams still operate under: if we rank on the first page of Google, AI search engines will recommend us too.

That assumption is wrong.

Traditional SEO is built on backlinks, domain authority, and keyword density. Generative engines like ChatGPT, Perplexity, and Gemini use a completely different retrieval logic. They don’t rank websites. They synthesize factual claims from a diverse ecosystem of sources, then assemble a response based on which entities have the highest “semantic density” and cross-platform validation.

A brand with a DA of 70+ can be entirely absent from a ChatGPT recommendation for its core product category. Not because the content is bad, but because the AI’s confidence in that brand’s “entity clarity” is low. If your messaging is vague, your naming inconsistent, or your presence fragmented across the web, the model skips you in favor of competitors who may rank lower on Google but present cleaner, more extractable information.

| Dimension | Traditional Search (Google) | Generative Search (ChatGPT/Perplexity) |
|---|---|---|
| Primary Goal | Ranking in top 10 blue links | Inclusion in synthesized answer |
| Authority Proxy | Backlinks and DA | Third-party consensus and earned media |
| User Interaction | Click-through to website | Zero-click information consumption |
| Optimization Focus | Keywords and technical SEO | Entity binding and answerability |

The shift is structural, not incremental. It demands a different optimization framework entirely: Generative Engine Optimization, or GEO.

What ChatGPT, Perplexity, and Gemini Actually Look For

The generative search market isn’t a monolith. Each platform has its own retrieval architecture, source preferences, and citation patterns. A study found that only 11% of cited domains appeared across multiple AI platforms, which means a single optimization strategy won’t cover all three.

ChatGPT uses a Retrieval-Augmented Generation (RAG) pipeline that queries the Bing index in real time. It favors depth and comprehensiveness, typically providing between 3.5 and 8 citations per response. It leans heavily on authoritative “earned media,” encyclopedic sources, and high-authority industry publications. If your brand is well-covered in third-party reviews and industry roundups, ChatGPT is more likely to surface you.

Perplexity operates as a search-first retrieval engine with clear, numbered inline citations. It’s the most sensitive to content freshness: content updated within the last 30 days has an 82% citation rate, while content older than six months sees a steep drop. Perplexity also shows a willingness to cite smaller, specialized niche blogs over high-DA generalists if the data is more precise.

Gemini and AI Overviews draw from Google’s two decades of crawl history and its Knowledge Graph. Gemini inherits Google’s E-E-A-T signals but applies a different authority weighting than the traditional ranking algorithm. While AI Overviews have high semantic overlap with standard Google results, the URL overlap is just 13.7%.

| Feature | ChatGPT | Perplexity | Gemini / AI Overviews |
|---|---|---|---|
| Search Partner | Bing | Proprietary + Bing Hybrid | Google Index / Knowledge Graph |
| Avg Citations | 7.92 | 21.87 | 8.34 |
| Source Preference | Wikipedia, High-DA Publishers | Niche Experts, Recent Data | Official Brand Sites, Knowledge Entities |
| Optimization Focus | Depth, Multi-turn Context | Freshness, Claim-Source Links | E-E-A-T, Schema, Brand Profiles |

That divergence is exactly why AI visibility tracking across all three platforms matters. A brand might perform well on ChatGPT and be completely invisible on Perplexity because its content is six months stale.

5 Signals That Get a Brand Into AI Answers

Transitioning from traditional SEO to GEO means focusing on five signals that compel an AI engine to trust, retrieve, and cite a brand.

Signal 1: External Validation Through Earned Media

AI engines show a systematic bias toward third-party sources over brand-owned content. A brand mentioned consistently on Reddit, industry news sites, and review platforms like G2 is roughly 2.8x more likely to be cited than a brand that only publishes on its own domain. For LLMs, trust is built through consensus across diverse source types, not through self-promotion.

What to do: Audit your third-party descriptions on review sites, directories, and forums. AI reflects these sources more than your website’s marketing copy.

Signal 2: Structured, Extractable Content Architecture

The physical layout of your content determines its “extractability.” AI systems prefer what researchers call “Answer Capsules,” modular 40 to 60 word paragraphs that directly answer a query at the beginning of a section. Content using consistent heading hierarchies and structured data (FAQ, Article, Product schema) sees a 44% to 67% increase in citation likelihood.

What to do: Restructure H2 headers to match common user queries and follow immediately with a direct, answer-first paragraph.
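As a rough illustration of the advice above, the sketch below checks whether an opening paragraph falls in the 40 to 60 word Answer Capsule range and builds schema.org FAQPage markup from question and answer pairs. The function names and the sample copy are hypothetical, and the word-count check is only a proxy for extractability, not a guarantee of citation.

```python
import json


def is_answer_capsule(paragraph: str, min_words: int = 40, max_words: int = 60) -> bool:
    """Return True if the paragraph's word count falls in the target range."""
    return min_words <= len(paragraph.split()) <= max_words


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical sample copy, for illustration only.
question = "What is project management software for remote teams?"
answer = "It is software that lets distributed teams plan, assign, and track work."
print(is_answer_capsule(answer))
print(faq_jsonld([(question, answer)]))
```

The JSON-LD string would typically be placed in a `<script type="application/ld+json">` tag on the page that contains the matching visible question and answer.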

Signal 3: Entity-Category Binding

AI visibility is, at its core, a classification problem. The model needs to confidently bind your brand name to its industry category. If your messaging says “we provide innovative solutions” instead of “we build project management software for remote teams,” the AI lacks the structured confidence to recommend you for a specific need.

What to do: Use consistent naming and clear service descriptors across all digital platforms to reinforce the co-occurrence of your brand with industry-specific terminology.

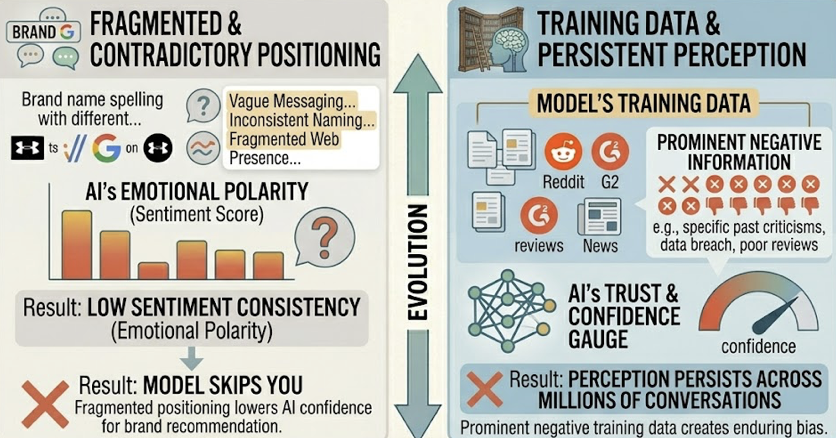

Signal 4: Sentiment Consistency Across Sources

AI models evaluate what’s called “Sentiment Consistency,” the emotional polarity of how a brand is discussed across reviews, social media, and news. If negative information was prominent in the model’s training data, that perception can persist across millions of conversations. Fragmented or contradictory positioning lowers the model’s confidence in recommending the brand.

What to do: Monitor “Semantic Drift” monthly. If AI characterizations of your brand diverge from your actual positioning, you need to fix the inputs (third-party sources) rather than trying to correct the output directly.
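One crude way to put a number on Semantic Drift is to compare the words in your positioning statement with the words an AI engine uses to describe you. The sketch below uses Jaccard distance over word sets. That measure is an assumption made for illustration, not something the platforms or any tracking tool are known to use, and an embedding-based comparison would capture paraphrases far better.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def drift_score(positioning: str, ai_description: str) -> float:
    """Jaccard distance between word sets: 0.0 = identical, 1.0 = no overlap."""
    a, b = tokens(positioning), tokens(ai_description)
    if not a and not b:
        return 0.0
    return 1.0 - len(a & b) / len(a | b)


# Hypothetical strings, for illustration only.
positioning = "We build project management software for remote teams"
ai_description = "A provider of innovative solutions"
print(round(drift_score(positioning, ai_description), 2))
```

Tracking this score monthly for the same prompts gives a simple trend line: a rising score suggests the AI's description is moving away from your intended positioning.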

Signal 5: Information Freshness and Recency

For RAG-driven search, recency is a primary retrieval trigger. Perplexity is the clearest case: content updated within the last 30 days has an 82% citation rate. Adding visible “Last Updated” dates and current statistics can lift citation rates by 47%.

What to do: Implement a quarterly update cycle for your highest-value pages. Freshness isn’t optional anymore.
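A quarterly update cycle is easier to enforce with a small script. The sketch below flags pages whose last update is older than a threshold (90 days is assumed here as "quarterly") and emits schema.org Article markup with `datePublished` and `dateModified`. The page paths, dates, and function names are hypothetical.

```python
import json
from datetime import date


def days_since_update(last_modified: date, today: date) -> int:
    """Number of whole days between the last update and today."""
    return (today - last_modified).days


def stale_pages(pages: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return the paths of pages not updated within max_age_days, sorted."""
    return sorted(
        path for path, modified in pages.items()
        if days_since_update(modified, today) > max_age_days
    )


def article_jsonld(headline: str, published: date, modified: date) -> str:
    """Build schema.org Article JSON-LD carrying both date properties."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Hypothetical inventory of high-value pages and their last update dates.
pages = {"/pricing": date(2025, 1, 1), "/comparison": date(2025, 5, 15)}
print(stale_pages(pages, today=date(2025, 6, 1)))
print(article_jsonld("Comparison guide", date(2024, 11, 1), date(2025, 5, 15)))
```

Only change `dateModified` when the content has genuinely been revised, so that the visible "Last Updated" date and the markup stay in agreement.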

| Signal | Mechanism | Measured Impact |
|---|---|---|
| Earned Media | Consensus across multiple platforms | 6.5x more weight than brand-owned content |
| Structure | Answer Capsules and FAQ Schema | 67% improvement in AI coverage |
| Entity Binding | Schema and category co-occurrence | Higher likelihood of appearing in shortlists |
| Sentiment | Polarity scores across the web | Influences how favorably the AI recommends you |
| Freshness | dateModified and datePublished schema | 82% citation rate for content under 30 days old |

Why Most Brands Can’t See Whether AI Is Citing Them

Even if you’ve optimized for all five signals, you still can’t measure the results using traditional analytics.

Google Analytics 4 is built to track browser sessions and cookie-based interactions. Generative engines bypass both. AI bots don’t execute JavaScript, which makes them invisible to standard tracking pixels. Over 70% of AI referrals arrive without referrer headers because users copy-paste URLs from AI chats rather than clicking them.
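Because these bots never run a tracking pixel, raw server access logs are one of the few places their visits do show up. The sketch below counts requests whose user-agent string contains a known AI crawler token. The token list is an assumption based on names the vendors have published (GPTBot, ChatGPT-User, OAI-SearchBot, PerplexityBot) and should be checked against each vendor's current documentation. The log lines are made-up samples.

```python
from collections import Counter
from typing import Iterable

# Assumed crawler tokens; confirm against each vendor's current documentation.
AI_BOT_TOKENS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "PerplexityBot")


def count_ai_bot_hits(log_lines: Iterable[str]) -> Counter:
    """Count log lines per AI crawler token (case-insensitive substring match)."""
    counts: Counter = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in lowered:
                counts[token] += 1
                break  # attribute each request to at most one crawler
    return counts


# Made-up access-log lines, for illustration only.
sample_log = [
    '203.0.113.5 - - [01/Jun/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "GPTBot/1.1"',
    '203.0.113.9 - - [01/Jun/2025:10:01:00 +0000] "GET /faq HTTP/1.1" 200 734 "-" "PerplexityBot/1.0"',
    '198.51.100.2 - - [01/Jun/2025:10:02:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0"',
]
print(count_ai_bot_hits(sample_log))
```

User-agent strings can be spoofed, so counts like these are a directional signal rather than an exact measure.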

The result is a “dark funnel.” Google’s AI Overviews now appear in over 13% of queries, and they’ve caused organic click-through rates to drop by 61%. Prospects encounter your brand in a ChatGPT answer, form purchase intent, and later search your brand name directly. GA4 then misattributes that visit to “Direct” or “Branded Search.”

That’s the Influence-Attribution Gap. Traditional models measure visits. In the AI era, the real metric is influence. A brand can be the top recommendation in a ChatGPT answer, receive zero clicks, and still drive significant downstream revenue.

To close that gap, you need AI visibility tracking: a shift from session-based metrics to Citation Rate (how often the brand is cited) and Share of Model (visibility relative to competitors).
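Both metrics reduce to simple arithmetic once you have a log of which brands each AI response cited. The sketch below is one possible formulation, not a standard: Citation Rate is taken as the fraction of responses that cite the brand, and Share of Model as the brand's citations divided by all citations of tracked brands. The brand names and the exact Share of Model formula are assumptions for illustration.

```python
def citation_rate(responses: list[set[str]], brand: str) -> float:
    """Fraction of responses in which the brand is cited."""
    if not responses:
        return 0.0
    return sum(brand in cited for cited in responses) / len(responses)


def share_of_model(responses: list[set[str]], brand: str, competitors: list[str]) -> float:
    """Brand citations as a share of all citations of tracked brands."""
    tracked = {brand, *competitors}
    total = sum(len(cited & tracked) for cited in responses)
    if total == 0:
        return 0.0
    return sum(brand in cited for cited in responses) / total


# Each set holds the brands cited in one AI response (hypothetical data).
responses = [
    {"Acme", "Rival"},
    {"Rival"},
    {"Rival", "Other"},
    {"Acme"},
]
print(citation_rate(responses, "Acme"))              # 0.5
print(share_of_model(responses, "Acme", ["Rival"]))  # 0.4
```

Here Acme is cited in 2 of 4 responses (Citation Rate 0.5), while Acme and Rival together account for 5 tracked citations, of which Acme holds 2 (Share of Model 0.4).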

How AI Visibility Tracking Closes the Gap

AI visibility tracking is the continuous monitoring of how a brand appears, ranks, and is described across generative platforms. It provides a standardized view based on seven core metrics, including visibility frequency, recommendation position, sentiment score, query volume and intent, and citation source mapping.

Topify tracks these seven metrics across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms. That coverage matters because a brand’s visibility profile differs significantly across platforms. Knowing you rank well in ChatGPT tells you nothing about whether Gemini is recommending a competitor for the same query.

Here’s a practical scenario. A marketing team uses Topify to track 200 high-intent prompts. They discover a significant “Citation Gap”: a competitor is cited in 70% of responses while they appear in only 15%. Using Source Analysis, the team reverse-engineers those citations and finds that the competitor’s visibility is being driven by a series of Reddit threads and niche industry reviews. That tells the team exactly which third-party domains to target for their earned media strategy. Instead of guessing, they’re closing the gap with data.

The Competitor Monitoring feature handles benchmarking systematically, automatically detecting which competitors appear alongside your brand and tracking how that shifts over time. And Sentiment Analysis scores how the AI characterizes your brand on a 0-100 scale, so you can see not just whether you’re mentioned, but whether the AI is positioning you as a recommendation or a cautionary example.

Topify’s Basic plan starts at $99/mo, covering 100 prompts and 9,000 AI answer analyses, which makes professional-grade AI visibility tracking accessible for mid-sized teams.

Three Steps to Start Tracking Your Brand’s AI Visibility

Step 1: Discover Your High-Value Prompts

Unlike traditional SEO keywords (averaging 4 words), AI queries are conversational prompts averaging 23 words, filled with specific qualifiers like budget, use-case, and company size. The first step is identifying 50 to 200 high-intent prompts your target audience actually asks AI platforms. Topify’s High-Value Prompt Discovery surfaces the exact conversational clusters that have volume and currently trigger recommendations in your category.

Step 2: Establish Your Baseline

Before automating, run “Manual Spot-Checks.” Ask 10 to 20 variations of a buyer-intent question across ChatGPT, Perplexity, and Gemini. Record whether your brand appears, its position, and whether the description is accurate. Look for Semantic Drift: if the AI’s characterization of your brand diverges from your positioning, that’s a distortion you need to fix through updated content inputs.
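A spreadsheet is enough for manual spot-checks, but a few lines of code keep the records consistent. The sketch below takes the text of an AI answer you have pasted in and reports whether your brand appears and where it ranks by order of first mention among the brands you track. The brand names are hypothetical, and plain substring matching is an assumption that will miss abbreviations or misspellings.

```python
from typing import Optional


def brand_position(answer: str, brand: str, competitors: list[str]) -> Optional[int]:
    """1-based rank of the brand by first mention among tracked brands.

    Returns None when the brand does not appear in the answer.
    """
    text = answer.lower()
    first_seen = {}
    for name in [brand, *competitors]:
        index = text.find(name.lower())
        if index != -1:
            first_seen[name] = index
    if brand not in first_seen:
        return None
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(brand) + 1


def appearance_rate(positions: list[Optional[int]]) -> float:
    """Share of spot-checks in which the brand appeared at all."""
    if not positions:
        return 0.0
    return sum(p is not None for p in positions) / len(positions)


# Hypothetical pasted answers from two spot-checks.
answers = [
    "Top picks: Rival, then Acme, and finally Other.",
    "Most teams choose Rival or Other for this use case.",
]
positions = [brand_position(a, "Acme", ["Rival", "Other"]) for a in answers]
print(positions)                   # [2, None]
print(appearance_rate(positions))  # 0.5
```

Accuracy of the description still needs a human read, so record that judgment alongside the position for each prompt and platform.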

Step 3: Move to Continuous Automated Monitoring

AI models update frequently and their retrieval caches are dynamic. Visibility isn’t a static rank. Transition from manual checks to Topify’s automated dashboard, which tracks the 7 core metrics in real time. This lets teams respond immediately if a competitor gains a citation advantage or if an AI begins to hallucinate incorrect pricing or features.

Conclusion

AI engines aren’t random recommendation machines. They’re retrieval systems that favor entities with high structural clarity, cross-platform validation, and content freshness. The brands that get cited are the ones that have optimized for these signals, not just for Google’s blue links.

The first step to optimization is sight. You can’t optimize what you can’t measure. AI visibility tracking is the only way to expose the Citation Gaps and Entity Inconsistencies that lead to brand invisibility. Start by understanding which prompts matter, where you stand today, and what your competitors are doing differently.

The gap between “ranking on Google” and “being recommended by AI” is only growing. The brands that close it first will own the consideration set where modern buyers actually make decisions.

FAQ

What is AI visibility tracking?

It’s the systematic process of monitoring how often, where, and with what sentiment a brand is mentioned and cited across generative engines like ChatGPT, Perplexity, and Gemini. It shifts measurement from clicks and sessions to Citation Rate and Share of Model in AI answers.

How often do AI search engines update their brand recommendations?

Recommendations can shift in real time as the retrieval layer indexes new web content. Perplexity is especially sensitive to content published within the last 30 days. Other platforms update less frequently but still reflect changes in third-party source coverage.

Can I improve my chances of being cited by ChatGPT?

Yes. Use Answer Capsules (40 to 60 word modular answers), ensure your site uses server-side rendering (AI bots struggle with JavaScript), and secure mentions on high-authority third-party platforms like Reddit, G2, and industry publications.

What’s the difference between SEO and GEO?

SEO optimizes for a ranked list of links to drive website traffic. GEO optimizes for inclusion and citation within a synthesized, conversational answer to drive brand influence and purchase intent.