Your domain authority is 70. Your keyword rankings are solid. Your SEO dashboard looks healthy by every traditional metric. Then someone asks Perplexity, “What’s the best tool for [your category]?” and your brand doesn’t appear anywhere in the answer.

That gap between Google rankings and AI search recommendations is where revenue quietly disappears. ChatGPT referral traffic converts at 15.9%, nine times the baseline for traditional Google organic. When your brand is absent from those answers, you’re not losing impressions. You’re losing pre-qualified buyers.

The good news: you can map exactly where you stand across AI search engines in 30 minutes. Here’s how.

What AI Visibility Tracking Actually Measures (and What SEO Tools Miss)

AI visibility tracking is the practice of measuring how often, how prominently, and how accurately a brand appears in the outputs of generative models like ChatGPT, Perplexity, Gemini, and Google AI Overviews.

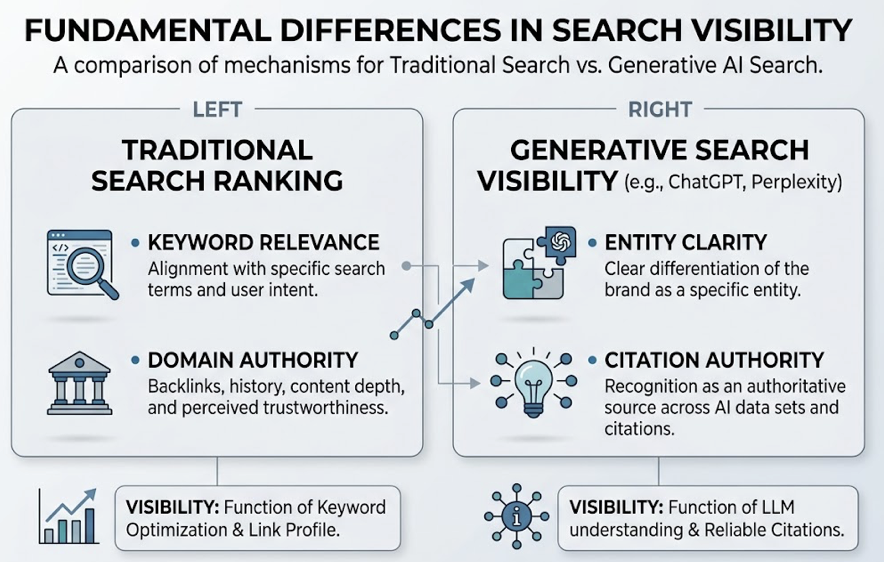

That might sound similar to traditional rank tracking, but the mechanics are fundamentally different. In traditional search, visibility is a function of domain authority and keyword relevance. In generative search, visibility depends on what researchers call “entity clarity” and “citation authority.” A brand can hold the #1 Google position for a high-volume keyword and still be completely absent from a ChatGPT response for the same category query.

The disconnect happens because generative engines use Retrieval-Augmented Generation (RAG) to prioritize information that shows cross-platform consensus and semantic density, not traditional ranking signals.

Here’s what a professional AI visibility tracking framework actually measures:

| Metric | What It Tells You |
|---|---|
| Brand Presence | Percentage of category-relevant prompts where your brand is mentioned |
| Citation Share | How often AI models link to your owned or earned media |
| Sentiment Polarity | The evaluative tone the AI uses when describing your brand |
| Position Prominence | Where your brand appears in the answer (first recommended vs. buried) |
| Narrative Accuracy | Whether the AI’s description matches your actual features and pricing |
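To make the first rows of that table concrete, here is a minimal Python sketch of how Brand Presence, Position Prominence, and Citation Share could be computed from hand-recorded answers. The `Observation` record, the function names, and the sample brands and domains are all hypothetical; this is one plausible way to score the metrics, not how any particular tool does it.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One recorded AI answer (hypothetical structure for illustration)."""
    prompt: str
    platform: str
    brands_in_order: list[str]  # brands as they appear in the answer, first to last
    cited_domains: list[str]    # domains the answer linked to


def brand_presence(observations, brand):
    """Share of answers (0.0 to 1.0) that mention the brand at all."""
    if not observations:
        return 0.0
    hits = sum(1 for o in observations if brand in o.brands_in_order)
    return hits / len(observations)


def average_position(observations, brand):
    """Mean 1-based position of the brand in answers where it appears, else None."""
    positions = [o.brands_in_order.index(brand) + 1
                 for o in observations if brand in o.brands_in_order]
    return sum(positions) / len(positions) if positions else None


def citation_share(observations, domain):
    """Share of all recorded citations that point at the given domain."""
    total = sum(len(o.cited_domains) for o in observations)
    if total == 0:
        return 0.0
    return sum(o.cited_domains.count(domain) for o in observations) / total


# Made-up sample data: one prompt checked on four platforms.
PROMPT = "best crm for small business"
observations = [
    Observation(PROMPT, "chatgpt", ["AcmeCRM", "ExampleCo"], ["example.com", "reviews.example.org"]),
    Observation(PROMPT, "perplexity", ["OtherCo"], ["reviews.example.org"]),
    Observation(PROMPT, "gemini", ["OtherCo", "ExampleCo"], []),
    Observation(PROMPT, "ai_overviews", ["ExampleCo"], ["example.com"]),
]

print(brand_presence(observations, "ExampleCo"))    # 0.75
print(citation_share(observations, "example.com"))  # 0.5
```

Sentiment Polarity and Narrative Accuracy are left out on purpose: both need a judgment about what the answer says, which a simple count cannot provide.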

Tools like Google Analytics, Ahrefs, and Semrush were built to track clicks and link-based authority. They’re blind to the internal narrative logic of an LLM. While organic rankings influence what a generative engine might “see,” they don’t dictate what the engine will “say.”

That’s the gap Topify was built to close, providing cross-platform tracking of brand mentions, citation patterns, sentiment, and positioning across every major AI engine.

Why Most Brands Fail Their First AI Visibility Audit

Before walking through the audit framework, it’s worth understanding why most initial attempts produce misleading results. Three failure patterns show up consistently.

Treating LLMs like search engines. Generative models are probabilistic, not deterministic. The same prompt can produce different answers for users in London versus San Francisco, and even the same user can get different results across sessions. Searching a couple of prompts on ChatGPT and treating those results as representative is like polling two people and calling it a survey.

A professional audit needs a multi-sample methodology: running each prompt several times, across separate sessions and multiple geographic nodes, to capture a statistically meaningful baseline.
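A rough way to see why a couple of checks is not enough is to put a confidence interval around the observed mention rate. The sketch below uses the Wilson score interval, a standard formula for proportions; the choice of that interval, the function name, and the sample counts are assumptions for illustration, not part of the audit framework itself.

```python
import math


def wilson_interval(mentions, samples, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate.

    mentions: number of sampled answers that named the brand
    samples:  total number of sampled answers for the prompt
    """
    if samples <= 0:
        raise ValueError("need at least one sample")
    p = mentions / samples
    denom = 1 + z ** 2 / samples
    center = (p + z ** 2 / (2 * samples)) / denom
    half = z * math.sqrt(p * (1 - p) / samples + z ** 2 / (4 * samples ** 2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Two checks that both mention the brand still leave a very wide range.
low_2, high_2 = wilson_interval(2, 2)

# Forty samples with thirty mentions narrow the estimate considerably.
low_40, high_40 = wilson_interval(30, 40)

print(f"2 of 2 samples:   {low_2:.2f} to {high_2:.2f}")
print(f"30 of 40 samples: {low_40:.2f} to {high_40:.2f}")
```

With only two samples the plausible mention rate spans from roughly one third up to 100%, which is why a handful of manual checks cannot serve as a baseline.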

Platform myopia. Most brands check ChatGPT and stop there. Research shows that only 11% of cited domains are shared across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Dominance on one platform guarantees nothing on another.

Ego-centric tracking. Auditing your brand in isolation, without benchmarking against competitors, misses the most actionable signal. In the generative era, AI visibility is a zero-sum game. If a model recommends three competitors and excludes you, that’s a definitive signal of an authority gap in the model’s retrieval cache.

The 30-Minute AI Visibility Audit: Step by Step

This framework is designed to be repeatable. Run it monthly or trigger it after major product launches, PR campaigns, or known AI model updates. Here’s the time breakdown: 5 + 10 + 10 + 5 minutes.

Step 1: Define Your Audit Scope, 5 Minutes

The foundation of any AI visibility audit is the prompt library. Select 3 to 5 core “category prompts” that reflect how a prospective customer would actually search for a solution.

Tag each prompt by intent: Informational (“What is [category]?”), Commercial (“Best [category] for small business?”), or Comparison (“[Brand] vs [Competitor]”). Then define 3 to 5 direct competitors as your primary tracking entities.
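The scope can be captured as a small data structure so the same prompt set is reused every month. This is a minimal sketch: `Intent`, `AuditPrompt`, and `build_prompt_library` are hypothetical names, the templates simply reuse the three example phrasings above, and the brand and competitor names are placeholders.

```python
from dataclasses import dataclass
from enum import Enum


class Intent(Enum):
    INFORMATIONAL = "informational"
    COMMERCIAL = "commercial"
    COMPARISON = "comparison"


@dataclass(frozen=True)
class AuditPrompt:
    text: str
    intent: Intent


def build_prompt_library(category, brand, competitors):
    """Build intent-tagged prompts from the three example templates."""
    if not 3 <= len(competitors) <= 5:
        raise ValueError("define 3 to 5 direct competitors")
    prompts = [
        AuditPrompt(f"What is {category}?", Intent.INFORMATIONAL),
        AuditPrompt(f"Best {category} for small business?", Intent.COMMERCIAL),
    ]
    # One comparison prompt per competitor, capped so the library stays at 5 prompts.
    for competitor in competitors[:3]:
        prompts.append(AuditPrompt(f"{brand} vs {competitor}", Intent.COMPARISON))
    return prompts


library = build_prompt_library(
    category="CRM software",
    brand="ExampleCo",
    competitors=["AcmeCRM", "OtherCo", "ThirdCo"],
)
for p in library:
    print(p.intent.value, "|", p.text)
```

In practice you would replace the templated text with the phrasing your customers actually use; the point of the structure is that every prompt carries its intent tag into the later steps.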

Platform selection matters. Your audit should cover at least ChatGPT, Perplexity, Gemini, and Google AI Overviews. Zero-click rates tell the story of where users actually get their answers: Perplexity at 93%, Google AI Mode at 88%, ChatGPT Search at 82%. Skipping any of these leaves a blind spot.

Step 2: Check Your AI Visibility Across Platforms, 10 Minutes

Run your prompt set across each platform and document where your brand falls into one of four categories:

- Directly Recommended: Named as a top-tier solution.

- Mentioned: Included in the narrative but not as a primary pick.

- Cited: Used as a reference source with a link.

- Absent: Completely missing from the conversation.

Doing this manually for 5 prompts across 4 platforms means reviewing 20 responses and cataloging every brand mention. It’s possible for a limited scope, but it doesn’t scale.
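For a manual pass, a simple tracking sheet keeps the 20 responses organized. The sketch below assumes you label each response by hand with one of the four categories; `Status`, `empty_audit_sheet`, and `summarize` are hypothetical names chosen for this example.

```python
from collections import Counter
from enum import Enum
from itertools import product


class Status(Enum):
    RECOMMENDED = "Directly Recommended"
    MENTIONED = "Mentioned"
    CITED = "Cited"
    ABSENT = "Absent"


PLATFORMS = ["ChatGPT", "Perplexity", "Gemini", "Google AI Overviews"]


def empty_audit_sheet(prompts, platforms=PLATFORMS):
    """One cell per (prompt, platform) pair, initially unreviewed (None)."""
    return {(prompt, platform): None for prompt, platform in product(prompts, platforms)}


def summarize(sheet):
    """Count statuses per platform, ignoring cells that are not reviewed yet."""
    summary = {}
    for (_, platform), status in sheet.items():
        if status is not None:
            summary.setdefault(platform, Counter())[status] += 1
    return summary


prompts = [f"prompt {i}" for i in range(1, 6)]  # stand-ins for your 5 category prompts
sheet = empty_audit_sheet(prompts)
print(len(sheet))  # 20 cells: 5 prompts x 4 platforms

# Fill in cells as you review each response by hand.
sheet[("prompt 1", "ChatGPT")] = Status.RECOMMENDED
sheet[("prompt 1", "Perplexity")] = Status.ABSENT
sheet[("prompt 2", "Perplexity")] = Status.ABSENT

for platform, counts in summarize(sheet).items():
    print(platform, {status.value: n for status, n in counts.items()})
```

Keeping unreviewed cells as `None` makes it obvious which of the 20 checks are still outstanding.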

Topify’s Visibility Tracking automates this entire step. It monitors brand mentions across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms, scoring each appearance across seven key metrics: visibility, sentiment, position, volume, mentions, intent, and conversion rate (CVR). What takes 10 minutes manually takes seconds with the right tooling.

One data point worth noting: content updated within the last three months is roughly twice as likely to be cited by retrieval-augmented AI engines like Perplexity. If your audit reveals low visibility, freshness could be the first variable to investigate.

Step 3: Analyze Sentiment and Positioning, 10 Minutes

Showing up is only half the story. What the AI says about your brand matters just as much.

In this step, examine three things. First, identify the specific themes the AI associates with your brand. Are you described as “innovative but expensive”? “Reliable but legacy”? These sentiment drivers directly shape how potential buyers perceive you before they ever visit your site.

Second, benchmark your sentiment against competitors. If a rival’s sentiment score is consistently higher across prompts, that’s a content gap, not a branding problem.

Third, check for hallucinations. Across major models, hallucination rates range from 15% to 52% depending on the model and query type. These errors fall into categories that directly hurt conversion: fabricated features, omitted differentiators, outdated pricing, and misattributed capabilities.

Topify’s Sentiment Analysis provides daily breakdowns of how each AI platform characterizes your brand, with a 0-to-100 sentiment score tracked over time. Its Competitor Monitoring feature detects every brand the AI mentions alongside yours, comparing visibility, sentiment, and position side by side.

Step 4: Identify Citation Sources, 5 Minutes

The final step is reverse-engineering the AI’s “trust graph.” Which third-party sources is the AI citing when it forms opinions about your category?

This matters because third-party sources are cited 6.5 times more often than brand-owned pages in AI answers. Earned media accounts for roughly 48% of citations, while your own blog contributes around 23%. If a competitor has coverage on Gartner, Forbes, or a top industry subreddit and you don’t, the AI will naturally treat them as more authoritative.

Reddit alone accounts for approximately 21% of citations in Google AI summaries. Brands that ignore community platforms are forfeiting their authority to the most vocal users on the internet.
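If you copy the cited links out of each AI answer, a few lines of Python are enough to see which domains dominate and how much of the mix is your own content. This is a sketch under assumptions: the helper names and the sample URLs are hypothetical, and the domain matching is deliberately naive (it strips only a leading `www.`).

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Lower-cased hostname without a leading 'www.', or '' if none is found."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def citation_mix(cited_urls, owned_domains):
    """Rank cited domains and split the citations into owned vs. third-party."""
    domains = Counter(d for d in (domain_of(u) for u in cited_urls) if d)
    total = sum(domains.values())
    owned = sum(n for d, n in domains.items() if d in owned_domains)
    return {
        "by_domain": domains.most_common(),
        "owned_share": owned / total if total else 0.0,
        "third_party_share": (total - owned) / total if total else 0.0,
    }


# Made-up citations collected from a set of AI answers.
cited = [
    "https://www.reddit.com/r/example/comments/1",
    "https://reddit.com/r/example/comments/2",
    "https://www.example.com/blog/post",
    "https://reviews.example.org/top-tools",
]
mix = citation_mix(cited, owned_domains={"example.com"})
print(mix["by_domain"][0])  # ('reddit.com', 2)
print(mix["owned_share"])   # 0.25
```

The ranked `by_domain` list is the part to act on: domains that appear for competitors but never alongside your brand are the earned-media targets.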

Topify’s Source Analysis feature maps exactly which domains and URLs each AI platform cites for your category. You can see at a glance whether your brand’s owned content is in the citation mix, or whether third-party sources are shaping the narrative without your input.

Turning Audit Data into a GEO Action Plan

You’ve now collected four layers of data: visibility baseline, competitor positioning, sentiment accuracy, and citation sources. The next step is prioritizing where to act.

Not all gaps are equally urgent. Here’s a triage framework based on common audit outcomes:

| Audit Finding | Priority Action | GEO Strategy |
|---|---|---|
| Low visibility across platforms | Retrieval Optimization | Create “GEO-ready” content with statistics, structured citations, and clear entity markup. Ensure GPTBot and PerplexityBot aren’t blocked by robots.txt. |
| Mentioned but negative sentiment | Sentiment Repair | Address specific sentiment drivers (pricing confusion, outdated info) and build third-party consensus on review sites. |
| Competitors winning citations | Digital PR + Community | Secure mentions in publications and Reddit threads the AI already trusts. |
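The robots.txt check in the first row is easy to verify with Python’s standard library. The sketch below parses a robots.txt file with `urllib.robotparser` and reports which of the two crawlers named above would be blocked; the function name, the sample file, and the `example.com` URL are placeholders for illustration.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt, url="https://example.com/", agents=AI_CRAWLERS):
    """Return the crawler user agents that robots.txt does not allow to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Sample file that blocks GPTBot site-wide but allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS))  # ['GPTBot']
```

To check a live site instead of a pasted file, `RobotFileParser` can also fetch the file itself via `set_url()` and `read()`. Test the specific URLs you want cited, since a rule may block only part of the site.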

The data on GEO content strategies is concrete. Research shows that adding precise statistics to content can increase visibility by up to 65.5% in category queries. Including inline citations to credible external reports boosts visibility by up to 132.4% in informational queries. Rewriting content in a more authoritative tone lifts visibility by 89.1% in specific domains.

On the structural side, AI models tend to prefer content organized into 120 to 180-word “atomic” sections rather than long, undifferentiated blocks of text. Implementing Schema Markup (Organization, FAQ, Author) provides the explicit metadata that helps AI crawlers identify and link entities correctly.
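Both structural checks can be scripted. The sketch below flags sections that fall outside the 120 to 180-word range and builds a minimal schema.org `Organization` object as JSON-LD. The function names, the sample sections, and the company details are hypothetical, and a real page would usually carry more properties than the three shown.

```python
import json


def sections_outside_range(sections, low=120, high=180):
    """Return (title, word_count) for sections shorter or longer than the range."""
    flagged = []
    for title, body in sections.items():
        words = len(body.split())
        if not low <= words <= high:
            flagged.append((title, words))
    return flagged


def organization_jsonld(name, url, same_as):
    """Serialize a minimal schema.org Organization object as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # official profiles that identify the same entity
    }
    return json.dumps(data, indent=2)


# Made-up page content: one section in range, one far too short.
page_sections = {
    "What the product does": "word " * 150,
    "Pricing": "word " * 40,
}
print(sections_outside_range(page_sections))  # [('Pricing', 40)]

markup = organization_jsonld(
    name="ExampleCo",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/exampleco"],
)
print(markup)
```

The JSON-LD string is meant to be placed inside a `<script type="application/ld+json">` tag on the page.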

Topify’s One-Click Agent Execution bridges the gap between audit data and action. Once a visibility gap is detected, the platform’s AI agent analyzes content gaps against competitor citations, drafts GEO-optimized content including schema markup and data tables, and deploys directly. It turns a diagnostic report into a production-ready content brief.

AI Answers Change Faster Than Google Rankings. Your Audit Schedule Should Too.

An AI visibility audit isn’t a one-time project. The generative search environment is significantly more volatile than traditional search.

Data from 2026 shows that Google’s core updates and AI model recalibrations can shift up to 80% of top-three results in a single cycle. On top of that, there’s a “freshness gap”: Perplexity updates its index constantly, while ChatGPT may rely on training data several months old. Your brand’s position on one platform can shift without any corresponding change on another.

Monthly audits are the baseline for maintaining narrative control. Immediate audits should be triggered by major product launches, PR crises, or known AI model updates.
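The schedule above reduces to a small rule that can sit in a reminder script. This is a sketch only: the event labels are hypothetical names for the three triggers, and treating "monthly" as 30 days is an assumption.

```python
from datetime import date, timedelta

# Hypothetical labels for the three immediate triggers named above.
TRIGGER_EVENTS = {"product_launch", "pr_crisis", "model_update"}


def audit_due(last_audit, today, events_since_last=(), interval=timedelta(days=30)):
    """True if a trigger event occurred or the monthly interval has elapsed."""
    if TRIGGER_EVENTS & set(events_since_last):
        return True
    return today - last_audit >= interval


last = date(2026, 1, 1)
print(audit_due(last, date(2026, 1, 10)))                    # False
print(audit_due(last, date(2026, 1, 10), ["model_update"]))  # True
print(audit_due(last, date(2026, 2, 1)))                     # True
```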

For teams that need more than monthly snapshots, Topify offers continuous monitoring. It alerts brands to citation drops or sentiment shifts in real time, so marketing teams can address inaccuracies or competitor incursions before they become entrenched in the model’s retrieval cache.

Conclusion

The gap between Google rankings and AI search recommendations is where the next generation of brand competition plays out. A brand can rank first on Google and be invisible to the AI engines where 900 million weekly active users now look for answers.

The 30-minute AI visibility tracking audit outlined here gives you a structured, repeatable process to measure where you stand. Track presence, sentiment, and citations across platforms. Benchmark against competitors. Then act on the gaps with a clear GEO strategy.

The brands that build this diagnostic muscle now will compound their authority advantage. In an era where decisions are made inside the chat box, the most valuable asset isn’t traffic. It’s the informed trust of the AI models your buyers rely on.

Get started with Topify to run your first AI visibility audit today.

FAQ

Q: What is AI visibility tracking?

A: AI visibility tracking is the process of measuring how often your brand gets mentioned, how it’s described, and where it ranks in the outputs of generative AI engines like ChatGPT, Perplexity, and Gemini. It goes beyond traditional SEO metrics to capture presence, sentiment, citation share, and positioning across AI-generated answers.

Q: Can I audit my brand’s AI visibility without a paid tool?

A: You can run a basic manual audit by entering category prompts into ChatGPT, Perplexity, and Gemini and documenting the results. The limitation is scale: AI outputs are probabilistic and vary by session and geography, so manual checks give you a snapshot, not a trend. Professional tools like Topify automate this across thousands of prompts and multiple platforms simultaneously.

Q: Which AI platforms should I track for brand visibility?

A: At minimum, cover ChatGPT, Perplexity, Gemini, and Google AI Overviews. Each runs a different retrieval pipeline, and only 11% of cited domains overlap across platforms. A brand can be a category leader on ChatGPT and completely absent from Perplexity.

Q: How often do AI search recommendations change?

A: More often than traditional Google rankings. AI model recalibrations and retrieval index updates can shift up to 80% of top-three results in a single cycle. Monthly audits are a reasonable baseline, with immediate checks after major product launches or known model updates.