Your domain authority is 65. Your top pages rank on page one for every target keyword. Your content team publishes twice a week. Then you type your core product category into ChatGPT and get back a confident, five-brand recommendation list. Your brand isn’t on it.

That’s not a content quality problem. It’s a visibility gap that traditional SEO metrics were never built to detect. When we ran a full AI visibility tracking audit for a mid-market SaaS client last quarter, we found they appeared in only 6% of the high-intent prompts in their category. Their closest competitor showed up in 31%. Over the next 90 days, seven specific tactics closed that gap and pushed their citation rate to 4× the original baseline.

Here’s what we did, step by step.

Most Brands Track SEO Rankings but Miss What AI Actually Cites

The disconnect between Google rankings and AI recommendations is wider than most marketing teams realize. Roughly 60% of all Google searches now resolve without a click to an external website. When AI Overviews trigger, that figure climbs to 83%. In conversational AI modes, it reaches 93%.

That means the majority of discovery and evaluation is happening inside AI-generated answers, not on your website. And the clicks that do come from AI sources carry disproportionate value. AI-referred visitors convert at rates up to 23 times higher than standard organic traffic, because the intent is already compressed by the time they arrive.

The problem is measurement. Legacy SEO tools track rank, traffic, and backlinks. They don’t tell you whether ChatGPT mentioned your brand, how Perplexity framed your product, or which sources Gemini cited instead of yours. Without AI visibility tracking, you’re optimizing for a channel that’s shrinking while ignoring the one that’s growing.

Our client’s starting point looked strong on paper: high DA, solid keyword positions, consistent publishing cadence. But when we mapped their AI visibility across 150 prompts on ChatGPT, Perplexity, and Gemini, the picture was different. Six percent citation rate. Negative sentiment on two platforms. Zero presence in comparison prompts.

That baseline became the starting line.

Tactic 1: Map the Prompts That Actually Drive AI Citations

Not all prompts are created equal. The average Google keyword is about four words. The average AI prompt runs closer to 23 words, packed with qualifiers like budget constraints, company size, and use-case specifics. Treating AI prompts like keywords is the first mistake most teams make.

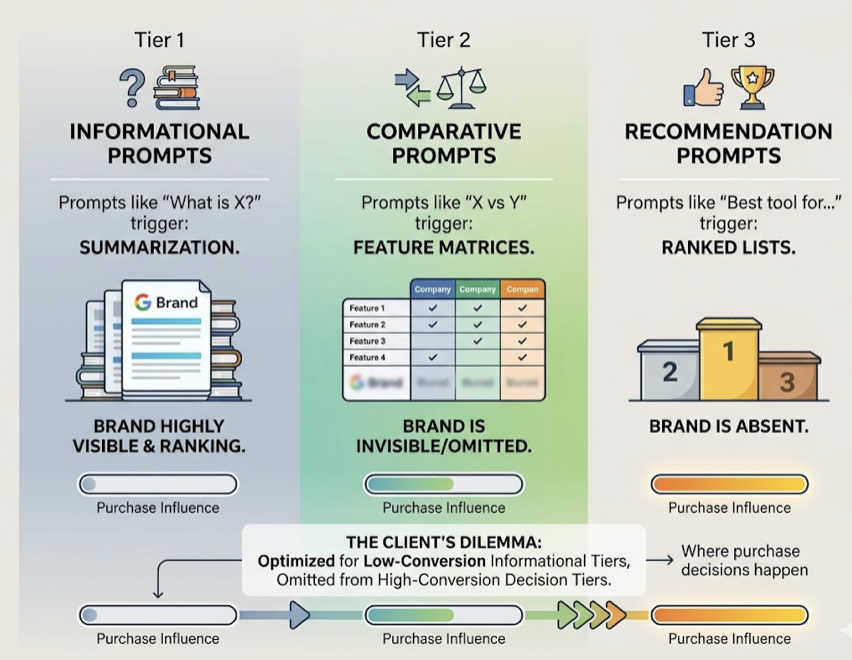

We categorized prompts into three tiers based on citation behavior. Informational prompts (“What is X?”) trigger summarization. Comparative prompts (“X vs Y”) trigger feature matrices. Recommendation prompts (“Best tool for…”) trigger ranked lists. Our client’s content was optimized for informational queries but almost invisible in the recommendation and comparison tiers, which is where purchase decisions happen.

The fix started with prompt discovery. Using Topify’s High-Value Prompt Discovery, we identified 40+ prompts in the client’s category where competitors consistently appeared but the client didn’t. Each prompt was scored by query volume, competitive density, and commercial intent. The top 20% of those prompts, the ones with high “qualifier density” around specific use cases and buyer profiles, became the content roadmap.
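To make the scoring step concrete, here is a minimal sketch of how prompts could be ranked on those three factors and cut to the top 20%. It is not Topify's method: the `Prompt` fields, the 0-to-1 normalization, the weights, and the choice to reward lower competitive density are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Prompt:
    text: str
    query_volume: float         # assumed normalized to 0..1
    competitive_density: float  # assumed 0..1, higher = more crowded
    commercial_intent: float    # assumed 0..1


def score(p: Prompt, weights=(0.3, 0.3, 0.4)) -> float:
    """Weighted sum of the three factors; the weights are illustrative."""
    w_volume, w_density, w_intent = weights
    return (
        w_volume * p.query_volume
        + w_density * (1.0 - p.competitive_density)  # reward less crowded prompts
        + w_intent * p.commercial_intent
    )


def top_fraction(prompts: list[Prompt], fraction: float = 0.2) -> list[Prompt]:
    """Return the highest-scoring share of prompts (always at least one)."""
    ranked = sorted(prompts, key=score, reverse=True)
    keep = max(1, round(len(ranked) * fraction))
    return ranked[:keep]


# Hypothetical prompts and scores, for illustration only.
candidates = [
    Prompt("best tool in the category", 0.9, 0.9, 0.2),
    Prompt("best tool for a 50-person team on a fixed budget", 0.5, 0.2, 0.9),
    Prompt("what is this category", 0.3, 0.5, 0.5),
    Prompt("tool X vs tool Y", 0.7, 0.8, 0.4),
    Prompt("best tool for a niche use case", 0.2, 0.1, 0.6),
]
roadmap = top_fraction(candidates)
```

With these made-up inputs, the long, qualifier-heavy prompt wins even though its volume is modest, which is the behavior the roadmap relied on.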


Targeting these long-tail, high-intent prompts let the client bypass the “big brand bias” that dominates broader queries. Within three weeks, new content built for these specific prompts started appearing in AI answers.

Tactic 2: Reverse-Engineer What AI Cites for Your Competitors

Generative engines don’t rank pages. They retrieve sources through a process called Retrieval-Augmented Generation (RAG), which pulls from a corpus of trusted web documents to ground each response. To show up in that response, your content needs to be in the retrieval pool and match the extraction patterns the model prefers.

Here’s the uncomfortable reality: approximately 85.5% of AI citations in informational and evaluation queries come from third-party sources like Wikipedia, Reddit, G2, and tier-1 media outlets. Brand-owned domains account for less than 10% of citations. If your GEO strategy only optimizes your own website, you’re competing for a fraction of the citation pipeline.

We used Topify’s Source Analysis to map exactly which URLs each AI platform cited for the client’s top 30 prompts. The pattern was clear: competitors dominated not because their product pages were better, but because they had coverage on the specific G2 comparison pages, Reddit threads, and niche industry blogs that models treated as high-confidence sources.

That analysis became the targeting list for Tactics 3 through 5.

Tactic 3: Restructure Content for AI-Preferred Formats

Structural optimization is one of the highest-leverage moves in GEO, and it’s often overlooked. Research into what’s called Structural Feature Engineering (GEO-SFE) shows that formatting changes alone, without altering the underlying claims, can yield a 17.3% improvement in citation rates.

Why? Transformer-based LLMs parse text through attention mechanisms that respond to structural signals. Unstructured prose causes attention dispersion. Segmented, hierarchical text with clear headings and self-contained blocks focuses the model’s attention on the relevant section.

The specific changes that moved the needle for our client:

| Structural Change | Citation Impact |
|---|---|
| Question-style H2/H3 headings | +22% lift |
| Pricing and feature comparison tables | +47% to +51% lift |
| Pros/cons lists on product pages | +38% lift |
| Answer-first formatting (key facts in first 200 words) | +27% lift |
| FAQ sections with schema markup | +71% lift |

There’s a sweet spot for answer blocks: 134 to 167 words. Blocks shorter than that lack the information density models need. Blocks exceeding 300 words suffer from attention degradation in the middle. We restructured the client’s top 15 pages to fit this pattern, converting marketing copy into data-dense, table-heavy content that AI retrievers could extract cleanly.
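A length check like this is easy to automate during an audit. The sketch below classifies a block against the 134-to-167-word range and the 300-word ceiling mentioned above. The whitespace-based word count and the label names are assumptions, and the `above_range` bucket (168 to 300 words) is our own addition, since the guidance above doesn't describe that middle zone.

```python
import re

MIN_WORDS, MAX_WORDS = 134, 167   # sweet spot from the text
HARD_CEILING = 300                # beyond this, mid-block attention degrades


def word_count(text: str) -> int:
    """Count whitespace-separated tokens (a simple approximation)."""
    return len(re.findall(r"\S+", text))


def classify_block(text: str) -> str:
    """Label an answer block by length relative to the target range."""
    n = word_count(text)
    if n < MIN_WORDS:
        return "too_short"
    if n <= MAX_WORDS:
        return "in_range"
    if n <= HARD_CEILING:
        return "above_range"  # assumed bucket, not described in the source
    return "too_long"
```

Running `classify_block` over each section under an H2 or H3 gives a quick list of blocks to expand or split.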

The shift is less about writing differently and more about formatting for machine extraction. Think “data tabulization” over “marketing fluff.”
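For the FAQ row in the table above, the schema markup is typically schema.org `FAQPage` JSON-LD. Here is a minimal sketch that builds it from question-and-answer pairs. The helper name `faq_jsonld` is hypothetical and the sample answer is shortened; the output would normally be embedded in the page inside a `<script type="application/ld+json">` tag.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What is AI visibility tracking?",
        "Monitoring how often and how favorably a brand appears in "
        "AI-generated answers.",
    ),
])
markup = json.dumps(faq, indent=2)  # string to embed in the page
```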

Tactic 4: Build Entity Authority Through Trust Anchors

In generative search, AI systems prioritize “entities,” formally recognized concepts, over keywords. Authority isn’t just about backlink volume anymore. It’s about the consistency of signals across what models treat as “truth anchors.”

Wikipedia sits at the top of that hierarchy. It makes up 3-4% of model training data and accounts for about 47.9% of ChatGPT’s top-ten citation share. Wikidata, with its structured Q-IDs, provides the metadata layer models use for entity resolution. If your brand doesn’t have a stable identifier in these systems, LLMs have lower confidence when attributing facts to you.

Our client didn’t have a Wikipedia page. So we focused on three proxy strategies:

First, we ensured the client’s Wikidata profile was complete, with sameAs links to social profiles, Crunchbase, and industry directories. Second, we secured mentions within existing high-authority Wikipedia articles relevant to their category. Third, we prioritized third-party review coverage on G2 and Capterra, which function as consensus validators. Research suggests brands with strong third-party review profiles see roughly a 3× citation multiplier compared to those without.
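One common way to publish the same set of identity links on a brand's own site is schema.org `Organization` markup with a `sameAs` array. The sketch below is an illustration under that assumption, not a record of what was entered in Wikidata. The helper name, the brand name, and every URL are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """Build schema.org Organization markup with de-duplicated sameAs links."""
    unique_links = list(dict.fromkeys(same_as))  # keeps first-seen order
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": unique_links,
    }


# Placeholder values; substitute the brand's real profile URLs.
org = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    same_as=[
        "https://social.example.com/example-brand",
        "https://directory.example.com/example-brand",
        "https://social.example.com/example-brand",  # duplicate is dropped
    ],
)
org_markup = json.dumps(org, indent=2)
```

Keeping one canonical list of profile URLs and generating the markup from it helps the links stay consistent across pages.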

Consistency matters here. If your website says “enterprise-grade platform” but G2 reviews describe you as “good for small teams” and your LinkedIn bio says something else entirely, the model flags the conflicting signals and defaults to a better-corroborated competitor.

Tactic 5: Close the Source Gap Between You and Competitors

The “Source Gap” is the structural disadvantage that exists when competitors control the third-party surfaces AI models retrieve from. Since roughly 85% of citations come from external domains, your AI visibility is largely determined by your coverage on listicles, comparison engines, and community forums you don’t own.

Closing this gap requires what we call “Machine Relations,” a digital PR strategy focused specifically on the URLs that AI already trusts for your category.

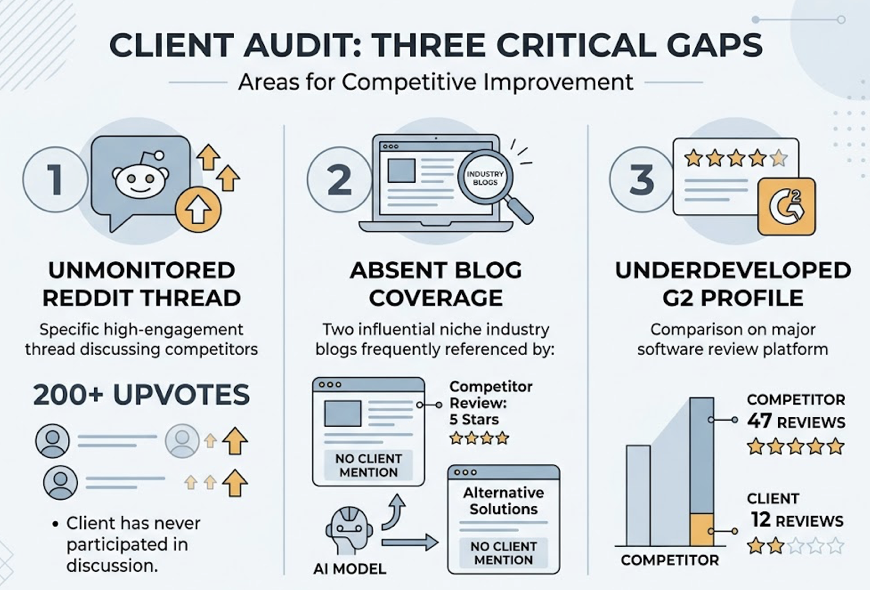

For our client, the audit revealed three critical gaps. First, competitors were being cited from a specific Reddit thread with 200+ upvotes that the client had never participated in. Second, two niche industry blogs that models consistently retrieved had published competitor reviews but had no coverage of the client. Third, the client’s G2 profile had 12 reviews versus a competitor’s 47.

The playbook was targeted:

We developed authentic Reddit participation in high-visibility threads. We pitched contributed content to the two niche publications. We launched a structured review acquisition campaign on G2.

Topify’s Competitor Monitoring flagged when new competitors entered the AI recommendation set, showing which specific URL the model referenced to justify the inclusion. That let the team respond within days, not months, securing a “corrective” placement before the next model refresh.

Tactic 6: Maintain Citation Velocity with a Refresh Cadence

Content in AI search has a half-life. Research shows that 50% of content cited by AI is less than 13 weeks old. AI-cited pages are on average 25.7% fresher than traditionally ranked organic content. This creates the “13-week rule”: content not refreshed quarterly is three times more likely to lose its citation position.

Our client had several pages ranking well in traditional search that hadn’t been updated in over a year. In AI search, those pages were effectively invisible.

We implemented a tiered refresh cadence:

| Content Type | Refresh Frequency | What Gets Updated |
|---|---|---|
| Core product comparisons | Monthly | Current-year data, pricing, new features |
| Category explainers | Every 8-12 weeks | Recent research, updated FAQ blocks |
| Thought leadership | Quarterly | New examples, emerging trends |
| Evergreen guides | Bi-annually | Statistics, relevance check |
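The cadence in the table above can be encoded as a simple staleness check. This is a sketch under a few assumptions: the day counts are our translation of each frequency (using the 12-week upper bound for category explainers), and the content-type keys and page URLs are hypothetical.

```python
from datetime import date, timedelta

# Maximum age in days before a page is due for a refresh (assumed mapping).
REFRESH_DAYS = {
    "core_product_comparison": 30,   # monthly
    "category_explainer": 84,        # upper bound of 8-12 weeks
    "thought_leadership": 91,        # quarterly
    "evergreen_guide": 182,          # bi-annually
}


def is_due(content_type: str, last_updated: date, today: date) -> bool:
    """True when the page is older than its tier's refresh window."""
    return today - last_updated > timedelta(days=REFRESH_DAYS[content_type])


def due_pages(pages: list[tuple[str, str, date]], today: date) -> list[str]:
    """Return URLs of pages that are overdue for a refresh."""
    return [url for url, ctype, updated in pages if is_due(ctype, updated, today)]


# Hypothetical inventory: (url, content type, last meaningful update).
inventory = [
    ("/compare/x-vs-y", "core_product_comparison", date(2025, 5, 15)),
    ("/guides/what-is-x", "category_explainer", date(2025, 1, 1)),
    ("/guides/evergreen", "evergreen_guide", date(2025, 1, 1)),
]
overdue = due_pages(inventory, today=date(2025, 6, 1))
```

The `last_updated` value should record the last meaningful content change, not the last time the page's date stamp was edited.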

Cosmetic date changes don’t work. Models detect and ignore them. A meaningful update requires replacing outdated statistics with current-year data, adding references to recent research, and expanding sections with new FAQ blocks addressing emerging questions. Content updated within 30 days receives up to 6× more AI citations than content over 12 months old.

The ROI of operationalized maintenance is measurable. Within four weeks of the first refresh cycle, three previously invisible pages started appearing in AI answers.

Tactic 7: Track, Measure, and Iterate with AI Visibility Tracking

The non-deterministic nature of generative responses, where a single prompt can yield different outputs across different models and different days, makes legacy rank tracking obsolete. You can’t manage what you don’t measure, and measuring AI visibility requires a fundamentally different framework.

Effective AI visibility tracking operates across seven core indicators:

Visibility Score: The percentage of target prompts where the brand appears. Category leaders typically maintain 30-45%.

Sentiment Score: A 0-to-100 scale measuring whether AI framing is positive, neutral, or negative. Scores below 40 indicate a reputation problem that can disqualify a brand from high-intent shortlists.

Position Rank: The relative order of mentions in multi-brand lists. First-mentioned brands earn significantly higher trust and click-through.

Volume Analytics: Monthly demand for topics specifically within AI interfaces, surfacing “dark queries” invisible to traditional keyword tools.

Mentions Rate: Raw frequency of brand names within answer text, tracking awareness even without direct links.

Intent Alignment: Whether AI correctly associates the brand with its target customer profile and primary use case.

Conversion Visibility Rate (CVR): A predictive measure of how likely the brand’s visibility is to drive action. AI-referred traffic converts at an average of 14.2%, a 5.1× advantage over traditional search.
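Two of these indicators, Visibility Score and Position Rank, reduce to simple arithmetic once responses are collected. The sketch below assumes each prompt has one sampled response, already parsed into an ordered list of brand names. That data shape, the function names, and the brands are hypothetical. Because outputs vary from run to run, a real tracker would average over repeated samples.

```python
from typing import Optional


def visibility_score(responses: dict[str, list[str]], brand: str) -> float:
    """Percentage of prompts whose response mentions the brand."""
    hits = sum(1 for brands in responses.values() if brand in brands)
    return 100 * hits / len(responses)


def average_position(responses: dict[str, list[str]], brand: str) -> Optional[float]:
    """Mean 1-based position of the brand in responses that mention it."""
    positions = [
        brands.index(brand) + 1
        for brands in responses.values()
        if brand in brands
    ]
    return sum(positions) / len(positions) if positions else None


# Hypothetical parsed responses: prompt -> brands in order of mention.
sampled = {
    "best tool for small teams": ["BrandA", "BrandB", "OurBrand"],
    "tool X vs tool Y": ["BrandA", "BrandB"],
    "best tool on a budget": ["BrandB", "BrandC"],
    "top tools for enterprises": ["OurBrand", "BrandA"],
}
score_pct = visibility_score(sampled, "OurBrand")      # 2 of 4 prompts
avg_rank = average_position(sampled, "OurBrand")       # positions 3 and 1
```

On the audit's scale, 9 appearances across 150 prompts works out to the 6% baseline described earlier.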

For our client, we tracked all seven weekly using Topify’s Comprehensive GEO Analytics dashboard across ChatGPT, Perplexity, and Gemini. The measurement loop connected directly to execution: when citation drift showed a drop on a specific prompt cluster, we traced it to a competitor’s new G2 review and responded with a targeted content update within 48 hours.

That feedback loop, discovery to optimization to measurement, is what turned a one-time improvement into sustained 4× growth.

Conclusion

The gap between brands that dominate AI recommendations and those that remain invisible comes down to systems, not luck. The seven tactics here follow a logical chain: discover the right prompts, analyze what AI already trusts, restructure your content for extraction, build entity authority, close the source gap, maintain freshness, and measure everything continuously.

None of this is a one-time project. Citation patterns shift as models retrain and retrieval algorithms evolve. The brands that treat AI visibility tracking as an ongoing discipline, benchmarking Visibility, Sentiment, and Position weekly, will control the recommendations that define discovery in 2026 and beyond. Get started with Topify to see where your brand stands today.

FAQ

Q: What is AI visibility tracking?

A: AI visibility tracking is the process of monitoring how often and how favorably your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, and Gemini. It measures metrics like citation rate, sentiment, mention frequency, and recommendation position, none of which traditional SEO tools capture.

Q: How long does it take to see results from AI citation optimization?

A: Structural content changes and technical fixes (like unblocking AI crawlers) can produce results within days. Broader tactics like entity authority building and source gap closure typically show measurable improvement within 4 to 12 weeks, depending on the competitiveness of the category.

Q: Can you track brand mentions in ChatGPT?

A: Yes. Tools like Topify simulate thousands of prompts across ChatGPT and other AI platforms, tracking your brand’s mention frequency, recommendation position, and sentiment in each response. This replaces the manual approach of typing queries one by one.

Q: What’s the difference between SEO and GEO?

A: SEO optimizes for ranking on search engine results pages. GEO (Generative Engine Optimization) optimizes for being cited, recommended, and accurately described inside AI-generated answers. The key metrics shift from organic rank and CTR to citation share, visibility score, and AI sentiment.