Your content ranks on page one. Your Domain Authority (DA) is solid. But a potential customer asks ChatGPT, “What’s the best [tool in your category]?” and gets five recommendations. You’re not on the list.

Traditional SEO metrics can’t explain this. They weren’t built to measure what AI chooses to say. And in 2026, the gap between Google visibility and AI citation is where most brands are quietly losing ground.

AI Citation Isn’t Random. It Follows a Pattern.

Most marketers assume AI just summarizes whatever ranks well on Google. That’s not how it works.

Generative AI engines use a process called Retrieval-Augmented Generation (RAG). When a user submits a prompt, the AI retrieves relevant text fragments from the web, then synthesizes them into a response. It doesn’t pick the highest-ranked page. It picks the most extractable, fact-dense, and structurally clear content it can find.
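The retrieval step described above can be illustrated with a toy example. This is a minimal sketch, not how any particular AI engine works: it scores chunks with bag-of-words cosine similarity, whereas production systems use learned vector embeddings. The function names and the sample chunks (including the "Acme CRM" brand) are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(prompt: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k chunks ranked by similarity to the prompt."""
    query = Counter(tokenize(prompt))
    scored = [(cosine_similarity(query, Counter(tokenize(c))), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in scored[:top_k] if score > 0]


# Hypothetical page fragments: two fact-dense, one pure marketing copy.
chunks = [
    "Acme CRM integrates with Salesforce and offers a 14-day free trial.",
    "We are passionate about reimagining synergy for tomorrow's leaders.",
    "Acme CRM pricing starts at 12 dollars per user per month for remote teams.",
]
top = retrieve(
    "best CRM for a remote team with Salesforce integration and free trial",
    chunks,
)
```

In this toy run the fact-dense fragments outrank the marketing sentence, which shares almost no vocabulary with the prompt. That is the intuition behind "extractable" content, even though real retrieval is considerably more sophisticated.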

Research from Princeton, Georgia Tech, and other institutions suggests that targeted Generative Engine Optimization (GEO) strategies can improve AI citation visibility by 30% to 40%. That improvement doesn’t come from gaming algorithms. It comes from strengthening what AI researchers call “authority signals”: precise data, verifiable claims, expert attribution, and semantic clarity.

The difference between a cited brand and an invisible one isn’t always content quality. It’s usually content structure. AI needs content it can extract cleanly. If yours buries key facts in marketing copy or behind heavy JavaScript, AI moves on.

That’s the pattern. And it’s fully optimizable.

Step 1: Know Which Prompts You Need to Appear In

AI citation starts with prompts, not keywords.

A user on Perplexity doesn’t type “best CRM.” They type, “What’s the best CRM for a 15-person remote team that needs Salesforce integration and a free trial?” That specificity completely changes the competitive landscape. Brands that built their SEO around short-tail keywords are often invisible in this context.

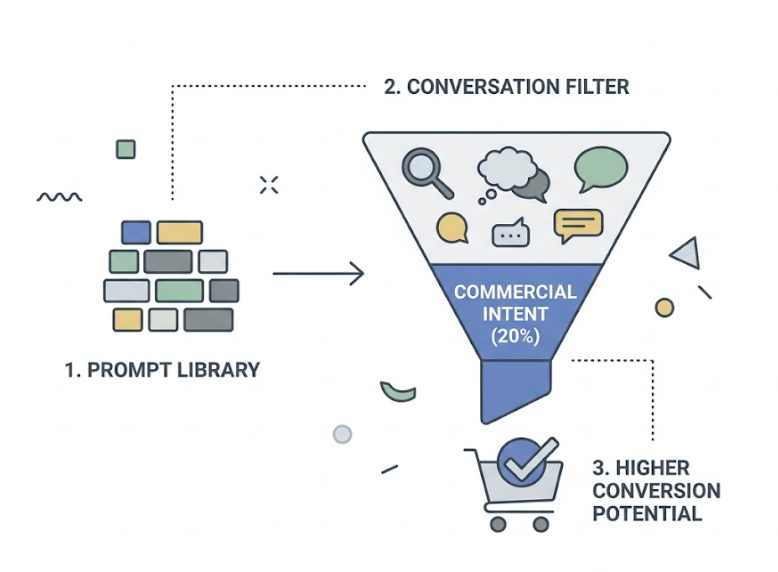

The first step is building a prompt library: 50 to 100 real user prompts that map to your product category, use cases, and decision stages. Importantly, about 20% of ChatGPT conversations carry clear commercial intent. If your brand enters those “intent windows,” conversion potential is substantially higher than in traditional search.

The challenge is that different AI platforms attract different user behaviors. Perplexity users skew toward factual queries and recent data. ChatGPT users tend toward complex, multi-step reasoning. Google AI Overviews blend both. Your prompt library needs to reflect where your actual audience is asking questions, not just where you’ve historically built SEO authority.

Topify’s AI Volume Analytics addresses this gap directly. Unlike traditional keyword tools, it estimates monthly prompt demand across ChatGPT, Gemini, Perplexity, and other platforms. You can see which prompts have high AI search volume in your category, where competitors are already getting cited, and which platform differences matter for your audience.

This isn’t keyword research with a new name. It’s a fundamentally different data layer.
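The prompt library from this step can be kept as a small, tagged data structure so you can see at a glance which platforms and decision stages it covers. The sketch below is one possible layout, not a Topify format: the `Prompt` fields, the platform and stage labels, and the sample prompts are all illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    text: str
    platform: str      # assumed labels, e.g. "perplexity", "chatgpt"
    stage: str         # assumed labels, e.g. "awareness", "comparison", "purchase"
    commercial: bool   # True if the prompt carries clear buying intent


def coverage_by_platform(library: list[Prompt]) -> Counter:
    """Count how many prompts target each platform."""
    return Counter(p.platform for p in library)


def commercial_share(library: list[Prompt]) -> float:
    """Fraction of prompts in the library tagged as commercial intent."""
    if not library:
        return 0.0
    return sum(p.commercial for p in library) / len(library)


# Hypothetical entries; a real library would hold 50 to 100 prompts.
library = [
    Prompt(
        "What's the best CRM for a 15-person remote team that needs "
        "Salesforce integration and a free trial?",
        "perplexity", "comparison", True,
    ),
    Prompt("How does a CRM differ from a spreadsheet?", "chatgpt", "awareness", False),
    Prompt("Which CRM has the cheapest plan with Salesforce sync?", "chatgpt", "purchase", True),
]

print(coverage_by_platform(library))
print(f"commercial share: {commercial_share(library):.0%}")
```

Tagging each prompt up front makes it easy to spot when the library leans too heavily on one platform or contains too few commercial-intent prompts.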

Step 2: Structure Content So AI Can Extract It

AI doesn’t read your page the way a human does. It uses vector embeddings to scan for semantically relevant text chunks. If your content is buried in promotional copy, nested inside accordions, or rendered client-side via JavaScript, AI often retrieves nothing.

The fix is a production model called semantic chunking: every section of your content should be an independent, self-contained unit of meaning. That means it should make sense even if lifted out of context.
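A rough way to audit content against this rule is to split it into one chunk per heading and flag chunks that open with a word pointing back at earlier text. The sketch below assumes Markdown-style `#` headings and uses a deliberately crude heuristic (the `DANGLING_OPENERS` list is an assumption, not an established standard), so treat it as a starting point rather than a definitive check.

```python
import re

# Assumed heuristic: a chunk that opens with one of these words probably
# depends on the preceding section for its meaning.
DANGLING_OPENERS = {"this", "that", "it", "these", "those", "they", "such"}


def split_into_chunks(markdown: str) -> list[dict]:
    """Split a Markdown document into one chunk per heading.

    Text that appears before the first heading is ignored.
    """
    chunks = []
    current = None
    for line in markdown.splitlines():
        heading = re.match(r"^#{1,6}\s+(.*)", line)
        if heading:
            current = {"heading": heading.group(1).strip(), "body": []}
            chunks.append(current)
        elif current is not None and line.strip():
            current["body"].append(line.strip())
    return [{"heading": c["heading"], "body": " ".join(c["body"])} for c in chunks]


def is_self_contained(chunk: dict) -> bool:
    """Return False if the chunk body is empty or opens with a dangling word."""
    words = re.findall(r"[A-Za-z']+", chunk["body"])
    return bool(words) and words[0].lower() not in DANGLING_OPENERS


sample = """
## What is semantic chunking?
Semantic chunking means writing each section as an independent unit of meaning.

## Why it matters
This makes it easier to retrieve.
"""

chunks = split_into_chunks(sample)
flags = [is_self_contained(c) for c in chunks]
```

Here the second chunk is flagged because "This" has no referent once the section is lifted out of the page. Rewriting it as "Self-contained sections are easier to retrieve" would pass the check.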

The Formats AI Prefers to Cite

Some content structures are consistently over-represented in AI citations:

Comparison tables are the highest-value format. Structured data in Markdown tables is trivial for LLMs to parse and compare. If you’re making category claims, put them in a table.

Numbered step lists map cleanly to how-to queries, which are among the most common AI search prompt types. A well-formatted 5-step process is almost purpose-built for RAG retrieval.

Definition blocks let AI extract your answer in a single chunk. If you’re defining a concept, lead with the definition, not the backstory. Put the answer first, every time.

FAQ sections are consistently cited. Domains with structured FAQs are cited roughly 40% to 100% more often than those without. The questions should mirror real user language, not sanitized marketing phrasing.

Data points with explicit sourcing are the highest-trust signal. “According to [Institution] 2025 research” gives AI a clear attribution chain. Unsourced statistics get deprioritized.

What Makes a Page “Uncitable” to AI

Heavy client-side rendering is the most common problem. If your page requires JavaScript execution to surface your content, many AI crawlers (including GPTBot and ClaudeBot) see a blank page or a loading state.

Hiding key facts in collapsible UI elements, using non-semantic HTML, or writing in long, dense paragraphs without clear topic sentences all reduce what researchers sometimes call “extraction score.” Pages that take more than 2 seconds to load risk timing out AI retrieval systems entirely.

The structural principle is straightforward: write for humans, but render for machines.
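One way to test for the client-side rendering problem described above is to look only at the raw HTML, without executing any JavaScript, and check whether your key facts are present as readable text. The sketch below uses Python's standard `html.parser` module. The two sample pages, the fact list, and the helper names are hypothetical, and a real audit would fetch the HTML from your own URLs.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect the text a crawler that does not run JavaScript could read."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are outside <script> and <style> elements.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def missing_facts(raw_html: str, key_facts: list[str]) -> list[str]:
    """Return the key facts that do not appear in the static page text."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.parts).lower()
    return [fact for fact in key_facts if fact.lower() not in text]


# Hypothetical pages: one renders its content in the browser, one on the server.
client_rendered = (
    '<html><body><div id="root"></div>'
    '<script>render({"price": "$12 per user"})</script></body></html>'
)
server_rendered = (
    "<html><body><h1>Acme CRM</h1>"
    "<p>Pricing starts at $12 per user.</p></body></html>"
)
facts = ["$12 per user"]

print(missing_facts(client_rendered, facts))
print(missing_facts(server_rendered, facts))
```

The client-rendered page reports the price as missing because it exists only inside a script, while the server-rendered page exposes it as plain text.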

Step 3: Build Source Authority That AI Trusts

In 2026, traditional Domain Authority is being supplemented by something more nuanced: entity authority and consensus signals.

AI models don’t just evaluate your site in isolation. They evaluate your brand across the entire web. Is the information about you consistent across LinkedIn, Wikipedia, G2, your press coverage, and your own site? Inconsistencies, even minor ones like differing founding dates or mismatched product descriptions, create what AI systems treat as a reliability flag.

Three dimensions drive AI source trust:

Cross-web consistency. Your brand’s factual footprint needs to be uniform. This is table stakes, but most brands haven’t audited it.

Associative authority. AI tracks which other sources cite you. A mention in a .gov report, an .edu case study, or a Forbes feature carries substantial weight. This is where digital PR starts to directly feed AI citation rates.

Community consensus. This is the most underestimated factor. Research shows Reddit accounts for 21% to 46.7% of AI citations across major platforms. Perplexity, in particular, draws heavily from forum discussions. If your brand is genuinely referenced and discussed in relevant communities, AI picks up those signals.

Topify’s Source Analysis tool maps exactly this: which domains are citing your competitors, in what context, and what the citation-to-authority pattern looks like. You can identify the specific media outlets or community platforms that function as AI citation hubs in your category, then prioritize outreach accordingly.

Link-building in this context isn’t about PageRank. It’s about being cited by sources that AI already trusts.
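The cross-web consistency audit from this step can start as a simple comparison of the facts each source states about your brand. The following is a minimal sketch under the assumption that you have already collected those facts by hand. The source names, field names, and values are invented for illustration.

```python
def find_inconsistencies(
    profiles: dict[str, dict[str, str]],
) -> dict[str, dict[str, str]]:
    """Return every field whose normalized value differs between sources.

    The result maps field name -> {source: value} for mismatched fields only.
    """
    values_by_field: dict[str, dict[str, str]] = {}
    for source, facts in profiles.items():
        for field, value in facts.items():
            values_by_field.setdefault(field, {})[source] = value
    return {
        field: by_source
        for field, by_source in values_by_field.items()
        if len({v.strip().lower() for v in by_source.values()}) > 1
    }


# Hypothetical brand facts gathered manually from three sources.
profiles = {
    "own_site": {"founded": "2019", "headquarters": "Austin, TX"},
    "linkedin": {"founded": "2019", "headquarters": "Austin, TX"},
    "g2": {"founded": "2018", "headquarters": "austin, tx"},
}

issues = find_inconsistencies(profiles)
for field, by_source in issues.items():
    print(field, by_source)
```

Normalizing case and whitespace keeps cosmetic differences from being reported, so only genuine conflicts such as the differing founding year surface.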

Step 4: Track Whether AI Is Actually Citing You

“You can’t optimize what you can’t measure” applies here more than in almost any other channel.

Manually prompting ChatGPT to see if you appear is both inefficient and misleading. Large language models introduce randomness into every response. A single test tells you almost nothing. You need volume, consistency, and cross-platform coverage to establish a real baseline.

The metrics that matter in 2026 are different from traditional SEO KPIs:

Share of Model (SoM): The percentage of target-prompt responses that include your brand. This is the AI-era equivalent of share of voice.

Citation sentiment: Whether AI describes your brand positively, neutrally, or negatively. A brand cited as “affordable but limited” has a very different conversion trajectory than one cited as “the go-to platform for enterprise teams.”

Citation provenance: Which specific URLs on your site, or which third-party pages, are generating AI citations. This tells you which assets are pulling weight and which aren’t.

Position in response: When multiple brands are listed, where do you appear? First-position citations generate meaningfully more trust and traffic than fifth-position.

| Tracking Method | Limitation | Topify Advantage |
|---|---|---|
| Manual testing | 10-20 prompts/day max, high variance | Thousands of simulated prompts, multi-platform |
| Platform-native analytics | Only covers one AI engine | Unified view across ChatGPT, Gemini, Perplexity, and more |
| Standard SEO tools | No AI citation layer | Native GEO metrics: SoM, Sentiment, Position, CVR |

Topify’s Visibility Tracking runs automated prompt simulations at scale, surfaces sentiment scoring through an NLP engine, and tracks how your citation rate changes over time. The optimization cycle typically shows measurable visibility improvement within 8 to 12 weeks of implementing structural changes.

Set a baseline before you change anything. Otherwise, you’re optimizing blind.
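A baseline for Share of Model and position can be computed from a batch of saved responses to the same prompt. The sketch below is an illustration, not Topify's method: the responses are hard-coded, the brand names are made up, and position is approximated by the order of first mention. The standard error uses the usual normal approximation for a proportion, which is why a handful of samples tells you very little.

```python
import math
import re
from typing import Optional


def brand_position(response: str, brands: list[str], target: str) -> Optional[int]:
    """1-based rank of target among brands by first mention, or None if absent."""
    first_seen = {}
    for brand in brands:
        match = re.search(rf"\b{re.escape(brand)}\b", response, flags=re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    if target not in first_seen:
        return None
    ordered = sorted(first_seen, key=first_seen.get)
    return ordered.index(target) + 1


def share_of_model(responses: list[str], brands: list[str], target: str) -> dict:
    """Summarize how often and how early the target brand is mentioned."""
    positions = [brand_position(r, brands, target) for r in responses]
    hits = [p for p in positions if p is not None]
    n = len(responses)
    share = len(hits) / n if n else 0.0
    # Standard error of a proportion; it shrinks as the sample size grows.
    stderr = math.sqrt(share * (1 - share) / n) if n else 0.0
    mean_position = sum(hits) / len(hits) if hits else None
    return {"share": share, "stderr": stderr, "mean_position": mean_position}


# Hypothetical responses to one prompt, sampled four times.
responses = [
    "Top picks: Globex, Acme, and Initech.",
    "I would recommend Acme for small teams, then Globex.",
    "Consider Globex or Initech.",
    "Initech and Globex are popular.",
]
result = share_of_model(responses, ["Acme", "Globex", "Initech"], "Acme")
print(result)
```

With only four samples the standard error is 25 percentage points, so the measured 50% share is barely informative. Running the same calculation over hundreds of samples per prompt is what turns it into a usable baseline.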

Step 5: Close the Gap Between You and the Brands AI Prefers

AI citation in most categories follows a concentrated pattern. AI typically cites 3 to 7 sources per response. If you’re not in that set, the traffic and trust go entirely to whoever is.

The question isn’t whether to compete for citations. It’s why AI is currently choosing your competitors and not you.

Three Gaps Worth Diagnosing

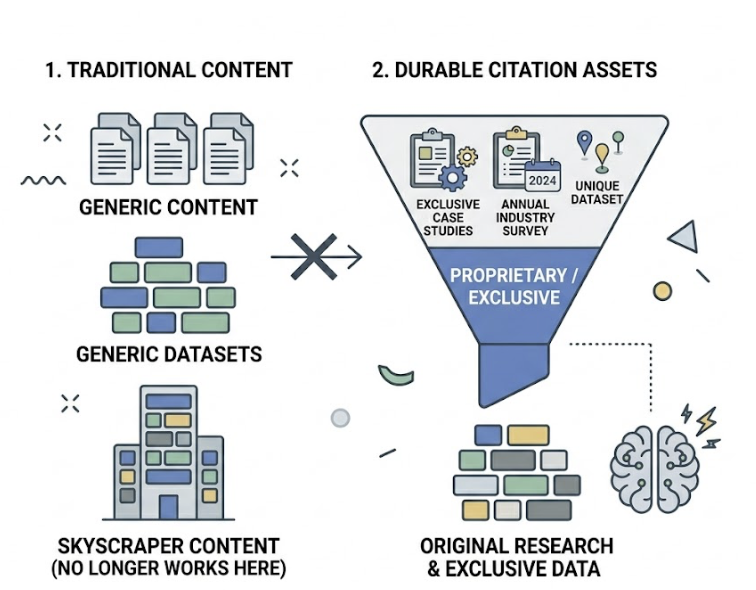

Information gain gap. Does your competitor have original research, proprietary data, or exclusive case studies that you don’t? AI is drawn to information that can’t be generated from existing training data. Publishing an annual industry survey or a dataset no one else has is one of the most durable citation assets you can build. Generic “skyscraper content” no longer works here.

Schema gap. Are competitors using structured data markup (such as the schema.org types FAQPage, Product, and OfferShippingDetails) that makes their commercial information machine-readable? Schema markup reduces the work AI has to do to extract your data. Less extraction friction means more citations.

Third-party validation gap. Is your competitor consistently referenced on Reddit, mentioned in Wikipedia, and listed in authoritative industry reports while your brand is absent? That external consensus is what AI uses to break ties between similar-quality sources.

Topify’s Competitor Monitoring gives you a live view of this: where competitors are being cited, by what sources, in what prompt contexts, and at what sentiment levels. The output isn’t just a report. It’s a gap analysis you can act on.

Once you’ve identified the gaps, the action sequence is clear. For information gain: publish original data. For schema: audit your highest-value pages and add missing markup. For third-party validation: invest in community presence in the forums and platforms your category actually uses.
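For the schema gap, adding missing markup often means emitting a JSON-LD block for content you already have. The sketch below builds schema.org `FAQPage` markup from question and answer pairs. The helper name `faq_jsonld` is hypothetical, and the sample pair is taken from this article's own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld(
    [
        (
            "Does my Google ranking affect whether AI cites me?",
            "There's a correlation, but not a direct causal link.",
        ),
    ]
)
print(markup)
```

The resulting string would typically be placed in the page inside a `script` element with the type `application/ld+json`, ideally rendered server-side so crawlers that do not run JavaScript can still read it.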

The 5-Step AI Citation Checklist at a Glance

| Step | Core Action | Supporting Tool |
|---|---|---|
| 1. Identify high-value prompts | Build a prompt library of 50-100 commercial-intent queries | Topify AI Volume Analytics |
| 2. Restructure content for extraction | Implement semantic chunking, FAQ sections, comparison tables | Topify One-Click GEO Execution |
| 3. Build cross-web authority | Audit brand consistency, pursue digital PR in high-citation channels | Topify Source Analysis |
| 4. Track citation performance | Establish SoM baseline, monitor sentiment and position | Topify Visibility Tracking |
| 5. Close the competitor gap | Run gap analysis on information, schema, and third-party validation | Topify Competitor Monitoring |

Conclusion

AI citation isn’t luck. It’s what happens when a brand consistently provides clear, structured, verifiable information across the right channels.

The brands winning in AI search right now didn’t stumble into citations. They built the content architecture, the authority footprint, and the measurement system that makes citation predictable. That’s achievable for any brand willing to treat GEO as a structured channel, not an afterthought.

Get started with Topify to see exactly which of your pages are generating AI citations, which prompts you’re missing, and where your competitors are pulling ahead.

FAQ

Q: How long does it take for AI to start citing my content after I optimize it?

A: It depends on the platform. AI engines with live web search (like Perplexity and ChatGPT search, formerly SearchGPT) can pick up newly indexed content within days. For models that rely on training data snapshots, the lag can be several months. In practice, GEO optimization on real-time AI platforms typically shows measurable citation improvement within 8 to 12 weeks.

Q: Does my Google ranking affect whether AI cites me?

A: There’s a correlation, but not a direct causal link. Roughly 38% of AI citations come from pages in Google’s top 10, but that figure is declining as AI engines develop more independent evaluation logic. A page ranking #12 with a clean structure, strong schema markup, and clear factual content often outperforms a #3 page that’s dense, slow-loading, or marketing-heavy.

Q: Which AI platforms should I prioritize?

A: Prioritize based on where your audience actually asks questions. If your category involves frequent factual queries or product research, Perplexity is high-priority. If your audience uses AI for complex decision-making, ChatGPT should be central. Google AI Overviews is non-negotiable for most brands given Google’s search volume. Ideally, you’re tracking all three simultaneously.

Q: Can a smaller brand realistically compete with large incumbents for AI citations?

A: Yes, and in some ways GEO is more democratic than traditional SEO. AI evaluates content quality and structural clarity more than raw domain authority or budget. A smaller brand that publishes original data, maintains consistent schema markup, and builds genuine community presence can capture citation share from much larger competitors in a specific niche. The information gain advantage is not something money can simply buy.