Your organic traffic looks fine. Conversion rates are quietly dropping. And you’re not sure why.

Here’s one explanation worth taking seriously: a growing share of your buyers never visit your site anymore. They ask ChatGPT, get a synthesized answer with 3 sources, and move on. Your brand isn’t one of the 3. You don’t show up in the zero-click moment that now shapes the decision.

That’s the gap AI citation data is starting to quantify.

AI Search Is Already Your Buyers’ First Stop

The shift happened faster than most teams planned for.

As of late 2025, 50% of the US population is actively using AI-powered search engines to make buying decisions. In high-consideration categories like finance, consumer electronics, and wellness, reported usage ranges from 40% to 55% of consumers. And according to McKinsey, 44% of AI search users now consider these platforms their primary source of information, ahead of traditional search (31%) and brand websites (9%).

The AI Overview trigger rate tells the same story in a different way. In January 2025, Google’s AI Overviews appeared on 6.49% of searches. By December 2025, that rate had doubled to 13.14%. Projections put it at 47% by the end of 2026.

That means nearly half of all Google searches could produce an AI-synthesized answer before a user ever sees your link.

There Are Only 3 to 5 Citation Slots Per AI Response

This is the number that should reset how you think about visibility.

In traditional search, the first page of results offers ten opportunities to appear. In a generative response, Profound’s analysis of 680 million citations found that AI platforms typically surface just 2 to 12 sources per answer, depending on the engine. Google AI Overviews cite 3 to 5 sources. ChatGPT typically cites 2 to 4. That’s not a funnel. That’s a bottleneck.

The competitive dynamics get sharper when you factor in platform fragmentation. The overlap in which sources different AI engines actually cite is strikingly low.

| Platform | Citations per Response | Overlap with Other Platforms |
|---|---|---|
| ChatGPT | 2-4 | 11-12% |
| Perplexity | 5-12 | 11% |
| Google AI Overview | 3-5 | 13.7% |
| Claude | Variable | Low |

An 11% overlap means a brand dominating ChatGPT citations is probably invisible in Perplexity. Perplexity, notably, pulls 46.7% of its top citations from Reddit-style community content. ChatGPT skews toward long-form, authoritative prose. These aren’t variations of the same game. They’re different games.
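If you want to estimate overlap on your own prompts, here is a minimal sketch. It assumes overlap is measured as Jaccard similarity over cited domains, which may not match how the figures above were calculated. The platform names, URLs, and helper functions are illustrative placeholders.

```python
from itertools import combinations
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Reduce a URL to its host, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def jaccard_overlap(a: set[str], b: set[str]) -> float:
    """Shared domains divided by all distinct domains across both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical citations collected by hand for one prompt (made-up URLs).
cited = {
    "chatgpt": [
        "https://www.example.com/guide",
        "https://vendor-a.example/pricing",
    ],
    "perplexity": [
        "https://forum.example/thread/1",
        "https://example.com/guide",
        "https://vendor-b.example/review",
    ],
}

# Compare platforms at the domain level rather than the exact-URL level.
domains = {name: {domain(u) for u in urls} for name, urls in cited.items()}

for left, right in combinations(domains, 2):
    score = jaccard_overlap(domains[left], domains[right])
    print(left, right, round(score, 2))
```

In this toy data the two platforms share one domain out of four distinct ones, so the overlap is 0.25. Averaging the score across many prompts gives a steadier estimate than any single prompt.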

The Brands That Do Get Cited Convert at 5x the Rate

Here’s what makes AI citation worth competing for.

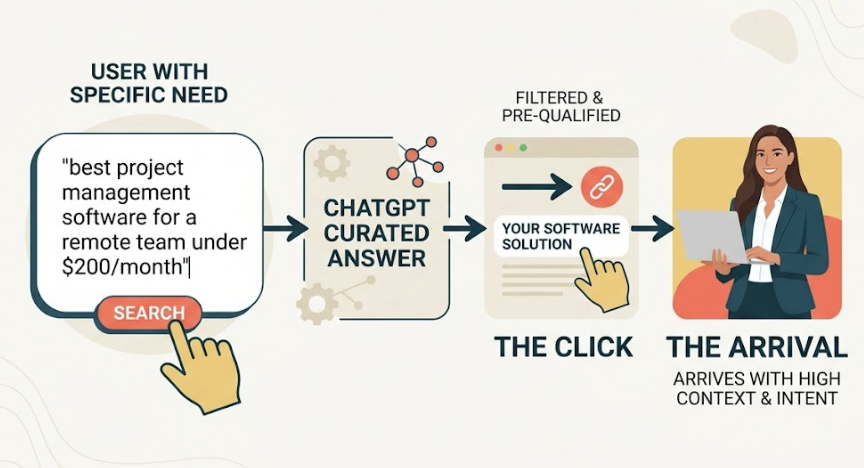

AI-referred users aren’t casual browsers. The synthesis process acts as a pre-qualification filter. By the time someone clicks through from a ChatGPT recommendation for “best project management software for a remote team under $200/month,” they’ve already received a curated answer. They arrive with context and intent.

The data reflects this.

| Metric | Organic Search | AI Referral |
|---|---|---|
| Conversion Rate | 2.8% | 14.2% |
| Pages per Session | 1.8 | 4.2 |
| Time on Site | 2.5 min | 8-10 min |
| Lead Conversion ROI | Baseline | 2.8x-4x higher |

AI platforms currently drive only 0.15% of global internet traffic. But the users they send stay roughly three to four times longer on site. In one documented case, a SaaS firm saw leads from AI referrals convert at 2.8 times the rate of organic traffic, producing a 288% ROI on their GEO investment without changing total traffic volume at all.

The referral is rarer. The referral is worth dramatically more.
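To see what that premium means in practice, here is a short worked calculation using the two conversion rates from the table above. The visit counts are hypothetical and only there to illustrate the arithmetic.

```python
# Conversion rates taken from the comparison table above.
organic_rate = 0.028
ai_rate = 0.142


def expected_conversions(visits: int, rate: float) -> float:
    """Expected number of conversions for a given visit count and rate."""
    return visits * rate


# How many organic visits one AI-referred visit is "worth" in conversions.
multiple = ai_rate / organic_rate
print(f"AI referral converts at {multiple:.2f}x the organic rate")

# Hypothetical monthly traffic mix (assumed numbers, not from the article).
organic_visits, ai_visits = 10_000, 200

organic_conv = expected_conversions(organic_visits, organic_rate)
ai_conv = expected_conversions(ai_visits, ai_rate)
ai_share = ai_conv / (organic_conv + ai_conv)
print(f"AI share of conversions: {ai_share:.1%}")
```

With this assumed mix, AI referrals are about 2% of visits but about 9% of conversions. That is the "rarer but worth more" effect in numbers.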

Your #1 Google Ranking Doesn’t Secure Your AI Citation

This is where most marketing teams have a false sense of security.

Traditional organic rankings only account for 17% to 38% of citations that appear in Google AI Overviews. A competitor in position 23 on a Google results page may be the primary source cited in the AI answer if their content is more extractable. The AI doesn’t honor your PageRank. It pulls what it can confidently reformulate.

That means a competitor you haven’t tracked in years, one sitting far below you in traditional rankings, may currently be teaching ChatGPT, Perplexity, and Gemini what your category is about.

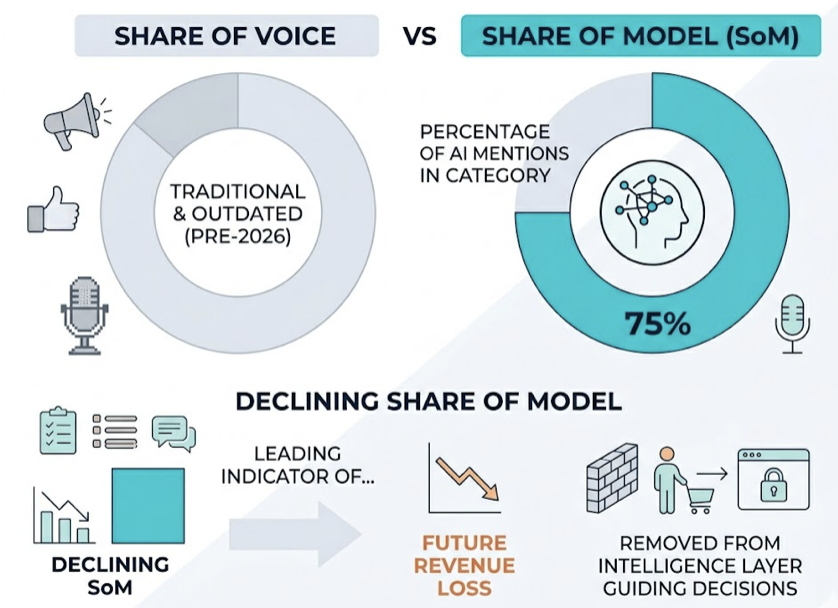

This is why Share of Model (SoM), not Share of Voice, is the metric that actually matters in 2026. SoM measures the percentage of AI-generated responses in your category that mention or cite your brand. A declining SoM is a leading indicator of future revenue loss — it shows your brand is being systematically removed from the intelligence layer that guides decisions before a buyer ever reaches your website.
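A rough way to compute SoM yourself is sketched below. It assumes you have already collected a sample of AI answers for your category prompts. The brand names and answer texts are invented, and plain substring matching is a simplification.

```python
def share_of_model(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention the brand (case-insensitive)."""
    if not responses:
        return 0.0
    needle = brand.lower()
    hits = sum(needle in text.lower() for text in responses)
    return hits / len(responses)


# Hypothetical answers sampled for one category (made-up brands and text).
sampled = [
    "Top picks include Acme and Globex for remote teams.",
    "Globex is often recommended for small budgets.",
    "Consider Initech or Globex.",
    "Acme offers the best integrations.",
]

for brand in ("Acme", "Globex", "Initech"):
    print(f"{brand}: {share_of_model(sampled, brand):.0%}")
```

Substring matching will miscount brands whose names are common words or appear inside other words, so a production version would likely need stricter matching. Because answers vary between runs, sample each prompt several times before reading much into a change.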

Topify’s Citation Gap Analysis is built specifically for this problem. It identifies which competitor URLs are currently being cited in your category, what information those pages provide that yours don’t, and which queries you’re absent from entirely. That makes prioritization concrete rather than intuitive.

Publishing More Content Won’t Fix a Citation Problem

The instinct is reasonable: produce more, rank more, get cited more. The data doesn’t support it.

A Princeton University study (Aggarwal et al., 2023) tested nine different optimization approaches for AI visibility. Keyword density optimization — the core tactic of legacy SEO — showed low to negative impact on citation rates. What actually moves the needle looks different.

| Optimization Method | Traditional SEO Impact | GEO/AI Citation Impact |
|---|---|---|
| Keyword density | High | Low / Negative |
| Citing external sources | Neutral | +115.1% visibility |
| Adding statistics & data | Moderate | +37-40% visibility |
| Expert quotations | Low | +30% visibility |
| Structured data (FAQ, lists) | High | High (essential) |

Content updated within the last 30 to 90 days receives approximately 2.3 times more citations than older material. Recency matters. But recency alone, without structural depth, isn’t enough.

AI models prioritize what they can extract with confidence: direct answers in the first 40 to 60 words of a section, tables, cited statistics, attributed expert quotes. Marketing prose that reads well to a human is often invisible to a language model scanning for extractable facts.
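One way to spot-check a page against these signals is a simple heuristic like the sketch below. It only approximates "extractability": it checks whether a section's opening paragraph stays within a word budget and contains at least one number. The function name and the 60-word threshold are assumptions drawn from the guideline above, not a known ranking rule.

```python
import re


def opening_audit(section_text: str, max_words: int = 60) -> dict:
    """Report basic signals for the first paragraph of a section."""
    # Treat a blank line as the paragraph boundary.
    first_paragraph = section_text.strip().split("\n\n", 1)[0]
    words = first_paragraph.split()
    return {
        "opening_words": len(words),
        "within_limit": len(words) <= max_words,
        "has_number": bool(re.search(r"\d", first_paragraph)),
    }


# Hypothetical section text.
section = "AI Overviews cite 3 to 5 sources.\n\nMore detail follows."
print(opening_audit(section))
```

A passing result does not guarantee a citation. It is a quick way to flag sections whose first paragraph is long, vague, or free of any concrete figure.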

How to Actually Track Which Sources AI Is Citing

You can’t optimize what you haven’t measured. And most analytics dashboards weren’t built for this.

A practical GEO monitoring workflow starts with querying 20 to 50 high-intent buyer prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews to establish a baseline. From there, the work is identifying which URLs are currently cited for those prompts and what those pages contain that yours don’t.
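The baseline step can start as a simple log you fill in by hand. The sketch below is one possible shape for it, not a reference implementation. It records which URLs each platform cited for each prompt, then reports the most-cited domains and the prompts where your domain is missing. The `Observation` type, the prompts, and all domains are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str
    cited_urls: tuple[str, ...]


def host(url: str) -> str:
    """Lower-cased host without a leading 'www.'."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def domain_counts(observations: list[Observation]) -> Counter:
    """Count how many observations cite each domain (once per answer)."""
    counts: Counter = Counter()
    for obs in observations:
        counts.update({host(u) for u in obs.cited_urls})
    return counts


def missing_prompts(observations: list[Observation], own_domain: str):
    """(prompt, platform) pairs where own_domain was not cited."""
    return sorted(
        (obs.prompt, obs.platform)
        for obs in observations
        if own_domain not in {host(u) for u in obs.cited_urls}
    )


# Hypothetical baseline recorded manually from two platforms.
baseline = [
    Observation("best crm for startups", "chatgpt",
                ("https://www.rival.example/crm-guide",
                 "https://yourbrand.example/crm")),
    Observation("best crm for startups", "perplexity",
                ("https://forum.example/t/crm",
                 "https://rival.example/crm-guide")),
]

print(domain_counts(baseline).most_common(3))
print(missing_prompts(baseline, "yourbrand.example"))
```

Here the rival domain is cited in both answers while yours is absent on Perplexity, which is exactly the gap to investigate first. Re-running the same prompts on a fixed schedule turns this log into a trend line.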

Topify’s Source Analysis automates this across all major AI platforms, tracking citation patterns weekly. It surfaces which domains are being referenced in your category, flags when a competitor displaces you for a high-value prompt, and generates a unified GEO Score that aggregates performance across platforms. Given that AI responses are non-deterministic and shift with model updates, weekly monitoring isn’t optional — it’s the minimum viable cadence.

There’s also a technical layer most brands overlook. Many CDNs, including Cloudflare, can block AI crawlers like GPTBot and PerplexityBot by default, and a restrictive robots.txt can do the same. These are two separate layers: a robots.txt rule does not override a block applied at the CDN. If these bots aren’t allowed at both, you may be entirely invisible in AI answers regardless of content quality. Checking both is often a 15-minute fix with potentially significant impact.
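The robots.txt side of this check is easy to script. The sketch below uses Python's standard `urllib.robotparser` to test whether given user agents may fetch a URL under a set of rules. The robots.txt content and the URL are made-up examples. It says nothing about CDN or firewall rules, which have to be checked in the CDN's own settings or in your server logs.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the article; extend the list as needed.
AI_CRAWLERS = ["GPTBot", "PerplexityBot"]


def crawler_access(robots_txt: str, url: str, agents=AI_CRAWLERS) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks GPTBot but allows everything else.
example_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(crawler_access(example_robots, "https://yourbrand.example/pricing"))
```

For this example file the result shows GPTBot blocked and PerplexityBot allowed. To test a live site, paste in the contents of your own `/robots.txt`.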

Conclusion

AI citation is not a variation of SEO. It’s a separate distribution channel where the rules, the metrics, and the competitive landscape are fundamentally different.

The 3 to 5 citation slots per AI response, the 5x conversion premium for AI-referred traffic, the 11% platform overlap: these numbers collectively describe a winner-takes-most environment that’s forming right now. With traditional search volume projected to drop 25% by 2026, the brands that earn consistent AI citations won’t just compensate for lost clicks. They’ll capture a disproportionate share of buyer attention in the channel that’s replacing the one they’re currently optimizing for.

The window for early positioning is open. It won’t stay open long.

FAQ

What is AI citation and why does it matter for SEO?

AI citation refers to when a generative AI platform like ChatGPT, Perplexity, or Google AI Overviews references a specific source URL in its synthesized answer. It matters because AI platforms are increasingly the first stop in a buyer’s research process, and a brand that isn’t cited in these answers is invisible at the moment intent is highest — even if it ranks #1 on traditional search.

How do AI platforms decide which sources to cite?

Each platform uses different retrieval logic, which is why citation overlap between platforms sits at just 11-13.7%. Common factors across platforms include content recency (updated within 30-90 days), the presence of cited statistics and external references, structured formatting (tables, lists, FAQ schemas), and domain authority signals from trusted third-party sites.

Can I improve my brand’s AI citation rate without rewriting everything?

Yes. Two of the highest-impact starting points are technical: ensuring AI crawlers like GPTBot and PerplexityBot aren’t blocked by your CDN or robots.txt, and implementing JSON-LD structured data on key pages. On the content side, adding verifiable statistics with source attribution and making the first 40-60 words of each section directly answer the implied question can meaningfully improve extractability.
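For the structured data step, here is a minimal sketch that builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. The helper name and the sample text are illustrative. Whether a given AI platform reads this markup is not something this article's data confirms.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical FAQ entry.
snippet = faq_jsonld([
    ("What is AI citation?",
     "AI citation is when a generative AI platform references a source URL."),
])
print(snippet)
```

The output goes inside a `<script type="application/ld+json">` tag in the page's HTML. Keep the marked-up questions and answers identical to what is visible on the page.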

How do I know if competitors are outranking me in AI answers?

Traditional rank tracking tools don’t capture this. You need to run high-intent prompts across AI platforms and record which sources are cited — or use a platform like Topify that automates this monitoring across ChatGPT, Perplexity, Gemini, and Google AI Overviews and alerts you when a competitor displaces your brand for a tracked prompt.