Your SEO rankings look solid. Traffic is up. But when a potential customer opens ChatGPT and types “what’s the best [your category] tool for mid-sized companies,” your brand doesn’t come up. A competitor does. Three times.

That gap, between where you rank on Google and where you land in AI answers, is where the new competitive battle is being fought. And most brands don’t even know they’re losing it.

Most Brands Are Invisible to AI Search Without Knowing It

Traditional search gives every brand multiple shots. A user clicks through three links, compares pages, and eventually finds you. AI search doesn’t work that way.

When ChatGPT or Perplexity synthesizes an answer, it picks two or three sources and presents them as the definitive response. If your brand isn’t cited, it doesn’t exist in that conversation. The user doesn’t scroll down to find you.

This is what researchers call an “AI visibility gap”: brands that dominate Google rankings but have zero presence in AI-generated answers. It’s not a penalty. It’s just that AI systems never learned to trust your content as a reliable source.

That’s fixable. But first, you need to know where you actually stand.

What AI Citation Actually Means (And Why It’s Not the Same as SEO)

An AI citation isn’t a backlink. It’s not a ranking. It’s something more specific: when an AI system selects your content as evidence to support a claim it’s making.

Traditional SEO is built on keyword matching and domain authority. AI citation runs on a different logic entirely, based on a process called Retrieval-Augmented Generation (RAG). The AI converts passages of your content into semantic vectors, compares them against a vector representation of the user’s query, and decides whether your information is specific, accurate, and trustworthy enough to quote.
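To make the retrieval step concrete, here is a minimal sketch of passage ranking by vector similarity. It is an illustration under loud assumptions: production systems use learned embedding models, while this toy uses plain word counts as the "vector", and the brand "Acme Invoicing" and both passages are made up.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (stand-in for a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_passages(query: str, passages: list[str]) -> list[tuple[float, str]]:
    """Score every passage against the query and return them best-first."""
    q = embed(query)
    return sorted(((cosine(q, embed(p)), p) for p in passages), reverse=True)


# Hypothetical passages: one vague, one with clear entities and facts.
passages = [
    "We are a passionate team building innovative solutions for modern businesses.",
    "Acme Invoicing is an invoicing tool for mid-sized companies with 50 to 500 employees.",
]
query = "best invoicing tool for mid-sized companies"
ranked = rank_passages(query, passages)

for score, passage in ranked:
    print(f"{score:.2f}  {passage}")
```

Even in this crude form, the passage that names the product, the category, and the audience outranks the generic one, which is the intuition behind writing for extractability.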

The difference matters because a brand with a domain authority of 80 can still get zero AI citations if its content lacks what AI retrieval systems look for: factual density, clear entity definitions, and external corroboration. The research report from this field puts it plainly: AI citation is about being a source of evidence, not a source of traffic.

The table below shows where the two systems diverge:

| Dimension | Traditional SEO | AI Citation (GEO) |
|---|---|---|
| Core unit | Web pages (URLs) | Semantic passages / chunks |
| Key signals | Backlinks, keyword density | Factual density, entity clarity |
| User experience | Click-through to your site | Zero-click, answer delivered directly |
| Citation purpose | Promotion and visibility | Fact verification and evidence |
| How you measure it | Rankings | Citation frequency, Share of Voice |

How to Manually Check Your AI Citations Right Now

Before setting up any monitoring system, run a manual audit. It takes about 15 minutes across three platforms and tells you whether you have a citation problem worth solving.

The goal isn’t to search your brand name. It’s to simulate how real buyers actually ask questions, then see whether your brand appears in the response.

ChatGPT

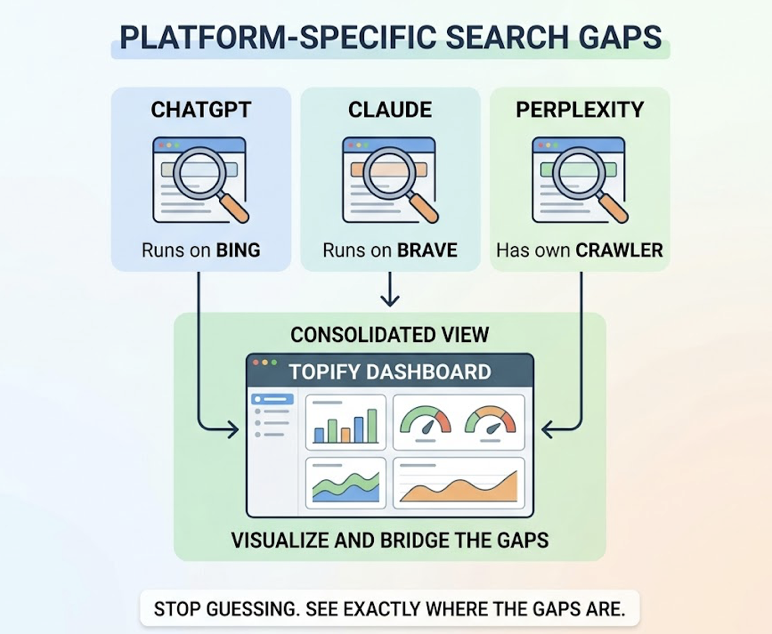

ChatGPT’s search mode (available in GPT-4o with browsing enabled) pulls from Bing’s index, semantically reranks the top results, and synthesizes an answer. It tends to weight recency and source specificity.

Use prompts like: “What are the most recommended [your product category] tools for mid-sized companies in 2026? Give me the top three with reasons.”

What to look for: Is your brand listed as a primary recommendation, or just mentioned in passing? More importantly, check the footnotes. If ChatGPT cites a competitor’s website in the sources but only mentions your brand in the text, your content is losing the “information gain” competition. The AI found a competitor’s page more useful as evidence.

Perplexity

Perplexity is a citation-first engine. Every sentence it generates needs a source. It pulls from Google, Bing, and its own crawl index, and it weights recency heavily.

Try: “Compare [your brand] and [competitor] on [specific capability]. Include recent user reviews and technical documentation.”

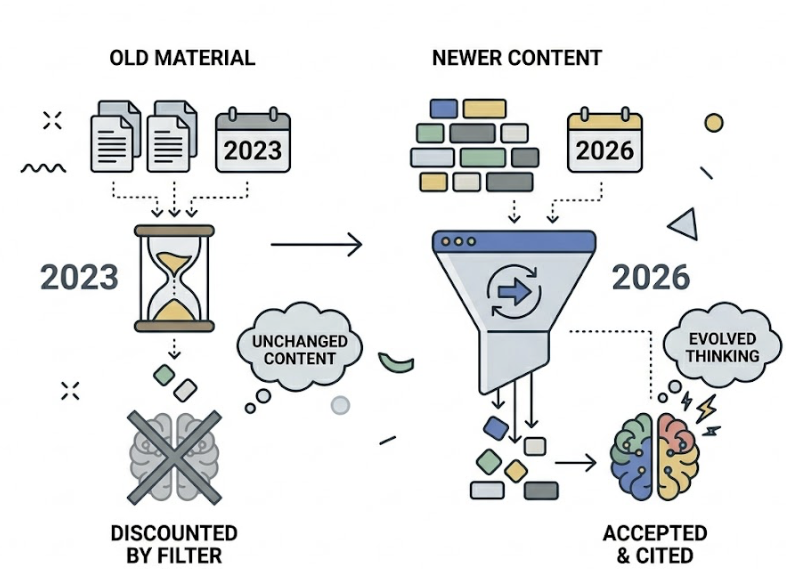

What to look for: If the cited sources are two or more years old, your newer content hasn’t passed Perplexity’s time decay filter. Perplexity discounts older material systematically. Being cited from a 2023 blog post in 2026 is almost worse than not being cited, because it signals to users that your thinking hasn’t evolved.
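If you record the sources Perplexity cites during your audit, a few lines of Python can flag the stale ones. This is a rough sketch: the 730-day cutoff simply mirrors the "two years" rule of thumb above and is not Perplexity's actual decay threshold, and the URLs and dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CitedSource:
    url: str
    published: date


def stale_sources(sources: list[CitedSource], today: date, max_age_days: int = 730) -> list[CitedSource]:
    """Return cited sources older than max_age_days (assumed cutoff, roughly two years)."""
    return [s for s in sources if (today - s.published).days > max_age_days]


# Hypothetical audit notes from one Perplexity answer.
cited = [
    CitedSource("https://example.com/blog/2023-guide", date(2023, 3, 1)),
    CitedSource("https://example.com/docs/2025-release", date(2025, 9, 10)),
]
audit_day = date(2026, 1, 15)
stale = stale_sources(cited, audit_day)

for source in stale:
    age = (audit_day - source.published).days
    print(f"Stale citation ({age} days old): {source.url}")
```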

Claude

Claude uses Brave Search as its primary retrieval infrastructure. Research shows that Brave’s search results correlate with Claude’s citations at a rate of 86.7%, which means your Brave Search presence is a strong proxy for your Claude visibility.

Try: “From an expert perspective, what is [your brand]’s core methodology for solving [specific customer problem]? How does it differ from industry standards?”

What to look for: Can Claude describe your product accurately and specifically? If it gives a vague or generic description, it means your brand hasn’t been clearly defined in the external sources Claude trusts. Wikipedia, industry white papers, and analyst coverage are the “trust anchors” Claude relies on most.

5 Signs Your Brand Has an AI Citation Problem

After running those checks, you’ll have raw observations. Here’s how to turn them into a diagnosis.

Low citation frequency. In 10 queries about core problems you solve, your brand appears in fewer than 3. The research benchmark is clear: brands cited fewer than 30% of the time on their core topic have a content extractability problem. AI systems can’t pull clean facts from your pages.

Competitor displacement. The AI describes a competitor’s features in detail and only mentions you in passing. This signals that in AI semantic space, your competitor has established a stronger association with your category. They’ve achieved what researchers call “semantic monopoly.”

Outdated or negative sentiment. The AI pulls a two-year-old review or a discontinued product mention. Old negative signals haven’t been overwritten by newer positive content. AI systems don’t automatically forget bad data; you have to bury it with volume and authority.

Source mismatch. The AI cites a Reddit thread or third-party review to explain your pricing, rather than your own pricing page. This means your official content has poor machine readability. The Reddit thread was more extractable than your website.

Entity ambiguity. When asked about your brand, the AI gives the wrong industry classification or confuses you with another company. This is the most serious signal. Your brand’s entity identity hasn’t been established in the knowledge graph that AI systems draw from.
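The first sign is the easiest to quantify. Below is a minimal sketch for tallying a manual audit and comparing it with the 30% benchmark mentioned above. The `AuditResult` record and `summarize` helper are hypothetical names for illustration, and the ten results are placeholder data, not real measurements.

```python
from dataclasses import dataclass


@dataclass
class AuditResult:
    prompt: str
    mentioned: bool  # brand name appeared in the answer text
    cited: bool      # brand's own content appeared in the listed sources


def summarize(results: list[AuditResult], threshold: float = 0.30) -> dict:
    """Compute mention and citation rates and flag rates below the benchmark."""
    total = len(results)
    if total == 0:
        raise ValueError("need at least one audit result")
    citation_rate = sum(r.cited for r in results) / total
    mention_rate = sum(r.mentioned for r in results) / total
    return {
        "queries": total,
        "mention_rate": mention_rate,
        "citation_rate": citation_rate,
        "below_benchmark": citation_rate < threshold,
    }


# Placeholder audit: 10 core-topic queries, 5 mentions, 2 citations.
results = [
    AuditResult(f"core question {i}", mentioned=i < 5, cited=i < 2)
    for i in range(10)
]
report = summarize(results)
print(report)
```

Tracking mentions and citations separately also makes competitor displacement and source mismatch easier to spot, since both show up as a mention rate well above the citation rate.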

Why Running Manual Checks Every Week Doesn’t Scale

Here’s the core problem with the manual approach: LLMs are stochastic. The same query, run twice, can return different sources. A single test gives you one data point from one moment in one model’s probabilistic output.

To get statistically meaningful visibility data, you’d need to run hundreds of prompt variations, across multiple AI platforms, on a consistent schedule, and then aggregate the results. Manually. Every week.

That’s not realistic for any team.
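A quick way to see why sample size matters is to put an uncertainty range around a citation rate. The sketch below uses the Wilson score interval, a standard statistical method chosen here for illustration; the article's sources do not prescribe a specific technique. It also assumes each query run is independent, which real LLM sessions only approximate.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return center - half, center + half


# Same observed 30% citation rate, very different certainty.
for hits, runs in [(3, 10), (300, 1000)]:
    low, high = wilson_interval(hits, runs)
    print(f"{hits}/{runs} cited: plausible rate between {low:.0%} and {high:.0%}")
```

With 10 runs, a 30% observed rate is compatible with a true rate anywhere from roughly 11% to 60%. With 1,000 runs the range narrows to a few points either side of 30%, which is the kind of precision a weekly trend line needs.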

This is where a platform like Topify changes the equation. Instead of running 10 manual checks, Topify executes thousands of simulated queries daily, covering long-tail prompt variations your team would never think to test. The result isn’t a snapshot; it’s an AI Visibility Score (AVS) with statistical weight behind it. Scores below 10 indicate near-invisibility. Above 70 means you’re functioning as a category authority in AI answers.

The difference between a manual check and Topify’s tracking is the difference between checking the weather once and running a climate model.

How to Set Up Ongoing AI Citation Monitoring

If you’re moving from manual checks to systematic monitoring, the setup process follows three steps.

Build a prompt library first. Don’t just monitor your brand name. Structure your prompt matrix around three types of queries: buyer intent (“which [category] tool is best for [specific use case]”), entity clarity (“what is [your brand]’s approach to [core methodology]”), and competitive comparison (“[your brand] vs [competitor] for [specific need]”). This covers the full range of ways a real buyer might encounter or look for you.

Track the right metrics. Topify surfaces seven core dimensions: visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate). For most teams starting out, three matter most. Your AI Visibility Score tells you whether you’re present. Sentiment Velocity tells you whether the AI’s description of your brand is improving or declining over time. Source Forensics identifies which specific URLs are being cited, so you know which content is actually working.

Monitor across platforms, not just one. A brand can have strong ChatGPT visibility and near-zero Claude visibility. These gaps aren’t random; they reflect the different retrieval infrastructure each platform uses. ChatGPT’s search mode draws on Bing’s index. Claude draws on Brave Search. Perplexity combines Google, Bing, and its own crawl index. Topify’s dashboard consolidates these into a single view, so you can see exactly where the gaps are rather than guessing.
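The prompt library and the cross-platform schedule can be sketched in a few lines. The three templates follow the query types described above; the brand, competitors, and other fill-in values are hypothetical, and the function names are illustrative rather than part of any tool.

```python
from itertools import product

# Templates for the three query types: buyer intent, entity clarity, comparison.
TEMPLATES = {
    "buyer_intent": "Which {category} tool is best for {use_case}?",
    "entity_clarity": "What is {brand}'s approach to {methodology}?",
    "competitive_comparison": "{brand} vs {competitor} for {need}",
}
PLATFORMS = ["ChatGPT", "Perplexity", "Claude"]


def build_prompt_library(brand, category, use_cases, methodologies, competitors, needs):
    """Expand the templates into (query_type, prompt) pairs."""
    prompts = []
    for use_case in use_cases:
        prompts.append(("buyer_intent",
                        TEMPLATES["buyer_intent"].format(category=category, use_case=use_case)))
    for methodology in methodologies:
        prompts.append(("entity_clarity",
                        TEMPLATES["entity_clarity"].format(brand=brand, methodology=methodology)))
    for competitor, need in product(competitors, needs):
        prompts.append(("competitive_comparison",
                        TEMPLATES["competitive_comparison"].format(
                            brand=brand, competitor=competitor, need=need)))
    return prompts


# Hypothetical inputs for illustration only.
library = build_prompt_library(
    brand="Acme Invoicing",
    category="invoicing",
    use_cases=["mid-sized agencies", "multi-currency billing"],
    methodologies=["automated reconciliation"],
    competitors=["CompetitorA", "CompetitorB"],
    needs=["audit trails", "ERP integration"],
)
# Every prompt is checked on every platform.
schedule = list(product(PLATFORMS, library))
print(f"{len(library)} prompts x {len(PLATFORMS)} platforms = {len(schedule)} checks per cycle")
```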

What to Do When Your Brand Isn’t Being Cited

Knowing you have a citation gap is step one. Closing it requires a different kind of thinking than traditional SEO.

Restructure content for AI extractability. AI retrieval systems favor high information density. Every H2 section on your site should open with a 40-60 word factual summary: a concise, self-contained statement that can stand alone as a cited passage. Think of it as writing for an AI that’s going to quote one sentence from your entire page. Which sentence would you want it to pick?
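A simple check can enforce the 40-60 word opening during content review. This sketch assumes each section body is available as plain text with paragraphs separated by blank lines; the helper names are hypothetical.

```python
def opening_summary(section_text: str) -> str:
    """Return the first non-empty paragraph of a section body."""
    for paragraph in section_text.split("\n\n"):
        if paragraph.strip():
            return paragraph.strip()
    return ""


def summary_in_range(section_text: str, low: int = 40, high: int = 60) -> bool:
    """True if the opening paragraph has between low and high words, inclusive."""
    word_count = len(opening_summary(section_text).split())
    return low <= word_count <= high


# Placeholder sections: a 45-word opener and a 5-word opener.
good_section = " ".join(["fact"] * 45) + "\n\nSupporting detail follows here."
thin_section = "Welcome to our pricing page.\n\nMore text."

print(summary_in_range(good_section))
print(summary_in_range(thin_section))
```

Word count is only a proxy. A 50-word opener still needs to be factual and self-contained to be worth quoting.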

Fix your machine readability. Deploy Schema.org structured data in JSON-LD format, especially the Organization, FAQPage, and HowTo types. The sameAs property is particularly valuable: it connects your official site to your Wikipedia, LinkedIn, and Crunchbase entries, which signals entity uniqueness to AI knowledge graphs. Also consider adding an /llms.txt file in your root directory. This is a proposed convention rather than a formal standard: a Markdown-formatted index that tells AI systems which pages are your authoritative source of truth.
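As an illustration, the snippet below generates a minimal Organization block with sameAs links. The `@context`, `@type`, `name`, `url`, and `sameAs` keys are standard Schema.org vocabulary; the company name and every URL are placeholders you would replace with your own.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Build a minimal Schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # profiles that identify the same entity elsewhere
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Acme Invoicing",
    url="https://www.example.com",
    same_as=[
        "https://en.wikipedia.org/wiki/Example",
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
    ],
)
# JSON-LD is embedded in the page inside a script tag of this type.
snippet = f'<script type="application/ld+json">\n{markup}\n</script>'
print(snippet)
```

Place the resulting script tag in the page's HTML, then validate it with a structured data testing tool before shipping.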

Build external trust signals. AI systems cite sources they already trust. Getting accurate coverage in industry directories like G2 and Capterra, in authoritative media, and in high-activity communities like Reddit increases the probability that AI retrieval systems will include you in their trusted source pool. These are the “trust seeds” that influence which brands get cited consistently.

Don’t try to fix hallucinations by deletion. If an AI is generating inaccurate descriptions of your brand, you can’t remove the bad data. The strategy is volume: publish enough accurate, high-authority content that the correct signal overwhelms the incorrect one. Researchers call this a “digital cushion strategy.”

Conclusion

Your brand’s visibility in AI search isn’t determined by your SEO rankings. It’s determined by whether AI systems have been given enough clean, credible, and extractable information about you to include you as a trusted source.

The manual checks in this guide take 15 minutes and give you a starting baseline. But if you’re serious about closing the gap, the next step is moving from one-off audits to continuous monitoring. Start with the three prompt types above, run them across ChatGPT, Perplexity, and Claude this week, and use what you find to prioritize which signals to fix first. Then set up a tracking system that removes the guesswork.

AI citation isn’t a trend you can wait out. It’s the infrastructure of how buyers discover brands now.

FAQ

Q: How often should I check my brand’s AI citations? A: For brands in fast-moving industries, weekly monitoring across all major platforms is the right cadence. Monthly checks are likely too slow to catch negative sentiment trends or citation drops before they affect pipeline. AI models update their retrieval indexes frequently, and what was true last month may not reflect your current visibility.

Q: Does being cited by AI actually drive traffic? A: Yes, though the traffic profile is different from organic search. Traffic arriving from an AI citation typically converts at a significantly higher rate because the AI has already done the initial trust-building. The research on this topic suggests that citation-referred visitors arrive with higher purchase intent than visitors from traditional search results.

Q: Can I request that AI platforms cite my brand directly? A: There’s no official appeal process or submission channel at any major AI platform. Citations are determined algorithmically through RAG logic. The only reliable path is building what researchers call “overwhelming consensus”: ensuring that accurate, structured information about your brand is consistently available across the sources AI systems are trained on and retrieve from.

Q: What’s the difference between an AI citation and an AI mention? A: A mention means the AI said your brand name in a response. A citation means the AI linked to or explicitly sourced your content as evidence. Mentions build mindshare. Citations build authority and provide a conversion path back to your site. In Topify’s scoring system, citations carry significantly more weight than plain mentions because they reflect the AI’s judgment that your content is credible enough to stake a claim on.