Your domain authority is solid. Your content ranks on the first page. Your team has published dozens of well-researched articles this quarter. Then a potential customer asks ChatGPT, “What’s the best tool for [your category]?” and gets a confident list of five recommendations. Your brand isn’t mentioned once.

This isn’t a ranking problem. It’s a citation problem, and traditional SEO metrics won’t show it to you.

What LLM Citation Tracking Actually Measures (and What Most Teams Get Wrong)

LLM citation isn’t a synonym for backlinks. In traditional SEO, a link passes authority from one page to another. In generative search, a citation is something different: it’s an AI model selecting your content as a factual source worth surfacing in a synthesized answer.

The mechanism behind this is called retrieval-augmented generation (RAG). When a user asks ChatGPT or Perplexity a question, the system first retrieves the most relevant text chunks from its indexed sources, then generates an answer grounded in those chunks, favoring passages that state facts directly and can be lifted cleanly into a response. If your content isn’t structured for extraction, it gets passed over, regardless of how authoritative your domain is.

That last point is where most teams get blindsided. Research shows that around 90% of ChatGPT citations come from pages that sit at position 21 or beyond in traditional search results. AI systems are looking for “micro-authority,” meaning content that delivers direct, structured answers, not pages that simply accumulate the most backlinks.

Three other misconceptions tend to derail citation strategies before they start. Publishing more content doesn’t linearly increase citation rate. Standard analytics tools like GA4 or Ahrefs can’t capture AI-generated, non-deterministic outputs. And SEO and GEO, while related, operate on fundamentally different logic: SEO optimizes for keyword density and page performance, while GEO optimizes for semantic density and factual accuracy.

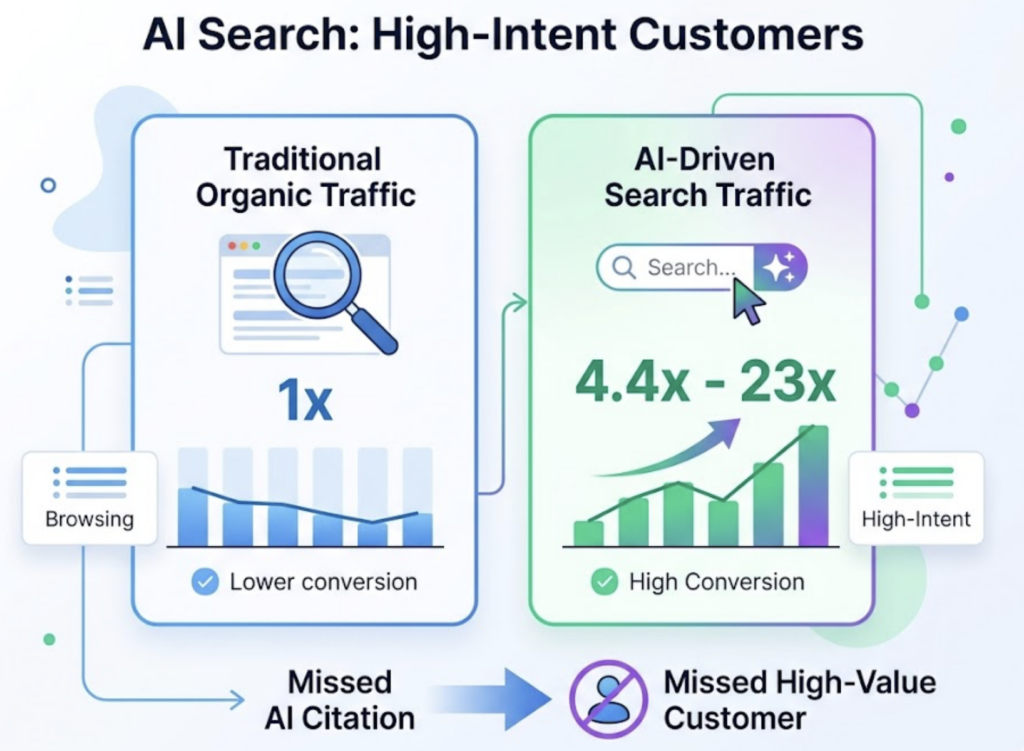

The business case for treating these as separate disciplines is hard to ignore. AI search visitors convert at 4.4x the rate of traditional organic traffic. In one documented case, AI-referred traffic represented just 0.5% of total visits but accounted for 12.1% of signups, a conversion rate 23x higher than standard organic. A missed citation isn’t just a missed click. It’s a missed high-intent customer.

The 4 Metrics at the Core of Any LLM Citation Tracking Strategy

To measure what’s happening in AI-generated answers, you need metrics built specifically for that environment. Here are the four that form the foundation of any serious LLM citation tracking strategy.

Citation Rate is the starting point: how often does your domain or URL appear as a cited source across a defined set of tracked prompts? For B2B SaaS, the industry average citation penetration sits around 0.41%, though that figure climbs to 1.22% for search result pages. This is your baseline visibility number.
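
Here’s a minimal sketch of how that baseline number could be computed, assuming each captured AI answer is logged with the list of domains it cited. The `PromptRun` record and its field names are illustrative, not any tool’s actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRun:
    """One AI answer captured for one tracked prompt (hypothetical record shape)."""
    prompt: str
    platform: str
    cited_domains: list[str] = field(default_factory=list)


def citation_rate(runs: list[PromptRun], domain: str) -> float:
    """Fraction of captured answers that cite `domain` at least once."""
    if not runs:
        return 0.0
    hits = sum(1 for run in runs if domain in run.cited_domains)
    return hits / len(runs)


# Made-up sample data for illustration only.
runs = [
    PromptRun("best crm for startups", "chatgpt", ["example.com", "rival.io"]),
    PromptRun("best crm for startups", "perplexity", ["rival.io"]),
    PromptRun("how to score leads", "chatgpt", ["example.com"]),
    PromptRun("crm pricing comparison", "chatgpt", []),
]
print(f"{citation_rate(runs, 'example.com'):.0%}")  # 2 of 4 answers -> 50%
```

Counting answers rather than individual links keeps one citation-heavy answer from inflating the number.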

Citation Position tells you where in the answer your content appears. 70% of users read only the first three lines of an AI summary. A citation buried in the fifth footnote delivers a fraction of the value of a first-position mention. The click-through rate for a last-position citation is roughly one-quarter of what a first-position citation earns.
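
One way to put a number on position, sketched under the assumption that you store each answer’s cited domains in the order they appear (the function names here are hypothetical):

```python
from statistics import mean
from typing import Optional


def first_position(cited_domains: list[str], domain: str) -> Optional[int]:
    """1-based position of the first citation of `domain`, or None if absent."""
    for position, cited in enumerate(cited_domains, start=1):
        if cited == domain:
            return position
    return None


def average_position(answers: list[list[str]], domain: str) -> Optional[float]:
    """Mean first-citation position across the answers that cite `domain`."""
    positions = [
        p for cited in answers
        if (p := first_position(cited, domain)) is not None
    ]
    return mean(positions) if positions else None


# Made-up sample: cited domains in display order for three answers.
answers = [
    ["example.com", "rival.io"],
    ["rival.io", "other.org", "example.com"],
    ["rival.io"],
]
print(average_position(answers, "example.com"))  # positions 1 and 3 -> 2
```

Answers that don’t cite you are excluded here on purpose; they already count against your citation rate.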

Citation Share vs. Competitors is the AI equivalent of share of voice. When a user asks a decision-stage question like “What are the best project management tools?”, LLMs typically surface three to five brands. If your competitors consistently occupy more of those slots than you do, the model is actively building a “competitor-first” narrative in your target audience’s mind, without you even knowing it’s happening.
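
As a rough sketch, citation share can be treated as each brand’s slice of all brand slots across your tracked answers. This assumes you’ve already extracted the brand names from each answer; the brands below are placeholders.

```python
from collections import Counter


def citation_share(answers: list[list[str]]) -> dict[str, float]:
    """Each brand's share of all brand slots, counting a brand once per answer."""
    counts = Counter(brand for brands in answers for brand in set(brands))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Made-up sample: brands surfaced in three decision-stage answers.
answers = [
    ["Acme", "Rival", "Other"],
    ["Rival", "Other"],
    ["Rival"],
]
share = citation_share(answers)
for brand, value in sorted(share.items(), key=lambda item: -item[1]):
    print(f"{brand}: {value:.0%}")  # Rival: 50%, Other: 33%, Acme: 17%
```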

Citation Consistency is the hardest metric to achieve and the most valuable. Only 11% of domains get cited by both ChatGPT and Perplexity on the same topic. The two platforms draw from very different source pools: Perplexity pulls 46.7% of its citations from Reddit, while ChatGPT draws only 11.3% from that source. Google AI Overviews (AIO), on the other hand, overlaps 93.67% with traditional top-10 results. Achieving cross-platform citation coverage can increase your visibility in ChatGPT answers by 2.8x.
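
A simple way to score consistency is the fraction of topics where every tracked platform cites you. The sketch below assumes a nested mapping of topic to platform to cited domains, which is an illustrative layout rather than a standard one.

```python
def cross_platform_consistency(
    citations: dict[str, dict[str, set[str]]],
    domain: str,
    platforms: list[str],
) -> float:
    """Fraction of topics where `domain` is cited on every listed platform."""
    if not citations:
        return 0.0
    consistent = sum(
        1
        for by_platform in citations.values()
        if all(domain in by_platform.get(p, set()) for p in platforms)
    )
    return consistent / len(citations)


# Made-up sample: citations[topic][platform] = domains cited for that topic.
citations = {
    "crm pricing": {
        "chatgpt": {"example.com", "rival.io"},
        "perplexity": {"example.com", "reddit.com"},
    },
    "lead scoring": {
        "chatgpt": {"example.com"},
        "perplexity": {"reddit.com"},
    },
}
score = cross_platform_consistency(citations, "example.com", ["chatgpt", "perplexity"])
print(f"{score:.0%}")  # cited on both platforms for 1 of 2 topics -> 50%
```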

How to Build Your LLM Citation Tracking Strategy: A 5-Step Framework

Step 1: Design Your Prompt Corpus

You can’t track everything, so you need a defined set of prompts that map to real business value. Structure your prompt library across three layers: brand and product queries (“What does [brand] do?”), mid-funnel decision queries (“What’s the best [category] tool?”), and top-funnel informational queries (“How do I solve [industry pain point]?”).

Keep your prompt corpus between 50 and 100 prompts. Below 50, the sample is too small for the results to be statistically meaningful. Also worth noting: AI search users tend to type full sentences of seven or more words rather than keyword fragments, so build your prompts accordingly.
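
To make those rules checkable, here’s a small sketch that audits a prompt library against the three layers, the 50 to 100 size range, and the seven-word guideline. The class and function names are hypothetical, and the limits are just the ones stated above turned into defaults.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    BRAND = "brand"
    DECISION = "decision"
    INFORMATIONAL = "informational"


@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    layer: Layer


def audit_corpus(prompts, min_size=50, max_size=100, min_words=7):
    """Return human-readable warnings; an empty list means the corpus passes."""
    warnings = []
    if not min_size <= len(prompts) <= max_size:
        warnings.append(f"corpus size is {len(prompts)}; target is {min_size}-{max_size}")
    missing = set(Layer) - {p.layer for p in prompts}
    for layer in sorted(missing, key=lambda item: item.value):
        warnings.append(f"no prompts in the {layer.value} layer")
    short = [p.text for p in prompts if len(p.text.split()) < min_words]
    if short:
        warnings.append(f"prompts shorter than {min_words} words: {len(short)}")
    return warnings


# Made-up two-prompt corpus, deliberately too small and missing a layer.
corpus = [
    TrackedPrompt("What does Acme do?", Layer.BRAND),
    TrackedPrompt("What is the best CRM tool for a small sales team?", Layer.DECISION),
]
for warning in audit_corpus(corpus):
    print(warning)
```

Brand queries are often naturally short, so you may want to apply the word-count check only to the decision and informational layers.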

Step 2: Establish a Baseline Citation Snapshot

Before optimizing anything, document where you currently stand. Run your prompt corpus across at least ChatGPT, Perplexity, and Google AIO. Record which URLs are being cited, the context in which they’re cited (positive, neutral, or framed in a way that undermines your positioning), and which prompts are currently going entirely to competitors. This baseline is the reference point every future improvement gets measured against.
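
A baseline is easier to compare against later if every answer is stored in the same shape. The sketch below shows one possible record layout and a helper that lists prompts where sources were cited but never yours. All field names are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass
class SnapshotRow:
    captured_on: str            # ISO date of the run
    platform: str
    prompt: str
    cited_urls: list[str]       # every URL cited in the answer, yours or not
    our_framing: Optional[str]  # "positive", "neutral", "negative", or None if not cited


def cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is `domain` or one of its subdomains."""
    host = urlparse(url).netloc.lower()
    return host == domain or host.endswith("." + domain)


def competitor_only_prompts(rows: list[SnapshotRow], our_domain: str) -> list[str]:
    """Prompts that produced citations on some platform but never cited us."""
    cited_us = {
        r.prompt for r in rows
        if any(cites_domain(u, our_domain) for u in r.cited_urls)
    }
    cited_anyone = {r.prompt for r in rows if r.cited_urls}
    return sorted(cited_anyone - cited_us)


def to_jsonl(rows: list[SnapshotRow]) -> str:
    """Serialize the snapshot as one JSON object per line for archiving."""
    return "\n".join(json.dumps(asdict(r)) for r in rows)


# Made-up rows for illustration only.
rows = [
    SnapshotRow("2025-01-06", "chatgpt", "best crm for startups",
                ["https://rival.io/compare"], None),
    SnapshotRow("2025-01-06", "perplexity", "how to score leads",
                ["https://www.example.com/guide"], "neutral"),
]
print(competitor_only_prompts(rows, "example.com"))  # ['best crm for startups']
```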

Step 3: Audit the Structural Characteristics of Cited Content

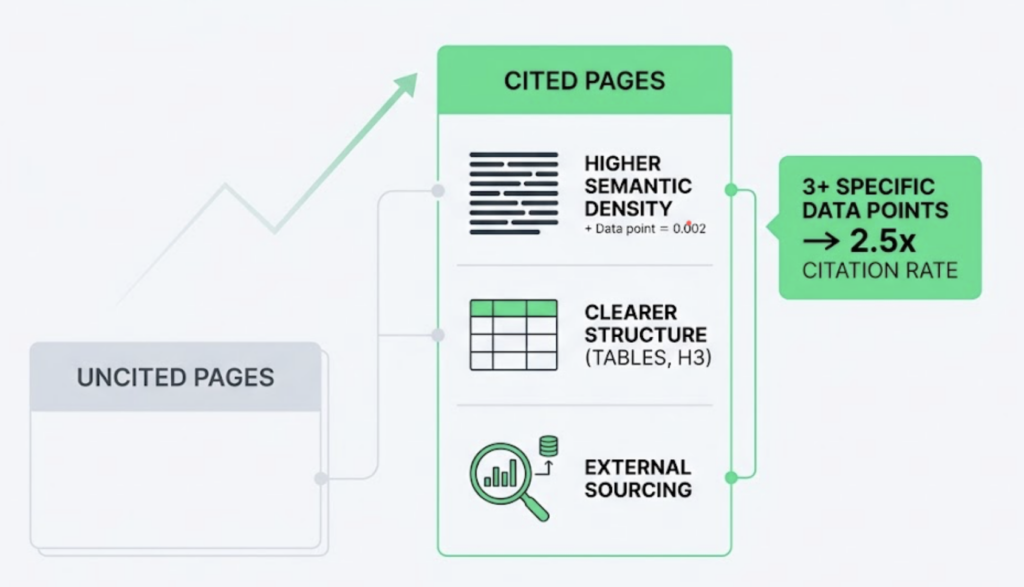

This is where the diagnostic work happens. Compare the content AI is selecting against the content it’s ignoring. Three structural factors consistently separate cited pages from uncited ones: semantic density (how many specific facts appear per paragraph), structural clarity (the presence of HTML tables, H3 subheadings, and FAQ schema), and external sourcing (whether the page references third-party research or expert data). Pages containing three or more specific data points are cited at 2.5x the rate of pages that don’t.
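
For a quick first pass on semantic density, you can count numeric claims in a page’s text. This is a deliberately crude proxy: it assumes every number is a data point, so it will also count things like years or “H3”, and it says nothing about whether the figures are sourced.

```python
import re

# Crude assumption: any number, optionally with a leading $ or a trailing % or x,
# counts as one "data point".
DATA_POINT = re.compile(r"\$?\d[\d,]*(?:\.\d+)?(?:%|x\b)?")


def data_points(text: str) -> int:
    """Count numeric tokens in the text."""
    return len(DATA_POINT.findall(text))


def has_enough_data_points(text: str, minimum: int = 3) -> bool:
    """Check the text against the three-data-point threshold mentioned above."""
    return data_points(text) >= minimum


dense = "Conversion rose 12.5% after 3 weeks, from 400 to 450 signups."
vague = "Our tool is fast and easy to use."
print(data_points(dense), has_enough_data_points(dense))  # 4 True
print(data_points(vague), has_enough_data_points(vague))  # 0 False
```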

Step 4: Identify Your Citation Gaps

A citation gap is any topic where a competitor is getting cited and you’re not. These gaps typically fall into one of two categories: you don’t have content on that topic at all, or you have content that the AI can’t efficiently extract from. Prioritize gap-filling by conversion intent, not traffic volume. A gap in a decision-stage prompt is worth more than a gap in a broad informational query.
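
The gap logic above can be sketched as a small classifier. The record fields are hypothetical, and the priority order is an assumption: the text only says decision-stage gaps outrank informational ones, so placing brand queries in between is a judgment call.

```python
from dataclasses import dataclass

# Assumed ordering: lower number means fix first.
INTENT_PRIORITY = {"decision": 0, "brand": 1, "informational": 2}


@dataclass
class PromptResult:
    prompt: str
    intent: str              # "decision", "brand", or "informational"
    cited_domains: set[str]
    we_have_content: bool    # do we already publish a page on this topic?


def citation_gaps(results, our_domain, competitors):
    """List (prompt, intent, gap type) where a competitor is cited and we are not."""
    gaps = []
    for r in results:
        if our_domain in r.cited_domains:
            continue  # we are cited, so no gap
        if not (r.cited_domains & competitors):
            continue  # nobody we compete with is cited either
        kind = "not extractable" if r.we_have_content else "missing content"
        gaps.append((r.prompt, r.intent, kind))
    return sorted(gaps, key=lambda gap: INTENT_PRIORITY[gap[1]])


# Made-up results for illustration only.
results = [
    PromptResult("how do I reduce churn", "informational", {"rival.io"}, True),
    PromptResult("best churn analytics tool", "decision", {"rival.io", "other.org"}, False),
    PromptResult("what does Acme do", "brand", {"acme.com"}, True),
    PromptResult("churn benchmarks by industry", "informational", {"wikipedia.org"}, False),
]
for gap in citation_gaps(results, "acme.com", {"rival.io", "other.org"}):
    print(gap)
```

In this sample the decision-stage gap is listed first even though it appeared second in the input.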

Step 5: Track on a Bi-weekly Cadence

Single-point snapshots are misleading. AI citation behavior drifts: 57% of brands that disappear from an AI answer in one query reappear in subsequent queries. Two-week tracking intervals give you enough frequency to distinguish temporary fluctuations from real trend shifts, without generating data faster than your team can act on it.
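
One way to separate a blip from a trend is to compare the average of the most recent snapshots with the average of the ones before them. This is only a sketch: the two-snapshot window and the five-point threshold are assumptions to tune, not recommended values.

```python
from statistics import mean
from typing import Optional


def sustained_change(
    rates: list[float], window: int = 2, threshold: float = 0.05
) -> Optional[str]:
    """Compare the last `window` snapshots with the `window` before them.

    Returns "up", "down", or "stable", or None when there is too little history.
    """
    if len(rates) < 2 * window:
        return None
    recent = mean(rates[-window:])
    previous = mean(rates[-2 * window:-window])
    delta = recent - previous
    if abs(delta) < threshold:
        return "stable"
    return "up" if delta > 0 else "down"


# Made-up bi-weekly citation rates, oldest first.
print(sustained_change([0.20, 0.22, 0.12, 0.22]))  # one dip that recovers -> stable
print(sustained_change([0.20, 0.22, 0.12, 0.10]))  # two low readings in a row -> down
```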

3 Common Mistakes That Kill an LLM Citation Tracking Strategy

Mistake 1: Tracking only ChatGPT. It’s the most visible platform, so teams default to it. But the source pools differ dramatically across platforms, and optimizing for one while ignoring others creates a defensive blind spot. A brand that dominates ChatGPT citations but is invisible on Perplexity is leaving a sizable audience segment unaddressed.

Mistake 2: Measuring presence without measuring context. A citation isn’t always a positive signal. If ChatGPT consistently surfaces your brand in the context of “budget-friendly alternatives” and your positioning is premium, you have a sentiment problem that a citation rate dashboard won’t reveal. You need to track not just whether AI mentions you, but how it describes you. Fixing a negative framing often requires publishing specific content types: transparent pricing pages, direct comparison articles, or data-backed case studies that reframe the AI’s narrative.

Mistake 3: Treating tracking reports as KPI summaries rather than action triggers. This is the most expensive mistake. Tracking data only has value when it connects directly to the content calendar. If you identify a high-intent prompt where a competitor is getting cited and you’re not, that gap should trigger an optimization task within days, not the following quarter.

The Right LLM Citation Tracking Tool Changes What You Can Actually See

The math on manual tracking doesn’t work at scale. At 100 prompts, four platforms, and a bi-weekly cadence, you’re looking at 800 manual queries per month. Manual tracking carries an error rate of around 30% and can’t produce the historical trend data that reveals whether a change you made last month actually moved the needle. Automated platforms reduce that operational cost by over 90%.
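
The 800 figure is simple multiplication, spelled out below so you can plug in your own corpus size. Treating a bi-weekly cadence as two runs per month is an approximation.

```python
def monthly_queries(prompts: int, platforms: int, runs_per_month: int) -> int:
    """Manual queries needed per month to cover every prompt on every platform."""
    return prompts * platforms * runs_per_month


RUNS_PER_MONTH = 2  # assumption: bi-weekly tracking rounds to two runs a month

print(monthly_queries(100, 4, RUNS_PER_MONTH))  # 100 x 4 x 2 = 800
print(monthly_queries(50, 3, RUNS_PER_MONTH))   # 50 x 3 x 2 = 300
```

Even the smallest setup described in this guide, 50 prompts on three platforms, still means 300 manual queries a month.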

Topify is the LLM citation tracking platform that most directly maps to the five-step framework above. Its Source Analysis function identifies not just whether your domain appears in AI answers, but which specific URLs are being cited and in what context, giving you the diagnostic clarity to understand what’s working at the content level, not just the domain level.

Topify’s Visibility Tracking covers ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms in a single LLM citation tracking dashboard, which solves the cross-platform consistency problem most teams struggle with. The Competitor Monitoring feature runs citation comparisons within the same prompt report, so you’re not building a separate workflow to understand how your citation share compares to competitors on the same queries.

For teams that need to move from tracking to action quickly, Topify’s One-Click Execution lets you define a GEO strategy in plain English and deploy it without manual workflow overhead. That’s the connection between citation data and content output that most LLM citation tracking solutions leave as a gap.

Pricing for Topify’s LLM citation tracking system starts at $99/month, which includes 100 tracked prompts, coverage across major AI platforms, and a 30-day trial. For teams running 250 prompts across multiple projects, the Pro plan at $199/month extends that coverage with 22,500 AI answer analyses per month.

| Plan | Price | Prompts | AI Answer Analyses |
|---|---|---|---|
| Basic | $99/mo | 100 | 9,000/mo |
| Pro | $199/mo | 250 | 22,500/mo |
| Enterprise | From $499/mo | Custom | Custom |

Other options in the LLM citation tracking software space include SE Ranking, which integrates AIO tracking with traditional rank monitoring for teams that want both in one place, and ZipTie.dev, which focuses on large-scale AIO data extraction.

How to Turn Citation Data into Actual Content Improvements

Citation data has one useful output: telling you what to change.

Comparative listicles account for 52% of LLM citation share. Comprehensive guides with data tables earn a citation rate of 67%. FAQ and Q&A formats are cited 2.7x more often than narrative paragraphs. These aren’t style preferences. They’re structural signals that AI retrieval systems respond to consistently.

When you find a citation gap, the decision path splits into two scenarios. If a competitor is being cited on a topic you also have content for, the fix is usually factual density: add specific data points at the start of paragraphs, introduce a data table, embed FAQ schema. If a competitor is being cited on a topic you don’t cover at all, you need new content, and Topify’s One-Click Execution can generate a content brief structured around the semantic patterns AI platforms are currently rewarding.

One important attribution note: AI-driven brand awareness often flows through what’s called “dark traffic.” A user sees your brand in a ChatGPT answer, doesn’t click, then searches your brand name directly in Google an hour later. That visit shows up as branded organic search, with no AI attribution. Tracking the correlation between citation rate increases and branded search volume growth gives you a more complete picture of the real downstream value of your LLM citation tracking strategy.

Conclusion

The CTR signal that traditional SEO was built on is contracting fast. Since Google AIO launched in May 2024, top-ranked pages have seen average CTR drops of 34.5%. By December 2025, that figure had reached 58% for the number-one organic result. The traffic isn’t disappearing. It’s being filtered through AI, and only brands that show up as cited sources in those AI answers are capturing it.

An LLM citation tracking strategy isn’t a replacement for SEO. It’s the layer you need to add now to understand where your content actually stands in the environment where your highest-intent customers are forming their decisions. Get started with Topify and build your baseline citation snapshot this week, before your competitors figure out the same gap you’re looking at right now.

FAQ

Q: What is an LLM citation tracking strategy?

A: An LLM citation tracking strategy is a systematic process for monitoring, analyzing, and optimizing how large language models like ChatGPT and Gemini cite your brand’s content when generating answers. Unlike traditional SEO, it focuses on content extractability, factual density, and cross-platform citation consistency rather than keyword rankings or backlink counts.

Q: How much does LLM citation tracking cost?

A: Automated LLM citation tracking tools like Topify start at $99/month, which covers 100 tracked prompts and analysis across major AI platforms. Manual tracking might seem free, but given its 30% error rate and the hundreds of hours required at scale, the real cost is typically far higher. Enterprise-level citation tracking platforms with custom prompt volumes and dedicated support are available from $499/month.

Q: What’s the difference between LLM citation tracking and traditional backlink tracking?

A: Backlink tracking measures static links between web pages as a signal for search engine ranking. LLM citation tracking measures the probability that an AI model selects your content as a factual source when generating a live answer. LLM citations don’t require a click; they influence brand perception and purchase decisions directly within the AI interface.

Q: How often should I update my LLM citation tracking strategy?

A: A bi-weekly tracking cadence is the recommended minimum. AI citation behavior is non-deterministic: roughly 57% of brands that drop from an AI answer reappear in subsequent queries. Monthly or quarterly tracking can’t reliably distinguish genuine visibility trends from short-term fluctuations. High-competition categories like SaaS and fintech benefit from closer to real-time monitoring.