You optimized a page for “best project management tool.” It ranks on page one in Google. But when a prospect asks ChatGPT the same question, your brand doesn’t appear. When they ask Perplexity, a competitor’s blog post gets cited three times. And when Gemini answers, it pulls from your YouTube video but never links to your product page.

Three AI engines. Three completely different citation behaviors. And if your AI visibility tracking strategy treats them as one, you’re optimizing for a platform that might not even be surfacing your content.

Same Query, Three Different Answers, Three Different Sources

The most common mistake in AI search optimization is assuming that what works on one engine works on all three. It doesn’t. An analysis of over 118,000 AI responses shows that the citation gap between platforms is far wider than most marketers expect.

Perplexity averages 21.87 citations per response. ChatGPT averages 7.92. Gemini sits at 8.34.

That’s not a rounding error. Perplexity provides nearly triple the source density of its competitors, and the types of sources it pulls from barely overlap with the other two. Only 11% of domains appear in both ChatGPT and Perplexity results for the same query.

| Metric | ChatGPT | Perplexity | Gemini |
|---|---|---|---|
| Avg. Citations per Response | 7.92 | 21.87 | 8.34 |
| Unique Domains Cited | 42,592 | 37,399 | 38,876 |
| Primary Search Index | Bing | Bing / Proprietary Hybrid | Google + Knowledge Graph |
| Google Top 10 Correlation | 90% | High | 14% |
| Domain Overlap with Peers | 11% | 11% | 13.7% |

That last row is the one worth staring at. If your domain is cited in ChatGPT, there’s roughly a 1-in-9 chance the same domain gets cited in Perplexity for that query. A unified “AI SEO” strategy isn’t just suboptimal. It’s structurally broken.
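
To check overlap on your own prompts, a rough sketch like the one below may help. It assumes overlap means shared domains divided by all distinct domains cited by either engine (a Jaccard ratio); the analysis quoted above does not say how its figure was computed, and the URLs and function names here are hypothetical.

```python
from urllib.parse import urlparse


def cited_domains(urls):
    """Reduce a list of cited URLs to a set of bare hostnames."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname or ""
        # Treat "www.example.com" and "example.com" as the same domain.
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def overlap_share(urls_a, urls_b):
    """Shared domains divided by all distinct domains (assumed definition)."""
    a, b = cited_domains(urls_a), cited_domains(urls_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical citations collected for the same prompt on two engines.
chatgpt_urls = [
    "https://www.g2.com/categories/project-management",
    "https://en.wikipedia.org/wiki/Project_management_software",
    "https://www.example-vendor.com/pricing",
]
perplexity_urls = [
    "https://g2.com/categories/project-management",
    "https://niche-pm-blog.example/compare",
    "https://research.example.edu/pm-study",
    "https://news.example.org/pm-roundup",
]

print(f"Overlap: {overlap_share(chatgpt_urls, perplexity_urls):.1%}")
```

Run it per prompt rather than across a whole prompt set, since pooling everything into one pair of lists can hide prompts where the two engines share no sources at all.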

Perplexity Cites Like a Research Paper. ChatGPT Doesn’t.

Perplexity is built as an answer engine, not a chatbot. Every query triggers a real-time web search using a Retrieval-Augmented Generation (RAG) pipeline that pulls 20 to 30 candidate pages, then synthesizes a response grounded strictly in those pages. The result looks like an academic paper: numbered inline markers, each one linking to a verifiable source.

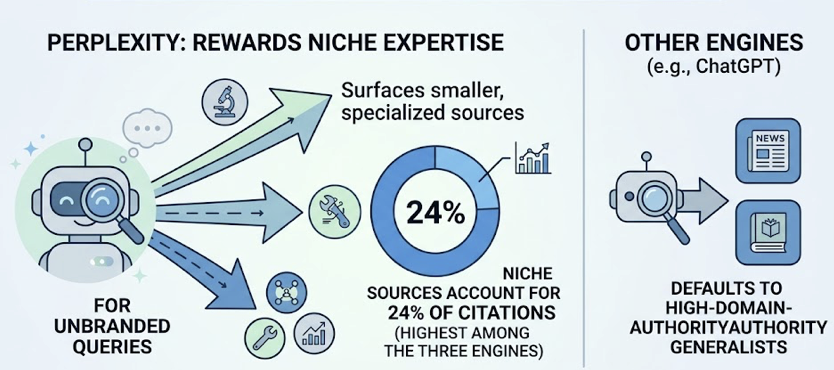

This architecture has two implications for content creators. First, Perplexity rewards niche expertise. While ChatGPT defaults to high-domain-authority generalists, Perplexity surfaces smaller, specialized sources if they provide more precise answers. For unbranded queries, niche sources account for 24% of all Perplexity citations, the highest rate among the three engines.

Second, recency matters more on Perplexity than anywhere else. Content updated within 30 days has an 82% citation rate. Content older than 180 days drops to 37%. That’s not a gentle decay curve. It’s a cliff.

| Content Age | Perplexity | ChatGPT | Gemini |
|---|---|---|---|
| Within 30 Days | 82.0% | 71.2% | 58.5% |
| Within 60 Days | 64.5% | 76.4% | 59.2% |
| Within 90 Days | 48.0% | 65.0% | 61.0% |
| Over 180 Days | 37.0% | 42.3% | 45.1% |

For brands in fast-moving sectors, a monthly content refresh isn’t optional. It’s the minimum viable strategy for staying in Perplexity’s citation window.
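
One way to keep a monthly refresh honest is to bucket pages by age and queue the overdue ones. The sketch below is illustrative only: the page paths, dates, and function names are made up, and it assumes you track a "last substantively updated" date for each page.

```python
from datetime import date

# Upper bounds (in days) for the age bands in the table above.
AGE_BANDS = [(30, "within 30 days"), (60, "within 60 days"), (90, "within 90 days")]


def age_band(last_updated, today):
    """Label a page by days since its last substantive update."""
    days = (today - last_updated).days
    for limit, label in AGE_BANDS:
        if days <= limit:
            return label
    # The table gives no figure for 91 to 180 days, so that gap gets its own label.
    return "91 to 180 days" if days <= 180 else "over 180 days"


def refresh_queue(pages, today, max_age_days=30):
    """Return URLs older than max_age_days, oldest first."""
    overdue = [
        (today - updated, url)
        for url, updated in pages.items()
        if (today - updated).days > max_age_days
    ]
    return [url for _, url in sorted(overdue, reverse=True)]


# Hypothetical inventory: path -> date of last substantive update.
today = date(2025, 6, 1)
pages = {
    "/pricing": date(2025, 5, 20),
    "/compare": date(2025, 3, 1),
    "/guide": date(2024, 10, 1),
}

for url, updated in pages.items():
    print(url, "->", age_band(updated, today))
print("Refresh first:", refresh_queue(pages, today))
```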

ChatGPT operates on a fundamentally different logic. Its citations lean on “web consensus,” pulling from what the internet broadly agrees on rather than what’s most recent or most specialized. Third-party directories like G2, Yelp, and TripAdvisor account for 48.73% of its citations on subjective queries. And 87% of its search-mode citations match Bing’s top 10 organic results.

Here’s the thing that trips up most brands: ChatGPT mentions brands far more often than it cites them. Research shows 85% of brands mentioned in ChatGPT answers have no accompanying citation link. Your brand might show up in the narrative, but without a clickable link, you’re building recall without traffic.

What Each Engine Prefers to Cite

The sourcing preferences across the three engines reveal distinct strategic playbooks.

| Source Type | ChatGPT | Perplexity | Gemini |
|---|---|---|---|
| Brand-Owned Website | 28% | 31% | 52.15% |
| Third-Party Listings | 48.73% | 22% | 12% |
| Niche/Expert Blogs | 15% | 24% | 18% |
| Academic/Gov | 8% | 23% | 18% |

Gemini stands apart with a clear preference for brand-owned content: 52.15% of its citations come from a brand’s own domain. It’s also deeply integrated with the Google ecosystem. YouTube is currently the most-cited domain in AI Overviews, and brands combining video with optimized transcripts see a 317% increase in citation rates compared to text-only content.

Perplexity, on the other hand, gives academic and government sources their highest representation at 23%. If you’re publishing original research or data-backed reports, Perplexity is the platform most likely to reward that effort.

ChatGPT’s heavy reliance on directories means your G2 profile, your Capterra listing, and your Yelp page are not just review management tasks. They’re citation signals.

Content AI Engines Ignore (and the Technical Fixes)

Not getting cited isn’t always a content quality problem. Often, it’s a technical one.

Most AI crawlers, including OpenAI’s GPTBot and Perplexity’s retrieval agents, have limited or zero JavaScript rendering capability. If your pricing table, product features, or key data points load via client-side JavaScript, they’re invisible to these crawlers. On top of that, AI agents enforce strict 2 to 5 second timeout limits. If your page takes longer to return raw HTML, the agent moves to a competitor’s page.
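
A quick way to approximate what a non-rendering crawler sees is to parse the raw server response and check whether your key facts are in it. The sketch below is a simplified stand-in, not a model of any specific bot: it uses Python's standard `html.parser`, ignores anything inside `<script>` and `<style>`, and runs against a made-up HTML snippet. Fetching the page and measuring response time are left out.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects the text present in raw HTML, skipping scripts and styles."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html, must_have):
    """Return the phrases that do not appear in the unrendered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in must_have if phrase.lower() not in text]


# Hypothetical page: the pricing text only exists after JavaScript runs.
raw = """
<html><body>
  <h1>Acme Planner</h1>
  <div id="pricing"></div>
  <script>document.getElementById("pricing").innerText = "Pro plan: $12 per user";</script>
</body></html>
"""

print(missing_from_raw_html(raw, ["Acme Planner", "Pro plan"]))
```

Any phrase this returns is content that exists only after client-side rendering, which makes it a candidate for server-side rendering.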

| Technical Factor | AI Crawler Behavior | Fix |
|---|---|---|
| JS Rendering | Most bots read raw HTML only | Server-Side Rendering (SSR) |
| Crawl Timeout | 2-5 second limit | Optimize Time to First Byte |
| Robots.txt | Legacy blocks still common | Allow OAI-SearchBot and PerplexityBot |
| Content Structure | Headings parsed as queries | Use question-based H2s and H3s |
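
Legacy robots.txt blocks are easy to audit with Python's standard `urllib.robotparser`. The sketch below checks the three user agents named in this section against a sample robots.txt; the sample rules and the example URL are invented for illustration, and in practice you would load your own file.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this section.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot"]


def blocked_agents(robots_txt, url, agents=AI_AGENTS):
    """Return the agents that the given robots.txt text forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical legacy file: one AI crawler blocked, everything else allowed.
robots = """
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(robots, "https://www.example.com/pricing"))
```

An empty list means none of the listed agents are blocked for that URL. It says nothing about firewall or CDN rules, which can block the same bots without touching robots.txt.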

On the content side, AI engines prioritize declarative, subject-predicate-object statements that can be turned into knowledge triplets. Original research, statistical benchmarks, and case studies with specific metrics get cited at significantly higher rates. Marketing copy with vague adjectives and metaphorical language gets systematically skipped.

Why AI Visibility Tracking Is the Layer Most Brands Are Missing

Traditional SEO tools like Google Search Console and Ahrefs track clicks from a list of links. They offer zero visibility into what AI models are saying about your brand, which sources they’re citing, or how your competitors are being recommended.

In a world where search is increasingly zero-click, the metric of success shifts from traffic volume to citation share.
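
Citation share has no single agreed definition. As a working assumption, the sketch below treats it as the fraction of sampled AI responses that link to your domain, and it also separates mentions from citations. The response records, brand name, and domain are all hypothetical.

```python
from urllib.parse import urlparse


def visibility_metrics(responses, brand, domain):
    """Compute citation share, mention rate, and uncited-mention rate for one brand."""
    cited = mentioned = mentioned_uncited = 0
    for response in responses:
        hosts = {urlparse(u).hostname or "" for u in response["citations"]}
        # A citation counts if any cited host is the domain or one of its subdomains.
        is_cited = any(h == domain or h.endswith("." + domain) for h in hosts)
        is_mentioned = brand.lower() in response["text"].lower()
        cited += is_cited
        mentioned += is_mentioned
        mentioned_uncited += is_mentioned and not is_cited
    total = len(responses)
    return {
        "citation_share": cited / total if total else 0.0,
        "mention_rate": mentioned / total if total else 0.0,
        "uncited_mention_rate": mentioned_uncited / mentioned if mentioned else 0.0,
    }


# Hypothetical answers collected for one prompt set on one engine.
sample = [
    {"text": "Acme Planner and Trello are popular.",
     "citations": ["https://www.g2.com/categories/project-management"]},
    {"text": "Acme Planner offers Gantt charts.",
     "citations": ["https://www.acme.example/features"]},
    {"text": "Trello is a common choice.",
     "citations": ["https://trello.com/"]},
    {"text": "Teams often pick Acme Planner.",
     "citations": []},
]

print(visibility_metrics(sample, brand="Acme Planner", domain="acme.example"))
```

Tracking the three numbers separately matters because a brand can score well on mentions while its citation share stays near zero.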

The financial case makes this urgent. AI search referral traffic converts at 14.2%, compared to 1.76% for traditional Google organic. That’s roughly an 8x conversion advantage. ChatGPT referrals alone convert at 15.9%, roughly 9x higher than Google organic, with an average referral value of $47 per visit compared to $9 for Google.

| Platform | Conversion Rate | vs. Google Organic |
|---|---|---|
| ChatGPT | 15.9% | 9x Higher |
| Perplexity | 10.5% | 6x Higher |
| Copilot | 5.0% | 3x Higher |
| Google Organic | 1.76% | Baseline |

Those numbers reframe the entire ROI calculation. Losing citation share in AI search isn’t a branding problem. It’s a revenue problem.
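
The multiples in the table are each platform's conversion rate divided by the Google organic baseline. The short sketch below reproduces that arithmetic and turns it into conversions per 1,000 visits; the 1,000-visit volume is an arbitrary illustration, not a figure from the data.

```python
GOOGLE_ORGANIC = 1.76  # baseline conversion rate in percent, from the table above

rates = {"ChatGPT": 15.9, "Perplexity": 10.5, "Copilot": 5.0}


def lift(rate, baseline=GOOGLE_ORGANIC):
    """How many times higher a conversion rate is than the baseline."""
    return rate / baseline


def conversions(visits, rate):
    """Expected conversions for a visit count at a percentage conversion rate."""
    return visits * rate / 100


for platform, rate in rates.items():
    print(f"{platform}: {lift(rate):.1f}x baseline, "
          f"{conversions(1000, rate):.0f} conversions per 1,000 visits")

print(f"Google organic: {conversions(1000, GOOGLE_ORGANIC):.1f} conversions per 1,000 visits")

# Referral value per visit quoted above: $47 for ChatGPT vs. $9 for Google.
print(f"Value per visit: {47 / 9:.1f}x")
```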

This is where Topify fills the gap. Topify’s Source Analysis reverse-engineers the exact domains and URLs that AI platforms cite in a given category. Instead of guessing which content AI prefers, you can see which competitor pages Perplexity cites in 40% of relevant answers, analyze their content structure, and identify the specific information gap to close.

What sets Topify apart from tools that rely on API-based data is its UI-based scraping methodology. API results and real user-facing results have only a 4% source overlap. Optimizing for API data is optimizing for the wrong target. Topify captures the actual user experience: formatting, citation placement, and recommendation hierarchy across ChatGPT, Perplexity, and Gemini.

3 Moves to Lift Your Citation Rate Across All Three Engines

Each engine rewards a different content strategy. Here’s how to address all three without tripling your workload.

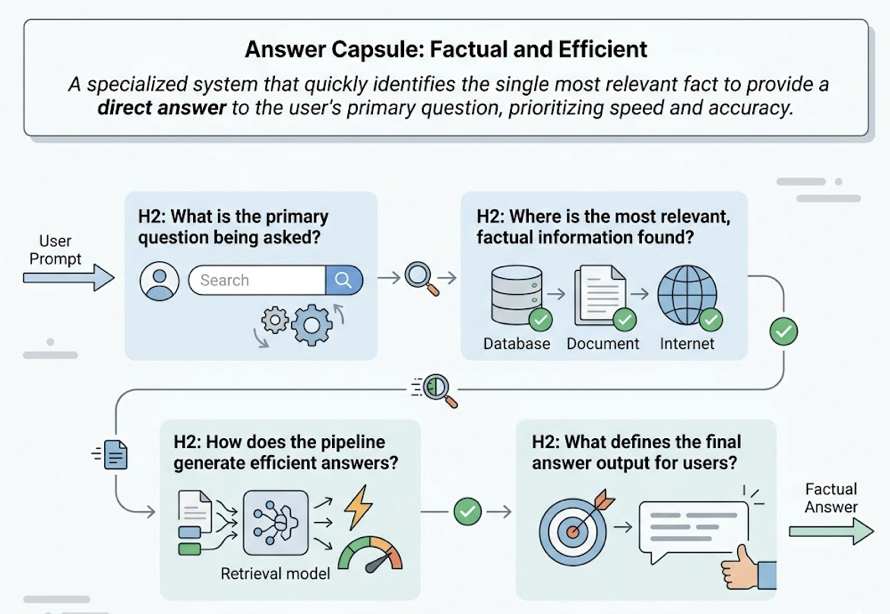

Move 1: Build Answer Capsules for Perplexity

Perplexity’s RAG pipeline looks for the most efficient path to a factual answer. Place a 40 to 80 word “Answer Capsule” at the top of every high-intent page. This capsule should contain definitive, non-hedged statements that directly answer the primary question. Combine it with H2s phrased as natural language questions so the retrieval model matches your headings to user prompts.
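
A capsule is easy to lint before publishing. The sketch below checks the 40 to 80 word range and flags a few hedging words; the hedge list is an arbitrary starting point chosen for illustration, not something any engine publishes.

```python
MIN_WORDS, MAX_WORDS = 40, 80

# Illustrative hedge words only; extend the list for your own style guide.
HEDGES = {"might", "may", "could", "perhaps", "possibly", "arguably"}


def check_capsule(text):
    """Report word count, whether it fits the 40-80 range, and any hedge words."""
    words = text.split()
    normalized = {w.strip(".,;:!?\"'()").lower() for w in words}
    return {
        "word_count": len(words),
        "length_ok": MIN_WORDS <= len(words) <= MAX_WORDS,
        "hedges": sorted(normalized & HEDGES),
    }


# Hypothetical draft capsule for a made-up product.
draft = (
    "Acme Planner is a project management tool for teams of 5 to 50. "
    "It might suit agencies that need Gantt charts and time tracking."
)
print(check_capsule(draft))
```

The draft above fails on both counts: it is too short and it contains a hedge word, so it would need a rewrite before going at the top of the page.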

Move 2: Build Entity Authority for ChatGPT

ChatGPT rewards brands that show consistent presence across the web. Secure mentions and listings in high-authority third-party sources: industry publications, guest roundups, review platforms. Make sure your brand name, description, and value proposition are identical across LinkedIn, Wikipedia, G2, and Capterra. The model synthesizes “consensus.” If your signals conflict, you drop off the shortlist.

Move 3: Own the Google Ecosystem for Gemini

Gemini leans on the Knowledge Graph. Implement structured data markup (Article, FAQ, Organization schema) to define the relationships between your content and broader entities. Produce YouTube content for cornerstone topics. Gemini’s heavy reliance on YouTube citations means a video strategy is often the fastest way to leapfrog competitors in AI Overviews.
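
Structured data is usually shipped as JSON-LD in a script tag. The sketch below builds Organization and FAQPage objects using schema.org property names and serializes them with Python's `json` module; the brand name, URLs, and helper function names are placeholders.

```python
import json


def organization_jsonld(name, url, same_as):
    """schema.org Organization with links to the brand's other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,
    }


def faq_jsonld(pairs):
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data):
    """Wrap JSON-LD in a script tag, escaping '</' so the tag cannot close early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration.
org = organization_jsonld(
    "Acme Planner",
    "https://www.acme.example/",
    ["https://www.linkedin.com/company/acme-planner"],
)
faq = faq_jsonld([("What is Acme Planner?", "A project management tool for small teams.")])

print(script_tag(org))
print(script_tag(faq))
```

Keeping the `name` and `sameAs` values identical to what appears on your third-party profiles ties this move back to the consistency point in Move 2.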

Conclusion

ChatGPT, Perplexity, and Gemini don’t just give different answers. They cite different sources, from different indices, using different logic. Treating AI visibility as a single-platform problem leads to lopsided results: visible in one engine, invisible in the other two.

The brands pulling ahead are the ones that track citation behavior at the source level, platform by platform, prompt by prompt. With Topify, that kind of AI visibility tracking becomes a structured, repeatable process, not a quarterly guessing game.

FAQ

Q: How does ChatGPT decide which brands to cite?

A: ChatGPT leans heavily on web consensus and third-party directory presence. It pulls 48.73% of its citations from platforms like G2, Yelp, and TripAdvisor for subjective queries, and 87% of its search-mode citations match Bing’s top 10 organic results. Consistent presence across multiple web touchpoints is the strongest signal.

Q: Does Perplexity always show source links?

A: In nearly every case. Perplexity attaches numbered inline citations to almost every factual statement, averaging 21.87 citations per response. It’s designed as a research-first engine where each claim is grounded in real-time web retrieval, making it the most transparent of the three platforms for source attribution.

Q: Can you track which AI engines cite your content?

A: Not with traditional SEO tools. Specialized AI visibility tracking platforms monitor brand presence, sentiment, and citation share across ChatGPT, Perplexity, and Gemini by systematically querying these models with high-value prompts.

Q: What’s the difference between an AI mention and an AI citation?

A: A citation is a link to your content used as evidence for a claim, appearing as a footnote or sidebar. A mention is when the AI names your brand in the body text as part of a recommendation. Citations drive referral traffic. Mentions drive brand recall. Research shows 85% of ChatGPT brand mentions have no accompanying citation link.