Your team ran 200 prompts across ChatGPT, Gemini, and Perplexity last quarter. Not hypothetical prompts. Real questions your customers type every day: “best project management tool for remote teams,” “most reliable CRM for mid-market SaaS,” “top analytics platform with real-time dashboards.” You checked manually. Some days your brand showed up. Some days it didn’t. The results changed between Tuesday morning and Wednesday afternoon, even with the exact same wording.

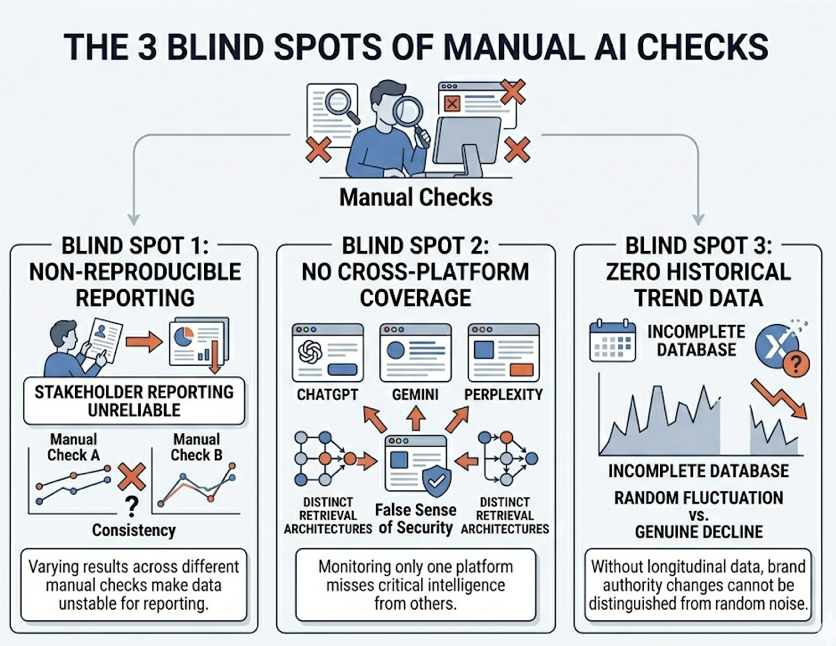

That inconsistency isn’t a bug in the AI. It’s the nature of how large language models generate responses. And it means the old approach of spot-checking your brand name in ChatGPT once a month tells you almost nothing about your actual AI search visibility.

Why Manual Spot-Checks Fail at Measuring AI Search Visibility

Traditional search visibility relied on a stable, periodically updated index. You could check your Google ranking, see the same result an hour later, and trust the data.

Generative search doesn’t work that way. Responses are synthesized in real time, often through retrieval-augmented generation (RAG), and the output is shaped by token sampling strategies, temperature settings, and even the physical hardware running the inference. Small-to-medium-sized language models (2B to 8B parameters) demonstrate answer consistency rates in the range of 50% to 80% under standard inference conditions. That means the same prompt can produce a different brand list from one run to the next.

The technical reason is surprisingly fundamental: floating-point addition isn’t associative, so (a + b) + c can round differently from a + (b + c). In parallel computing environments, the order of operations in matrix multiplications can vary between runs. Those tiny rounding differences cascade across billions of calculations, and at a critical branch point, the model might include your brand in a recommendation list, or it might not.
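The rounding effect behind this can be reproduced in a few lines of Python. This is a toy illustration of floating-point behavior only, not a model of what any particular inference stack does:

```python
# Floating-point addition is not associative: the grouping changes the rounding.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right == right_to_left)  # False
print(left_to_right, right_to_left)    # 0.6000000000000001 0.6
```

The same three numbers, summed in a different order, give two different results. Inside a model, differences of this size can be enough to tip a close decision between two candidate tokens.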

That’s why a marketing manager can see their brand recommended on a Tuesday, then fail to reproduce it during an executive presentation on Wednesday. It’s not anecdotal. It’s mathematical.

Manual checks create three specific blind spots. First, they’re non-reproducible, which makes stakeholder reporting unreliable. Second, they can’t achieve cross-platform coverage. ChatGPT, Gemini, and Perplexity use distinct retrieval architectures, so monitoring just one platform gives a false sense of security. Third, manual checks provide zero historical trend data. Without a longitudinal database, you can’t tell whether a brand disappearance is a random fluctuation or a genuine decline in AI authority.

What “Brand Mentions” Actually Mean Across AI Platforms

Not all AI mentions carry the same weight. A brand mention in generative search is fundamentally different from a mention on social media or in a news article. The commercial value of each mention is directly tied to how close it sits to the user’s decision-making moment.

Direct recommendations are the highest-value mentions. These happen when the AI explicitly names your brand as a solution: “The best CRM for small businesses is [Brand].” This implies a degree of algorithmic trust that’s difficult to earn and easy to lose.

Comparative mentions appear when the AI lists your brand alongside competitors, often in a table or bulleted list. These reveal the “narrative neighborhood” your brand occupies in the AI’s training data. If you’re consistently grouped with budget tools when your positioning is enterprise-grade, that’s an insight manual checks would never surface at scale.

Source citations occur when the AI provides a clickable link to justify its response. Perplexity does this systematically for nearly every claim. Gemini provides citations for factual statements. ChatGPT has historically leaned toward synthesized answers without direct attribution, though this is shifting with its search integrations.

Each platform also has distinct retrieval biases that shape which brands get mentioned. Gemini demonstrates a strong preference for brand-owned content, with 52.15% of its citations originating from brand-owned websites. It rewards structured, factual information and consistent schema markup. ChatGPT operates on the logic of consensus, with 48.73% of its citations coming from third-party directories and aggregators like Yelp and TripAdvisor. Perplexity prioritizes niche expertise and factual density, often citing industry experts, real-time news, and customer reviews.

The practical implication: your brand can be highly visible on one platform and completely absent on another. Tracking only one engine is like measuring your Google ranking and ignoring Bing, except the stakes are higher because AI answers don’t just list your site. They tell users whether to trust you.

5 Metrics That Define Your AI Search Visibility

Quantifying brand performance in a non-deterministic environment requires more than checking “are we mentioned or not.” Five metrics, tracked together, normalize the noise and reveal long-term trends.

1. Visibility Score (Answer Share of Voice). This is the percentage of AI responses to your high-value prompts in which your brand appears. If you track 100 prompts across three platforms (300 responses in total) and appear in 102 of them, your Visibility Score is 34%. Think of it as market share for generative discovery.

2. Sentiment and Narrative Framing. This goes beyond positive/negative. It evaluates the specific descriptors and tone the AI uses when positioning your brand. Tracking “Sentiment Velocity,” the direction of sentiment change over time, reveals whether the AI is becoming increasingly critical of your pricing, support, or product quality before it shows up in customer complaints.

3. Recommendation Position. Just as position matters in SEO, the order in which your brand appears in an AI-generated list is critical. Users overwhelmingly trust the first recommendation. Whether you’re the primary pick or listed under “other options” is a clear indicator of relative authority.

4. Source Citation Frequency and Gaps. This tracks which domains the AI relies on as “ground truth.” The most actionable insight here is the “Citation Gap”: prompts where competitors are cited from domains where your brand has no presence. Research indicates that third-party citations carry roughly 6.5 times the authority weight of self-published material in many AI retrieval systems. That makes earned media and expert quotes disproportionately valuable.

5. Conversion Visibility Rate (CVR). CVR evaluates the context of a mention to project the likelihood of a downstream conversion. It distinguishes between a passive mention (a historical reference) and an active recommendation that aligns with the user’s specific constraints (“this tool fits your budget and feature requirements”). High CVR means the AI is sending high-intent signals. Low CVR means you’re visible but not driving action.

| Metric | What It Tells You | High Score | Low Score |
|---|---|---|---|
| Visibility Score | Broad brand awareness in AI | Dominant category presence | Discovery gap |
| Sentiment Trend | Brand reputation health | AI promotes the brand | AI warns against the brand |
| Position | Competitive authority | Trusted leader | Secondary alternative |
| Source Gaps | Content coverage blind spots | Strong earned media | Missing from key domains |
| CVR | Pipeline impact | High-intent leads | Passive discovery only |
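As a rough sketch of how the first and third metrics could be computed, the Python below assumes each AI response has already been parsed into an ordered list of brand names. The `Response` class, the function names, and the brands (Acme, Globex, Initech) are all hypothetical and only serve the illustration:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    platform: str
    prompt: str
    brands: list[str]  # brands in the order the AI listed them (assumed pre-extracted)


def visibility_score(responses: list[Response], brand: str) -> float:
    """Percentage of responses that mention the brand at all."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r.brands)
    return 100.0 * hits / len(responses)


def mean_position(responses: list[Response], brand: str) -> Optional[float]:
    """Average 1-based list position, over responses that mention the brand."""
    positions = [r.brands.index(brand) + 1 for r in responses if brand in r.brands]
    return sum(positions) / len(positions) if positions else None


responses = [
    Response("ChatGPT", "best CRM for mid-market SaaS", ["Acme", "Globex"]),
    Response("Gemini", "best CRM for mid-market SaaS", ["Globex", "Initech", "Acme"]),
    Response("Perplexity", "best CRM for mid-market SaaS", ["Globex"]),
    Response("ChatGPT", "most reliable CRM", ["Initech"]),
]

print(visibility_score(responses, "Acme"))  # 50.0
print(mean_position(responses, "Acme"))     # 2.0
```

Extracting brand names reliably from free-form AI text is the hard part in practice, and this sketch deliberately leaves it out.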

How to Set Up Cross-Platform Brand Tracking, Step by Step

Moving from manual checks to systematic AI search visibility tracking follows a four-step lifecycle. Each step builds on the previous one, and skipping ahead typically means the data you collect won’t be representative or actionable.

Step 1: Build Your Prompt Universe

Visibility tracking starts with identifying high-value conversational prompts, not short keywords. While traditional search queries average four words, conversational AI prompts often exceed 23 words and include specific user constraints. You need a “Prompt Matrix” organized by funnel stage:

Problem/Solution prompts: “How do I automate payroll for a global team?”

Product selection prompts: “What is the most secure cloud storage for healthcare?”

Comparison prompts: “Notion vs. Obsidian for personal knowledge management.”
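One simple way to hold a Prompt Matrix is a dictionary keyed by funnel stage. The stage keys and the `flatten` helper below are illustrative assumptions, not a required format:

```python
# Prompt Matrix: funnel stage -> list of conversational prompts to track.
prompt_matrix = {
    "problem_solution": ["How do I automate payroll for a global team?"],
    "product_selection": ["What is the most secure cloud storage for healthcare?"],
    "comparison": ["Notion vs. Obsidian for personal knowledge management."],
}


def flatten(matrix: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Turn the matrix into (stage, prompt) pairs, ready to send to each platform."""
    return [(stage, prompt) for stage, prompts in matrix.items() for prompt in prompts]


for stage, prompt in flatten(prompt_matrix):
    print(f"{stage}: {prompt}")
```

Keeping the stage attached to every prompt makes it possible to report visibility per funnel stage later, instead of as one blended number.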

Topify’s High-Value Prompt Discovery surfaces real-world AI search volume and response patterns to isolate “Dark Queries,” prompts where your brand should be present but is currently excluded. That’s the starting point: knowing which conversations matter before you start measuring.

Step 2: Establish a Multi-Platform Baseline

The baseline is your “before” snapshot across ChatGPT, Gemini, and Perplexity. To account for the non-determinism discussed earlier, each prompt needs to be sampled 15 to 20 times within a controlled period so that the visibility and sentiment averages are stable enough to compare. This initial audit reveals where you stand relative to competitors and highlights the most immediate gaps.
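To see why repeated sampling matters, here is a minimal sketch that turns the runs for one prompt on one platform into a mention rate with a margin of error. The normal-approximation interval and the `mention_rate` helper are assumptions made for this illustration, and the approximation is rough at 15 to 20 samples:

```python
import math


def mention_rate(samples: list[bool], z: float = 1.96) -> tuple[float, float]:
    """Return (rate, margin) for repeated runs of one prompt on one platform.

    margin is a normal-approximation half-width (z=1.96 is roughly 95%).
    """
    n = len(samples)
    if n == 0:
        raise ValueError("need at least one sample")
    rate = sum(samples) / n
    margin = z * math.sqrt(rate * (1 - rate) / n)
    return rate, margin


# Hypothetical result: the brand appeared in 13 of 20 runs.
runs = [True] * 13 + [False] * 7
rate, margin = mention_rate(runs)
print(f"{rate:.0%} ± {margin:.0%}")  # 65% ± 21%
```

Even at 20 samples the uncertainty is wide, which is why a single manual check says so little about true visibility.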

Doing this manually for even 50 prompts across three platforms means 2,250 to 3,000 individual checks. That’s where a tracking platform becomes non-negotiable. Topify’s Visibility Tracking runs this across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms automatically, producing baseline scores for all five metrics in a single dashboard.

Step 3: Turn on Continuous Monitoring

AI recommendations shift as models get updated and new web content gets indexed. A competitor that wasn’t in the AI’s recommendation set last month can appear this month. Worse, the AI can start hallucinating incorrect information about your brand: claiming a product has been discontinued, misquoting your pricing, or confusing you with a similarly named company.

Continuous monitoring catches these shifts in real time. Topify’s Competitor Monitoring automatically detects emerging rivals in your category and tracks position changes across platforms. Its hallucination alerting flags factual errors about your brand so PR teams can respond before the misinformation spreads.

Step 4: Run Competitive Forensics on Citations

The final layer is reverse-engineering the AI’s citations. When a competitor consistently outranks you on a specific prompt, the question isn’t just “why.” It’s “what sources is the AI trusting, and are we present on those sources?”

Source Analysis shows you the exact domains and URLs that AI platforms cite for your category. If a competitor dominates because three industry journals reference them and none reference you, that’s a specific, actionable gap: earn coverage on those publications, and you change the AI’s input data.
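At its core, the gap described above is a set difference. A minimal sketch, assuming the cited domains have already been collected for one prompt (the domain names and the `citation_gap` helper are placeholders, not real data):

```python
def citation_gap(competitor_domains: set[str], brand_domains: set[str]) -> set[str]:
    """Domains the AI cites for the competitor but never for your brand."""
    return competitor_domains - brand_domains


# Hypothetical citations gathered for a single prompt.
cited_for_competitor = {"industryjournal.example", "tradeweekly.example", "reviews.example"}
cited_for_brand = {"reviews.example", "brand.example"}

print(sorted(citation_gap(cited_for_competitor, cited_for_brand)))
# ['industryjournal.example', 'tradeweekly.example']
```

Run per prompt, the resulting lists show which publications to approach first.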

What Your First AI Visibility Report Should Include

A visibility report that just shows numbers doesn’t drive action. The standard cadence for high-performing teams is a weekly report, produced every Monday, structured to translate data into decisions.

Headline narrative. One paragraph that converts visibility movements into business context: “Visibility in Perplexity rose 12% following the TechCrunch feature, leading to a measurable increase in referred demo requests.”

Model-specific visibility trends. A line graph comparing brand presence across ChatGPT, Gemini, and Perplexity. Large discrepancies between platforms point to platform-specific optimization needs. If Gemini visibility is low, schema markup and brand-owned content need attention. If ChatGPT visibility lags, third-party directory listings and aggregator presence are the lever.

Sentiment velocity chart. A visualization of how the AI’s framing of your brand is changing over time. Downward trends in sentiment are leading indicators of future reputation problems, often surfacing weeks before they appear in customer feedback.

The citation gap matrix. A table listing high-value prompts where your brand is absent, alongside the sources the AI currently cites for competitors. This is the direct “to-do” list for content and PR teams.
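For the sentiment velocity chart described above, one possible way to reduce the trend to a single number is the slope of a least-squares line through the weekly scores. Both the slope definition and the scoring scale (-1 to 1) are assumptions made for this sketch, since no formula is specified here:

```python
def sentiment_velocity(weekly_scores: list[float]) -> float:
    """Least-squares slope of sentiment per week; negative means worsening."""
    n = len(weekly_scores)
    if n < 2:
        raise ValueError("need at least two weeks of data")
    weeks = range(n)
    mean_x = sum(weeks) / n
    mean_y = sum(weekly_scores) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, weekly_scores))
    sxx = sum((x - mean_x) ** 2 for x in weeks)
    return sxy / sxx


# Hypothetical average sentiment per week on a -1..1 scale.
scores = [0.6, 0.5, 0.5, 0.3]
print(round(sentiment_velocity(scores), 3))  # -0.09
```

A slope of -0.09 means sentiment is falling by about 0.09 points per week, a signal worth investigating before it reaches customer feedback.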

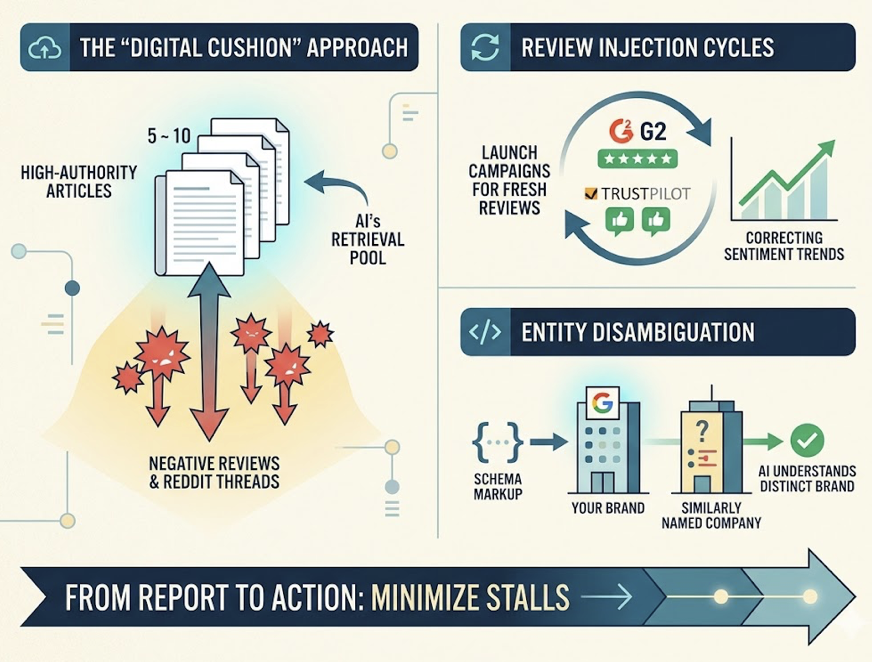

The transition from report to action is where most teams stall. Common post-report strategies include the “Digital Cushion” approach: if the AI is citing negative reviews or Reddit threads, publishing 5 to 10 high-authority articles on the same topic dilutes the negative signal in the AI’s retrieval pool. Review injection cycles, meaning campaigns that solicit fresh reviews on G2 or Trustpilot, help correct negative sentiment trends. Entity disambiguation through Schema Markup helps ensure the AI doesn’t confuse your brand with a similarly named company.
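As an illustration of the entity disambiguation step, the sketch below builds a schema.org `Organization` object as JSON-LD. The brand name, URLs, and description are placeholders. The property names (`name`, `url`, `description`, `sameAs`) are standard schema.org vocabulary, but which properties a given AI platform actually reads is not something this example can confirm:

```python
import json

# Hypothetical brand details; replace with your own canonical values.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Acme Analytics",
    "url": "https://acme.example",
    "description": "Real-time analytics platform for mid-market SaaS teams.",
    # sameAs links the entity to its official profiles elsewhere on the web.
    "sameAs": [
        "https://social.example/acme-analytics",
        "https://directory.example/companies/acme-analytics",
    ],
}

# Embed the output in a <script type="application/ld+json"> tag on your site.
print(json.dumps(organization, indent=2))
```

Consistent names and `sameAs` links across your site and profiles give retrieval systems more signals to tell your brand apart from a similarly named company.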

3 Mistakes That Tank Your Brand Tracking Results

Even teams that adopt AI visibility tracking make predictable errors in the first few months.

Mistake 1: Only tracking brand-name prompts. If you’re only monitoring “Is [Brand] a good CRM?”, you’re missing the category prompts that drive discovery: “best CRM for mid-market SaaS.” Category prompts are where new customers first encounter your brand in AI search. Brand-name prompts tell you what the AI thinks about you. Category prompts tell you whether the AI thinks of you at all.

Mistake 2: Monitoring a single AI platform. Given the retrieval biases outlined earlier (Gemini favors brand-owned content at 52.15%, ChatGPT favors third-party consensus at 48.73%, Perplexity favors niche expertise), single-platform tracking produces a fundamentally incomplete picture. Your audience uses multiple AI platforms, and your visibility profile is different on each one.

Mistake 3: Running a one-time audit instead of continuous tracking. A single snapshot captures one moment in a highly volatile environment. AI recommendations change as models update, new content gets indexed, and competitor strategies shift. Without longitudinal data, you can’t distinguish a random fluctuation from a real trend. Weekly tracking is the minimum cadence for actionable insights.

Conclusion

The shift from index-based search to generative synthesis has changed what “brand visibility” means. You’re no longer competing for a position on a results page. You’re competing for a place in the AI’s narrative, across every platform your audience uses, on every prompt that matters to your business.

Manual spot-checks can’t measure that. The non-determinism of large language models, with consistency rates as low as 50%, means that anything less than systematic, multi-platform, longitudinal tracking gives you unreliable data and false confidence. The brands that build this infrastructure now will know exactly where they stand. The ones that don’t will keep guessing. Get started with Topify and find out where your brand actually stands in AI search.

FAQ

How often should I check my brand’s AI search visibility?

Weekly is the recommended minimum. AI recommendations shift as models update and new content gets indexed. Monthly audits miss too many changes, and daily tracking is overkill for most teams unless you’re in a fast-moving category with aggressive competitors.

Can I track competitors’ brand mentions in AI search?

Yes. Competitive benchmarking is one of the most actionable parts of AI visibility tracking. Tools like Topify automatically detect competitors in your category, compare visibility scores, sentiment, and position across platforms, and surface the specific sources the AI is citing for them but not for you.

Which AI platforms should I prioritize for brand tracking?

Start with ChatGPT, Gemini, and Perplexity. They represent the largest share of conversational AI usage and have distinct retrieval architectures, which means your visibility profile is different on each one. If your audience skews toward specific regions, platforms like DeepSeek or Doubao may also be relevant.

Is AI search visibility different from traditional SEO rankings?

Yes, fundamentally. Traditional SEO measures your position on a search results page. AI search visibility measures whether the AI mentions your brand in its synthesized response, how it frames you (sentiment), and what position you hold relative to competitors. A high domain authority and strong keyword rankings don’t guarantee that AI platforms will recommend your brand. They measure different signals entirely.