Your domain authority is 70. Your keyword rankings are solid. You even rank #1 for your category’s head term. Then someone asks Perplexity, “What’s the best tool for [your niche]?” and it cites three competitor URLs you’ve never heard of. None of your content appears anywhere in the response.

Traditional SEO dashboards can’t explain what just happened, because they weren’t built to track what LLMs choose to cite. And right now, roughly 93% of AI-powered search sessions end without a single click to any website. The brands that show up inside those answers aren’t just visible. They’re capturing traffic that converts at 14.2% on average, roughly 4-5x the rate of traditional organic search.

Most AI Rank Tracking Tools Track Mentions. They Should Be Tracking Citations.

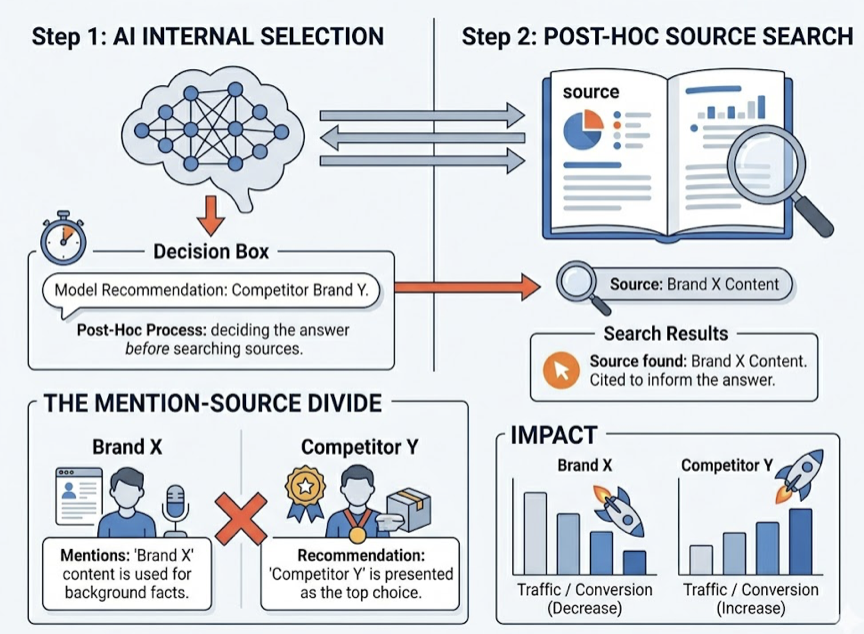

Here’s the thing most marketers miss when shopping for an AI rank tracking tool: there’s a fundamental difference between a “mention” and a “citation,” and most platforms blur the line.

A mention happens when an LLM pulls your brand name from its parametric memory, the patterns baked into the model during training. It means the model “knows” you exist. That’s good for brand recall, but it doesn’t tell you why the model chose to bring you up or whether the context was positive.

A citation is different. It’s the result of Retrieval-Augmented Generation (RAG), where the model actively searches the live web, finds your URL, and uses it as evidence to build its answer. When Perplexity shows a numbered footnote or Google AI Overviews surfaces a source card, that’s a citation. It means the model trusts your content enough to reference it in real time.

The problem? Research shows that citations are often “post-hoc.” The model decides which brands to recommend first, then searches for sources to back up that decision. This creates what researchers call the “Mention-Source Divide”: your content might be cited to inform the answer, while a competitor gets the actual recommendation in the text.

That’s the gap most brands still can’t see.

If your AI rank tracking tool only counts how often your brand name appears, you’re measuring the wrong thing. You need URL-level citation depth, the ability to see exactly which domains the model pulls from and whether your pages are in that set.
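The mention/citation split can be made concrete with a small sketch. It assumes you already have, for each AI answer, the response text and the list of source URLs the platform displayed; the function name `classify_response` and the sample brand, domain, and URLs are hypothetical, and real tools likely use fuzzier brand matching than a plain substring check.

```python
from urllib.parse import urlparse

def classify_response(answer_text, cited_urls, brand, domain):
    """Return (mentioned, cited) for one AI answer.

    mentioned: the brand name appears in the answer text.
    cited: at least one cited URL sits on the brand's domain or a subdomain.
    """
    mentioned = brand.lower() in answer_text.lower()
    cited = False
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            cited = True
            break
    return mentioned, cited

# Hypothetical case: the brand's page is used as evidence,
# but the recommendation in the text names a competitor.
answer = "For most teams, RivalApp is the best choice [1]."
sources = ["https://blog.example.com/tool-comparison"]
print(classify_response(answer, sources, brand="ExampleCo", domain="example.com"))
# (False, True)
```

A result of `(False, True)` is the “Mention-Source Divide” in miniature: your URL informed the answer, but your name never reached the reader.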

What an LLM Citation Tracking Platform Actually Measures

An LLM citation tracking platform monitors how AI models reference your brand at the source level, not just the surface level. The best tools in this category focus on five core metrics.

Visibility Score. The percentage of relevant prompts where your brand appears in the AI response. For unoptimized B2B SaaS brands, a baseline of 8-15% is typical. Category leaders with “answerable” content regularly hit 40-50%.

Sentiment Quotient. A mention doesn’t help if ChatGPT calls you “a budget alternative with limited features.” Sentiment analysis scores each response on a scale (typically -100 to +100) to flag whether the model frames you positively, neutrally, or negatively. High mention rate plus negative sentiment is a brand crisis that traditional SEO would never catch.

Citation Source Mapping. This is the layer most tools miss. It tracks the specific domains and URLs that AI platforms cite when constructing answers in your category. Perplexity links roughly 78% of its assertions to specific sources, while ChatGPT manages about 62%. Knowing which URLs land in that citation set, and whether they’re yours or a competitor’s, is where the strategic value lives.

Share of Model. In generative search, there’s no “Page 2.” If the model names three competitors and excludes you, you’ve lost 100% of that query’s value. Share of Model measures your citation volume relative to competitors across a prompt set.

Position Rank. Order matters. Being mentioned first in a recommendation list confers first-mover authority. And because AI-referred visitors arrive “pre-educated,” having already compared options inside the chat, they convert at disproportionately high rates. Ahrefs’ internal data found that AI traffic accounted for just 0.5% of visitors but drove 12.1% of all new signups, a conversion premium Ahrefs put at 23x.
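Three of these metrics reduce to simple arithmetic once you have, for each prompt, the ordered list of brands the model named. The sketch below is one plausible way to compute them, not any vendor’s actual formula; the function names, the prompt set, and the brand names are all illustrative assumptions.

```python
from collections import Counter

def visibility_score(results, brand):
    """Percentage of prompts whose answer names the brand at all."""
    hits = sum(1 for brands in results.values() if brand in brands)
    return 100.0 * hits / len(results)

def share_of_model(results, brand):
    """Brand's share of all brand appearances across the prompt set, in percent."""
    counts = Counter(b for brands in results.values() for b in brands)
    total = sum(counts.values())
    return 100.0 * counts[brand] / total if total else 0.0

def average_position(results, brand):
    """Mean 1-based rank of the brand in the answers where it appears."""
    ranks = [brands.index(brand) + 1 for brands in results.values() if brand in brands]
    return sum(ranks) / len(ranks) if ranks else None

# Hypothetical prompt set: each value is the order in which brands were named.
results = {
    "best crm for startups": ["RivalA", "YourBrand", "RivalB"],
    "crm with email automation": ["RivalA", "RivalB"],
    "affordable crm tools": ["YourBrand", "RivalA"],
    "crm for agencies": ["RivalB"],
}

print(visibility_score(results, "YourBrand"))  # 50.0
print(share_of_model(results, "YourBrand"))    # 25.0
print(average_position(results, "YourBrand"))  # 1.5
```

Note how the numbers diverge: the brand shows up in half the prompts but holds only a quarter of all brand appearances, which is why Visibility Score and Share of Model are worth tracking separately.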

7 Best AI Rank Tracking Tools for LLM Citations in 2026

Not every team needs the same level of depth. Here’s how the current crop of LLM citation tracking platforms stacks up.

| Platform | Best For | Technical Strength | Price |
|---|---|---|---|
| Topify | Growth Teams | Swarm Probing and Action Center | $99/mo |
| Profound | Enterprise | CDN Crawler Analytics | $499+/mo |
| ZipTie.dev | Agencies | Visual Screenshot Verification | $69/mo |
| KIME | Marketing Leaders | 10-Model Perception Scoring | €149/mo |
| SE Ranking | SEO/GEO Hybrid | Cross-channel Correlation | $129/mo |
| Peec AI | Global Brands | 115+ Language Support | €89/mo |
| Otterly.AI | SMBs/Beginners | On-page GEO Audit | $29/mo |

1. Topify: The Standard for Strategic GEO Execution

Most platforms stop at dashboards. Topify closes the loop between data and action.

Its core differentiator is “Swarm Probing.” LLMs are non-deterministic: the same prompt can return different results depending on session state, geographic node, and randomization settings. Topify addresses this by sending thousands of prompt variations across multiple regions, producing statistically reliable Share of Model data instead of one-off snapshots.
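To see why sample size matters when answers are non-deterministic, here is a minimal sketch using a Wilson score interval for a proportion. This is a standard statistical technique chosen for illustration; it is an assumption, not a description of how Topify computes its numbers, and the run counts are made up.

```python
import math

def wilson_interval(hits, runs, z=1.96):
    """Approximate 95% Wilson score interval for the proportion hits/runs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = hits / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return centre - half, centre + half

# Same observed rate (30%), very different certainty.
for hits, runs in [(3, 10), (300, 1000)]:
    low, high = wilson_interval(hits, runs)
    print(f"{hits}/{runs} runs: brand appears in {low:.0%} to {high:.0%} of answers")
```

With ten runs the plausible range spans tens of percentage points; with a thousand it narrows to a few. A one-off snapshot cannot distinguish a real shift in citation share from sampling noise.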

The platform tracks across ChatGPT, Gemini, Perplexity, AI Overviews, DeepSeek, Claude, Doubao, and Qwen. That breadth matters. If you’re only monitoring ChatGPT, you’re missing citation patterns on platforms your audience actively uses.

Where Topify pulls ahead of other AI rank tracking tools is its Action Center. When the system detects a drop in citation share, its AI agent proposes specific content fixes, schema updates, or source-gap strategies. You review the recommendation and deploy it with one click. No separate content brief. No waiting for a dev sprint.

For growth-stage SaaS and ecommerce teams that need both the data and the execution layer, Topify is the platform most likely to move the needle within 30 days. Plans start at $99/month with a 30-day trial on the Basic tier.

2. Profound

Profound is built for Fortune 500 compliance environments. Backed by $35M in Series B funding from Sequoia, it integrates with CDN logs from Cloudflare, Akamai, and AWS to track how AI training bots interact with your content before that data surfaces publicly. SOC 2 Type II, HIPAA, and GDPR compliant. Starting at $499/month, it’s priced for enterprise budgets.

3. ZipTie.dev

ZipTie’s standout feature is screenshot capture: it records the full visual context of every AI response it tracks. For agencies that need to show clients exactly what a customer sees in ChatGPT or AI Overviews, this visual evidence is more persuasive than any abstract score. Its proprietary AI Success Score synthesizes mentions, sentiment, and citation strength into a single metric. Starting at $69/month.

4. KIME

KIME was purpose-built for the agentic web, not bolted onto a legacy SEO platform. It tracks 10 models in real time, including Claude, Grok, and Microsoft Copilot. Its “AI Perception” module breaks down the specific keywords and source types (editorial, UGC, influencer) shaping how AI describes your brand. Its impact prediction feature tells you how much each fix will move your visibility score. Starting at €149/month.

5. SE Ranking

If your team isn’t ready to abandon traditional SEO workflows, SE Ranking bridges the gap. It integrates AI citation tracking into its existing rank-tracking interface, so you see SERP movements and AI Overview inclusion rates side by side. Its “AI Source and Coverage Analysis” categorizes cited domains into types (media, blogs, forums), helping you identify which “Trust Hubs” carry the most weight. Starting at $129/month.

6. Peec AI

Berlin-based Peec AI addresses a gap most tools ignore: non-English markets. With citation tracking across 115+ languages and GDPR built into its foundation, it’s designed for global brands. Peec distinguishes between content the AI “used” to form an answer and content it explicitly “cited” with a link, a distinction that matters for uncredited content usage investigations. Starting at €89/month.

7. Otterly.AI

The most accessible entry point. At $29/month, Otterly covers six platforms and includes a GEO Audit tool that evaluates 25+ on-page factors like header structure and schema. It lacks the behavioral depth of Profound or the execution engine of Topify, but for solo marketers establishing their first AI visibility baseline, it’s the fastest path from signup to data.

5 Mistakes That Burn Your LLM Citation Tracking Budget

Having the right platform is half the battle. Using it wrong wastes whatever you’re paying.

Mistake 1: Only tracking ChatGPT. Citation patterns differ wildly across platforms. Google AI Overviews is the most stable, with 53% of queries showing zero citation changes over 17 weeks. ChatGPT Search is the most volatile, replacing up to 74% of cited domains every week. If you’re only watching one model, you’re basing strategy on a fraction of the picture.

Mistake 2: Counting mentions instead of mapping citation sources. A mention tells you the model knows your name. A citation source map tells you which URLs the model actually trusts. The gap between the two is where competitors steal your position.

Mistake 3: Checking once a month. Research across 80,000+ prompts shows that “carousel” sources outside the stable core rotate at 89% per week. Monthly spot-checks produce noise, not signal. You need continuous monitoring to separate real trends from statistical flicker.
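Weekly churn is easy to compute yourself if your tool exports the cited domains per week. The sketch below assumes one reasonable definition (the share of last week’s cited domains that are missing this week); the studies quoted above may define rotation differently, and the domains are placeholders.

```python
def weekly_churn(previous, current):
    """Percent of last week's cited domains that are absent this week."""
    previous, current = set(previous), set(current)
    if not previous:
        return 0.0
    return 100.0 * len(previous - current) / len(previous)

# Hypothetical citation sets for one prompt, one week apart.
week_1 = {"a.com", "b.com", "c.com", "d.com"}
week_2 = {"a.com", "e.com", "f.com", "g.com"}

print(weekly_churn(week_1, week_2))  # 75.0
```

At churn levels like this, a single monthly check samples one of several largely different citation sets, which is why it reads as noise.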

Mistake 4: Ignoring content freshness. LLMs have a strong recency bias. Content updated within the past 60 days is 1.9x more likely to appear in AI answers than older material. If your “ultimate guide” hasn’t been touched in six months, it’s probably already falling out of the citation set.
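A freshness check does not need a dedicated tool. This sketch assumes you can export each page’s last-updated date (from your CMS or sitemap, for instance); the `stale_pages` helper, the paths, and the dates are hypothetical, and the 60-day default simply mirrors the window mentioned above.

```python
from datetime import date

def stale_pages(last_updated, today, max_age_days=60):
    """Return (url, age_in_days) for pages older than max_age_days, oldest first."""
    stale = [
        (url, (today - updated).days)
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return sorted(stale, key=lambda item: item[1], reverse=True)

# Hypothetical pages and last-updated dates.
pages = {
    "/guides/ultimate-guide": date(2025, 6, 1),
    "/blog/pricing-comparison": date(2025, 11, 20),
}

print(stale_pages(pages, today=date(2025, 12, 15)))
# [('/guides/ultimate-guide', 197)]
```

Running this on a schedule gives you a refresh queue ordered by how far each page has drifted past the window.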

Mistake 5: Skipping the fan-out. Traditional SEO targets a head term. LLMs break complex questions into sub-queries. A user asking about the “best HIPAA-compliant hosting” triggers sub-searches for features, pricing, and security reviews separately. Brands that only optimize for the main query miss citation slots in every sub-search.

Your Checklist Before Picking an LLM Citation Tracking Platform

Before you commit to a platform, run through these seven evaluation criteria. They’ll save you from buying a dashboard that looks impressive but doesn’t change outcomes.

Cross-platform coverage. Does it track the models your audience actually uses? ChatGPT, Perplexity, Gemini, and AI Overviews are table stakes. Regional models like DeepSeek matter if you operate in Asia-Pacific.

URL-level citation depth. Can you see the specific domains and pages being cited, not just whether your brand name appeared? This is the line between a visibility tool and a citation tracking platform.

Competitive citation benchmarking. Can you compare your citation sources against competitors? Knowing you’re cited 20% of the time means nothing without knowing your top competitor is cited 45%.

Update frequency. Weekly monitoring is the minimum. Daily is better. With ChatGPT Search replacing up to 74% of its cited domains in a week, yesterday’s data is already partially stale.

Sentiment and context analysis. Being mentioned as “outdated” or “limited” is worse than not appearing. Make sure the platform scores sentiment, not just presence.

Actionability. Data without a path to execution is expensive trivia. Look for platforms that connect insights to specific content recommendations, like Topify’s Action Center, which translates citation gaps into deployable fixes.

Pricing alignment. Match the investment to your stage. Solo marketers can start with Otterly at $29/month. Growth teams get the most leverage from Topify at $99/month. Enterprise needs justify Profound at $499+/month. The cost of not tracking is a Revenue Visibility Gap that compounds every month.

Conclusion

The brands winning in AI search right now aren’t the ones with the highest domain authority. They’re the ones that know exactly which URLs ChatGPT, Perplexity, and Gemini are citing, and they’re updating those pages before the citation set rotates next week.

LLM citation tracking isn’t a nice-to-have reporting layer. It’s the difference between capturing AI-referred traffic that converts at a steep premium (23x in Ahrefs’ data) and being invisible in a channel where roughly 93% of sessions end without a click. Start by picking a platform that matches your team size and budget, establish your citation baseline across at least three AI models, and build a 60-day content refresh cadence. The conversion premium rewards early movers, and the stable core of AI citations gets harder to crack with every passing quarter.

Ready to see what AI is actually citing in your category? Get started with Topify and run your first citation audit today.

FAQ

Q: What is an LLM citation tracking platform?

A: An LLM citation tracking platform monitors which URLs and domains AI models like ChatGPT, Perplexity, and Gemini cite when generating answers. Unlike traditional SEO tools that track keyword rankings, these platforms reveal the specific sources AI trusts, how often your brand appears, and whether the context is positive or negative. They’re built to measure visibility inside AI-generated responses, not on traditional search results pages.

Q: How much does an LLM citation tracking platform cost?

A: Pricing ranges from $29/month for basic monitoring (Otterly.AI) to $499+/month for enterprise-grade solutions (Profound). Growth-focused platforms like Topify start at $99/month with 100 tracked prompts, 9,000 AI answer analyses, and coverage across ChatGPT, Perplexity, and AI Overviews. Most platforms offer monthly billing with discounts on annual plans.

Q: How do I measure if my LLM citation tracking platform is working?

A: Track four metrics over 90 days: Visibility Score (percentage of target prompts where you appear), Share of Model (your citations vs. competitors), Sentiment Quotient (whether mentions are positive), and citation source stability (whether your URLs are in the “stable core” or rotating “carousel”). If your Visibility Score climbs above 40% and your URLs anchor in the stable core, the platform is delivering value.

Q: What’s the difference between AI rank tracking and LLM citation tracking?

A: AI rank tracking typically measures whether your brand is mentioned and where it appears in an AI recommendation list. LLM citation tracking goes deeper: it identifies the exact URLs the model references as evidence, maps citation patterns across platforms, and tracks how those sources shift over time. Think of rank tracking as “did AI mention me?” and citation tracking as “did AI trust my content enough to cite it?”