You’ve invested months building up your G2 presence. Hundreds of verified reviews, a solid star rating, maybe a Leader badge or two. Then a prospect asks ChatGPT, “What’s the best [your category] software?” and your brand doesn’t appear once.

The reviews are real. The problem is that AI engines don’t read G2 the way buyers do. And most SaaS marketing teams are optimizing for the wrong signals entirely.

G2 AEO isn’t about accumulating more reviews. It’s about making your profile machine-readable in the specific ways that determine whether an AI recommends you or your competitor.

AI Engines Don’t Trust Your Website. They Trust G2.

When an AI like ChatGPT or Perplexity generates a product recommendation, it isn’t summarizing your homepage. It’s cross-referencing your claims against third-party sources that it treats as higher-confidence truth layers.

Your website is viewed as a biased narrative. It was written by your team, for your audience, with your positioning front and center. AI systems flag this as a potential source of error. To provide accurate recommendations without hallucinating, LLMs look for corroboration, and review platforms like G2 are the primary corroboration layer for B2B software.

G2’s structured data environment is exactly what makes it valuable here. Its taxonomy, use-case tagging, and verified user-generated content give AI models the machine-readable infrastructure they need to categorize and compare brands at scale. The relationship is similar to what Wikipedia once was for Google’s Knowledge Graph: G2 provides the ontological framework that lets AI place your brand in the right context.

This trust isn’t distributed evenly. Five review domains (Gartner Peer Insights, G2, Capterra, Software Advice, and TrustRadius) account for 88% of all review-platform links cited by AI engines. G2 holds a 23.1% citation share across general B2B and SaaS queries. That number is about to get larger.

G2 Is Already Being Cited. The Question Is Whether It’s Citing You.

Here’s the counterintuitive reality of 2026: G2’s influence as an AI citation source is at an all-time high, even as its organic search traffic dropped 84.5% between January 2024 and December 2025.

That drop isn’t a sign of G2 losing relevance. It’s proof that the zero-click era has arrived. Users no longer need to visit G2 because the AI has already extracted the data and presented it in a synthesized answer. The platform went from a traffic destination to a citation anchor.

55% of enterprise buyers now rely on AI search more heavily than traditional Google, and 50% of B2B software buyers begin their purchase journey inside an AI chatbot. That’s a 71% increase in just four months.

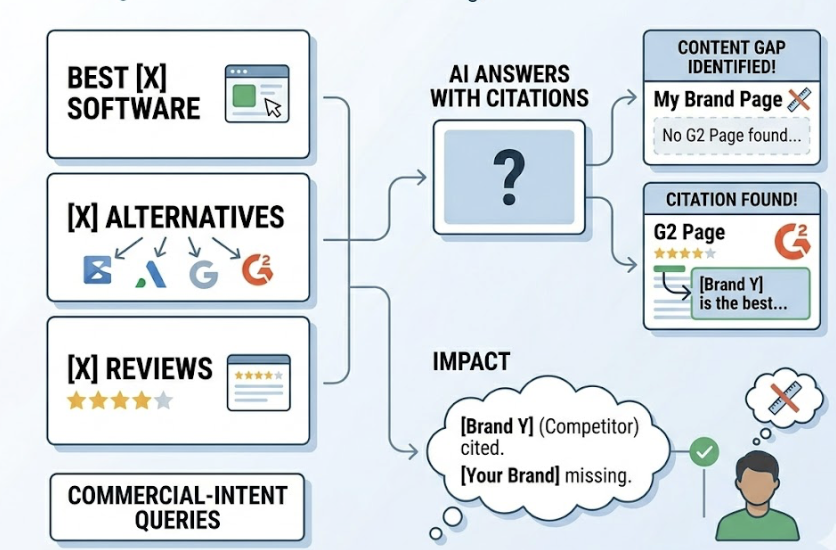

The queries that trigger G2 citations most consistently are commercial-intent searches: “best X software,” “X alternatives,” “X reviews.” These are the exact prompts your prospects type when they’re evaluating options. When AI answers those prompts, it’s frequently using a competitor’s G2 page to make the case.

That’s the hidden dynamic most teams miss. Your absence from AI citations in those queries doesn’t mean the AI said nothing. It means it recommended someone else.

What AI Actually Reads on Your G2 Profile (It’s Not the Star Rating)

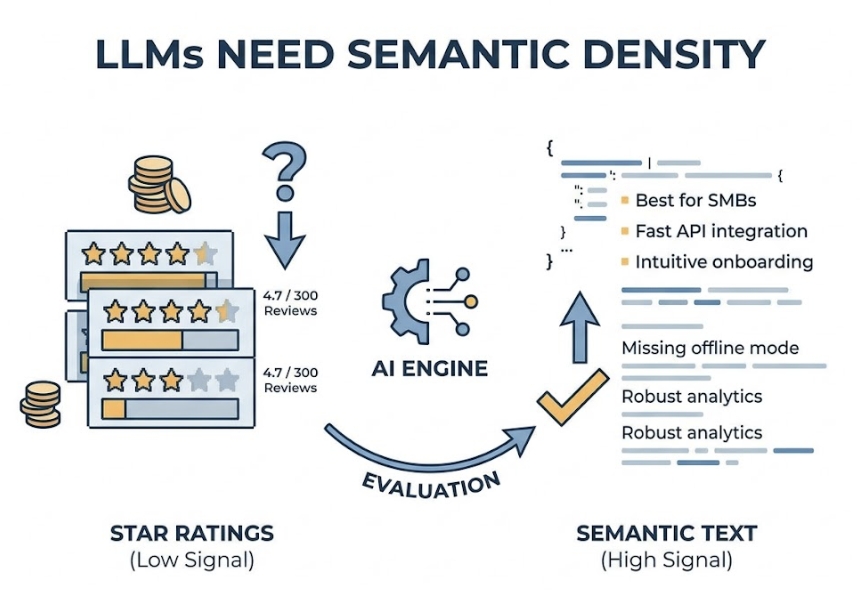

Most G2 strategies are built around one metric: aggregate star rating. That’s the wrong lever for G2 AEO.

LLMs treat star ratings as low-resolution signals that are easily gamed and difficult to contextualize. A 4.7 from 300 reviews tells the AI very little about which specific use case your product is suited for. What AI engines actually prioritize are the fields that carry semantic density.

Use Case and Category Tags

Category and use-case tags are the ontological anchors that determine which AI recommendation pools your product enters. If your product is tagged as a general “CRM” when it specifically serves real estate teams, it won’t appear when an AI answers “best CRM for real estate agents,” regardless of how many reviews you have.

AI models calculate how closely a product’s defined purpose matches a user’s specific request. Brands that achieve Leader status in precisely the right G2 categories see a 4.1x higher citation frequency in AI “best of” queries. The category audit is one of the highest-leverage fixes available.

Review Body Text

The raw text inside the Pros and Cons fields is the primary evidence layer for AI engines. When an AI recommends a product, it often pulls specific themes or near-direct quotes from these sections to justify the recommendation.

A review that says “excellent for cross-departmental collaboration in large engineering teams” is dramatically more useful for answer engine optimization than “5/5, love this product.” The first gives AI the scenario-specific context it needs to match your brand to a detailed, high-intent prompt. The second gives it nothing.

Vendor Responses

Vendor responses are one of the few places in your G2 profile where you have full editorial control, and most brands treat them as customer service exercises.

AI engines read vendor responses to understand official brand positioning and resolve contradictions within user reviews. When you respond with language that mirrors how your buyers actually phrase their problems in AI queries, you’re giving the AI a second, brand-verified data point to work with. That’s what turns a vendor response into a G2 AEO asset.

Your Competitors Are Winning AI Recommendations Before You Know the Game Started

The G2 acquisition of Capterra, Software Advice, and GetApp from Gartner was framed as a strategic move to build the definitive AI trust layer for the software industry. The data backs that framing.

Statistical modeling of the acquisition suggests that G2’s citation share in bottom-of-funnel queries, including pricing, comparisons, and supporting evidence, will increase by approximately 76%. For high-intent queries specifically around customer testimonials and evidence, the combined G2 ecosystem is projected to reach a 12.69% share of all AI citations, a 93% lead over the next most-cited domain.

This creates a compounding problem for brands that haven’t started optimizing.

A competitor who’s mapped their G2 profile to the right categories, guided reviewers toward scenario-specific feedback, and maintained an active review cadence will get cited more. More citations lead to higher frequency in conversational AI answers. Higher frequency leads to cognitive capture, where the market starts to perceive that competitor as the category default, because AI consistently names them first.

By the time your sales team notices the pattern, the association is already forming in the minds of buyers who never visited your website.

The G2 AEO Optimization Checklist: 6 Things to Fix This Week

1. Rewrite Your Product Description with Prompt-Aware Language

Marketing-heavy copy doesn’t translate to AI citations. Replace phrases like “our innovative platform streamlines operations” with factual, scenario-specific statements: “automates Salesforce integration, manages SSO for enterprise teams, provides real-time ROI tracking for marketing managers.”

AI engines use “answer-first” structures. Your product description should read like the answer to a buyer’s AI prompt, not a homepage hero section.

2. Audit Your Category and Use-Case Tags for Precision

Run a quick test: search your core buyer personas’ queries in ChatGPT and Perplexity. If competitors appear and you don’t, cross-reference their G2 category tags against yours. Misalignment in G2’s taxonomy creates entity conflicts that cause AI to favor more clearly defined alternatives.

Specificity beats breadth. Five precisely matched tags outperform fifteen broad ones.

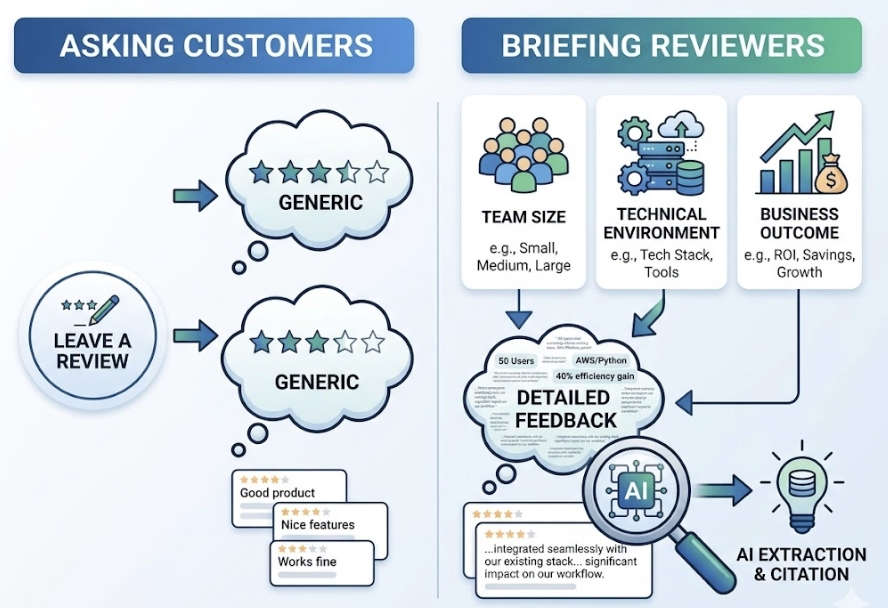
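One way to run that cross-reference is a simple set comparison. The sketch below assumes you have copied your own tags and each competitor’s tags by hand from their public G2 pages. The function name `tag_gaps` and every brand and tag value are hypothetical placeholders, not G2 data or a G2 API.

```python
def tag_gaps(own_tags, competitor_tags):
    """Return (tag, competitor_count) pairs for tags competitors use that we lack."""
    own = {t.strip().lower() for t in own_tags}
    counts = {}
    for tags in competitor_tags.values():
        # Deduplicate per competitor so each one counts a tag at most once.
        for tag in {t.strip().lower() for t in tags}:
            if tag not in own:
                counts[tag] = counts.get(tag, 0) + 1
    # Most widely shared missing tags first, then alphabetical for stable output.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical tags, collected manually from public profile pages.
own_tags = ["CRM", "Sales Automation"]
competitor_tags = {
    "competitor_a": ["CRM", "Real Estate CRM", "Lead Management"],
    "competitor_b": ["Real Estate CRM", "CRM"],
}

gaps = tag_gaps(own_tags, competitor_tags)
for tag, count in gaps:
    print(f"{tag}: used by {count} competitor(s), missing from our profile")
```

A tag that several cited competitors share and you lack is a candidate for review, not an automatic addition. It still has to describe what your product actually does.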

3. Brief Reviewers on What to Write, Not Just to Write

Most review collection campaigns ask customers to “leave a review.” That produces generic feedback. Instead, brief reviewers on the specific scenario they used your product for: the team size, the technical environment, the business outcome. That level of detail is what AI engines extract and cite.

Active, descriptive profiles are 3x more likely to be cited by ChatGPT than stagnant ones, regardless of total review count. Recency and semantic density matter more than volume.

4. Respond to Reviews Like the AI Parser Is Reading

Shift the frame on vendor responses. You’re not just addressing a customer. You’re adding context to a dataset that AI engines read when synthesizing brand summaries.

In each response, weave in one or two scenario-specific terms that your buyers use when querying AI platforms. Don’t force it. One well-placed phrase per response compounds over time into a richer retrieval profile.

5. Monitor Which Prompts Are Triggering G2 Citations for Competitors

This is the step most teams skip because traditional tools don’t support it. The prompts that matter aren’t the ones that mention your brand name. They’re the category-level queries, “best [category] for enterprise teams,” “alternatives to [competitor],” that buyers use before they’ve shortlisted anyone.

Knowing which prompts are driving citations for competitors tells you exactly where your G2 optimization should focus.
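If you log AI answers by hand, even a small script can surface those gaps. This is a minimal sketch under stated assumptions: each record is something you captured manually (the prompt, the domains the answer cited, and the brands it named), and the record layout, the function names, and all brand names are hypothetical.

```python
from collections import defaultdict


def is_g2(domain):
    """True for g2.com and its subdomains (for example www.g2.com)."""
    domain = domain.lower()
    return domain == "g2.com" or domain.endswith(".g2.com")


def g2_citation_gaps(observations, our_brand):
    """Map each prompt to the brands named in G2-citing answers that omit our brand."""
    gaps = defaultdict(set)
    for obs in observations:
        cites_g2 = any(is_g2(d) for d in obs["cited_domains"])
        if cites_g2 and our_brand not in obs["brands"]:
            gaps[obs["prompt"]].update(obs["brands"])
    return {prompt: sorted(brands) for prompt, brands in gaps.items()}


# Hypothetical, manually recorded observations.
observations = [
    {"prompt": "best CRM for real estate agents",
     "cited_domains": ["www.g2.com", "example.com"],
     "brands": ["CompetitorA", "CompetitorB"]},
    {"prompt": "best CRM for real estate agents",
     "cited_domains": ["g2.com"],
     "brands": ["CompetitorA", "OurBrand"]},
    {"prompt": "alternatives to CompetitorA",
     "cited_domains": ["example.org"],
     "brands": ["CompetitorB"]},
]

gaps = g2_citation_gaps(observations, "OurBrand")
print(gaps)
```

Prompts that show up in the result are the ones where G2 was cited and someone else got the recommendation, which is where profile work is most likely to matter.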

6. Ensure Your G2 Claims Are Mirrored on Your Own Site

AI engines cross-reference. If your G2 profile mentions an integration that your website doesn’t document, or claims a use case your blog doesn’t support, the AI may flag the inconsistency and exclude your brand from the answer to avoid providing inaccurate information.

Cross-platform entity consistency isn’t a nice-to-have. It’s a prerequisite for being cited confidently.
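A rough consistency check can be scripted before you audit by hand. The sketch below assumes you have pasted the claims from your G2 profile into a list and the text of your own pages into a dictionary. The names `undocumented_claims`, `g2_claims`, and `site_pages`, and all sample text, are hypothetical.

```python
def undocumented_claims(g2_claims, site_pages):
    """Return G2 claims whose wording never appears in any page of our own site."""
    corpus = " ".join(site_pages.values()).lower()
    # Plain substring matching: crude, but enough to flag obvious gaps.
    return [claim for claim in g2_claims if claim.lower() not in corpus]


# Hypothetical claims and page text.
g2_claims = ["Salesforce integration", "SSO", "ROI tracking"]
site_pages = {
    "/integrations": "Connect your account with our Salesforce integration in minutes.",
    "/security": "Enterprise plans include SSO and audit logs.",
}

missing = undocumented_claims(g2_claims, site_pages)
print(missing)
```

Substring matching misses paraphrases and can match short terms inside longer words, so treat the output as a list of pages to review rather than a verdict.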

You Can’t Measure G2 AEO Impact with G2 Traffic Metrics

Traditional web analytics can’t capture most of what’s happening in AI search. When a user asks ChatGPT for a software recommendation and your brand gets named, there’s no click to track. That interaction happens entirely inside the AI interface, and GA4 typically records any resulting visits as direct or referral traffic, which hides the AI source.

But the impact is real. AI-referred traffic converts at 4.4x higher rates than traditional organic for informational queries. In specific B2B cases, ChatGPT referrals have demonstrated conversion rates as high as 15.9%, compared to 1.76% for Google organic. The visits are fewer, but they arrive already briefed.
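To make the "fewer visits, already briefed" point concrete, here is the arithmetic on the two B2B conversion rates quoted above. The only assumption is the baseline of 1,000 organic visits, which is a hypothetical round number chosen for illustration.

```python
import math

chatgpt_rate = 0.159   # 15.9% conversion rate quoted above for ChatGPT referrals
organic_rate = 0.0176  # 1.76% conversion rate quoted above for Google organic

# How many times better the quoted ChatGPT rate is than the quoted organic rate.
ratio = chatgpt_rate / organic_rate

# Hypothetical baseline: conversions produced by 1,000 organic visits.
organic_visits = 1000
organic_conversions = organic_visits * organic_rate

# ChatGPT-referred visits needed to produce the same number of conversions.
chatgpt_visits_needed = math.ceil(organic_conversions / chatgpt_rate)

print(f"ratio: {ratio:.2f}x")
print(f"visits needed: {chatgpt_visits_needed}")
```

At those quoted rates, roughly 111 ChatGPT-referred visits would match the conversions from 1,000 organic visits. The rates come from specific B2B cases, so the ratio should not be read as a general benchmark.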

To measure whether your G2 optimization is actually working, you need a different set of metrics.

Topify tracks citation frequency across ChatGPT, Perplexity, Gemini, and other major AI platforms, and its Source Analysis feature identifies exactly which domains AI engines are pulling from when they answer queries in your category. That means you can see whether G2 is being cited for your brand or your competitors, and which specific prompts are triggering those citations.

Visibility Tracking shows you your brand’s presence score over time, so you can correlate G2 profile updates, like a batch of new scenario-specific reviews or a product description rewrite, with actual changes in AI citation frequency. That’s the feedback loop that turns G2 AEO from a hypothesis into a measurable growth channel.
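The same before-and-after comparison can be sketched by hand for a small sample. This is not Topify’s API or data format. It assumes a manually kept log in which each entry records the day an AI answer was sampled and whether your brand was cited, and the names `citation_rate`, `before_after`, and all dates are hypothetical.

```python
from datetime import date


def citation_rate(samples):
    """Share of sampled AI answers that cited the brand (0.0 if no samples)."""
    if not samples:
        return 0.0
    return sum(1 for s in samples if s["cited"]) / len(samples)


def before_after(samples, change_date):
    """Citation rate before and on/after the date a G2 profile change went live."""
    before = [s for s in samples if s["day"] < change_date]
    after = [s for s in samples if s["day"] >= change_date]
    return citation_rate(before), citation_rate(after)


# Hypothetical log of sampled answers for one category prompt.
samples = [
    {"day": date(2026, 1, 5), "cited": False},
    {"day": date(2026, 1, 12), "cited": True},
    {"day": date(2026, 1, 19), "cited": False},
    {"day": date(2026, 1, 26), "cited": False},
    {"day": date(2026, 2, 2), "cited": True},
    {"day": date(2026, 2, 9), "cited": True},
    {"day": date(2026, 2, 16), "cited": False},
    {"day": date(2026, 2, 23), "cited": True},
]

rate_before, rate_after = before_after(samples, date(2026, 2, 1))
print(rate_before, rate_after)
```

A shift in the rate after a profile update suggests a correlation, not proof of cause. AI answers vary between runs, so larger samples and repeated prompts give a more reliable signal.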

The Q1 2026 data tells the story clearly: the AEO software category on G2 saw a 62% increase in page views in a single quarter. The market has already concluded that G2 optimization for AI visibility is worth prioritizing. The question is whether your team is ahead of that curve or catching up to it.

Conclusion

G2 was built for buyers. It’s now being read by AI. That shift changes almost everything about how a profile should be managed.

The brands that win AI recommendation share in 2026 aren’t necessarily the ones with the most reviews or the highest star ratings. They’re the ones with the most machine-readable profiles: precise category tags, scenario-specific review text, consistent cross-platform data, and vendor responses that speak the language AI engines are listening for.

Start with the checklist in this article. Then set up the measurement layer so you can see what’s actually moving. G2 has become one of the highest-leverage surfaces for B2B brand visibility in AI search. The ROI is real, but only if you’re tracking it.

FAQ

Q: Does having more G2 reviews improve my AEO ranking?

A: Not on its own. AI engines prioritize the recency and semantic density of reviews over total count. Profiles with active, descriptive reviews updated within the last 90 days are 3x more likely to be cited by ChatGPT than stagnant profiles with hundreds of generic five-star ratings. Focus on quality and scenario specificity, not volume.

Q: Is G2 more effective than Capterra for AI search visibility?

A: G2 currently holds a slight lead with a 23.1% AI citation share versus Capterra’s 17.8%, and they’re typically cited for different purposes. G2 tends to appear in user-rating queries while Capterra surfaces in feature-comparison queries. Following the acquisition, the distinction is becoming less relevant operationally, as the unified data pool will inform recommendations across both surfaces simultaneously.

Q: Do negative reviews on G2 hurt my AI search ranking?

A: Yes, but not through the star rating mechanism. AI engines synthesize the substance of negative feedback. If several reviews mention “frequent downtime” or “poor enterprise support,” the AI may characterize your brand that way in synthesized answers. Prompt, well-written vendor responses can provide corrective context that AI includes in a balanced summary, which is why response strategy matters as much as review collection.

Q: Can AI engines read gated G2 content?

A: No. AI crawlers including GPTBot and ClaudeBot can’t bypass login walls, paywalls, or form gates. If your most detailed case studies or review insights are gated, they’re invisible to the AI that could be citing them. G2 also specifically disallows certain crawler paths in its robots.txt to protect proprietary data, so the publicly accessible portions of your profile are what AI engines work from.
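You can check how a given robots.txt treats a specific crawler with Python’s standard-library `urllib.robotparser`. The sketch below parses an inline sample so it runs offline. The sample rules and the example.com URLs are hypothetical and do not reproduce G2’s actual robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only, not G2's real robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""


def can_crawl(robots_txt, user_agent, url):
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


checks = [
    ("GPTBot", "https://example.com/products/acme/reviews"),
    ("GPTBot", "https://example.com/private/report"),
    ("ClaudeBot", "https://example.com/private/report"),
    ("ClaudeBot", "https://example.com/admin/settings"),
]
for agent, url in checks:
    print(agent, url, can_crawl(SAMPLE_ROBOTS_TXT, agent, url))
```

To test a live site, call `set_url()` with the robots.txt address and then `read()` instead of `parse()`. Note that robots.txt only states what a crawler is asked to avoid; login walls and form gates block access regardless of what it says.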