Your content ranks. Your domain authority is solid. Your backlink profile took years to build.

Then you check what ChatGPT tells users when they ask for a recommendation in your category. Your brand isn’t there. A competitor with a fraction of your DA is cited three times. The problem isn’t your SEO. It’s that the rules of brand discovery have changed faster than most teams realized, and traditional optimization has no lever to pull.

That’s what AEO is for. And that’s why doing it manually doesn’t work anymore.

AI Search Has a New Gatekeeper — and It Doesn’t Work Like Google

The search landscape isn’t just shifting. It’s splitting.

Global daily search queries continue to grow, estimated at 9.1 to 13.6 billion per day. But the traffic those queries generate is heading somewhere else. 60% of Google searches now end without a click, a number that climbs to 77% on mobile. When Google triggers an AI Overview, the organic CTR for informational queries drops from 1.62% to as low as 0.61%.

ChatGPT now processes over 2 billion queries daily, holding a 79.98% share of the AI chatbot market. Google’s AI Overviews reaches 2 billion monthly users. Perplexity is the go-to for research-heavy queries.

These platforms don’t return a ranked list. They return a verdict. And if your brand isn’t part of that verdict, the ranking you worked years to build becomes invisible at the moment of highest purchase intent.

What AEO Actually Means (and Where It Differs from GEO)

Answer Engine Optimization (AEO) is the practice of structuring content so that AI systems select your brand as the cited source when generating a direct answer.

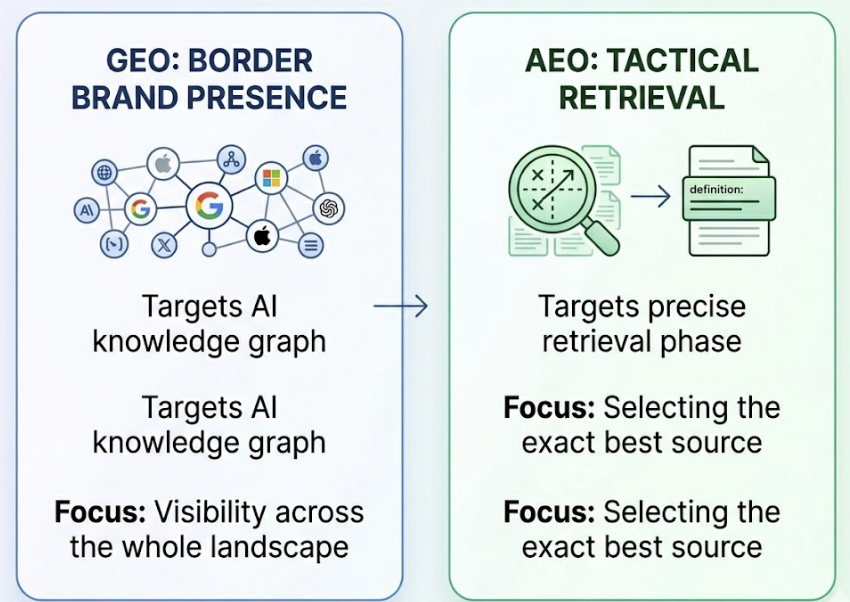

It’s distinct from GEO (Generative Engine Optimization), though the two are related. GEO focuses on broader brand presence across the AI knowledge graph. AEO is more tactical: it targets the retrieval phase, where an AI engine decides which source to extract a specific fact, definition, or recommendation from.

That distinction matters in practice. AEO wins the specific answer. GEO wins the ambient perception. You need both, but AEO tends to produce faster, more measurable results on high-intent queries.

Here’s where things get interesting.

The Princeton, Georgia Tech, and Allen Institute for AI research team analyzed 10,000 queries to measure what actually drives AI citation rates. The findings don’t resemble traditional SEO logic at all.

| Optimization Strategy | Visibility Improvement |
|---|---|
| Adding statistics | +41% |
| Citing authoritative sources | +40% |
| Including expert quotations | +28% |
| Fluency optimization | +15–30% |

Keyword density doesn’t appear. Neither does domain authority, which explains less than 4% of the variance in AI citations. The metrics most marketing teams have tracked for a decade are nearly irrelevant for AEO performance.

The 4 Signals AI Answers Actually Look For

AI engines aren’t ranking content by relevance in the traditional sense. They’re minimizing risk. Specifically, minimizing the risk of generating a hallucination that damages user trust.

That changes what “good content” means at the machine level.

Verifiable data density. Adding statistics improves citation likelihood by 41% because quantitative data is easy for an AI to attribute and hard to contradict. A paragraph with a specific number is a safer citation than one with qualitative claims.

Structural clarity. 68.7% of cited pages use a logical H1 → H2 → H3 hierarchy. AI systems parse content in semantic chunks. A well-organized heading structure tells the model what each section is about before it processes the content.

Front-loading. 44.2% of AI citations come from the first 30% of a piece of content. The inverted pyramid isn’t just a journalism convention. It’s an extraction optimization.

Schema markup. 61% of cited pages use structured data. Schema serves as a fact anchor, reducing the ambiguity that leads to hallucinations. Without it, the AI is guessing at your entity relationships.

These aren’t soft best practices. They’re the mechanical inputs that determine whether the AI trusts your content enough to use it.
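To make the schema signal concrete, here is a minimal sketch that builds a schema.org `FAQPage` object as JSON-LD. The choice of `FAQPage` and the helper name `build_faq_jsonld` are assumptions for illustration; the research cited above does not say which schema types the cited pages used.

```python
import json


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs.

    Hypothetical helper: FAQPage is one common structured-data type,
    chosen here only as an example.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = build_faq_jsonld([
    (
        "What is AEO?",
        "Answer Engine Optimization (AEO) is the practice of structuring "
        "content so that AI systems select your brand as the cited source "
        "when generating a direct answer.",
    ),
])

# The serialized object would go inside a
# <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```

Generating the markup from the same question-and-answer pairs that appear on the page keeps the structured data and the visible copy from drifting apart.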

Why Manual AEO Doesn’t Scale — and What Agentic AI Changes

Here’s the core problem with manual AEO: the average citation half-life across AI platforms is 4.5 weeks, and ChatGPT’s is shorter still at 3.4 weeks. In practice, content you optimized last month has a roughly 50% chance of no longer being cited this month.

| Platform | Citation Half-Life |
|---|---|
| ChatGPT | 3.4 weeks |
| Google AIO | 4.7 weeks |
| Gemini | 4.6 weeks |
| Perplexity | 5.8 weeks |

No marketing team can manually re-audit thousands of prompts across four platforms every three weeks, identify which citations dropped, diagnose why, update content accordingly, and re-seed authoritative signals at scale. The math doesn’t work.
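The half-life figures can be turned into a rough retention estimate. The sketch below assumes citations decay exponentially, which is the usual reading of "half-life" but is not something the figures above confirm. The function name `surviving_fraction` is hypothetical.

```python
# Half-lives in weeks, taken from the table above.
HALF_LIFE_WEEKS = {
    "ChatGPT": 3.4,
    "Google AIO": 4.7,
    "Gemini": 4.6,
    "Perplexity": 5.8,
}


def surviving_fraction(weeks_elapsed, half_life_weeks):
    """Expected share of citations still in place after `weeks_elapsed`.

    Assumes simple exponential decay: half the citations are lost
    every `half_life_weeks`.
    """
    return 0.5 ** (weeks_elapsed / half_life_weeks)


for platform, half_life in HALF_LIFE_WEEKS.items():
    share = surviving_fraction(4, half_life)
    print(f"{platform}: about {share:.0%} of citations expected after 4 weeks")
```

Under this model, a shorter half-life always means fewer surviving citations at any given checkpoint, which is why the re-audit cadence is set by the fastest-cycling platform.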

This is where Agentic AEO becomes the only viable approach at scale.

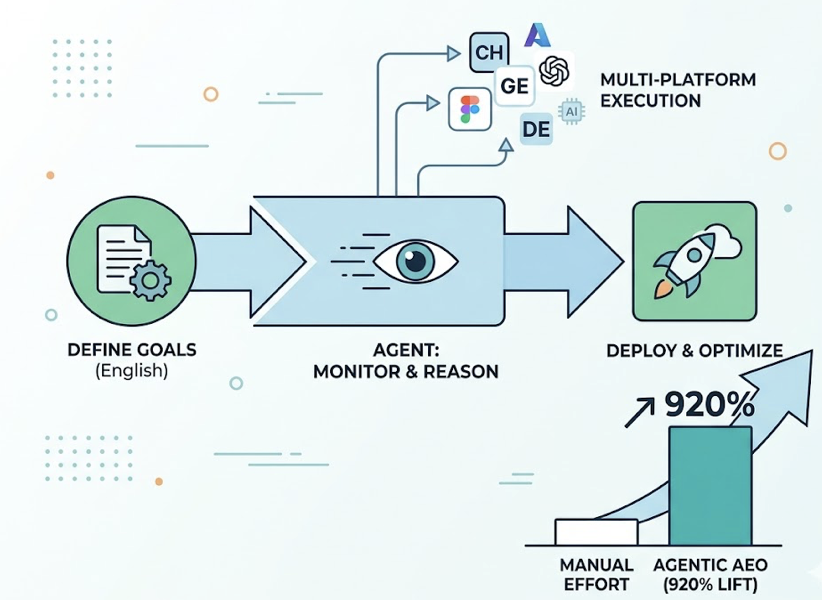

Unlike a simple dashboard or a one-time audit tool, an AEO Agent operates on a continuous cycle: sense, decide, act, learn. It monitors which prompts cite your brand and which cite competitors. It performs attribute gap analysis when your share of voice drops. It identifies whether the gap is a freshness problem, a structural problem, or a source trust problem. Then it executes.
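As a rough illustration of the "sense" and "decide" steps, the sketch below computes a brand's share of voice across a set of prompt responses and flags a drop. Everything here is hypothetical: the brand names, the 10-point threshold, and the function names are assumptions, and this is not a description of how any particular AEO product works.

```python
def share_of_voice(responses, brand):
    """Fraction of responses whose set of cited brands includes `brand`."""
    if not responses:
        return 0.0
    hits = sum(1 for cited in responses if brand in cited)
    return hits / len(responses)


def detect_drop(previous, current, threshold=0.10):
    """True when share of voice fell by more than `threshold` (absolute)."""
    return (previous - current) > threshold


# Each set holds the brands cited in one AI response (made-up sample data).
last_week = [{"Acme", "Globex"}, {"Acme"}, {"Globex"}, {"Acme", "Initech"}]
this_week = [{"Globex"}, {"Acme"}, {"Globex"}, {"Initech"}]

prev = share_of_voice(last_week, "Acme")
curr = share_of_voice(this_week, "Acme")

if detect_drop(prev, curr):
    print(f"Share of voice fell from {prev:.0%} to {curr:.0%}; queue a gap analysis")
```

The "act" and "learn" steps depend on the diagnosis (freshness, structure, or source trust), so they are left out of this sketch.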

Topify’s One-Click Agent Execution is built around this model. You define your optimization goals in plain English. The agent handles the monitoring, reasoning, and deployment, covering ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms simultaneously. Brands using agentic AEO systems report a 920% lift in AI-driven traffic compared to manual efforts.

The difference isn’t just efficiency. It’s whether you can actually maintain visibility in an environment where citations decay faster than any human workflow can respond.

A Practical AEO Workflow Using AI Agents

This is the operational structure most teams should follow. Each step maps to a capability an AEO agent handles continuously, not just at launch.

Step 1: Discover high-value AI prompts. This is different from keyword research. You’re looking for the conversational queries your target audience types into ChatGPT or Perplexity, including “fan-out queries” the AI generates to build a complete answer. Topify’s High-Value Prompt Discovery surfaces these continuously as AI recommendation patterns shift.

Step 2: Establish your visibility baseline. Before optimizing, you need to know your current citation rate, ranking position within AI responses, and sentiment across platforms. There’s only an 11% overlap between sources cited by ChatGPT and those cited by Perplexity, so single-platform data gives a deeply incomplete picture.

Step 3: Audit citation sources. The agent identifies which domains the AI currently trusts for your category. If Reddit threads and industry publications are being cited instead of your owned content, the strategy needs to include third-party signal seeding on those platforms.

Step 4: Create content capsules. Long-form content is harder for AI to parse. The effective unit for AEO is a self-contained 40–60 word block that leads with a direct answer, includes a proprietary statistic, and is wrapped in schema markup. These are designed for fragment extraction, not for time-on-page metrics.

Step 5: Monitor, detect, and redeploy. The agent continuously re-tests target prompts. When a citation drops or a hallucination appears, it either alerts the team or deploys an updated data set to correct the model’s understanding before the damage compounds.
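The content capsule from Step 4 lends itself to a simple automated check. The sketch below tests only two of the stated criteria, the 40–60 word length and the presence of a number. It cannot tell whether the statistic is proprietary or whether the block leads with a direct answer, and the function name `check_capsule` is hypothetical.

```python
import re


def check_capsule(text, min_words=40, max_words=60):
    """Report whether a capsule meets the length and data criteria.

    `has_number` is a crude proxy for "includes a statistic":
    it only checks that at least one digit appears.
    """
    word_count = len(text.split())
    return {
        "word_count": word_count,
        "length_ok": min_words <= word_count <= max_words,
        "has_number": bool(re.search(r"\d", text)),
    }


capsule = (
    "Answer Engine Optimization (AEO) is the practice of structuring content "
    "so that AI systems select your brand as the cited source when generating "
    "a direct answer. In one analysis of 10,000 queries, adding statistics "
    "improved visibility by 41% and citing authoritative sources improved it "
    "by 40%."
)

report = check_capsule(capsule)
print(report)
```

A check like this fits naturally into a publishing pipeline, so a capsule that drifts outside the target length after an edit is caught before it ships.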

3 AEO Mistakes That Drain Your Citation Rate

Even with an agent in place, these structural errors commonly undercut results.

Optimizing for one platform only. With only 11% citation overlap between ChatGPT and Perplexity, a strategy focused solely on ChatGPT leaves the majority of AI-driven discovery untouched. Topify tracks across ChatGPT, Gemini, Perplexity, DeepSeek, and others from a single view. You can’t optimize what you can’t see.

Treating AEO as a project, not a system. A one-time schema update or a batch of optimized posts will generate citations for roughly 4 weeks. After that, source decay kicks in. The 920% traffic lift from agentic AEO compared to manual processes reflects this directly. It’s not that the optimization is better. It’s that it’s continuous.

Ignoring sentiment. Being cited isn’t always a win. Negative sentiment appears in 2.3% of Google AIO brand mentions and 1.6% in ChatGPT, but it concentrates in evaluative queries where purchase intent is highest. One case study from the research found a SaaS firm whose demo-to-close rate dropped 23% because ChatGPT was hallucinating an outdated, lower price point. Prospects were arriving at sales calls accusing the team of bait-and-switch pricing.

Topify’s Sentiment Analysis tracks AI brand perception with a 0–100 scoring model. Catching a sentiment drift before it hits revenue is the kind of signal manual audits miss entirely.
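For the single-platform mistake above, one way to measure cross-platform overlap yourself is to compare the sets of domains each platform cites for the same prompts. The sketch below uses Jaccard similarity, which is an assumption: the 11% figure quoted earlier may have been computed differently. The domains are made-up placeholders.

```python
def citation_overlap(sources_a, sources_b):
    """Jaccard overlap: shared domains divided by all distinct domains."""
    a, b = set(sources_a), set(sources_b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Placeholder domains cited by each platform for the same prompt set.
chatgpt_sources = {
    "example.com", "reddit.com", "industry-news.example", "vendor-a.example",
}
perplexity_sources = {
    "reddit.com", "docs.example", "reviews.example", "vendor-b.example",
}

overlap = citation_overlap(chatgpt_sources, perplexity_sources)
print(f"Citation overlap: {overlap:.0%}")
```

A low score here means wins on one platform tell you little about the other, which is the practical argument for tracking each platform separately.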

Conclusion

Traditional SEO got you ranked. AEO determines whether AI systems trust your brand enough to say your name.

The citation premium is real: brands cited in AI Overviews receive 35% more organic clicks and 91% more paid clicks compared to brands excluded from the summary. The difference between being cited and being invisible isn’t content quality in the traditional sense. It’s structural machine-readability, data density, and the operational discipline to maintain both as citation sources decay every 3–5 weeks.

Start by auditing your current AI visibility baseline across platforms. Then build the content and schema infrastructure that makes your brand the lowest-risk citation. Let an agent handle the rest. Get started with Topify to see exactly where you stand today.

FAQ

Q: What’s the difference between AEO and GEO? A: AEO (Answer Engine Optimization) focuses specifically on getting your brand cited as the direct answer in AI-generated responses, targeting the retrieval phase. GEO (Generative Engine Optimization) is broader, aiming to build your brand’s presence across the entire knowledge graph an AI model draws from. AEO tends to produce more immediate, measurable citation wins; GEO builds the ambient authority that sustains them.

Q: Do AI Agents replace the need for human content teams? A: No. AEO agents handle the monitoring, gap detection, and technical execution that humans can’t maintain at the frequency required, typically 3–5 week citation cycles across multiple platforms. Human teams still define strategy, set optimization goals, and create the core content. The agent operationalizes that work continuously.

Q: How long does it take to see results from AEO optimization? A: Citation changes can appear within 72 hours for technical updates like schema markup, since AI platforms re-index source data frequently. Broader visibility improvements typically become measurable within 2–4 weeks, aligning with the 3.4–5.8 week citation refresh cycle across major platforms.

Q: Which AI platforms should I prioritize for AEO? A: It depends on where your audience searches, but the overlap between platforms is small enough that single-platform optimization is a significant risk. ChatGPT handles 2 billion daily queries, making it the highest-volume target. Perplexity has the longest citation half-life at 5.8 weeks, making it more durable once you earn a citation. Ideally, your AEO strategy covers ChatGPT, Perplexity, Google AIO, and Gemini simultaneously.