A 4.8-star rating on G2 doesn’t tell you whether a tool can track your brand inside a ChatGPT response. That gap is where most AEO buying decisions go wrong.

The AEO software category on G2 has grown over 2,000% since March 2025. More teams are shopping for tools. Fewer know what to actually look for. This article breaks down what G2 reviews reveal — and what they quietly skip over.

Most G2 Ratings Miss the Metric That Matters Most in AEO

G2’s scoring framework was built for traditional SaaS: usability, implementation speed, relationship quality. Those dimensions matter. But they don’t measure what AEO tools are actually hired to do.

In traditional SEO, a tool tracks a blue link on a static results page. The rank is linear. Verification is simple. AEO is different. Brand visibility in ChatGPT or Perplexity is probabilistic — the same query can return different citations depending on prompt history, geographic parameters, and model version. G2’s evaluation rubric wasn’t designed for that variability.

Here’s what that means in practice:

| G2 Evaluation Dimension | What Users Score | What It Misses |
|---|---|---|
| Usability Index | How intuitive is the dashboard? | Clean UIs can hide outdated “model freeze” data |
| Implementation Index | How fast can we get started? | Rapid setup often means shallow crawler integration |
| Relationship Index | How responsive is support? | Great support can’t fix a tool that ignores DeepSeek |
| Results Index | Is the tool providing value? | Users may optimize for mentions, not conversion rate (CVR) |

The tools with the highest G2 scores in early 2026 are often the ones with the best customer success teams — not necessarily the strongest technical infrastructure.

That’s the gap this article helps you close.

What G2 Reviews Actually Say About AEO Tool Performance

The aggregate star score is the least useful number on the page. The useful signal is buried in the 1- to 3-star reviews.

A granular read of low-star feedback across established AEO players reveals three consistent clusters of dissatisfaction: data accuracy, platform latency, and what practitioners are calling the “actionability gap.”

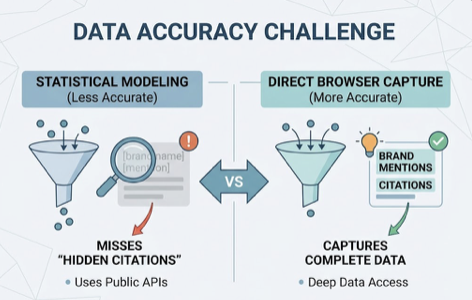

Data accuracy is the most contested area. Tools that rely on statistical modeling rather than direct browser capture tend to miss what researchers call “hidden citations”: brand mentions inside LLM training sets that don’t surface through standard public APIs. Users of platforms such as Similarweb and BrightEdge have flagged this specifically for niche markets and smaller sites.

Latency is the second major pain point. With AI models updating their retrieval-augmented generation (RAG) datasets more frequently in 2026, a weekly data refresh cycle is often inadequate for high-velocity marketing teams. When content changes don’t show up in the dashboard for days, teams lose the ability to respond to real-time shifts.

The actionability gap is the complaint that’s hardest to see coming. Many teams discover post-purchase that their tool functions as an intelligence center, not an execution engine. The data is clean. The reports look sharp. But there’s no built-in mechanism to act on what the dashboard surfaces.

If you’re reading G2 reviews to make a purchase decision, these are the complaint tags worth filtering for:

| G2 “Con” Tag | Technical Meaning | Business Impact |
|---|---|---|
| Expensive / High Pricing | Hidden add-on fees or steep entry cost | Prohibitive for SMBs; high pressure to prove ROI fast |
| Overwhelming UI | Legacy SEO features bundled with AEO | Steep learning curve; AEO insights get buried |
| Slow Loading / Latency | Backend struggles with large dataset processing | Can’t respond to real-time model updates |
| Limited Customization | Rigid reporting templates | Hard to present visibility data to CMO vs. SEO Manager |

Skip the high-level satisfaction score. Read the cons. That’s where the technical reality lives.

The AEO Tools With the Strongest G2 Profiles in 2026

The G2 Spring 2026 Grid for AEO identifies a clear set of leaders across different market segments. Here’s how the top five platforms compare on the dimensions that actually matter for answer engine performance:

| Platform | G2 Status | AI Platforms Covered | Starting Price | Key Differentiator |
|---|---|---|---|---|
| Topify | Emerging Technical Standard | ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and more | $99/mo | 7-metric framework + One-Click Agent Execution |
| Profound | Category Leader | 10+ platforms | $499/mo | SOC 2/HIPAA compliance; enterprise infrastructure |
| Quattr | Highest Performer | ChatGPT, Gemini, Perplexity, Copilot | Custom | CMS-integrated content execution; 3X content velocity |
| Visby AI | High User Satisfaction | ChatGPT, Claude, Gemini | $79/mo | Funnel-stage monitoring |
| AirOps | Momentum Leader | 30+ AI models | Custom | Workflow automation at enterprise scale |

1. Topify: The 7-Metric Operating System for AI Search

Topify has positioned itself as more than a rank tracker. Built by a team of former OpenAI researchers and Google SEO champions, the platform reports 95-98% citation accuracy, a benchmark most tools in the category don’t publish at all.

The architecture centers on seven KPIs that connect brand visibility to revenue: visibility, volume, position, sentiment, mentions, intent, and CVR. Most tools stop at “mentions.” Topify’s Sentiment Analysis adds a 0-100 scoring mechanism that captures whether an AI is describing your brand enthusiastically, neutrally, or with caveats. That distinction changes strategy.
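Four of those seven KPIs (visibility, mentions, position, and sentiment) can be derived from captured AI answers alone, and a simple aggregation makes the definitions concrete. This is a minimal sketch, not Topify’s implementation: the `AnswerSample` schema, the brand names, and the 0-100 sentiment scores (assumed to come from some upstream sentiment model) are all hypothetical. Volume, intent, and CVR need search-demand and analytics data that a captured answer does not contain, so they are left out.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class AnswerSample:
    """One captured AI answer for one tracked prompt (hypothetical schema)."""
    platform: str
    prompt: str
    brands_mentioned: list[str]  # brands in order of first appearance
    sentiment: dict[str, float] = field(default_factory=dict)  # brand -> 0-100 score


def brand_kpis(samples: list[AnswerSample], brand: str) -> dict[str, float]:
    """Aggregate answer-level KPIs for one brand across sampled answers."""
    hits = [s for s in samples if brand in s.brands_mentioned]
    # Visibility: share of sampled answers that mention the brand at all.
    visibility = len(hits) / len(samples) if samples else 0.0
    # Position: 1-based rank of the brand's first mention within each answer.
    positions = [s.brands_mentioned.index(brand) + 1 for s in hits]
    # Sentiment: mean of the per-answer scores, where a score exists.
    scores = [s.sentiment[brand] for s in hits if brand in s.sentiment]
    return {
        "visibility": visibility,
        "mentions": float(len(hits)),
        "avg_position": mean(positions) if positions else float("nan"),
        "avg_sentiment": mean(scores) if scores else float("nan"),
    }


samples = [
    AnswerSample("ChatGPT", "best crm", ["Acme", "Globex"], {"Acme": 80.0, "Globex": 60.0}),
    AnswerSample("Perplexity", "best crm", ["Globex"], {"Globex": 70.0}),
    AnswerSample("Gemini", "best crm", ["Globex", "Acme"], {"Acme": 50.0, "Globex": 65.0}),
    AnswerSample("ChatGPT", "crm for startups", []),
]
print(brand_kpis(samples, "Acme"))
```

In this invented data, Acme appears in half the answers, at an average position of 1.5, with an average sentiment of 65. A “mentions only” tool would report the first number and miss the other two.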

Its Source Analysis feature is what separates Topify from monitoring tools entirely. Rather than telling you that a competitor is cited more often, it reverse-engineers the exact domains and URLs that AI platforms are pulling from — and identifies the semantic gaps your content needs to close to reclaim that position.
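The general idea behind a source gap analysis fits in a few lines: among answers that mention a competitor but not your brand, count which domains the AI cited. This is a hypothetical illustration of the technique, not Topify’s Source Analysis. The answer format (a set of mentioned brands plus a list of cited URLs) and every domain below are assumptions.

```python
from collections import Counter
from urllib.parse import urlparse


def source_gaps(answers: list[dict], brand: str, competitor: str) -> list[tuple[str, int]]:
    """Rank domains cited in answers that mention `competitor` but not `brand`."""
    gaps = Counter()
    for answer in answers:
        mentions = answer["mentions"]
        if competitor in mentions and brand not in mentions:
            # Count each domain once per answer, ignoring a leading "www.".
            domains = {urlparse(url).netloc.removeprefix("www.") for url in answer["citations"]}
            gaps.update(domains)
    return gaps.most_common()


answers = [
    {"mentions": {"Globex"},
     "citations": ["https://www.review-site.example/crm", "https://blog.example.org/top-crms"]},
    {"mentions": {"Globex", "Acme"},
     "citations": ["https://review-site.example/crm"]},
    {"mentions": {"Globex"},
     "citations": ["https://review-site.example/best", "https://forum.example.net/t/123"]},
]
print(source_gaps(answers, brand="Acme", competitor="Globex"))
```

The top of the list is where to look first: in this toy data, the review site is cited in both answers where Globex appears and Acme does not.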

The most significant competitive advantage for lean teams is One-Click Agent Execution. Most AEO tools require manual export, manual content writing, and manual publishing. Topify’s AI agent identifies the gap, builds the content, and deploys it in a single workflow. That’s the difference between an intelligence center and an execution engine.

Pricing is structured for teams at every stage. The Basic plan starts at $99/mo and includes a 30-day trial with 100 prompts and 9,000 AI answer analyses — enough data to cross-verify against manual results before committing.

2. Profound

Profound holds the G2 Category Leader position, backed by a client list that includes 10% of the Fortune 500. Its Query Fanouts Analysis maps how a single user prompt breaks into a chain of LLM sub-queries — useful for enterprise teams targeting the entire reasoning journey. SOC 2 Type II and HIPAA compliance make it the default choice for regulated industries. Entry price starts at $499/mo.
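A query fan-out can be modeled as a tree: the user’s prompt at the root, the sub-queries the model issues beneath it, and the domains cited for each terminal sub-query. The sketch below measures what share of those terminal sub-queries cite a given domain. It is a hypothetical data model for illustration only, not Profound’s Query Fanouts Analysis, and the sub-queries and domains are invented.

```python
from collections.abc import Iterator
from dataclasses import dataclass, field


@dataclass
class SubQuery:
    """A node in a hypothetical fan-out tree."""
    text: str
    cited_domains: set[str] = field(default_factory=set)
    children: list["SubQuery"] = field(default_factory=list)


def leaves(node: SubQuery) -> Iterator[SubQuery]:
    """Yield the terminal sub-queries, depth first."""
    if not node.children:
        yield node
    else:
        for child in node.children:
            yield from leaves(child)


def fanout_coverage(root: SubQuery, domain: str) -> tuple[float, list[str]]:
    """Share of terminal sub-queries that cite `domain`, plus the ones that do."""
    leaf_nodes = list(leaves(root))
    cited = [q.text for q in leaf_nodes if domain in q.cited_domains]
    return len(cited) / len(leaf_nodes), cited


root = SubQuery("best CRM for a 10-person startup", children=[
    SubQuery("CRM pricing for small teams", {"acme.example", "globex.example"}),
    SubQuery("CRM integrations", children=[
        SubQuery("CRM Slack integration", {"globex.example"}),
        SubQuery("CRM Gmail integration", {"acme.example"}),
    ]),
    SubQuery("CRM reviews", {"review-site.example"}),
])
share, cited = fanout_coverage(root, "acme.example")
print(share, cited)
```

Looking at the full tree, rather than only the top-level prompt, shows which branches of the reasoning journey a brand is absent from.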

3. Quattr

Quattr is recognized as a Highest Performer on G2 for ease of use. Its GIGA AI agent unifies content gap identification, optimized content generation, and direct CMS publishing into one workflow. Teams report a 3X increase in content velocity. It functions as a bridge between traditional SEO and conversational AI visibility for mid-market brands.

4. Visby AI

Visby AI categorizes brand mentions by funnel stage: awareness, consideration, or decision. Growth teams use this to identify exactly where they’re losing ground to competitors in the customer journey, then receive prioritized GEO (generative engine optimization) tasks such as content fixes and schema improvements. Starting at $79/mo, it’s well-suited for focused conversion-stage work.
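Funnel-stage monitoring comes down to two steps: assign each tracked prompt to a stage, then compute brand visibility per stage. In the sketch below, a keyword heuristic stands in for whatever classifier a real tool uses; the keyword lists, their priority order, and the sample prompts are all hypothetical and not Visby AI’s method.

```python
import re
from collections import Counter

# Hypothetical keyword rules, checked in order, so decision intent wins over consideration.
STAGE_RULES = [
    ("decision", ("pricing", "price", "vs", "alternative", "discount", "buy")),
    ("consideration", ("best", "top", "compare", "review")),
    ("awareness", ("what is", "how does", "why")),
]


def funnel_stage(prompt: str) -> str:
    """Assign a prompt to the first stage whose keywords it contains."""
    text = prompt.lower()
    for stage, keywords in STAGE_RULES:
        # Whole-word match, so "top" does not fire inside "laptop".
        if any(re.search(rf"\b{re.escape(kw)}\b", text) for kw in keywords):
            return stage
    return "unclassified"


def stage_visibility(results: list[tuple[str, set[str]]], brand: str) -> dict[str, float]:
    """Share of answers in each funnel stage that mention `brand`."""
    totals, hits = Counter(), Counter()
    for prompt, brands in results:
        stage = funnel_stage(prompt)
        totals[stage] += 1
        if brand in brands:
            hits[stage] += 1
    return {stage: hits[stage] / totals[stage] for stage in totals}


results = [
    ("What is a CRM?", {"Acme", "Globex"}),
    ("Best CRM for startups", {"Globex"}),
    ("Top CRM tools compared", {"Acme", "Globex"}),
    ("Acme vs Globex pricing", {"Globex"}),
]
print(stage_visibility(results, "Acme"))
```

In this invented sample, Acme is always present at the awareness stage but absent from the decision-stage answer, which is the kind of drop-off that funnel-stage reporting is meant to surface.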

5. AirOps

AirOps recently closed a $40M Series B and covers 30+ AI models. It’s favored by high-volume content teams that need to scale AEO efforts across thousands of pages simultaneously, with optimization recommendations tuned for both traditional search and AI citation engines.

What the Scores Don’t Capture: AI Visibility Accuracy

This is the part of the G2 evaluation process that most procurement checklists skip entirely.

The non-deterministic nature of modern LLMs means that two identical queries — run 30 seconds apart — can return different brand citations. A tool that ranks highly for “Ease of Use” on G2 might be using API shortcuts that return averaged or static data, rather than capturing this real-world variability through direct browser crawling. The result is a clean dashboard built on a misleading foundation.
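One way to respect that variability is to treat a citation as a rate rather than a yes/no snapshot: run the same prompt several times and report how often each domain appears. A minimal sketch, assuming the repeated runs have already been captured as sets of cited domains; the data below is invented.

```python
from collections import Counter


def citation_frequencies(runs: list[set[str]]) -> dict[str, float]:
    """Fraction of repeated runs of one query in which each domain was cited."""
    counts = Counter(domain for run in runs for domain in set(run))
    return {domain: n / len(runs) for domain, n in counts.items()}


# Four captures of the same prompt, seconds apart (invented data).
runs = [
    {"acme.example", "review-site.example"},
    {"globex.example", "review-site.example"},
    {"acme.example", "review-site.example"},
    {"review-site.example"},
]
freq = citation_frequencies(runs)
for domain, share in sorted(freq.items(), key=lambda kv: -kv[1]):
    print(f"{domain}: cited in {share:.0%} of runs")
```

Any single run would have reported acme.example as either cited or not cited; across four runs it appears in half of them, which is the number a dashboard should be showing.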

In 2026, there’s a second filter that G2 scores can’t measure: E-E-A-T as a binary gate.

Data from late 2025 shows that 47% of all AI Overview citations now come from pages that rank below the top five positions in Google organic search. AI engines aren’t simply prioritizing the domain with the highest Domain Authority (DA); they’re running content through an Experience, Expertise, Authoritativeness, and Trustworthiness threshold. Pages that clear it get cited. Pages that don’t, don’t.

The three content factors with the highest measurable impact:

| E-E-A-T Factor | Impact on AI Citation Probability |
|---|---|
| Structured Data (FAQ, Product, HowTo schema) | +73% selection rate |
| Multimodal Content (text + image + video for RAG) | +156% selection rate |
| Atomic Answer Format (passage-level extractability) | ~3X citation improvement |
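For the structured-data row, FAQ markup is usually published as schema.org JSON-LD. The sketch below builds a minimal `FAQPage` object using the standard `Question`, `acceptedAnswer`, and `Answer` vocabulary. The helper name `faq_jsonld` is hypothetical, and emitting valid markup is not, by itself, evidence of the selection-rate lift shown in the table.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    ("What is AEO?",
     "AEO (Answer Engine Optimization) focuses on structuring content so it gets "
     "retrieved, cited, and accurately represented by AI answer engines."),
])
# Embed the result in the page as a JSON-LD script tag.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```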

Most G2-rated tools don’t audit for any of these. They show you that your visibility dropped. They don’t tell you why the AI stopped citing your content, or what specific structural change would bring it back.
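A rough self-audit for the atomic-answer factor is to check whether each section opens with a short, self-contained answer that an engine could lift as a passage. The sketch below flags sections whose first sentence runs past a word limit. The 40-word threshold, the `(heading, body)` input format, and the naive sentence splitter are assumptions, not a published standard.

```python
import re


def audit_passages(sections: list[tuple[str, str]], max_words: int = 40) -> list[tuple[str, int]]:
    """Return (heading, word_count) for sections whose opening sentence is too long."""
    flagged = []
    for heading, body in sections:
        # Naive split on sentence-ending punctuation followed by whitespace.
        first_sentence = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
        words = len(first_sentence.split())
        if words > max_words:
            flagged.append((heading, words))
    return flagged


sections = [
    ("What is AEO?",
     "AEO structures content so AI answer engines can retrieve and cite it. "
     "It differs from SEO in what it targets."),
    # Padded filler to simulate a rambling introduction.
    ("Our story",
     "When we started out " + "years ago " * 20 + "we had no idea. Later we learned."),
]
print(audit_passages(sections))
```

A check like this only points at where to look; whether a passage actually answers the question still needs a human read.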

The real question for any procurement team isn’t how users rate the dashboard. It’s whether the data provided changes the strategy.

How to Use G2 Insights to Actually Choose the Right AEO Tool

G2 is a starting point, not a verdict. Here’s a three-step framework for using it without getting misled.

Step 1: Filter reviews for implementation realism. Skip the aggregate score and search G2 comments for specific terms: “data lag,” “hallucination detection,” “manual verification,” “accuracy variance.” These phrases describe what actually breaks down when a team tries to run an AEO program at scale. Also filter by your industry: a tool that works well for an e-commerce brand may fail a healthcare provider, a vertical where AI Overviews appear on nearly 49% of queries and accuracy is non-negotiable.
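If you copy or export review text into a spreadsheet, this filter is easy to automate. A minimal sketch, assuming each review is a dict with hypothetical `stars` and `text` fields; it does not use any G2 API, and the reviews below are invented.

```python
RED_FLAGS = ("data lag", "hallucination detection", "manual verification", "accuracy variance")


def flag_reviews(reviews: list[dict], max_stars: int = 3) -> list[tuple[int, list[str]]]:
    """Return (stars, matched_terms) for low-star reviews that mention a red-flag term."""
    hits = []
    for review in reviews:
        if review["stars"] > max_stars:
            continue  # Low-star reviews carry the useful signal.
        text = review["text"].lower()
        found = [term for term in RED_FLAGS if term in text]
        if found:
            hits.append((review["stars"], found))
    return hits


reviews = [
    {"stars": 5, "text": "Great dashboard, support answers fast."},
    {"stars": 2, "text": "Noticeable data lag and we still need manual verification."},
    {"stars": 3, "text": "Pricey, but reports are clean."},
]
print(flag_reviews(reviews))
```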

Step 2: Validate platform coverage. Research shows that 47% of AI search users regularly switch between two or more platforms. A tool that only covers ChatGPT, which currently drives 87.4% of AI referral traffic, is not a forward-looking investment. You need coverage across at least ChatGPT, Gemini, Perplexity, and Claude, with a roadmap that includes emerging global engines like DeepSeek and Doubao. Also check methodology: direct browser capture and API output produce meaningfully different data.

Step 3: Run high-volume trial data before committing. Topify’s Basic plan includes a 30-day trial with 100 prompts and 9,000 AI answer analyses. That’s a large enough dataset to cross-verify tool-reported citations against manual spot checks. If the platform’s recommendations don’t move your citation frequency within a 30-60 day window, the long-term ROI case collapses.

By contrast, a short trial on a limited prompt set tells you very little. Negotiate for trial volume, not trial duration.
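A rough way to judge whether trial volume is large enough is to put a confidence interval around each citation rate. The sketch below uses the Wilson score interval, a standard choice for binomial proportions. It assumes, hypothetically, that 9,000 analyses spread evenly over 100 prompts give about 90 answers per prompt and that repeated runs are independent; the citation counts are invented. The 20-answer manual spot check produces a much wider interval, so it can confirm or contradict the tool’s number only coarsely.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a binomial proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Tool-reported: brand cited in 27 of 90 answers for one prompt (invented).
tool_low, tool_high = wilson_interval(27, 90)
# Manual spot check: brand cited in 9 of 20 answers captured by hand (invented).
manual_low, manual_high = wilson_interval(9, 20)
print(f"tool:   {tool_low:.2f}-{tool_high:.2f}")      # roughly 0.22-0.40
print(f"manual: {manual_low:.2f}-{manual_high:.2f}")  # roughly 0.26-0.66
# Loose check: overlapping intervals mean the spot check does not contradict the tool.
overlap = tool_low <= manual_high and manual_low <= tool_high
print("consistent" if overlap else "investigate")
```

The wider the interval, the less a trial can tell you, which is why prompt and answer volume matters more than calendar days.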

Conclusion

The AEO tool market in 2026 has split into two camps: measurement tools and execution engines. G2 scores are largely a proxy for the former — they reward clean dashboards and responsive support, not the technical accuracy needed to clear E-E-A-T filters or influence an AI’s reasoning chain.

Bottom line: prioritize tools that close the full loop — from analysis to content creation to deployment. Topify’s 7-metric framework, Source Analysis, and One-Click Agent Execution put it at the technical frontier for teams that need to move from visibility data to actual search influence. For regulated enterprises, Profound offers the strongest compliance infrastructure. For teams that need CMS-integrated execution, Quattr is a strong option.

In the answer economy, visibility isn’t guaranteed by domain authority. It’s earned through semantic clarity and structured content. Choose the tool that helps you earn it — not just measure it.

Start with Topify’s 30-day trial and test your actual citation rate before committing to any platform.

FAQ

What is AEO and how is it different from SEO in 2026?

AEO (Answer Engine Optimization) focuses on structuring content so it gets retrieved, cited, and accurately represented by AI answer engines. Where SEO targets clicks on a results page, AEO targets clarity and influence inside the AI’s synthesized response. In 2026, over 60% of search queries resolve without a single click — which is why AEO has moved from experimental to essential.

Are AEO tools listed as a separate category on G2?

Yes. G2 launched the Answer Engine Optimization category in March 2025. Some hybrid tools still appear in both AEO and traditional SEO categories, and some legacy platforms sell AEO features as add-on “AI Visibility Toolkits” within existing suites.

What specific technical complaints should I look for in G2 reviews?

Prioritize reviews that mention “slow loading,” “data latency,” “interface complexity,” and “accuracy variance.” These terms describe a tool’s inability to keep pace with the non-deterministic, frequently updated outputs of modern LLMs, which is the core technical challenge of AEO.

Does Topify have a G2 profile?

Topify is one of the fastest-growing platforms in the 2026 AEO market. Early user feedback highlights fast innovation speed and user-centric design. Comparative G2 badges are updated quarterly as review volume scales. You can explore the platform directly at topify.ai.

How many AI platforms should a comprehensive AEO tool cover?

A minimum viable tool covers ChatGPT, Gemini, and Perplexity. Professional-grade platforms like Topify extend coverage to DeepSeek, Doubao, and other global engines — which matters if any portion of your audience uses non-English AI tools or operates in markets where ChatGPT isn’t dominant.