You’ve spent months polishing your website copy. Every page is optimized, every heading is deliberate. Then someone asks ChatGPT about your product category, and the AI describes your brand using language pulled from a two-year-old Reddit thread you never saw.

That’s not a hypothetical. That’s how social listening became a search visibility problem.

Social listening was always about understanding what people say about your brand. In 2026, it’s also about understanding what AI is learning from those conversations, and whether that narrative is one you’d choose for yourself.

Social Listening Has a New Job Description

Traditional social listening was defensive. Track mentions, catch crises early, measure sentiment over time. Useful, but reactive.

The shift comes from how AI search engines actually work. Platforms like ChatGPT, Perplexity, and Google’s AI Overviews use Retrieval-Augmented Generation to synthesize brand descriptions from across the web. They don’t prioritize your “About Us” page. They prioritize authentic, peer-validated, conversational content.

That means social listening is now dataset engineering. The conversations happening in forums, review platforms, and Q&A sites today are feeding the AI answers that will describe your brand tomorrow.

Community platforms and Wikipedia now capture 52.5% of all AI citations, frequently outperforming brand-owned domains. On the flip side, a brand’s own website accounts for only 9% of its mentions in AI-generated answers. The other 91% comes from third-party environments your team may not be monitoring at all.

That gap is the new job description of social listening.

The Platforms Where Brand Conversations Shape AI Answers

Not all platforms carry equal weight in the AI citation economy. Generative engines have clear preferences, and most brands are looking in the wrong places.

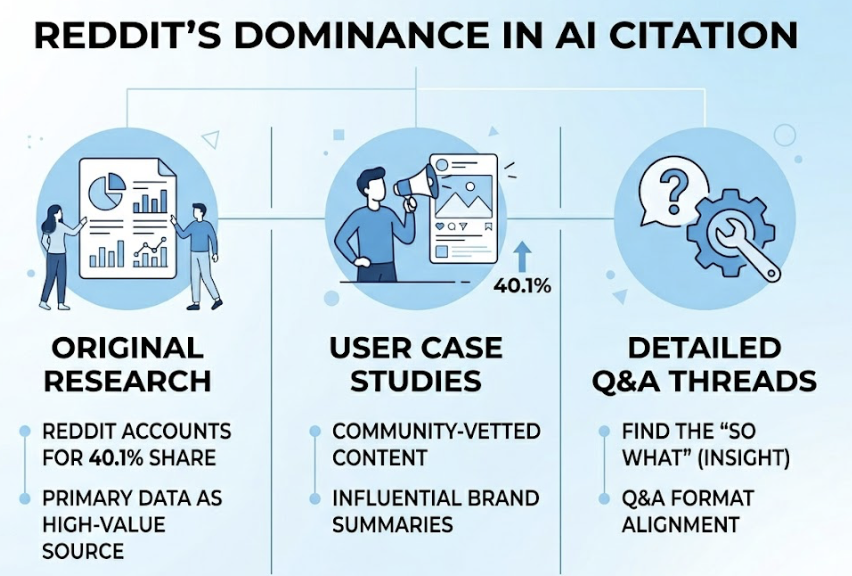

Reddit accounts for 40.1% of AI citation share across ChatGPT, Perplexity, and Google AI Overviews. Its Q&A format and community-vetted content make it the single most influential source for AI brand summaries. AI models use Reddit to find the “so what” behind technical facts.

Quora provides structured answers that map directly to how AI engines retrieve information for long-tail, high-intent queries. For B2B brands, platforms like G2, Capterra, and TrustRadius function as credibility layers. Perplexity uses reviews in 100% of its product-related responses.

Most brand teams monitor their own social accounts. That’s where the disconnect starts.

The conversations with the most AI influence are happening in spaces you don’t own: industry subreddits, niche Slack communities, product review threads, and vertical forums. If your brand mention monitoring stops at Instagram and LinkedIn, you’re tracking the wrong audience.

| Platform | AI Citation Share | Why It Matters |
|---|---|---|
| Reddit | 40.1% | High-trust Q&A, community-vetted |
| Wikipedia | 26.3% | Foundational training data |
| YouTube | 23.5% | How-to and tutorial authority |
| News & Media | 20.3% | Temporal relevance |
| Review Sites | ~8.5% | Structured brand evaluation |

5 Social Listening Signals That Tell You What AI Is Picking Up

Most teams track volume and sentiment. That’s fine for a quarterly dashboard. It’s not enough for online reputation tracking in an AI-first environment.

Here are the five signals that actually indicate how AI is building its understanding of your brand.

Signal 1: Sentiment Shifts, Not Just Scores

Don’t just track whether sentiment is positive or negative. Track changes in tone and engagement depth. When customers’ messages shrink by an average of 55%, or their response time to brand outreach stretches from hours to days, users are 3.2x more likely to churn within 30 days. AI models pick up on this tonal flatness across community threads and tend to incorporate the “general vibe” of recent discourse into brand summaries.
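A minimal sketch of how the message-length signal could be tracked, assuming you can export message text for a baseline period and a recent period (the export itself is not shown). The 55% default threshold simply reuses the figure above, the function names are hypothetical, and word count is only a rough proxy for engagement depth.

```python
from statistics import mean


def length_drop(baseline: list[str], recent: list[str]) -> float:
    """Fractional drop in average word count from the baseline to the recent window."""
    if not baseline or not recent:
        return 0.0  # not enough data to compare
    base = mean(len(m.split()) for m in baseline)
    now = mean(len(m.split()) for m in recent)
    if base == 0:
        return 0.0
    return (base - now) / base


def tonal_flattening(baseline: list[str], recent: list[str], threshold: float = 0.55) -> bool:
    """Flag an account whose messages shrank by at least `threshold` (assumed default)."""
    return length_drop(baseline, recent) >= threshold
```

Running this per account each week gives a list of conversations worth a closer look; it does not, on its own, predict churn.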

Signal 2: Competitor Citation Gaps

Where are competitors being cited while you’re absent? If a competitor dominates citations for “best project management tool for remote teams,” they’ve successfully seeded those communities with content AI considers authoritative for that sub-query. Mapping these gaps tells you exactly where to build.
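Once you have recorded which brands appear in AI answers (or in top community threads) for each query you care about, the gap check is a simple set comparison. A sketch, assuming that data already exists as a mapping from query to cited brands; how you collect it is out of scope, and `AcmePM` and `RivalPM` are placeholder names.

```python
def citation_gaps(
    citations: dict[str, set[str]], brand: str, competitors: set[str]
) -> dict[str, set[str]]:
    """Return queries where at least one competitor is cited and `brand` is not."""
    gaps: dict[str, set[str]] = {}
    for query, cited in citations.items():
        rivals = cited & competitors
        if rivals and brand not in cited:
            gaps[query] = rivals
    return gaps


observed = {
    "best project management tool for remote teams": {"RivalPM"},
    "kanban board for startups": {"AcmePM", "RivalPM"},
    "gantt chart software": set(),
}
print(citation_gaps(observed, "AcmePM", {"RivalPM"}))
```

Only the first query is reported: in the second, both brands are cited, and in the third, nobody is.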

Signal 3: Unanswered Questions

Unanswered questions in high-authority forums point directly to content voids. When AI can’t find a definitive answer to a user’s specific problem, it either hallucinates or recommends whoever does have an answer. Tracking Reddit and Quora for unanswered questions in your niche is one of the highest-ROI moves a content team can make.
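Here is a rough filter for the unanswered-question signal, assuming thread titles and comment counts have already been pulled from each platform. The question heuristic (a trailing question mark or a leading question word) is a deliberately simple assumption, and a thread with replies can still lack a real answer, so treat the output as a shortlist for human review.

```python
from dataclasses import dataclass

QUESTION_WORDS = {"how", "what", "why", "which", "is", "can", "does", "should", "where"}


@dataclass
class Thread:
    title: str
    num_comments: int


def is_question(title: str) -> bool:
    text = title.strip().lower()
    first_word = text.split(" ", 1)[0]
    return text.endswith("?") or first_word in QUESTION_WORDS


def unanswered(threads: list[Thread], max_comments: int = 0) -> list[Thread]:
    """Question-style threads with no more than `max_comments` replies."""
    return [t for t in threads if is_question(t.title) and t.num_comments <= max_comments]
```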

Signal 4: Entity Associations

AI models build brand understanding through co-occurrence. If a software product is consistently mentioned alongside “steep learning curve” in Reddit discussions, the AI starts to hard-code that association. Social listening can surface whether these associations are accurate or need to be actively corrected.
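A minimal co-occurrence count illustrates the idea: for each post that mentions the brand, tally which watchlist phrases appear alongside it. This assumes you already have post text and a hand-picked phrase list. Plain substring matching is a simplification; a real pipeline would likely add tokenization, phrase variants, and negation handling.

```python
from collections import Counter


def co_occurrences(posts: list[str], brand: str, phrases: list[str]) -> Counter:
    """Count how many brand-mentioning posts also contain each phrase."""
    counts: Counter = Counter()
    brand_lc = brand.lower()
    for post in posts:
        text = post.lower()
        if brand_lc not in text:
            continue  # only posts that mention the brand count
        for phrase in phrases:
            if phrase.lower() in text:
                counts[phrase] += 1
    return counts
```

Comparing these counts month over month shows whether an association such as “steep learning curve” is growing or fading.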

Signal 5: Karma Velocity

AI engines don’t just index for popularity. They index for helpfulness. A thread that gets rapidly upvoted and updated within the last 30 days signals to models like Perplexity that it’s fresh and community-validated. Monitoring high-velocity threads gives you the early window to intervene before a narrative gets baked in.
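Karma velocity has no single standard definition. The sketch below assumes one: net score divided by hours since posting, limited to threads with activity in the last 30 days. The `ThreadStats` fields, the minimum velocity of 5 points per hour, and the data source are all hypothetical; tune them to your niche.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ThreadStats:
    url: str
    score: int               # net upvotes
    created: datetime        # timezone-aware
    last_activity: datetime  # latest comment or edit, timezone-aware


def karma_velocity(t: ThreadStats, now: datetime) -> float:
    """Points per hour since posting; the 1-hour floor avoids dividing by ~0."""
    hours = max((now - t.created).total_seconds() / 3600, 1.0)
    return t.score / hours


def high_velocity(threads, now=None, min_velocity=5.0, window_days=30):
    """Fresh threads at or above `min_velocity`, fastest first."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=window_days)
    fresh = [t for t in threads if t.last_activity >= cutoff]
    ranked = sorted(fresh, key=lambda t: karma_velocity(t, now), reverse=True)
    return [t for t in ranked if karma_velocity(t, now) >= min_velocity]
```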

How to Set Up Brand Mention Alerts Across Reddit, Quora, and Beyond

The foundation is a broad keyword architecture. Your monitoring list should go well beyond the brand name.

Include brand name variations and common misspellings. Add problem-intent phrases: “how do I fix [your product category problem]” or “best way to [task your product solves].” Layer in comparison clusters: “[your brand] vs [competitor]” and “alternative to [your product].” Don’t overlook executive names, because mentions of leadership influence brand perception in ways that show up in AI responses.
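This keyword architecture can be generated from a few inputs instead of maintained by hand. A sketch, assuming simple string templates; `build_keywords` and `to_boolean` are hypothetical helpers, and the quoted `OR` form is a generic Boolean syntax you would adapt to whatever query language your listening tool accepts.

```python
def build_keywords(
    brand: str,
    variations: list[str],
    competitors: list[str],
    problems: list[str],
    tasks: list[str],
    executives: tuple[str, ...] = (),
) -> list[str]:
    """Expand a brand into a monitoring list (templates are illustrative)."""
    keywords = [brand, *variations]                       # names and misspellings
    keywords += [f"how do I fix {p}" for p in problems]   # problem intent
    keywords += [f"best way to {t}" for t in tasks]
    for c in competitors:                                 # comparison clusters
        keywords += [f"{brand} vs {c}", f"{c} vs {brand}"]
    keywords.append(f"alternative to {brand}")
    keywords += list(executives)                          # leadership mentions

    # Drop case-insensitive duplicates while keeping the first spelling seen.
    seen: set[str] = set()
    unique = []
    for k in keywords:
        if k.lower() not in seen:
            seen.add(k.lower())
            unique.append(k)
    return unique


def to_boolean(keywords: list[str]) -> str:
    """Join keywords into a generic quoted OR query; adapt to your tool's syntax."""
    return " OR ".join(f'"{k}"' for k in keywords)
```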

Platform priority: Reddit and Quora first, then G2/Trustpilot/Capterra for B2B, then Twitter/X and relevant industry newsletters. Niche Slack and Discord communities are high-signal but harder to access systematically.

On response SLAs: brands that respond to active frustration signals with a meaningful resolution within 24 hours see a 67% retention rate. High-intent mentions, like direct recommendation requests, should get a response within 30 to 90 minutes.
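One way to put these SLAs into practice is to tag each new mention and attach a respond-by time. The trigger phrases below are illustrative assumptions, not a validated classifier, and the sketch uses the outer edge of each window (90 minutes, 24 hours). High intent is checked first, so a post that is both a recommendation request and a complaint gets the tighter deadline.

```python
from datetime import datetime, timedelta
from typing import Optional

# Illustrative trigger phrases; replace with rules tuned to your own mentions.
HIGH_INTENT = ("recommend", "looking for", "which tool", "any suggestions")
FRUSTRATION = ("frustrated", "broken", "not working", "cancel", "refund")


def response_deadline(text: str, seen_at: datetime) -> tuple[str, Optional[datetime]]:
    """Classify a mention and return (label, respond-by time or None)."""
    lowered = text.lower()
    if any(k in lowered for k in HIGH_INTENT):
        return "high-intent", seen_at + timedelta(minutes=90)
    if any(k in lowered for k in FRUSTRATION):
        return "frustration", seen_at + timedelta(hours=24)
    return "routine", None  # no SLA; handle in the normal review cycle
```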

Two guardrails matter here. First, don’t prioritize speed over quality. A generic response is worse than a delayed useful one. Second, follow a value-first framework on community platforms: mirror the community’s language, provide a genuinely helpful answer of 4 to 8 sentences, and disclose your affiliation. Transparency builds the trust that translates into AI training data. Astroturfing, on the other hand, can get content removed and excluded from AI training sets entirely.
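Before posting, a draft reply can be checked against the two mechanical parts of that framework: length and disclosure. This is a sketch. The sentence splitter is naive (it will miscount abbreviations like “e.g.”), and the disclosure patterns are assumptions you would adjust to your team’s standard wording. Mirroring the community’s language still needs a human.

```python
import re

# Hypothetical disclosure phrasings; match your team's actual wording.
DISCLOSURE_PATTERNS = (
    r"\bI work (?:at|for)\b",
    r"\bdisclosure\b",
    r"\bI'm (?:on the team|an employee) at\b",
)


def count_sentences(text: str) -> int:
    """Split on whitespace that follows ., !, or ?; naive but dependency-free."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([p for p in parts if p])


def check_reply(text: str) -> list[str]:
    """Return a list of problems; an empty list means the draft passes."""
    problems = []
    n = count_sentences(text)
    if not 4 <= n <= 8:
        problems.append(f"{n} sentences; aim for 4 to 8")
    if not any(re.search(p, text, re.IGNORECASE) for p in DISCLOSURE_PATTERNS):
        problems.append("no affiliation disclosure found")
    return problems
```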

Turning Social Listening Data into a Content Strategy

The most direct path from social listening to ROI is closing the content loop: using what you hear in communities to build assets that AI engines want to cite.

Unanswered questions in your niche become blog post topics. User-reported pain points become FAQ sections structured with clear H2/H3 headers formatted as questions. High-frequency negative associations become product messaging opportunities.

When you create content from these insights, structure matters as much as substance. Princeton research shows that adding original statistics, expert quotes, and authoritative citations can boost AI visibility by 30–40%. Lead with the direct answer in the first 40 to 60 words of each section. Use semantic chunking: keep each section focused on a single idea so it still makes sense when extracted on its own. Make it easy for AI crawlers to extract a clear, citable claim.
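To audit existing drafts against the 40-to-60-word guideline, you can measure the first paragraph under each H2/H3 heading. A minimal sketch, assuming the drafts are Markdown with `##`/`###` headings and blank lines between paragraphs. Treating the opening paragraph as the “direct answer” is an interpretation of the guideline, not a rule from the research.

```python
import re


def opening_paragraph_lengths(markdown: str) -> dict[str, int]:
    """Map each H2/H3 heading to the word count of the paragraph right after it."""
    # With a capturing group, re.split returns [preamble, heading1, body1, heading2, body2, ...].
    sections = re.split(r"^(#{2,3} .+)$", markdown, flags=re.MULTILINE)
    results = {}
    for heading, body in zip(sections[1::2], sections[2::2]):
        paragraphs = [p for p in body.strip().split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        results[heading.lstrip("#").strip()] = len(first.split())
    return results


def out_of_range(markdown: str, lo: int = 40, hi: int = 60) -> dict[str, int]:
    """Headings whose opening paragraph falls outside [lo, hi] words."""
    return {h: n for h, n in opening_paragraph_lengths(markdown).items() if not lo <= n <= hi}
```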

Eighty percent of consumers trust user-generated content (UGC) more than traditional ads, and social media posts featuring UGC drive 10.38x higher conversions. Capturing positive community testimonials and integrating them into your site with schema markup is one of the most underused ways to act on audience insights.

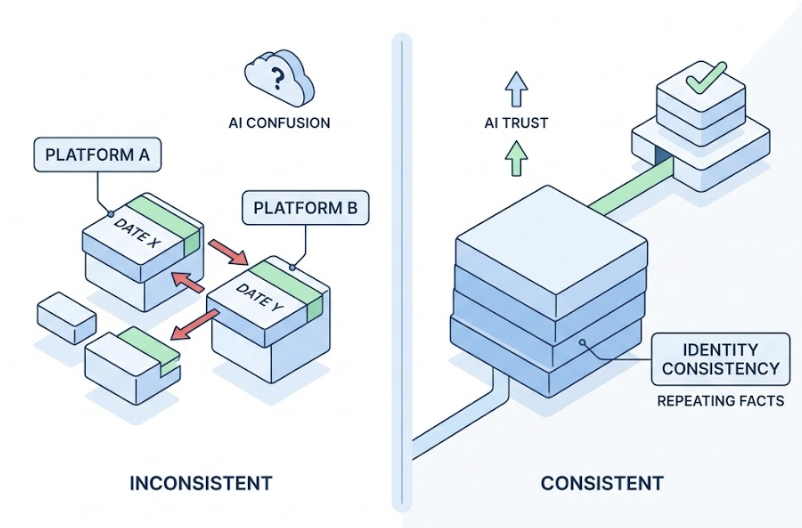

One often-overlooked priority is identity consistency. AI models trust facts that repeat across independent surfaces without contradiction. Social listening data frequently surfaces where a brand’s information is inconsistent across platforms, like conflicting founding dates on LinkedIn vs. Crunchbase. Cleaning up entity data is unglamorous work, but it directly affects how AI synthesizes your brand.
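Spotting those inconsistencies can start as a simple comparison of the same fields across profiles. A sketch, assuming you have already collected each platform’s values into a dictionary (the collection step and the sample data are hypothetical). Values are compared after trimming and lowercasing, so “Austin” and “austin” are not reported as a conflict.

```python
def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return {field: {platform: value}} for fields whose values disagree."""
    by_field: dict[str, dict[str, str]] = {}
    for platform, fields in profiles.items():
        for field, value in fields.items():
            by_field.setdefault(field, {})[platform] = value
    return {
        field: values
        for field, values in by_field.items()
        # normalize lightly so casing and stray spaces are not reported
        if len({v.strip().lower() for v in values.values()}) > 1
    }


profiles = {
    "LinkedIn": {"founded": "2015", "hq": "Austin"},
    "Crunchbase": {"founded": "2016", "hq": "austin"},
    "Website": {"founded": "2015"},
}
print(find_conflicts(profiles))
```

Here only `founded` is reported, with every platform’s value listed so you can see which profile to correct.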

What Social Listening Misses About AI Search

Here’s the gap most brands discover too late.

Traditional social listening tools can tell you that a Reddit thread exists. They can tell you how many upvotes it has, what the sentiment is, and whether it mentions your brand. What they can’t tell you is whether ChatGPT is using that thread as its primary source for describing your product’s pros and cons.

That’s a category difference, not a feature gap.

Zero-click searches have reached 83% in AI-enhanced environments. In other words, most AI-assisted queries, including those about your brand, likely end without the user visiting any website. The AI answer is the destination. If you’re only tracking human engagement metrics like click-through rate, you’re measuring a shrinking slice of the actual discovery landscape.

This is where Topify adds a layer that traditional social listening tools don’t cover. Rather than monitoring what humans see in forums, Topify tracks how AI systems actually present your brand across ChatGPT, Perplexity, Gemini, and other major platforms. The platform’s Sentiment Analysis tracks how AI characterizes your brand on a 0–100 scale, while Source Analysis shows which domains AI is citing when it describes you. You can see if that Reddit thread is appearing in AI answers, and more importantly, whether the content on your own domain is earning citations at all.

For teams that have invested in community listening but haven’t yet tracked AI representation, the combination covers both layers: what people are saying, and what AI is learning from what they say.

Scaling Social Listening Without Overwhelming Your Team

The practical challenge isn’t knowing what to monitor. It’s building a workflow that surfaces the right signals without drowning in noise.

For smaller marketing teams, the priority is platform coverage over frequency. Monitor fewer platforms with higher quality attention rather than setting up alerts across 15 channels that nobody has time to review. Reddit and a vertical review site specific to your industry will typically deliver more signal per hour than a broad sweep of lower-trust sources.

For growing teams, integrating real-time mention alerts into a shared Slack channel creates a lightweight triage system. Route high-priority mentions to a #brand-signals channel. Use emoji reactions to track status: reviewing, escalated, handled. This keeps the loop tight without requiring dedicated headcount.
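The routing step can be sketched with a Slack incoming webhook, which accepts a JSON body with a `text` field and posts to the channel chosen when the webhook was created (for example, #brand-signals). The `mention` dictionary shape, the `priority` labels, and the webhook URL are assumptions. Emoji reactions are still added by people in Slack, so the message only restates the status legend.

```python
import json
import urllib.request

STATUS_LEGEND = ":eyes: reviewing | :rotating_light: escalated | :white_check_mark: handled"


def build_alert(platform: str, url: str, snippet: str, priority: str) -> dict:
    """Slack incoming-webhook payload with the status legend appended."""
    return {
        "text": f"[{priority.upper()}] {platform} mention: {url}\n> {snippet}\n"
                f"React to set status: {STATUS_LEGEND}"
    }


def post_to_slack(webhook_url: str, payload: dict) -> int:
    """POST the payload as JSON; returns the HTTP status code."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


def route(mention: dict, webhook_url: str, send=None):
    """Forward only high-priority mentions; return the payload sent, or None."""
    if mention["priority"] != "high":
        return None
    payload = build_alert(mention["platform"], mention["url"], mention["snippet"], mention["priority"])
    (send or post_to_slack)(webhook_url, payload)
    return payload
```

The optional `send` argument makes it easy to swap in a logger or a test double instead of posting for real.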

For teams managing multiple brands or clients, a shared monitoring infrastructure with per-brand filtering is a prerequisite. Manual workflows don’t scale past three or four brands.

The table below shows what to prioritize based on team size and focus:

| Team Context | Primary Focus | Tool Priority |
|---|---|---|
| Small in-house team | Reddit + 1 vertical review platform | Mention alerts + weekly digest |
| Growing marketing team | 4–5 platforms + competitor monitoring | Real-time Slack routing + response SLAs |
| Agency or multi-brand | Cross-platform + AI search layer | Topify Sentiment & Source Analysis + Competitor Monitoring |

The key addition for any team thinking about AI search visibility is building an AI monitoring layer alongside traditional community sentiment analysis. Topify’s platform tracks brand mentions across AI responses directly, measuring Visibility, Sentiment, and Position in a single dashboard. It’s the step that connects what you’re hearing in communities to what AI is actually saying about you.

Conclusion

Social listening used to end at the community. You heard what people said, you responded, you reported. That was the loop.

The loop is longer now. What people say in communities feeds into how AI describes your brand, which shapes whether new customers find you at all. The brands that treat social listening as a passive monitoring function will keep optimizing for an audience that’s increasingly not the first stop in the decision journey.

The question isn’t whether to monitor brand conversations. It’s whether you’re also tracking what AI is learning from them, and whether you’re using what you hear to build the kind of content AI actually cites.

Start with the signals. Build the assets. Then check how AI is representing you.

FAQ

Q: What’s the difference between social listening and social media monitoring?

A: Social media monitoring tracks mentions on owned channels and collects surface metrics like likes, shares, and brand mention counts. Social listening goes further: it analyzes patterns, sentiment shifts, and context across third-party platforms to surface strategic insights. In the AI era, social listening also includes tracking how community conversations influence what AI search engines say about your brand.

Q: How do I set up brand mention alerts for Reddit and Quora?

A: Build a keyword list that includes brand name variations, competitor comparison phrases, and problem-intent queries relevant to your category. Use tools that support Boolean search to filter high-signal mentions from noise. Route alerts to a shared Slack channel with clear ownership for response. Prioritize threads with high karma velocity, since those are the discussions most likely to be picked up by AI crawlers within 30 days.

Q: How does social listening data connect to GEO optimization strategy?

A: Social listening identifies the specific questions, pain points, and language your audience uses in communities. GEO optimization takes that input and structures it into content that AI engines are more likely to cite. Concretely: an unanswered question on Reddit becomes a blog post with semantic chunking and a direct answer in the first 50 words. Community sentiment around a competitor weakness becomes a comparison asset. The loop closes when AI starts citing your content instead of the forum thread.

Q: What are the best social listening tools for marketing teams in 2026?

A: The right toolset depends on coverage needs. For traditional community sentiment analysis, tools like Sprout Social and Brandwatch cover social channels well. For tracking how brand conversations influence AI search specifically, platforms like Topify add a layer those tools don’t offer: cross-platform AI visibility tracking, sentiment scoring within AI responses, and source analysis showing which domains AI is citing for your category.