Your domain authority is solid. Your backlink profile looks strong. Your pages rank in the top 10 for a dozen competitive keywords. Then someone asks ChatGPT for a recommendation in your category, and your brand doesn’t show up once.

That gap between traditional SEO performance and AI search visibility is widening. Brand mentions now correlate with AI Overview placement roughly three times as strongly as backlinks do. The signals that made your site rank aren’t the same signals that make AI recommend you. And the difference between an LLM citation and a backlink isn’t just technical. It’s structural.

Backlinks Built the Old Web. LLM Citations Are Building the New One.

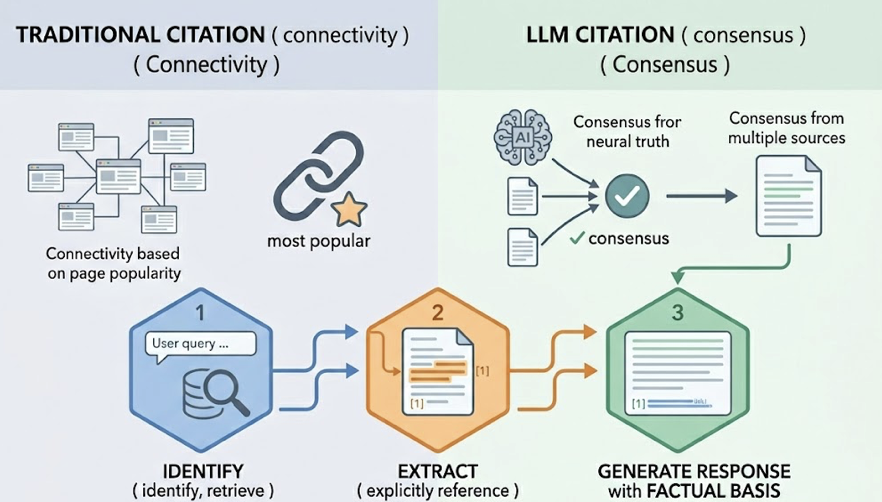

For more than twenty years, the hyperlink was the internet’s primary trust instrument. Google’s PageRank algorithm treated each backlink as a vote of confidence from one domain to another, and that logic shaped how every brand thought about authority. Rank higher, get more links, rank higher still. The entire system was built around navigation: guiding users to the most authoritative destination.

LLM citations work on a completely different principle. When an AI model generates a response, it doesn’t look for the most popular page. It looks for the most corroborable passage. An LLM citation happens when a model identifies, retrieves, and explicitly references a specific piece of content as the factual basis for its answer. The currency isn’t connectivity. It’s consensus.

The scale of this shift is already measurable. AI-driven website sessions grew by roughly 527% year-over-year in early 2025. As of 2026, approximately 48% of Google queries trigger an AI Overview, pushing traditional organic results further down the page. Being the top result in a traditional index no longer guarantees visibility if your brand isn’t also synthesized into the AI’s narrative.

| Dimension | Link Economy | Consensus Economy |
|---|---|---|
| Primary Signal | Hyperlinks (Backlinks) | Brand Mentions and Citations |
| Authority Logic | Popularity-based (PageRank) | Corroboration-based (Consensus) |
| Search Mechanism | Crawling and Indexing | Retrieval-Augmented Generation |
| User Value | Navigation to a source | Direct answer with attribution |
| Content Unit | The Domain/Page | The Passage/Atomic Claim |

The shift boils down to this: from “who links to you” to “who is talking about you.”

Where Backlinks and LLM Citations Actually Diverge

Traditional search engines map the web through links. LLMs map the web through entities. That’s not a subtle distinction. It changes what counts as authority, how content gets evaluated, and what marketers should measure.

The numbers make the disconnect clear. While 76% of URLs cited in Google’s AI Overviews also rank in the organic top 10, that correlation falls apart for standalone LLMs. Around 80% of URLs cited by ChatGPT don’t rank in Google’s top 100 for the same query. AI models are searching a different universe of content.

In that universe, unlinked brand mentions carry more weight than most SEO practitioners realize. Brands that show up consistently across four or more non-affiliated platforms are 2.8 times more likely to appear in ChatGPT responses. Mentions on Quora and Reddit alone correlate with 4x higher citation likelihood. Third-party review profiles on G2, Capterra, and Trustpilot increase citation chances by 3x.

| Metric | Traditional SEO | LLM / Generative Engine |
|---|---|---|
| Authority Measurement | Domain Rating (0-100) | Entity Confidence Score |
| Ranking Correlation | High (Links = Rank) | Low (Links ≠ Citation) |
| Impact of Mentions | Minimal / Indirect | Critical (3:1 over links) |
| Multi-Platform Signal | Optional | Essential (4+ platforms = 2.8x lift) |
| Conversion Rate | Standard Organic (~2-3%) | 4.4x Higher (pre-qualified intent) |

That last row matters more than most teams think. AI referrals don’t just add visibility. Those visitors convert at dramatically higher rates because the model has already pre-qualified the recommendation.
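To put a number on that last row, here’s a rough sketch of the arithmetic. It assumes the ~2-3% organic baseline and the 4.4x multiplier from the table; the function name and the 1,000-visitor figure are made up for illustration, and your own baseline will differ.

```python
def ai_referred_conversion_range(baseline_low=0.02, baseline_high=0.03, multiplier=4.4):
    """Scale an organic conversion-rate range by the AI-referral multiplier.

    Defaults come from the table above (~2-3% organic, 4.4x lift).
    """
    return baseline_low * multiplier, baseline_high * multiplier


low, high = ai_referred_conversion_range()
print(f"Implied AI-referred conversion rate: {low:.1%} to {high:.1%}")

# Illustrative only: what that range means for a hypothetical 1,000 visitors.
visitors = 1000
print(f"Conversions per {visitors} visitors: {visitors * low:.0f} to {visitors * high:.0f}")
```

If the multiplier holds for your category, a 2-3% organic baseline implies something closer to 9-13% for AI-referred traffic. Treat that as a planning estimate, not a forecast.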

Why a High DR Doesn’t Guarantee an LLM Citation

Here’s where the old mental model breaks. A website with a DR of 70+ can be entirely bypassed by AI citation engines in favor of a niche blog with a DR of 30.

LLMs don’t evaluate authority through link equity. They evaluate it through topical depth, neutral guidance, and structural extractability. An AI model will prefer a vendor-neutral comparison guide from an industry-specific publisher over a Forbes article that mentions a brand in passing. The niche content is easier to rephrase as a factual answer, and that’s what matters to the model’s confidence layer.

Each platform also pulls from a different source pool. ChatGPT matches Bing’s top 10 results about 87% of the time, making Bing optimization directly relevant. Perplexity leans heavily on user-generated content, with Reddit accounting for 46.7% of its preferred source citations. AI Overviews draw 76.1% of their sources from pages in Google’s organic top 10.

This means a brand can dominate Perplexity (strong Reddit presence) and be invisible on ChatGPT (weak Bing rankings) at the same time. Optimizing for a single model misses citation opportunities on the others.

A few data points sharpen this further. Content with statistics and named citations gets 30-40% higher visibility in AI responses. About 44.2% of all LLM citations come from the first 30% of an article’s text. And content updated within the last 30 days is 3.2x more likely to be cited than older material. Freshness isn’t optional in generative search. It’s a filter.
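Two of those data points are easy to check on your own pages. The sketch below is a hypothetical audit helper, not a standard tool: it flags whether a page was updated inside a 30-day window and whether any figure shows up in the first 30% of its words. The function name, the sample text, and the dates are all assumptions for illustration.

```python
from datetime import date


def audit_page(text, last_updated, today, window_days=30, lead_share=0.30):
    """Hypothetical check: is the page fresh, and does a figure appear early?"""
    words = text.split()
    # The "lead" is the first 30% of the words (at least one word).
    lead_len = max(1, int(len(words) * lead_share))
    lead = words[:lead_len]
    # Crude proxy for "contains a statistic": any word with a digit in it.
    figure_in_lead = any(any(ch.isdigit() for ch in word) for word in lead)
    age_days = (today - last_updated).days
    return {
        "age_days": age_days,
        "is_fresh": age_days <= window_days,
        "figure_in_lead": figure_in_lead,
    }


sample = "AI-referred visitors convert 4.4x better. " + "Supporting detail follows here. " * 10
report = audit_page(sample, last_updated=date(2026, 1, 10), today=date(2026, 2, 1))
print(report)
```

A digit check is a blunt instrument. It won’t tell you whether the statistic is attributed or accurate, only whether one appears where models tend to look.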

The Content Formats That Earn LLM Citations

LLMs don’t read your entire 3,000-word article. They use retrieval-augmented generation (RAG) to pull specific passages that contain a direct answer to a user’s prompt. Content that’s structured in atomic, extractable fragments wins.

Comparative listicles are the clear front-runner. Articles that rank or compare multiple tools or services account for 32.5% of all AI citations, the highest-performing format identified in 2026. The reason is mechanical: listicles provide the entity-relationship mapping that LLMs need to synthesize comparisons.

Other high-performing structures include FAQ sections with schema markup (3.2x more likely to appear in AI Overviews), question-based H2/H3 headings (3x higher citation rate), and original data tables (4.1x more citations than text-only equivalents). The first paragraph of each section matters disproportionately: providing a direct answer in the first 40-60 words increases citation probability by 67%.
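For the FAQ schema piece, here’s a minimal sketch of generating schema.org `FAQPage` markup as JSON-LD. The `build_faq_jsonld` helper is a name made up for this example; the `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from the schema.org vocabulary. The sample question is taken from this article’s own FAQ.

```python
import json


def build_faq_jsonld(faqs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


faqs = [
    (
        "What is an LLM citation?",
        "An LLM citation is a reference within an AI-generated answer that "
        "attributes a fact, recommendation, or data point to a specific source URL.",
    ),
]

markup = build_faq_jsonld(faqs)

# Wrap the JSON-LD in a script tag for embedding in the page's HTML.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Keep the marked-up questions and answers identical to the visible FAQ text on the page, so the structured data and the content a model retrieves say the same thing.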

Specificity is a trust signal. An LLM is far more likely to cite “Companies see 4.4x conversion rates from AI search (Semrush, 2026)” than “Companies see significant improvements in search performance.” Vague claims get filtered out. Specific, attributed claims get quoted.

Earned media distribution also plays a larger role than most brands expect, with a median lift of 239% in AI citations. Journalistic and earned media sources now account for roughly 25% of all citations generated by large language models.

E-E-A-T has shifted too. Content with clear author bylines and real credentials achieves 2.3x more citations than anonymous corporate content. If an LLM can’t find a real person with real experience attached to the advice, it’s less likely to cite it.

How to Track LLM Citations When There’s No Search Console for AI

There’s no native “LLM Citation Report” in Google Search Console. Traditional analytics can tell you about clicks and rankings, but AI visibility is often a zero-click experience where the user gets the answer and the brand awareness without ever visiting your site.

That tracking gap is exactly what Topify was built to fill. The platform monitors brand visibility across ChatGPT, Gemini, Perplexity, and other major AI engines through a seven-metric framework: Visibility Score, Sentiment Score, Position Rank, Source Analysis, Mention Volume, Intent Alignment, and CVR (Conversion Visibility Rate).

The practical value is in the cross-platform view. A brand might discover it has an 80% Visibility Score on Perplexity (driven by Reddit consensus) but only a 12% Visibility Score on ChatGPT (due to weak presence in Bing-indexed publications). That kind of gap is invisible without platform-specific tracking. Topify’s Source Analysis feature lets you reverse-engineer which domains and URLs AI platforms are actually citing, so you can see whether your content or your competitor’s content is driving the model’s recommendations.
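To show what a per-platform view looks like in principle, here’s a simplified sketch. It is not Topify’s method or API. It assumes you’ve already collected AI answers to a set of prompts yourself, and it scores visibility as the share of answers on each platform that mention the brand. The brand “Acme”, the sample answers, and the function name are all hypothetical.

```python
from collections import defaultdict


def visibility_by_platform(responses, brand):
    """Share of recorded answers per platform that mention the brand.

    `responses` is a list of (platform, answer_text) pairs collected by hand
    or by your own tooling. Matching is a plain case-insensitive substring test.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, answer in responses:
        totals[platform] += 1
        if brand.lower() in answer.lower():
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical recorded answers for one category prompt set.
responses = [
    ("perplexity", "Reddit users often recommend Acme for this."),
    ("perplexity", "Acme and two rivals come up most often."),
    ("chatgpt", "Popular options include Rival One and Rival Two."),
    ("chatgpt", "Acme is one option worth a look."),
]

scores = visibility_by_platform(responses, "Acme")
for platform, score in sorted(scores.items()):
    print(f"{platform}: {score:.0%}")
```

A substring match will miss misspellings and can’t tell a recommendation from a passing mention, which is part of why dedicated tools track sentiment and position separately.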

For teams already using Ahrefs or Semrush, this isn’t a replacement. It’s a different layer. Traditional tools cover the infrastructure of rankings and backlinks. AI visibility tools cover the citation layer that determines whether your brand gets recommended in the first place.

Backlinks Still Matter. Just Not the Way You Think.

The “backlinks are dead” narrative is wrong. What’s changed is their role.

Since 76% of AI Overview citations come from pages that already rank in Google’s organic top 10, strong traditional SEO is still the primary filter for that surface. A page that can’t rank on Google is unlikely to get cited in an AI Overview. Backlinks are the gatekeepers to that citation pool.

But the reverse is also true. AI systems cite content that Google barely registers. Pages that never ranked for their target keyword but provide the best structured answer to a specific question can earn consistent LLM citations. The relationship isn’t either/or. It’s layered.

In a 2026 search strategy, backlinks function as infrastructure: they build the crawl priority, index authority, and baseline trust that get your content into the retrieval pool. LLM citations function as the growth layer: they determine whether the AI actually surfaces your brand when someone asks a question that matters. The two signals compound. High-quality backlinks increase the odds of retrieval. Structured, extractable content increases the odds of citation.

The most effective approach is hybrid. Create content that uses traditional SEO fundamentals (keywords, internal linking, authority building) to secure a position in Google’s top 10, then layer in atomic answer blocks, data tables, and FAQ schema to secure a citation in the AI summary. One piece of content, two discovery surfaces.

Conclusion

The shift from backlinks to LLM citations isn’t about one replacing the other. It’s about a new layer of authority that most brands aren’t tracking yet. Backlinks still build the foundation. But LLM citations decide whether AI recommends your brand or your competitor’s.

The practical path forward has three steps. First, audit your current AI visibility across platforms using a tool like Topify to see where you’re cited and where you’re missing. Second, restructure your highest-value content into extractable formats: comparison tables, FAQ sections, and atomic answer blocks in the first 40-60 words of each section. Third, build off-page consensus through earned media and brand mentions on the platforms that AI models actually pull from.

The brands that figure this out early won’t just rank. They’ll be the ones AI recommends.

FAQ

What is an LLM citation?

An LLM citation is a reference within an AI-generated answer that attributes a fact, recommendation, or data point to a specific source URL. When ChatGPT, Perplexity, or Google AI Overviews cite your content, they’re using it as supporting evidence for their response.

Do backlinks still help with AI search visibility?

Yes. Backlinks remain the primary foundation for index authority. Since 76% of AI Overview citations come from pages ranking in Google’s organic top 10, backlinks are the entry ticket to being considered for an LLM citation. They’re necessary, but no longer sufficient on their own.

How can I check if my brand is being cited by ChatGPT?

There’s no native dashboard for AI search citations. You’ll need a third-party AI visibility tool like Topify that runs simulated prompts across multiple LLMs to track where your brand is mentioned, cited, and recommended compared to competitors.

What content formats get the most LLM citations?

Comparative listicles (“Best X for Y”) account for 32.5% of all AI citations, making them the highest-performing format. FAQ sections with schema markup, structured data tables, and question-based headings also perform well. The first 40-60 words of each section carry disproportionate weight.