Your team spent six months building content authority. Domain rating is climbing, organic traffic is strong, and your top 50 keywords all sit in the first three positions on Google. Then a prospective buyer asks ChatGPT, “Which platform offers the most reliable solution for [your category]?” The response names three competitors, links to a niche blog and a Reddit thread, and doesn’t mention your brand once.

That disconnect has a name: the Visibility Gap. It’s the growing space between where your brand ranks on Google and whether AI systems mention you at all. Research shows only 11% of domains get cited by both ChatGPT and Perplexity for the same queries. Traditional search dominance no longer guarantees presence in the discovery layer that’s reshaping how buyers find brands.

The signal that determines whether you show up in that layer is called an LLM citation.

What Is an LLM Citation, and How Is It Different from a Backlink?

An LLM citation is any instance where a large language model references, mentions, or links to a brand while generating a response. It shows up in two forms. Explicit citations include a direct URL or hover-over source link, common on platforms like Perplexity and Google AI Overviews. Implicit mentions happen when the AI recommends or describes a brand in its prose without linking to the source.

That distinction matters because the signals driving each are fundamentally different from traditional SEO.

Backlinks are static, page-to-page hyperlinks designed to pass link equity. An LLM citation is a declaration of entity relevance within a specific semantic context. While traditional SEO has long prioritized backlink volume and domain authority, those factors show a weak to neutral correlation with whether an LLM will actually cite a brand. The strongest predictor of LLM citation frequency is brand search volume, and even that relationship is modest, with a correlation coefficient of 0.334. In practice, LLMs tend to favor brands that already have high mental availability among human users, mirroring real-world authority rather than technical link-building strength.

| Feature | Traditional Backlink | LLM Citation |
|---|---|---|
| Core intent | Navigation and SEO authority | Attribution and factual support |
| Stability | Relatively permanent | Highly volatile, changes per inference |
| Discovery path | Link graph crawling | Parametric knowledge + RAG |
| User impact | Direct referral traffic | Brand perception and shortlist inclusion |
| Evaluation metric | Domain Rating / Page Authority | Entity salience and source reliability |

An LLM citation represents a model’s “confidence” that your brand is a necessary component of a correct answer. That’s a fundamentally more powerful signal than a hyperlink.

The Economics of Being Cited: Why LLM Citations Drive Buyer Decisions

The shift toward AI-powered discovery isn’t speculative. Estimates suggest over $750 billion in U.S. revenue will funnel through AI-powered search environments by 2028. Roughly one-third of consumers now start their research directly within AI tools rather than traditional search engines, and in B2B, that figure is even steeper: 51% of software buyers report using AI chatbots more frequently than Google for initial vendor research.

The economic value of an LLM citation is significantly higher than a traditional search impression. In a Google search, a brand must win a click to start influencing the buyer. In an AI response, brand exposure happens directly within the answer. The buyer is pre-educated before they ever visit your website.

When users do click through from an AI citation, the traffic converts at 14.2%, roughly five times higher than traditional organic traffic. The user arrives pre-qualified by the AI’s synthesis.

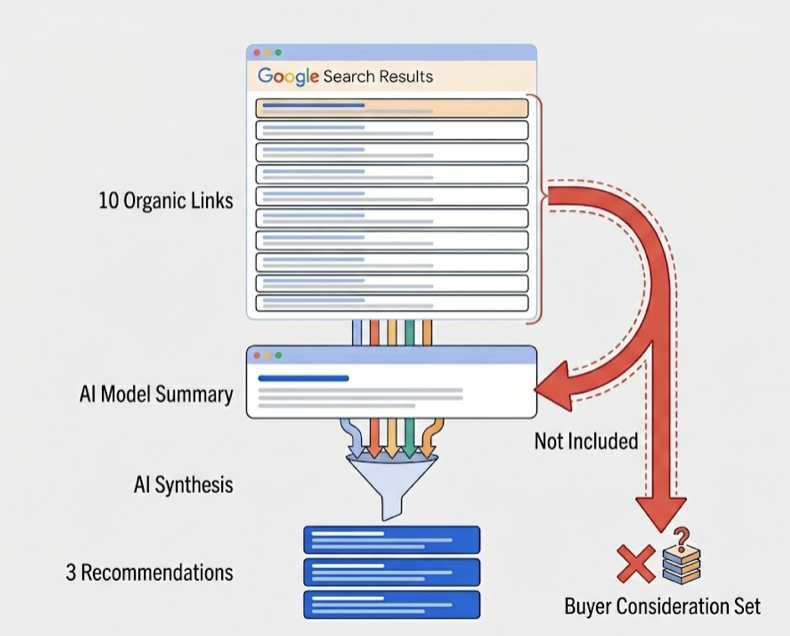

The most significant risk is compression of choice. Google typically displays ten organic links per page. An AI model often synthesizes a shortlist of just three recommendations. Being excluded from that response is equivalent to being excluded from the buyer’s entire consideration set. And the effect compounds: every time a brand is cited as a top choice, that response contributes to future training data and reinforces the model’s association between the brand and the category.

The traditional research phase, which used to involve 12 to 15 separate search sessions over several weeks, is being compressed into a single interaction with an AI agent.

How LLMs Decide Which Brands to Cite

When a user asks a broad question, the AI doesn’t process it as a single query. It performs “query fan-out,” decomposing the prompt into 8 to 12 sub-queries covering pricing, reviews, technical specifications, and more. A brand that only provides high-level marketing copy but lacks presence in those sub-categories gets skipped.

Five factors determine which brands pass the selection filter.

Entity authority. The model evaluates how clearly a brand is recognized as an authority in a specific niche. Wikipedia alone provides nearly 48% of all citations for ChatGPT. If your brand doesn’t exist in ground-truth reference sources, you’re starting at a disadvantage.

Structural extractability. Content that uses clear H2/H3 hierarchies and leads with a direct answer in the first 30% of the text is 44.2% more likely to be selected as a source. LLMs can’t cite what they can’t easily parse.

Cross-platform consensus. A single source claiming a brand is “the best” isn’t enough. Most AI models require verification from five or more independent, high-authority sources before triggering a citation. 85% of citations come from third-party sites like Reddit, G2, or industry publications.

Content freshness. More than 70% of pages cited by AI models were updated within the last year. Pages that fall out of a quarterly update cycle are three times as likely to lose their citations.

Entity consistency. If your brand name varies across LinkedIn, your website, and directory listings, the AI’s entity resolution algorithms may fail to connect the signals. One airline brand unified its naming across all platforms and saw a 35% increase in citation rates within three months.
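A quick way to spot the naming drift described under entity consistency is to group your listings by the exact brand string each one displays. The sketch below is illustrative only: the `listings` data, the brand names, and the `find_name_variants` helper are all hypothetical, and it says nothing about how any AI platform actually resolves entities.

```python
def find_name_variants(listings):
    """Group platforms by the exact brand string they display.

    `listings` maps a platform label to the brand name shown there.
    More than one key in the result means the naming is inconsistent.
    """
    variants = {}
    for platform, name in listings.items():
        variants.setdefault(name.strip(), []).append(platform)
    return variants


# Hypothetical audit data: how the brand name appears on each property.
listings = {
    "website": "Acme Air",
    "linkedin": "Acme Airlines Inc.",
    "directory": "Acme Air ",
}

variants = find_name_variants(listings)
if len(variants) > 1:
    print("Inconsistent naming found:")
    for name, platforms in variants.items():
        print(f"  {name!r} used on: {', '.join(platforms)}")
```

Any platform that ends up in its own group is a candidate for renaming so that all properties present one canonical brand string.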

Each AI platform also has its own source preferences. ChatGPT leans on Wikipedia (47.9% of citations). Perplexity favors Reddit (46.7%). Google AI Overviews mix Reddit (21%) with YouTube (18.8%). That platform divergence is what makes the 11% overlap statistic so important: optimizing for one platform doesn’t guarantee visibility on another.

Why Your Google Rankings Don’t Protect You from the Visibility Gap

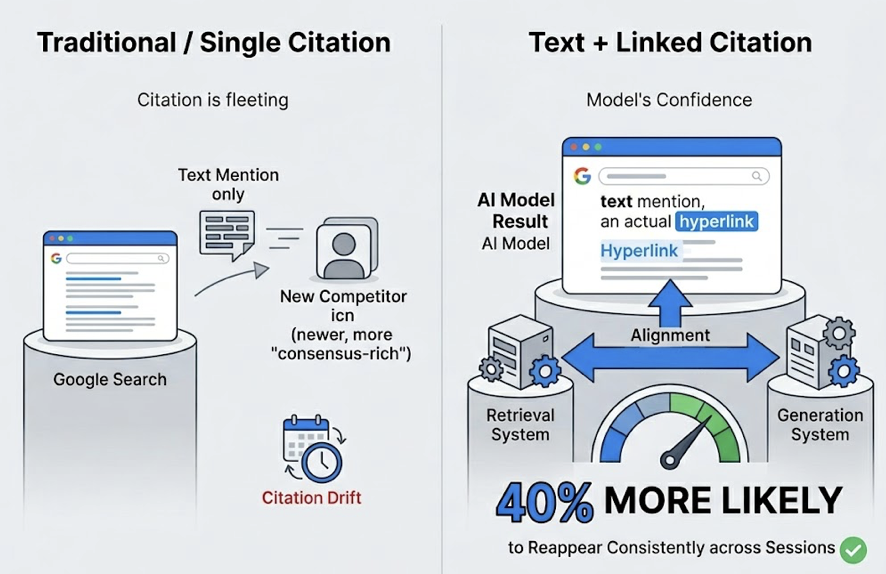

A Google ranking is relatively stable over a week. An AI’s citation profile can fluctuate wildly between identical queries because of the model’s inference randomness.

Research into brand persistence found that only 30% of brands maintain visibility from one answer to the next for the same prompt. Even more concerning, just 20% remain present across five consecutive queries.

That instability makes one-off manual checks misleading. A marketing team might check ChatGPT once, see their brand cited, and assume success. In reality, the brand could be missing from most of the answers other users receive for the same question.
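To see why a single check misleads, it helps to measure how often a brand appears when the same prompt is asked repeatedly. The sketch below assumes you have already collected the answers as plain strings; the `sample_responses` text, the brand names, and the `mention_rate` helper are hypothetical, and the matching is a simple whole-word search rather than real entity recognition.

```python
import re


def mention_rate(responses, brand):
    """Return the fraction of responses that mention `brand`.

    Matching is case-insensitive and whole-word, which is a rough
    stand-in for proper entity detection.
    """
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


# Hypothetical answers gathered by asking one prompt five times.
sample_responses = [
    "Top picks: Acme, Globex, and Initech.",
    "Consider Globex or Initech for mid-market teams.",
    "Globex, Initech, and Umbrella are popular choices.",
    "Many reviewers recommend Initech and Globex.",
    "Acme and Globex both offer strong automation features.",
]

rate = mention_rate(sample_responses, "Acme")
print(f"Acme appeared in {rate:.0%} of {len(sample_responses)} runs")
```

In this made-up sample, a team that happened to check only the first run would see the brand cited, while the repeated sample shows it missing from most answers.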

Being cited once doesn’t protect against “citation drift” either. When models retrain or update their indices, a long-standing citation can be replaced by a newer or more consensus-rich competitor. Brands that earn both a text mention and a linked citation are 40% more likely to reappear consistently across sessions, suggesting the model’s confidence is strongest when its retrieval system and generation system align.

This volatility makes continuous, automated tracking a requirement, not a nice-to-have.

How to Track and Improve Your LLM Citation Performance

Tracking LLM citations requires a different framework than keyword tracking. The process starts with building a prompt library rather than a keyword list.

Build a prompt universe. Move beyond single keywords like “marketing software” and create 20 to 50 conversational prompts that reflect actual buyer intent. “What are the best marketing automation tools for a mid-market ecommerce brand looking to reduce churn?” is the kind of query AI users actually type. Segment by persona, region, and intent stage.
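One way to assemble a segmented prompt library is to cross personas, regions, and intent stages against a small set of templates. The sketch below is a minimal illustration: the personas, regions, template wording, and the `build_prompt_library` helper are all assumptions made for the example, not a prescribed taxonomy.

```python
from itertools import product

# Hypothetical segments; replace with your own buyer research.
personas = ["a mid-market ecommerce brand", "an early-stage SaaS startup"]
regions = ["in the US", "in the EU"]
intent_templates = {
    "awareness": "What should {persona} {region} know about marketing automation?",
    "consideration": "What are the best marketing automation tools for {persona} {region}?",
    "decision": "Which marketing automation platform should {persona} {region} choose to reduce churn?",
}


def build_prompt_library(personas, regions, intent_templates):
    """Return one tagged prompt per persona/region/intent-stage combination."""
    library = []
    for persona, region, (stage, template) in product(
        personas, regions, intent_templates.items()
    ):
        library.append(
            {
                "persona": persona,
                "region": region,
                "intent_stage": stage,
                "prompt": template.format(persona=persona, region=region),
            }
        )
    return library


prompt_library = build_prompt_library(personas, regions, intent_templates)
for entry in prompt_library[:3]:
    print(entry["intent_stage"], "->", entry["prompt"])
```

Two personas, two regions, and three intent stages yield 12 prompts here; adding a third persona or region brings the library into the 20 to 50 range, and the tags make it possible to report visibility per segment later.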

Track across platforms. Because of the fragmentation between AI engines, manual tracking doesn’t scale. Topify automates three core functions that map directly to LLM citation performance. Source Analysis identifies which specific third-party domains are driving competitor citations, so you can reverse-engineer where to earn more mentions. Visibility Tracking monitors your brand’s presence across ChatGPT, Perplexity, Gemini, and Claude on a daily or weekly cadence. And Sentiment Analysis evaluates the tone AI uses to describe you, because high visibility with negative framing is often worse than no visibility at all.

Measure AI Share of Voice. The ultimate metric for the AI era is Share of Voice: your brand’s mentions divided by total mentions across a category-relevant set of prompts. Topify weights this score by sentiment and recommendation position to produce a blended AI-SoV that serves as a leading indicator of future market share.

| AI-SoV Score | Brand Status | Recommended Strategy |
|---|---|---|
| > 50% | Dominant entity | Defend position, monitor sentiment velocity |
| 20%-49% | Contender | Differentiate with original data and frameworks |
| 5%-19% | Niche player | Expand semantic relevance with broader guides |
| < 5% | Invisible | Launch entity salience campaign across 4+ platforms |
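The basic Share of Voice ratio and the bands in the table above can be sketched in a few lines. This is a simplified, unweighted version: it does not reproduce Topify’s sentiment or position weighting, the mention counts and brand names are invented, and the handling of scores that fall between the table’s bands (for example exactly 50%) is an assumption of this example.

```python
def share_of_voice(mention_counts, brand):
    """Unweighted SoV: the brand's mentions divided by all brand mentions."""
    total = sum(mention_counts.values())
    if total == 0:
        return 0.0
    return mention_counts.get(brand, 0) / total


def brand_status(sov):
    """Map an SoV fraction (0.0 to 1.0) to the status bands in the table.

    Boundary handling is an assumption: a score must exceed 50% to count
    as dominant, and values between bands fall into the lower band.
    """
    if sov > 0.50:
        return "Dominant entity"
    if sov >= 0.20:
        return "Contender"
    if sov >= 0.05:
        return "Niche player"
    return "Invisible"


# Hypothetical mention counts across a category-relevant prompt set.
mention_counts = {"Globex": 33, "Initech": 19, "Acme": 6, "Umbrella": 2}

for brand in mention_counts:
    sov = share_of_voice(mention_counts, brand)
    print(f"{brand}: {sov:.1%} -> {brand_status(sov)}")
```

Tracking this number per prompt segment, rather than as one blended figure, makes it easier to see which personas or intent stages are pulling the score down.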

5 Quick Wins to Boost Your Brand’s LLM Citation Rate

Front-load your answers. LLMs exhibit “lost in the middle” behavior, where information buried in a document gets ignored during synthesis. Writing each section with a direct, self-contained answer chunk in the first 150 to 300 words makes content 2.8x more likely to be extracted and cited.

Trigger the consensus mechanism. AI systems rarely cite a brand based solely on its own website. Brands mentioned across four or more trusted platforms (Reddit, YouTube, G2, industry press) are 2.8x more likely to appear in ChatGPT responses.

Optimize for AI crawlers. Fast-loading pages with a First Contentful Paint (FCP) under 0.4 seconds are three times more likely to be cited. Implementing FAQ, HowTo, and Organization schema acts as a map for AI crawlers, helping them resolve entities and understand relationships.
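As an illustration of the Organization schema mentioned above, the sketch below builds a minimal JSON-LD block using the schema.org `Organization` type with its `name`, `url`, and `sameAs` properties. The brand name, URLs, and the `organization_jsonld` helper are placeholders, and a real deployment would likely include more properties than this.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a minimal schema.org Organization object as a dict.

    `same_as` lists the brand's official profiles on other platforms,
    which ties those profiles to the same entity as the website.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    same_as=[
        "https://www.linkedin.com/company/example-brand",
        "https://www.youtube.com/@examplebrand",
    ],
)

# Wrap the JSON in the script tag that carries JSON-LD in an HTML page.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Using the same `name` string here as on every external profile also supports the entity consistency factor described earlier.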

Lead with data. Content that includes statistical data provides a +22% improvement in citation likelihood. Direct quotes from subject matter experts add a +37% boost on Perplexity specifically. LLMs prioritize primary evidence over marketing fluff.

Refresh on a quarterly cycle. Pages that haven’t been updated in the last 90 days are three times more likely to lose their citations to newer content. A systematic content refresh cycle, with visible “last updated” dates, is one of the simplest ways to maintain a strong AI Share of Voice.

Conclusion

The shift from a ranking economy to a citation economy isn’t coming. It’s here. LLM citations aren’t just links. They’re a verification of your brand’s place in the knowledge graph of the machine. The brands moving now to build entity authority, structural citability, and cross-platform consensus are building a compounding advantage that gets harder to overcome with each model update.

The practical path is clear: audit where you stand today, benchmark against the competitors AI is already recommending, and optimize the assets that drive citation. Start with a baseline AI visibility audit through Topify, and you’ll know within a week exactly where your brand stands in AI search.

FAQ

What’s the difference between an LLM citation and a traditional backlink?

A backlink is a static hyperlink between two web pages, designed to pass link equity for SEO. An LLM citation is a dynamic reference generated in real time when an AI model determines your brand is relevant to a user’s query. Backlinks are permanent until removed. LLM citations can change with every inference.

How often should I track my brand’s LLM citations?

Weekly tracking is the minimum recommended cadence. Because only 30% of brands maintain visibility between consecutive answers, single checks provide a misleading picture. Brands in fast-moving sectors like SaaS or finance should track daily to catch citation drift early.

Can I improve my LLM citation rate without changing my website content?

Yes. Building brand presence across four or more third-party platforms (Reddit, G2, YouTube, industry directories) is one of the highest-impact levers. Unifying your brand name across all digital properties and earning PR coverage on high-authority outlets also directly improve citation rates without touching your own site.

Which AI platforms should I prioritize for citation tracking?

At minimum, track ChatGPT, Perplexity, and Google AI Overviews. Each uses fundamentally different source preferences: ChatGPT relies on Wikipedia and parametric knowledge, Perplexity favors Reddit and real-time retrieval, and Google AI Overviews mix organic results with forum and video content. Only 11% of domains are cited across both ChatGPT and Perplexity, so cross-platform monitoring is non-negotiable.