Your domain authority is 70. Your keyword rankings are climbing. Your content team shipped 40 articles last quarter, and organic traffic looks healthy. But when someone asks ChatGPT, “What’s the best tool for [your category]?” your brand isn’t in the answer. Worse, you don’t even know it’s missing, because nothing in your SEO dashboard tracks what AI models choose to cite.

That disconnect is growing. Fewer than 10% of the sources cited in AI-generated answers even rank in the top 10 on Google for those same queries. And with projections showing web traffic from traditional search engines dropping by as much as 25% by 2026, the gap between what your dashboard tells you and what’s actually happening in AI search is becoming a strategic liability.

Your SEO Dashboard Can’t Tell You Who ChatGPT Is Citing

Traditional SEO dashboards were built for a different era. They track rankings in a list of ten blue links. They measure clicks, impressions, and backlink authority. None of that tells you whether an AI model is citing your content when it synthesizes an answer.

The core difference: SEO dashboards track popularity. LLMs track consensus.

An AI model doesn’t rank pages in a list. It selects sources that provide extractable, factual data points it can weave into a synthesized response. Those sources are often Reddit threads, niche comparison pages, or independent reviews, not the highest-ranking brand sites. In B2B SaaS categories, Reddit has become a dominating force for citations in both ChatGPT and Perplexity, while brand-owned content often lags behind.

That’s a problem traditional tools can’t diagnose. Without LLM citation tracking software, you’re optimizing for a scoreboard that no longer reflects how buyers discover brands. About 82% of users now report that AI-powered search results are more helpful than traditional SERPs, and roughly 60% of modern searches end without a single click. The audience is shifting. The question is whether your measurement infrastructure is shifting with it.

| Discovery Metric | Traditional SEO | Generative AI |
|---|---|---|
| Primary Goal | Top 10 SERP positioning | Inclusion in synthesized answer |
| User Intent | Keyword-based discovery | Prompt-based conversational synthesis |
| Attribution | Direct clicks and impressions | Citation share and brand recommendation |
| Logic Basis | Backlink equity and popularity | Semantic density and entity reliability |
| Result Type | Consistent list of links | Personalized, synthesized response |

What LLM Citation Tracking Software Actually Measures

LLM citation tracking isn’t a rebranding of brand monitoring. It’s a technical analysis of how AI models consume, synthesize, and attribute information. Four core metrics define the discipline.

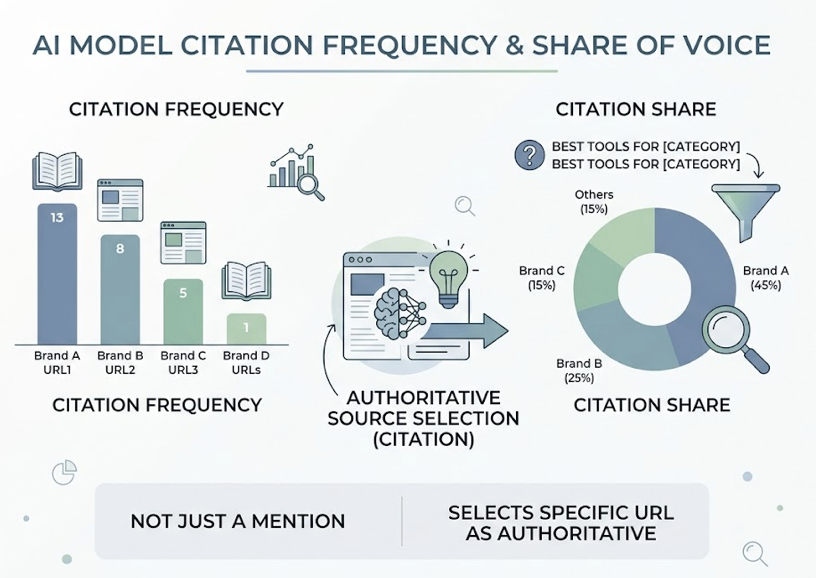

Citation frequency and share of voice. The most fundamental metric is how often an AI model cites your content across a standardized set of prompts. This isn’t the same as a “mention,” where the model simply names your brand in passing. A citation means the model selected a specific URL as an authoritative source. Professional LLM citation tracking tools measure this as “citation share,” comparing your frequency against competitors for high-intent prompts like “best tools for [category].”
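As a rough illustration of how citation share could be computed, the sketch below counts the share of prompts in which each tracked domain is cited at least once. The function name, the input shape (prompt mapped to cited URLs), and the sample domains are assumptions made for this example, not the method of any particular tool.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(responses, tracked_domains):
    """Return, per tracked domain, the fraction of prompts that cited it.

    `responses` maps a prompt to the list of URLs the model cited for it.
    A domain counts at most once per prompt.
    """
    counts = Counter()
    for cited_urls in responses.values():
        # Reduce each URL to a bare hostname so "www." variants match.
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.")
            for url in cited_urls
        }
        for domain in tracked_domains:
            if domain in domains:
                counts[domain] += 1
    total = len(responses)
    return {d: (counts[d] / total if total else 0.0) for d in tracked_domains}


# Hypothetical sample data: two prompts and made-up domains.
sample = {
    "best tools for crm": [
        "https://www.example-brand.com/crm",
        "https://rival.example/compare",
    ],
    "top crm for startups": ["https://rival.example/startups"],
}
shares = citation_share(sample, ["example-brand.com", "rival.example"])
print(shares)
```

In this toy data set the rival is cited for both prompts and the brand for one, so the gap between the two shares is the number a competitive benchmark would surface.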

Source domain analysis. AI models pull from a diverse array of sources: official websites, news outlets, user-generated content. Source domain tracking identifies exactly which domains are feeding the AI’s logic. This matters because AI systems often rely on third-party consensus rather than brand-owned content. An LLM citation tracking platform that provides URL-level provenance lets you see not just that “Reddit” was cited, but which thread and which comment triggered it.
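One way to picture the difference between domain-level and URL-level provenance is the minimal sketch below, which groups cited URLs under their hostname while keeping every full URL. The helper name and the sample URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def group_by_domain(cited_urls):
    """Group full cited URLs under their hostname, keeping URL-level detail."""
    grouped = defaultdict(list)
    for url in cited_urls:
        host = urlparse(url).netloc.lower().removeprefix("www.")
        grouped[host].append(url)
    return dict(grouped)


# Hypothetical citations collected from one AI answer.
urls = [
    "https://www.reddit.com/r/example/comments/abc123/thread_title/",
    "https://www.reddit.com/r/example/comments/def456/another_thread/",
    "https://reviews.example/best-crm",
]
provenance = group_by_domain(urls)
for domain, pages in provenance.items():
    print(domain, len(pages), pages)
```

The domain-level view would only say that reddit.com appeared; the grouped output keeps the two distinct threads that were cited.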

Sentiment context. A citation can work against you. If an AI cites your brand as a “risky option” or discusses a past product failure, the visibility is actively harmful. LLM citation tracking analytics quantify the sentiment surrounding each mention, surfacing what some practitioners call “zombie narratives,” outdated information that persists in the model’s training data and keeps resurfacing in responses.

Cross-platform behavior. No two AI models cite the same way. A study of over five million responses found that Gemini and OpenAI’s models share a 42% domain overlap, suggesting some convergence in training data. But Perplexity’s citation density runs two to three times higher than parametric models because its architecture mandates source attribution for nearly every claim. A strategy that wins in Perplexity may leave your brand invisible in ChatGPT. That variability makes a multi-engine LLM citation tracking system non-negotiable.
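The study's exact definition of "domain overlap" is not given here. As one plausible reading, the sketch below uses Jaccard similarity (shared domains divided by all distinct domains) to compare two engines. The metric choice and the sample domains are assumptions for illustration only.

```python
def domain_overlap(domains_a, domains_b):
    """Jaccard similarity between the sets of domains two engines cite."""
    a, b = set(domains_a), set(domains_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical cited-domain sets for two engines.
engine_a = {"reddit.com", "g2.example", "vendor.example"}
engine_b = {"reddit.com", "news.example", "vendor.example", "blog.example"}
overlap = domain_overlap(engine_a, engine_b)  # 2 shared out of 5 distinct
print(f"{overlap:.0%}")
```

A low overlap between two engines for the same prompt set is a signal that a single-engine strategy will not carry over.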

5 Capabilities That Separate Professional LLM Citation Tracking Platforms from Surface-Level Wrappers

The market for AI visibility tools is maturing fast. But not every tool that calls itself an LLM citation tracking solution actually measures what matters. Five capabilities separate professional-grade platforms from surface-level wrappers.

Multi-engine coverage. A platform that only tracks ChatGPT sees a fraction of the picture. Users move between Perplexity for research, Claude for technical tasks, and Gemini for integrated Google searches. Enterprise LLM citation tracking software should monitor at least five platforms simultaneously, and ideally closer to ten, including emerging engines like DeepSeek and Grok.

URL-level citation provenance. Domain-level awareness isn’t enough. Knowing “Reddit” was cited doesn’t help. Knowing which thread and which comment triggered the citation does. That granularity turns raw data into a direct roadmap for content optimization.

Competitor citation benchmarking. In AI search, visibility tends to be zero-sum. If a competitor is being recommended, your brand is being excluded. Side-by-side citation share analysis for the exact prompts your buyers use is the only way to spot where you’re losing.

Longitudinal trend and decay monitoring. AI models aren’t static. Citation preferences evolve as new data is indexed and model weights update. Research shows that citations from high-velocity sources like Reddit or LinkedIn have a median decay window of just 47 days. Without historical tracking, you can’t tell a temporary fluctuation from a meaningful shift.
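To make the idea of a decay window concrete, here is a minimal sketch that measures how long each cited URL stayed visible (first sighting to last sighting) and takes the median. The data shape, the function name, and the dates are hypothetical, and this is only one of several reasonable ways to define citation lifetime.

```python
from datetime import date
from statistics import median


def median_citation_lifetime(observations):
    """Median days between the first and last date each URL was seen cited.

    `observations` maps a cited URL to the dates on which it appeared.
    """
    lifetimes = [
        (max(dates) - min(dates)).days
        for dates in observations.values()
        if dates
    ]
    return median(lifetimes) if lifetimes else None


# Hypothetical tracking history for three forum threads.
history = {
    "https://forum.example/thread/1": [date(2025, 1, 1), date(2025, 1, 20), date(2025, 2, 10)],
    "https://forum.example/thread/2": [date(2025, 1, 5), date(2025, 3, 1)],
    "https://forum.example/thread/3": [date(2025, 2, 1), date(2025, 2, 15)],
}
window = median_citation_lifetime(history)
print(window)
```

Computing this requires stored history, which is the practical reason a point-in-time snapshot cannot distinguish a fluctuation from a trend.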

Actionable workflow integration. Data without a path to action is noise. The strongest LLM citation tracking platforms connect insights to content execution: identifying refresh opportunities, suggesting structural changes like FAQ schema or HTML data tables, and flagging the third-party domains the AI currently favors.
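For readers unfamiliar with FAQ schema, the sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs. The helper name and the sample question are illustrative assumptions. The output would normally be embedded in a page inside a `<script type="application/ld+json">` tag.

```python
import json


def faq_jsonld(pairs):
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


# Hypothetical FAQ entry.
markup = faq_jsonld(
    [("What is LLM citation tracking?", "It measures when AI models cite your URLs.")]
)
print(markup)
```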

| Platform Feature | Strategic Value | Marketing Impact |
|---|---|---|
| Multi-engine support | Prevents blind spots across platforms | Unified visibility across ChatGPT, Gemini, Perplexity |
| URL-level tracking | Identifies specific source of AI’s logic | Direct roadmap for reverse-engineering citations |
| Sentiment analysis | Detects zombie narratives or brand risk | Proactive reputation management in AI responses |
| Competitor benchmarking | Reveals relative share of voice | Competitive gap analysis for high-intent prompts |
| Historical auditing | Tracks citation durability and decay | Long-term strategy adjustment based on model updates |

How Topify Turns LLM Citation Data into a Repeatable Workflow

Most LLM citation tracking dashboards stop at reporting. Topify is built around what it calls the “Actionability Gap,” the space between seeing a problem and fixing it.

The core workflow starts with reverse-engineering citations. When a marketing manager at a SaaS company discovers their brand is absent from a “best CRM” list on Perplexity, Topify doesn’t just report the omission. It identifies the specific competitor pages and third-party reviews that Perplexity did cite, then analyzes the structure of those pages. In practice, AI models often prefer concise HTML comparison tables and “answer-first” paragraph structures over marketing copy. That structural insight gives teams a concrete playbook for what to change.

Topify’s seven-metric framework, covering visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate), provides a more complete picture than citation counts alone. You can track not just whether you’re being cited, but how the AI perceives your brand, where you rank relative to competitors, and what the estimated conversion impact looks like.

The platform covers ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI engines, addressing the cross-platform variability problem head-on. For agencies managing multiple clients, Topify supports multi-project setups with dedicated dashboards per brand.

Then there’s the execution layer. Once a citation gap is identified, Topify’s one-click agent can trigger an automated workflow to close it, generating and deploying bot-optimized content variations that AI crawlers like GPTBot or PerplexityBot can more easily parse and prioritize.

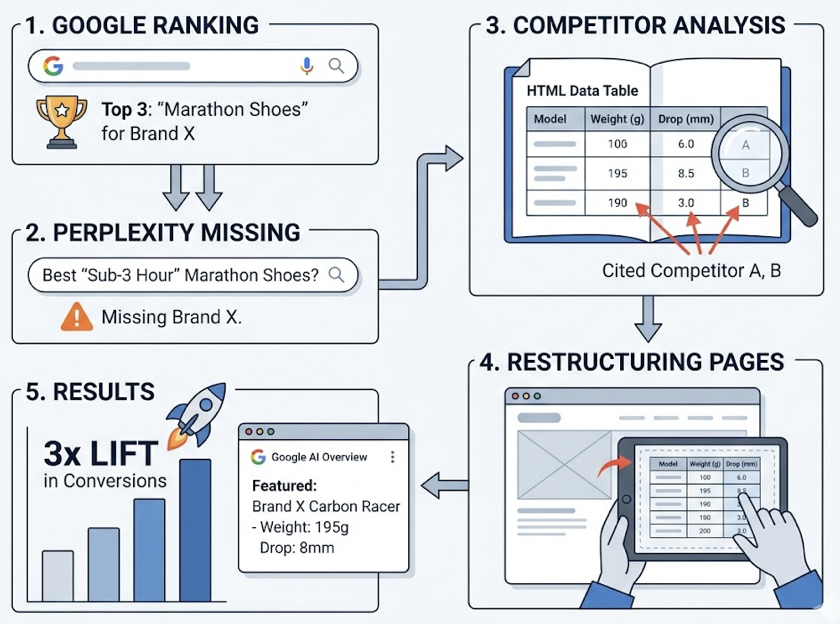

Here’s a scenario that illustrates the full loop. An e-commerce brand specializing in marathon footwear ranks in the top three on Google for “marathon shoes.” But Topify reveals they’re entirely missing from Perplexity’s recommendations for “sub-3 hour marathon shoes.” The analysis shows Perplexity is citing competitors who provide specific weight specs in grams and drop measurements in HTML tables, data the brand’s current pages bury in marketing copy. Within three weeks of restructuring their product pages based on Topify’s reverse-engineering insights, the brand appeared as a featured citation in Google AI Overviews, resulting in a 3x lift in conversion rates.

Pricing starts at $99/month for the Basic plan (100 prompts, ChatGPT/Perplexity/AI Overviews tracking), with Pro at $199/month for expanded coverage. Teams ready to get started can run a baseline audit in minutes.

GEO vs SEO: Why LLM Citation Tracking Fills a Gap Traditional Tools Can’t

If you’re wondering how GEO differs from SEO in AI search optimization, it comes down to what you’re optimizing for and what you’re measuring.

Traditional SEO is a game of link popularity. It assumes that enough high-quality backlinks make you an authority, and that ranking on page one means you’ll be found. GEO (Generative Engine Optimization) operates on a different logic: semantic density and entity reliability. An AI model may ignore a high-DA site in favor of a lower-authority page that provides a clearer, more factual answer it can synthesize into prose.

LLM citation tracking is the only toolset that can measure this gap. Data shows that while 92% of AI citations come from sites in the top 10 search results, the specific source selected for the AI’s summary is often chosen for extractability, not rank. That distinction changes the entire optimization strategy.

The financial impact is significant. Organic click-through rates have dropped by as much as 61% for queries with AI Overviews. But brands that are cited in those AI responses see a 35% increase in clicks compared to brands that are present but not cited. Even more telling: AI-referred visitors have shown a 23x advantage in conversion signups over traditional organic traffic, because they arrive “pre-qualified” by the AI’s recommendation.

The bottom line on GEO vs SEO in AI search optimization: they’re parallel systems, not replacements. SEO remains the foundation for capturing existing demand on Google. GEO is how you build trust and get recommended in the conversational interfaces where a growing share of high-intent research happens.

| Feature | Traditional SEO | Generative Engine Optimization (GEO) |
|---|---|---|
| Focus | SERP ranking | AI citation and synthesis |
| Keywords | Short-form, volume-based | Long-form, conversational prompts |
| Content | Keyword placement and length | Data-backed authority and extractability |
| Primary tool | Rank trackers (Ahrefs, Semrush) | LLM citation trackers (Topify) |
| KPI | Organic traffic | Citation rate and brand score |

Where LLM Citation Tracking Analytics Belong in Your Marketing Stack

LLM citation tracking analytics shouldn’t live in a silo. They connect to three specific workflows in a modern marketing operation.

Content production: the “answer-first” model. Traditional content follows a “search volume first” playbook. In the AI era, this shifts to a “citation readiness” model. Research shows that 44.2% of AI citations reference the first 30% of a page, which means leading with direct answers (the BLUF rule, or “bottom line up front”) is the single most effective structural change for AI visibility. LLM citation tracking software lets editors verify whether their content structure, including short paragraphs, clear headings every 120 to 180 words, and HTML tables, is actually resulting in citations.
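An editor could approximate the "first 30% of a page" check with something like the sketch below, which reports where a key answer first appears as a fraction of the page text. The function names, the 0.30 default threshold (taken from the statistic above), and the sample copy are assumptions for illustration. A real audit would work on extracted body text rather than raw strings.

```python
def answer_position(page_text, answer_snippet):
    """Relative position (0.0 to 1.0) where the snippet first appears, or None."""
    index = page_text.lower().find(answer_snippet.lower())
    if index == -1 or not page_text:
        return None
    return index / len(page_text)


def is_answer_first(page_text, answer_snippet, threshold=0.30):
    """True if the snippet first appears within the leading share of the page."""
    position = answer_position(page_text, answer_snippet)
    return position is not None and position <= threshold


# Hypothetical page copy: the same fact placed up front versus buried.
filler = "Filler sentence about brand history. " * 10
answer_led = "The shoe weighs 198 g with an 8 mm drop. " + filler
buried = filler + "The shoe weighs 198 g."

print(is_answer_first(answer_led, "198 g"))
print(is_answer_first(buried, "198 g"))
```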

Reputation defense. In the age of LLMs, your brand is an entity in a knowledge graph. If an AI model associates your brand with incorrect facts or outdated pricing, your visibility becomes a liability. An LLM citation tracking system acts as an early warning layer, flagging where hallucinations or negative narratives are taking root so you can proactively build “trust centers” with rich schema markup.

Agency services. For SEO agencies, this is a major new revenue line. As traditional rankings become harder to defend, agencies can offer “AI Visibility Audits” and “GEO Strategy” as premium services. A formatted LLM citation tracking dashboard showing a client’s AI share of voice versus competitors demonstrates value in a way that traditional SEO reports no longer can.

AI search already captures over 1.5 billion users monthly. That number is growing. The brands that build citation tracking into their stack now will have a structural advantage over those that wait.

Conclusion

The shift from click-based search to AI-synthesized answers isn’t coming. It’s here. Traditional SEO dashboards still matter for Google rankings, but they can’t tell you who ChatGPT is citing, what Perplexity is recommending, or how Gemini describes your brand. That’s the gap LLM citation tracking software fills.

Start with a baseline audit. Find out where your brand actually stands in AI-generated answers, not just in Google’s index. Then engineer your content for extractability: direct answers first, structured data, and the kind of factual clarity that AI models select as citation-worthy. The infrastructure exists. The data is available. The only real risk is not looking.

FAQ

Q: What is LLM citation tracking software?

A: LLM citation tracking software automatically queries multiple AI platforms, including ChatGPT, Gemini, and Perplexity, to detect when they cite or link to your brand’s URLs. Unlike traditional rank tracking, it measures presence and context in synthesized, generative answers rather than a numerical list position.

Q: What’s the difference between GEO and SEO in AI search optimization?

A: Traditional SEO focuses on ranking pages in a list of search results to drive clicks. GEO (Generative Engine Optimization) focuses on getting your brand cited, recommended, and synthesized into the AI’s text-based answer. SEO prioritizes keyword density and backlink authority. GEO prioritizes factual accuracy, content structure, and machine-readable formatting.

Q: How often should I check LLM citation data?

A: Monthly monitoring is a baseline because AI models update frequently. For competitive markets, bi-weekly or real-time monitoring is better. Citations from high-velocity sources like Reddit and LinkedIn can decay in as little as 47 days, so more frequent checks help you catch shifts early.

Q: Can LLM citation tracking tools track multiple AI platforms at once?

A: Yes. Professional-grade platforms like Topify monitor visibility across ChatGPT, Perplexity, Gemini, Claude, DeepSeek, and Google AI Overviews simultaneously, providing a unified dashboard that accounts for each model’s unique citation behavior.
