You installed a GEO skill in Claude Code last week. It scanned your site, spit out a score of 54, and flagged a dozen issues across four dimensions you’d never heard of before: technical accessibility, content citability, structured data, brand signals. You fixed the robots.txt and added some FAQ schema. The score climbed to 62. Then you asked ChatGPT to recommend a product in your category, and your brand still wasn’t mentioned.

The score went up. The visibility didn’t. That’s not a tool problem. It’s a coverage problem.

Most free AEO tools audit one or two dimensions well. None of them cover all four. And none of them can tell you what AI actually says about your brand when a real user asks a real question.

The Four AEO Skill Dimensions and Why Free Tools Only Cover Half

Every AEO skill, whether it’s an open-source GitHub repo or an enterprise platform, measures some subset of four dimensions. The GEO Score framework used by the geoskills project formalizes these with specific weights:

| Dimension | Weight | What It Measures |
|---|---|---|
| Technical Accessibility | 20% | Can AI crawlers find and parse your content? |
| Content Citability | 35% | Does AI treat your content as a citable authority? |
| Structured Data | 20% | Can AI extract semantic meaning from your markup? |
| Brand & Entity Signals | 25% | Does AI trust and recommend your brand? |

Here’s the problem: roughly 80% of free AEO tools concentrate on Technical Accessibility, which accounts for just 20% of the total score. Content Citability and Brand Signals, which together carry 60% of the score’s weight, are almost entirely unaddressed by open-source solutions.

That’s not a minor blind spot. It’s the majority of what determines your AI visibility.
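To see how those weights play out, here’s a minimal sketch of a weighted composite. It assumes the GEO Score is a plain weighted sum of four 0-100 dimension scores; the weights come from the table above, but the function name, the key names, and the example site are hypothetical, and the geoskills project may combine dimensions differently.

```python
# Weights from the table above. Key names are illustrative, not the project's own.
GEO_WEIGHTS = {
    "technical_accessibility": 0.20,
    "content_citability": 0.35,
    "structured_data": 0.20,
    "brand_entity_signals": 0.25,
}


def geo_score(dimension_scores: dict[str, float]) -> float:
    """Return the weighted sum of per-dimension scores (each on a 0-100 scale)."""
    missing = GEO_WEIGHTS.keys() - dimension_scores.keys()
    if missing:
        raise ValueError(f"missing dimension scores: {sorted(missing)}")
    return sum(GEO_WEIGHTS[name] * dimension_scores[name] for name in GEO_WEIGHTS)


# Hypothetical site: strong technical audit, weak citability and brand signals.
example_site = {
    "technical_accessibility": 90,
    "content_citability": 40,
    "structured_data": 70,
    "brand_entity_signals": 35,
}
print(round(geo_score(example_site), 2))
```

Under these assumptions the example site lands at about 54.75 despite a 90 on the technical audit, and even a perfect technical score can contribute at most 20 points to the total.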

Technical Accessibility: The One AEO Skill Free Tools Get Right

If you’re looking for a free tool that does its job thoroughly, technical accessibility is where you’ll find it. The geo-optimizer-skill audits against 47 research-backed methods drawn from the Princeton KDD 2024 and AutoGEO ICLR 2026 papers. It checks crawler permissions for 27 specific AI bots, validates heading hierarchy, flags JavaScript rendering issues, and verifies whether your site has an llms.txt file for rapid LLM context ingestion.

The standard audit now covers three tiers: AI discovery files (like .well-known/ai.txt), crawler access rules in robots.txt, and HTML semantic structure including front-loaded answers and section word counts.
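The crawler-access tier is the easiest one to reproduce yourself. The sketch below uses Python’s standard-library `urllib.robotparser` to check a robots.txt body against a few AI crawler tokens. The three-bot list is an illustrative subset, not the 27 bots the skill checks, and the function name and sample file are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Illustrative subset of AI crawler user-agent tokens (not the skill's full list).
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Report, per bot, whether the given robots.txt body allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_BOTS}


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(ai_crawler_access(sample_robots))
# {'GPTBot': False, 'ClaudeBot': True, 'PerplexityBot': True}
```

Passing the file contents in as a string keeps the check offline and repeatable. A real audit would fetch the live robots.txt first.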

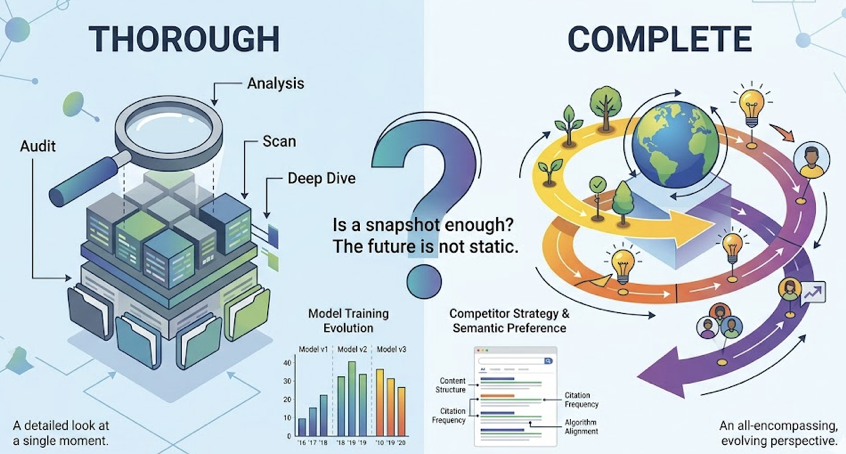

That said, “thorough” and “complete” aren’t the same thing. These tools give you a snapshot. They don’t track how crawler behavior evolves as models retrain. And they can’t tell you if your competitor, who scored 10 points lower on the same audit, is getting cited more often because their content structure better matches the model’s current semantic preferences.

Free technical audits are table stakes. They’re the floor, not the ceiling.

Content Citability: Where Free AEO Skills Start Breaking Down

Content citability carries the heaviest weight in the GEO Score at 35%, and it’s also where the gap between free and paid tools is widest.

The Princeton 2024 study evaluated 10,000 queries and found that specific content modifications boost AI citation rates by 30% to 41%. The winning tactics aren’t what most SEO practitioners expect:

| Tactics That Work (+30-41%) | Tactics That Don’t |
|---|---|
| Citing credible third-party sources | Keyword stuffing |
| Adding expert quotations with attribution | Content padding for word count |
| Using precise statistics over vague claims | Artificially simplified language |
| Improving linguistic fluency | Sales-heavy persuasive copy |
| Writing in an authoritative, expert voice | Optimizing purely for length |

A free skill like the content-quality-auditor in the seo-geo-claude-skills library can check whether your content includes expert quotes and statistics. That’s useful. But it can’t answer the question that actually matters: is the AI attributing the answer to your domain, or to your competitor’s?
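A presence check of that kind can be approximated in a few lines. This is a rough heuristic sketch, not the content-quality-auditor’s actual logic: the regular expressions, the function name, and the 20-character minimum for a quotation are all assumptions made for illustration.

```python
import re

# Heuristic: percentages ("41%") or comma-grouped numbers ("10,000").
STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?\s?%|\b\d{1,3}(?:,\d{3})+\b")
# Heuristic: a quoted span of at least 20 characters, straight or curly quotes.
QUOTE_PATTERN = re.compile(r"[\"“][^\"”]{20,}[\"”]")


def citability_signals(text: str) -> dict[str, int]:
    """Count statistic-like and quotation-like spans in a block of text."""
    return {
        "statistics": len(STAT_PATTERN.findall(text)),
        "quotations": len(QUOTE_PATTERN.findall(text)),
    }


# Hypothetical paragraph used only to exercise the heuristics.
sample_text = (
    'Conversion rose 41% across 10,000 queries. '
    '"We saw citation rates climb after adding sources," said the lead author.'
)
print(citability_signals(sample_text))
# {'statistics': 2, 'quotations': 1}
```

The counts tell you the ingredients are on the page. They say nothing about which domain an AI engine actually credits.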

That’s the gap Topify fills with its Source Analysis feature, which maps exactly which URLs each AI platform cites for a given set of prompts. You don’t just know your content is “good enough.” You know whether ChatGPT is pointing users to your site or someone else’s.

There’s another wrinkle free tools miss entirely: platform disparity. AI engines don’t read the internet the same way. Perplexity pulls 46.7% of its top citations from Reddit. ChatGPT leans on Wikipedia for 47.9% of its top citations. Google AI Overviews favor YouTube at 23.3%. Claude prefers long-form blog content, which accounts for 43.8% of its top citations.

A free tool gives you one score. It doesn’t tell you that you’re visible on ChatGPT but invisible on Perplexity because your content doesn’t match the community-validated format Perplexity prefers.

Schema Markup: The AEO Skill Gap Hiding in Your Source Code

Structured data accounts for 20% of the GEO Score and acts as a semantic bridge between your unstructured content and the internal data models of generative engines. Free tools like the geo-fix-schema skill in the geoskills library can generate JSON-LD markup for you. That’s a genuine time-saver.

But generating schema and having AI actually use it are two different things.

The hierarchy of AI-friendly schema types has shifted in the AEO era. Basic Organization and Website schema offer minimal competitive advantage. The types that drive citations look different:

| Schema Type | AI Citation Probability | Why It Matters |
|---|---|---|
| FAQPage | High (67%+) | Mirrors the Q&A format LLMs use natively |
| Article | Medium-High | Defines authorship, date, and topic focus |
| HowTo | Medium | Provides step-by-step logic for RAG agents |
| Product | Variable | Feeds specification data to transactional models |

Layering three or four complementary schema types, like Article + FAQPage + BreadcrumbList, can double citation rates compared with using a single type. That’s a significant multiplier most brands don’t realize they’re leaving on the table.
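Here’s one way that layering can look in practice: a minimal sketch that assembles Article, FAQPage, and BreadcrumbList nodes into a single JSON-LD `@graph`. The types and properties are standard schema.org vocabulary, but the function name, its parameters, and the sample values are hypothetical, and this is not the output format of the geo-fix-schema skill.

```python
import json


def build_layered_schema(headline, author, date_published, faqs, breadcrumbs):
    """Combine Article, FAQPage, and BreadcrumbList nodes into one JSON-LD graph."""
    article = {
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    faq_page = {
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    breadcrumb_list = {
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": index, "name": name, "item": url}
            for index, (name, url) in enumerate(breadcrumbs, start=1)
        ],
    }
    return {"@context": "https://schema.org", "@graph": [article, faq_page, breadcrumb_list]}


# Hypothetical page data.
schema = build_layered_schema(
    headline="Free AEO Skills vs. Integrated Monitoring",
    author="Jane Doe",
    date_published="2025-01-15",
    faqs=[("What is an AEO skill?", "A diagnostic workflow for AI search readiness.")],
    breadcrumbs=[("Home", "https://example.com/"), ("Blog", "https://example.com/blog/")],
)
print(json.dumps(schema, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the page.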

The deeper problem is verification. A free skill generates the code. It can’t tell you if the AI is actually parsing that schema correctly, or if there’s a semantic mismatch between your markup and your on-page content, which can trigger trust penalties and de-weighting. That kind of feedback loop requires tracking what AI engines do with your structured data over time, not just whether the code validates.
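One part of that check you can run locally is the mismatch between markup and page copy. The sketch below flags FAQ questions that appear in the JSON-LD but not in the visible text. It assumes you already have the page’s text extracted, the function names are hypothetical, and it says nothing about how any AI engine actually parses or weights the schema.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so comparisons ignore formatting."""
    return re.sub(r"\s+", " ", text).strip().lower()


def faq_questions_missing_from_page(faq_schema: dict, page_text: str) -> list[str]:
    """Return FAQ questions present in the markup but absent from the visible text."""
    visible = _normalize(page_text)
    missing = []
    for entity in faq_schema.get("mainEntity", []):
        question = entity.get("name", "")
        if question and _normalize(question) not in visible:
            missing.append(question)
    return missing


# Hypothetical markup and page text.
faq_schema = {
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {"@type": "Question", "name": "What is an AEO skill?",
         "acceptedAnswer": {"@type": "Answer", "text": "A diagnostic workflow."}},
        {"@type": "Question", "name": "Is the audit free?",
         "acceptedAnswer": {"@type": "Answer", "text": "Yes."}},
    ],
}
page_text = "FAQ\nWhat is an  AEO skill?\nA diagnostic workflow for AI search."

print(faq_questions_missing_from_page(faq_schema, page_text))
# ['Is the audit free?']
```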

Brand Signals: The AEO Dimension No Free Tool Can Touch

Brand and Entity Signals make up 25% of the GEO Score. They’re also the dimension where free tools are most completely absent.

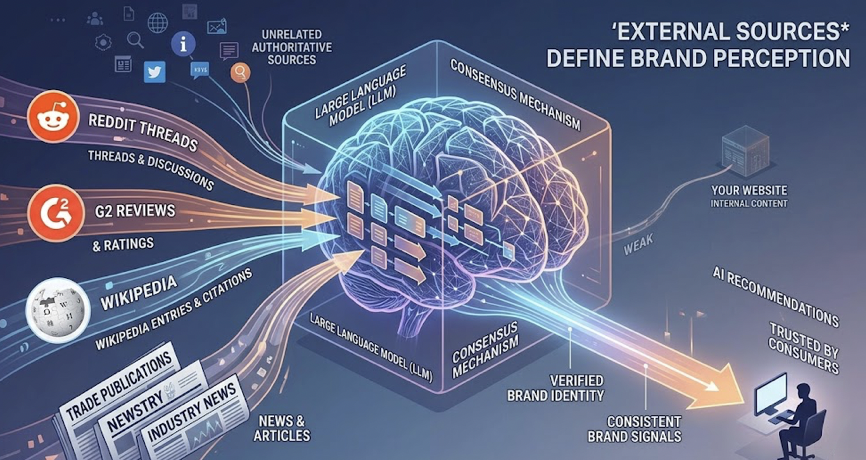

Here’s why: brand signals aren’t determined by anything on your website. They’re determined by what the rest of the internet says about you. LLMs synthesize perceptions from training data and real-time retrieval, governed by what researchers call a “consensus mechanism.” If multiple unrelated authoritative sources, like Reddit threads, G2 reviews, Wikipedia entries, and trade publications, describe your brand in consistent terms, the AI treats that as verified fact and recommends you accordingly.

Free tools can’t monitor this because they lack access to cross-platform prompt history and real-time sentiment analysis. They can’t detect “semantic drift,” where an AI model keeps associating your brand with an outdated incident because newer positive signals haven’t yet overridden the training data.

Only 30% of brands maintain consistent visibility across multiple regenerations of the same query. That means the other 70% are getting inconsistent or absent recommendations, and they don’t even know it.
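Consistency across regenerations is simple to quantify once you have the responses in hand. This sketch assumes you’ve already collected several answers to the same prompt as plain strings. It makes no API calls, and the brand names, the responses, and the function name are all hypothetical.

```python
import re


def mention_rate(brand: str, responses: list[str]) -> float:
    """Return the share of responses that mention the brand as a whole word."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for response in responses if pattern.search(response))
    return hits / len(responses)


# Four hypothetical regenerations of the same prompt.
regenerations = [
    "Top picks: Acme, Globex, and Initech.",
    "Consider Globex or Initech for small teams.",
    "Acme is a popular choice, followed by Globex.",
    "Many users recommend acme for its pricing.",
]

print(mention_rate("Acme", regenerations))    # 0.75
print(mention_rate("Globex", regenerations))  # 0.75
```

A rate below 1.0 means the brand drops out of some regenerations. Tracking that rate over time is what turns a one-off audit into monitoring.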

Topify addresses this through continuous tracking of visibility, sentiment, and position across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms. When your brand’s positioning starts diverging from reality in AI responses, you’ll know within a day, not after a quarterly audit.

The Full Comparison: Free AEO Skills vs. Integrated Monitoring

Here’s where every dimension comes together. The free tools reference list on GitHub is a solid starting point for initial diagnostics. But the coverage gap becomes clear when you map free tools against a full-stack platform:

| Capability | Free Open-Source Skills | Topify |
|---|---|---|
| Technical Audit | Strong (47 methods) | Included |
| Content Citability Analysis | Basic (presence check only) | Source-level attribution tracking |
| Schema Generation | Generates code | Tracks AI parsing of schema |
| Brand Signal Monitoring | Not available | Sentiment, position, and visibility tracking |
| Execution | Manual dev work | One-click agentic execution |
| Competitive Benchmarking | Not available | Real-time share-of-model tracking |
| Platform Coverage | Usually ChatGPT only | ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen |
| Monitoring Frequency | One-time snapshots | Continuous daily tracking |
| Metrics | Technical health score | 7 metrics: visibility, sentiment, position, volume, mentions, intent, CVR |
| Price | Free | Starting at $99/mo |

The bottom line: free tools audit. They don’t track, they don’t execute, and they don’t benchmark you against competitors. For initial technical hygiene, they’re genuinely useful. For understanding what AI actually says about your brand and why, they aren’t built for the job.

The business case backs this up. AI-referred traffic converts at rates up to 803% higher than traditional organic search. B2B SaaS companies running full-stack GEO optimization have seen 527% increases in AI-referred sessions. E-commerce brands that converted marketing copy into data-rich comparison tables tripled their conversion rates from 2% to 6%.

Those numbers don’t come from running an audit once and fixing your robots.txt. They come from continuous monitoring and execution across all four AEO skill dimensions.

Conclusion

Free AEO tools handle the 20% of the GEO Score that’s easiest to fix. The remaining 80%, including content attribution, cross-platform citation patterns, and brand sentiment, requires infrastructure that open-source projects aren’t built to provide.

The practical path forward: use free tools like geo-optimizer-skill and geoskills for your initial technical baseline. Then move to Topify for continuous visibility tracking, competitive benchmarking, and one-click execution across the dimensions that actually determine whether AI recommends your brand or your competitor’s.

A GEO score tells you where you stand today. What it doesn’t tell you is whether AI will still mention your brand tomorrow. That’s the gap worth closing.

FAQ

Q: What is an AEO skill and why does it matter for AI visibility?

A: An AEO skill is an executable agent workflow or diagnostic tool, often installed in IDEs like Claude Code or Cursor, designed to audit how well a website is structured for AI search engines. It matters because generative engines use chunking and semantic parsing to retrieve information. If your content lacks proper heading hierarchy, FAQ schema, or citable data points, the RAG process will likely skip it regardless of quality.

Q: Can free GEO tools replace a paid AI visibility platform?

A: They can’t. Free tools are audit-only. They tell you what’s wrong with your code but can’t track what AI actually says about you or how you compare to competitors over time. Paid platforms add tracking and execution layers, including reverse-engineering competitor citations and identifying high-volume AI prompts that have zero traditional keyword volume.

Q: Which AEO skill dimension has the biggest impact on AI citations?

A: Content Citability, weighted at 35% of the GEO Score, has the highest impact. The Princeton study found that tactics such as adding statistics and expert quotations produced the largest visibility lifts: 30% to 41% overall, and up to 115% in some categories. Brand Signals (25%) is the second most influential, measuring how much AI trusts your brand based on third-party consensus.

Q: How often should I audit my site’s GEO score?

A: Run a baseline audit monthly. But high-intent prompts should be monitored daily. AI models exhibit drift, and visibility can drop within 2-3 days if competitors update their content or if the model retrains. Enterprise tools automate this daily checking so brands don’t lose their share of AI recommendations without warning.