Your domain authority is strong. Your keyword rankings are climbing. But when a prospect asks Perplexity, “What’s the best platform for [your category]?”, the answer pulls from a Reddit thread you’ve never seen, a competitor’s blog post, and a YouTube transcript from last quarter. Your brand isn’t mentioned once.

That’s the gap most SEO teams can’t diagnose with traditional tools. The metrics that powered a decade of search strategy don’t measure what AI engines actually cite, which sources they trust, or why your competitor keeps showing up in the answer while you don’t. Building an AI citation tracking strategy isn’t optional anymore. It’s the only way to understand where your brand stands in the answers that are replacing search results.

Answer Engine Optimization Trends Reshaping Brand Discovery in 2026

The shift from links to answers is accelerating faster than most marketing teams realize. Gartner predicts that traditional search engine volume will drop 25% by 2026 as users shift to AI chatbots and other virtual agents. Zero-click searches on Google have jumped from 56% in 2024 to 69% in 2025, meaning more than two-thirds of queries now resolve without a single click to any website.

That alone would be enough to rethink your visibility strategy. But the numbers get sharper.

AI Overviews now trigger on roughly 48% of queries, up from 31% a year ago. For queries where an AI Overview appears, organic click-through rates have dropped from 1.76% to 0.61%, a 65% decline. Meanwhile, brands that are cited inside those AI-generated answers see 35% higher organic CTR and 91% higher paid CTR compared to brands that aren’t.
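
The 65% figure is a relative decline, not a percentage-point drop (the point gap is 1.15). A quick sketch of the arithmetic, using only the two CTR values quoted above; the function name is illustrative:

```python
def relative_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after` as a fraction."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


# Organic CTR (in percent) on queries where an AI Overview appears.
ctr_change = relative_change(1.76, 0.61)
print(f"{ctr_change:.0%}")  # relative change, roughly -65%
```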

Here’s what makes this tricky: visibility in AI answers is volatile. Research shows only 30% of brands maintain consistent presence across consecutive AI responses, and just 20% stay visible across five consecutive queries on the same topic. You can be cited on Monday and gone by Thursday. That volatility is exactly why answer engine optimization trends in 2026 are pointing toward continuous citation monitoring, not periodic ranking checks.
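
One way to quantify that volatility is to re-run the same prompt several times and record which brands each answer mentions. The sketch below assumes you already have those mentions as sets. The brand names and both helper functions are hypothetical, not part of any tool named in this article.

```python
from typing import Sequence, Set


def presence_rate(runs: Sequence[Set[str]], brand: str) -> float:
    """Fraction of runs in which `brand` was mentioned."""
    if not runs:
        return 0.0
    return sum(brand in run for run in runs) / len(runs)


def longest_streak(runs: Sequence[Set[str]], brand: str) -> int:
    """Longest run of consecutive answers that mention `brand`."""
    best = current = 0
    for run in runs:
        current = current + 1 if brand in run else 0
        best = max(best, current)
    return best


# Hypothetical data: brands mentioned in five consecutive answers to one prompt.
runs = [
    {"Acme", "Globex"},
    {"Globex"},
    {"Acme", "Globex"},
    {"Acme"},
    {"Acme", "Globex"},
]

print(presence_rate(runs, "Acme"))                # share of answers mentioning Acme
print(longest_streak(runs, "Acme"))               # longest unbroken streak
print(longest_streak(runs, "Acme") == len(runs))  # True only if present in every run
```

A brand can have a high presence rate and still fail the "every consecutive answer" test, which is why the two numbers are worth tracking separately.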

Why Traditional SEO Metrics Can’t Track What AI Engines Actually Cite

If you’re still relying on domain authority, backlink counts, and keyword position tracking as your primary visibility signals, you’re measuring the wrong game.

The decoupling is already visible in the data. In Google’s AI Overviews, only 38% of cited sources come from pages ranking in the organic top 10. That number was 76% just a year earlier. Today, 31% of citations pull from pages ranked 11 to 100, and 36.7% come from pages ranked beyond position 100.

In other words, a page that doesn’t rank on page one of Google can still be the primary source AI cites in its answer.

ChatGPT adds another layer of complexity. Its top citation source is Wikipedia, which accounts for 47.9% of the citations going to its ten most-cited domains. For B2B and SaaS queries, ChatGPT leans toward competitor official websites at rates 11.1 percentage points higher than Google does. Perplexity, on the other hand, pulls 46.7% of its high-frequency citations from Reddit. Each platform has a different “citation personality,” and none of them map cleanly to your existing SEO dashboard.

The takeaway isn’t that SEO is dead. It’s that SEO metrics alone can’t tell you whether AI trusts your content enough to cite it.

The Core of an AI Citation Tracking Strategy: What to Measure and Where

An effective AI citation tracking strategy covers four distinct dimensions, each requiring different data and different responses.

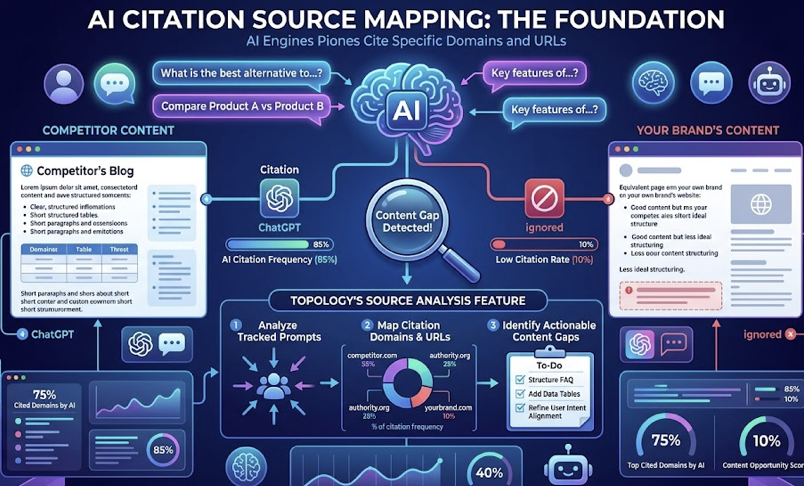

Citation Source Mapping. Which specific domains and URLs are AI engines citing when users ask questions relevant to your brand? This is the foundation. If a competitor’s blog post is the go-to reference for ChatGPT while your equivalent page gets ignored, that’s a content gap you can close. Topify’s Source Analysis feature handles this at scale, showing exactly which domains each AI platform cites for your tracked prompts.

Citation Frequency. How often does your brand appear across a set of relevant queries? Measured across 1,000 relevant queries, top-performing brands appear in roughly 12% of answers, while the average brand appears in just 0.3%. Tracking this over time reveals whether your optimization efforts are working or whether citation share is shifting to competitors.

Citation Context. Being mentioned isn’t the same as being recommended. AI might cite your product as “a budget option” when your positioning is premium. Sentiment tracking across platforms catches these narrative misalignments before they calcify into the model’s default description of your brand.

Platform-Specific Coverage. ChatGPT, Perplexity, and AI Overviews don’t cite the same sources or frame brands the same way. Perplexity links 78% of its assertions to specific sources, while ChatGPT manages 62%. A brand might dominate Perplexity citations but be invisible in ChatGPT. Cross-platform tracking is non-negotiable.
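
Citation source mapping, the first dimension above, boils down to counting which domains appear in the citations an AI answer returns. A minimal sketch using only the Python standard library, assuming you have already collected the cited URLs yourself; the URLs and the function name are made up for illustration:

```python
from collections import Counter
from typing import Iterable
from urllib.parse import urlparse


def count_cited_domains(urls: Iterable[str]) -> Counter:
    """Count citations per domain, ignoring a leading 'www.'."""
    counts: Counter = Counter()
    for url in urls:
        host = urlparse(url).hostname  # lowercased, port stripped; None if absent
        if not host:
            continue  # skip strings that are not absolute URLs
        if host.startswith("www."):
            host = host[4:]
        counts[host] += 1
    return counts


# Hypothetical citations collected for one tracked prompt.
cited_urls = [
    "https://www.reddit.com/r/projectmanagement/comments/abc123/",
    "https://competitor.example/blog/best-tools",
    "https://reddit.com/r/saas/comments/def456/",
    "https://www.youtube.com/watch?v=xyz",
]

for domain, n in count_cited_domains(cited_urls).most_common():
    print(domain, n)
```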

Topify’s Visibility Tracking combines all four dimensions into a single dashboard, covering ChatGPT, Gemini, Perplexity, Claude, and AI Overviews. In practice, that means you can spot a drop in mentions on one platform and trace it back to a specific source that stopped being cited, without toggling between five different tools.
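
To make the frequency and platform-coverage dimensions concrete, here is a rough sketch of a per-platform citation rate. It is not Topify’s implementation. The `Answer` record, the brand names, and the sample data are all assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Answer:
    platform: str
    prompt: str
    brands: List[str]  # brands mentioned in the AI answer


def citation_rate_by_platform(answers: Iterable[Answer], brand: str) -> Dict[str, float]:
    """Share of answers on each platform that mention `brand`."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for answer in answers:
        totals[answer.platform] += 1
        if brand in answer.brands:
            hits[answer.platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical results for two prompts on two platforms.
answers = [
    Answer("Perplexity", "best crm for startups", ["Acme", "Globex"]),
    Answer("Perplexity", "crm with email automation", ["Acme"]),
    Answer("ChatGPT", "best crm for startups", ["Globex"]),
    Answer("ChatGPT", "crm with email automation", ["Globex", "Initech"]),
]

rates = citation_rate_by_platform(answers, "Acme")
for platform, rate in rates.items():
    print(f"{platform}: {rate:.0%}")
```

In this made-up sample the brand appears in every Perplexity answer and no ChatGPT answer, which is exactly the kind of split that single-platform tracking would hide.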

5 Emerging Trends in Answer Engine Optimization That Should Shape Your 2026 Strategy

The answer engine optimization landscape isn’t standing still. Here are five shifts that directly affect how brands should approach citation tracking and content strategy this year.

1. Reddit and YouTube now dominate AI citations.

This is the single biggest structural change in AI citation patterns. Reddit’s share of AI citations grew by at least 73% between October 2025 and January 2026, and in some verticals it doubled. YouTube citations jumped from 27,203 to 42,262 in a single month, a 55% increase. In Google’s AI Overviews, YouTube accounts for 23.3% of citations and Reddit covers 21%.

Why? AI engines use Reddit threads and YouTube transcripts to “humanize” technical answers with real-world experience. For brands, this means your Reddit presence and video content strategy directly influence whether AI cites you.

2. Entity-based citations are replacing keyword-based matching.

AI doesn’t match keywords anymore. It identifies entities: people, companies, products, concepts. The shift from pattern matching to semantic understanding means your brand needs to exist as a well-defined node in the AI’s knowledge graph, not just appear in pages that contain the right phrases. Consistent brand attributes across your website, social profiles, and third-party mentions help AI verify your entity identity.

3. Content freshness has become a hard requirement.

Pages that aren’t updated within a quarter are 3x more likely to lose citations. For commercially valuable queries, 83% of cited sources come from pages updated within the past year, with over 60% updated in the last six months. Brands that regularly refresh content earn citations at 30% higher rates than those that don’t.

4. Structured content dramatically increases citation probability.

The data here is specific. Pages using strict H1-H2-H3 hierarchy see a 2.8x increase in citation rates. Sections between 120 and 180 words get cited 70% more often than sections under 50 words. And 87% of cited pages use a single H1 tag. Adding a 40-to-60-word summary at the top of each section (an “answer block”) increases AI Overview extraction probability by 40%.

5. Schema markup is now table stakes for AI citation.

About 61% of pages cited in AI Overviews use three or more types of Schema markup. Pages with multiple Schema types see a 13% lift in citation probability. For brands, this means going beyond basic Article schema to include FAQPage, HowTo, Product, and Organization markup.
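
As an illustration of the schema point, the sketch below builds a JSON-LD block that combines two schema.org types, Organization and FAQPage, and prints it wrapped in the script tag that pages normally embed. The company name, URL, and helper function are placeholders. Which types and properties make sense depends on your own pages.

```python
import json
from typing import Dict, List, Tuple


def build_json_ld(org_name: str, org_url: str, faqs: List[Tuple[str, str]]) -> Dict:
    """Combine Organization and FAQPage markup in one JSON-LD graph."""
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "name": org_name, "url": org_url},
            {
                "@type": "FAQPage",
                "mainEntity": [
                    {
                        "@type": "Question",
                        "name": question,
                        "acceptedAnswer": {"@type": "Answer", "text": answer},
                    }
                    for question, answer in faqs
                ],
            },
        ],
    }


# Placeholder organization and a single question-and-answer pair.
markup = build_json_ld(
    "Example Co",
    "https://example.com",
    [(
        "What is an AI citation tracking strategy?",
        "A systematic approach to monitoring which sources AI platforms cite.",
    )],
)

print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```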

From Tracking to Action: Turning Citation Data into Visibility Gains

Data without action is just a dashboard you check on Mondays. The real value of an AI citation tracking strategy comes from a closed-loop process: Track, Analyze, Optimize, Monitor.

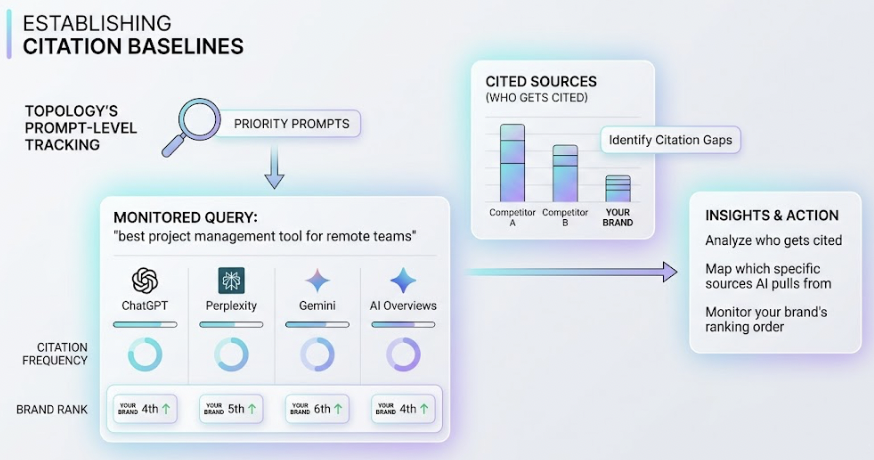

Here’s what that looks like in practice. You start by establishing a citation baseline across your priority prompts and platforms. Topify’s prompt-level tracking lets you monitor specific queries (like “best project management tool for remote teams”) across ChatGPT, Perplexity, Gemini, and AI Overviews simultaneously, showing who gets cited, which sources AI pulls from, and where your brand ranks in the recommendation order.

Next, you analyze the gaps. If Perplexity cites a competitor’s Reddit AMA but ignores your equivalent content, that’s a signal to invest in community-driven content on that platform. If ChatGPT consistently cites a particular third-party review site, getting your brand reviewed there becomes a priority.

Then you optimize. Content restructuring (adding answer blocks, tightening heading hierarchy, refreshing outdated stats) can shift citation patterns within weeks. Topify’s one-click GEO execution feature lets you define optimization goals in plain English and deploy the strategy without manual workflows, turning insights into action faster than most teams can schedule a content sprint.
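
Before restructuring, it helps to audit what a page looks like today. The sketch below is one possible check, built on Python’s standard `html.parser`: it counts H1 tags and flags sections whose body falls outside the 120-to-180-word range cited earlier. The class name, the sample HTML, and the decision to treat every H1, H2, or H3 as a section boundary are assumptions.

```python
from html.parser import HTMLParser
from typing import List, Tuple


class StructureAudit(HTMLParser):
    """Collect the H1 count and the word count of each heading's section."""

    HEADINGS = {"h1", "h2", "h3"}

    def __init__(self) -> None:
        super().__init__()
        self.h1_count = 0
        self.sections: List[Tuple[str, int]] = []  # (heading text, body words)
        self._in_heading = False
        self._heading_parts: List[str] = []
        self._words = 0
        self._open = False  # True while a section body is being counted

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self._close_section()  # a new heading ends the previous section
            self._in_heading = True
            self._heading_parts = []
            if tag == "h1":
                self.h1_count += 1

    def handle_endtag(self, tag):
        if tag in self.HEADINGS:
            self._in_heading = False
            self._open = True
            self._words = 0

    def handle_data(self, data):
        if self._in_heading:
            self._heading_parts.append(data)
        elif self._open:
            self._words += len(data.split())

    def _close_section(self) -> None:
        if self._open:
            heading = "".join(self._heading_parts).strip()
            self.sections.append((heading, self._words))
            self._open = False

    def close(self) -> None:
        super().close()
        self._close_section()  # record the final section


def audit(html: str, lo: int = 120, hi: int = 180) -> Tuple[int, List[Tuple[str, int]]]:
    """Return the H1 count and the sections outside the lo..hi word range."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    off_target = [(h, n) for h, n in parser.sections if not lo <= n <= hi]
    return parser.h1_count, off_target


# Hypothetical page: one short intro, one on-target section, one thin section.
sample_html = (
    "<h1>Guide</h1><p>Intro text here.</p>"
    "<h2>Pricing</h2><p>" + "word " * 150 + "</p>"
    "<h2>Setup</h2><p>Too short.</p>"
)
h1_count, off_target = audit(sample_html)
print(h1_count, off_target)
```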

Finally, you monitor. Citation patterns shift constantly. A source that AI favored last month might drop off this month. Continuous tracking through Topify’s platform ensures you catch these shifts before they erode your visibility.
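
The monitoring step amounts to comparing a new snapshot of cited sources against your baseline. A minimal sketch, assuming each snapshot is a mapping from prompt to the set of cited domains; the data and the function name are hypothetical:

```python
from typing import Dict, List, Set


def diff_sources(
    baseline: Dict[str, Set[str]], current: Dict[str, Set[str]]
) -> Dict[str, Dict[str, List[str]]]:
    """Per prompt, list domains dropped from or added to the citation set."""
    changes: Dict[str, Dict[str, List[str]]] = {}
    for prompt in sorted(baseline.keys() | current.keys()):
        before = baseline.get(prompt, set())
        after = current.get(prompt, set())
        dropped, added = before - after, after - before
        if dropped or added:
            changes[prompt] = {"dropped": sorted(dropped), "added": sorted(added)}
    return changes


# Hypothetical snapshots for one tracked prompt, taken a month apart.
prompt = "best project management tool for remote teams"
baseline = {prompt: {"reddit.com", "yourbrand.example", "reviewsite.example"}}
current = {prompt: {"reddit.com", "competitor.example", "reviewsite.example"}}

changes = diff_sources(baseline, current)
for tracked_prompt, change in changes.items():
    print(tracked_prompt, change)
```

Prompts whose citation set did not change are left out of the result, so an empty dictionary means nothing shifted between the two snapshots.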

What Most Brands Get Wrong About AI Citation Tracking

Three mistakes show up repeatedly in how brands approach this space.

Tracking only one platform. ChatGPT, Perplexity, and AI Overviews each have different citation preferences. A brand visible in ChatGPT might be completely absent from Perplexity because Perplexity weights Reddit content that ChatGPT largely ignores. Single-platform tracking gives you a fraction of the picture.

Confusing mentions with endorsements. Your brand might appear in an AI answer as “an alternative to consider” while your competitor gets described as “the top-rated option.” Topify’s Sentiment Analysis scores these distinctions on a 0-to-100 scale, so you know not just whether you’re mentioned, but how you’re framed.

Updating content too slowly. A content calendar that lets core pages sit untouched for more than a quarter doesn’t match AI’s refresh cycle. When 83% of commercially cited sources were updated within the past year and going a full quarter without an update triples your odds of losing citations, the cadence needs to be faster. Building a 90-day refresh cycle for core commercial pages isn’t aggressive. It’s baseline.
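
A 90-day refresh cycle is easy to enforce once you know when each page was last updated. The sketch below flags pages past that threshold. The page paths and dates are invented, and how you obtain the last-updated dates (CMS export, sitemap, and so on) is left open.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional


def stale_pages(
    last_updated: Dict[str, date],
    today: Optional[date] = None,
    max_age_days: int = 90,
) -> List[str]:
    """Return pages last updated more than `max_age_days` days ago."""
    today = today or date.today()
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical last-updated dates for three core commercial pages.
pages = {
    "/pricing": date(2025, 11, 15),
    "/features": date(2026, 1, 20),
    "/compare": date(2025, 12, 1),
}

overdue = stale_pages(pages, today=date(2026, 3, 1))
print(overdue)  # pages that need a refresh
```

A page updated exactly 90 days ago is treated as still fresh here. Tighten the comparison if you want the cutoff to be inclusive.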

Conclusion

The brands winning AI visibility in 2026 aren’t the ones with the highest domain authority or the most backlinks. They’re the ones that know exactly what AI cites, why it cites it, and how to make sure their content stays in the citation pool.

An AI citation tracking strategy built around the emerging trends in answer engine optimization, from Reddit’s citation dominance to entity-based discovery to structured content requirements, gives you the operating system for this new reality. The gap between brands that track citations and brands that don’t will only widen as AI handles more of the discovery layer. Start by auditing where your brand stands today across ChatGPT, Perplexity, and AI Overviews, then build the tracking and optimization loop that keeps you visible.

FAQ

Q: What is an AI citation tracking strategy?

A: It’s a systematic approach to monitoring which sources AI platforms (ChatGPT, Perplexity, Gemini, AI Overviews) cite when answering queries relevant to your brand. It covers four dimensions: citation source mapping, citation frequency, citation context (sentiment and positioning), and cross-platform coverage. The goal is to understand where your brand appears in AI-generated answers and take action to improve visibility.

Q: What are the biggest trends in answer engine optimization for 2026?

A: Five trends stand out: the dominance of Reddit and YouTube as AI citation sources, the shift from keyword matching to entity-based citations, content freshness becoming a hard requirement for citation eligibility, structured content (heading hierarchy, answer blocks) dramatically increasing citation rates, and Schema markup becoming a baseline expectation for pages that want to get cited.

Q: How do you track which sources AI engines cite for your brand?

A: Platforms like Topify simulate real user queries across multiple AI engines and track exactly which domains, URLs, and content types get cited in the responses. This provides prompt-level visibility into citation patterns, competitive positioning, and sentiment across ChatGPT, Perplexity, Gemini, Claude, and AI Overviews.

Q: How often should you monitor AI citation data?

A: Continuously, or at minimum weekly. AI citation patterns are volatile. Research shows only 30% of brands maintain consistent visibility across consecutive AI responses. A source cited today can drop off within days as AI models update their preferences. Quarterly reviews are too slow for this environment.