Your content ranks on page one. Your domain authority is solid. Your backlink profile would make most competitors jealous. Then a potential buyer types a category question into ChatGPT, and the response names four brands with inline sources. Yours isn’t one of them.

That gap between Google rankings and AI recommendations is costing brands real pipeline. Data shows that when an AI summary appears, click-through rates on traditional results drop from roughly 15% to 8%. And the traffic that does come through AI search converts at up to 9x the rate of traditional organic. The brands getting cited aren’t necessarily the ones with the highest DA. They’re the ones whose content is built for how LLMs actually retrieve, evaluate, and synthesize information.

What “LLM Citation” Actually Means (and Why It’s Not a Backlink)

An LLM citation is a reference, recommendation, or direct link to an external source inside an AI-generated answer. It’s how ChatGPT, Perplexity, and Gemini tell the user: “This is where the information came from.”

But not all citations look the same. There are two distinct types. Explicit citations show up as superscript numbers or source cards with clickable URLs. You’ll see these on Perplexity and in ChatGPT’s Search mode. Implicit citations happen when the model mentions a brand or product by name as an authority without linking directly. This is common in ChatGPT’s standard conversational mode and Gemini’s knowledge-integrated responses.

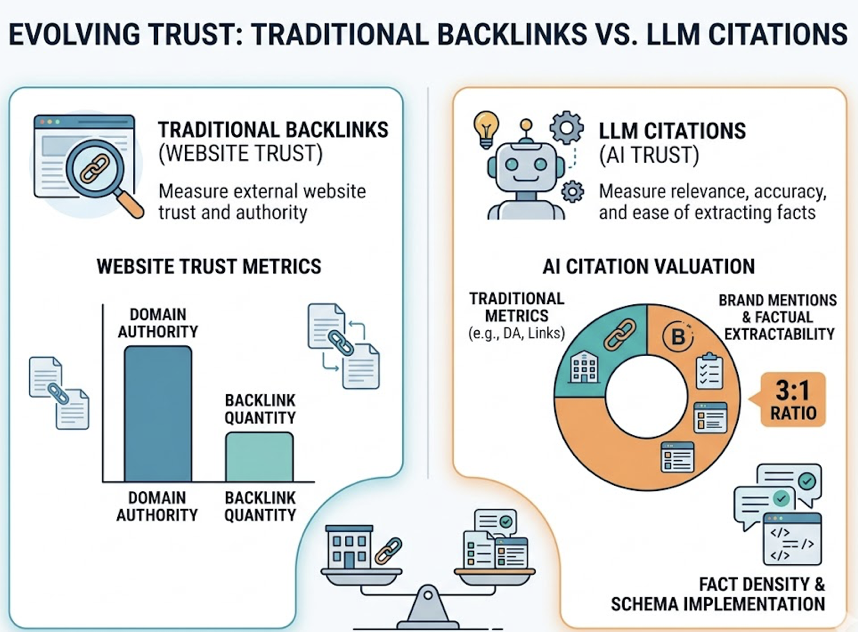

Here’s the thing: traditional backlinks measure how much other websites trust you. LLM citations measure how much the AI trusts you. Research suggests that, when choosing what to cite, AI models weight brand mentions and factual extractability roughly 3:1 over traditional backlink metrics. A page with lower domain authority but higher “fact density” and better schema implementation will frequently beat a high-DA page for an AI citation.

That distinction matters because only 17-32% of sources cited by LLMs overlap with Google’s top 10 organic results. The two systems are running on separate logic.

How ChatGPT, Perplexity, and Gemini Choose What to Cite

Each platform has a distinct retrieval architecture. Treating them as one monolithic system is the first mistake most brands make.

| Platform | Citations Per Response | Primary Signal | Content Preference |
|---|---|---|---|
| Perplexity | ~21.87 | Freshness and data density | Research reports, benchmarks, case studies |
| ChatGPT | ~7.92 | Reasoning and depth | How-to guides, nuanced explainers |
| Gemini | ~8.34 | E-E-A-T and entity trust | Official brand pages, structured product data |

Perplexity operates as a precision retrieval engine. It uses a proprietary index combined with Bing to perform real-time searches, delivering responses in a median of 6.8 seconds. Freshness is non-negotiable here. Content updated within the last 30 days has an 82% citation rate. After six months, that drops to 37%. If you haven’t touched a page in half a year, Perplexity is less than half as likely to cite it.

ChatGPT is more selective. It cites fewer unique domains but applies a higher bar for topical authority. It’s looking for content that answers “why” and “how,” not just “what.” Long-form guides that anticipate follow-up questions and offer balanced perspectives tend to perform well.

Gemini leans on Google’s Knowledge Graph and prioritizes “consensus signals,” meaning information verified across multiple authoritative sources like Wikipedia, LinkedIn, and government databases. It’s also more likely than ChatGPT to cite brand-owned websites (52.15% of its citations). ChatGPT pulls heavily from third-party directories and review sites (48.73% of its citations).

The shared thread: all three platforms reward content that is structured, specific, and consistent across multiple sources.

5 Strategies That Actually Drive LLM Citations

Earning an LLM citation isn’t about keyword stuffing. It’s about making your content easy for the AI’s retrieval system to extract, verify, and trust. These five strategies map directly to how LLMs evaluate sources.

Put the Answer First

LLMs don’t read entire pages. They retrieve specific passages or “chunks.” The closer your key claim is to the top of a section, the more likely it gets pulled.

Start every article and major section with a 2-3 sentence summary that directly answers the target question. This “Bottom Line Up Front” approach gives the retrieval system a self-contained passage it can extract and verify without the rest of the page. Use H2/H3 tags that mirror natural language questions. Instead of “Features,” write “What Are the Core Features of [Product]?”

Structured schema matters too. Implementing FAQ, Organization, and Product schema (JSON-LD) can increase AI visibility by up to 67%, because it gives the model explicit, machine-readable context for your content.
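
To make the schema point concrete, here is a minimal sketch of FAQ markup generated in Python. It assumes the schema.org `FAQPage` type with `Question` and `Answer` entities; the helper names `faq_jsonld` and `as_script_tag` are hypothetical, and the sample question is borrowed from this article’s own FAQ.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def as_script_tag(data):
    """Wrap the object in the script tag that is placed in the page HTML."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical sample content; replace with your own page's questions.
pairs = [
    (
        "What is an LLM citation?",
        "A reference, link, or mention of a brand or source within an AI-generated answer.",
    ),
]
print(as_script_tag(faq_jsonld(pairs)))
```

Generating the markup from the same question-and-answer pairs that render on the page keeps the visible copy and the machine-readable copy from drifting apart.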

Build a Credibility Chain

AI models evaluate how well-researched your content is by checking whether you cite authoritative external sources. Including references to academic research, industry reports (Gartner, IDC, Forrester), or government data within your own content creates what researchers call a “credibility chain.” This practice can increase your citation probability by up to 40%.

Author bios matter too. Include credentials, years of experience, and links to LinkedIn profiles to satisfy E-E-A-T requirements. Gemini, in particular, weights these signals heavily.

Capture Long-Tail Conversational Intent

AI search queries average 23 to 60 words, compared to 3-4 words on Google. Users aren’t typing keywords. They’re asking compound, scenario-specific questions like “Best CRM for small B2B teams with Slack integration under $50/user.”

Create content that maps to these compound queries. Use “People Also Ask” phrasing in your subheadings. Build FAQ sections that address the specific, multi-variable questions your buyers actually ask AI platforms.

Maintain the Freshness Advantage

In AI search, outdated information often gets treated as wrong information. This is especially true for commercial and transactional queries where pricing, features, or competitive landscapes change frequently.

Implement a quarterly update cycle for cornerstone content. Use IndexNow to alert Bing (and by extension ChatGPT and Perplexity) immediately when content is refreshed. This reduces discovery time from days to hours. The payoff is significant: content freshness within the last 30 days is associated with a 115% increase in AI visibility.
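
A refresh notification can be sketched in a few lines. This example assumes the public IndexNow endpoint at `api.indexnow.org` and its JSON submission format (`host`, `key`, `keyLocation`, `urlList`); the domain, key, and URLs are placeholders, and the helper names are hypothetical. IndexNow also expects the key to be hosted as a text file on your site, which is not shown here.

```python
import json
import urllib.request
from urllib.parse import urlparse

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_payload(urls, key, key_location=None):
    """Build the JSON body for one submission; all URLs must share a host."""
    hosts = {urlparse(u).netloc for u in urls}
    if len(hosts) != 1:
        raise ValueError("all URLs in one submission must share a single host")
    payload = {"host": hosts.pop(), "key": key, "urlList": list(urls)}
    if key_location:
        payload["keyLocation"] = key_location
    return payload


def submit(payload):
    """POST the payload and return the HTTP status code."""
    request = urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Placeholder values: swap in your own domain, key, and refreshed URLs.
payload = build_indexnow_payload(
    ["https://www.example.com/guide", "https://www.example.com/pricing"],
    key="your-indexnow-key",
    key_location="https://www.example.com/your-indexnow-key.txt",
)
print(json.dumps(payload, indent=2))
# submit(payload)  # uncomment once the key file is live on your site
```

Hooking a call like this into the publish step of your CMS means every refresh is announced automatically instead of waiting for the next crawl.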

Own the Multi-Platform Consensus

AI models triangulate truth. They check whether your brand information is consistent across your website, G2, Reddit, TrustRadius, and industry publications. If the information conflicts, the model’s confidence in your brand drops and it is more likely to leave you out of the answer.

This is especially important because 85% of AI citations come from third-party sites, not from brand-owned pages. Your off-site presence isn’t optional. Brands that maintain consistent information across four or more platforms see a 2.8x to 4.0x increase in AI recommendation rates.

Audit your external profiles. Make sure your core value proposition, pricing tier, and product descriptions are identical everywhere.
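
The audit itself can start as a simple comparison. The sketch below assumes you have already collected each profile’s key fields by hand or by export; the platform names, field names, and values are hypothetical sample data, and `find_conflicts` is an illustrative helper, not part of any tool mentioned here.

```python
from collections import defaultdict


def normalize(value):
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(str(value).lower().split())


def find_conflicts(profiles):
    """Return {field: {value: [platforms]}} for fields with more than one value.

    `profiles` maps a platform name to a dict of field -> value.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for platform, fields in profiles.items():
        for field, value in fields.items():
            seen[field][normalize(value)].append(platform)
    return {field: dict(values) for field, values in seen.items() if len(values) > 1}


# Hypothetical sample data for an imaginary product.
profiles = {
    "website": {"starting_price": "$49/user", "category": "B2B CRM"},
    "g2": {"starting_price": "$49/user", "category": "B2B CRM"},
    "trustradius": {"starting_price": "$39/user", "category": "b2b  crm"},
}

for field, values in find_conflicts(profiles).items():
    print(field, values)
```

In this sample only `starting_price` is reported, because the category strings differ only in case and spacing. A real audit would need fuzzier matching for long descriptions, but an exact check on pricing and positioning catches the contradictions that matter most.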

Why Most Brands Can’t Tell If They’re Being Cited

Here’s the uncomfortable truth: most marketing teams are flying blind in the AI search era.

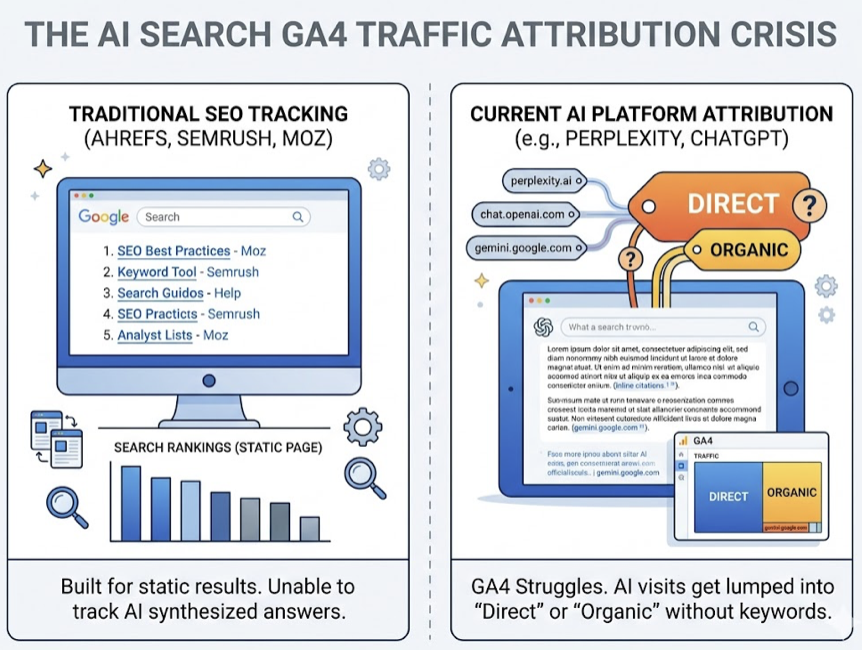

Traditional SEO tools like Ahrefs, Semrush, and Moz were built to track rankings on a static results page. They’re structurally incapable of monitoring synthesized AI answers. Google Analytics (GA4) struggles to categorize traffic from AI platforms accurately. Some visits show up as chat.openai.com or perplexity.ai, but many get lumped into “Direct” or “Organic” without keyword context.
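
One partial workaround is to classify referrers yourself from raw logs or exported session data. The sketch below is an assumption-laden starting point: `chat.openai.com` and `perplexity.ai` come from the paragraph above, while `chatgpt.com` and `gemini.google.com` are hostnames added here that you should verify against your own logs. It cannot recover visits that arrive with no referrer at all.

```python
from urllib.parse import urlparse

# Hostname -> platform label. Verify and extend this list against your own logs.
AI_REFERRER_HOSTS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",         # assumption, not named in the article
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",    # assumption, not named in the article
}


def classify_referrer(referrer):
    """Label a full referrer URL as an AI platform, 'Other', or unknown."""
    if not referrer:
        return "Direct / unknown"
    # hostname is None when the string has no scheme; treat that as "Other".
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "Other")


for ref in [
    "https://chat.openai.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",
]:
    print(repr(ref), "->", classify_referrer(ref))
```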

The bigger problem is scale. Because AI responses are probabilistic, the same prompt can yield different results in different sessions or locations. A manual check (“Does ChatGPT recommend me?”) is statistically meaningless. You’d need to simulate hundreds of prompt variations across multiple models to get a reliable visibility score.
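
To see why a single check tells you so little, it helps to put an uncertainty range around the observed mention rate. The sketch below uses the Wilson score interval, a standard statistical method that is not from this article’s sources, with the usual z = 1.96 for roughly 95% confidence. The run counts are made-up illustrations.

```python
import math


def wilson_interval(mentions, runs, z=1.96):
    """Wilson score interval for the true mention rate, given observed runs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Illustrative counts: one manual check versus a few hundred sampled runs.
for mentions, runs in [(1, 1), (4, 10), (120, 300)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs} mentions -> plausible rate {low:.0%} to {high:.0%}")
```

With one run, the plausible range spans most of the scale, so “ChatGPT mentioned us once” is compatible with almost any true rate. The range only tightens to something you can act on after a few hundred sampled runs.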

And there’s a risk most teams don’t even think about: semantic drift. That’s when an AI model’s description of your product diverges from reality. It might describe your enterprise platform as a “free utility for students” based on outdated training data. Without automated monitoring, you won’t know until a prospect mentions it in a sales call.

How to Track and Measure LLM Citations at Scale

Specialized AI visibility platforms bridge the gap that traditional tools can’t. Topify is built specifically for this problem, providing continuous monitoring across the generative ecosystem.

Source Analysis is where most teams should start. It reverse-engineers the exact URLs and domains that AI models cite for your target keywords. If Perplexity is citing a competitor’s comparison table or a specific Reddit thread instead of your content, Source Analysis shows you exactly which sources are driving those recommendations. That’s the gap between “we’re invisible” and “here’s why, and here’s what to create next.”

Visibility Tracking monitors your brand across ChatGPT, Gemini, Perplexity, and other major platforms. It calculates a Visibility Score (0-100) based on the percentage of target prompts where your brand appears. Since the #1 ranked brand in an AI-generated list typically captures 62% of the share of voice, knowing your position isn’t optional.
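
The underlying arithmetic is simple enough to sketch. This is not Topify’s implementation, which the article does not detail beyond “percentage of target prompts where your brand appears”; it is a minimal illustration that assumes one collected answer per prompt and a case-insensitive whole-word match. The brand names and answers are invented.

```python
import re


def mentions_brand(answer, brand):
    """True if the brand appears as a whole word, ignoring case."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def visibility_score(answers_by_prompt, brand):
    """Percentage (0-100) of prompts whose answer mentions the brand."""
    if not answers_by_prompt:
        return 0.0
    hits = sum(mentions_brand(a, brand) for a in answers_by_prompt.values())
    return round(100 * hits / len(answers_by_prompt), 1)


# Invented sample answers for an imaginary brand called "Acme".
answers = {
    "best crm for small b2b teams": "Top picks: Acme, Globex, and Initech.",
    "crm with slack integration": "Consider Globex or Initech.",
    "affordable crm under $50": "acme is a popular budget option.",
    "new crm tools this year": "Acmeister launched recently.",
}
print(visibility_score(answers, "Acme"))
```

The whole-word match is a deliberate choice: without it, “Acmeister” would count as a mention of “Acme” and inflate the score.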

Competitor Monitoring auto-detects which brands are surfaced alongside yours and identifies the content gaps that allow them to hold top recommendation slots.

The practical starting point: Topify’s free GEO Score Checker audits your site’s AI bot access, structured data, and content signals with no signup required. It tells you whether your technical foundation is intact before you invest in broader optimization.

A B2B SaaS team used this approach to discover that despite ranking #1 on Google, they were invisible in AI answers. Perplexity and ChatGPT were exclusively citing a competitor’s comparison table and three niche forum threads. By creating structured “answer-first” content, engaging in the identified forums, and aligning their review profiles, they increased their AI Visibility Score by 35% within 45 days and saw a 14% lift in self-reported attribution from leads who found the brand through ChatGPT.

3 LLM Citation Mistakes That Keep Brands Invisible

The “Google-Only” Optimization Bias

Ranking #1 on Google doesn’t guarantee AI citations. The overlap between Google’s top 10 results and LLM-cited sources is only 17-32%. AI models prioritize “extractability” over “link equity.” A site with lower DA but higher fact density and better schema will frequently outperform a high-DA competitor in AI answers.

The fix: optimize for information gain. Provide data, benchmarks, and perspectives that don’t exist elsewhere on the web.

“Self-Centric” vs. “Answer-Centric” Content

Traditional “About Us” pages loaded with vague adjectives (“passionate,” “innovative,” “world-class”) are useless to an LLM trying to construct a factual answer. The model needs declarative, verifiable claims.

The fix: replace “We are experts in security” with “Our platform provides end-to-end AES-256 encryption and is SOC 2 Type II compliant.” Specifics get cited. Adjectives don’t.
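
A quick way to find the offending sentences is a simple word-list scan. The sketch below is a rough lint, not a measure of citability: the term list contains only the three adjectives quoted above, and you would extend it with your own. The sample copy is invented.

```python
import re

# The three terms named in this section; extend with your own list.
VAGUE_TERMS = {"passionate", "innovative", "world-class"}


def flag_vague_sentences(text):
    """Return (sentence, matched_terms) for sentences containing vague terms."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        # Keep hyphenated words such as "world-class" together.
        words = set(re.findall(r"[a-z]+(?:-[a-z]+)*", sentence.lower()))
        hits = sorted(words & VAGUE_TERMS)
        if hits:
            flagged.append((sentence, hits))
    return flagged


# Invented sample copy.
copy = (
    "We are a passionate, world-class team. "
    "Our platform provides end-to-end AES-256 encryption."
)
for sentence, hits in flag_vague_sentences(copy):
    print(hits, "->", sentence)
```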

Ignoring the Consensus Gap

If your website says one thing and your G2 reviews or Reddit mentions say something different, AI models experience a “trust break.” LLMs are designed to identify and neutralize bias by cross-referencing multiple sources. Contradictions lead to exclusion.

The fix: audit every external platform where your brand appears. Ensure consistent messaging about your positioning, pricing, and capabilities across all third-party profiles and review sites.

Conclusion

LLM citation is becoming the new unit of brand authority in AI search. The mechanics are different from traditional SEO, the platforms don’t all work the same way, and most existing tools can’t measure it.

Three things matter most: make your content structurally extractable (schema, clear headings, answer-first format), own the consensus across third-party platforms (85% of citations come from sources you don’t own), and measure what you can’t see with specialized AI visibility tracking. The brands building this discipline now are the ones AI will recommend next quarter.

FAQ

What is an LLM citation? An LLM citation is a reference, link, or mention of a brand or source within an answer generated by an AI model like ChatGPT or Perplexity. It serves as a machine-generated endorsement and a primary source of high-intent referral traffic.

How does Perplexity decide which sources to cite? Perplexity uses a real-time Retrieval-Augmented Generation (RAG) model. It prioritizes factual density, structured content, and extreme freshness (updates within 30 days) to provide verifiable answers with explicit URL citations.

Can you optimize content for ChatGPT citations? Yes. ChatGPT prioritizes topical depth, comprehensive how-to guides, and content that anticipates follow-up questions. It uses the Bing index and values authoritative, well-reasoned narratives over simple keyword matching.

How often do LLMs update their citation sources? It depends on the platform. RAG-based systems like Perplexity update their indices in near-real-time (daily or hourly via IndexNow). Foundational models like standard ChatGPT or Gemini rely more on training data but are increasingly grounded in real-time search.

What’s the difference between LLM citation and traditional backlinks? Traditional backlinks are hyperlinks used by search engines to measure site authority and rank pages. LLM citations are synthesis signals used by AI models to construct answers. When choosing what to cite, AI models weight brand mentions and factual extractability roughly 3:1 over traditional backlink metrics.