You spent years building domain authority. Your pages rank. Your backlinks are solid.

Then someone asks ChatGPT to recommend a tool in your category, and your brand isn’t in the answer. Not even close.

That’s the gap most brands still can’t see, and it’s getting more expensive to ignore.

The Great Decoupling: Why SEO Rankings No Longer Predict AI Visibility

Traditional search and generative AI operate on completely different logic.

Google is a librarian. It retrieves pages ranked by authority signals like backlinks and keyword relevance. LLMs are analysts. They ingest dozens of sources, compress them into a single answer, and cite only the passages that best ground their response. A brand with thousands of backlinks but thin, keyword-stuffed content can rank on Google and still be ignored by ChatGPT.

The data confirms this isn’t a niche problem. Only 12% of URLs cited by ChatGPT, Perplexity, and Copilot overlap with the organic top-10 results for the same query. In roughly 43% of cases, Google AI Overviews cite sources that don’t appear in top traditional results at all. Meanwhile, when an AI summary is present, organic click-through rates have dropped from 1.76% to 0.61%, a relative decline of roughly 65%.

The metric that matters in 2026 isn’t your ranking. It’s your citation frequency.

What an LLM Citation Tracking Tool Actually Does

An LLM citation tracking tool is an automated system that queries AI platforms like ChatGPT, Gemini, and Perplexity with hundreds or thousands of natural language prompts, then records how each platform answers those prompts about your brand and category.

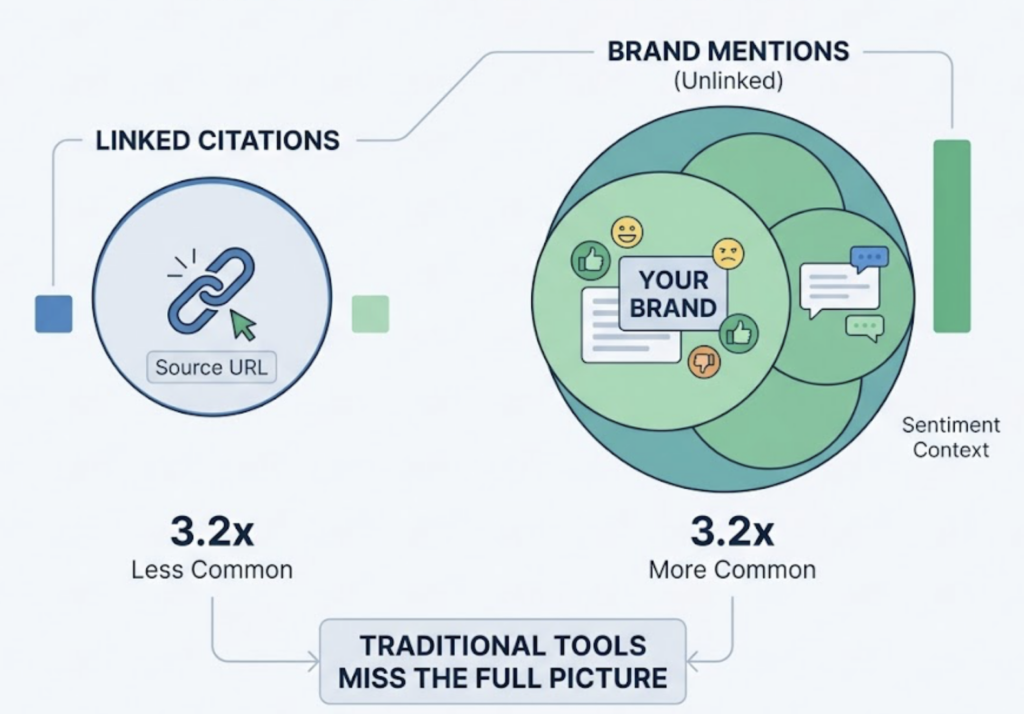

It captures three types of data from each AI response: linked citations (clickable URLs provided as sources), unlinked brand mentions (your name appears but no link is given), and the sentiment context around each mention. Research shows brands are mentioned 3.2x more often than they’re cited with links, which means most “brand monitoring” tools are measuring only a fraction of what’s actually happening.
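To make the first two data types concrete, here is a minimal sketch of how a single AI response could be split into linked citations and plain name mentions. It assumes the response is already available as plain text; the brand name, the domain, and the `extract_signals` helper are hypothetical, and sentiment scoring is left out. It illustrates the idea and is not how any particular tracker works.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Simple URL matcher; stops at whitespace and common closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


@dataclass
class ResponseSignals:
    linked_citations: list[str] = field(default_factory=list)  # URLs on the brand's domain
    name_mentions: int = 0  # brand name occurrences outside of URLs

    @property
    def unlinked_only(self) -> bool:
        """True when the brand is named but none of its URLs is cited."""
        return self.name_mentions > 0 and not self.linked_citations


def extract_signals(response_text: str, brand: str, brand_domain: str) -> ResponseSignals:
    """Split one AI response into linked citations and name mentions (hypothetical helper)."""
    signals = ResponseSignals()
    for raw_url in URL_PATTERN.findall(response_text):
        url = raw_url.rstrip(".,;:")  # drop trailing sentence punctuation
        host = (urlparse(url).hostname or "").lower()
        if host == brand_domain or host.endswith("." + brand_domain):
            signals.linked_citations.append(url)
    # Remove URLs first so the domain itself is not counted as a name mention.
    text_without_urls = URL_PATTERN.sub(" ", response_text)
    pattern = rf"\b{re.escape(brand)}\b"
    signals.name_mentions = len(re.findall(pattern, text_without_urls, flags=re.IGNORECASE))
    return signals


cited = extract_signals(
    "Acme is a solid option (https://www.acme.example/pricing). "
    "Many teams also like Acme for reporting. See https://other.example/review.",
    brand="Acme",
    brand_domain="acme.example",
)
mentioned = extract_signals(
    "Popular picks include Acme and Globex.", brand="Acme", brand_domain="acme.example"
)
```

In this sketch the first response yields one linked citation, while the second is an unlinked mention only, which is the case a link-only monitor would miss.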

The more important distinction is at the passage level. Legacy SEO tools evaluate a URL as a single unit. An LLM citation tracker recognizes that AI models retrieve dozens of pages but cite specific sentences from only a few. It identifies which segments of your content are being extracted and which are being discarded, even when your domain authority is higher than the competitors getting cited.

That passage-level insight is what makes the difference between knowing you have a visibility problem and knowing exactly why.

5 Things a Good LLM Citation Tracking System Should Tell You

Not all tools measure the same things. A professional-grade LLM citation tracking system needs to answer five specific questions.

1. Which domains AI cites most for your topic. AI citations follow a power law: roughly 30 domains capture 67% of citations within a specific topic on ChatGPT. You need to know whether the AI in your category relies on community forums, encyclopedic sources, or trade publications, since this shapes your entire content distribution strategy.

2. Whether your own URLs are in the grounding pool. There’s a critical difference between a crawlability issue and an authority issue. A tracker should show which specific pages are being retrieved by the AI, not just whether your brand was mentioned.

3. Competitive share of citation. If your brand appears in 40% of relevant responses but a rival appears in 75%, that gap is your target. Visibility is always relative to who else is in the answer.

4. Which content formats are getting cited. The data here is specific enough to change your editorial calendar. Comparative listicles capture 32.5% of all AI citations. Comprehensive guides with data tables achieve 67% citation rates. FAQ schema drives 3.2x higher AI Overview inclusion. If you’re writing narrative blog posts for a category where the AI only cites tables and statistics, you’re producing the wrong format.

5. Citation stability over time. Only 30% of brands stay visible from one AI answer to the next, and only 20% remain visible across five consecutive runs. LLM responses are probabilistic. A tracker that only shows a snapshot is missing the volatility that defines whether your visibility is durable or accidental.
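Questions 3 and 5 above reduce to simple arithmetic once each AI answer has been turned into a set of visible brands. The sketch below assumes that step is already done; the function names and the sample brands are hypothetical, and real tools may define these metrics differently.

```python
def share_of_citation(responses: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Fraction of responses in which each brand appears."""
    total = len(responses)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: sum(brand in r for r in responses) / total for brand in brands}


def run_to_run_retention(runs: list[set[str]], brand: str) -> float:
    """Of the runs where the brand is visible, the fraction where it is still visible in the next run."""
    pairs = [(current, nxt) for current, nxt in zip(runs, runs[1:]) if brand in current]
    if not pairs:
        return 0.0
    return sum(brand in nxt for _, nxt in pairs) / len(pairs)


def longest_visible_streak(runs: list[set[str]], brand: str) -> int:
    """Longest number of consecutive runs in which the brand appears."""
    best = current = 0
    for run in runs:
        current = current + 1 if brand in run else 0
        best = max(best, current)
    return best


# Four repeated runs of the same prompt, each reduced to the set of brands in the answer.
runs = [{"Acme", "Globex"}, {"Globex"}, {"Globex", "Acme"}, {"Globex"}]

shares = share_of_citation(runs, ["Acme", "Globex"])
acme_retention = run_to_run_retention(runs, "Acme")
globex_streak = longest_visible_streak(runs, "Globex")
```

Here Acme appears in half the runs but never in two runs in a row, which is the kind of accidental visibility a single snapshot would hide.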

Topify’s Source Analysis: LLM Citation Tracking at Scale

Most AI visibility tools were built on top of legacy SEO infrastructure. Topify was built from the ground up by LLM algorithm researchers with backgrounds from Stanford and peer-reviewed publications at NeurIPS, AAAI, and ICLR.

That research foundation matters in practice. The team’s work on how LLMs acquire domain-specific semantics through contextual exposure informs Topify’s approach to “Entity Confidence,” essentially measuring how well the model has learned to trust a brand as a reliable source. It’s the difference between tracking whether you were mentioned and understanding whether the model treats you as a reference standard.

Topify’s Source Analysis dashboard covers four capabilities that most LLM citation tracking platforms don’t combine in one place.

Cross-platform citation audit. Topify tracks citations across ChatGPT, Gemini, Perplexity, and Google AI Overviews simultaneously. This matters because content overlap between these platforms is only 10-15%. Ranking well on one platform doesn’t carry over to the others.

Dark query discovery. When an AI processes a complex prompt, it internally decomposes it into sub-queries, a process called “query fan-out.” These hidden sub-queries are where most citation gaps originate. Topify surfaces the exact prompts where competitors are recommended while your brand is absent, including sub-queries that traditional tools can’t see.

URL-level provenance tracking. The platform identifies which specific passages from your site are being used as grounding material, down to the sentence level. Content teams can see exactly which sentences are being extracted by the model and which pages are being retrieved but not cited.

GEO strategy insights. Topify goes beyond monitoring. It analyzes the structural characteristics of cited competitor content and recommends specific changes, like adding an answer capsule or restructuring a section as a table, to increase citation probability. The platform’s GEO execution layer lets teams deploy those changes with one click, no manual workflows required.
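Two of the ideas above can be sketched in a few lines: measuring how much two platforms overlap in what they cite, and listing the prompts where competitors appear while your brand does not. This is an illustration under assumptions, not Topify’s implementation. It assumes the sub-queries and the brands found in each answer have already been collected, and every name and URL below is made up.

```python
def jaccard_overlap(a: set[str], b: set[str]) -> float:
    """Shared items divided by all distinct items across both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def citation_gaps(results: dict[str, set[str]], brand: str, competitors: set[str]) -> list[str]:
    """Prompts where at least one competitor is present and the brand is absent."""
    return sorted(
        prompt
        for prompt, brands in results.items()
        if brand not in brands and brands & competitors
    )


# Hypothetical cited URLs for the same prompt on two platforms.
chatgpt_urls = {"a.example/1", "b.example/2", "c.example/3", "d.example/4"}
perplexity_urls = {"a.example/1", "e.example/5", "f.example/6", "g.example/7"}
overlap = jaccard_overlap(chatgpt_urls, perplexity_urls)  # 1 shared URL out of 7 distinct

# Hypothetical sub-queries mapped to the brands that appeared in each answer.
results = {
    "best crm for startups": {"Globex", "Initech"},
    "crm with slack integration": {"Acme", "Globex"},
    "crm pricing under $50": {"Initech"},
    "what is a crm": set(),
}
gaps = citation_gaps(results, brand="Acme", competitors={"Globex", "Initech"})
```

The gap list excludes prompts where nobody is recommended, since an empty answer is not a competitive loss.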

Topify starts at $99/month on the Basic plan, which supports 100 prompts and 9,000 AI answer analyses across four projects. That pricing is aimed at teams just starting to build an AI visibility function, not only at enterprise budgets.

3 Mistakes Brands Make When They Start Tracking LLM Citations

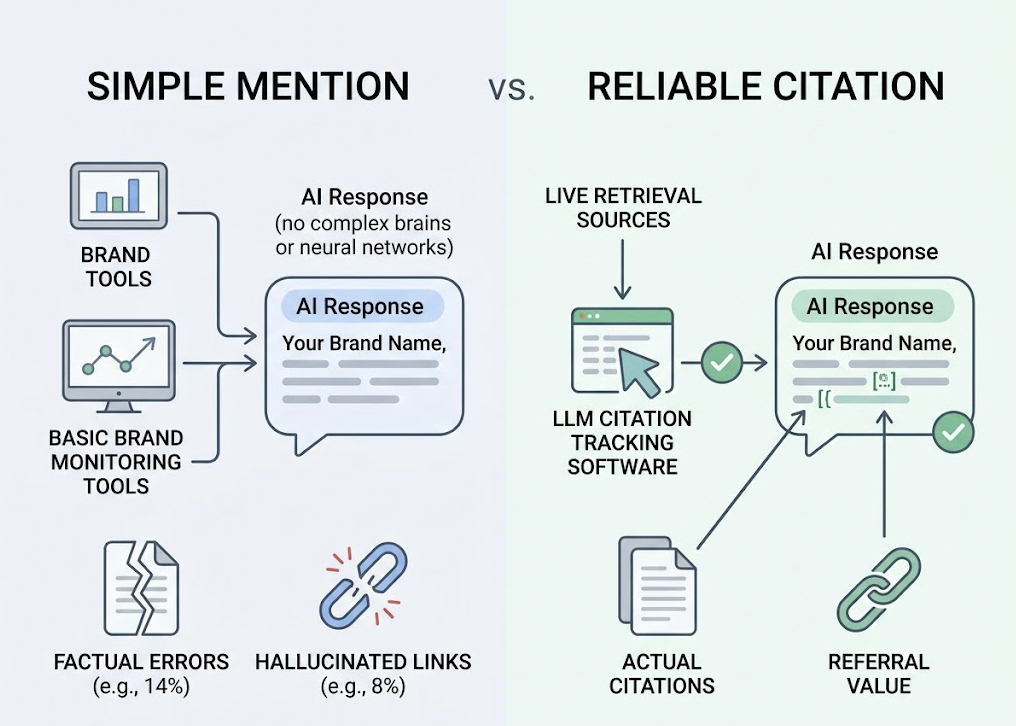

Tracking mentions instead of citations. Many teams use basic brand monitoring tools, see their name in a ChatGPT response, and conclude they’re visible. A mention based on the model’s parametric training data is not the same as a citation from live retrieval. 14% of AI responses about brands contain factual errors, and 8% of links are hallucinated. Without dedicated LLM citation tracking software, you can’t separate actual citations from hallucinated ones, or from mentions that carry no referral value at all.

Single-platform monitoring. ChatGPT holds roughly 79-81% of the chatbot market, so many teams optimize for it exclusively. The problem is that ChatGPT favors Wikipedia-style authoritative depth, while Perplexity favors Reddit-style community consensus and freshness. An LLM citation tracking solution that covers at least four platforms simultaneously gives brands a 2.8x higher likelihood of citation across the ecosystem. Optimizing for one platform while ignoring the others is a structurally incomplete strategy.

Siloing AI data from SEO data. Teams sometimes treat LLM citation analytics and SEO metrics as separate universes, which leads to decisions like removing a high-ranking page because it isn’t getting citations, or ignoring a low-traffic page that happens to be a primary grounding source for Perplexity. The right framing is that traditional SEO gets you indexed; GEO makes you extractable. Success in 2026 requires optimizing for both surfaces at once.

From Citation Gap to Content Action: A 3-Step Framework

Tracking data is only useful if it changes what you publish. Here’s how to move from analysis to execution.

Step 1: Map your dark queries. Identify the hidden sub-queries where competitors are winning and you’re absent. These are often high-intent questions the AI generates internally while processing a broader prompt. If your content doesn’t cover them as standalone topics, you’ll be excluded from the final response even when the surface-level query is directly about your category.

Step 2: Restructure for extractability. 44% of AI citations are pulled from the first third of a page. Structure your content with direct answer capsules at the top of each section, 40-60 words that give the AI a clean, factual unit to extract. Adding statistics increases AI visibility by up to 22%, and content with three or more data points per passage has 2.5x higher citation rates. Add FAQ schema. It maps directly to how AI prompts are structured and drives significantly higher inclusion in AI Overviews.

Step 3: Build your entity footprint off-site. 85% of brand mentions in AI answers come from third-party sources, like Wikipedia, Reddit, G2, and industry publications. Getting cited on platforms the AI already trusts is one of the fastest ways to appear more often in AI answers. Active participation in relevant subreddits, for instance, can drive 4-7x citation increases, since forums are the primary citation source for Perplexity at 46.7% and a top-3 source for Google AI Overviews at 21%.

Topify’s GEO execution layer connects these three steps. It identifies the gaps, recommends structural changes, and lets you deploy them without managing a separate workflow.
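For teams that want to spot-check Step 2 by hand, the two structural pieces can be sketched in plain Python: a word-count check for the 40-60 word answer capsule, and a generator for FAQ markup using schema.org’s `FAQPage` type. The helper names are hypothetical, and treating the first paragraph of a section as its capsule is a simplifying assumption.

```python
import json


def capsule_word_count(section_text: str) -> int:
    """Word count of the first paragraph, treated here as the answer capsule."""
    first_paragraph = section_text.strip().split("\n\n", 1)[0]
    return len(first_paragraph.split())


def is_answer_capsule(section_text: str, min_words: int = 40, max_words: int = 60) -> bool:
    """True when the opening paragraph falls inside the 40-60 word range."""
    return min_words <= capsule_word_count(section_text) <= max_words


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# A 45-word opening paragraph followed by supporting detail.
section = " ".join(["word"] * 45) + "\n\nMore detail follows here."
capsule_ok = is_answer_capsule(section)

markup = faq_jsonld([
    ("What is LLM citation tracking?",
     "Querying AI platforms and detecting when they cite your brand or content."),
])
```

The resulting JSON string would typically be placed in a `<script type="application/ld+json">` tag on the page that contains the matching visible questions and answers.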

Conclusion

Google rankings measure whether you’re retrievable. LLM citations measure whether you’re trusted.

Gartner projects traditional search volume will decline 25% by the end of 2026 as users shift to AI search. McKinsey estimates $750 billion in consumer spending will be directly influenced by AI search by 2028. In that environment, being invisible in an AI answer isn’t a visibility problem. It’s a revenue problem.

An LLM citation tracking tool is the starting point for fixing it. Topify combines citation monitoring, competitive benchmarking, and GEO execution into a single platform, built on research that understands why AI models trust certain sources over others. If your brand isn’t showing up in AI answers today, that’s the data you need to start with.

FAQ

What is LLM citation tracking? It’s the process of using automated tools to query multiple AI platforms like ChatGPT, Perplexity, and Gemini, then detecting when those platforms cite your brand or content as a source of truth for specific queries. It measures “Share of Model” rather than search rankings.

How is LLM citation tracking different from backlink monitoring? Backlinks are hyperlinks between websites that Google uses as ranking signals. LLM citations are passage-level attributions within a synthesized AI response, indicating which content grounded the AI’s logic. A page can have zero backlinks and still be heavily cited by Perplexity.

Which AI platforms should I track citations on? At minimum: ChatGPT, Perplexity, and Google AI Overviews. There’s only a 10-15% overlap in what these platforms cite, and each has a distinct retrieval preference. ChatGPT favors authoritative depth; Perplexity favors freshness and community sources.

How often should I run citation tracking analysis? Weekly is the recommended cadence. 40-60% of cited domains can change monthly for identical prompts as models update their indexes. Monthly reporting misses the volatility that matters for content decisions.

Can a firm help brands appear more often in AI answers? Yes. Specialized GEO platforms like Topify identify citation gaps, surface hidden dark queries, and restructure content to achieve up to 40% higher visibility in model responses. The combination of tracking data and one-click execution is what separates passive monitoring from active optimization.