Your domain authority is 70. Your keyword rankings are solid. Your team’s SEO dashboard shows green across the board. Then someone asks ChatGPT, “What’s the best tool in your category?” and your brand doesn’t show up once.

That disconnect isn’t a fluke. Organic CTR on queries that trigger AI Overviews has dropped roughly 61%, and nearly 64% of all Google searches in the U.S. now end without a single click to any website. The metrics that defined success for two decades don’t measure what matters in a world where AI provides the answer directly.

Clicks Measured the Old Search. AI Visibility Needs Its Own Language.

Impressions and clicks were designed for a directory-style search engine. You optimized a URL, the search engine ranked it, and users clicked through to your site. The entire model assumed that “being found” meant “being listed.”

AI search doesn’t work that way. Generative engines like ChatGPT, Perplexity, and Gemini don’t serve a list of links. They synthesize a single answer, pulling from dozens of sources, and present a curated response. Your brand is either in that answer or it isn’t.

That’s a fundamentally different game.

Traditional keyword queries average 3 to 4 words. Conversational prompts sent to AI engines today average between 23 and 60 words, reflecting deeper, more specific intent. And a 2025 McKinsey study found that half of all consumers now intentionally use AI-powered search engines for buying decisions. The front door to discovery has moved, and legacy metrics can’t tell you whether your brand made it through.

Metric 1: AI Mention Rate, the New Baseline for Visibility

AI Mention Rate measures how often your brand appears in synthesized responses for a set of category-relevant prompts. It’s the most fundamental AI search visibility metric because it answers the simplest question: are you in the room?

Unlike impressions, which count how many times a link was displayed, a mention means the model actively selected your brand as relevant enough to include in its answer.

The average brand’s AI visibility rate sits around 0.3%. Market leaders in 2026 aim for 60% to 80% inclusion across their core prompts. The gap between those two numbers is where competitive advantage lives.

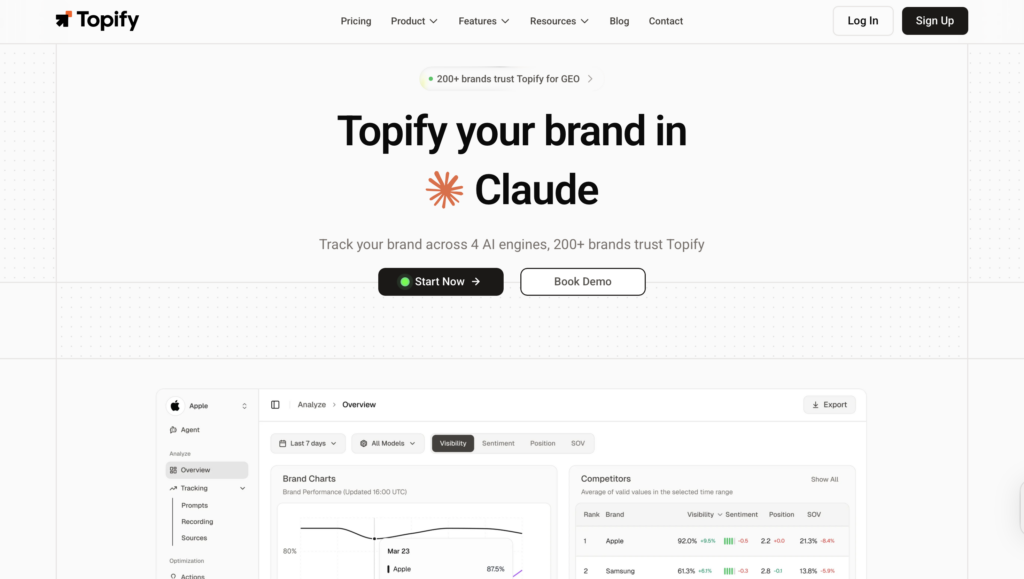

Calculating it is straightforward: run a matrix of dozens or hundreds of conversational prompts across multiple AI platforms and record the percentage of times your brand appears. Topify automates this process across ChatGPT, Perplexity, Gemini, and other major AI engines, tracking mention rate changes over time so you can measure the impact of content and PR efforts directly.
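As a rough illustration of that calculation, here is a minimal Python sketch. It assumes you have already collected the raw answer text for each prompt; the function name `mention_rate`, the brand names, and the sample answers are all hypothetical, and a simple whole-word match stands in for whatever matching a production tool would use.

```python
import re
from typing import Iterable


def mention_rate(responses: Iterable[str], brand: str) -> float:
    """Return the share of responses (0.0 to 1.0) that mention `brand`."""
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeville".
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    responses = list(responses)
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


# Hypothetical answers recorded for four prompts on one platform.
recorded = [
    "For startups, consider Acme CRM, Beta Desk, and Gamma Suite.",
    "Beta Desk is a popular choice for small teams.",
    "Many teams like acme crm for its Slack integration.",
    "Gamma Suite and Delta Hub both offer automated follow-ups.",
]

print(f"{mention_rate(recorded, 'Acme CRM'):.0%}")  # 2 of 4 responses mention the brand
```

Running the same function per platform and per week gives you the trend line; the prompt set should stay fixed between runs so the percentages remain comparable.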

Metric 2: Sentiment Score, Because Being Mentioned Isn’t Always Good

AI models don’t just mention brands. They characterize them. One brand gets described as “innovative” and “user-friendly.” Another gets labeled “legacy” or “expensive.” Both are technically “visible,” but only one is being recommended.

Sentiment scoring uses NLP to analyze the polarity of how AI describes your brand, typically on a scale from negative to positive. On a 0-100 scale, a score below 40 generally indicates a structural reputation issue that content optimization alone won’t fix.

Here’s the thing: AI sentiment is often shaped more by third-party sources like Reddit threads, industry reviews, and news coverage than by your own website. Topify’s Sentiment Analysis tracks this across platforms with a 0-100 scoring system, flagging shifts before they compound.

If your mention rate is climbing but sentiment is flat or declining, you’ve got a problem that raw visibility numbers won’t show you.
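To make the 0-100 scale concrete, here is a small Python sketch. It assumes some NLP model has already produced a polarity between -1.0 (negative) and 1.0 (positive) for each AI description of your brand; the linear mapping, the function names, and the sample values are illustrative assumptions, not Topify’s actual scoring method.

```python
from statistics import mean
from typing import Sequence

REPUTATION_THRESHOLD = 40  # from the article: below this suggests a structural issue


def to_score(polarity: float) -> float:
    """Map a polarity in [-1.0, 1.0] onto a 0-100 scale (assumed linear)."""
    clamped = max(-1.0, min(1.0, polarity))
    return (clamped + 1.0) * 50.0


def sentiment_score(polarities: Sequence[float]) -> float:
    """Average 0-100 score across all AI descriptions of the brand."""
    if not polarities:
        raise ValueError("need at least one polarity value")
    return mean(to_score(p) for p in polarities)


# Hypothetical polarities, one per AI response that described the brand.
polarities = [-0.5, -0.25, 0.0, -0.75]
score = sentiment_score(polarities)
print(score, score < REPUTATION_THRESHOLD)  # score and whether it falls below 40
```

Tracking this number next to mention rate is what surfaces the “visible but not recommended” case described above.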

Metric 3: Position in AI Recommendations

When ChatGPT lists five products in a recommendation, the first one mentioned captures the largest share of user trust. This is the “Position One” of generative search.

But tracking position in AI is harder than tracking it in traditional SERPs. Generative responses aren’t fixed. They shift based on prompt phrasing, model updates, and the sources available at query time. A brand that appears first for “best CRM for startups” might appear fourth for “top CRM tools 2026.”

Topify’s Position Tracking uses a weighted index to account for this variability. A primary recommendation in the opening paragraph scores higher than a secondary mention buried under “other options.” Consistently landing at the bottom of recommendation lists signals what is sometimes called a “Confidence Deficit”: the model knows your brand but doesn’t trust it enough to lead with it.
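One simple way to picture a weighted position index is reciprocal-rank weighting: first place counts as 1, second as 1/2, fourth as 1/4, and an absent brand counts as 0. The Python sketch below uses that scheme purely as an assumption for illustration. The article does not publish Topify’s formula, and `position_index` is a hypothetical name.

```python
from typing import Optional, Sequence


def position_index(positions: Sequence[Optional[int]]) -> float:
    """Average reciprocal-rank weight across prompts, scaled to 0-100.

    Each entry is the brand's 1-based position in one response,
    or None when the brand was not mentioned at all.
    """
    if not positions:
        return 0.0
    weights = [0.0 if p is None else 1.0 / p for p in positions]
    return 100.0 * sum(weights) / len(weights)


# Hypothetical positions for four phrasings of the same question.
observed = [1, 4, None, 2]
print(position_index(observed))  # (1 + 0.25 + 0 + 0.5) / 4 * 100
```

Averaging over several phrasings of the same question is the point: a single prompt tells you little when the brand that leads one answer lands fourth in the next.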

Metric 4: AI Search Volume, the Prompts No Keyword Tool Shows You

Traditional keyword volume measures what people type into Google. AI Search Volume measures the conversational questions people are asking inside ChatGPT, Perplexity, and similar platforms.

These are different queries entirely. Instead of “best CRM 2026,” an AI prompt might be: “I run a 50-person B2B startup and need a CRM that integrates with Slack and handles automated follow-ups. What should I look at?”

That level of specificity reveals buying intent that keyword tools miss completely. Topify’s AI Volume Analytics surfaces these high-value prompts so brands can prioritize content that answers the questions AI is actually being asked, not keywords that belong to the old search paradigm.

Metric 5: Source Citation Tracking

AI engines don’t generate answers from thin air. They pull from specific URLs and domains to construct their responses. Source Citation Tracking tells you exactly which sources the AI is using as its “ground truth” for your category.

This matters for two reasons. First, citations from third-party domains like trade publications, Reddit, and review platforms carry roughly 6.5 times more weight in building AI authority than self-published content. Second, a Princeton study found that citing authoritative sources within your own content can boost AI visibility by up to 115% for lower-ranked pages.

Topify’s Source Analysis tracks which domains AI platforms cite when answering questions in your category. If a competitor’s blog or a third-party review site is dominating citations, that’s a direct visibility loss you can act on.

Metric 6: Conversion Visibility Rate

If clicks are declining, how do you justify investing in AI search visibility? Conversion Visibility Rate is the metric that connects AI mentions to revenue.

AI-referred visitors behave differently than organic search visitors. The AI has already done the preliminary research, narrowed the options, and presented your brand as a recommendation. Users who click through are what the research calls “Decision-Ready.” Data shows AI visitors convert at rates 1.2 to 5 times higher than traditional organic search visitors. In B2B SaaS, conversion rates from AI traffic (6.69%) are now virtually identical to branded search traffic (6.71%), which has traditionally been the highest-converting channel.

Topify’s CVR metric weights mentions based on prompt intent. A mention in a “best of” list for a high-intent prompt is worth significantly more than a passing reference in an informational summary.
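Intent weighting can be sketched in a few lines of Python. The intent labels, the weights, and the function name `weighted_visibility` below are all hypothetical, chosen only to show the mechanics; they are not Topify’s actual categories or values.

```python
from typing import Dict, Sequence, Tuple

# Assumed weights: higher-intent prompts count for more.
INTENT_WEIGHTS: Dict[str, float] = {
    "transactional": 1.0,   # e.g. "best CRM for a 50-person startup"
    "comparison": 0.5,      # e.g. "Acme vs Beta for sales teams"
    "informational": 0.25,  # e.g. "what is a CRM"
}


def weighted_visibility(results: Sequence[Tuple[str, bool]]) -> float:
    """Share of total intent weight captured by prompts that mention the brand.

    Each result is (intent_label, brand_was_mentioned).
    """
    total = sum(INTENT_WEIGHTS[intent] for intent, _ in results)
    if total == 0:
        return 0.0
    earned = sum(INTENT_WEIGHTS[intent] for intent, hit in results if hit)
    return earned / total


# Hypothetical outcomes for four prompts.
results = [
    ("transactional", True),
    ("comparison", False),
    ("informational", True),
    ("transactional", False),
]
print(round(weighted_visibility(results), 3))
```

With these sample numbers, the brand appears in half of the prompts but captures less than half of the weighted total, because one of its two mentions sits in a low-intent prompt.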

Metric 7: Competitor Share of Voice in AI

AI visibility is closer to a zero-sum game than traditional search. A Google SERP shows ten or more links. An AI response typically names three to five brands. If a competitor takes one of those slots, it often means you don’t.

Competitor Share of Voice measures the percentage of mentions your rivals secure across the same set of prompts. It reveals which brands are winning on which platforms and which queries are being dominated by companies you might not even consider competitors.
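A minimal Python sketch of that calculation follows. It assumes you have already extracted the list of brands named in each AI response; the brand names and the function name `share_of_voice` are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List


def share_of_voice(mentions_per_response: Iterable[List[str]]) -> Dict[str, float]:
    """Return each brand's share of all brand mentions (shares sum to 1.0)."""
    counts: Counter = Counter()
    for brands in mentions_per_response:
        # Count a brand once per response, even if it is named repeatedly.
        counts.update(set(brands))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


# Hypothetical brands named in four responses to the same prompt set.
extracted = [
    ["Acme", "Beta"],
    ["Beta", "Gamma"],
    ["Beta"],
    ["Acme", "Beta", "Gamma"],
]
sov = share_of_voice(extracted)
print(sorted(sov.items()))
```

Computing this separately for each platform, rather than pooling everything, keeps platform-specific winners from being averaged away.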

Only 11% of websites are cited by both ChatGPT and Perplexity. That means your competitive picture looks different on every AI platform. Topify’s Competitor Monitoring automatically detects rivals across platforms and tracks their visibility, sentiment, and position relative to yours.

How to Build an AI Search Visibility Dashboard Without Drowning in Data

Tracking seven metrics across multiple AI platforms can feel overwhelming. The most effective approach is to layer your implementation.

Phase 1 (Weeks 1-2): Start with Mention Rate, Sentiment, and Position across ChatGPT and Google AI Overviews. This gives you a baseline for awareness and reputation on the two highest-traffic platforms.

Phase 2 (Month 1): Expand coverage to Perplexity and Gemini. Add Source Citation tracking to identify which third-party platforms are feeding AI answers in your category.

Phase 3 (Month 2+): Integrate AI Search Volume and CVR. This is where you tie AI search visibility directly to your sales pipeline.

Topify consolidates all seven metrics into a single dashboard, making it possible to spot a drop in ChatGPT mentions and trace it back to a specific source change, all within the same view. For teams that have been reporting AI visibility through spreadsheets and manual prompt checks, the difference in speed and accuracy tends to be significant.

3 Measurement Mistakes That Make Your AI Data Misleading

Counting mentions without checking sentiment. A brand that appears in AI answers as “outdated” or “overpriced” can be worse off than a brand that doesn’t appear at all. A negative characterization works against you in exactly the “best” and “top” recommendation prompts where inclusion matters most.

Tracking one AI platform and assuming it represents all of them. ChatGPT accounts for roughly 82% of AI referral traffic, but its citation patterns differ significantly from Gemini, Claude, and Perplexity. A strategy optimized for one platform misses more than half the discovery journey.

Reporting AI metrics on your SEO dashboard. Domain Authority explains less than 4% of the variance in AI citations. Backlink counts and average keyword positions have almost no correlation with whether AI recommends your brand. AI visibility needs its own dashboard, its own language, and its own reporting cadence.

Conclusion

The gap between “ranking well” and “being recommended by AI” is only getting wider. Impressions and clicks aren’t wrong. They’re just measuring a different game.

AI search visibility requires its own metrics: mention rate, sentiment, position, volume, citations, conversion potential, and competitive share of voice. Start with the first three. Build from there. The brands that track what AI actually says about them, not just whether Google lists their URL, are the ones that’ll own the next generation of discovery.

FAQ

Q: What is AI search visibility?

A: AI search visibility measures how often and how favorably your brand appears in AI-generated answers from platforms like ChatGPT, Perplexity, and Gemini. It’s different from traditional search rankings because it tracks whether AI models actively include and recommend your brand, not just whether your URL is indexed.

Q: How is AI search visibility different from traditional SEO?

A: Traditional SEO focuses on ranking URLs in a list of search results. AI search visibility focuses on whether your brand is mentioned, how it’s described, and where it’s positioned inside a synthesized answer. The metrics, optimization strategies, and measurement tools are fundamentally different.

Q: What tools can track AI search visibility metrics?

A: Topify is one of the leading platforms for tracking AI search visibility across ChatGPT, Perplexity, Gemini, and other AI engines. It covers all seven core metrics: mention rate, sentiment, position, volume, source citations, CVR, and competitor share of voice.

Q: How often should I check AI search visibility?

A: AI models update their citation patterns and source preferences frequently. Weekly monitoring is a good baseline for mention rate and sentiment. Source citation analysis and competitive share of voice benefit from monthly deep reviews with trend analysis.