Your team spent months building domain authority, publishing content, and climbing Google’s organic rankings. Then a potential customer asked ChatGPT, “What’s the best tool for [your category]?” and got a list of five recommendations. Your brand wasn’t on it.

The gap between traditional SEO performance and AI search visibility is widening fast. Organic click-through rates drop by roughly 61% when AI Overviews appear on the page, and zero-click searches now account for 60% of all Google queries. The problem isn’t that your SEO failed. It’s that nobody built an AI answer monitoring tracker to measure what AI actually says about your brand.

Most Brands Can’t Answer a Simple Question: “Does AI Know We Exist?”

An AI answer monitoring tracker is a specialized system that monitors how generative AI platforms mention, rank, and describe your brand across conversational responses. It’s not a traditional rank tracker. Traditional SEO monitoring identifies where a specific URL sits within a vertical list of results. An AI answer monitoring tracker evaluates whether your brand is included in the narrative an AI constructs when a user asks a question.

That distinction matters more than it sounds.

Traditional search queries average about four words. AI prompts average 23 words, packed with intent qualifiers like budget constraints, industry context, and persona-driven goals. An effective AI answer monitoring tracker uses conversational prompts that replicate how real users interact with ChatGPT, Gemini, Perplexity, and other platforms.

Here’s how the tracking mechanism works in practice. Advanced systems run automated queries through AI APIs or browser-level simulations at regular intervals. To eliminate bias from personalized user histories, tools like Topify use “stateless” requests that measure what a generic, unprejudiced user would see. Once the AI generates a response, natural language parsing extracts brand mentions, calculates position weighting, and identifies citation URLs.
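The parsing stage can be sketched in a few lines of Python. This is a minimal illustration, not how Topify or any other vendor implements it. It makes three assumptions: the response text has already been collected, brand names are matched as whole words without regard to case, and a brand's position is the order of its first appearance. The `parse_answer` function and the `ParsedAnswer` type are hypothetical names.

```python
import re
from dataclasses import dataclass, field

@dataclass
class ParsedAnswer:
    """Structured view of one AI response (hypothetical schema for illustration)."""
    mentions: list[str] = field(default_factory=list)   # brands, ordered by first appearance
    citations: list[str] = field(default_factory=list)  # URLs found inline in the text

# Stop a URL at whitespace or a closing bracket/parenthesis.
URL_PATTERN = re.compile(r"https?://[^\s)\]]+")

def parse_answer(text: str, brands: list[str]) -> ParsedAnswer:
    """Extract brand mentions (ordered by first occurrence) and inline citation URLs."""
    lowered = text.lower()
    first_seen = {}
    for brand in brands:
        # Whole-word, case-insensitive match so "Acme" does not match "Acmeville".
        match = re.search(rf"\b{re.escape(brand.lower())}\b", lowered)
        if match:
            first_seen[brand] = match.start()
    ordered = sorted(first_seen, key=first_seen.get)
    return ParsedAnswer(mentions=ordered, citations=URL_PATTERN.findall(text))

answer = ("For small teams, Beta CRM is a solid pick (https://example.com/review). "
          "Acme is pricier but more flexible.")
parsed = parse_answer(answer, ["Acme", "Beta CRM", "Gamma"])
print(parsed.mentions)   # ['Beta CRM', 'Acme']
print(parsed.citations)  # ['https://example.com/review']
```

Production parsers also have to handle brand aliases, misspellings, and citations rendered as footnotes instead of inline URLs. Plain regular expressions miss all three.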

One technical detail worth flagging: API-based tracking and browser-level rendering produce different results. API endpoints bypass real-world interface elements like browsing plugins, memory context, and visual citations. Research suggests API-based tracking only matches manual search data about 60% of the time. Browser-level simulation remains the more accurate approach, especially for Google AI Overviews, which often require an authenticated session to render.

| Dimension | Traditional Rank Tracking | AI Answer Monitoring Tracker |
|---|---|---|
| Primary Input | Short-tail keyword strings | Conversational prompts (23+ words) |
| Logic Basis | Vertical list position (1-100) | Semantic inclusion and position weighting |
| Output Type | URL position on a SERP | Narrative text, sentiment, and citations |
| Data Methodology | HTML DOM scraping | LLM probing and API metadata analysis |
| Determinism | High (mostly consistent) | Low (probabilistic, non-deterministic) |

Why an AI Answer Monitoring Tracker Is Now a Revenue Problem, Not Just an SEO Problem

The scale of AI search adoption makes this impossible to ignore. ChatGPT reached 900 million weekly active users as of early 2026. Google’s AI Overviews expanded to 2 billion monthly users across 200 countries. These aren’t early adopter numbers. This is mainstream behavior.

For informational queries, AI Overviews trigger 88% of the time. When they do, organic CTR drops from a traditional 15% to roughly 8%. Even when AI Overviews aren’t present, users who’ve been retrained to expect instant answers show a 41% year-over-year decline in clicking traditional results.

That’s the traffic side. The conversion side tells a different story.

Users who click through from an AI-generated citation convert at rates between 7.05% and 11.4%, up to roughly double the 5.3% to 5.8% seen in traditional organic search. In B2B SaaS, AI-referred traffic converts at up to 6x higher rates than organic search. Researchers attribute this to the “pre-vetting effect”: by the time a user clicks a citation in a conversational response, the AI has already validated the brand’s relevance to their specific problem.

So every missed AI mention isn’t just a visibility gap. It’s a revenue leak from your highest-converting channel.

There’s also the hallucination risk. Hallucination rates across major models sit between 15% and 52%. Without an AI answer monitoring tracker, brands can’t detect when an AI fabricates product features, promotes discontinued items, or misattributes a competitor’s flaws to their brand. That kind of semantic drift compounds over time if nobody’s watching.
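One simple way to catch some of this drift is to check each collected answer against a hand-maintained brand fact sheet. The sketch below assumes such a fact sheet exists, with one list of discontinued product names and one list of features the product does not have. It uses plain phrase matching, which is a crude substitute for real fact verification. The function name `flag_risky_claims` is hypothetical.

```python
import re

def flag_risky_claims(answer: str, discontinued: list[str],
                      unsupported_features: list[str]) -> list[str]:
    """Return readable flags for phrases that contradict a brand fact sheet.

    `discontinued` and `unsupported_features` form a hypothetical, manually
    maintained fact sheet. Matching is case-insensitive phrase matching only.
    """
    flags = []
    text = answer.lower()
    for product in discontinued:
        # Whole-word match so "Acme Classic" is not found inside longer names.
        if re.search(rf"\b{re.escape(product.lower())}\b", text):
            flags.append(f"mentions discontinued product: {product}")
    for feature in unsupported_features:
        if feature.lower() in text:
            flags.append(f"claims unsupported feature: {feature}")
    return flags

print(flag_risky_claims("Acme Classic includes offline mode and SSO.",
                        discontinued=["Acme Classic"],
                        unsupported_features=["offline mode"]))
```

Because the matching ignores negation, a sentence like "Acme does not offer offline mode" would also be flagged. The output is best treated as a review queue for a human, not as a verdict.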

5 Metrics Your AI Answer Monitoring Tracker Should Actually Measure

Not all AI visibility data is created equal. Tracking raw mention counts without context is a vanity metric exercise. The AI SEO visibility optimization companies leading this space have converged on a multi-dimensional measurement framework. Here are the five metrics that matter most.

Visibility Score. This measures the percentage of relevant prompts where your brand is explicitly mentioned in the AI’s response. The average brand visibility across 1,000 queries is often as low as 0.3%. Industry leaders maintain scores of 12% or higher. The gap between those two numbers represents the opportunity most brands are missing.
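The visibility score is simple arithmetic once each response has been reduced to a list of mentioned brands. The sketch below assumes that input shape, one list of brand names per prompt response. The function name is illustrative. In the example, a brand named in 3 out of 1,000 responses lands at the 0.3% figure quoted above.

```python
def visibility_score(mentions_per_response: list[list[str]], brand: str) -> float:
    """Percentage of responses that explicitly mention `brand`."""
    if not mentions_per_response:
        return 0.0
    hits = sum(1 for mentions in mentions_per_response if brand in mentions)
    return 100.0 * hits / len(mentions_per_response)

# 1,000 responses: the brand appears in 3 of them.
responses = [["Acme", "Beta"]] * 3 + [["Beta", "Gamma"]] * 997
print(f"{visibility_score(responses, 'Acme'):.1f}%")  # 0.3%
```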

Position Weighting. Order matters in AI responses. The first brand mentioned in a recommendation earns roughly 33% citation probability. The tenth drops to about 13%. An effective tracker weights these positions so you know whether you’re the lead recommendation or buried in a footnote.
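A position weight can be derived from the two figures above, roughly 33% at position 1 and 13% at position 10. The curve between those points is not given here, so this sketch assumes a straight line between them and holds the 10th-position value for anything lower. Swap in a measured decay curve if you have one. Both function names are hypothetical.

```python
from typing import Optional

FIRST_WEIGHT = 0.33  # ~33% citation probability at position 1 (figure from the text)
TENTH_WEIGHT = 0.13  # ~13% at position 10 (figure from the text)

def position_weight(position: int) -> float:
    """Weight for a 1-based mention position.

    Assumes linear decay between the two reported anchor points and keeps
    the 10th-position value for positions 10 and beyond.
    """
    if position < 1:
        raise ValueError("position is 1-based")
    if position >= 10:
        return TENTH_WEIGHT
    step = (FIRST_WEIGHT - TENTH_WEIGHT) / 9
    return FIRST_WEIGHT - step * (position - 1)

def weighted_visibility(positions: list[Optional[int]]) -> float:
    """Average weight across responses; None means the brand was absent (weight 0)."""
    if not positions:
        return 0.0
    return sum(position_weight(p) if p is not None else 0.0 for p in positions) / len(positions)

# Brand led one answer and was missing from the other.
print(round(weighted_visibility([1, None]), 3))  # 0.165
```

Unlike the raw visibility score, this rewards being the lead recommendation. Two brands with identical mention counts can end up with very different weighted scores.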

Sentiment Scoring. Not every mention is a win. AI might describe your product as a “budget alternative with known limitations” instead of a category leader. Sentiment analysis on a -100 to +100 scale tells you whether AI frames your brand positively or as a cautionary tale.
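To show how a -100 to +100 score can be built, here is a deliberately small lexicon-based sketch. Commercial trackers typically use a trained sentiment model instead. The phrase lists below are hypothetical, and matching is plain substring counting. Only sentences that name the brand contribute, so a competitor's praise does not leak into your score.

```python
import re

# Hypothetical cue phrases; a production tool would use a trained classifier.
POSITIVE = ["leader", "best", "reliable", "recommended", "powerful"]
NEGATIVE = ["limitations", "budget alternative", "outdated", "buggy", "expensive"]

def sentiment_score(answer: str, brand: str) -> int:
    """Score how `answer` frames `brand` on a -100..+100 scale.

    Only sentences containing the brand name are scored. The result is
    100 * (positive - negative) / (positive + negative), or 0 when no cue matches.
    Cues are counted as substrings, so "best" also matches "bestseller".
    """
    sentences = re.split(r"(?<=[.!?])\s+", answer)
    relevant = [s.lower() for s in sentences if brand.lower() in s.lower()]
    pos = sum(s.count(term) for s in relevant for term in POSITIVE)
    neg = sum(s.count(term) for s in relevant for term in NEGATIVE)
    if pos + neg == 0:
        return 0
    return round(100 * (pos - neg) / (pos + neg))

text = "Acme is a budget alternative with known limitations. Beta is the category leader."
print(sentiment_score(text, "Acme"), sentiment_score(text, "Beta"))  # -100 100
```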

Citation and Source Analysis. This reveals which domains AI platforms cite to justify their answers. Sometimes AI trusts a site’s data without mentioning the brand by name, creating “ghost citations.” Source analysis also shows where competitors are earning mentions, like Reddit threads, G2 reviews, or industry journals, so you know where to build presence.
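Two pieces of source analysis can be sketched with the standard library. The first is normalizing cited URLs to domains and counting how often each domain is cited. The second is flagging a ghost citation, defined here as an answer that cites the brand's own domain but never names the brand. The sketch assumes each answer has already been parsed into a mention list and a list of citation URLs. It compares exact hostnames after dropping a leading `www.`, so subdomains such as `blog.acme.com` are treated as separate sources. All function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_domains(citation_urls: list[str]) -> list[str]:
    """Normalize citation URLs to lowercase hostnames without a leading 'www.'."""
    return [(urlparse(u).hostname or "").removeprefix("www.") for u in citation_urls]

def is_ghost_citation(mentions: list[str], citation_urls: list[str],
                      brand: str, brand_domain: str) -> bool:
    """True when the brand's own domain is cited but the brand is never named."""
    return brand_domain in cited_domains(citation_urls) and brand not in mentions

def source_share(citations_per_answer: list[list[str]]) -> Counter:
    """Count how many answers cite each domain (each domain counted once per answer)."""
    counts = Counter()
    for urls in citations_per_answer:
        counts.update(set(cited_domains(urls)))
    return counts

print(is_ghost_citation(["Beta"], ["https://acme.com/benchmarks"], "Acme", "acme.com"))  # True
print(source_share([["https://www.reddit.com/r/crm/1"], ["https://g2.com/acme"]]))
```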

Conversion Visibility Rate. The bottom-line metric. CVR estimates the downstream business impact of an AI mention by correlating AI citations with on-site revenue through integrations like Google Analytics 4. This is the metric that gets executive buy-in.
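Vendors compute CVR in their own ways. A simpler, related signal you can calculate yourself compares the conversion rate and revenue of AI-referred sessions with all other sessions. The sketch below swaps in that referrer-based comparison. It assumes you have exported session records with a referrer hostname, a conversion flag, and revenue. The `Session` type is hypothetical and is not a GA4 API. The set of AI referrer hostnames is also an assumption that you should check against the referrers actually present in your own analytics. This measures correlation, not attribution.

```python
from dataclasses import dataclass

# Assumed AI-assistant referrer hostnames; verify against your own analytics data.
AI_REFERRERS = {"chatgpt.com", "chat.openai.com", "perplexity.ai",
                "www.perplexity.ai", "gemini.google.com"}

@dataclass
class Session:
    """One exported session record (hypothetical shape, not a GA4 schema)."""
    referrer_host: str
    converted: bool
    revenue: float = 0.0

def channel_summary(sessions: list[Session]) -> dict[str, dict[str, float]]:
    """Sessions, conversion rate, and revenue for AI-referred vs. all other traffic."""
    buckets: dict[str, list[Session]] = {"ai": [], "other": []}
    for s in sessions:
        buckets["ai" if s.referrer_host in AI_REFERRERS else "other"].append(s)
    summary = {}
    for name, group in buckets.items():
        conversions = sum(s.converted for s in group)
        summary[name] = {
            "sessions": len(group),
            "conversion_rate": conversions / len(group) if group else 0.0,
            "revenue": sum(s.revenue for s in group),
        }
    return summary

sessions = [Session("chatgpt.com", True, 120.0), Session("chatgpt.com", False),
            Session("google.com", True, 80.0), Session("google.com", False),
            Session("google.com", False), Session("google.com", False)]
print(channel_summary(sessions))
```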

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility Score | % of prompts where brand appears | Measures discovery-phase penetration |
| Position Weighting | Ordinal rank in AI response | Top position earns ~2.5x the citation probability of the 10th (33% vs. 13%) |
| Sentiment Score | Narrative framing (-100 to +100) | Catches reputation risks before they hit revenue |
| Citation Share | % of queries citing your domain | Identifies content AI trusts as a source |
| Conversion Visibility Rate | Revenue impact of AI mentions | Ties AI visibility directly to pipeline |

A Step-by-Step Strategy for Building Your AI Answer Monitoring Tracker

Getting started doesn’t require a six-month project plan. Here’s a practical framework.

Step 1: Build your prompt library. Instead of chasing 500 individual keywords, identify 20 to 50 high-value prompts that mirror how your target audience actually talks to AI. Include branded queries, category shortlist queries (“Who are the top competitors for…?”), and comparison queries across different funnel stages. Topify’s High-Value Prompt Discovery surfaces these automatically by analyzing real AI search behavior.
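A prompt library can start as a handful of templates grouped by funnel stage and expanded over your personas, budgets, and competitors. The templates and the `build_prompt_library` function below are hypothetical placeholders. Real phrasing should come from sales calls, support tickets, or prompt-discovery data. The expansion removes duplicate prompts, because `str.format` ignores variables a template doesn't use, and it returns (stage, prompt) pairs so results can later be sliced by funnel stage.

```python
from itertools import product

# Hypothetical templates per funnel stage; replace with real customer phrasing.
TEMPLATES = {
    "awareness": "What are the best {category} tools for a {persona} with a {budget} budget?",
    "consideration": "Who are the top competitors for {brand} in {category}?",
    "decision": "Is {brand} or {competitor} better for a {persona}?",
}

def build_prompt_library(brand: str, competitors: list[str], category: str,
                         personas: list[str], budgets: list[str]) -> list[tuple[str, str]]:
    """Expand every template over all variable combinations, dropping duplicate prompts."""
    library, seen = [], set()
    for stage, template in TEMPLATES.items():
        for competitor, persona, budget in product(competitors, personas, budgets):
            # Unused keyword arguments are ignored by str.format.
            prompt = template.format(brand=brand, competitor=competitor, category=category,
                                     persona=persona, budget=budget)
            if prompt not in seen:
                seen.add(prompt)
                library.append((stage, prompt))
    return library

for stage, prompt in build_prompt_library("Acme", ["Beta", "Gamma"], "CRM",
                                          ["startup founder", "sales manager"], ["small"]):
    print(f"[{stage}] {prompt}")
```

Trim the expanded list to the 20 to 50 prompts that matter most. Combinatorial expansion grows quickly.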

Step 2: Establish a statistical baseline. AI responses are probabilistic, not deterministic. Run each prompt multiple times to get a reliable baseline visibility score. Map the competitive landscape at the same time: who is the AI recommending instead of you? In some sectors like HR software, top brands dominate 86% of the AI’s consideration set, leaving little room for newcomers who aren’t actively monitoring.
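Because each run is effectively a yes/no draw, a baseline is more useful as a range than as a single number. This sketch uses the Wilson score interval, a standard confidence interval for a proportion. That choice is ours, not something any particular tool prescribes. Running the same prompt 10 times and seeing the brand in 4 answers gives a wide interval. The same 40% rate over 100 runs gives a much tighter one.

```python
import math

def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for hits/runs (z=1.96 gives roughly 95% confidence)."""
    if runs == 0:
        return (0.0, 0.0)
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, centre - margin), min(1.0, centre + margin))

def baseline(run_results: list[bool]) -> dict[str, float]:
    """Summarize repeated runs of one prompt; True means the brand was mentioned."""
    hits, runs = sum(run_results), len(run_results)
    low, high = wilson_interval(hits, runs)
    return {"rate": hits / runs if runs else 0.0, "low": low, "high": high}

print(baseline([True, False, False, True, False, True, False, False, True, False]))
```

If two baselines' intervals overlap heavily, a difference between them is probably noise rather than a real shift in visibility.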

Step 3: Reverse-engineer AI citations. Use source analysis to identify which third-party domains the AI trusts. Research suggests citations from independent, third-party domains carry roughly 6.5x the weight of self-published content in the eyes of LLMs. If an AI consistently cites a competitor via a Reddit thread or a specific trade journal, that’s where you need to build presence.
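A practical starting point for this reverse-engineering is a gap list: the domains cited in answers that recommend a competitor but leave your brand out. The sketch assumes each parsed answer is a dict with hypothetical `mentions` and `citations` keys, and it counts each domain at most once per answer. The function name `citation_gaps` is illustrative.

```python
from collections import Counter
from urllib.parse import urlparse

def citation_gaps(responses: list[dict], brand: str, competitors: list[str],
                  top_n: int = 5) -> list[tuple[str, int]]:
    """Rank domains cited in answers that name a competitor but not `brand`.

    Each response is a dict with hypothetical keys 'mentions' (brand names)
    and 'citations' (URLs).
    """
    gaps = Counter()
    for r in responses:
        names_competitor = any(c in r["mentions"] for c in competitors)
        if names_competitor and brand not in r["mentions"]:
            hosts = {(urlparse(u).hostname or "").removeprefix("www.") for u in r["citations"]}
            gaps.update(h for h in hosts if h)
    return gaps.most_common(top_n)

responses = [
    {"mentions": ["Beta"], "citations": ["https://www.reddit.com/r/crm/1", "https://g2.com/beta"]},
    {"mentions": ["Beta", "Gamma"], "citations": ["https://reddit.com/r/crm/2"]},
    {"mentions": ["Acme", "Beta"], "citations": ["https://trade-journal.example/acme"]},
]
print(citation_gaps(responses, "Acme", ["Beta", "Gamma"]))  # [('reddit.com', 2), ('g2.com', 1)]
```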

Step 4: Re-engineer content for machine extraction. Translate your visibility data into action. Place the primary direct answer in the first 50 tokens of each key section so the AI’s retrieval system can extract it easily. Deploy FAQPage, HowTo, and Organization schema to provide machine-readable facts. Consider creating an llms.txt file (a proposed convention, not yet a formal standard) at your site’s root directory to help AI crawlers understand your most important content.
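Two parts of this step are easy to automate: generating FAQPage markup and checking whether a section leads with its answer. The sketch below emits JSON-LD using the schema.org `FAQPage` / `Question` / `acceptedAnswer` structure, ready to paste into a `<script type="application/ld+json">` tag. It also includes a rough lead-answer check. That check counts whitespace-separated words, which only approximate model tokens, so treat the 50-word limit as a proxy. Both function names are hypothetical.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Render (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

def answer_in_first_tokens(section: str, key_phrase: str, limit: int = 50) -> bool:
    """Rough check that key_phrase appears within the first `limit` words.

    Words are whitespace-separated, which only approximates model tokens.
    """
    head = " ".join(section.split()[:limit]).lower()
    return key_phrase.lower() in head

print(faq_jsonld([("What is an AI answer monitoring tracker?",
                   "A system that monitors how AI platforms mention and describe your brand.")]))
```

Validate the generated markup with a structured-data testing tool before deploying it. Keep the answer text identical to the visible on-page answer.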

Step 5: Monitor continuously and act fast. AI models update frequently, and competitor content shifts constantly. Topify’s One-Click Execution layer lets teams review a proposed recovery strategy and deploy it directly from the dashboard, closing the loop between data and action without manual workflows.
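The simplest version of continuous monitoring compares each run's per-prompt visibility scores with the previous run and raises an alert on large drops. The 10-point threshold below is a hypothetical default. Because single runs are noisy, set it wider than the run-to-run variation you measured while building the Step 2 baseline. A prompt missing from the current run is treated as a score of 0.

```python
def detect_drops(previous: dict[str, float], current: dict[str, float],
                 threshold: float = 10.0) -> list[str]:
    """Return alerts for prompts whose visibility fell by at least `threshold` points.

    Scores are percentages; a prompt absent from `current` counts as 0.
    """
    alerts = []
    for prompt, old_score in previous.items():
        new_score = current.get(prompt, 0.0)
        if old_score - new_score >= threshold:
            alerts.append(f"{prompt!r}: {old_score:.1f}% -> {new_score:.1f}%")
    return alerts

last_week = {"best crm for startups": 40.0, "acme vs beta": 60.0}
this_week = {"best crm for startups": 20.0, "acme vs beta": 55.0}
print(detect_drops(last_week, this_week))  # ["'best crm for startups': 40.0% -> 20.0%"]
```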

5 Mistakes That Tank Your AI Answer Monitoring Tracker Results

Even teams with the right tools make these errors.

Monitoring only one platform. This is the most common mistake. ChatGPT, Gemini, Perplexity, and Claude all weigh authority signals differently. Gemini leans heavily on the Google ecosystem, including YouTube and Google Maps. Perplexity prioritizes live web citations from high-trust domains. Tracking just one platform gives you a distorted picture. Topify covers ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and Qwen in a single dashboard.

Treating AI visibility as a silo. Some teams shift their entire budget from technical SEO to “AI hacks.” That backfires. A page that isn’t indexed or is poorly structured for Google is often invisible to AI crawlers too. SEO provides the technical foundation (crawlability, site speed, mobile UX) on which generative engine optimization (GEO) success is built.

Ignoring sentiment and position data. Tracking raw mention counts without competitive context or sentiment analysis is misleading. A brand might appear in every AI response but consistently be described as the “budget option” or listed last. Without sentiment and position weighting, you’re celebrating a vanity metric.

Skipping the global AI ecosystem. For brands with international presence, ignoring Chinese AI platforms is a significant blind spot. Chinese models like Doubao and Qwen mention brands at a rate of 88.9% for English queries, compared to just 58.3% for Western models. That’s a 30-point gap in brand representation that most Western-only tools miss entirely.

Running a one-time audit instead of continuous monitoring. AI visibility is dynamic. Model updates, competitor content changes, and shifting citation patterns mean last month’s data is already stale. The brands that win treat their AI answer monitoring tracker as an always-on system, not a quarterly check-in.

What to Look for in an AI Answer Monitoring Tracker Tool

The market has matured quickly. Here’s how the current landscape breaks down for AI SEO visibility optimization companies and marketing teams evaluating tools.

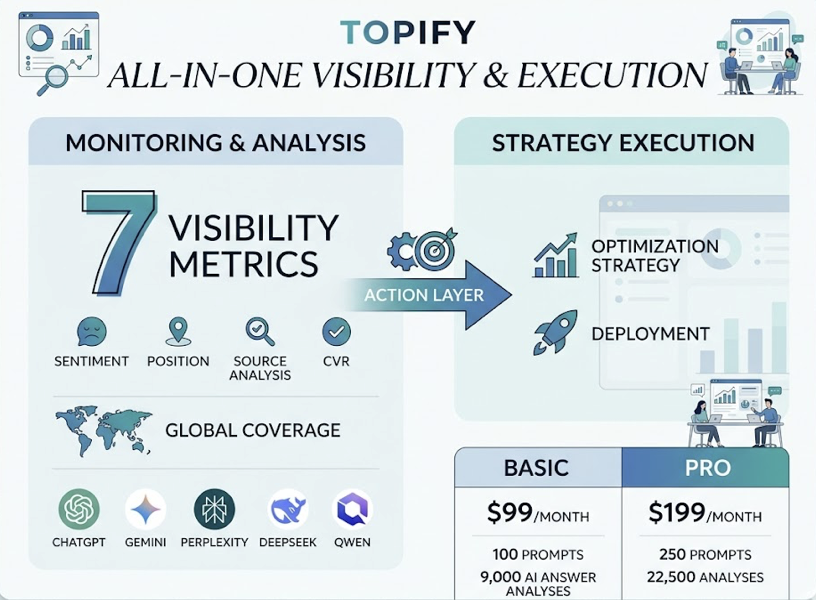

For marketing teams and agencies that need both monitoring and execution, Topify stands out by combining seven visibility metrics, including sentiment, position, source analysis, and CVR, into a single platform. Its global coverage spans ChatGPT, Gemini, Perplexity, and Chinese LLMs like DeepSeek and Qwen. What separates it from monitoring-only tools is the action layer: you can go from spotting a visibility gap to deploying an optimization strategy without leaving the dashboard. Pricing starts at $99/month for the Basic plan with 100 prompts and 9,000 AI answer analyses, scaling to $199/month for Pro with 250 prompts and 22,500 analyses.

For enterprise teams requiring SOC 2 compliance and log-level crawler analysis, Profound offers deep query fanout analysis across 10+ engines with 18-country coverage. Pricing starts around $99/month for starter tiers.

For teams that prioritize browser-level accuracy, ZipTie.dev uses real browser rendering and screenshot capture. Pricing ranges from $69 to $159/month.

For agencies managing multiple clients, Peec AI offers unlimited seats and Looker Studio integration across 7 platforms, starting at €89/month.

| Platform | Best For | Key Differentiator | Starting Price |
|---|---|---|---|
| Topify | Marketing teams and agencies | 7 metrics, Chinese LLM coverage, One-Click optimization | $99/mo |
| Profound | Enterprise compliance | SOC 2 Type II, 10+ engine log analysis | $99/mo |
| ZipTie.dev | Accuracy-focused teams | Browser-level rendering, screenshot capture | $69/mo |
| Peec AI | Global agencies | Unlimited seats, Looker Studio integration | €89/mo |

Conclusion

The gap between where your brand ranks on Google and where it appears in AI responses isn’t closing on its own. With 900 million weekly users on ChatGPT and 2 billion monthly users seeing AI Overviews, the question isn’t whether AI search matters. It’s whether you’re measuring it.

An AI answer monitoring tracker turns that blind spot into a structured, data-driven growth channel. Start with a 30-prompt audit across ChatGPT, Gemini, and Perplexity. Connect visibility data to revenue through CVR tracking. And treat AI monitoring as an always-on system, not a one-time experiment. The brands that build this infrastructure now will own the discovery layer that’s rapidly replacing traditional search clicks. Get started with Topify to see where your brand stands today.

FAQ

Q: What is an AI answer monitoring tracker?

A: An AI answer monitoring tracker is a system that monitors how generative AI platforms like ChatGPT, Gemini, and Perplexity mention, rank, and describe your brand in their responses. Unlike traditional SEO rank trackers that measure URL positions on a search results page, it evaluates semantic inclusion, position weighting, sentiment, and citation sources within AI-generated answers.

Q: How does an AI answer monitoring tracker work?

A: It runs automated conversational prompts through AI platforms at regular intervals, using stateless requests to eliminate personalization bias. The system then parses each AI response with natural language processing to extract brand mentions, calculate position weighting, identify citation URLs, and score sentiment. Advanced trackers use browser-level rendering rather than API-only analysis for higher accuracy.

Q: How much does an AI answer monitoring tracker cost?

A: Pricing varies by platform and scale. Topify starts at $99/month for 100 prompts and 9,000 AI answer analyses, with a Pro plan at $199/month for 250 prompts. Enterprise plans start from $499/month. Other tools in the market range from $69/month to €495/month depending on features and team size.

Q: What’s the difference between AI answer monitoring and traditional SEO tracking?

A: Traditional SEO tracking measures where a URL ranks in a deterministic list of search results. AI answer monitoring measures whether and how a brand appears within probabilistic, narrative text generated by AI. The inputs are different (conversational prompts vs. short keywords), the outputs are different (sentiment and citations vs. rank positions), and the optimization strategies are different (citation engineering and entity authority vs. link building and keyword density).