Your marketing team spent the last quarter refining keyword rankings, building backlinks, and publishing content that climbed to page one. Then your CMO asked a simple question: “When someone asks ChatGPT which product to buy in our category, do we show up?”

Nobody had an answer. Not because your team dropped the ball, but because the tools you’ve been using were never built to measure what AI chooses to say. AI-referred web sessions grew 527% year-over-year in early 2025, and half of all consumers now use AI-powered search for product research. The brands that can’t see themselves in those AI answers are already losing ground to the ones that can.

Most Brands Track Rankings. AI Tracks Recommendations. That’s a Different Game.

AI answer monitoring is the practice of programmatically querying AI platforms like ChatGPT, Perplexity, and Gemini, then analyzing how your brand appears in their responses. It tracks whether you’re mentioned, how you’re described, where you’re positioned relative to competitors, and which sources the AI cites when recommending you.

This isn’t a variation of traditional SEO monitoring. It’s a fundamentally different discipline.

Traditional search engines return a ranked list of static web pages from their index. Generative engines synthesize answers from diverse sources and deliver a single, conversational response. Up to 93% of those interactions resolve without a single click to an external website. That means if your brand isn’t in the AI’s answer, you’re not even in the consumer’s consideration set.

The performance gap is stark. Traditional organic search converts at roughly 2.1% to 2.8%. AI-referred traffic converts at 14.2% to 27.0%, at least five times the organic rate on these figures. The reason is compression: AI answers present a shortlist of one to three brands, and users trust that shortlist enough to act on it immediately.

Topify tracks this entire layer across ChatGPT, Gemini, Perplexity, DeepSeek, and other regional models, giving marketing teams visibility into a channel that traditional dashboards completely miss.

CES 2026 Proved That AI Agents Don’t Browse. They Decide.

The Consumer Electronics Show in January 2026 marked a turning point. AI stopped being a search destination and became infrastructure. It’s now embedded in operating systems, browsers, and device-level identity layers. The traditional marketing funnel, awareness to consideration to intent to purchase, is collapsing into something much shorter.

The defining trend was autonomous AI agents at scale. Consumers don’t just search anymore. They brief specialized agents with qualitative intent: “Find a sustainable, organic mascara under $30” or “Find a family-friendly streaming subscription with offline downloads.” The agent then parses, filters, and negotiates options before presenting a compressed shortlist of one or two brands.

That’s not a funnel. That’s a filter.

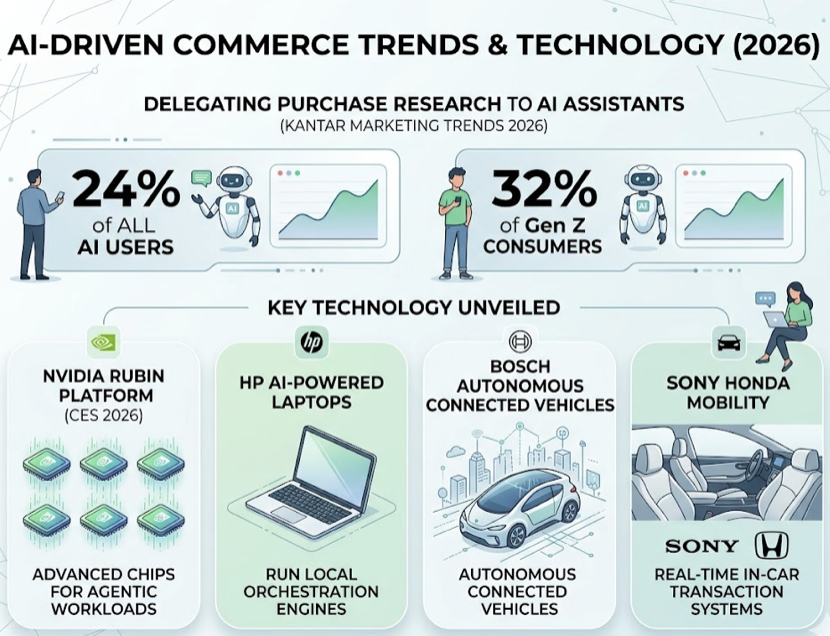

According to Kantar’s Marketing Trends 2026, 24% of AI users already delegate purchase research to AI assistants. Among Gen Z consumers, that number rises to 32%. NVIDIA announced its Rubin platform at CES 2026 with six advanced chips designed for agentic workloads. HP unveiled AI-powered laptops configured to run local orchestration engines. Bosch showcased autonomous connected vehicle platforms, and Sony Honda Mobility demonstrated real-time in-car transaction systems.

For brands, the implication is direct: if your digital presence isn’t structured for machine readability, agents will filter you out. Brand announcements distributed via GlobeNewswire during CES earned nearly 25,000 AI search engine citations, specifically because they were formatted as authoritative, machine-readable sources with verifiable facts and clear timestamps. US advertisers are projected to spend $25.9 billion on AI search ads by 2029, signaling that the market has moved well past experimentation.

AI answer monitoring is no longer a “nice to have.” It’s how you confirm your brand survives the agent’s filter.

How AI Answer Monitoring Actually Works Under the Hood

Traditional SEO crawlers scrape static HTML pages. AI answer monitoring uses a technique called synthetic probing: sending thousands of natural language queries to live APIs of closed-source LLMs, then parsing the generated responses for brand mentions, sentiment, position, and source citations.

When a prompt hits an AI engine with search or an index to draw on, the response is typically generated through Retrieval-Augmented Generation (RAG). The system interprets the query, retrieves relevant source documents from its index or a live web search, evaluates their authority, and synthesizes a direct answer. The monitoring platform then ingests that unstructured response and extracts structured data.
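The extraction step can be sketched in a few lines of Python. This is a minimal illustration, not how any particular platform does it: the brand names, the sample answer, and the helper names `extract_mentions` and `extract_cited_domains` are all hypothetical, and a production parser would also need to handle aliases, misspellings, and sentiment.

```python
import re
from dataclasses import dataclass


@dataclass
class BrandMention:
    brand: str
    position: int      # 1-based order of first appearance in the answer
    char_offset: int   # character index where the first mention starts


def extract_mentions(answer: str, brands: list[str]) -> list[BrandMention]:
    """Return the tracked brands that appear, ordered by first appearance."""
    found = []
    for brand in brands:
        # Whole-word, case-insensitive match on the literal brand name.
        match = re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE)
        if match:
            found.append((match.start(), brand))
    found.sort()
    return [
        BrandMention(brand=name, position=rank, char_offset=offset)
        for rank, (offset, name) in enumerate(found, start=1)
    ]


def extract_cited_domains(answer: str) -> list[str]:
    """Return host names of URLs in the answer, de-duplicated, in order."""
    hosts = re.findall(r"https?://([^/\s)\]]+)", answer)
    unique = []
    for host in hosts:
        host = host.lower()
        if host not in unique:
            unique.append(host)
    return unique


# Hypothetical response text standing in for a real engine's answer.
sample_answer = (
    "For a small team, Acme CRM is a solid pick (https://acme.example/pricing). "
    "Globex is stronger for enterprise; see https://reviews.example/globex "
    "and https://acme.example/docs for details."
)

for mention in extract_mentions(sample_answer, ["Globex", "Acme CRM", "Initech"]):
    print(mention.position, mention.brand)
print(extract_cited_domains(sample_answer))
```

Here position is simply the order of first mention, which is one reasonable proxy for recommendation order; a brand that never appears (Initech in this sample) produces no record at all.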

The 7 Metrics That Define AI Answer Monitoring

Basic tracking tools measure four parameters: visibility, sentiment, position, and source. Topify uses a 7-metric framework that adds the depth needed for real optimization:

| Metric | What It Measures |
|---|---|
| AI Share of Model (Visibility) | Percentage of target queries where the brand appears in the response |
| Average Recommendation Position | Where the brand ranks in the AI’s recommendation order |
| Brand Sentiment Score | Tone of the AI’s description, scored 0 to 100 |
| Citation Frequency | How often the AI hyperlinks to the brand’s domain |
| Query Volume Estimation | Estimated search volume of tracked prompts across AI engines |
| User Intent Classification | Segments prompts into informational, commercial, transactional categories |
| Conversion Visibility Rate (CVR) | Correlation between visibility adjustments and downstream referral conversions |

Position matters more than most teams realize. Research shows the first-mentioned brand in an AI recommendation carries a 33.07% citation probability. By the tenth mention, that drops to 13.04%. If you’re monitoring visibility but ignoring position, you’re missing the signal that actually predicts clicks.
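To make the first two metrics concrete, here is a small sketch of how Share of Model and Average Recommendation Position could be computed from probe results. The record layout and the brand and engine values are assumptions for illustration; each record is one prompt run on one engine, with the brands listed in the order the answer mentioned them.

```python
from statistics import mean
from typing import Optional

# Hypothetical probe results: one record per (prompt, engine) run.
results = [
    {"prompt": "p1", "engine": "chatgpt", "brands": ["Acme", "Globex"]},
    {"prompt": "p1", "engine": "perplexity", "brands": ["Globex", "Acme"]},
    {"prompt": "p2", "engine": "chatgpt", "brands": ["Acme"]},
    {"prompt": "p2", "engine": "perplexity", "brands": []},
]


def share_of_model(records: list[dict], brand: str) -> float:
    """Percentage of runs in which the brand appears at all."""
    if not records:
        return 0.0
    hits = sum(1 for record in records if brand in record["brands"])
    return 100.0 * hits / len(records)


def average_position(records: list[dict], brand: str) -> Optional[float]:
    """Mean 1-based mention position, over runs where the brand appears."""
    positions = [
        record["brands"].index(brand) + 1
        for record in records
        if brand in record["brands"]
    ]
    return mean(positions) if positions else None


for name in ["Acme", "Globex"]:
    print(name, share_of_model(results, name), average_position(results, name))
```

Running the same two functions for each competitor over the same prompt set gives the relative view: in this sample Acme appears in 75% of runs and Globex in 50%, while Globex's average position is 1.5.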

5 Mistakes That Quietly Wreck Your AI Answer Monitoring Data

Most teams don’t fail at AI answer monitoring because they chose the wrong tool. They fail because of methodological blind spots that corrupt their data from day one.

Tracking only ChatGPT. ChatGPT has dominant market share, but only 11% of cited domains appear consistently across multiple AI platforms. Each engine uses different indexing, RAG pipelines, and training data. A brand that ranks first on ChatGPT may be completely invisible on Perplexity, which cites nearly three times as many sources per query.

Using keyword-style prompts instead of conversational queries. Querying a model with “marketing platform” doesn’t capture how real users talk to AI. Conversational search queries average more than eight words. Your tracked prompts need to mirror actual dialogue patterns: “What’s the best marketing platform for a 50-person B2B SaaS company?”

Ignoring sentiment. A brand can achieve 90% visibility and still have a reputation problem. If the AI consistently describes your product as “expensive with limited support,” that high visibility score is masking a PR crisis, not celebrating a win.

Running monthly manual audits. AI engines refresh their underlying models, source indexes, and ranking signals frequently, and live retrieval can change an answer from one day to the next. A monthly snapshot can be outdated within 48 hours. Effective monitoring requires automated, high-frequency probing.

Skipping competitor benchmarks. A 30% visibility rate looks strong until you discover your closest competitor holds 70% across the same prompt set. Without relative Share of Model data, you’re flying blind on competitive positioning.

A Step-by-Step AI Answer Monitoring Strategy That Actually Produces Results

Here’s a five-phase framework that moves from setup to measurable optimization:

Phase 1: Build your prompt matrix. Start with 100 to 500 high-intent prompts mapped to your buyer’s journey. Awareness-stage prompts (“How does enterprise supply chain coordination work?”), consideration-stage prompts (“What are the most reliable logistics platforms?”), and decision-stage prompts (“Platform A vs. Platform B pricing and API capabilities”) each reveal different aspects of your AI visibility.
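One way to assemble such a matrix is to expand a handful of stage-specific templates. The sketch below assumes hypothetical templates, segments, and brand names; real prompt sets should come from customer language rather than string templates alone.

```python
from itertools import product

# Hypothetical templates, one list per buyer-journey stage.
TEMPLATES = {
    "awareness": ["How does enterprise {category} coordination work?"],
    "consideration": [
        "What are the most reliable {category} platforms for {segment}?"
    ],
    "decision": ["{brand} vs. {competitor} pricing and API capabilities"],
}


def build_prompt_matrix(
    category: str, segments: list[str], brand: str, competitors: list[str]
) -> list[dict]:
    """Expand every template over segments and competitors, without duplicates."""
    seen: dict[str, str] = {}
    for stage, templates in TEMPLATES.items():
        for template, segment, competitor in product(templates, segments, competitors):
            prompt = template.format(
                category=category,
                segment=segment,
                brand=brand,
                competitor=competitor,
            )
            # Templates that ignore a field yield repeats; keep the first only.
            seen.setdefault(prompt, stage)
    return [{"stage": stage, "prompt": prompt} for prompt, stage in seen.items()]


matrix = build_prompt_matrix(
    category="supply chain",
    segments=["mid-market retailers", "3PL providers"],
    brand="Platform A",
    competitors=["Platform B", "Platform C"],
)
for row in matrix:
    print(row["stage"], "|", row["prompt"])
```

Tagging each prompt with its stage up front makes it possible to report visibility per stage later, instead of as a single blended number.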

Phase 2: Set multi-platform scope. Configure tracking across ChatGPT, Gemini, Perplexity, and DeepSeek at minimum. Regional coverage matters: if your audience spans markets where Qwen or Doubao dominate, include those too.

Phase 3: Establish your baseline. Run the full prompt matrix and record your starting position across all seven metrics. During this phase, audit your technical readiness: verify your robots.txt permits GPTBot, ClaudeBot, and PerplexityBot. Run a GEO score check on target landing pages to evaluate structured data, entity clarity, and topical signal density. Topify’s built-in GEO diagnostic tools automate these checks.
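The robots.txt part of that audit can be checked with Python's standard library. The sketch below parses robots.txt content you have already fetched; the sample rules and the URL are made up for illustration, and the user-agent tokens are the three named above.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def check_ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report, per AI crawler, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt that blocks GPTBot but leaves other crawlers alone.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = check_ai_crawler_access(
    sample_robots, "https://www.example.com/products/widget"
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

A blocked crawler here only tells you what robots.txt requests; it says nothing about CDN or firewall rules, which need a separate check.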

Phase 4: Set cadence and alerts. Enterprise teams in competitive categories need daily data syncs. Mid-market brands can operate on weekly refresh cycles. Either way, configure real-time alerts for visibility drops or negative sentiment spikes.
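An alert rule of this kind can be as simple as comparing two consecutive snapshots. In the sketch below the thresholds (a 10-point visibility drop, a sentiment floor of 50 on the 0 to 100 scale) and the snapshot fields are illustrative assumptions, not recommended values.

```python
def detect_alerts(
    previous: dict,
    current: dict,
    visibility_drop_pts: float = 10.0,  # assumed threshold, in percentage points
    sentiment_floor: float = 50.0,      # assumed floor on the 0-100 scale
) -> list[str]:
    """Compare two snapshots and return human-readable alert messages."""
    alerts = []
    drop = previous["visibility"] - current["visibility"]
    if drop >= visibility_drop_pts:
        alerts.append(f"Visibility dropped {drop:.1f} points")
    if current["sentiment"] < sentiment_floor:
        alerts.append(
            f"Sentiment {current['sentiment']:.0f} is below floor {sentiment_floor:.0f}"
        )
    return alerts


# Hypothetical snapshots from two consecutive syncs.
yesterday = {"visibility": 42.0, "sentiment": 68.0}
today = {"visibility": 30.0, "sentiment": 47.0}

for message in detect_alerts(yesterday, today):
    print("ALERT:", message)
```

The same comparison works for daily or weekly cadences; only the pair of snapshots passed in changes.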

Phase 5: Execute GEO content actions. Turn monitoring data into optimization. The Princeton GEO study documented specific impact benchmarks that still hold:

| Strategy | Visibility Improvement |
|---|---|
| Cite authoritative external sources | +40% |
| Add statistics every 150 to 200 words | +37% |
| Include verified expert quotations | +30% |
| Use precise technical terminology | +28% |

Topify’s One-Click Agent Execution system identifies visibility gaps, designs a targeted GEO strategy, and deploys content and schema corrections directly to CMS platforms like WordPress, Shopify, and Framer. No manual dev bottlenecks.

The Platforms That Make AI Answer Monitoring Scalable

The AI answer monitoring market splits into three tiers: enterprise intelligence engines with deep analytics and steep price tags, specialized crawler tools focused on verification, and purpose-built GEO orchestration platforms that bridge tracking and action.

| Platform | AI Engine Coverage | Starting Price | Key Differentiator |
|---|---|---|---|
| Topify | ChatGPT, Gemini, Perplexity, DeepSeek, Qwen, Doubao | $99/mo | 7-metric framework, one-click agent execution, built-in GEO diagnostics |
| Profound | ChatGPT, Claude, Perplexity, Gemini, Grok | $99/mo (ChatGPT only) | Log-level crawler analytics, enterprise integrations |
| Peec AI | ChatGPT, Perplexity, Gemini, Copilot, Grok | $95/mo | Unlimited seats, daily automated tracking |
| AthenaHQ | ChatGPT, Gemini, Perplexity, Claude, Grok | ~$295/mo | Narrative tone analysis, corporate risk modeling |
| ZipTie | ChatGPT, Perplexity, AI Overviews | $69/mo | Real browser screenshot verification |

For most marketing teams, the key question isn’t which platform has the most data. It’s which one lets you act on the data without switching tools. Topify’s combination of broad AI engine coverage, a 7-metric analytics layer, and automated execution at a $99/mo entry point makes it the practical starting point for teams that want monitoring and optimization in one workflow.

How to Know Whether Your AI Answer Monitoring Is Actually Working

Three KPIs separate productive monitoring programs from expensive dashboards that nobody checks:

Answer Inclusion Rate (AIR): The percentage of high-intent prompts where your brand appears. A strong baseline target is 30% or higher across core transactional prompts.

Sentiment Velocity: The rate at which the AI’s qualitative description of your brand moves toward positive. Tracking direction matters more than the absolute score, because a brand moving from 45 to 65 in sentiment is outperforming one stuck at 75.
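One plausible way to put a number on Sentiment Velocity is the least-squares slope of the sentiment score over equally spaced readings. This is an assumed definition for illustration, and the weekly scores below are invented to match the 45-to-65 example.

```python
def sentiment_velocity(scores: list[float]) -> float:
    """Least-squares slope of sentiment per period, for equally spaced readings."""
    n = len(scores)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    variance = sum((x - mean_x) ** 2 for x in range(n))
    return covariance / variance


# Hypothetical weekly sentiment scores (0-100) for two brands.
improving = [45, 52, 58, 65]
flat = [75, 75, 75, 75]

print(round(sentiment_velocity(improving), 2))  # points gained per week
print(round(sentiment_velocity(flat), 2))
```

A slope uses every reading rather than just the first and last, so one noisy week moves it less than a simple end-to-end difference would.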

Conversion Visibility Rate (CVR): The connection between AI citations and downstream referral conversions. This is where monitoring becomes a revenue story, not just a visibility story.

Review these across three cadences. Weekly: content teams check alerts, crawl blocks, and position shifts after competitor updates. Monthly: marketing leadership evaluates Share of Model trends and sentiment data to adjust content priorities. Quarterly: executive stakeholders assess Return on Content Investment and align AI visibility with broader brand strategy.

The monitoring loop is continuous: probe, ingest metrics, identify gaps, execute corrections, probe again. Teams that treat it as a one-time audit will fall behind within weeks.

Conclusion

The AI answer layer isn’t coming. It’s here. Half of consumers already use AI search, agents are making purchase decisions on behalf of users, and the brands that aren’t visible in those AI responses are being filtered out before a website visit can even happen.

Start with 100 core prompts across at least four AI platforms. Establish your baseline. Set alerts. Then use the data to drive content optimization that actually changes what AI says about you. Tools like Topify compress this entire workflow into a single platform, from monitoring to execution, at a price point that doesn’t require enterprise budgets.

The brands that build this muscle now will compound their advantage. The ones that wait will spend the next two years wondering why their traffic is declining while their SEO rankings look fine.

FAQ

Q: What is AI answer monitoring?

A: AI answer monitoring is the systematic tracking of how your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, and Gemini. It measures visibility (whether you’re mentioned), sentiment (how you’re described), position (where you rank relative to competitors), and citations (which sources the AI references).

Q: How does AI answer monitoring differ from traditional SEO monitoring?

A: Traditional SEO tracks keyword rankings on static search result pages. AI answer monitoring tracks synthesized, probabilistic responses generated through Retrieval-Augmented Generation. It measures entirely different dimensions: Share of Model, recommendation position, sentiment polarity, and citation frequency, none of which exist in traditional SEO dashboards.

Q: How much does AI answer monitoring cost?

A: Purpose-built platforms like Topify start at $99/mo for 100 tracked prompts and scale to $199/mo for 250 prompts. Enterprise tiers begin at $499/mo with custom configurations. Specialized enterprise tools from other vendors range from $295 to over $900/mo.

Q: Can AI answer monitoring track multiple AI platforms at once?

A: Yes. Multi-platform tracking is strongly recommended because only 11% of cited domains appear consistently across different AI engines. Topify aggregates data from ChatGPT, Gemini, Perplexity, DeepSeek, Qwen, and Doubao into a single dashboard.