Your brand holds top-three rankings for high-intent keywords. Traffic from organic search is solid. But when you type “best [your category] tools” into ChatGPT, your competitors get named first, described in detail, and linked with confidence. Your brand doesn’t appear at all.

That’s not a content quality problem. It’s a monitoring infrastructure problem.

Most marketing teams don’t have a systematic way to track what AI platforms are saying about their brand. They run manual checks once a month, look at one platform, and call it done. Meanwhile, AI-driven referral traffic is converting at rates up to 15.9% on ChatGPT alone, meaning every omission is a qualified lead going to a competitor.

The fix starts with understanding what an AI answer monitoring system actually is, and what separates a professional setup from a glorified manual search.

Most Brands Are “Checking” AI. They’re Not Monitoring It.

There’s a meaningful difference between the two.

Checking is what most teams do: open ChatGPT, type a question, see if your brand appears, close the tab. It’s better than nothing. But it’s not monitoring.

A systematic AI answer monitoring system does something different. It queries multiple AI platforms at scale using a curated set of prompts, captures the outputs, parses them for brand mentions, rankings, sentiment, and citation sources, and tracks all of that data over time. The goal isn’t a snapshot. It’s a trend line.

Why does this matter? Because LLMs are non-deterministic. A study of 2,961 identical prompts found that ChatGPT, Google AI, and Claude return the same brand list less than 1% of the time. A single manual check tells you almost nothing. Weekly, structured sampling over many runs is what turns that noise into a measurable trend.
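To see why one check carries so little information, it helps to put an error bar on a mention rate. The sketch below uses a Wilson score interval, a standard way to bound a proportion from a limited number of trials. The function name and the sample counts are illustrative assumptions, not figures from the study cited above.

```python
import math


def wilson_interval(mentions: int, samples: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if samples <= 0:
        raise ValueError("need at least one sample")
    p = mentions / samples
    denom = 1 + z**2 / samples
    centre = (p + z**2 / (2 * samples)) / denom
    half = z * math.sqrt(p * (1 - p) / samples + z**2 / (4 * samples**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# One manual check in which the brand happened to appear (1 of 1 runs).
print(wilson_interval(1, 1))

# Hypothetical weekly batch: the brand appeared in 14 of 40 runs of the same prompt.
print(wilson_interval(14, 40))
```

With a single run, the plausible range for the true mention rate spans from about 21% to 100%. With 40 runs it narrows to roughly 22% to 50%, which is still wide but is at least something you can track week over week.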

The other problem: 83% of global AI usage happens inside mobile apps, which traditional SEO tools can’t index. That’s dark traffic, and it’s where a large portion of your AI brand narrative is being written without you knowing.

What an AI Answer Monitoring System Actually Tracks

The most common misconception is that AI monitoring is just “mention tracking.” Count how many times the brand appears. Done.

That’s the floor, not the ceiling.

A professional-grade AI answer monitoring system captures five distinct dimensions of brand performance across generative platforms.

The 5 Metrics a Reliable AI Answer Monitoring Dashboard Should Cover

Visibility Rate is the percentage of relevant queries in which your brand is included in the AI’s response. In competitive categories, category leaders typically achieve mention rates of 30% to 50% for high-intent queries. Below that, you’re losing consideration before the conversation starts.

Sentiment Score quantifies how the AI describes your brand, typically on a 0-100 scale. An AI can mention your brand while framing it as “a budget alternative” or “better suited for small businesses,” even when your internal positioning is enterprise. That disconnect is invisible without a sentiment tracking layer.

Position Rank measures where your brand appears in AI recommendation lists. The first recommendation receives 1.5 to 2x more consideration than the third. Tracking rank tells you whether you’re winning the shortlist or just making it onto the list.

Prompt Volume maps which questions users are actually asking. Are they asking informational “What is?” queries, or commercial “Is [Brand] better than [Competitor]?” queries? A brand might dominate educational prompts but be completely absent from transactional ones, which is a funnel alignment problem.

Source and Citation Coverage is the most actionable metric of the five. It identifies the specific URLs the AI uses as evidence when describing your brand or your competitors. If you’re missing from an answer, this tells you exactly which third-party domain filled the gap.

These five dimensions map directly onto what platforms like Topify track across their seven-metric GEO analytics framework: visibility, sentiment, position, volume, mentions, intent, and CVR (Conversion Visibility Rate). The CVR layer goes one step further, projecting the conversion impact of AI visibility, which turns monitoring data into ROI modeling.
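As a rough illustration of how the first and third metrics reduce to simple counting once responses are parsed, here is a minimal sketch. The `ParsedAnswer` structure, the brand names, and the sample data are all hypothetical; a real pipeline would populate them from captured AI responses.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class ParsedAnswer:
    """One AI response, already parsed (hypothetical structure)."""
    prompt: str
    brands_in_order: list[str]  # brands in the order the answer listed them


def visibility_rate(answers: list[ParsedAnswer], brand: str) -> float:
    """Share of answers that mention the brand at all."""
    if not answers:
        return 0.0
    return sum(brand in a.brands_in_order for a in answers) / len(answers)


def average_position(answers: list[ParsedAnswer], brand: str) -> Optional[float]:
    """Mean 1-based list position, over answers where the brand appears."""
    positions = [a.brands_in_order.index(brand) + 1
                 for a in answers if brand in a.brands_in_order]
    return mean(positions) if positions else None


# Made-up sample data for illustration only.
answers = [
    ParsedAnswer("best crm for healthcare", ["Globex", "Initech", "Acme"]),
    ParsedAnswer("best crm for logistics", ["Acme", "Globex"]),
    ParsedAnswer("crm with audit logs", ["Globex"]),
    ParsedAnswer("affordable enterprise crm", ["Initech", "Globex"]),
]

print(visibility_rate(answers, "Acme"))   # fraction of answers naming Acme
print(average_position(answers, "Acme"))  # mean rank where Acme appears
```

Average position is computed only over answers where the brand appears, so it should always be read alongside visibility rate. A brand that ranks first in the one answer it appears in is not winning the shortlist.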

Common Mistakes in AI Answer Monitoring Analytics

Most brands aren’t just under-monitoring. They’re monitoring wrong.

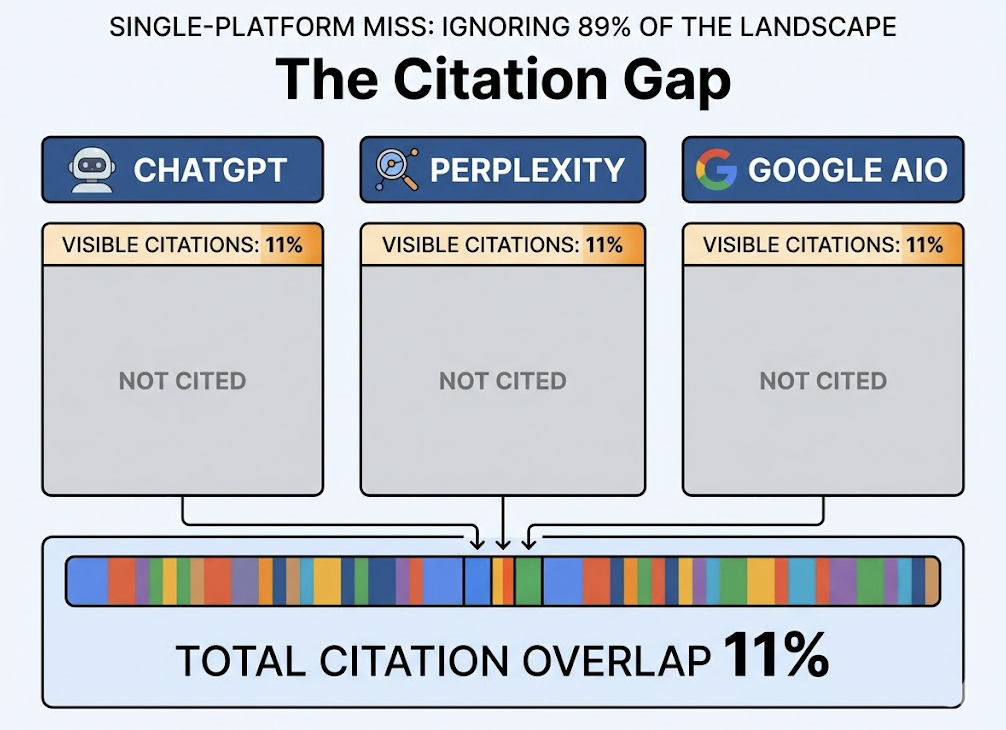

Mistake 1: Single-platform coverage. Most teams focus exclusively on ChatGPT and ignore the rest of the landscape. The problem is that only 11% of cited domains overlap between ChatGPT, Perplexity, and Google AI Overviews. Each platform uses a different retrieval architecture: ChatGPT leans on Bing, Claude on Brave Search, Gemini on Google. A brand can be highly visible on one and completely absent on others.

Mistake 2: Tracking mentions without tracking how. A brand mention in an AI answer isn’t always a positive signal. If the AI is consistently describing your product as a “cheaper alternative” or “best for beginners,” that narrative is shaping buying decisions in real time. Sentiment monitoring catches this. Mention counting doesn’t.

Mistake 3: Monthly monitoring cadence. Research across 2,500 prompts in Google AI Mode and ChatGPT found that 40% to 60% of cited sources change on a monthly basis. Monthly checks create a false sense of stability. Monitoring weekly, or at least every two weeks, is the minimum required to distinguish a fluke omission from a systematic trend.

Mistake 4: No competitive baseline. Monitoring your own brand in isolation misses the point. The metric that matters is Share of Voice: your mention rate compared to competitors for the same category prompts. Without that comparison, a 35% visibility rate looks fine until you realize your main competitor is at 62%.

Mistake 5: Ignoring citation sources. 99.3% of LLM citations come from open-access sources, and Reddit alone powers up to 46.7% of citations on Perplexity and 27% of answers on ChatGPT. If you’re not tracking which external domains the AI is using to build its brand descriptions, you’re missing the most actionable data in the entire monitoring stack.
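The Share of Voice comparison from Mistake 4 can be sketched in a few lines. This version assumes one common definition, each brand's mentions divided by total mentions of all tracked brands across the same prompt set. Other definitions exist, and the brand names and answers below are invented.

```python
from collections import Counter


def share_of_voice(answers: list[list[str]], tracked: set[str]) -> dict[str, float]:
    """Each tracked brand's share of all tracked-brand mentions.

    `answers` holds, per AI response, the list of brands it mentioned.
    A brand is counted at most once per response.
    """
    counts: Counter[str] = Counter()
    for brands in answers:
        for brand in set(brands) & tracked:
            counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in sorted(tracked)}


# Invented sample: brands mentioned in four responses to category prompts.
sample = [
    ["Acme", "Globex"],
    ["Globex"],
    ["Globex", "Initech"],
    ["Acme"],
]

for brand, share in share_of_voice(sample, {"Acme", "Globex", "Initech"}).items():
    print(f"{brand}: {share:.1%}")
```

Because every brand is measured against the same prompts, the numbers are directly comparable, which is the point of running a competitive baseline rather than tracking your own mention rate alone.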

How to Build an AI Answer Monitoring Strategy That Works

Moving from reactive checking to proactive optimization requires a structured approach. Here’s a five-step framework.

Step 1: Build your Prompt Matrix. Start with 25 to 100 “money prompts” that cover the full buyer journey. Category prompts (“Best [product type] for [industry]”), comparison prompts (“[Brand] vs [Competitor]”), problem-solution prompts (“How to solve [pain point]”), and trust prompts (“Is [Brand] reliable for enterprise?”). This matrix is the foundation. Everything else is built on top of it.

Step 2: Run your baseline. The first monitoring cycle creates your reference point. Capture Visibility Rate, Sentiment, Position, and Source Coverage for your brand and your top three competitors. This baseline turns all future data into signal rather than noise.

Step 3: Run a Source Gap Analysis. For every prompt where you’re missing, identify what the AI is citing instead. That list of domains becomes your “Source Target Backlog.” A G2 review page that consistently appears in competitive answers is a higher priority content target than a page on your own blog.

Step 4: Audit technical accessibility. Cloudflare has changed default configurations to block AI bots, meaning many brands have unintentionally shut off their AI crawl traffic. Check your robots.txt for AI bot exclusions, and verify that key product pages don’t depend on client-side JavaScript to render their content, since most AI crawlers can’t process client-side content.

Step 5: Connect monitoring to content execution. The output of monitoring isn’t a report. It’s a prioritized content backlog. Citation gap data tells you which prompts to target, source gap data tells you which channels to focus on, and sentiment data tells you which brand narratives need correction.
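The Prompt Matrix from Step 1 is essentially template expansion. A minimal sketch follows, using the four prompt types named above; the placeholder values (product, industries, brand and competitor names) are made up for illustration.

```python
import string
from itertools import product

# The four prompt types from Step 1, written as format templates.
TEMPLATES = {
    "category": "Best {product} for {industry}",
    "comparison": "{brand} vs {competitor}",
    "problem_solution": "How to solve {pain_point}",
    "trust": "Is {brand} reliable for enterprise?",
}


def build_prompt_matrix(values: dict[str, list[str]]) -> list[dict[str, str]]:
    """Expand every template with every combination of its placeholder values."""
    rows = []
    for intent, template in TEMPLATES.items():
        # Collect the placeholder names this template uses.
        fields = [name for _, name, _, _ in string.Formatter().parse(template) if name]
        for combo in product(*(values[f] for f in fields)):
            prompt = template.format(**dict(zip(fields, combo)))
            rows.append({"intent": intent, "prompt": prompt})
    return rows


# Hypothetical inputs.
matrix = build_prompt_matrix({
    "product": ["CRM software"],
    "industry": ["healthcare", "logistics"],
    "brand": ["Acme"],
    "competitor": ["Globex", "Initech"],
    "pain_point": ["lead leakage"],
})

for row in matrix:
    print(row["intent"], "|", row["prompt"])
```

Keeping the intent label next to each prompt makes it possible to break results down by funnel stage later, for example to spot a brand that shows up in category prompts but not in comparison prompts.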

An AI answer monitoring tool like Topify handles steps 1 through 5 as an integrated workflow. The prompt library management, cross-platform scanning, source gap detection, and one-click content execution all sit in a single platform, so insights don’t get lost in translation between analytics and strategy.
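For teams that want to see the mechanics, the Source Gap Analysis in Step 3 can be approximated as a frequency count: for every answer that omits your brand, tally which domains were cited instead. The record layout, brand names, and URLs below are hypothetical, and this is a sketch of the idea rather than how any particular platform implements it.

```python
from collections import Counter
from urllib.parse import urlparse


def source_target_backlog(answers: list[dict], brand: str) -> list[tuple[str, int]]:
    """Rank domains cited in answers where `brand` is missing, most frequent first."""
    gaps: Counter[str] = Counter()
    for answer in answers:
        if brand in answer["brands"]:
            continue  # only answers that omit the brand count as gaps
        # Normalise to a bare domain and count each domain once per answer.
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.")
            for url in answer["citations"]
        }
        gaps.update(domains)
    return gaps.most_common()


# Invented sample records.
records = [
    {"brands": ["Globex"],
     "citations": ["https://www.g2.com/products/globex/reviews",
                   "https://reddit.com/r/sales/abc"]},
    {"brands": ["Acme", "Globex"],
     "citations": ["https://acme.example/blog"]},
    {"brands": ["Initech"],
     "citations": ["https://www.g2.com/categories/crm"]},
]

print(source_target_backlog(records, "Acme"))
```

The sorted output is the "Source Target Backlog": domains near the top are the ones most often standing in for your brand.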

What Topify’s AI Answer Monitoring Platform Covers in Practice

Most AI answer monitoring software stops at data collection. You get a dashboard, a visibility score, and a list of mentions. What you do with that data is your problem.

That’s the gap Topify closes.

Topify is built as an end-to-end AI search optimization platform, covering the full cycle from monitoring to execution. Here’s what that looks like in practice.

Multi-platform AI answer monitoring: Automated scanning across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major platforms. Cross-platform discrepancies, where your brand ranks well on one engine and disappears on another, are surfaced automatically rather than discovered by accident.

Source Analysis: Topify identifies the specific third-party domains the AI is using to form its brand descriptions. This is the “reverse-engineering the RAG pipeline” function that most tools don’t offer. If a niche industry publication is consistently cited in answers that mention your competitor, that’s your next content target.

Dynamic Competitive Benchmarking: Competitive monitoring isn’t a static list. New entrants appear in AI recommendation lists all the time. Topify’s system automatically detects when a new competitor shows up alongside your brand and benchmarks their visibility against yours in real time.

One-Click Execution: Once monitoring data identifies a citation gap or a content opportunity, Topify’s AI agent can generate and deploy optimized content with a single action. The monitoring loop and the execution loop are connected, not separated by a strategy meeting.

The platform is trusted by 50+ enterprises and startups, and the team behind it includes founding researchers from OpenAI and Google SEO practitioners with documented 0-to-1M organic traffic builds. That combination of LLM research depth and practical SEO experience is reflected in the accuracy and actionability of the monitoring data.

AI Answer Monitoring Analytics Pricing: What You’re Actually Paying For

Before evaluating any AI answer monitoring solution, it’s worth understanding what the real cost comparison looks like.

Manual monitoring of 100 prompts across five AI platforms takes an average of 3.6 hours per week per employee. At a fully-loaded cost of $60 to $80 per hour for a mid-level marketing manager, that’s $216 to $288 per week, or roughly $11,000 to $15,000 per year, for coverage that is still statistically unreliable due to the non-deterministic nature of LLM outputs.

Automated platforms typically run at a fraction of that cost and return data that no human process can replicate at scale.

Topify’s pricing is structured around usage volume:

| Plan | Price | What You Get |
|---|---|---|
| Basic | $99/mo | 100 prompts, 9,000 AI answer analyses, 4 platforms, 4 seats |
| Pro | $199/mo | 250 prompts, 22,500 analyses, 8 projects, 10 seats |
| Enterprise | from $499/mo | Custom prompt sets, dedicated account manager, advanced API |

The economics are straightforward. A Pro plan at $199 per month covers 250 prompts across multiple platforms with statistical sampling that a manual process can’t replicate. The ROI threshold is low.

Businesses that adopt AI automation for marketing processes report 50% faster processing times and a 30% reduction in operational costs. In the context of AI answer monitoring, that translates to faster competitive response cycles and more hours redirected toward strategy and execution rather than manual data collection.

Conclusion

The brands winning in AI search in 2026 aren’t necessarily the ones with the best products. They’re the ones that know exactly where they stand in the AI answer ecosystem, and why.

An AI answer monitoring system gives you that knowledge. Not through occasional manual checks, but through structured, multi-platform tracking of visibility, sentiment, position, prompt volume, and citation sources. The data tells you where you’re losing mindshare, which specific third-party domains are shaping your brand narrative, and exactly what to do about it.

The gap between manual checking and systematic monitoring is the gap between operating blind and operating with competitive intelligence. For most brands, closing that gap starts with setting up the right infrastructure.

Topify provides that infrastructure, from prompt management and cross-platform scanning to source gap analysis and one-click content execution, all in a single platform designed for teams that need to move fast.

FAQ

What is AI answer monitoring analytics?

AI answer monitoring analytics is the systematic practice of tracking how a brand is mentioned, described, and cited across generative AI platforms like ChatGPT, Gemini, and Perplexity. It measures frequency (visibility rate), tone (sentiment score), competitive positioning (rank), and citation sources to give marketing teams a structured view of their brand’s narrative health in conversational search.

How does an AI answer monitoring system work?

The system programmatically queries multiple AI models using a curated “Prompt Matrix” of high-intent user questions. It parses each AI response to extract brand mentions, competitive rankings, and the specific source URLs the AI used as evidence. That data is then aggregated into a dashboard to track trends over time. Platforms like Topify automate this entire process across ChatGPT, Gemini, Perplexity, DeepSeek, and other major engines.
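The parsing step can be sketched without any AI API at all, since it operates on the captured response text. The example below is a simplified assumption of how extraction might work: it finds tracked brands by first appearance and pulls out any URLs. The sample text and brand names are invented, and production systems would need more robust matching (aliases, misspellings, structured citations).

```python
import re

# Matches http(s) URLs up to whitespace or a closing bracket.
URL_RE = re.compile(r"https?://[^\s)\]>]+")


def parse_answer(text: str, brands: list[str]) -> dict:
    """Extract tracked brands (ordered by first appearance) and cited URLs."""
    found = []
    for brand in brands:
        # Whole-word, case-insensitive match; assumes names start and end
        # with a letter or digit.
        match = re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE)
        if match:
            found.append((match.start(), brand))
    return {
        "brands_in_order": [brand for _, brand in sorted(found)],
        "citations": [url.rstrip(".,;") for url in URL_RE.findall(text)],
    }


# Invented response text.
response = (
    "For mid-size teams, Globex is the strongest pick "
    "(https://example.com/review). Acme is a budget alternative."
)

print(parse_answer(response, ["Acme", "Globex", "Initech"]))
```

The order of first appearance is used here as a stand-in for rank. That works for list-style answers but is only an approximation for free-form prose.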

What are examples of AI answer monitoring analytics in practice?

Three concrete examples: (1) AI Visibility Score, a weighted metric combining inclusion rate and position rank; (2) Share of Voice, your mention rate versus competitors for a specific category; (3) Source Recurrence, tracking which third-party domains are most frequently cited in answers relevant to your brand. These three alone cover the core of a working monitoring program.

Is there a checklist for AI answer monitoring analytics?

A working 2025/2026 checklist should include: build a prompt set covering the full buyer journey; monitor at least five platforms (ChatGPT, Gemini, Perplexity, Claude, Copilot); audit technical accessibility (robots.txt configuration, JavaScript rendering); analyze citation sources to identify third-party influence targets; track sentiment alignment between AI descriptions and your brand positioning; and establish competitive Share of Voice benchmarks.
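The robots.txt item on this checklist is easy to automate with Python's standard library. The sketch below parses a robots.txt body and reports which AI crawlers are blocked from a given URL. The user-agent tokens listed are ones commonly published by the respective vendors, but treat the list as an assumption and verify it against each vendor's current documentation. The robots.txt content and URL are examples.

```python
from urllib.robotparser import RobotFileParser

# Assumed list of AI crawler tokens; check vendor docs for current names.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_bots(robots_txt: str, url: str) -> list[str]:
    """Return the AI user agents that robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [ua for ua in AI_USER_AGENTS if not parser.can_fetch(ua, url)]


# Example robots.txt that blocks one AI crawler and allows everything else.
ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_bots(ROBOTS, "https://example.com/product"))
```

To audit a live site, `RobotFileParser` can also fetch the file itself via `set_url()` and `read()`. Note that this only checks robots.txt rules; blocks applied at the CDN or firewall level will not show up here.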

What are the best tools for AI answer monitoring analytics?

Topify is the strongest option for teams that need integrated monitoring and execution in one platform. It covers seven metrics across all major AI engines and connects monitoring data directly to content strategy and deployment. For teams with more specific needs, GetMint is useful for tracing AI outputs back to specific source URLs, while enterprise teams needing geographic and historical reporting depth may also evaluate other platforms.