Your brand holds a top-three Google ranking. Traffic looks healthy. Your SEO team is happy.

Then someone searches “best [your category] tools” on ChatGPT, and your brand doesn’t appear once.

That gap — between traditional search performance and AI visibility — is exactly what AI answer monitoring is designed to close. And for most marketing teams, it’s still completely untracked.

The Search Behavior Shift No Ranking Tool Can See

Consumer research behavior has changed faster than most marketing stacks have adapted.

By mid-2025, 51% of consumers reported that generative AI had fundamentally changed how they research products and services, according to Gartner. Of those, 71% started phrasing queries more conversationally, going directly to ChatGPT, Perplexity, or Gemini before touching a traditional search engine.

The ripple effect on traditional SEO metrics is measurable. Zero-click queries have risen to roughly 60% of all searches in some categories. Click-through rates on organic results have dropped anywhere from 18% to 70% depending on the sector.

Here’s the thing: none of that shows up in your Ahrefs dashboard.

How AI Answer Monitoring Differs from Traditional SERP Tracking

This is the question most teams ask first, and it’s worth answering precisely.

Traditional SERP tracking watches where you rank for specific keywords on a static results page. The signals that matter are backlinks, on-page optimization, and crawl authority. The primary output is a rank number and a traffic estimate.

AI answer monitoring tracks something structurally different.

| Dimension | Traditional SERP Tracking | AI Answer Monitoring |
|---|---|---|
| What you’re tracking | Keyword positions and traffic | Brand mentions, sentiment, and citations in AI-generated answers |
| Data source | Search engine index | Live model-generated outputs |
| Competitive view | Position on a results list | Share of voice inside a narrative |
| Optimization signal | Backlinks and meta-tags | Content authority, entity recognition, source citations |
| Primary metric | Click-through rate (CTR) | Brand mention rate and citation share |

The critical distinction: a traditional search engine ranks documents. An AI answer engine synthesizes a response. Your brand either gets included in that synthesis, or it doesn’t — and rank position has almost nothing to do with it.

A study analyzing over 5.5 million AI responses found that holding a top-three organic ranking on Google offers only an 8% chance of being cited in a Google AI Overview. Even more striking: 80% of sources featured in AI-generated summaries don’t rank on the first page of traditional search results for the same query.

Traditional SEO tracking can’t see any of that.

Why Your #1 Ranking Doesn’t Guarantee AI Visibility

The underlying reason is architectural.

Google’s PageRank evaluates importance through link graphs. The more authoritative sites link to you, the higher you rank. It’s a voting system built on hyperlinks.

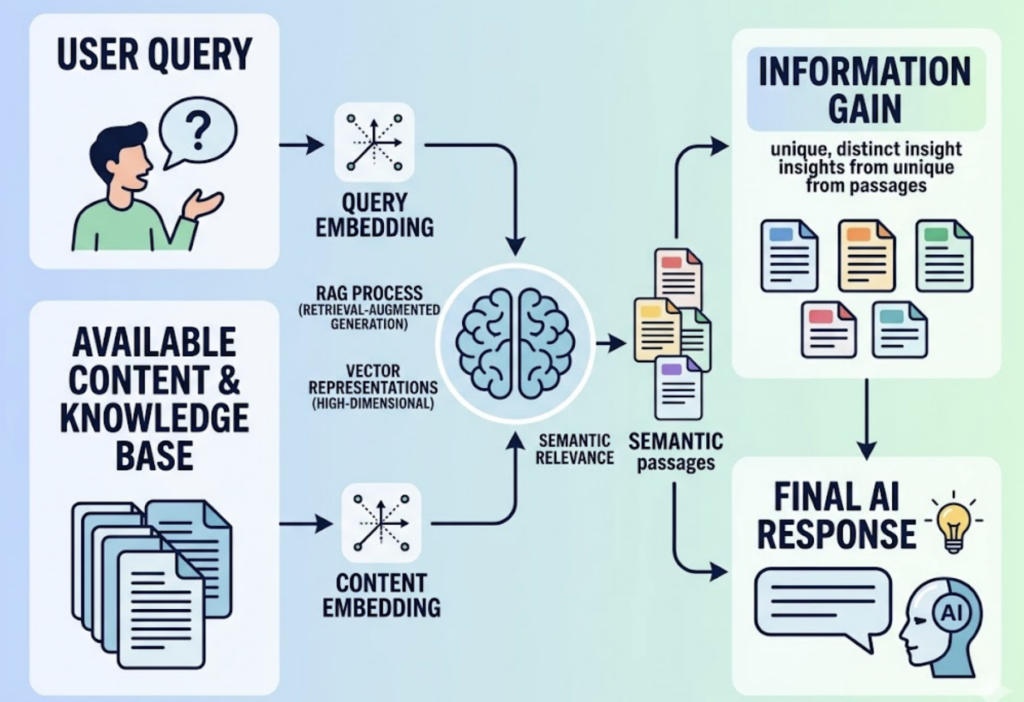

Many AI answer platforms rely on Retrieval-Augmented Generation (RAG). Instead of counting links, the system encodes both the user’s question and available content into high-dimensional vector representations, then surfaces the passages that are semantically most relevant. The system is looking for unique, incremental value (what researchers call “Information Gain”), not aggregate link authority.

The result: a brand with a modest backlink profile but deeply informative, structured content can consistently outperform a link-rich competitor in AI answers.
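The retrieval step described above can be sketched with toy vectors. This is a minimal illustration, not how any particular AI platform is implemented: the three-dimensional vectors, the passage names, and the `rank_passages` helper are all assumptions made up for the example, and real systems use learned embeddings with far more dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)

def rank_passages(query_vec, passages):
    """Return passage names ordered from most to least similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), name)
              for name, vec in passages.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored]

# Hypothetical embeddings (assumed values, for illustration only).
query = [0.9, 0.1, 0.0]
passages = {
    "detailed_comparison_guide": [0.8, 0.2, 0.1],
    "link_rich_homepage": [0.1, 0.9, 0.3],
    "press_release": [0.3, 0.3, 0.9],
}

ranking = rank_passages(query, passages)
print(ranking)
```

Nothing in this ranking looks at links. The passage whose vector points in nearly the same direction as the query comes first, which is why content that closely matches the question can surface ahead of a page with more backlinks.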

Backlinks correlate with AI visibility at just 0.218, while brand mentions correlate at 0.664. That’s not a minor difference; it’s a different game entirely.

5 Signals a Proper AI Answer Monitoring Dashboard Should Show You

If you’re building (or evaluating) an AI answer monitoring system, these are the five metrics that actually matter.

1. Brand Mention Rate: The percentage of tested prompts where your brand appears in the AI-generated response. This is your baseline visibility score. Most serious platforms, including Topify, test this across batches of 100+ prompts per category to establish a reliable baseline.

2. Sentiment Score: Not a binary positive/negative rating, which is too blunt for AI responses. You need contextual sentiment: is the AI describing you as a “budget option,” a “reliable choice,” or an “innovative leader”? Only 25% of marketers report confidence that AI summaries accurately reflect their brand positioning. Monitoring sentiment shifts is how you catch narrative drift before it becomes a reputation problem.

3. Citation Source Mapping: AI models don’t pull from your website alone. They synthesize from G2 reviews, Reddit threads, TechRadar articles, and dozens of other third-party sources. Citation mapping tells you which external domains are shaping your AI representation, and which ones you need to influence. Topify’s Source Analysis feature tracks the exact URLs and domains AI platforms cite when mentioning your brand.

4. Position Within Recommendations: In comparative queries (“What are the best project management tools for remote teams?”), the order in which brands appear correlates directly with user trust. Tracking your position relative to competitors, not just whether you appear, is how you measure competitive standing in AI answers.

5. Prompt Coverage: Are you surfacing across different intent stages, or only when someone searches your brand name directly? Effective monitoring requires testing 20 to 50 unique prompts per category, spanning informational, commercial, and comparison intent. Topify’s AI answer monitoring platform continuously surfaces new prompt opportunities as AI recommendation patterns evolve.
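Two of these signals, brand mention rate and position within recommendations, reduce to simple counting once the AI responses have been collected. The sketch below is a hedged illustration only: the brand names, the sample responses, and both helper functions are hypothetical, and the plain substring matching used here is an assumption that a production system would likely replace with proper entity recognition.

```python
def mention_rate(responses, brand):
    """Share of responses (0.0 to 1.0) that mention the brand, case-insensitively."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)

def recommendation_position(response, brands):
    """1-based rank of each brand by first appearance in the response; None if absent."""
    lowered = response.lower()
    found = [(lowered.find(b.lower()), b) for b in brands if b.lower() in lowered]
    found.sort()  # earliest first appearance comes first
    order = {b: i + 1 for i, (_, b) in enumerate(found)}
    return {b: order.get(b) for b in brands}

# Hypothetical AI responses to a batch of prompts (made-up sample data).
responses = [
    "For remote teams, BrandA and BrandB are solid picks.",
    "Many reviewers recommend BrandB for its integrations.",
    "BrandC is a budget option; BrandA is the more reliable choice.",
    "It depends on your team size and workflow.",
]

rate = mention_rate(responses, "BrandA")
positions = recommendation_position(responses[2], ["BrandA", "BrandB", "BrandC"])
print(f"BrandA mention rate: {rate:.0%}")
print(positions)
```

With only four sample responses the rate is not meaningful; the point of the larger prompt batches mentioned above is to make this percentage stable enough to compare week over week.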

From Raw Data to Action: What a Monitoring Workflow Actually Looks Like

Data without a workflow is just noise.

A professional AI answer monitoring workflow runs in three stages.

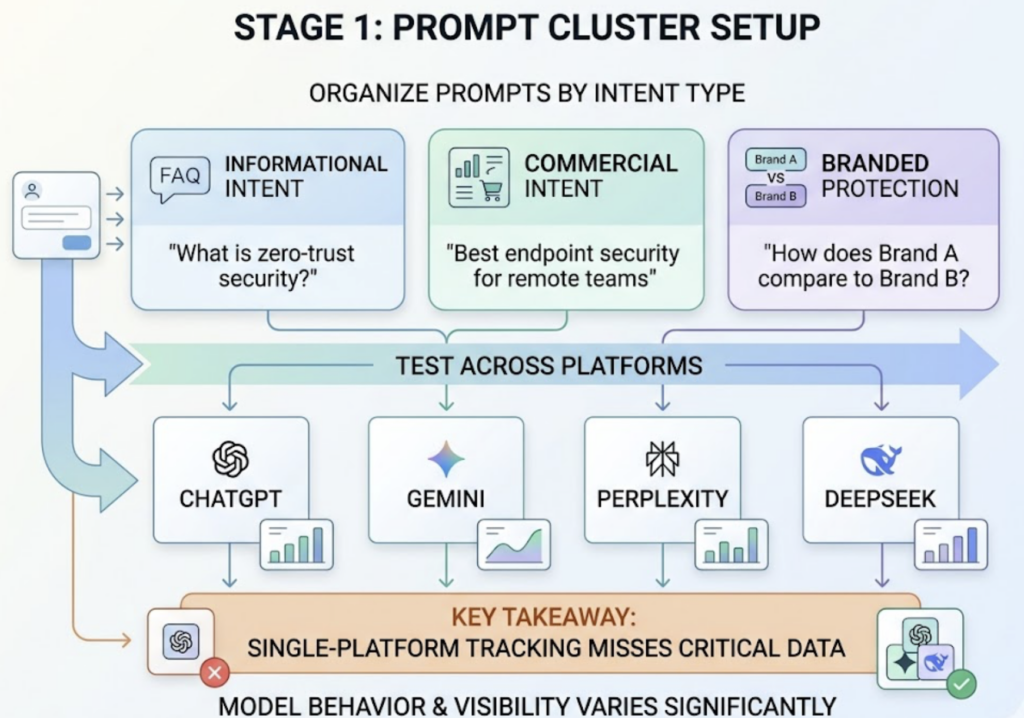

Stage 1: Prompt Cluster Setup. Organize prompts by intent type: informational (“What is zero-trust security?”), commercial (“Best endpoint security for remote teams”), and branded protection (“How does Brand A compare to Brand B?”). Test each cluster across ChatGPT, Gemini, Perplexity, and DeepSeek. Single-platform tracking misses too much: model behavior varies significantly across platforms, and so does your visibility.

Stage 2: Mention Rate and Sentiment Monitoring. Once tracking is live, watch for two things: drops in mention rate (which may signal that a competitor has executed a successful generative engine optimization (GEO) campaign or that a model update changed citation behavior) and sentiment shifts (which give you early warning when AI starts framing your brand differently than you intend).

Stage 3: Citation Gap Analysis. A citation gap is when an AI mentions a competitor but skips you for a relevant query. By analyzing which domains the AI does cite, you can identify what content you’re missing. If a competitor’s industry report is consistently getting cited, the tactical response is to publish a more current, more data-dense version of that content to capture the next retrieval cycle.
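The citation gap check in Stage 3 can be sketched as a small filter-and-count step. This is an illustrative sketch under stated assumptions: the `answers` records, their `text` and `cited_urls` fields, the brand names, and the example.com URLs are all hypothetical, and a real monitoring tool would supply this data in its own format.

```python
from collections import Counter
from urllib.parse import urlparse

def citation_gaps(answers, our_brand, competitor):
    """Count domains cited in answers that mention the competitor but not our brand."""
    gap_domains = Counter()
    for answer in answers:
        text = answer["text"].lower()
        if competitor.lower() in text and our_brand.lower() not in text:
            for url in answer["cited_urls"]:
                gap_domains[urlparse(url).netloc] += 1
    return gap_domains

# Hypothetical collected answers with the sources each one cited.
answers = [
    {"text": "BrandB leads this category according to recent reports.",
     "cited_urls": ["https://reviews.example.com/brandb",
                    "https://news.example.org/report"]},
    {"text": "BrandA and BrandB both offer strong remote features.",
     "cited_urls": ["https://reviews.example.com/compare"]},
    {"text": "BrandB is often recommended for small teams.",
     "cited_urls": ["https://reviews.example.com/small-teams"]},
]

gaps = citation_gaps(answers, our_brand="BrandA", competitor="BrandB")
print(gaps.most_common())
```

The domains at the top of this count are the ones feeding answers where the competitor appears and you do not, which makes them the first places to look for missing or outdated content.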

Including specific named statistics can boost AI citation probability by up to 40%, according to research from Princeton and Semrush. That’s actionable. Most content teams just don’t know to target it.

Picking an AI Answer Monitoring Tool That Actually Covers Your Landscape

Not all platforms are equal, and the differences matter operationally.

Three questions should anchor your evaluation:

Does it cover the AI platforms your audience actually uses? Single-model tracking is a blind spot by design. Your audience isn’t using just ChatGPT — they’re using Gemini for some queries, Perplexity for research-heavy questions, and increasingly DeepSeek in certain markets.

Can you see competitor data? Share-of-voice visibility — knowing how your brand stacks up against specific rivals in AI answers — is what turns monitoring from a reporting function into a competitive intelligence function.

Does it give you source-level citation analysis? Knowing you’re mentioned is table stakes. Knowing which external URLs the AI is citing to describe you (and your competitors) is where optimization decisions get made.
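The share-of-voice view from the second question can be approximated with a short calculation. The definition used here (each brand’s mentions divided by all tracked-brand mentions, counting at most one mention per response) is an assumption chosen for the sketch, not a description of how Topify or any other tool computes it, and the brand names and responses are made up.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions, counting at most one per response."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Hypothetical AI responses (made-up sample data).
responses = [
    "BrandA and BrandB are popular.",
    "BrandB is the usual pick.",
    "Consider BrandB or BrandC.",
]

sov = share_of_voice(responses, ["BrandA", "BrandB", "BrandC"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

Unlike mention rate, which looks at one brand in isolation, this number only makes sense relative to the set of rivals you choose to track, so the competitor list should stay fixed between reporting periods.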

Topify is built around all three. The platform tracks brand performance across ChatGPT, Gemini, Perplexity, DeepSeek, and other major AI platforms via seven core metrics: visibility, sentiment, position, volume, mentions, intent, and conversion rate. The Basic plan starts at $99/month and includes 9,000 AI answer analyses — enough for a mid-sized team to run continuous monitoring across multiple prompt clusters without hitting capacity limits. For teams managing multiple brands or clients, the Pro plan scales to 22,500 analyses across 8 projects.

What separates Topify from point-solution trackers is the execution layer. Beyond surfacing citation gaps, the platform’s AI agent can suggest content interventions and deploy strategies based on what the monitoring data shows — without requiring a separate workflow to act on the insights.

FAQ

How does AI search tracking differ from traditional SERP tracking?

Traditional SERP tracking monitors keyword rankings on search engine results pages, using backlink data and traffic metrics as primary signals. AI search tracking monitors how and whether your brand appears in AI-generated answers across platforms like ChatGPT, Gemini, and Perplexity. The object of measurement is different — rank position versus mention rate and citation share — and the optimization levers are different too. AI visibility depends on content authority, entity recognition, and source citation patterns, not link graphs.

What AI platforms should my monitoring tool cover?

At minimum: ChatGPT, Google Gemini, and Perplexity. These three account for the largest share of AI-driven research behavior in most markets. Depending on your audience geography, DeepSeek and Grok coverage may also be relevant. Any tool that tracks only one or two platforms will systematically undercount your actual visibility gaps.

How frequently should I run AI answer monitoring reports?

A weekly cadence works for tracking long-term trends and feeding quarterly strategy reviews. Daily monitoring is worth the investment in competitive categories where rivals are actively running GEO campaigns, since a competitor’s visibility can shift meaningfully within days after a major content push or a model update.

Conclusion

The shift from traditional SERP tracking to AI answer monitoring isn’t a trend to watch. It’s an operational gap that’s already affecting brand visibility for most companies.

Traditional ranking tools tell you where you are on Google. They don’t tell you whether ChatGPT recommends you, how Perplexity frames your brand relative to competitors, or which third-party domains are shaping your AI narrative. Those are different questions, and they require a different monitoring system.

The brands that close this gap early — before it becomes a market share problem — will be the ones that treat AI visibility as a measurable, trackable growth channel. Not just something to “keep an eye on.”
