You’ve searched “best AEO tool,” opened G2, and found a dozen platforms with 4.5-star ratings. Every one of them claims to track AI search visibility. Every one of them shows a clean dashboard screenshot. But after reading 40 reviews across five tools, you still can’t tell which one will actually tell you something useful.

That’s not a research problem. That’s a structural problem with how AEO tools get reviewed.

Why G2 Reviews on AEO Tools Are Hard to Parse

The G2 Score is calculated from two inputs: Satisfaction and Market Presence. Satisfaction itself is built from metrics like Ease of Use, Quality of Support, and whether the product “meets requirements.” In a category like AEO, that creates a blind spot: none of these inputs directly measures whether a tool’s tracking data is accurate or current.

A tool can score well on Ease of Use because its interface looks polished, while its underlying tracking engine suffers from what analysts call “model freeze,” meaning it can’t capture real-time shifts in how LLMs retrieve and cite sources. You’d never know that from the star rating.

There’s also the incentivized review problem. Review platforms label incentivized entries, but peer-reviewed research in the Journal of Marketing Research found that incentivized reviews systematically use more positive language and fewer negative words than unincentivized ones, which skews the sample toward satisfied customers. Unhappy users don’t usually get an Amazon gift card to share their frustration.

On top of that, the AEO reviewer pool is unusually mixed. A veteran SEO specialist might rate a tool poorly because it lacks API access or granular prompt-level data. A content marketer at the same company might give it five stars for the same dashboard. Both reviews are “authentic.” Neither tells you much about whether the tool can do what you need.

The ratings tell you what users felt. The patterns tell you what actually works.

3 Strengths That Keep Appearing in Top-Rated AEO Tools

Strip out the noise and you start to see consistent signals in reviews for platforms that users actually stick with.

Cross-platform coverage is the most common differentiator. Only 11% of domains are cited by both ChatGPT and Perplexity for the same set of queries. A tool that only tracks Google AI Overviews is leaving most of the picture dark. Reviews that praise multi-engine visibility tend to use specific language: “the only tool that shows us how we look on Perplexity” or “finally seeing DeepSeek data alongside ChatGPT.”
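To make the overlap idea concrete, here is a minimal sketch of one way to measure it. It assumes “cited by both” means the share of all domains cited by either engine (the union) that both engines cite. The source doesn’t define its 11% figure this precisely, and the domain sets are made-up sample data.

```python
from __future__ import annotations


def cited_by_both_share(engine_a: set[str], engine_b: set[str]) -> float:
    """Share of all cited domains (the union) that both engines cite."""
    union = engine_a | engine_b
    if not union:
        return 0.0
    return len(engine_a & engine_b) / len(union)


def missed_by_single_engine(tracked: set[str], untracked: set[str]) -> set[str]:
    """Domains a one-engine tool never sees: cited only by the engine it doesn't track."""
    return untracked - tracked


# Illustrative sample data, not real measurements.
chatgpt = {"reddit.com", "g2.com", "wikipedia.org"}
perplexity = {"reddit.com", "youtube.com"}

print(cited_by_both_share(chatgpt, perplexity))              # 0.25
print(sorted(missed_by_single_engine(chatgpt, perplexity)))  # ['youtube.com']
```

The lower that overlap, the more a single-engine tool leaves out.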

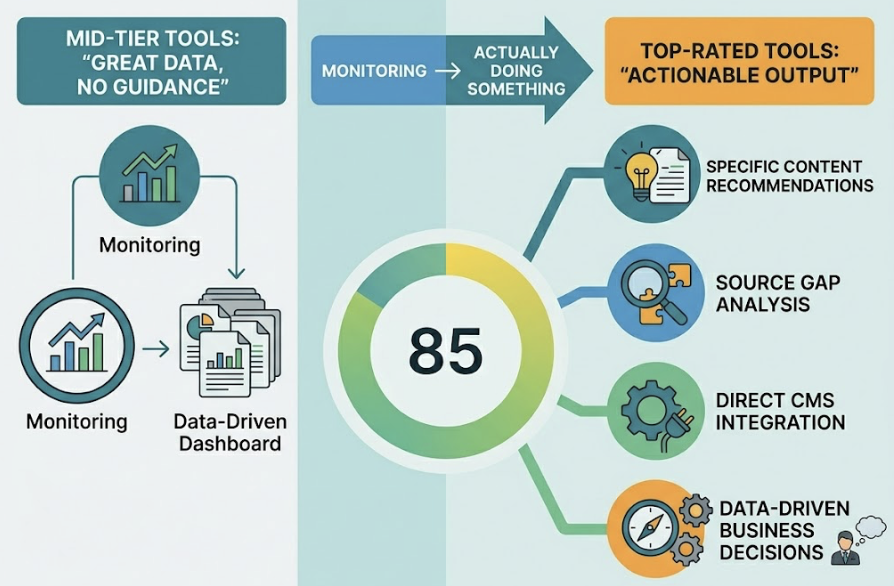

Actionable output is the second consistent signal. The complaint in mid-tier reviews is almost always the same: “great data, no guidance.” Top-rated tools close what analysts call the “actionability gap” by moving beyond dashboards into specific content recommendations, source gap analysis, or direct CMS integration. Users describe the shift as going from “monitoring” to “actually doing something.”

Fast setup matters more than it seems. For teams without a dedicated SEO data scientist, a tool that takes three weeks to configure is a tool that won’t get used. Tools whose reviews mention measurable improvements within days tend to show higher long-term retention scores.

The Gaps Nobody Mentions in the 5-Star Reviews

Here’s where the category gets interesting. Positive reviews are often written within the first 30 to 60 days of using a product, when everything feels fresh and the onboarding is still top of mind. The structural weaknesses don’t show up until later.

Binary visibility tracking is the most common hidden gap. Most basic AEO tools log a brand mention as a “win” regardless of context. But an AI response that reads “Brand X is a budget option with mixed reviews” is not a win. It’s a reputation signal that requires action. Tools that don’t layer sentiment analysis and position tracking on top of mention data are giving you an incomplete picture.
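The difference between binary tracking and context-aware tracking can be sketched in a few lines. This is a hypothetical illustration: it assumes some upstream classifier already assigns each answer a sentiment score between -1 and 1 and records the brand’s position among the brands named. The threshold of 0.2 is an arbitrary choice for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIAnswer:
    """One AI answer as a hypothetical tracker might record it."""
    brand_mentioned: bool
    sentiment: float          # assumed upstream classifier score in [-1.0, 1.0]
    position: Optional[int]   # 1-based order among brands named; None if absent


def binary_win(answer: AIAnswer) -> bool:
    """What basic mention tracking counts: any mention is a win."""
    return answer.brand_mentioned


def classify(answer: AIAnswer, positive_threshold: float = 0.2) -> str:
    """Separate recommendations from neutral or negative mentions."""
    if not answer.brand_mentioned:
        return "absent"
    if answer.sentiment < 0:
        return "mentioned_negatively"
    if answer.sentiment >= positive_threshold and answer.position == 1:
        return "recommended"
    return "mentioned_neutral"


# "Brand X is a budget option with mixed reviews" vs. a top recommendation.
budget_option = AIAnswer(brand_mentioned=True, sentiment=-0.4, position=3)
top_pick = AIAnswer(brand_mentioned=True, sentiment=0.7, position=1)
print(binary_win(budget_option), classify(budget_option))  # True mentioned_negatively
print(binary_win(top_pick), classify(top_pick))            # True recommended
```

Both answers count as a “win” under binary tracking; only one of them is a recommendation.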

Being mentioned is not the same as being recommended.

Data freshness is the second gap. Research indicates that 40% to 60% of cited domains in AI Overviews can change within a single month. If your AEO tool refreshes data less often than weekly, it may be describing a citation landscape that no longer exists. For brands that are actively building authority or fixing AI hallucinations, delayed data means delayed action.
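A simple way to check this against your own data is to compare two snapshots of cited domains and flag data older than your refresh window. The sketch below assumes “change” means the share of previously cited domains that dropped out; the source doesn’t define it exactly, and the snapshots are invented.

```python
from __future__ import annotations

from datetime import date, timedelta


def citation_churn(previous: set[str], current: set[str]) -> float:
    """Fraction of previously cited domains that no longer appear in the current snapshot."""
    if not previous:
        return 0.0
    return len(previous - current) / len(previous)


def is_stale(last_refresh: date, today: date, max_age: timedelta = timedelta(days=7)) -> bool:
    """True when data is older than the chosen refresh window (weekly by default)."""
    return today - last_refresh > max_age


# Illustrative snapshots one month apart (made-up domains).
april = {"a.com", "b.com", "c.com", "d.com", "e.com"}
may = {"a.com", "b.com", "f.com", "g.com"}

print(citation_churn(april, may))                     # 0.6
print(is_stale(date(2024, 5, 1), date(2024, 5, 10)))  # True
```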

Weak competitive benchmarking is the third. Many tools focus exclusively on your own brand’s visibility without showing you where you stand relative to competitors for specific prompts. Without that context, you can’t tell whether your 42% visibility rate is strong or whether your closest competitor is at 78% for the same query set.
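Here is a minimal sketch of that benchmark, assuming you already know which brands each AI answer named for a fixed prompt set. “Visibility rate” here is the share of prompts where a brand appears at all; “share of voice” is each brand’s share of all tracked-brand mentions. Both definitions and the sample data are illustrative assumptions, not a standard.

```python
from __future__ import annotations

from collections import Counter


def visibility_rates(answers: dict[str, set[str]], brands: list[str]) -> dict[str, float]:
    """Share of prompts in which each brand appears at all."""
    counts = Counter(b for named in answers.values() for b in named)
    total = len(answers)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


def share_of_voice(answers: dict[str, set[str]], brands: list[str]) -> dict[str, float]:
    """Each tracked brand's mentions as a fraction of all tracked-brand mentions."""
    counts = Counter(b for named in answers.values() for b in named if b in brands)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical prompt set: which brands each AI answer named.
answers = {
    "best aeo tool": {"YourBrand", "Rival"},
    "aeo tool for agencies": {"Rival"},
    "track chatgpt citations": {"YourBrand", "Rival"},
    "aeo tool pricing": {"Rival"},
    "perplexity visibility": set(),
}
brands = ["YourBrand", "Rival"]

print(visibility_rates(answers, brands))  # {'YourBrand': 0.4, 'Rival': 0.8}
print({b: round(v, 2) for b, v in share_of_voice(answers, brands).items()})
# {'YourBrand': 0.33, 'Rival': 0.67}
```

A 40% visibility rate looks very different once you see a competitor at 80% on the same prompts.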

You can’t optimize what you can’t benchmark against.

What 3-Star Reviews Tell You That 5-Stars Don’t

Moderate reviews are underrated as a research tool. They tend to come from users who are committed enough to stick around after the honeymoon period but frustrated enough to be specific about what isn’t working.

A few patterns show up consistently across 3-star AEO feedback.

The “better but buggy” syndrome is common. Users acknowledge the feature exists but note it’s not reliable at scale. Common examples include “N/A” rankings appearing frequently for specific prompt sets, fragmented reporting that makes it hard to connect AI data to traditional SEO metrics, and delayed insights that arrive after the opportunity has passed.

Pricing opacity is a high-frequency complaint. Several platforms in the AEO space position themselves as affordable entry points, then gate the features that actually matter behind enterprise tiers or credit-based add-ons. When users discover that full multi-engine coverage or competitor benchmarking requires a custom contract, that’s when the 3-star reviews get written.

Reporting that doesn’t land with stakeholders is the third theme. Practitioners can see the data. Explaining it to a CMO or a board is harder. Tools that provide only raw visibility scores leave teams without a narrative for why AI search matters or what’s changing. Reviews that mention “hard to justify budget internally” often trace back to this exact problem.

5 Things to Check Before Picking an AEO Tool

Based on consistent patterns across G2 feedback and technical analysis of how AI citation works, here’s a practical checklist for evaluation:

1. Does it track more than one AI engine? ChatGPT, Perplexity, Gemini, and Google AI Overviews have meaningfully different citation ecosystems. A tool that only covers one or two is giving you partial data. If your audience uses multiple AI platforms, your tracking needs to match.

2. Can it show you where you rank relative to competitors? “Share of AI Voice” is the metric that turns individual visibility data into competitive intelligence. Without it, you’re tracking effort, not position.

3. How often does it refresh data? Given that citation patterns can shift 40-60% month over month, weekly updates are a floor, not a feature. Daily or near-real-time tracking is what enterprise teams need.

4. Does it explain why AI cites a source, not just that it does? This is the source analysis question. Knowing which third-party domains, forums, and review platforms are driving AI citations in your category tells you exactly where to build authority. Without it, your content strategy is guesswork.

5. Can a non-technical team member actually use the output? A tool that requires a data scientist to interpret findings will have low adoption. The best platforms translate complex tracking data into clear, shareable reports that make sense to a marketing manager, a CMO, or a client.

How Topify Addresses the Gaps G2 Reviews Keep Identifying

Topify was built specifically for generative search rather than as a legacy SEO tool with AI features bolted on, so the structural gaps that recur in G2 reviews of those tools don’t show up in the same way.

On multi-platform coverage, Topify tracks ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, Qwen, and other major AI platforms. For brands operating in global markets, this matters. Regional AI engines have their own citation patterns, and a tool that only covers North American platforms misses a significant portion of the picture.

On the sentiment and position gap, Topify uses a seven-metric framework that goes significantly beyond mention tracking. The Sentiment Quotient scores AI descriptions on a -100 to +100 scale. The Answer Placement Score (APS) weights where in the response a brand appears, because a first-mention recommendation carries more authority than a trailing footnote. The CVR (Conversion Visibility Rate) estimation connects AI presence to revenue-relevant behavior, which speaks directly to the stakeholder reporting problem.
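Topify’s exact formulas aren’t described here, so the sketch below is a hypothetical illustration of the two ideas, not Topify’s method. It assumes an upstream classifier scores each answer’s sentiment between -1 and 1, and it uses reciprocal rank (1 for first mention, 1/2 for second, and so on) as a stand-in for position weighting.

```python
from __future__ import annotations


def sentiment_quotient(raw_scores: list[float]) -> float:
    """Average assumed classifier scores in [-1, 1], rescaled to -100..+100."""
    if not raw_scores:
        return 0.0
    clipped = [max(-1.0, min(1.0, s)) for s in raw_scores]
    return 100.0 * sum(clipped) / len(clipped)


def placement_score(positions: list[int | None]) -> float:
    """Mean reciprocal rank: 1.0 for a first mention, 0.5 for second, 0 when absent."""
    if not positions:
        return 0.0
    return sum(1.0 / p for p in positions if p) / len(positions)


# Four hypothetical answers to the same prompt set.
print(sentiment_quotient([0.5, -0.5, 1.0, 0.0]))  # 25.0
print(placement_score([1, 2, None, 4]))           # 0.4375
```

Reciprocal rank is just one reasonable weighting; any scheme that decays with position captures the same idea that a first mention counts for more than a trailing one.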

On source analysis, Topify reverse-engineers what analysts call “aristocratic domains”: the small cluster of high-authority sites like Reddit, YouTube, Wikipedia, and yes, G2 itself, that account for roughly 43% of all AI citations. Knowing that AI engines in your category are consistently pulling from a specific Reddit thread or a particular review page tells you exactly where to invest in authority building, rather than spreading content across channels that aren’t being read by the models.
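A rough way to see how concentrated citations are in your own category: count which domains appear across a sample of AI answers and measure what share the top few account for. The citation list below is made up, and `top_domain_share` is a hypothetical helper for illustration, not a Topify feature.

```python
from __future__ import annotations

from collections import Counter


def top_domain_share(citations: list[str], k: int) -> tuple[list[str], float]:
    """Return the k most-cited domains and the fraction of all citations they account for."""
    if not citations:
        return [], 0.0
    top = Counter(citations).most_common(k)
    share = sum(count for _, count in top) / len(citations)
    return [domain for domain, _ in top], share


# Hypothetical citations pulled from a sample of AI answers in one category.
citations = (
    ["reddit.com"] * 4
    + ["g2.com"] * 3
    + ["wikipedia.org"] * 2
    + ["blog-a.com", "blog-b.com", "news-c.com"]
)
print(top_domain_share(citations, 3))  # (['reddit.com', 'g2.com', 'wikipedia.org'], 0.75)
```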

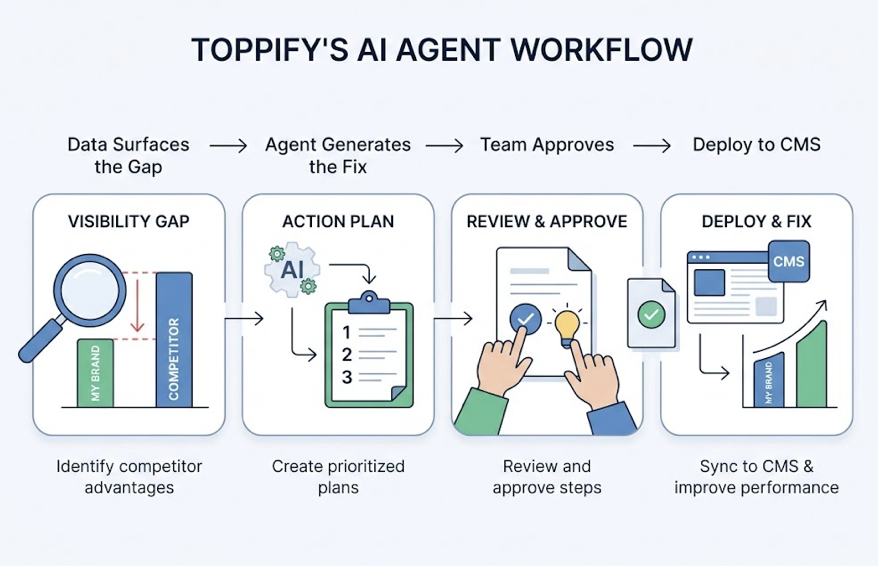

On execution, Topify’s AI Agent closes the actionability gap that shows up in so many 3-star reviews. It maps visibility gaps, identifies where competitors are being recommended instead of your brand, and generates prioritized action plans. Those plans can be deployed directly to a CMS. The workflow is: data surfaces the gap, agent generates the fix, team approves and deploys. Less time between insight and action.
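The first step of that workflow, finding prompts where a competitor is recommended and your brand isn’t, can be sketched as a simple filter. This is a hypothetical illustration assuming each answer has already been parsed into an ordered list of recommended brands; it is not Topify’s agent logic, and it leaves out prioritization and CMS deployment.

```python
from __future__ import annotations


def visibility_gaps(
    answers: dict[str, list[str]], brand: str, competitors: set[str]
) -> list[tuple[str, str]]:
    """Return (prompt, top competitor) pairs where a competitor is recommended and the brand is absent."""
    gaps = []
    for prompt, ranked in answers.items():
        if brand in ranked:
            continue  # brand already appears for this prompt
        rivals = [b for b in ranked if b in competitors]
        if rivals:
            gaps.append((prompt, rivals[0]))  # highest-placed competitor
    return gaps


# Hypothetical parsed answers: prompt -> brands in the order the AI recommended them.
answers = {
    "best aeo tool": ["Rival", "Other"],
    "aeo tool pricing": ["YourBrand", "Rival"],
    "track perplexity citations": ["Other"],
}
print(visibility_gaps(answers, "YourBrand", {"Rival"}))  # [('best aeo tool', 'Rival')]
```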

For teams evaluating their options, getting started with Topify takes significantly less time than most enterprise-grade platforms in this category. The Basic plan starts at $99/month and covers ChatGPT, Perplexity, and AI Overviews tracking with 100 prompts and 9,000 AI answer analyses.

Conclusion

G2 reviews on AEO tools are useful, but not in the way most procurement teams use them. The star rating is the least informative data point. The patterns across moderate reviews, the specific complaints about data freshness and competitive context, the features that users mention wishing existed: those are the signals worth extracting.

The short version: look for tools that go beyond binary mention tracking, refresh data frequently, provide competitive benchmarking, and generate output that non-specialists can act on. That combination is rarer than the rating distribution on G2 would suggest.

If you want to know where your brand actually stands in AI search today, the only way to find out is to start tracking it.

Frequently Asked Questions

Q: What does AEO mean in the context of G2 reviews?

A: On G2, AEO (Answer Engine Optimization) refers to software that monitors how brands appear in AI-generated responses across platforms like ChatGPT, Perplexity, and Google AI Overviews. Reviews in this category typically focus on visibility tracking accuracy, ease of use, and whether the tool provides actionable guidance beyond raw data.

Q: How reliable are G2 ratings for AEO tools?

A: G2 ratings provide a useful starting point but come with specific limitations in the AEO category. The space is still relatively new, which means reviewers often have different baselines for what “good” looks like. Research suggests a significant portion of reviews in emerging software categories may be vendor-incentivized. 3-star reviews tend to be more diagnostic than 5-star reviews because they come from users who have spent enough time with the product to identify real friction points.

Q: What’s the difference between AEO and GEO tools?

A: AEO (Answer Engine Optimization) has been around since roughly 2015 and focuses on optimizing for featured snippets, voice assistants, and structured Q&A formats. GEO (Generative Engine Optimization) is newer, emerging around 2023, and focuses on getting brands cited and recommended inside LLM-generated summaries from platforms like ChatGPT and Perplexity. Many tools marketed as AEO tools today are actually doing GEO work. The terms are often used interchangeably, though they represent distinct technical approaches.

Q: Which AEO tool features matter most for a small marketing team?

A: For smaller teams, the three features that tend to drive the most value are rapid setup (visible results within days, not weeks), actionable output that doesn’t require a data scientist to interpret, and cross-platform coverage that goes beyond a single AI engine. Features like one-click execution or AI-assisted content recommendations are particularly useful for teams without dedicated SEO resources.