You spent six months building domain authority, publishing content, and climbing Google rankings. Then a prospect typed “best tool for [your category]” into ChatGPT and got a list of five brands. Yours wasn’t on it. The worst part: you didn’t even know it was happening. The same prompt on Perplexity returned a completely different set of recommendations, and Gemini skipped your brand entirely while featuring two competitors you’d never heard of.

This gap between what traditional SEO dashboards show and what AI engines actually recommend is where most brands are losing ground right now. And it’s growing wider every week.

Why Manual Spot-Checks Don’t Work for AI Recommendation Tracking

The first thing most marketing teams do when they hear about AI search visibility is search for their own brand on ChatGPT. It feels productive. It’s not.

The core problem is that large language models are non-deterministic. The same prompt can produce different brand recommendations in 30% to 40% of instances, depending on when, where, and how the question is asked. That means a single manual check has roughly the same statistical value as flipping a coin.
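To see why one check says so little, it helps to put an uncertainty range around a mention rate. The sketch below uses the Wilson score interval, which is one common choice and not something the figures above depend on. The run counts are hypothetical.

```python
import math


def mention_rate_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float, float]:
    """Return (observed rate, lower bound, upper bound) via the Wilson score interval."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)


# One manual spot-check: the brand showed up in the single run.
single = mention_rate_interval(1, 1)

# Fifty scheduled runs of the same prompt (hypothetical counts): mentioned 31 times.
batch = mention_rate_interval(31, 50)

print(f"single check: rate={single[0]:.2f}, range={single[1]:.2f} to {single[2]:.2f}")
print(f"fifty runs:   rate={batch[0]:.2f}, range={batch[1]:.2f} to {batch[2]:.2f}")
```

The single check leaves a range so wide it is almost uninformative, while repeated runs narrow it to something you can compare week over week.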

It gets worse. AI outputs are shaped by variables most teams never consider: geographical location, model version (GPT-4o vs. GPT-4o-mini), user account history, and even time of day. A brand might rank as the top recommendation in New York but disappear entirely for users in London. The citation rate in the United States sits at roughly 10.31%, nearly three times higher than many non-US markets.

That’s not a rounding error. That’s a visibility blind spot.

On top of that, hallucination rates across major models range from 15% to 52%. These aren’t random errors. They fall into four specific categories of brand risk: fabrication of features your product doesn’t have, omission of key differentiators, use of outdated pricing, and misclassification of your brand as a competitor. Without systematic AI recommendation tracking, teams end up making budget decisions based on anecdotal evidence, often realizing they’ve been displaced only after leads drop.

What AI Recommendation Tracking Actually Measures

AI recommendation tracking isn’t a new name for rank tracking. Traditional SEO measures where your page appears in a list of ten blue links. AI recommendation tracking measures whether the AI chose to mention your brand at all, where it placed you relative to competitors, and how it described you in a synthesized answer.

The difference matters. In traditional search, users choose between ten results. In AI search, the model selects three to five brands and presents them as vetted recommendations. Your competition isn’t the SERP anymore. It’s the model’s internal reasoning.

Professional monitoring systems built for this shift typically organize metrics around five core dimensions:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Visibility Score | % of AI responses that mention the brand for target prompts | Tells you if the model “knows” your brand exists |
| Position Rank | Order in which the brand appears within a recommendation list | Position 1 carries a 33% citation probability; Position 10 drops to 13% |
| Sentiment Score | NLP-driven rating of the tone AI uses when mentioning the brand | Distinguishes “industry leader” from “budget alternative” |
| AI Search Volume | Estimated monthly demand for specific natural-language prompts | Shows which conversational queries are growing |
| Citation Sources | URLs and domains the AI cites to support its recommendation | Reveals which third-party sites the AI trusts more than yours |

The interplay between these metrics is where the real insight lives. A high Visibility Score paired with a low Sentiment Score means the AI knows your brand but is actively steering users away. High Sentiment with low Position means the model respects you but finds competitors more relevant to the specific prompt. This nuance disappears entirely in traditional rank tracking.

4 Steps to Set Up AI Recommendation Tracking in Practice

Step 1: Build a Prompt Library, Not a Keyword List

The foundation of AI recommendation tracking isn’t keywords. It’s prompts.

Short-tail keywords like “CRM software” don’t reflect how people query AI assistants. Instead, users ask questions like “What’s the best enterprise CRM for a mid-market manufacturing firm with 50 employees?” These conversational, high-intent queries are what you need to monitor.

The best sources for building your prompt library are already inside your organization. Sales call recordings from platforms like Gong or Chorus reveal the exact decision-making frameworks buyers use. Support tickets surface the feature gaps and bottlenecks users try to solve via AI. And Google Search Console, filtered with a regex for long-tail conversational queries, bridges the gap between traditional search behavior and AI prompts.
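If you export queries from Search Console, the same kind of filter can be run locally. Here is a rough sketch, assuming a "conversational" query starts with a question-style word and runs at least six words. Both the word list and the threshold are illustrative choices, and the sample queries are made up.

```python
import re

# Question-style openers; adjust the list to match how your buyers actually phrase things.
QUESTION_START = re.compile(
    r"^(what|which|how|why|who|can|does|is|are|should|best)\b",
    re.IGNORECASE,
)


def is_conversational(query: str, min_words: int = 6) -> bool:
    """True when the query opens like a question and is long enough to carry intent."""
    query = query.strip()
    return bool(QUESTION_START.match(query)) and len(query.split()) >= min_words


# Hypothetical exported queries.
queries = [
    "crm software",
    "what is the best crm for a mid-market manufacturing firm",
    "best crm",
    "which crm integrates with sap for 50 users",
]

conversational = [q for q in queries if is_conversational(q)]
print(conversational)
```

Search Console's own regex filter uses RE2 syntax, which differs from Python's `re` in some features, so test any pattern in the tool before relying on it.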

Aim for 20 to 50 high-intent prompts grouped by semantic interest: use cases, comparisons, and buyer personas. Topify’s High-Value Prompt Discovery feature automates this process, continuously surfacing new prompt opportunities as AI recommendations evolve.

Step 2: Monitor Across Multiple AI Platforms

Only tracking ChatGPT is like only tracking Google in 2010. You’d miss half the picture.

Each AI platform has a fundamentally different recommendation logic. Perplexity operates as a research engine, citing an average of 21.87 sources per response, nearly three times more than ChatGPT’s 7.92. Perplexity is heavily biased toward recency: content updated within the last 30 days has an 82% citation rate. If you’re not refreshing content monthly, Perplexity probably isn’t citing you.
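A quick way to act on the recency point is to flag pages that have gone more than 30 days without an update. The sketch below assumes you already have a last-updated date per URL, for example from your CMS or sitemap. The paths and dates are hypothetical.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 30) -> list[str]:
    """Return URLs whose last update is older than max_age_days, sorted by path."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical content inventory.
pages = {
    "/pricing": date(2025, 6, 15),
    "/compare": date(2025, 4, 1),
    "/guide": date(2025, 5, 31),  # exactly 30 days old on the check date, so still fresh
}

stale = stale_pages(pages, today=date(2025, 6, 30))
print(stale)
```

Passing `today` explicitly keeps the check reproducible. In a scheduled job you would pass `date.today()`.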

ChatGPT, by contrast, is more selective. About 90% of its citations come from domains that already rank in Google’s Top 10, meaning traditional SEO still functions as a trust signal for ChatGPT. Google AI Overviews leans on the Knowledge Graph and E-E-A-T signals. DeepSeek and Qwen are emerging as significant players for technical queries, with Chinese LLMs mentioning brands at an 88.9% rate for English queries compared to 58.3% for international models.

| Platform | Avg. Citations/Response | Key Recommendation Factor |
|---|---|---|
| Perplexity | 21.87 | Recency and factual corroboration |
| ChatGPT | 7.92 | Relevance overlap with Google Top 10 |
| Google AI | 8.34 | E-E-A-T and Knowledge Graph entities |
| DeepSeek | Variable | Technical accuracy and MoE reasoning |

Topify covers ChatGPT, Perplexity, Gemini, DeepSeek, Qwen, and other major platforms from a single dashboard. For teams using AI search engine optimization tools, this cross-platform view is the difference between a partial snapshot and a real baseline.

Step 3: Benchmark Against Competitors

Tracking your own data is only half the equation. The other half is understanding who the AI recommends instead of you, and why.

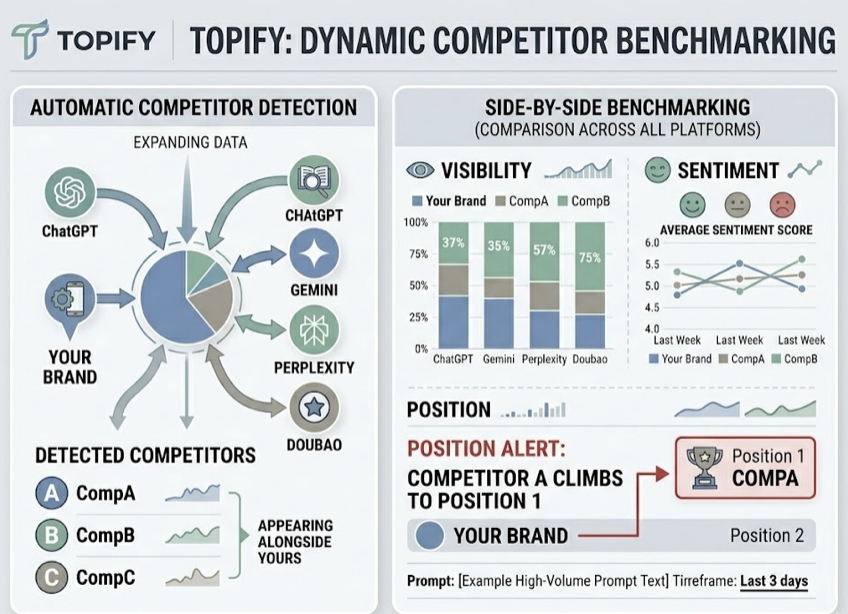

Topify’s Dynamic Competitor Benchmarking automatically detects which brands appear alongside yours in AI responses. You can compare Visibility, Sentiment, and Position side by side, across every platform, for every prompt in your library. When a competitor suddenly climbs into Position 1 for a high-volume prompt, you’ll know within days, not quarters.
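The underlying idea can be approximated with simple counting. This is a generic sketch, not a description of how Topify implements its benchmarking. It assumes each response has already been reduced to a list of brand names, and the brands shown are hypothetical.

```python
from collections import Counter


def competitor_counts(responses: list[list[str]], brand: str) -> tuple[Counter, Counter]:
    """Count brands that appear alongside `brand` and brands that appear instead of it."""
    alongside: Counter = Counter()
    instead: Counter = Counter()
    for brands in responses:
        others = {b for b in brands if b != brand}
        if brand in brands:
            alongside.update(others)
        else:
            instead.update(others)
    return alongside, instead


# Hypothetical responses, one brand list per AI answer.
answers = [
    ["Acme", "Bolt", "Cirrus"],
    ["Bolt", "Dyna"],
    ["Acme", "Bolt"],
    ["Cirrus"],
]

alongside, instead = competitor_counts(answers, "Acme")
print(alongside.most_common())
print(instead.most_common())
```

The `instead` counter is usually the more urgent list: those are the brands winning prompts where you are absent.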

Step 4: Reverse-Engineer Citations to Find Content Gaps

Here’s the insight most teams miss: between 82% and 85% of AI citations come from third-party sources, not from the brand’s own website. Media coverage, Reddit threads, G2 reviews, and niche industry forums carry more weight with AI models than your homepage.

If a competitor dominates AI recommendations in your category, it’s often because they’ve built a “citation moat” across these external platforms. The fix isn’t writing another blog post on your domain. It’s identifying the specific URLs the AI cites when recommending competitors and building your brand’s presence in those same contexts.
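Once you have the cited URLs for a set of responses, tallying them by domain shows where the moat sits. A minimal sketch, assuming the URLs were collected separately. `example.com` stands in for your own domain and the URLs are invented.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_domains(urls: list[str], own_domain: str) -> tuple[Counter, float]:
    """Tally cited domains and return the share that is third-party."""
    counts: Counter = Counter()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    total = sum(counts.values())
    third_party = sum(
        n for domain, n in counts.items()
        if domain != own_domain and not domain.endswith("." + own_domain)
    )
    return counts, (third_party / total if total else 0.0)


# Hypothetical citations pulled from AI answers.
cited = [
    "https://www.reddit.com/r/saas/x",
    "https://www.g2.com/products/y",
    "https://example.com/pricing",
    "https://reddit.com/r/crm/z",
    "https://blog.example.com/post",
]

counts, third_party_share = citation_domains(cited, own_domain="example.com")
print(counts.most_common())
print(f"third-party share: {third_party_share:.0%}")
```

Running the same tally on answers that recommend a competitor gives you a target list of domains to build a presence on.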

Topify’s Source Analysis breaks down exactly which domains and URLs AI platforms reference. You can see whether the AI trusts your content or your competitor’s, and where the gaps are. That’s the foundation of any AI-powered search engine optimization strategy: know what the AI reads before you try to change what it says.

AI-Based Search Engine Optimization Tools: What Separates Monitoring from Execution

Most AI-based search engine optimization tools stop at dashboards. They show you the data, then leave you to figure out what to do with it.

The gap between insight and action is where most tracking efforts stall. A team discovers their brand is invisible for 60% of high-intent prompts. The dashboard confirms it. Then what? Without a clear execution path, the data sits in a slide deck.

This is where the market splits. Pure monitoring tools give you visibility metrics. End-to-end platforms connect those metrics to specific actions. When Topify identifies an “Invisibility Gap,” such as missing structured pricing data that causes an AI to skip your brand, its One-Click Execution feature can propose and deploy the fix: adding a comparison table, updating FAQ schema, or creating an llms.txt file to help AI crawlers prioritize your content.
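Of those fixes, FAQ schema is the easiest to illustrate. The sketch below builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. It shows the general technique only and does not represent what any platform's execution feature generates. The helper name is hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is AI recommendation tracking?",
        "The process of systematically monitoring how AI platforms mention, "
        "rank, and describe your brand in their generated responses.",
    ),
])
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page that shows the same questions and answers to visitors.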

The ROI math supports this approach. AI-referred traffic converts at nearly 2x the rate of traditional organic search. In B2B SaaS specifically, the conversion rate for AI-referred clicks reaches 11.4%, compared to 5.8% for standard organic traffic. That “pre-vetting effect,” where the AI validates your brand before the user even clicks, makes every AI recommendation significantly more valuable than a traditional blue-link click.
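To make the comparison concrete, here is the arithmetic behind those two B2B SaaS rates. The click volume is a hypothetical round number chosen only for illustration.

```python
AI_REFERRED_RATE = 0.114   # conversion rate for AI-referred clicks, from the figures above
ORGANIC_RATE = 0.058       # conversion rate for standard organic traffic


def expected_conversions(clicks: int, rate: float) -> float:
    """Expected conversions for a given click volume and conversion rate."""
    return clicks * rate


lift = AI_REFERRED_RATE / ORGANIC_RATE
clicks = 1000  # hypothetical monthly clicks per channel

print(f"lift: {lift:.2f}x")
print(f"AI-referred: {expected_conversions(clicks, AI_REFERRED_RATE):.0f} conversions")
print(f"organic:     {expected_conversions(clicks, ORGANIC_RATE):.0f} conversions")
```

At equal click volume, that works out to roughly 114 conversions against 58, which is where the "nearly 2x" figure comes from.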

For teams evaluating AI tools for search engine optimization, the key question isn’t “does it track?” It’s “does it close the loop between tracking and doing?”

| Capability | Monitoring-Only Tools | End-to-End Platforms like Topify |
|---|---|---|
| Visibility metrics | Yes | Yes |
| Cross-platform coverage | Varies (often 1-2 engines) | ChatGPT, Perplexity, Gemini, DeepSeek, Qwen+ |
| Competitor benchmarking | Limited | Automatic detection and tracking |
| Citation source analysis | Rare | Full URL-level breakdown |
| Execution from dashboard | No | One-Click Optimization |

Topify’s Basic plan starts at $99/month and includes tracking across ChatGPT, Perplexity, and AI Overviews with 100 prompts and 9,000 AI answer analyses. For teams that need broader coverage, the Pro plan at $199/month scales to 250 prompts across additional platforms. Check Topify’s pricing for full plan details.

The Compounding Cost of Starting Late

The brands winning in AI search aren’t optimizing harder. They’re monitoring earlier.

AI platforms are recursive. Each time a model cites a brand and a user validates that recommendation through subsequent actions, the model’s confidence score for that brand increases. Over time, the brand that gets recommended first builds a self-reinforcing cycle: more citations lead to more trust, which leads to more citations.

The flip side is equally powerful. Once a competitor captures more than 50% of category citations, they’ve built a level of topical authority that traditional SEO investment struggles to displace. The “citation moat” compounds. And the longer a brand waits to start tracking, the deeper that moat gets.

In critical B2B sectors, AI-referred traffic now converts at up to 6x the rate of traditional channels. That’s not a future projection. That’s the current gap between brands that monitor AI recommendations and brands that don’t.

The strategic roadmap is straightforward: establish a baseline across ChatGPT, Perplexity, and Gemini. Shift from keyword research to prompt research. Validate your technical setup (schema markup, llms.txt, bot access). Diversify your citation sources across third-party platforms. And build continuous monitoring into your weekly marketing operations, not your quarterly reviews.
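The bot-access part of that checklist can be verified with Python's standard library. The sketch below parses a sample robots.txt and reports which AI crawlers are blocked from a given URL. The robots.txt content and `example.com` are hypothetical, and the user-agent names are examples: confirm the current strings in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def blocked_bots(robots_txt: str, bots: list[str], url: str) -> list[str]:
    """Return the bots that robots.txt forbids from fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


blocked = blocked_bots(
    SAMPLE_ROBOTS,
    bots=["GPTBot", "PerplexityBot"],
    url="https://example.com/pricing",
)
print(blocked)
```

To check a live site, fetch its robots.txt first and pass the text in. An accidental blanket `Disallow` for an AI crawler is an easy problem to miss.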

The brands that thrive in the AI era won’t be the ones that rank highest on Google. They’ll be the ones that AI chooses to recommend. And the only way to know if that’s happening is to track it.

Get started with Topify to see where your brand stands across every major AI platform.

Conclusion

The shift from “getting found” to “getting recommended” is the defining change in digital marketing right now. Manual spot-checks can’t capture it. Traditional SEO dashboards can’t measure it. And waiting to see if it matters isn’t a strategy.

AI recommendation tracking gives brands the visibility they need to act: which prompts matter, which platforms recommend you (or don’t), what competitors are doing differently, and where the citation gaps are. The brands building this infrastructure now are the ones AI will keep recommending tomorrow. The ones that delay are building their competitor’s moat for them.

FAQ

Q: What is AI recommendation tracking?

A: AI recommendation tracking is the process of systematically monitoring how AI platforms like ChatGPT, Perplexity, and Gemini mention, rank, and describe your brand in their generated responses. Unlike traditional SEO rank tracking, it measures conversational visibility, sentiment, position, and the specific sources AI models cite when recommending brands.

Q: Which AI platforms should I monitor for brand recommendations?

A: At minimum, track ChatGPT, Perplexity, and Google AI Overviews, as they represent the largest share of AI-driven search behavior. For global or technical brands, add DeepSeek and Qwen. Each platform uses different retrieval mechanisms and citation logic, so cross-platform monitoring is essential for an accurate picture.

Q: How often should I check my AI recommendation data?

A: Weekly monitoring is the practical baseline. AI models update their citation patterns frequently, and Perplexity in particular favors content updated within the last 30 days. Quarterly reviews are too slow to catch competitive shifts or model updates that could change your brand’s visibility overnight.

Q: Can a generative AI search engine optimization agency handle AI recommendation tracking for me?

A: A generative AI search engine optimization agency can manage the tracking and optimization process, especially for brands without in-house GEO expertise. That said, platforms like Topify are designed for marketing teams to self-serve with minimal onboarding, starting at $99/month. Whether you use an agency or build the capability internally, the important thing is that someone is watching what AI says about your brand every week.