Your domain authority is 70. Your keyword rankings are solid. But when someone asks Perplexity for a recommendation in your category, the AI cites your competitor’s blog post instead of yours.

That’s not a ranking problem. It’s a citation problem.

Traditional SEO optimizes for position in a ranked list of links. Generative Engine Optimization (GEO) optimizes for something fundamentally different: whether AI models extract, trust, and cite your content when they synthesize answers. The two disciplines share surface-level similarities, but their underlying mechanics diverge in ways that catch most SEO teams off guard.

Research from Princeton University, Georgia Tech, and the Allen Institute for AI (published at ACM KDD 2024) found that pages ranked fifth on Google saw their AI search visibility increase by up to 115.1% after applying GEO-specific content strategies. Meanwhile, first-place pages without those strategies often didn’t appear in AI answers at all.

The takeaway isn’t that SEO is dead. It’s that ranking and being cited are now two separate outcomes, and they require two separate optimization approaches.

Why High-Ranking Pages Go Missing in AI Answers

The disconnect comes down to how generative engines process information versus how traditional search engines rank it.

Google ranks pages. AI models extract passages.

When a user submits a query to ChatGPT or Perplexity, the system doesn’t return a list of links sorted by authority. It runs a Retrieval-Augmented Generation (RAG) pipeline that pulls specific text chunks from indexed content, evaluates their factual density and structural clarity, and synthesizes them into a single coherent answer.

That means your page’s overall authority score matters less than whether individual paragraphs contain extractable, verifiable claims. A well-structured blog post on a DA-30 site can outperform a DA-80 corporate page if its content is easier for the model to parse and cite.

Here’s a side-by-side breakdown of where the two systems diverge:

| Dimension | Traditional SEO | GEO |
|---|---|---|
| Core goal | Higher SERP position for clicks | Cited and recommended in AI answers |
| Visibility model | Hierarchical (top 3 capture most traffic) | Distributed (mid-tier sites can earn citations) |
| Key signals | Backlinks, domain authority, keyword match | Fact density, structured data, entity consistency |
| User interaction | Click-through to website | Zero-click consumption or citation-based verification |
| Success metrics | CTR, impressions, rank position | Mention rate, citation frequency, sentiment, source weight |

With traditional search volume projected to decline 25% by 2026, the brands that don’t adapt their content for AI extraction will lose visibility in the channel that’s growing fastest.

What LLMs Actually Look for When Citing Content

AI models aren’t browsing your page the way a human reader does. They’re scanning for evidence.

Specifically, LLMs prioritize three qualities when selecting which passages to cite: fact density, entity authority, and linguistic clarity.

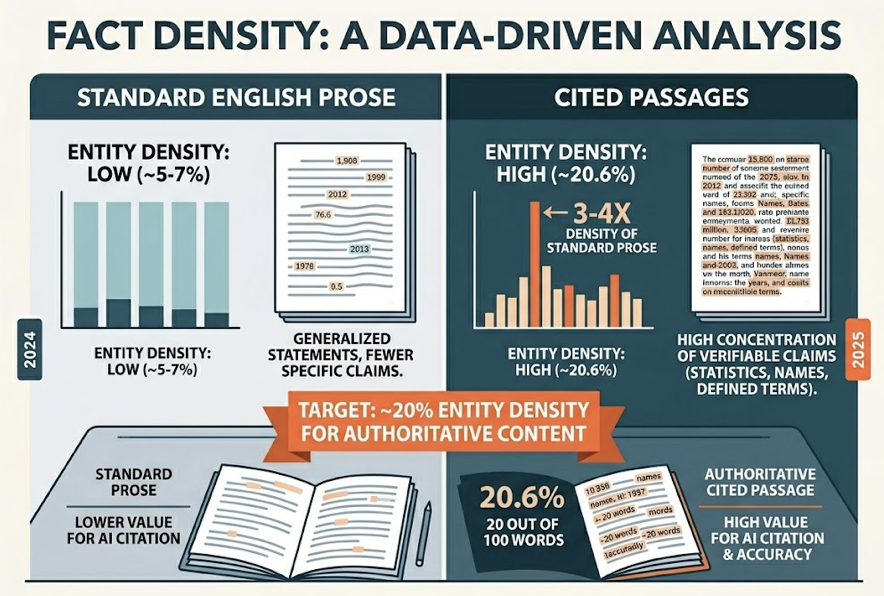

Fact density is the ratio of verifiable claims (statistics, named entities, research conclusions) to total word count. Cited passages average an entity density of 20.6%, roughly three to four times the density of standard English prose. In practical terms, that means a 100-word paragraph needs to contain around 20 words that are specific names, numbers, dates, or defined terms.
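As a rough way to sanity-check a paragraph against that 20% figure, the sketch below counts tokens that contain a digit or are capitalized mid-sentence, then divides by the total token count. The function name `entity_density` and the heuristic itself are assumptions made for illustration. The research behind the 20.6% figure may define entities differently, and a proper named-entity recognizer would be more accurate.

```python
import re

# A token starts with a letter or digit and may contain word characters,
# dots, percent signs, apostrophes, or hyphens (so "20.6%" stays one token).
TOKEN = re.compile(r"[A-Za-z0-9][\w.%'-]*")


def entity_density(text: str) -> float:
    """Approximate share of tokens that look like names, numbers, or dates.

    Heuristic (an assumption, not the researchers' definition):
    a token is "specific" if it contains a digit, or if it is
    capitalized and is not the first token of its sentence.
    """
    total = 0
    specific = 0
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        for index, token in enumerate(TOKEN.findall(sentence)):
            total += 1
            has_digit = any(ch.isdigit() for ch in token)
            mid_sentence_capital = index > 0 and token[0].isupper()
            if has_digit or mid_sentence_capital:
                specific += 1
    return specific / total if total else 0.0
```

On the sentence "Perplexity cited Acme in 2024.", this heuristic treats "Acme" and "2024" as specific out of five tokens, so it returns 0.4. Sentence-initial names such as "Perplexity" are skipped, which means the estimate leans low.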

Entity authority refers to how consistently your brand, product names, and key claims appear across multiple sources on the web. AI models cross-reference your content with third-party mentions. Inconsistent descriptions across platforms create what researchers call a “Trust Gap” that reduces citation probability.

Linguistic clarity matters more than you’d expect. Content written at a Flesch-Kincaid readability grade of 8 to 10 (roughly high school level) gets cited 20% more often than dense academic prose. AI models function as high-speed summarizers. If your sentence structure is complex or loaded with hedging language, the model moves to a cleaner source.
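To check where a draft falls on that scale, the standard Flesch-Kincaid grade formula is 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The sketch below applies it with a crude vowel-group syllable counter. The function names are illustrative and the syllable heuristic is an assumption, so treat the output as an estimate rather than an exact grade.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: vowel groups, minus a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade level using the standard published coefficients."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * len(words) / len(sentences)
        + 11.8 * syllables / len(words)
        - 15.59
    )
```

Short sentences with short words score low (even below zero), while long sentences packed with multi-syllable words push the grade up. A paragraph that lands well above 10 is a candidate for simplification.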

The research quantifies how specific content improvements affect citation rates:

| Optimization | Citation lift | Why it works |

|---|---|---|

| Adding authoritative citations | +30% to +40% | Strengthens the evidence chain |

| Integrating statistics | +37% to +40% | Provides discrete, extractable fact points |

| Embedding expert quotes | +30% | Adds third-party verification signals |

| Improving readability | +15% to +30% | Reduces the model’s parsing cost |

| Using declarative tone | +10% to +20% | Lowers uncertainty perception |

The pattern is clear: the more your content reads like a well-sourced briefing document, the more likely it is to be cited.

5 Content Structures That Earn AI Search Visibility

Content format directly determines extractability. Not all structures are equal in the eyes of a RAG pipeline. These five formats consistently outperform in citation frequency across ChatGPT, Perplexity, and Google AI Overviews.

1. Definition-First Format

AI models follow what researchers call a “ski slope” retrieval pattern: roughly 44.2% of citations come from the first 30% of a page’s content.

That means the opening sentences under each H2 or H3 carry disproportionate weight. Place a 40-to-60-word direct definition or core claim immediately after each heading. Skip the background buildup. If the AI can extract your answer from the first paragraph under a heading, your chances of being cited multiply.
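One way to audit this at scale is to measure the first paragraph under every heading. The sketch below assumes the page source is available as Markdown with `##` and `###` headings, which is an assumption about your content pipeline. The function names are hypothetical, and the 40-to-60-word window comes from the guidance above.

```python
import re

HEADING = re.compile(r"^#{2,3}\s+(.*)")


def opening_blocks(markdown: str) -> list[tuple[str, int]]:
    """Return (heading text, word count of the first paragraph under it)."""
    lines = markdown.splitlines()
    results = []
    for index, line in enumerate(lines):
        match = HEADING.match(line)
        if not match:
            continue
        paragraph = []
        for following in lines[index + 1:]:
            if following.startswith("#"):
                break  # next heading reached before any text
            if following.strip():
                paragraph.append(following.strip())
            elif paragraph:
                break  # blank line ends the first paragraph
        words = len(" ".join(paragraph).split())
        results.append((match.group(1).strip(), words))
    return results


def flag_weak_openings(markdown: str, low: int = 40, high: int = 60) -> list[str]:
    """List headings whose opening paragraph falls outside the word window."""
    return [h for h, n in opening_blocks(markdown) if not low <= n <= high]
```

Headings returned by `flag_weak_openings` are the ones to rewrite first, either because the opening is too thin to stand alone or too long to extract cleanly.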

2. Numbered Step-by-Step Guides

For process-oriented queries (“how to set up,” “steps to implement”), ordered lists are the default extraction target. Each step should be a semantically complete chunk, meaning it makes sense on its own without needing context from surrounding steps.

Use H2 or H3 tags for each step. AI models treat heading-tagged steps as standalone units they can pull into an answer individually.

3. Comparison Tables with Clear Dimensions

Narrative comparisons are hard for AI to parse. Tables are easy.

One SaaS brand converted its narrative product comparison into a structured HTML table with explicit dimensions (pricing, features, target audience) and saw a 35% CTR lift within a week, plus inclusion in Google AI Overview snapshots. If you’re targeting any “X vs Y” or “best tools for Z” query, tables aren’t optional.

4. FAQ Sections with Direct Answers

LLMs handle complex queries by breaking them into sub-questions, a process called “query fan-out.” FAQ sections map directly to this behavior. Each question becomes a potential sub-query match, and each answer becomes a candidate citation.

Pair your FAQ content with FAQPage Schema markup. It won’t guarantee citation, but it improves machine readability, which is the prerequisite.
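For reference, FAQPage markup is a JSON-LD object with a `mainEntity` list of `Question` items, each carrying an `acceptedAnswer`. The sketch below builds that structure from question and answer pairs. The helper name `faq_page_jsonld` is hypothetical, and the output still needs to be embedded in a `<script type="application/ld+json">` tag on the page.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_page_jsonld([
        (
            "What is GEO, and how is it different from SEO?",
            "SEO focuses on ranking pages. GEO focuses on getting content "
            "cited inside AI-generated answers.",
        ),
    ]))
```

Keep the answer text in the markup identical to the visible answer on the page, so the structured data and the content do not drift apart.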

5. Data-Backed Claims with Source Attribution

Every factual claim should follow a simple formula: claim + statistic + (source, year).

Princeton’s research found that adding statistics alone can boost AI visibility by up to 40%. Perplexity, which operates as a real-time research engine, particularly favors passages with high fact density and clear source attribution. If your content makes a claim without a number or a source, it’s at a structural disadvantage.
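A quick lint pass can catch sentences that break the formula. The sketch below assumes attributions are written as a trailing parenthetical such as "(Princeton, 2024)". Both regular expressions and the function name `check_claim` are illustrative assumptions, and they will miss other citation styles such as footnotes or inline links.

```python
import re

# A number, optionally with decimals and a percent sign.
STAT = re.compile(r"\d+(?:\.\d+)?%?")
# "(Source name, 2024)" with a four-digit year starting 19 or 20.
ATTRIBUTION = re.compile(r"\(([^()]+),\s*((?:19|20)\d{2})\)")


def check_claim(sentence: str) -> dict[str, bool]:
    """Report whether a sentence carries a statistic and a (source, year)."""
    has_source = ATTRIBUTION.search(sentence) is not None
    # Remove the attribution first so its year is not counted as the statistic.
    body = ATTRIBUTION.sub("", sentence)
    return {"has_statistic": bool(STAT.search(body)), "has_source": has_source}
```

A sentence like "Adding statistics can boost AI visibility by up to 40% (Princeton, 2024)." passes both checks, while "Tables are easier to parse." fails both and is a candidate for an evidence rewrite.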

How to Reverse-Engineer What AI Platforms Already Cite

GEO isn’t just about optimizing your own site. It’s about understanding the full ecosystem of sources that AI models trust in your category.

Here’s the uncomfortable data point: between 82% and 85% of AI citations come from third-party sources like Reddit, G2, LinkedIn, Wikipedia, and industry publications. Your own website accounts for a small fraction of the citation landscape. That means “off-site authority” isn’t a nice-to-have. It’s the primary driver of AI visibility.

The Manual Approach

Start by building a “Money Prompt Set”: 20 to 30 long-tail questions that reflect real buyer intent in your category. Think “best [product type] for [specific use case]” or “[Brand A] vs [Brand B] for [industry].”

Run each prompt across ChatGPT, Perplexity, and Gemini. Record which brands get mentioned, which sources get cited, and where your brand is absent. Keep in mind that citation overlap between models is only about 11%, which means each platform has its own trust graph. Testing on just one engine gives you an incomplete picture.

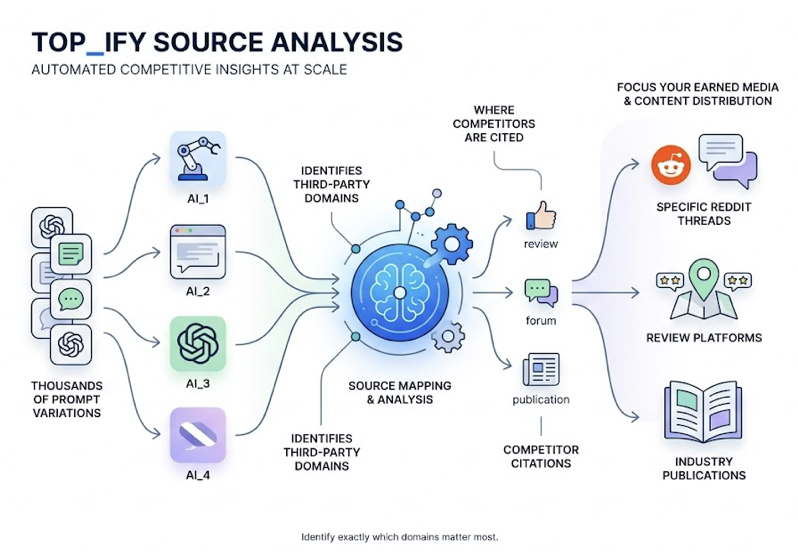
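Once the cited domains for each platform are recorded, the overlap between platforms can be quantified. The sketch below uses Jaccard similarity (shared domains divided by all domains cited by either platform). That is one reasonable definition of overlap, not necessarily the one behind the 11% figure, and the platform keys and domains in the usage note are made up for illustration.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared items divided by all items seen in either set."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def pairwise_overlap(citations: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Jaccard overlap of cited domains for every pair of platforms."""
    return {
        (x, y): jaccard(citations[x], citations[y])
        for x, y in combinations(sorted(citations), 2)
    }
```

For example, if ChatGPT cited `reddit.com`, `g2.com`, and `example.com` while Perplexity cited `reddit.com` and `wikipedia.org`, the pair shares one domain out of four, an overlap of 0.25. Low values across pairs confirm that each engine needs its own test runs.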

The Systematic Approach

Manual testing hits a wall quickly. LLM outputs are non-deterministic, meaning the same prompt can produce different citations on different runs. A single test gives you a snapshot, not a pattern.

Topify’s Source Analysis automates this at scale. It runs thousands of prompt variations across multiple AI platforms, maps the citation sources for each response, and identifies exactly which third-party domains your competitors are being cited from. That data tells you where to focus your earned media and content distribution efforts: the specific Reddit threads, review platforms, and industry publications where AI models are sourcing their recommendations.

| Capability | Traditional SEO tools (e.g., Ahrefs) | Topify GEO platform |
|---|---|---|
| Monitors | Keyword rankings, backlink counts | AI mention rate, citation position, brand sentiment |
| Data source | Search index, clickstream | Real-time model outputs, RAG retrieval sources |
| Analysis depth | Domain-level, page-level | Sentence-level fact attribution, semantic drift detection |
| Optimization output | Keyword targeting, link building | Paragraph restructuring, Schema injection, third-party footprint expansion |

The GEO Content Audit Checklist

Not every optimization carries equal weight. Here’s a priority framework based on ROI and implementation difficulty.

Tier 1: Technical AI-Readiness (High ROI, Low Effort)

Check your robots.txt. Make sure you haven’t blocked GPTBot, ClaudeBot, or PerplexityBot. CDN providers like Cloudflare sometimes block AI crawlers by default.
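The robots.txt part of this check is easy to script with Python's standard `urllib.robotparser`. The sketch below takes the file's text and reports which of the three crawlers named above are disallowed for a given URL. The function name and the `example.com` default are placeholders.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawler user agents that robots.txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


if __name__ == "__main__":
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_ai_crawlers(sample))
```

This only inspects robots.txt rules. Blocking applied at the CDN or firewall level will not show up here, so it is worth also checking the CDN's bot settings and your server logs for these user agents.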

Implement server-side rendering (SSR). AI crawlers typically can’t execute complex client-side JavaScript. If your content loads via JS, it’s invisible to AI.

Create an llms.txt file. This is a proposed convention rather than an established standard: a Markdown file in your root directory that summarizes your site’s structure and points AI systems to your most important pages.

Tier 2: Content Citation-Readiness (Medium ROI, Medium Effort)

Optimize your first-paragraph summaries. The opening two to three sentences after the H1 and after each H2 should directly answer the topic. No throat-clearing.

Insert evidence blocks. Every H2 section needs at least one statistic or expert quote. Without them, your content is assertion-heavy and evidence-light.

Break long paragraphs into 50-to-150-word sections with clear headings. Add comparison tables where relevant.

Tier 3: Entity Authority (High ROI, High Effort)

Deploy comprehensive Schema markup: Organization, Person, and Product schemas with sameAs links to Wikipedia, LinkedIn, and other verification nodes.
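As an illustration of the Organization case, the sketch below emits JSON-LD with `sameAs` links. The helper name is hypothetical and every value in the usage example is a placeholder to be replaced with your real profiles. Person and Product schemas follow the same pattern with their own properties.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Serialize a schema.org Organization with sameAs verification links."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    print(organization_jsonld(
        "Example Co",
        "https://www.example.com",
        [
            "https://en.wikipedia.org/wiki/Example",
            "https://www.linkedin.com/company/example",
        ],
    ))
```

The point of `sameAs` is consistency: the name and description used here should match what those external profiles say about the brand.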

Build your external footprint. Contribute genuinely to Reddit discussions, Quora threads, and industry forums in your category. AI models assign significant weight to these “human consensus” signals.

| Audit dimension | Example check | Weight (1-10) | ROI expectation |
|---|---|---|---|
| Technical foundation | AI crawler access, SSR | 10 | Baseline requirement |
| Structure optimization | Definition-first blocks, lists, tables | 9 | Significant extraction rate lift |
| Evidence integration | Authoritative citations, statistics, dates | 8 | Increased citation weight |
| Semantic markup | JSON-LD Schema depth | 7 | Improved entity recognition |
| Off-site trust | Third-party media mentions, reviews | 9 | Long-term citation moat |
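The weights in the table can be turned into a single page-level score. The sketch below assumes each dimension is rated from 0.0 (failing) to 1.0 (fully met) and computes a weighted percentage. The rating scale, the dictionary keys, and the function name are assumptions added for illustration; only the weights come from the table.

```python
# Weights taken from the audit table; key names are illustrative.
AUDIT_WEIGHTS = {
    "technical_foundation": 10,
    "structure_optimization": 9,
    "evidence_integration": 8,
    "semantic_markup": 7,
    "off_site_trust": 9,
}


def audit_score(ratings: dict[str, float]) -> float:
    """Weighted score from 0 to 100; unrated dimensions count as 0.0."""
    total_weight = sum(AUDIT_WEIGHTS.values())
    earned = sum(
        weight * ratings.get(dimension, 0.0)
        for dimension, weight in AUDIT_WEIGHTS.items()
    )
    return 100 * earned / total_weight
```

A page that passes only the technical checks scores 10 out of a possible 43 weight points, roughly 23 out of 100, which makes it easy to rank pages by how much work they still need.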

Tracking Your AI Search Visibility After Optimization

You’ve restructured your content. You’ve added Schema. You’ve planted evidence blocks in every section. Now what?

Traditional analytics won’t tell you if it worked. AI search is largely zero-click, which means improvements in citation frequency don’t show up in Google Analytics as traffic increases. You need a different measurement system entirely.

The Metrics That Matter

AI Mention Rate: the percentage of relevant prompts where your brand appears in the AI’s response. The average brand sits at roughly 0.3%. Top-performing brands reach 12%.

Citation Share: the proportion of all cited links in AI answers that point to your domain. This is your market share in the AI citation economy.

Recommendation Position: when AI lists multiple brands, where do you rank? First position carries significantly more trust than third or fourth.

Sentiment Score: how does the AI describe your brand? Positive, neutral, or subtly negative? “Semantic drift,” where AI’s characterization diverges from your actual positioning, is a real and measurable risk.
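The first two metrics are simple ratios once responses are logged. The sketch below assumes each logged response is a dictionary with a `text` field and a `citations` list of URLs, a hypothetical record shape. Brand detection here is a plain case-insensitive substring match, which will miss misspellings and can over-count short brand names.

```python
from urllib.parse import urlparse


def mention_rate(responses: list[dict], brand: str) -> float:
    """Fraction of responses whose text mentions the brand."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r["text"].lower() for r in responses)
    return hits / len(responses)


def citation_share(responses: list[dict], domain: str) -> float:
    """Fraction of all cited links that point to the given domain."""
    cited = [
        urlparse(url).netloc.lower().removeprefix("www.")
        for r in responses
        for url in r["citations"]
    ]
    return cited.count(domain.lower()) / len(cited) if cited else 0.0
```

Recommendation position and sentiment need more than counting (list parsing and a sentiment model, respectively), so they are left out of this sketch.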

Building a Continuous Monitoring Loop

Single-point testing doesn’t work because LLM outputs are probabilistic. The same prompt can return different results on consecutive runs. Topify’s Visibility Tracking solves this by running each prompt set 10 to 20 times across ChatGPT, Perplexity, Gemini, and AI Overviews, producing statistically stable visibility scores rather than anecdotal snapshots.
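To see why repeated runs matter, it helps to put an error bar on the mention rate. The sketch below is a generic statistical illustration, not a description of how any particular platform computes its scores. It takes one boolean per run (brand mentioned or not) and returns the observed rate with a 95% margin based on the normal approximation, which is itself rough at small sample sizes.

```python
import math


def visibility_estimate(mentions: list[bool]) -> tuple[float, float]:
    """Return (mention rate, 95% margin of error) across repeated runs."""
    runs = len(mentions)
    if runs == 0:
        raise ValueError("at least one run is required")
    rate = sum(mentions) / runs
    # Normal-approximation margin for a proportion.
    margin = 1.96 * math.sqrt(rate * (1 - rate) / runs)
    return rate, margin
```

With 5 mentions in 20 runs, the rate is 0.25 with a margin of about 0.19, so the true figure could plausibly sit anywhere from roughly 6% to 44%. A single run cannot distinguish between those, which is the case for running each prompt many times.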

The platform also functions as a competitive early-warning system. When a competitor earns a new citation in a high-value “best of” query, the system flags it and identifies what content change drove the shift. That’s the difference between discovering you’ve lost visibility three months later and responding within days.

Conclusion

Optimizing for LLM citations is a separate discipline from traditional SEO. It requires different content structures, different success metrics, and a different understanding of what “authority” means in an AI-driven search environment.

The core loop is straightforward: audit your existing content for AI-readiness, identify which prompts matter to your buyers, reverse-engineer the citation sources AI already trusts, restructure your content for extraction, and track whether it’s working with AI-specific metrics.

The brands that build this practice now are earning a structural advantage. AI models develop citation patterns over time, and early, frequently cited sources tend to maintain their position as the default recommendation. Waiting until AI search becomes the dominant discovery channel means competing against entrenched incumbents who started earlier.

Start with your highest-converting pages. Run the audit. Measure your baseline. Then optimize from there.

FAQ

What is GEO, and how is it different from SEO?

SEO focuses on ranking pages in search engine result lists to earn clicks. GEO focuses on getting your content cited and recommended inside AI-generated answers. SEO optimizes for page-level authority signals like backlinks. GEO optimizes for passage-level extractability: fact density, structured data, and entity consistency.

How long does it take for optimized content to appear in AI answers?

For AI engines with real-time browsing (Perplexity, ChatGPT Search, Google AI Overviews), optimized content can appear within 12 to 24 hours. For static model versions that rely on training data, updates may take months until the next model refresh.

Should I optimize existing content or create new pages?

Start with existing pages that already rank in Google’s top 20. They have a retrieval baseline that GEO optimization can amplify. For high-intent long-tail questions that your site doesn’t cover yet, create new “GEO-native” pages designed specifically for AI extraction. Refreshing high-authority existing content typically delivers faster ROI than building from scratch.

Which AI platforms should I prioritize?

ChatGPT handles the largest share of AI search traffic and is the default starting point. Perplexity, despite smaller overall volume, has exceptionally high citation density and is particularly valuable for B2B and research-oriented brands. Google AI Overviews connects most directly to traditional SEO signals. The most effective approach is cross-platform optimization, because the strategies that improve Perplexity citations (data density, clear sourcing) tend to work across all platforms.