G2’s Answer Engine Optimization category didn’t exist before March 2025. Since then, it’s grown by 2,000%. That’s not a trend. That’s a category being invented in real time.

The problem is that a category growing that fast attracts two kinds of tools: ones that genuinely track how AI engines recommend brands, and ones that repackaged their SEO dashboards and added “AI” to the tagline. G2’s listing criteria filter out the obvious fakes. But they don’t tell you which of the remaining tools actually fits your team.

That’s what this framework is for. Four steps, starting with the filter most buyers skip entirely.

G2 Won’t List an AEO Tool Unless It Does These 4 Things

Before anything else, it helps to understand what G2 actually checks before approving a product for the AEO category. These aren’t optional features. They’re the entry requirements.

AI Visibility Tracking monitors where and how often your brand appears in AI-generated responses across LLMs and AI search engines. This isn’t rank tracking. It’s about capturing probabilistic, non-linear outputs, and distinguishing between a “mention” (your name appears in a narrative) and a “citation” (the AI attributes a source or links to your domain). Citations are what actually drive referral traffic.

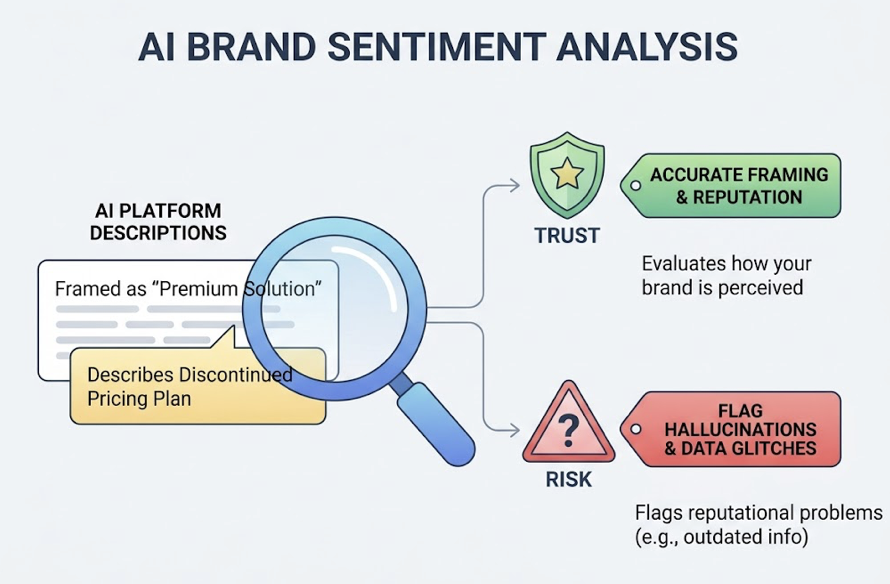

AI Brand Sentiment Analysis evaluates how AI platforms describe your brand. Whether you’re being framed as a “premium solution” or a “budget alternative” matters, especially in finance and healthcare where trust is part of the product. This feature also flags hallucinations: an AI confidently describing a pricing plan you discontinued two years ago is a reputation problem, not just a data glitch.

LLM Ranking Insights explain why an AI chose to cite one brand over another. This moves the focus from keywords to conversational intents, which research shows are phrased differently than Google searches in over 80% of cases. These insights help teams find “answer gaps”: questions where competitors are winning recommendations and you’re invisible.

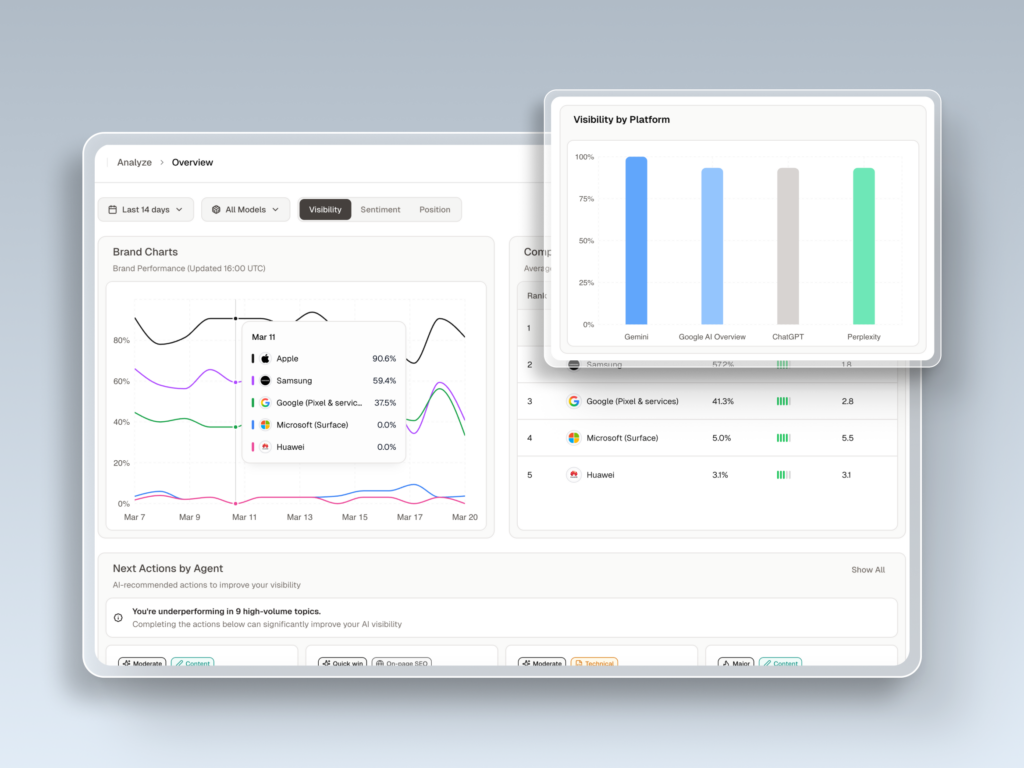

Competitor Benchmarking puts your share of voice in context. In AI answers, a single synthesized response can replace a full page of search results. Knowing your relative position across ChatGPT, Perplexity, and Gemini is the strategic baseline for any media or content budget decision.

All four are table stakes. The question is how deep each tool goes on each one.

| Capability | Practical Application | What to Verify in the Trial |

|---|---|---|

| AI Visibility Tracking | Your SaaS isn’t appearing in “best CRM” lists in Perplexity | Mention vs. citation distinction |

| AI Brand Sentiment Analysis | Gemini is describing a pricing plan you no longer offer | Sentiment polarity + hallucination flagging |

| LLM Ranking Insights | ChatGPT prioritizes your docs over your marketing blog | Answer gap identification |

| Competitor Benchmarking | You own 45% of mentions in your category in GPT-4o | Source-level citation tracing |

Stop Looking at Star Ratings Until You’ve Done This First

Most buyers open G2, sort by rating, and start reading reviews. That’s backwards.

A 4.7-star rating from 200 enterprise users tells you almost nothing if you’re a 12-person marketing team. The aggregate score blends feedback from teams with completely different workflows, budgets, and technical expectations.

G2’s segment filters exist for exactly this reason. Use them before you touch the star ratings.

Small Business (under 50 employees) typically means no dedicated AEO staff and limited time for setup. The right G2 filter here isn’t “Most Popular.” It’s the Ease of Setup and Ease of Use scores within the Small Business segment. A tool that takes three weeks to configure properly isn’t a tool for a five-person team, regardless of how good its enterprise benchmarking is.

Mid-Market (51 to 1,000 employees) companies are in the scaling middle: formal teams, multi-regional operations, and a need for integrations with existing SEO or CRM stacks. For this segment, the G2 Relationship Index is the most predictive metric. It measures support quality and ease of doing business with the vendor. Mid-market teams don’t have the procurement muscle to escalate support tickets the way enterprises do. Vendor responsiveness matters more than it appears in a feature list.

Enterprise (1,001+ employees) procurement runs on compliance. SOC 2 Type II, SSO support, and the ability to process tens of thousands of prompts across global markets aren’t nice-to-haves. They’re blockers. G2’s Enterprise Business category filter requires a minimum of 10 reviews from enterprise-level users before a product qualifies, which is a meaningful signal of genuine adoption at scale.

| Segment | What to Filter By | Deal-Breaker Requirement |

|---|---|---|

| Small Business | Ease of Setup score | No-code onboarding, fast “aha” moment |

| Mid-Market | Relationship Index | Flexible seats, reliable support SLA |

| Enterprise | Implementation Index | SOC 2 Type II, SSO, high prompt volume |

The Pricing Trap That Catches Most Buyers Mid-Budget

The base subscription price is the least useful number in an AEO tool evaluation.

Here’s why. Traditional SEO platforms typically charge per user. AEO-native tools charge per tracked prompt or per AI answer analysis. These are fundamentally different cost structures, and mixing them up leads to budget surprises.

Per-user models are predictable, but they scale poorly when four departments need access: marketing, PR, content, and product. Shared logins become a security risk. Per-prompt models are better aligned with actual value, but a team tracking 50 prompts across six AI engines is effectively tracking 300 prompts, since some tools bill per engine, not per query.

Don’t guess. Read the G2 reviews with these three cost signals in mind.

Credit multiplication: Does the tool charge once per prompt or once per engine per prompt? This is rarely stated clearly in pricing pages but comes up constantly in mid-tier reviews.

Add-on gating: Sentiment analysis and Gemini coverage are frequently locked behind higher tiers. A tool that looks affordable at the Basic plan can double in price once you add the capabilities you actually need.

Data latency costs: A tool refreshing data weekly might seem like a budget win. It isn’t. If AI is hallucinating incorrect information about your brand for seven days before you find out, that’s a reputation cost that doesn’t appear on an invoice.

For teams under $100/month, entry-level plans from smaller players can work if the use case is narrow. At the $100 to $500/month range, the tradeoff is between multi-engine coverage depth and execution features. Topify’s Basic plan sits in this range at $99/month with ChatGPT, Perplexity, and AI Overviews tracking included, plus 9,000 AI answer analyses per month, which is more than sufficient for most growing marketing teams.

Not Every Team Needs All Four Capabilities in Year One

Buying a tool with four core capabilities doesn’t mean your team will use all four effectively. Implementation complexity and team bandwidth matter.

AI Visibility Tracking has the lowest implementation complexity and the highest immediate ROI. It’s the right starting point for any brand that doesn’t yet have a baseline understanding of where they appear in AI recommendations. SaaS and e-commerce teams benefit most, particularly for “Best [category] for [persona]” queries, which research shows are the most influential for B2B shortlisting decisions.

Brand Sentiment Analysis becomes worth the effort when reputation management is an active priority: post-launch, post-crisis, or in regulated industries. If you’re not actively monitoring and correcting AI narratives about your brand, you’re essentially outsourcing your brand positioning to a probabilistic model.

LLM Ranking Insights are powerful and expensive to act on. The data tells you why an AI prefers a competitor’s content. Acting on it means rewriting content, updating schema, and restructuring documentation. If your team doesn’t have the bandwidth to execute on 20 content changes a month, prioritize tools that offer built-in content generation or automated schema deployment rather than raw ranking data alone.

Competitor Benchmarking is where the “surface feature trap” is most common. A share-of-voice chart looks convincing in a slide deck. The feature that actually creates strategic value is the ability to trace which specific URLs a competitor is being cited from. Which third-party review sites, Reddit threads, or documentation pages is the AI treating as authoritative sources for them? That’s the intelligence that informs a real content gap strategy.

| Capability | Best Use Case | Complexity | Time to Value |

|---|---|---|---|

| AI Visibility Tracking | Establishing a baseline | Low | Days |

| Brand Sentiment | Reputation management | Medium | 1-2 weeks |

| LLM Ranking Insights | Content optimization | High | 1-3 months |

| Competitor Benchmarking | Strategic planning | Medium | 2-4 weeks |

A 4.8-Star Rating Can’t Tell You If a Tool Tracks DeepSeek

G2 ratings are lagging indicators. They reflect how a tool performed for users who left reviews, which may have been six months ago, before the latest round of LLM updates.

That’s not a criticism of G2. It’s a structural limitation of review platforms. The only way to verify current performance is a structured trial with a clear evaluation plan.

Here’s a 7-day framework that works.

Day 1: Manually run 10 high-intent prompts through ChatGPT, Perplexity, and Gemini. Record which domains are cited and what the sentiment is. This is your independent baseline.

Day 2: Onboard the tool and input the same 10 prompts. Compare its reported data against your Day 1 manual findings. Gaps here are your first signal of data reliability.

Day 3: Change a meta description or schema tag on a key page. Check how long it takes for the tool to detect and reflect that change. Weekly refresh cycles are a problem in a market where AI model updates can shift citation landscapes in 48 hours.

Day 4: Use the benchmarking feature to identify a specific source a competitor is being cited from. Verify independently that the source exists and that the tool’s reasoning makes sense.

Day 5: Run prompts with known negative associations or common hallucination triggers in your industry. Test whether sentiment flagging catches them.

Day 6: Test the API or data export. Ask support a specific technical question about their data retrieval methodology, specifically whether they use live browser rendering or API snapshots. Browser-rendered tools almost always provide more accurate real-world data.

Day 7: Build a mini-ROI case. If the trial uncovered three actionable answer gaps, estimate the lead value of closing them. That calculation is what gets budget approved.

Topify’s free trial is designed for exactly this kind of evaluation. The Basic plan includes up to 9,000 AI answer analyses per month, which gives enough data volume to run meaningful comparisons rather than relying on a sample size of 50 prompts. The 7-metric framework it tracks, covering Visibility, Sentiment, Position, Volume, Mentions, Intent, and CVR (Conversion Visibility Rate), is worth mapping directly to your Day 1 manual audit. The CVR metric in particular connects AI visibility to downstream conversion probability, which is the number most marketing managers need to justify the spend to a CFO.

Use the trial to cross-verify whatever G2 shortlist you’ve built. If a tool’s reported data consistently diverges from your manual spot checks, that divergence will scale.

What G2 Reviews Miss (And Where to Find It Anyway)

G2 reviews are excellent for gauging support quality and user satisfaction. They’re not reliable for surfacing technical architecture gaps. Three blind spots come up repeatedly in AEO tool evaluations.

New platform support: 47% of AI search users switch between two or more platforms regularly. A tool that covers ChatGPT well but only does shallow polling on DeepSeek or Grok isn’t a complete picture. The hidden signal in reviews: look for mentions of “reasoning traces” or “chain-of-thought analysis.” That language indicates the tool can actually see the selection logic newer models use, not just the output.

Data refresh frequency: A clean dashboard can hide a stale dataset. If a tool relies on static API caches rather than live browser rendering, you might be looking at citation data that shifted 24 hours ago. Search reviews for the words “latency,” “refresh,” “missed,” or “delayed.” If users mention that manual checks showed different results, that’s a refresh problem, not a UI problem.

Actionability depth: The most common post-purchase regret in AEO is discovering that a tool functions as an intelligence center but doesn’t connect to execution. Five-star reviews often praise dashboard clarity. A year later, teams abandon the tool because it doesn’t integrate with their CMS. Look for reviews that mention “one-click execution” or “agentic workflows” as signals that the tool can deploy changes, not just report them.

These three gaps won’t appear in a vendor’s feature page. They show up in six-month-old reviews from users who’ve hit them.

Conclusion

G2’s AEO category is a useful filter, not a buying decision. It tells you which tools have met a minimum capability bar. It doesn’t tell you which one fits a team of 8 versus a team of 800, or which pricing model won’t surprise you in month three.

The framework here does the work G2 can’t: segment first, then pricing structure, then capability matching, then trial verification. That sequence eliminates tools before you spend time reading reviews that aren’t relevant to your situation.

The trial is the final step, not an afterthought. Run it with a structured plan, use Topify to cross-verify your shortlist against real AI answer data, and build the ROI case before the trial ends. That’s how you go from a G2 shortlist to a procurement decision you can defend.

FAQ

Q: Is “AEO tool” and “GEO tool” the same thing on G2?

Largely yes. G2 uses “AEO” (Answer Engine Optimization) as the official category label, but many vendors use “GEO” (Generative Engine Optimization) interchangeably. The practical distinction: AEO traditionally focused on featured snippets and voice assistants, while GEO focuses on generative outputs from ChatGPT, Perplexity, and similar platforms. On G2, they live in the same category.

Q: How often does G2 update the AEO category rankings?

G2 publishes major Grid Reports quarterly (Winter, Spring, Summer, Fall). However, the real-time G2 Score and Popularity metrics on category pages are updated daily as new reviews and market presence data come in.

Q: Can a small team (under 10 people) realistically use an AEO tool?

Yes, and small teams often get a better proportional return. They can’t compete with enterprise backlink budgets, but AEO provides visibility through structured, high-intent content, which doesn’t require headcount to scale. The key is prioritizing tools with fast setup times and high-intent prompt tracking rather than full enterprise reporting suites.

Q: What’s the fastest way to compare two shortlisted tools?

Ask both vendors directly about their data retrieval methodology: live browser rendering versus API snapshots. Beyond that, the G2 side-by-side comparison tool is useful, but the real test is running both trials simultaneously against the same 10 prompts and comparing the outputs against your own manual checks.