Half of all B2B software buyers no longer start their research on Google.

According to a March 2026 survey of 1,076 B2B software decision-makers, 51% now initiate vendor research inside an AI chatbot — up from 29% just eleven months prior. That’s not a slow drift. That’s a structural break.

G2 recognized this shift early. In March 2025, it formalized Answer Engine Optimization (AEO) as an official software category. Fourteen months later, 248 tools are competing inside it. This report breaks down what that ecosystem actually looks like, why buyer behavior has shifted so decisively, and what it means for how you should be thinking about AI search visibility in 2026.

51% Didn’t Start on Google. Here’s What That Actually Means.

The number is striking enough on its own. But the underlying driver is what makes this a durable change, not a novelty effect.

Fifty-three percent of buyers say that research conducted via AI is significantly more productive than traditional search, up from 36% seven months ago. When a behavior shift is driven by productivity gains, it tends to stick. Buyers aren’t using ChatGPT because it’s new. They’re using it because it saves time.

The downstream consequence is a “zero-click” reality. Research indicates zero-click searches now account for nearly 60% of all queries, and for as much as 93% of queries in Google’s AI Mode. A buyer asks ChatGPT which CRM to evaluate. ChatGPT names three vendors. The buyer never visits a search engine. That exchange happens entirely outside your organic SEO reach.

There’s also a shortlist disruption happening that most marketing teams haven’t fully priced in. Sixty-nine percent of buyers indicated they chose a different software vendor than initially planned based on AI guidance. One-third purchased from a vendor they were previously unfamiliar with. Brand moats built on name recognition are weakening. Technical relevance and peer-validated authority are replacing them.

G2’s AEO Category Has 248 Tools. Most Teams Are Using the Wrong Layer.

The rapid expansion of G2’s AEO category (over 2,000% demand growth since launch) has created a market that looks more crowded and more confusing than it is. The 248 tools aren’t all competing with each other. They occupy four distinct functional layers.

Layer 1: Brand Mention and Share of Voice Monitoring. These are entry-level tools that track how often a brand name appears in AI-generated answers across a predefined prompt set. They’re useful for establishing a visibility baseline. They’re not useful for understanding why your brand appears or how to improve it.

Layer 2: Citation and URL-Level Analysis. This is where operational-grade AEO work happens. These tools move beyond mentions to identify the specific URLs and domains the AI is actually citing. A mention builds recall. A citation builds authority. Knowing which competitor pages are being cited — and why — is what allows teams to close citation gaps with targeted content.

Layer 3: Multilingual and Global AI Search Visibility. As DeepSeek, Qwen, and Doubao gain market share in non-Western markets, Layer 3 tools track brand presence across AI ecosystems in different languages and regions. For global brands, this layer isn’t optional.

Layer 4: Enterprise Risk and Hallucination Detection. The most advanced layer monitors for AI “hallucinations”: cases where a model makes inaccurate or fabricated claims about a brand. With 64% of buyers reporting that they often encounter inaccurate AI recommendations, Layer 4 tools are increasingly critical for regulated industries like healthcare and finance.

Most B2B SaaS teams should be focused on Layer 2 first. The gap between “we appear in some AI answers” and “we appear in the right AI answers for the right reasons” lives in citation-level data.
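The citation gap idea behind Layer 2 can be sketched as a set difference over cited domains. This is a minimal illustration, not any vendor's method: the record layout (`brand`, `url`), the brand names, and the URLs are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical export: each record is one URL an AI answer cited while
# recommending a brand. Field names, brands, and URLs are illustrative.
citations = [
    {"brand": "OurCRM", "url": "https://www.g2.com/products/ourcrm/reviews"},
    {"brand": "RivalCRM", "url": "https://www.g2.com/products/rivalcrm/reviews"},
    {"brand": "RivalCRM", "url": "https://www.reddit.com/r/sales/comments/abc123"},
    {"brand": "RivalCRM", "url": "https://example-industry-blog.com/best-crm"},
]


def cited_domains(records, brand):
    """Return the set of domains cited alongside the given brand."""
    domains = set()
    for record in records:
        if record["brand"] == brand:
            host = urlparse(record["url"]).netloc.lower()
            if host.startswith("www."):
                host = host[4:]  # treat www.g2.com and g2.com as one domain
            domains.add(host)
    return domains


def citation_gap(records, our_brand, competitor):
    """Domains where the competitor is cited and our brand is not."""
    ours = cited_domains(records, our_brand)
    theirs = cited_domains(records, competitor)
    return sorted(theirs - ours)


gap = citation_gap(citations, "OurCRM", "RivalCRM")
print(gap)
```

With this sample data the gap is the Reddit thread's domain and the industry blog, since both brands are already cited from g2.com. A real analysis would likely work at URL level as well as domain level.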

Why 74% of B2B Buyers Default to ChatGPT

ChatGPT’s dominance in B2B research isn’t just about market share. It’s about how the model communicates.

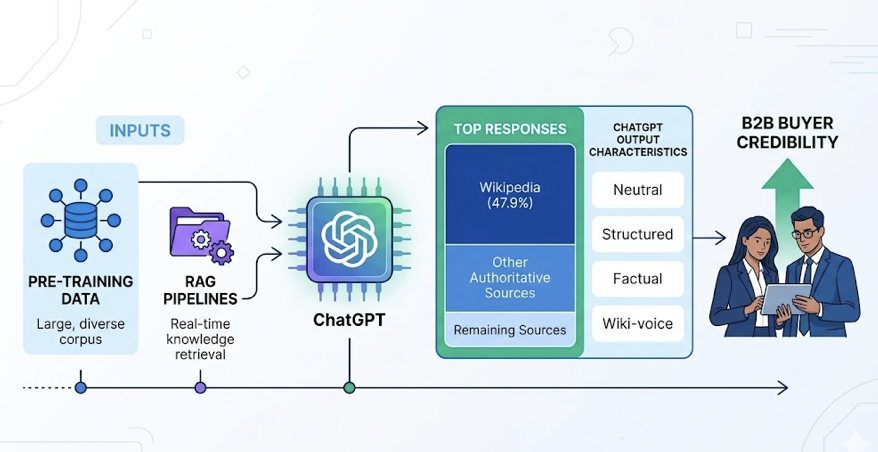

ChatGPT now reaches over 800 million weekly active users and accounts for 87.4% of all AI-driven referral traffic. Its retrieval combines pre-training data with RAG pipelines that strongly favor authoritative, “Wiki-voice” content — neutral, structured, and factual. Wikipedia alone appears in 47.9% of its top responses. For B2B buyers, this neutrality reads as credibility.

The trust signal is measurable. Eighty-five percent of buyers report thinking more highly of a vendor when an AI chatbot mentions them in a recommendation. Eighty-three percent feel more confident in their final purchase decision when AI was part of their research process.

Perplexity operates differently. It searches the live web by default and provides inline citations for every claim, making it the platform where “statistical freshness” determines visibility. Gemini integrates Google’s Knowledge Graph and YouTube signals, and its 1 million token context window makes it especially powerful for deep research on complex B2B decisions.

Each platform has a distinct trust architecture. That’s the part most AEO strategies ignore.

What the G2 Grid Doesn’t Tell You About These 248 Tools

G2’s standard scoring framework measures ease of use, customer support quality, and market presence. These are useful proxies for software quality in general. They’re less useful for evaluating AEO tools specifically.

G2 doesn’t score whether a tool is itself cited by AI. That gap matters more than it sounds: an AEO tool that monitors your AI visibility but isn’t authoritative enough to appear in AI recommendations has a credibility problem built into its own use case.

G2 scores also don’t capture cross-platform coverage depth. A tool that tracks only ChatGPT covers 87.4% of AI-driven referral traffic but entirely misses the emerging platforms where early positioning is cheapest. The evaluation dimensions that actually matter for AEO tools are prompt coverage breadth, citation attribution accuracy, data refresh frequency, and whether there’s an execution layer or just a dashboard.

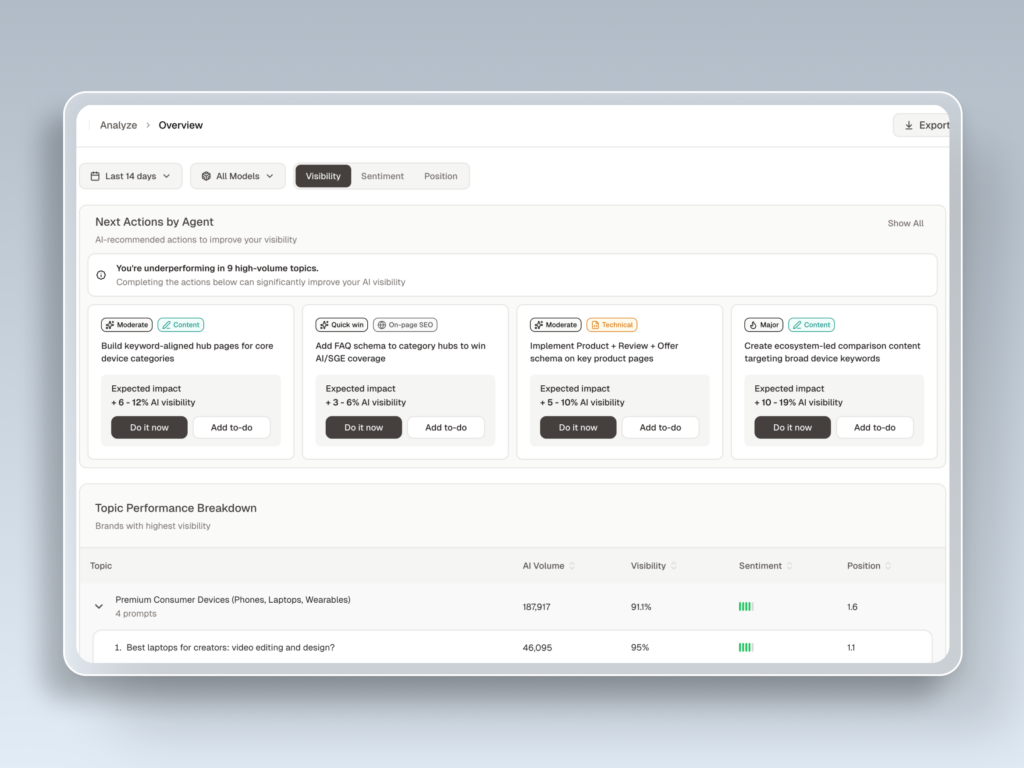

That last point separates monitoring tools from optimization tools. The “Actionability Gap” — the difference between a tool that reports your AI visibility and one that helps you improve it — is the most underappreciated dimension in the current G2 AEO grid.

The 7-Metric Framework Every AEO Team Should Track

The analysis of 248 tools converges on a framework of seven core metrics for quantifying AI visibility. Traditional SEO KPIs like organic CTR are losing predictive power. These replace them.

1. AI Visibility Rate. The percentage of tracked prompts where your brand is cited or mentioned. Industry leaders typically sit above 30%, though this benchmark varies by vertical. In healthcare, for example, AI Overviews trigger on 48.7% of queries.

2. Answer Placement Score. Position matters. A primary recommendation that appears first in a ChatGPT response carries fundamentally different weight than a “you might also consider” mention at the end. APS weights mentions by their narrative position in the AI’s response.

3. Sentiment Polarity Score. Visibility without positive framing is a liability. NLP-based sentiment analysis tracks whether AI describes your brand in a way that drives conversions — or quietly undercuts them. A brand with high visibility but a sentiment score suggesting “expensive but error-prone” has a citation gap problem, not a content volume problem.

4. Source Citation Share. Roughly 85% of AI citations come from third-party sources, not brand-owned domains. This metric shows which external sites — Reddit, G2 reviews, industry publications — are serving as the “trust neighborhoods” the AI uses to validate your brand.

5. Feature Association Coverage. Does the AI associate your brand with the value propositions you actually want to own? If your CRM is only cited in “lowest cost” conversations but never in “enterprise scalability” ones, there’s a misalignment between brand strategy and AI-learned perception.

6. Prompt Coverage. AEO tracks prompts, not keywords. A prompt averages 23 words vs. 4 for a keyword. Full-funnel prompt coverage means your brand appears across discovery (“What is…”), evaluation (“Best for…”), and comparison (“Brand X vs. Brand Y”) queries.

7. Conversion Visibility Rate (CVR). Despite low click-through rates overall, traffic arriving from AI platforms converts at 4.4 times the rate of traditional organic users. CVR predicts the probability that an AI response leads to a brand interaction.

Most teams track one or two of these. The brands pulling ahead in 2026 are tracking all seven.
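To make three of these metrics concrete, here is a minimal sketch of how they might be computed from tracked-prompt results. The record layout, the sample data, the `ourcrm.example` domain, and especially the reciprocal-rank weighting used for placement are assumptions for illustration; the framework above does not prescribe formulas.

```python
# Hypothetical tracked-prompt results for one brand. `position` is the brand's
# 1-based rank among the vendors named in the answer (None if absent), and
# `cited_domains` lists the sources the answer cited.
results = [
    {"prompt": "best CRM for mid-market SaaS", "position": 1,
     "cited_domains": ["g2.com", "ourcrm.example"]},
    {"prompt": "OurCRM vs RivalCRM", "position": 3,
     "cited_domains": ["reddit.com", "g2.com"]},
    {"prompt": "what is an AI-native CRM", "position": None,
     "cited_domains": ["wikipedia.org"]},
    {"prompt": "CRM with enterprise scalability", "position": None,
     "cited_domains": []},
]

OWNED_DOMAINS = {"ourcrm.example"}  # assumed brand-owned domain


def visibility_rate(rows):
    """Share of tracked prompts in which the brand appears at all."""
    return sum(r["position"] is not None for r in rows) / len(rows)


def placement_score(rows):
    """Mean of 1/position over all prompts; absent mentions score 0.

    Reciprocal-rank weighting is an assumed scheme, chosen only because it
    rewards first-place mentions more than trailing ones.
    """
    return sum(1 / r["position"] for r in rows if r["position"]) / len(rows)


def third_party_share(rows, owned):
    """Fraction of all cited domains that are not brand-owned."""
    cited = [d for r in rows for d in r["cited_domains"]]
    if not cited:
        return 0.0
    return sum(d not in owned for d in cited) / len(cited)


print(f"AI Visibility Rate: {visibility_rate(results):.0%}")
print(f"Answer Placement Score: {placement_score(results):.2f}")
print(f"Third-party citation share: {third_party_share(results, OWNED_DOMAINS):.0%}")
```

On this sample the brand appears in 2 of 4 prompts (50%), scores about 0.33 on placement, and 4 of the 5 cited domains (80%) are third-party. The placement weighting is the piece most worth tuning to your own judgment of how much a first mention is worth.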

The Monitoring Layer Is Where Most B2B Teams Underinvest

Content optimization tools attract most of the budget. Monitoring tools get treated as optional add-ons. That’s backwards.

You can publish optimized content all quarter and have no way of knowing whether it changed your AI citation rate, improved your sentiment score, or shifted your answer placement. Without measurement, optimization is guesswork dressed as strategy.

The monitoring layer also catches something most content tools miss: negative drift. AI models update their training and retrieval patterns continuously. A brand that was positively positioned six months ago may have slipped without any change in content output. Only active monitoring catches that before it costs you pipeline.
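A simple way to picture negative drift detection is to compare each visibility snapshot against an earlier baseline. The sketch below is an assumption-laden illustration: the monthly values, the period labels, and the 5-point alert threshold are invented, and real monitoring would likely track sentiment and placement as well.

```python
# Hypothetical monthly visibility-rate snapshots (fraction of tracked prompts
# where the brand appears). Values and period labels are illustrative.
snapshots = [
    ("month-1", 0.34),
    ("month-2", 0.33),
    ("month-3", 0.27),
    ("month-4", 0.26),
]


def find_negative_drift(history, max_drop=0.05):
    """Return (period, drop) pairs where visibility sits more than
    `max_drop` below the first snapshot, which serves as the baseline."""
    if not history:
        return []
    baseline = history[0][1]
    alerts = []
    for period, value in history[1:]:
        drop = baseline - value
        if drop > max_drop:
            alerts.append((period, round(drop, 4)))
    return alerts


alerts = find_negative_drift(snapshots)
for period, drop in alerts:
    print(f"{period}: visibility is {drop:.0%} below baseline")
```

Comparing against a fixed baseline rather than the previous month is a deliberate choice here: a slow slide of one point per month never trips a month-over-month check, but it does eventually cross a baseline threshold.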

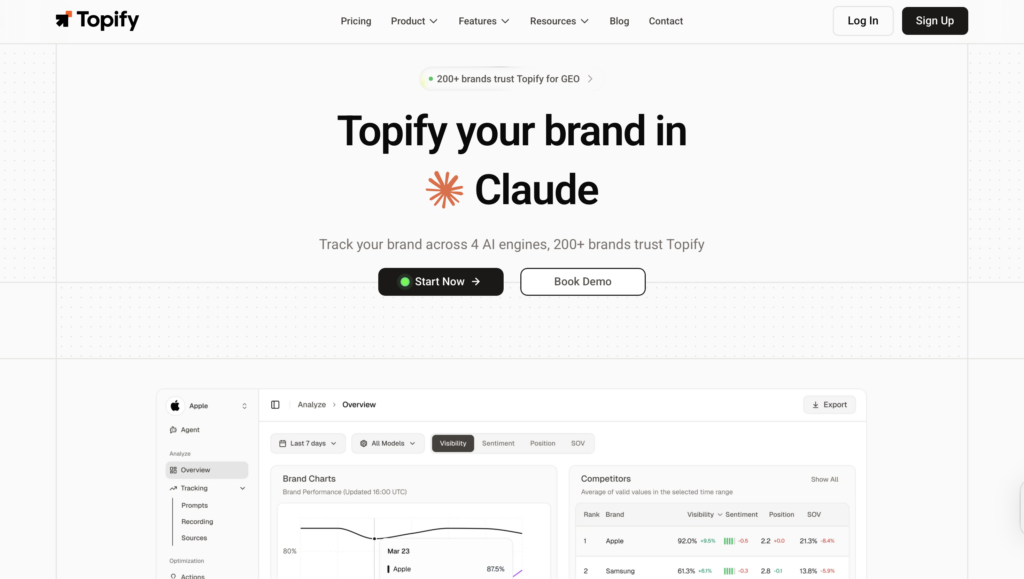

Topify is built around this exact logic. The platform tracks seven signals (visibility, sentiment, position, volume, mentions, intent, and CVR) across ChatGPT, Gemini, Perplexity, DeepSeek, Doubao, and Qwen. The cross-platform coverage is what separates a monitoring strategy from a single-platform snapshot.

The Source Analysis feature specifically addresses the 85% third-party citation reality. Rather than guessing which external content is driving AI recommendations, Topify maps the exact domains and URLs the AI is citing, then surfaces gaps where competitors are being cited and you’re not. That’s the data that informs where to publish, not just what to publish.

How Topify Sits in the G2 AEO Ecosystem

In the G2 AEO category taxonomy, Topify operates squarely in Layer 2 with selective Layer 3 capabilities. The platform’s technical approach uses browser-based simulation to replicate real user queries, capturing “hidden” citations that API-based tools often miss — a meaningful distinction when citation attribution accuracy determines whether your optimization effort is pointed at the right target.

The pricing structure aligns with how most mid-market SaaS teams actually buy tools. The Basic plan starts at $99/month and covers 100 prompts and 9,000 AI answer analyses across four projects. The Pro plan at $199/month scales to 250 prompts and 22,500 analyses. For teams that have historically budgeted for SEO tools in the $150-$300/month range, the entry point is comparable. The difference is that AEO monitoring is measuring a channel where 51% of your buyers now start their research.
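For budgeting, the quoted plan figures can be reduced to per-unit numbers. The sketch below uses only the prices and quotas stated above; the derived ratios are plain arithmetic, not published pricing metrics.

```python
# Plan figures as quoted in the paragraph above.
plans = {
    "Basic": {"price_usd": 99, "prompts": 100, "analyses": 9_000},
    "Pro": {"price_usd": 199, "prompts": 250, "analyses": 22_500},
}


def unit_economics(plan):
    """Derive per-unit figures from a plan's monthly price and quotas."""
    return {
        "usd_per_prompt": plan["price_usd"] / plan["prompts"],
        "analyses_per_prompt": plan["analyses"] / plan["prompts"],
        "usd_per_1k_analyses": 1000 * plan["price_usd"] / plan["analyses"],
    }


for name, plan in plans.items():
    u = unit_economics(plan)
    print(f"{name}: ${u['usd_per_prompt']:.2f}/prompt, "
          f"{u['analyses_per_prompt']:.0f} analyses/prompt, "
          f"${u['usd_per_1k_analyses']:.2f} per 1,000 analyses")
```

Both plans work out to 90 analyses per prompt per month, while the per-prompt price falls from $0.99 on Basic to roughly $0.80 on Pro.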

The “One-Click Agent Execution” layer sets it apart from pure monitoring tools. Once the data identifies a citation gap — say, a competitor is being cited for “AI-native CRM scalability” on three domains you’re not present on — Topify’s agent can propose and deploy a content strategy to close that gap without manual workflow orchestration.

For agencies managing multiple B2B brands, the multi-project structure matters. Each client’s visibility profile, competitive position, and citation gap analysis sits in a separate project, allowing the same 7-metric framework to be applied consistently across accounts.

The structural reality the G2 data confirms is this: AI visibility is not a marketing experiment. It’s an infrastructure decision. The brands that treat it that way in 2026 will be harder to displace in 2027 — not because of brand budget, but because citation authority compounds in the same way backlink authority once did.

Conclusion

The G2 AEO category didn’t exist before March 2025. It now has 248 tools and over 2,000% demand growth because the buyer journey rewired itself faster than most marketing stacks could respond.

The data is unambiguous: 51% of B2B buyers start in AI, 69% change their shortlist based on AI guidance, and 33% buy from vendors they’d never heard of before an AI mentioned them. Content strategy, SEO investment, and brand spend that don’t account for AI citation behavior are increasingly disconnected from where decisions are actually being made.

The 7-metric framework isn’t a new dashboard to fill. It’s the measurement infrastructure that makes the rest of your content and brand investment legible in a world where machines are synthesizing your market position before any human reads your website.

Start with the monitoring layer. Understand which layer of the G2 AEO grid your current tooling covers — and which layers it doesn’t. The gap between what your AI visibility looks like today and what it needs to look like to compete in the Answer Economy is measurable. That’s the first step.

FAQ

What is an AEO tool?

An AEO (Answer Engine Optimization) tool helps brands track and improve their visibility within AI-generated answers from platforms like ChatGPT, Perplexity, and Gemini. Unlike traditional SEO tools that track keyword rankings on search engine results pages, AEO tools measure citation frequency, sentiment, answer placement, and source attribution in AI responses.

How is AEO different from SEO?

SEO optimizes for search engine results pages (SERPs). AEO optimizes for how, where, and whether an AI model cites your brand in its answers. The core distinction is the measurement unit: SEO tracks keyword positions, while AEO tracks prompt coverage, citation share, and answer placement across AI platforms. With 51% of B2B buyers now starting research in AI chatbots, both disciplines are necessary, but they require different tools and content strategies.

What does G2’s AEO category include?

G2 formalized the AEO software category in March 2025. It currently indexes 248 tools divided into four functional layers: brand mention monitoring, citation and URL-level analysis, multilingual and global AI search visibility, and enterprise risk and hallucination detection. The category has grown over 2,000% in demand since launch.

Which AEO tools work best for B2B SaaS brands?

Mid-market B2B SaaS teams typically need Layer 2 tools that go beyond basic mention tracking to provide citation-level attribution and content gap analysis. Platforms that track the full 7-metric framework (visibility rate, answer placement, sentiment, source citation share, feature association, prompt coverage, and CVR) across multiple AI engines are most appropriate for growth-focused teams.

How do I know if my brand is visible in AI search?

Run your 10 most important buying-stage prompts (e.g., “best [category] tools for [use case]”) through ChatGPT, Perplexity, and Gemini manually. Note whether your brand appears, how it’s described, and what sources are cited. That manual baseline is step one. An AEO monitoring platform automates this across hundreds of prompts and surfaces competitive gaps you wouldn’t catch manually.
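The manual baseline can be kept honest with a simple log sheet: one row per prompt and platform, filled in by hand. The sketch below is one possible layout, not a prescribed one; the category, use cases, second template, and field names are placeholders to swap for your own buying-stage language, and you would extend the lists until you reach your 10 prompts.

```python
from itertools import product

# Illustrative inputs; replace with your own category and use cases.
CATEGORY = "CRM"
USE_CASES = ["mid-market SaaS", "enterprise sales teams"]
TEMPLATES = [
    "best {category} tools for {use_case}",
    "which {category} should a team evaluate for {use_case}",
]
PLATFORMS = ["ChatGPT", "Perplexity", "Gemini"]


def build_baseline_sheet(category, use_cases, templates, platforms):
    """Create one empty log row per (prompt, platform) pair to fill in by hand."""
    prompts = [
        template.format(category=category, use_case=use_case)
        for template, use_case in product(templates, use_cases)
    ]
    return [
        {
            "prompt": prompt,
            "platform": platform,
            "brand_appears": None,   # True / False once checked
            "description": "",       # how the answer describes the brand
            "sources_cited": [],     # URLs or domains the answer cites
        }
        for prompt, platform in product(prompts, platforms)
    ]


sheet = build_baseline_sheet(CATEGORY, USE_CASES, TEMPLATES, PLATFORMS)
print(len(sheet), "rows to fill in")
print(sheet[0]["prompt"], "->", sheet[0]["platform"])
```

Two templates and two use cases across three platforms give 12 rows. Once the rows are filled in, the share of rows with `brand_appears` set to True is your starting visibility rate.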